Discover Enterprise Test Data®

Simplify your complex data landscape and provide confidence and clarity at every step of your data...

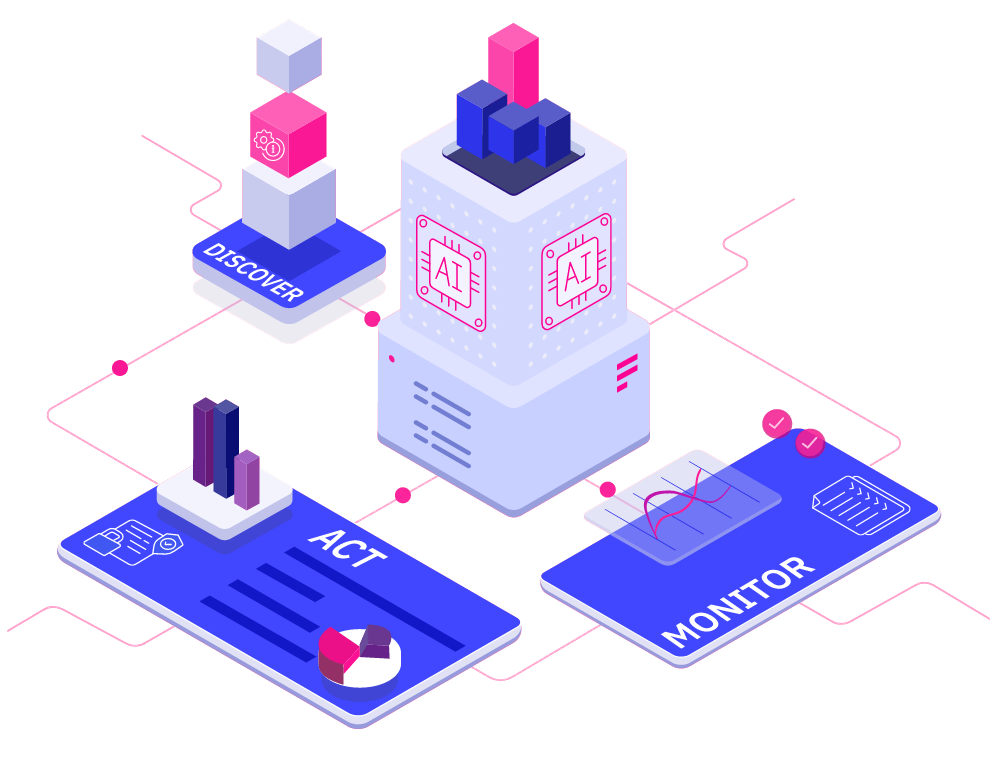

Discover Enterprise Test Data®: Learn more

AI-powered. End-to-end. Your complete test data management platform.

Explore Curiosity's collection of webinars, podcasts, blogs and success stories, covering everything from visual modelling to artificial intelligence and test data management.

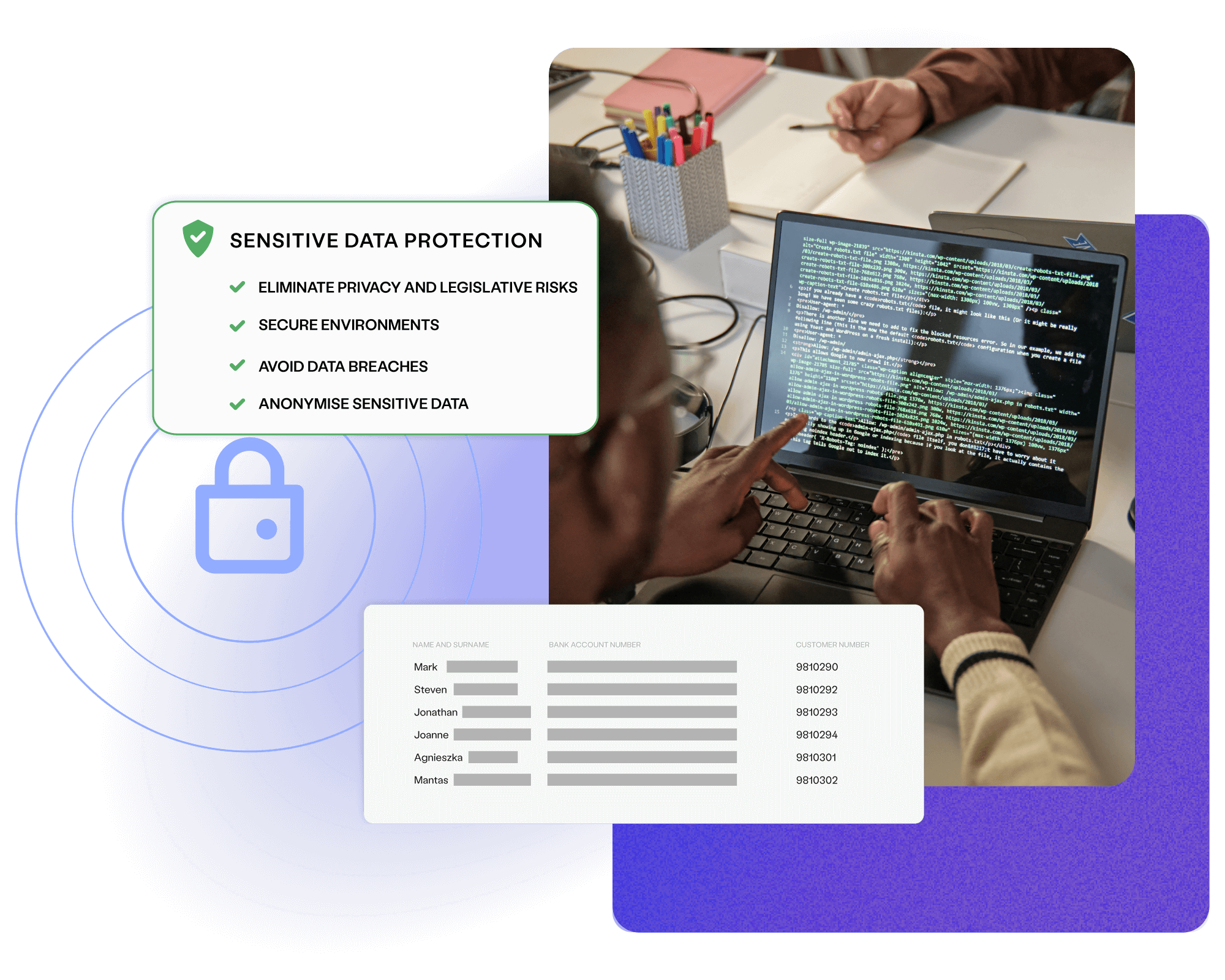

Deliver superior test data and overcome the challenges of complexity, legacy, scale, and regulation with Curiosity Software.

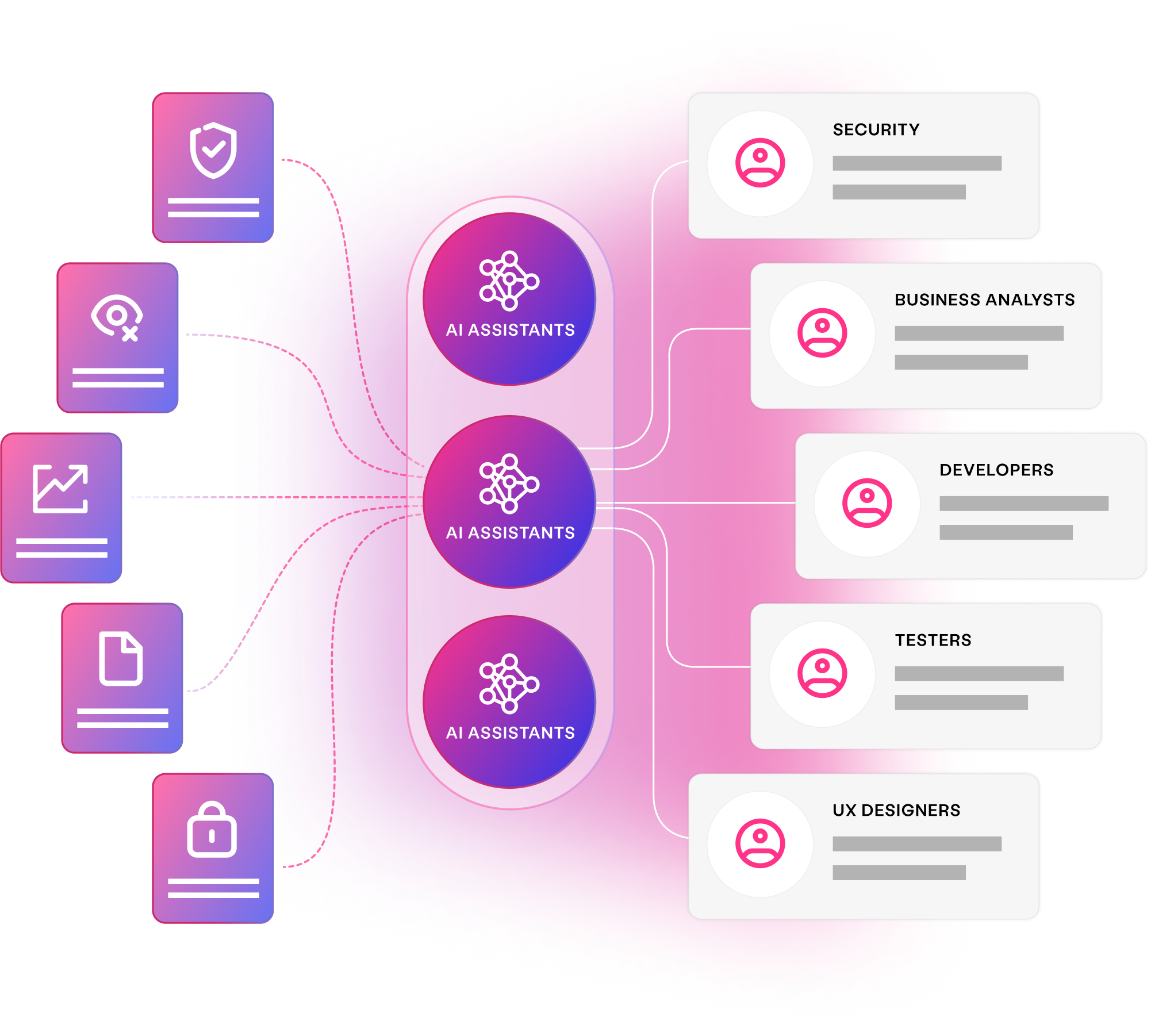

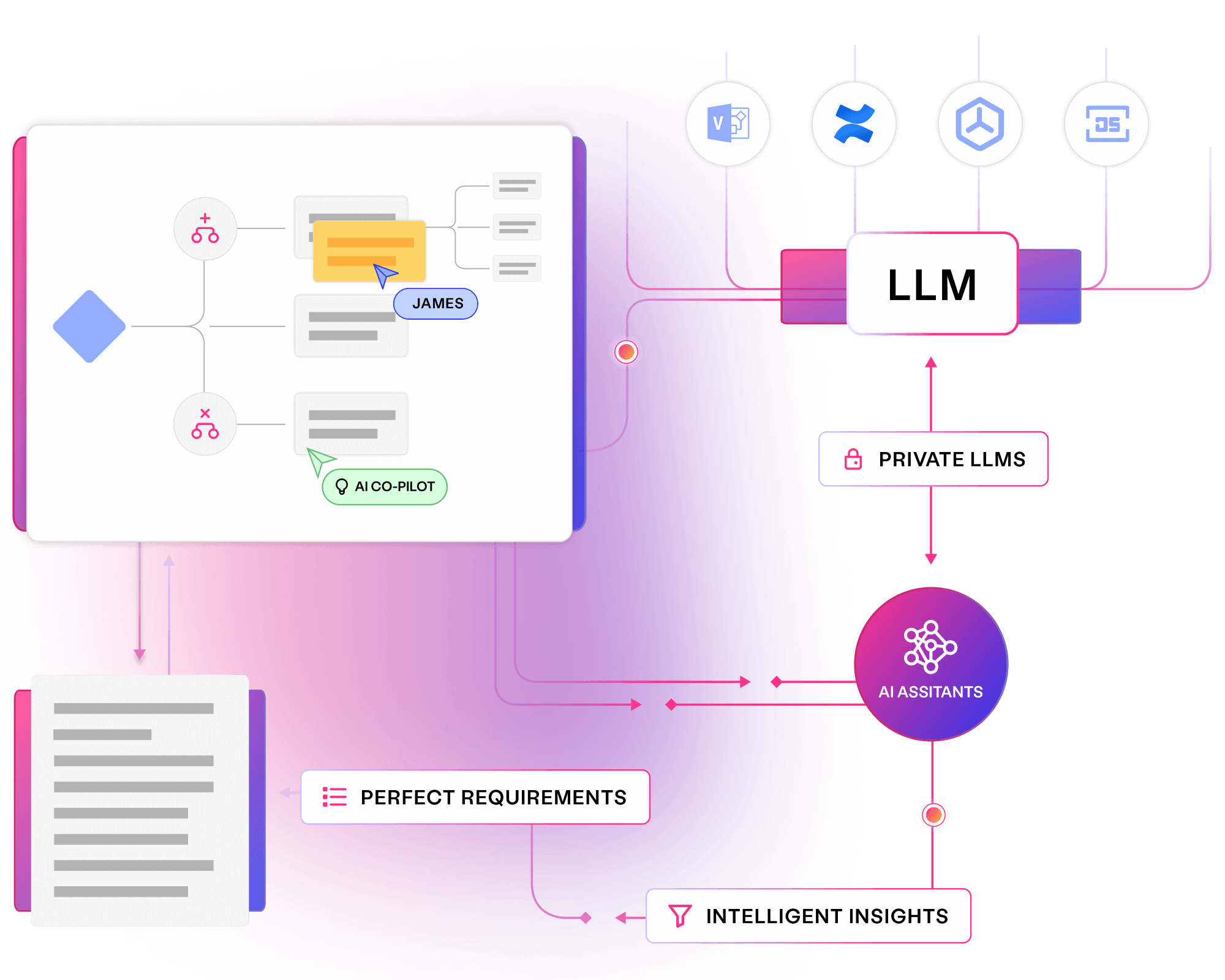

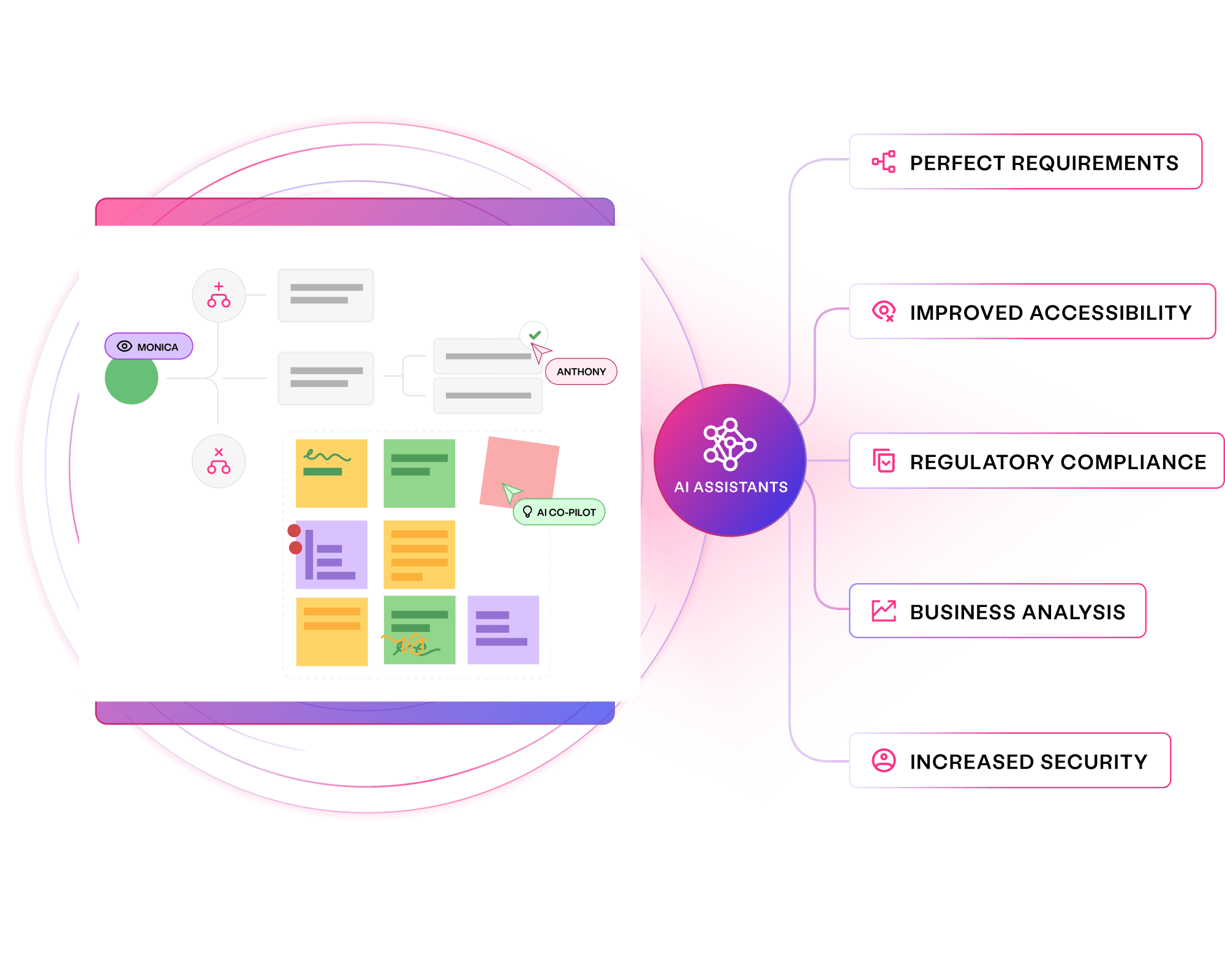

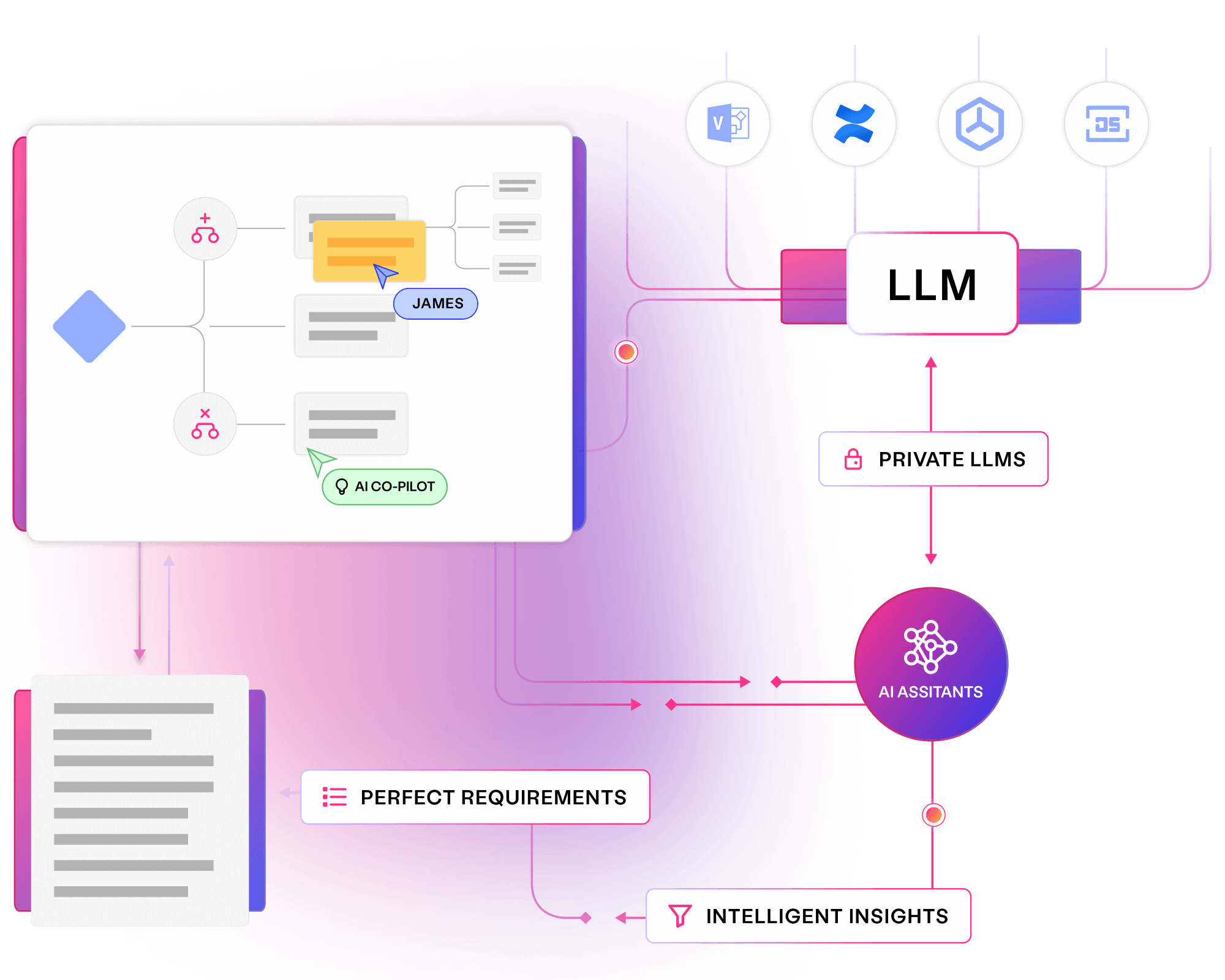

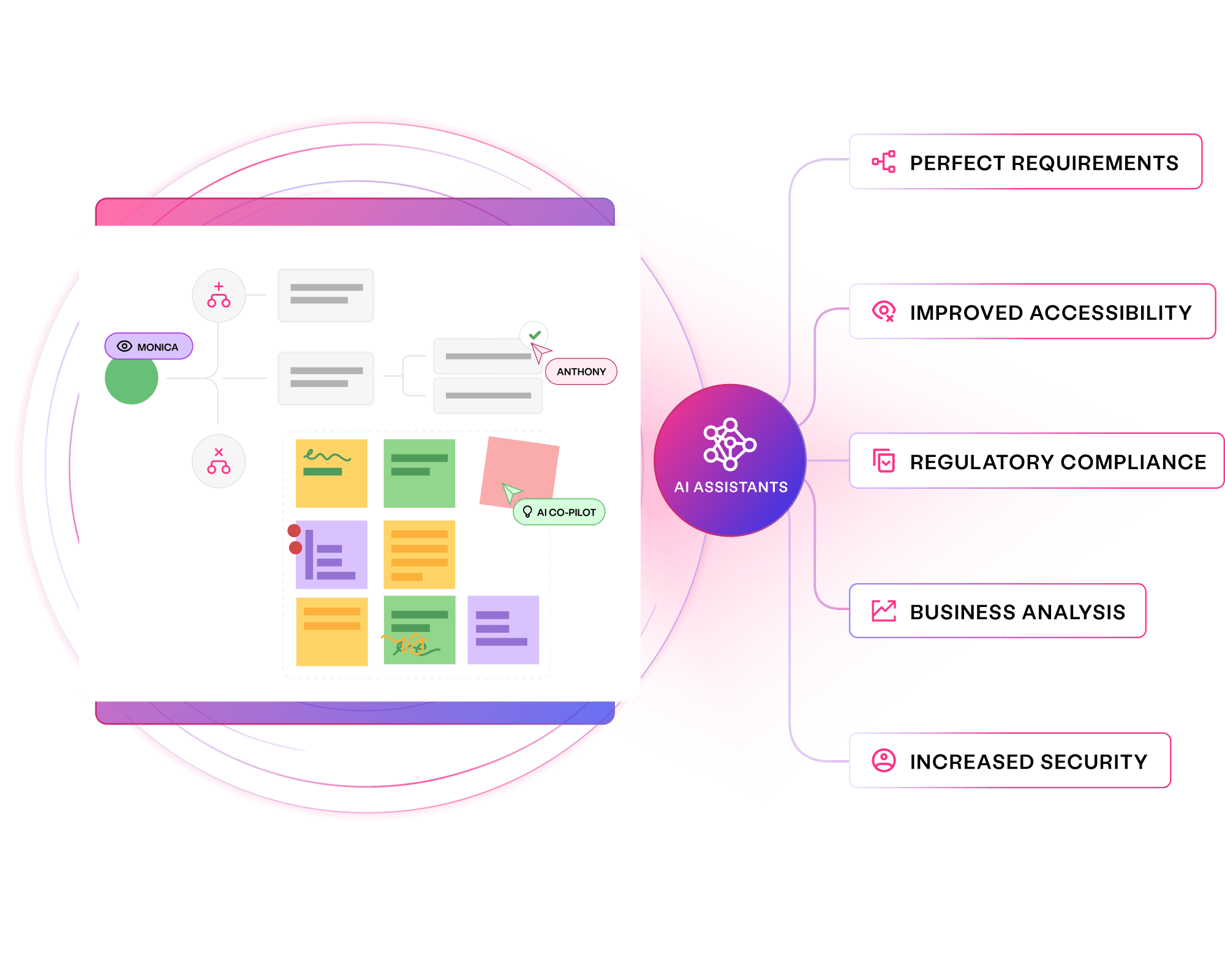

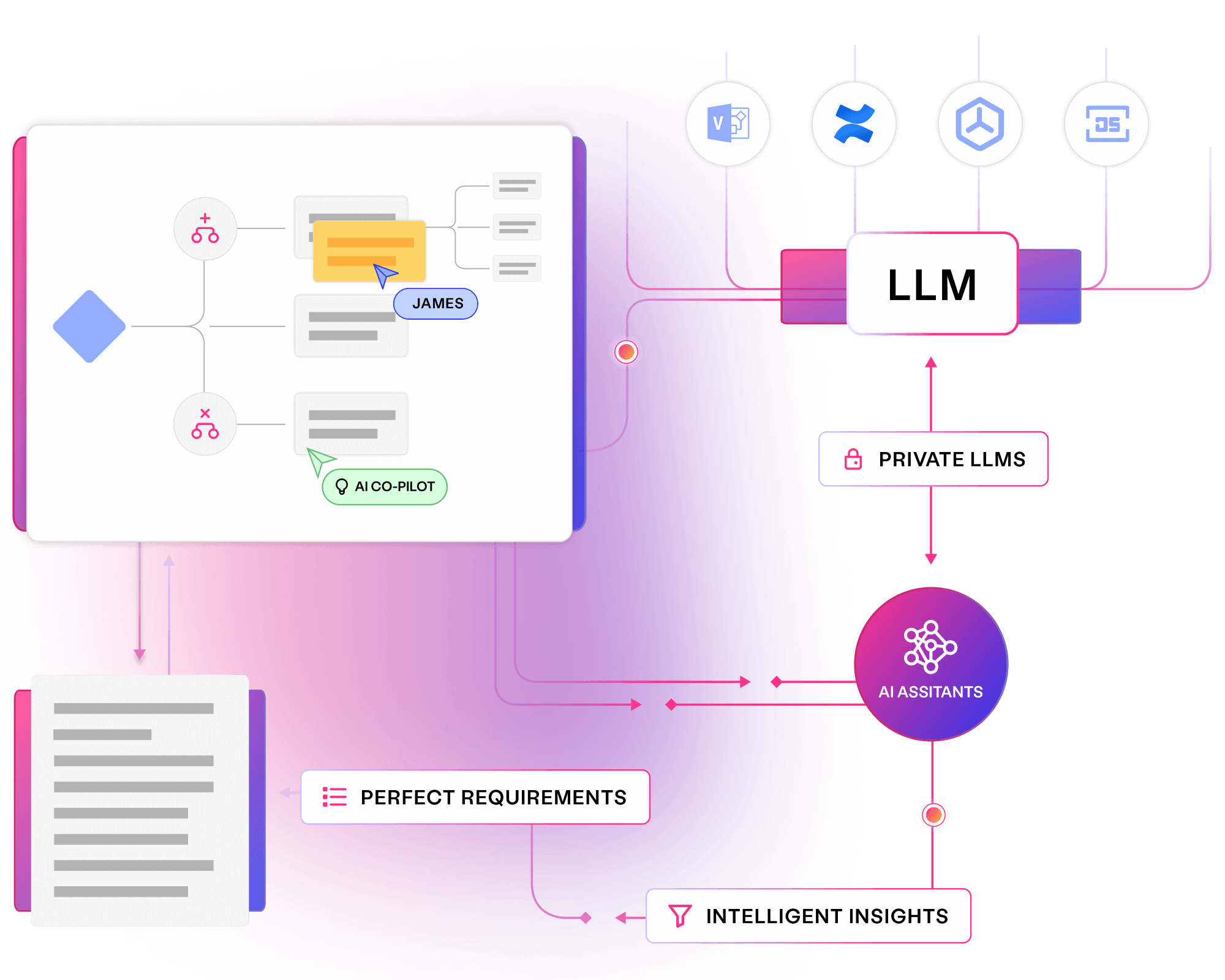

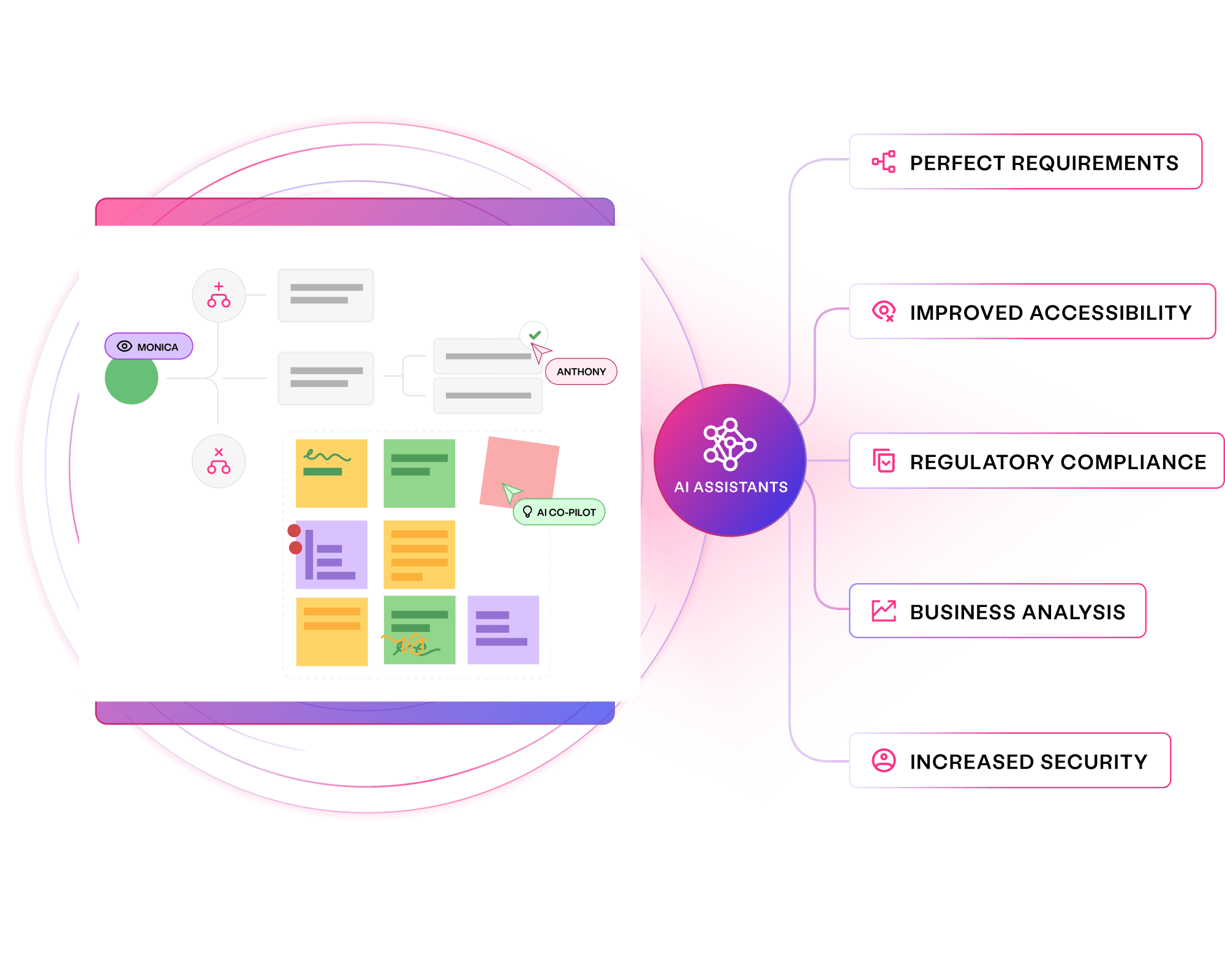

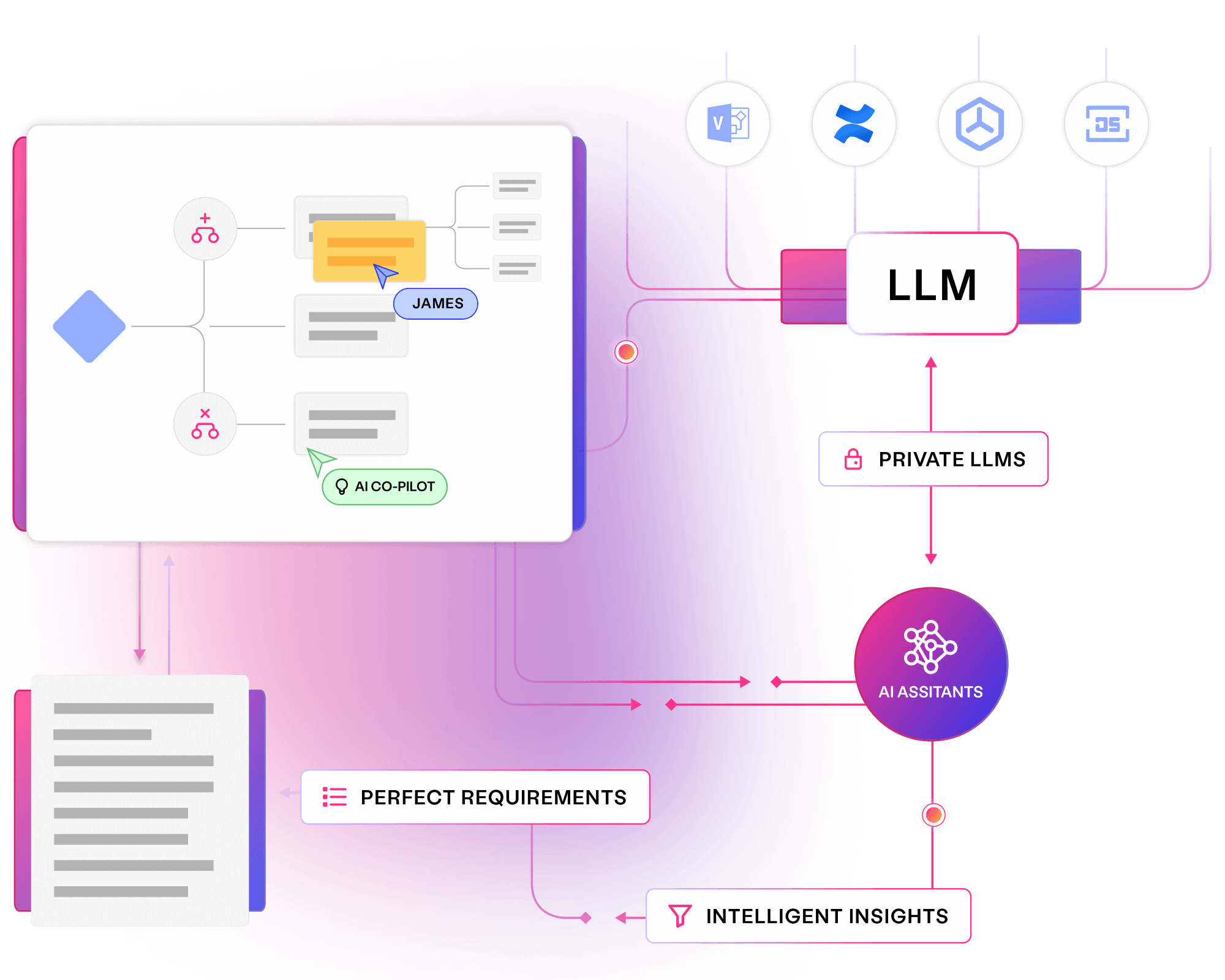

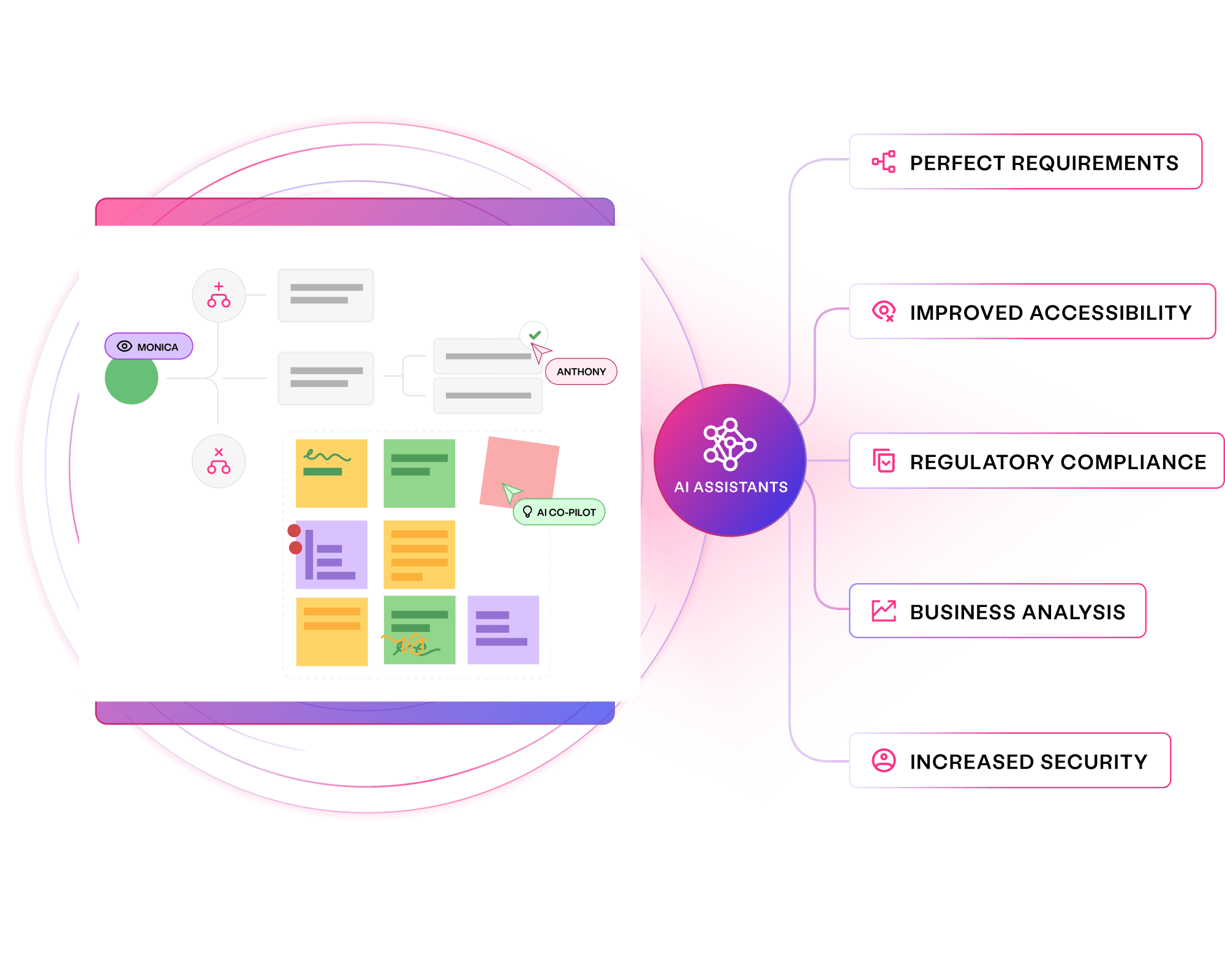

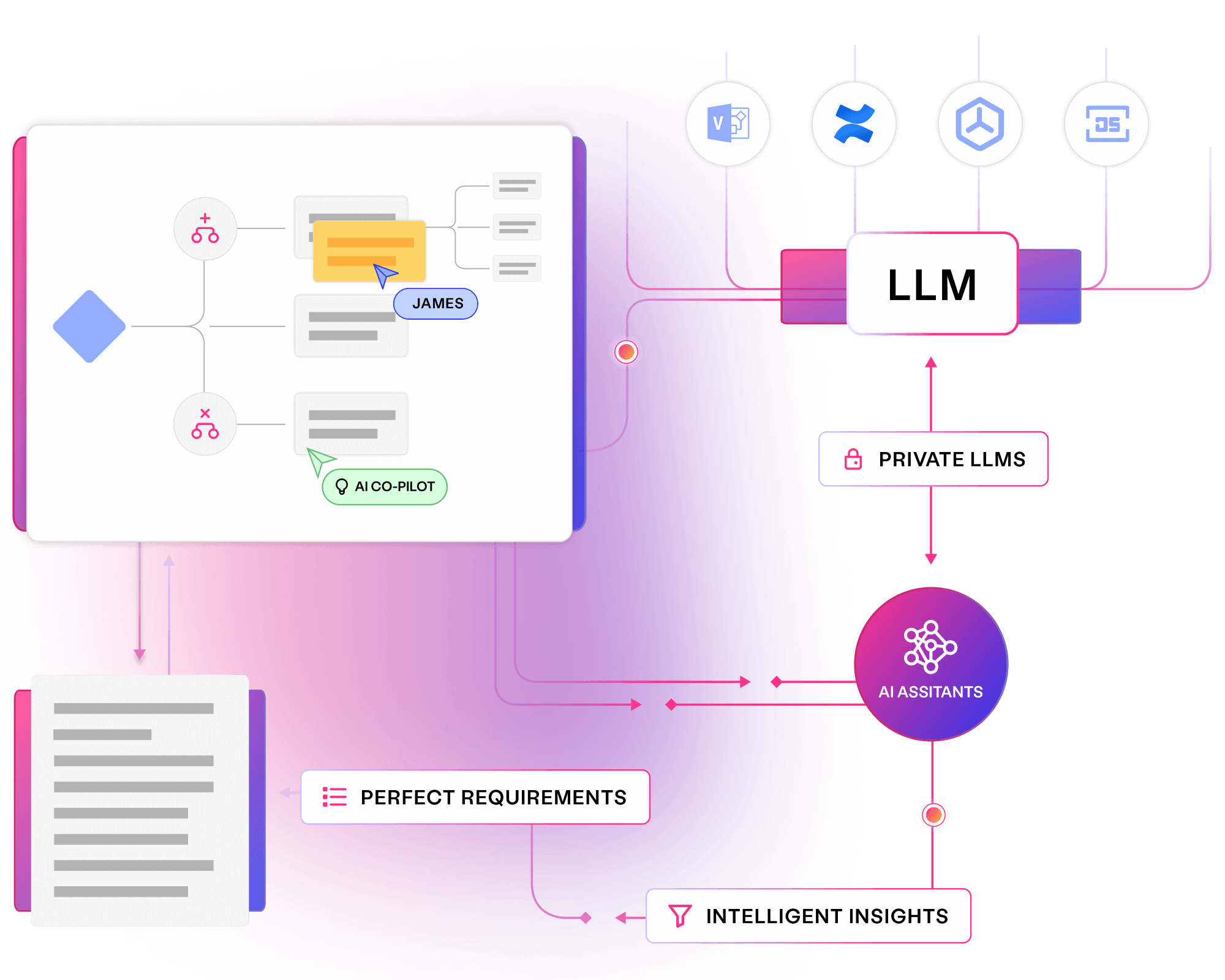

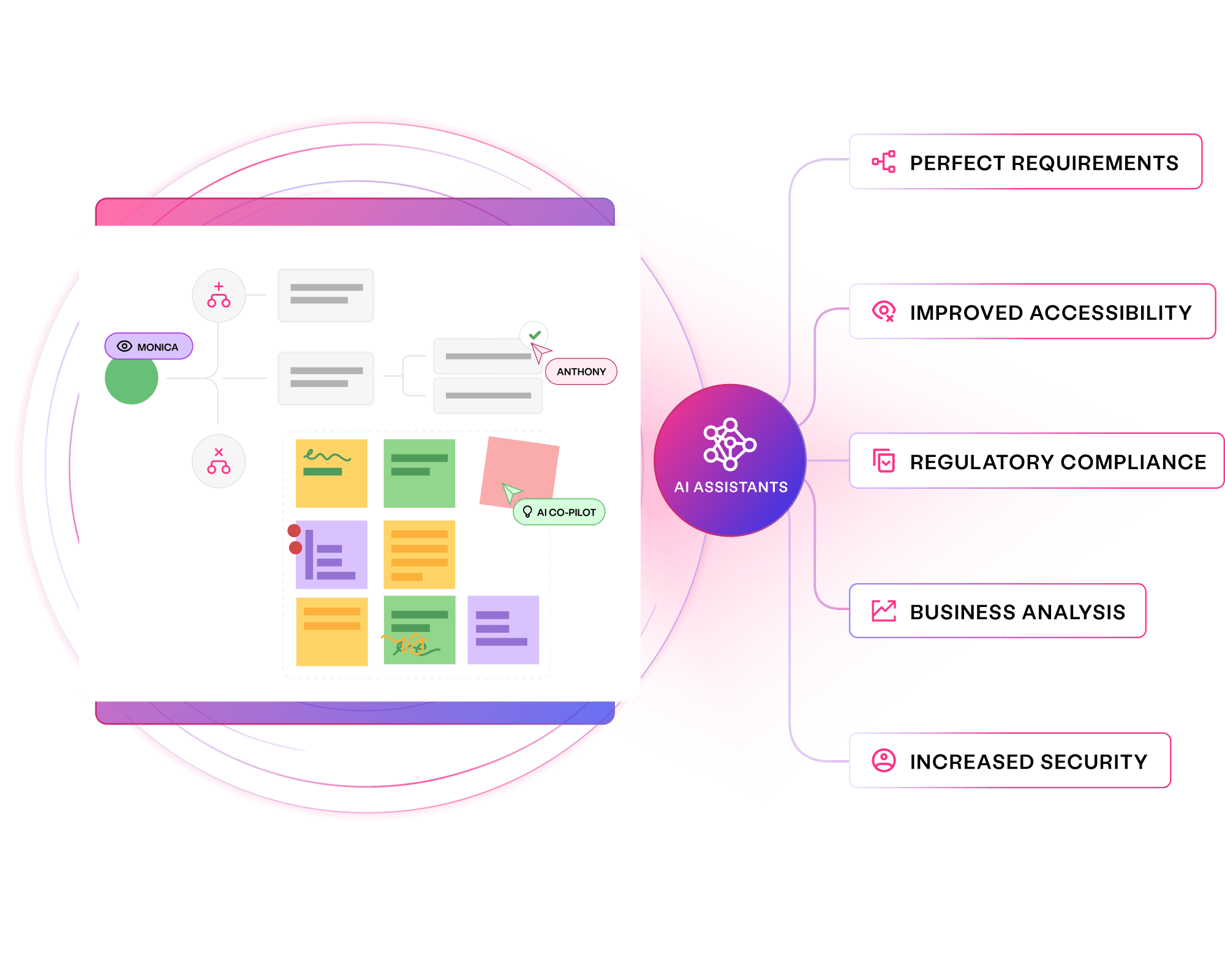

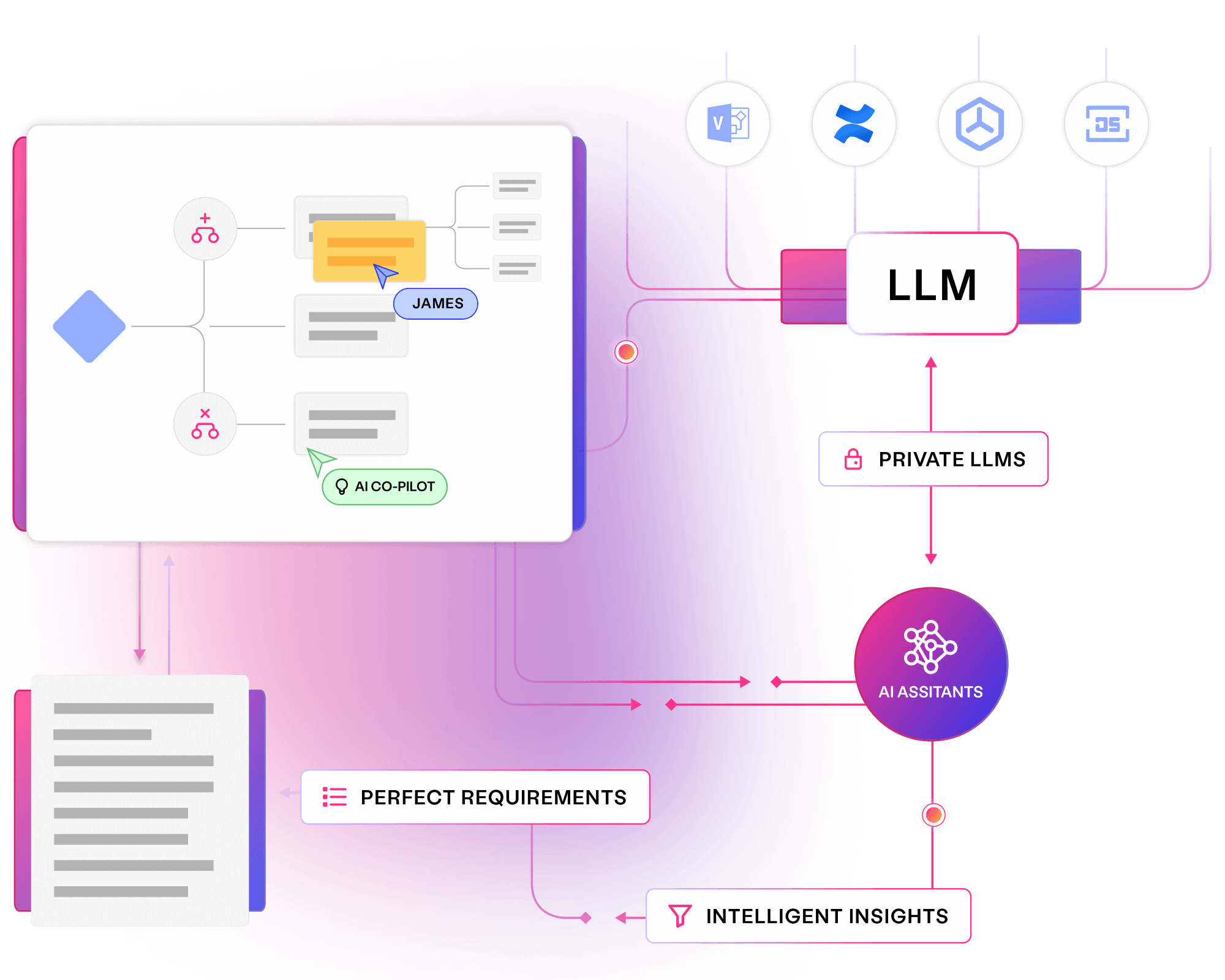

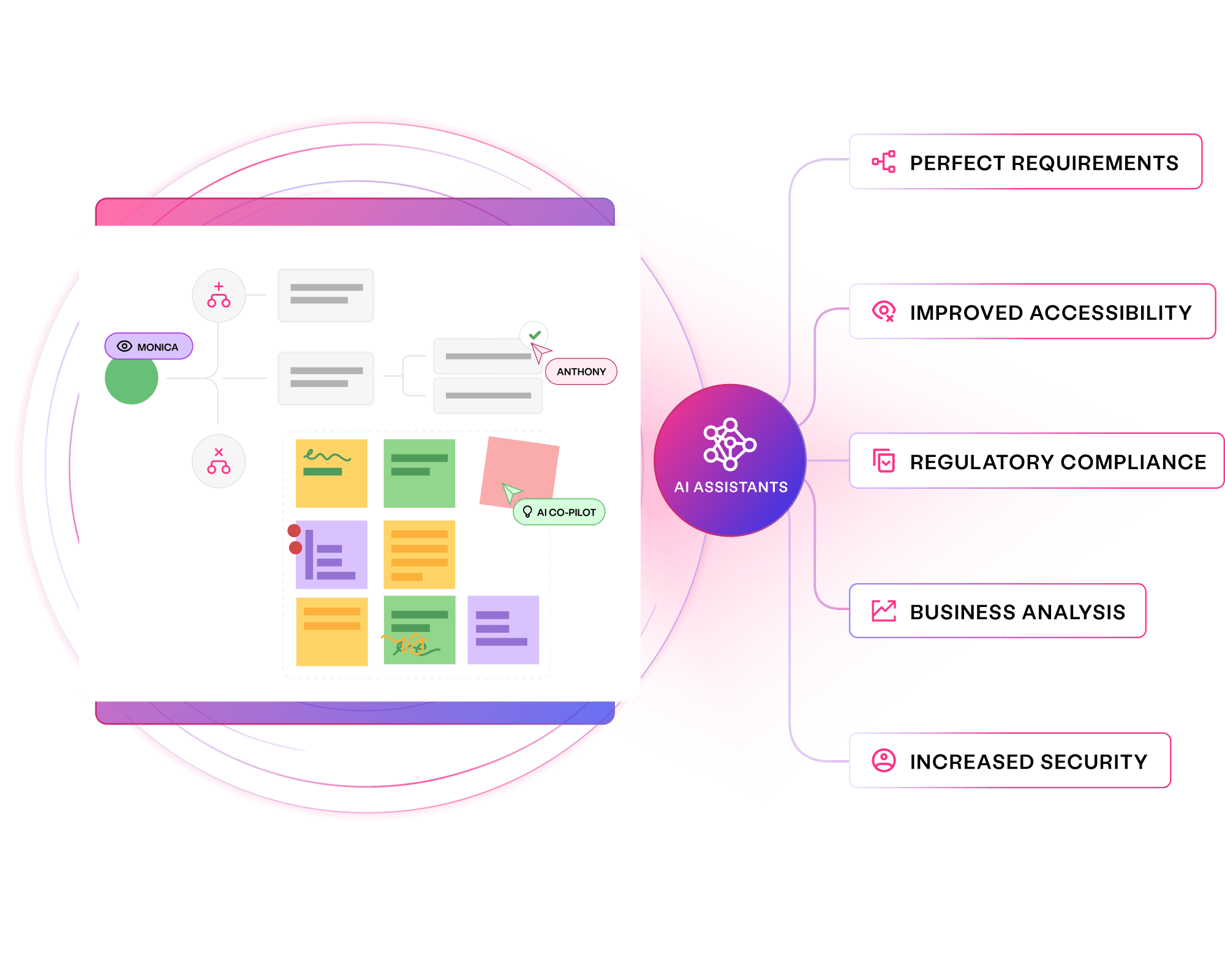

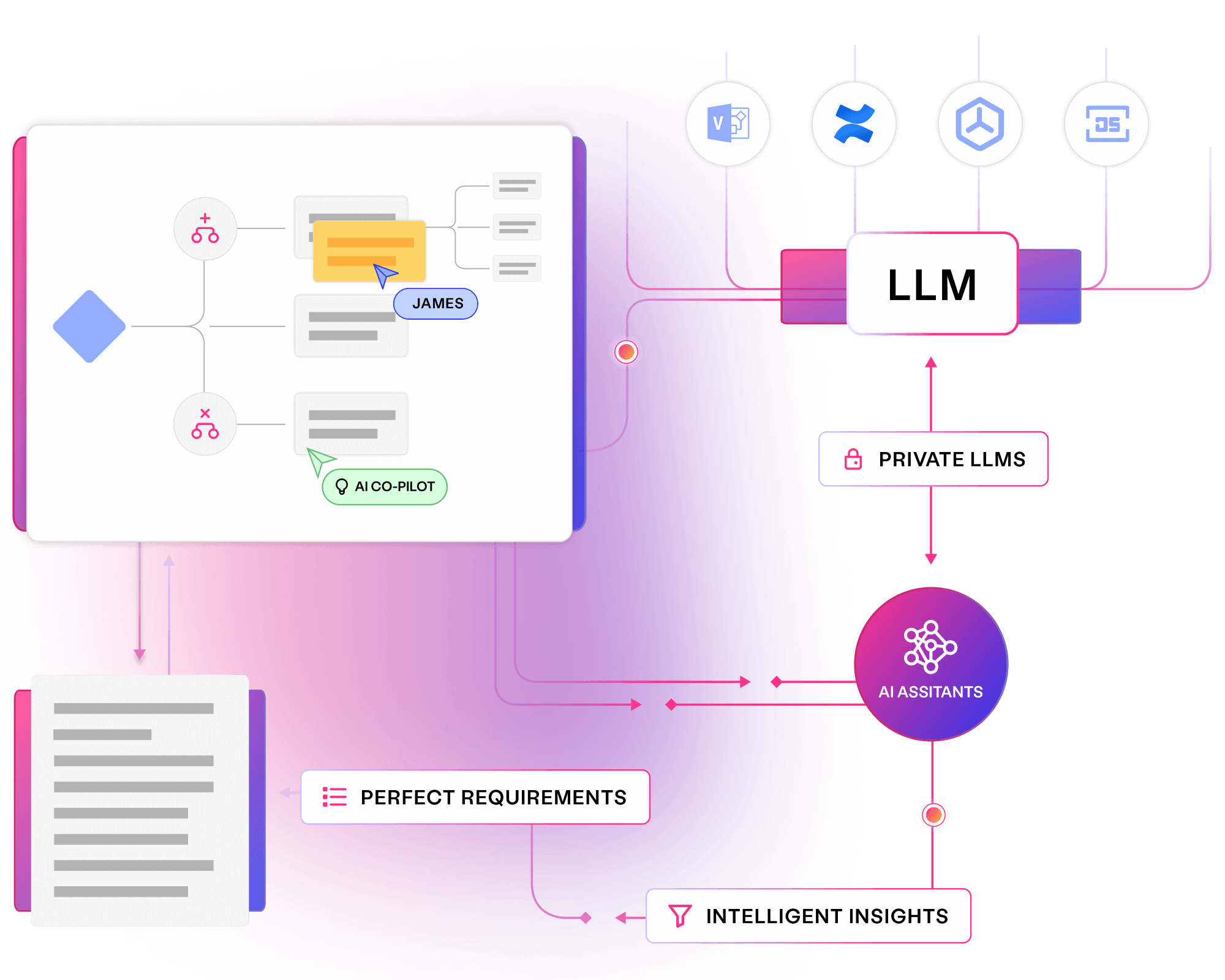

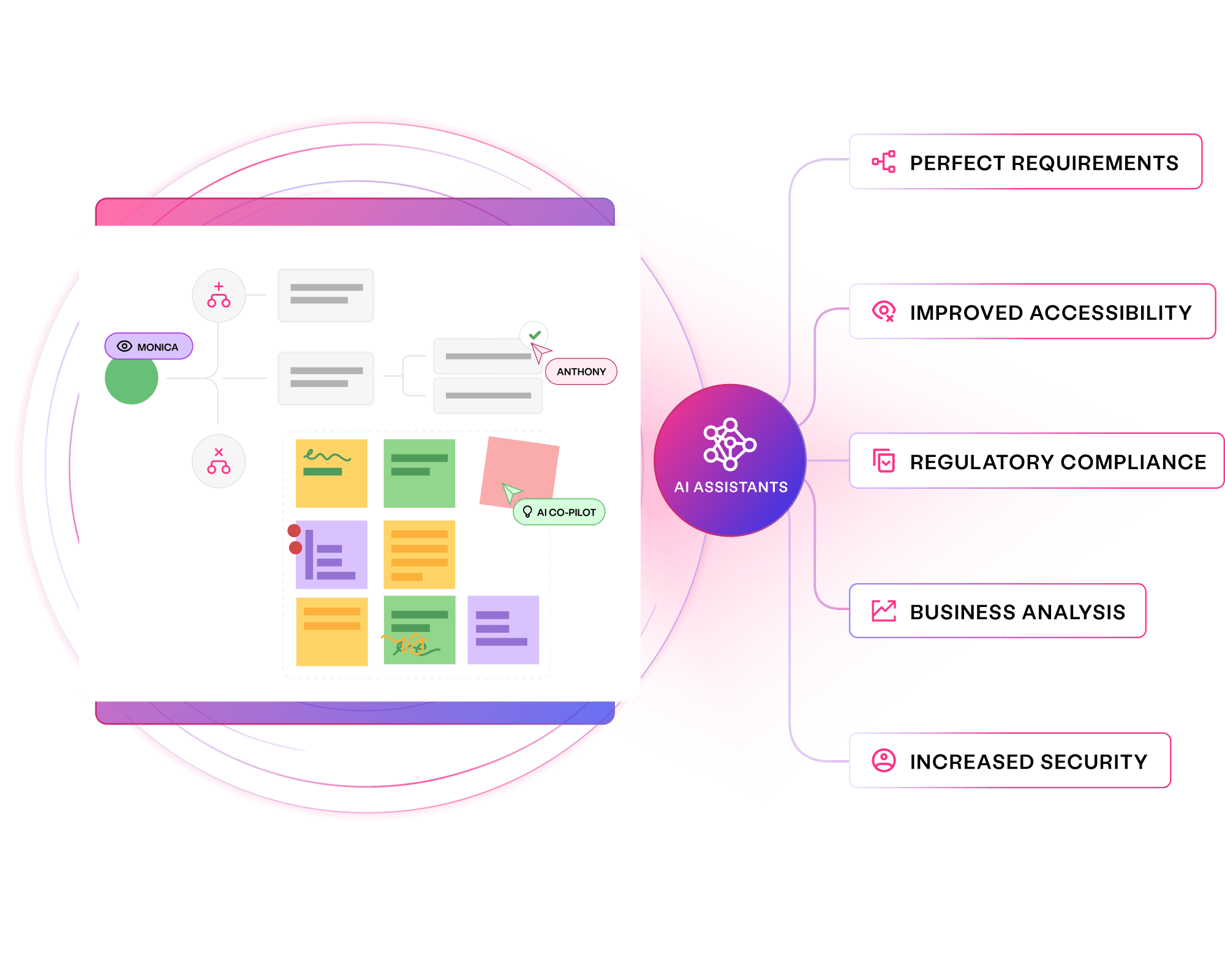

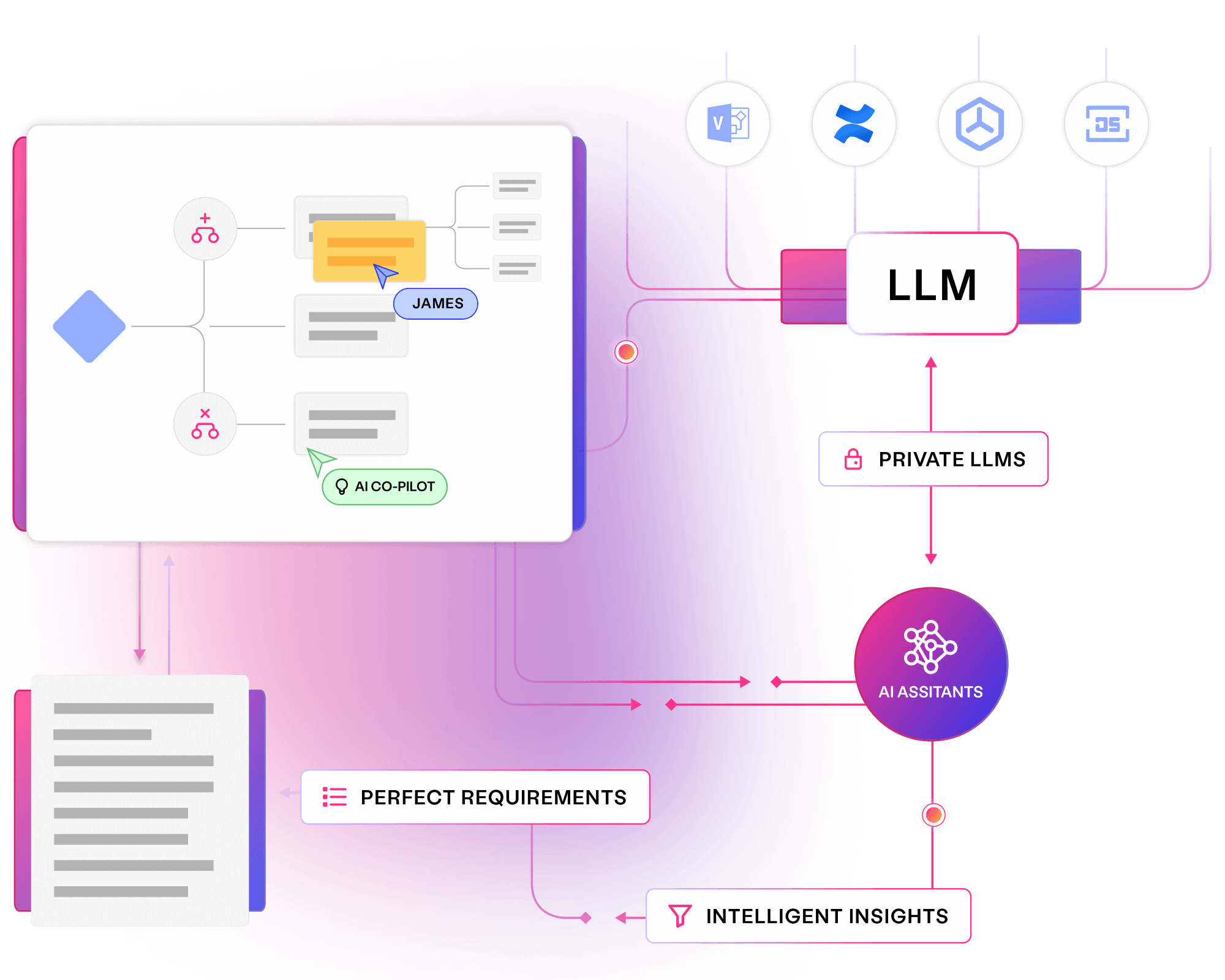

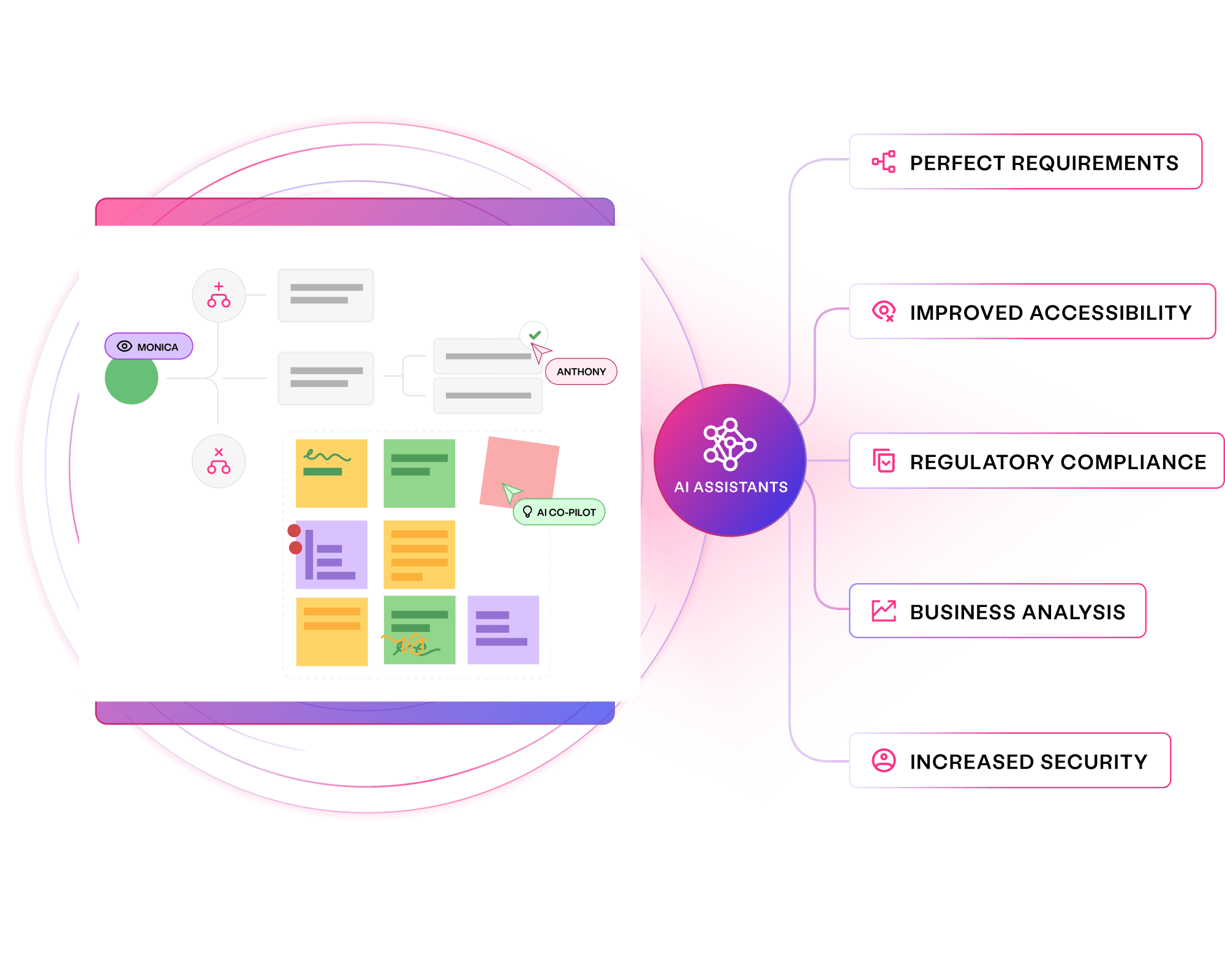

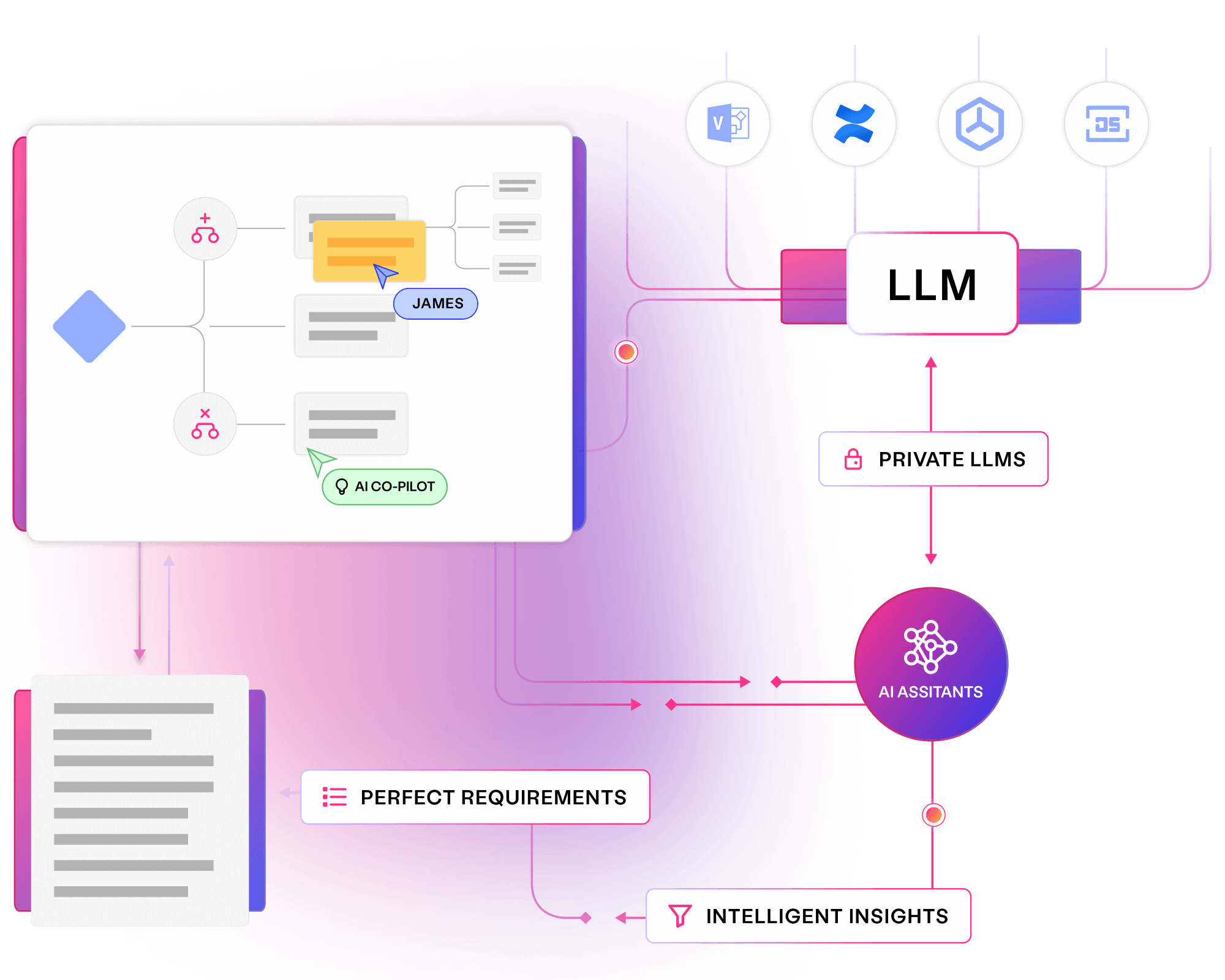

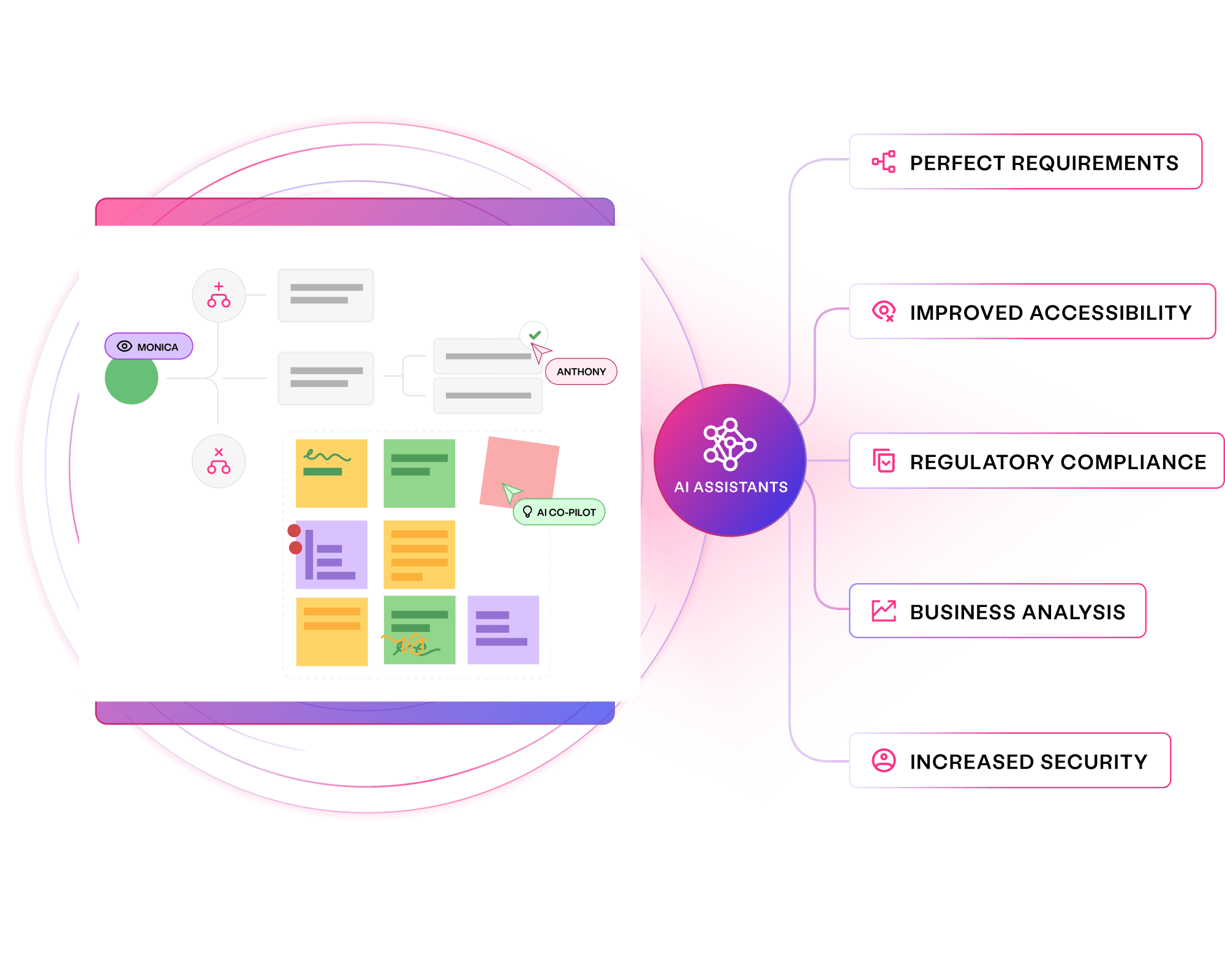

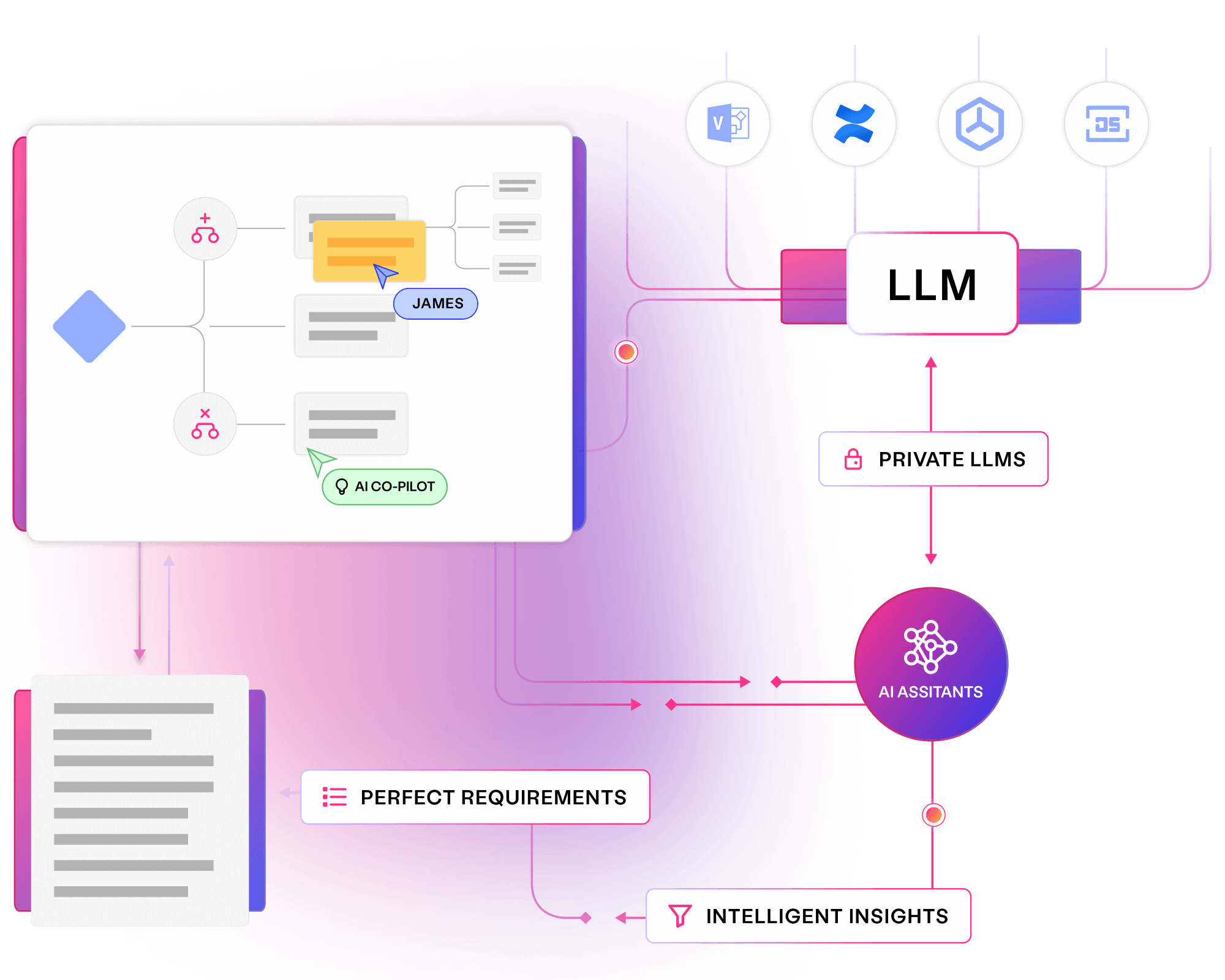

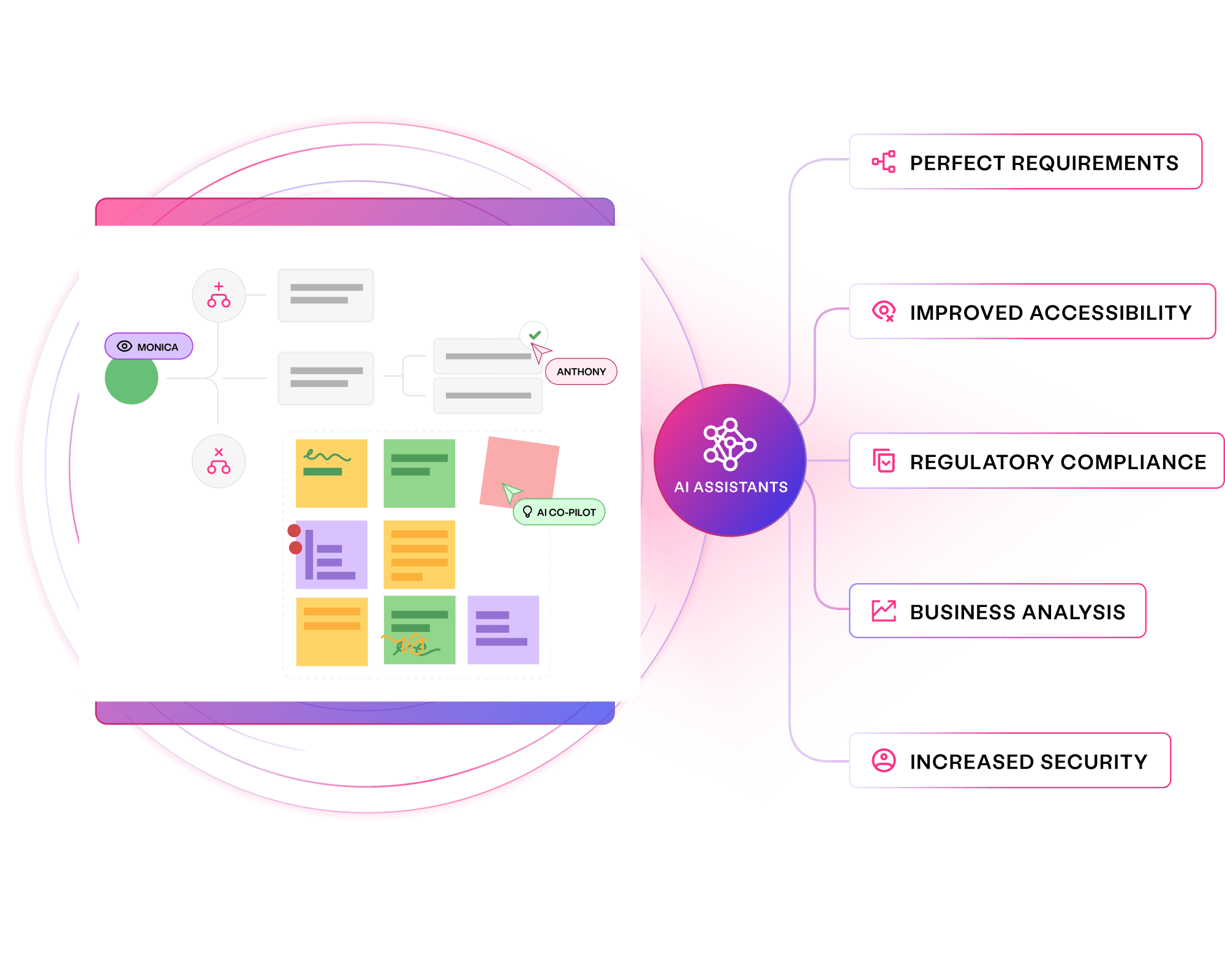

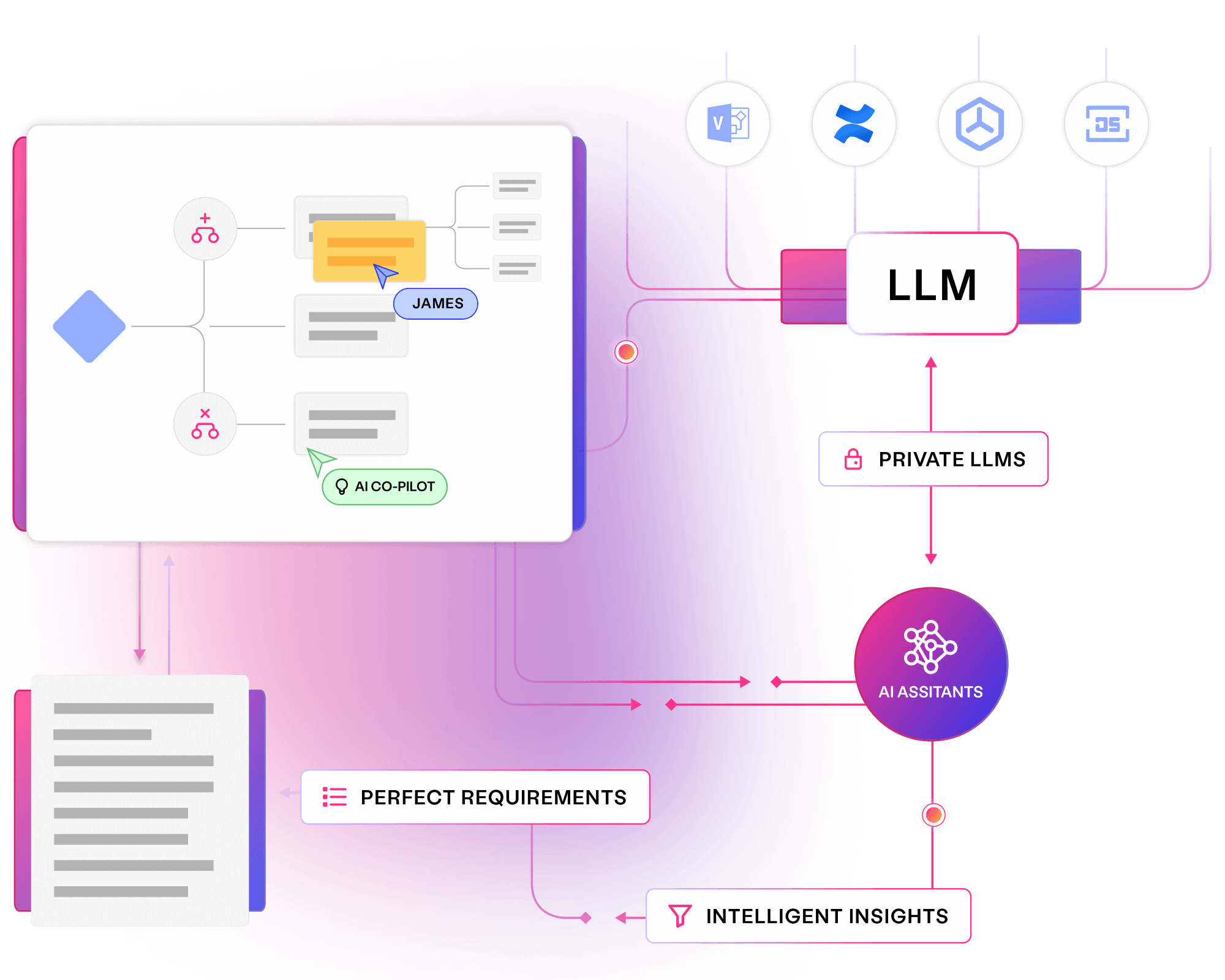

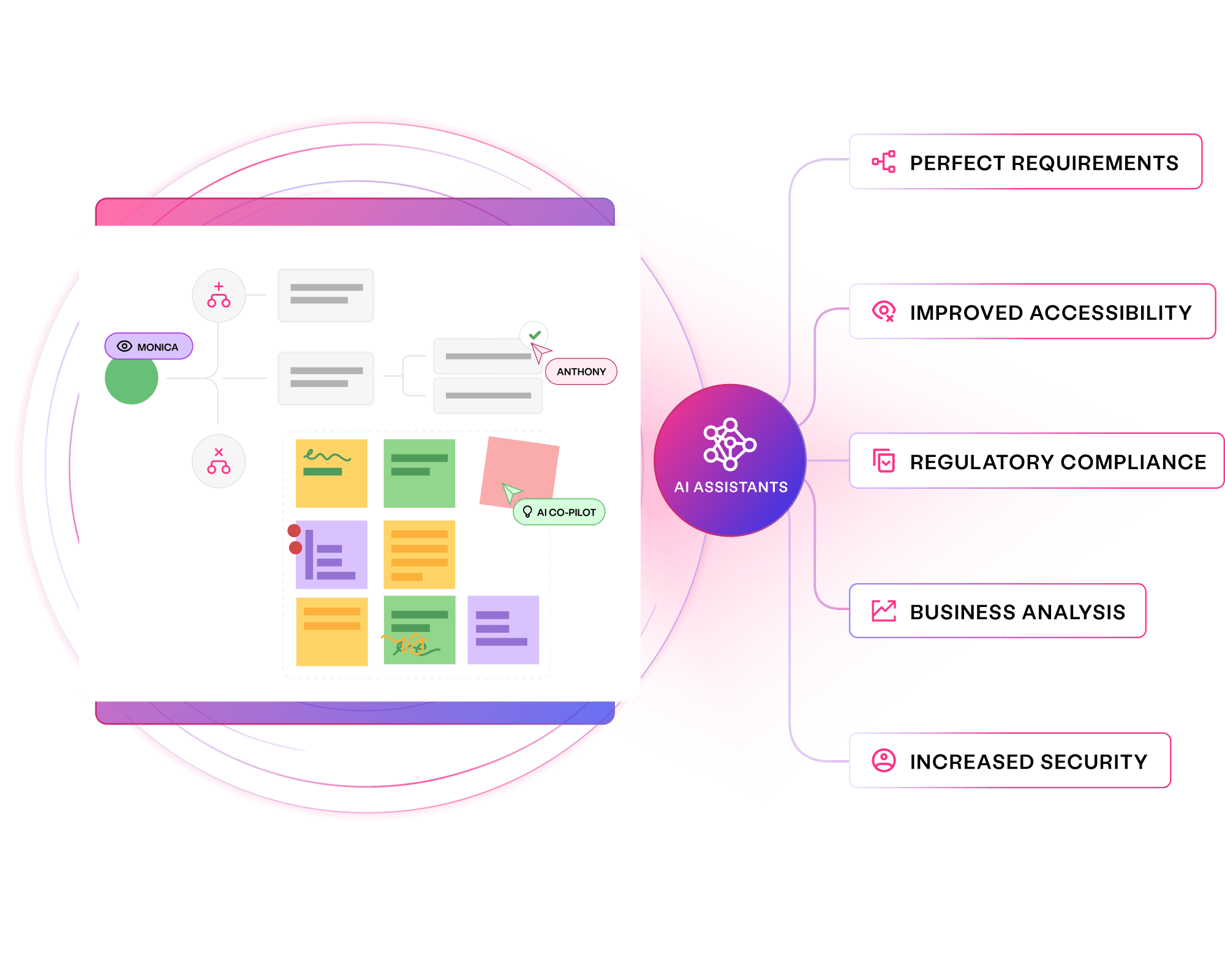

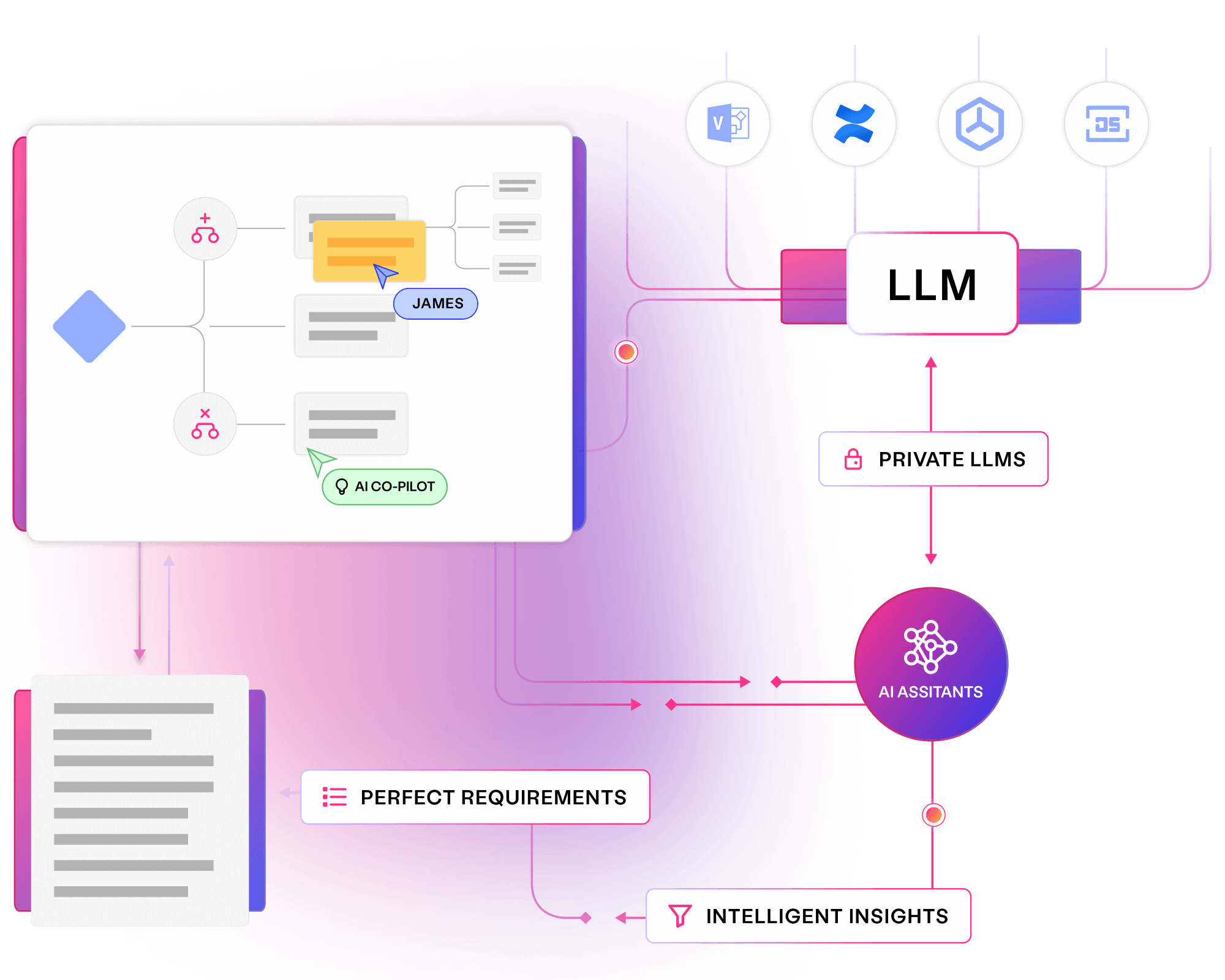

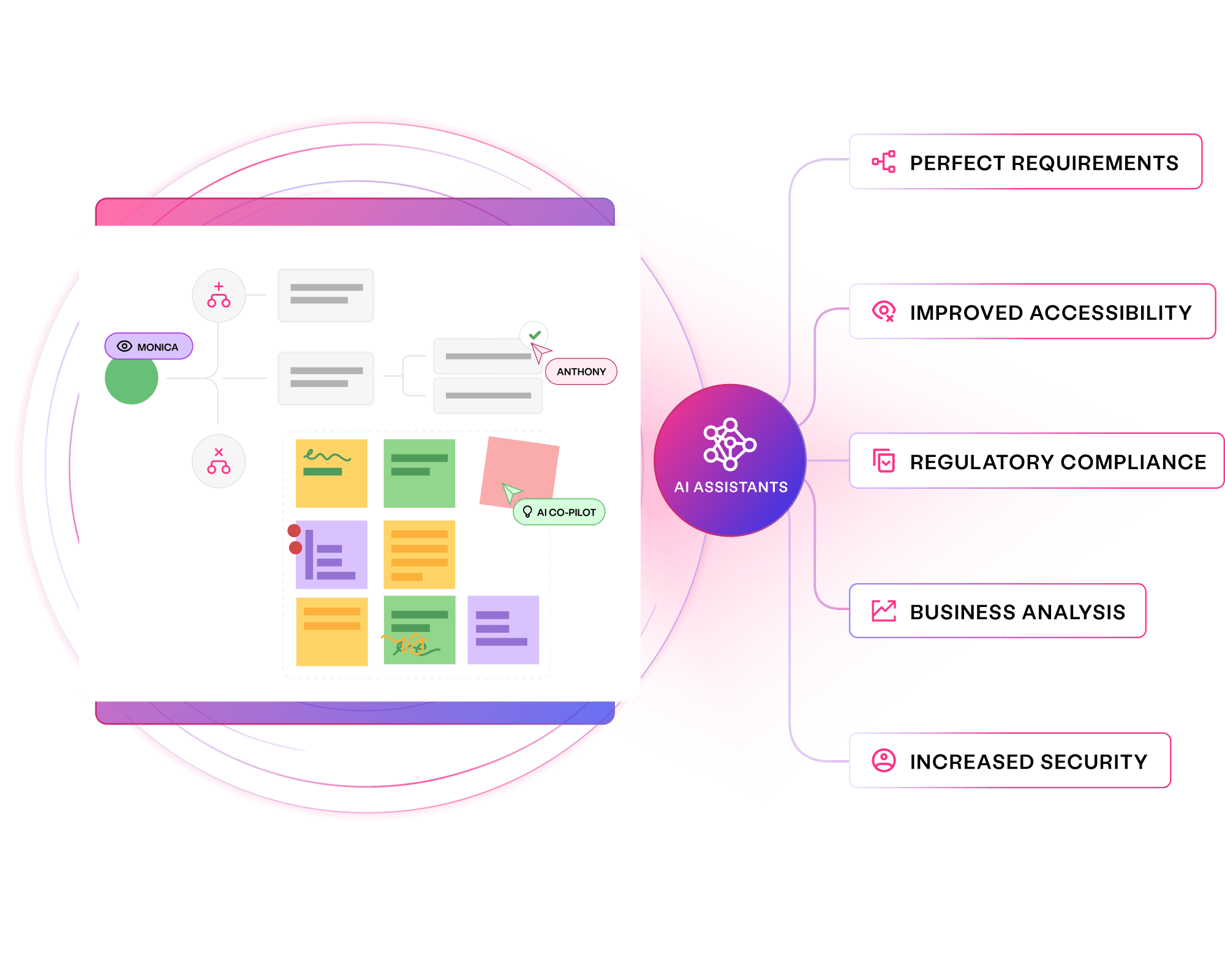

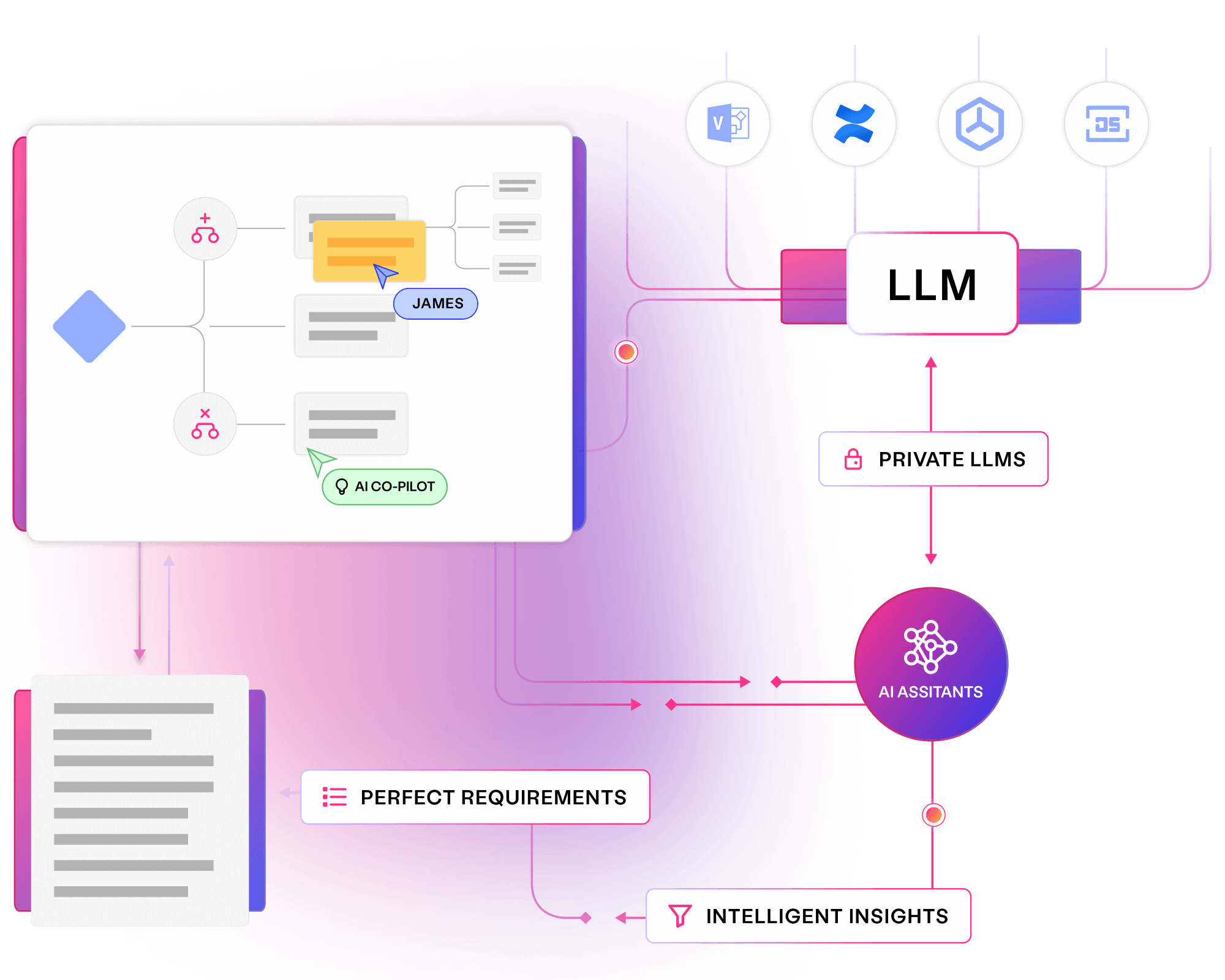

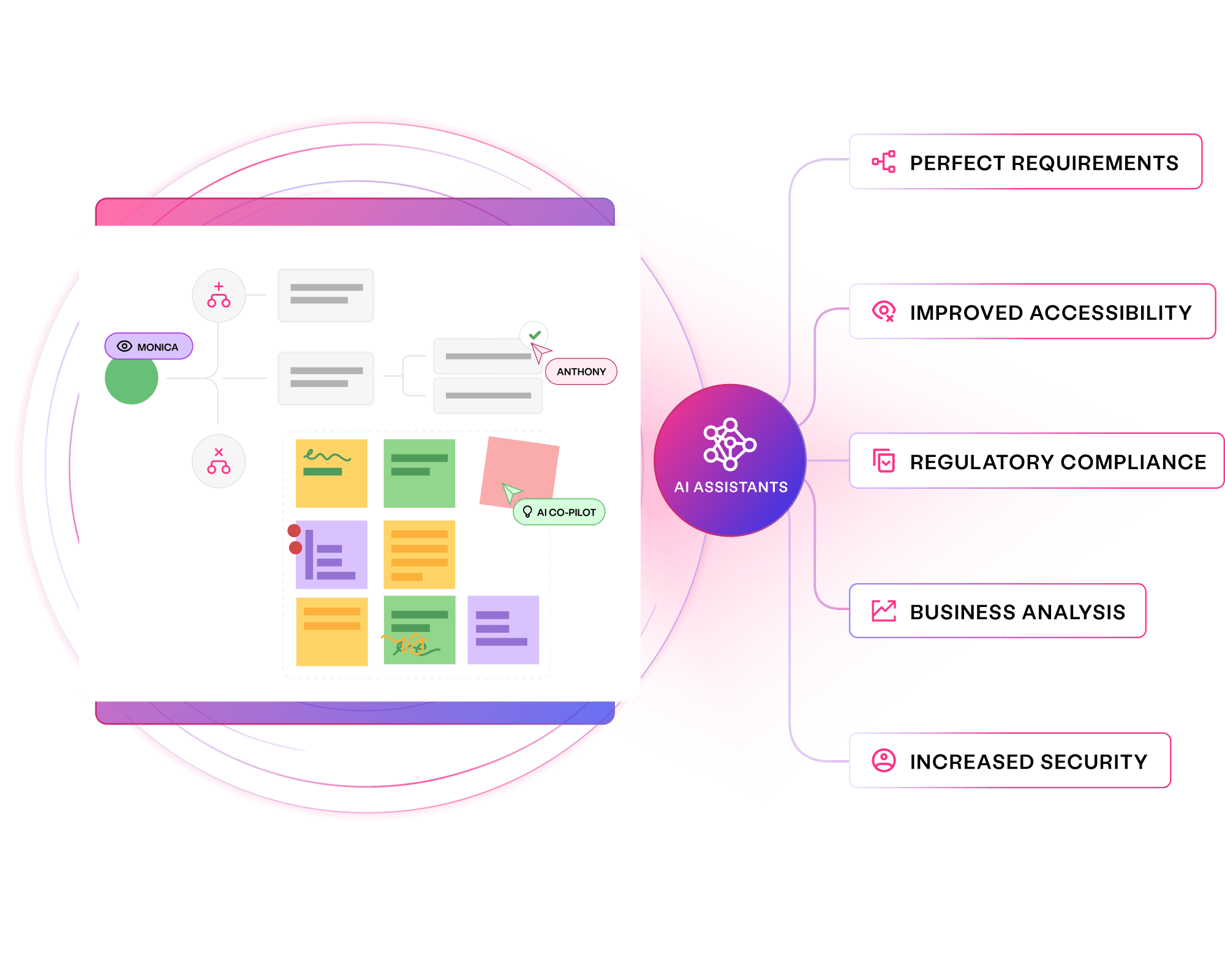

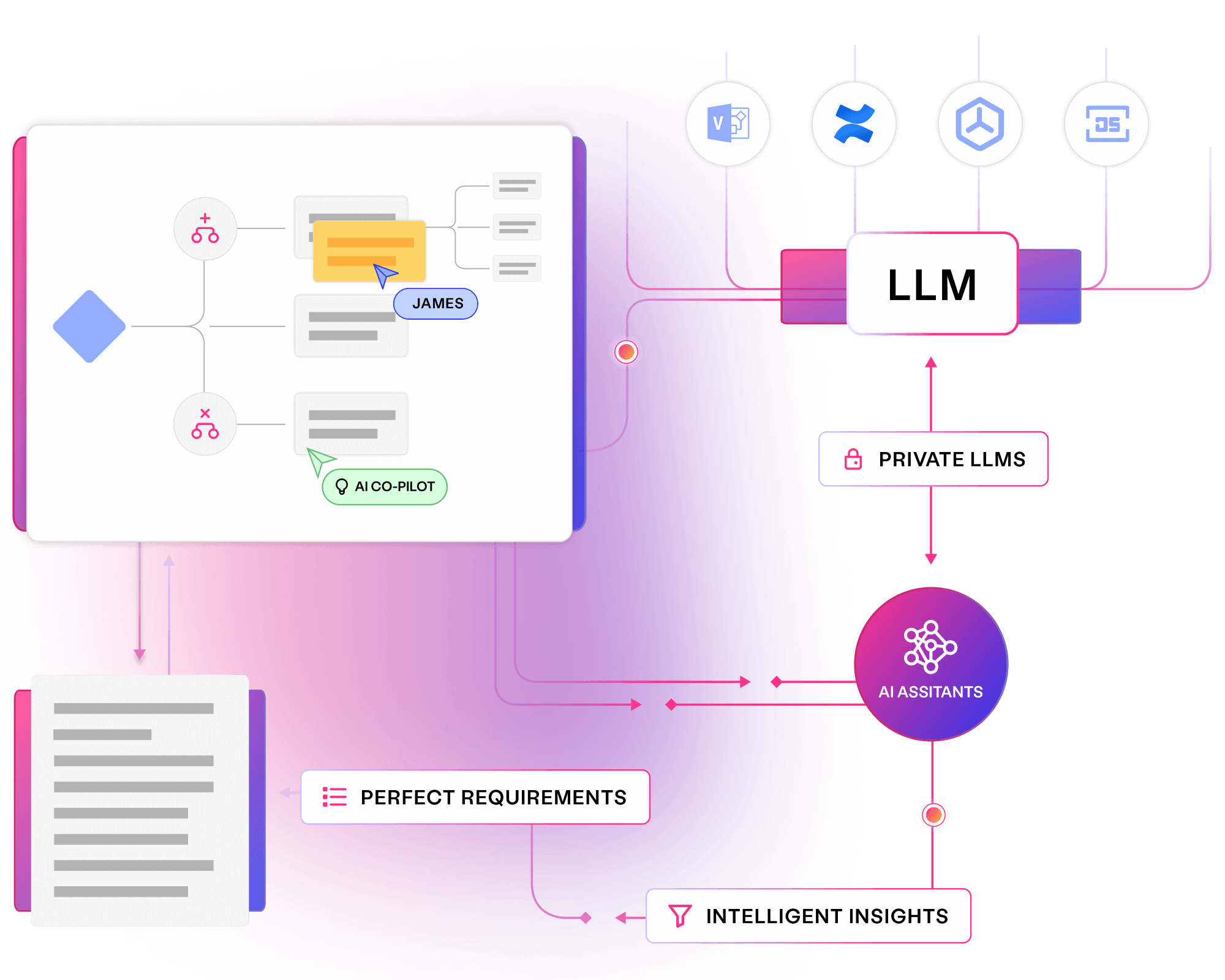

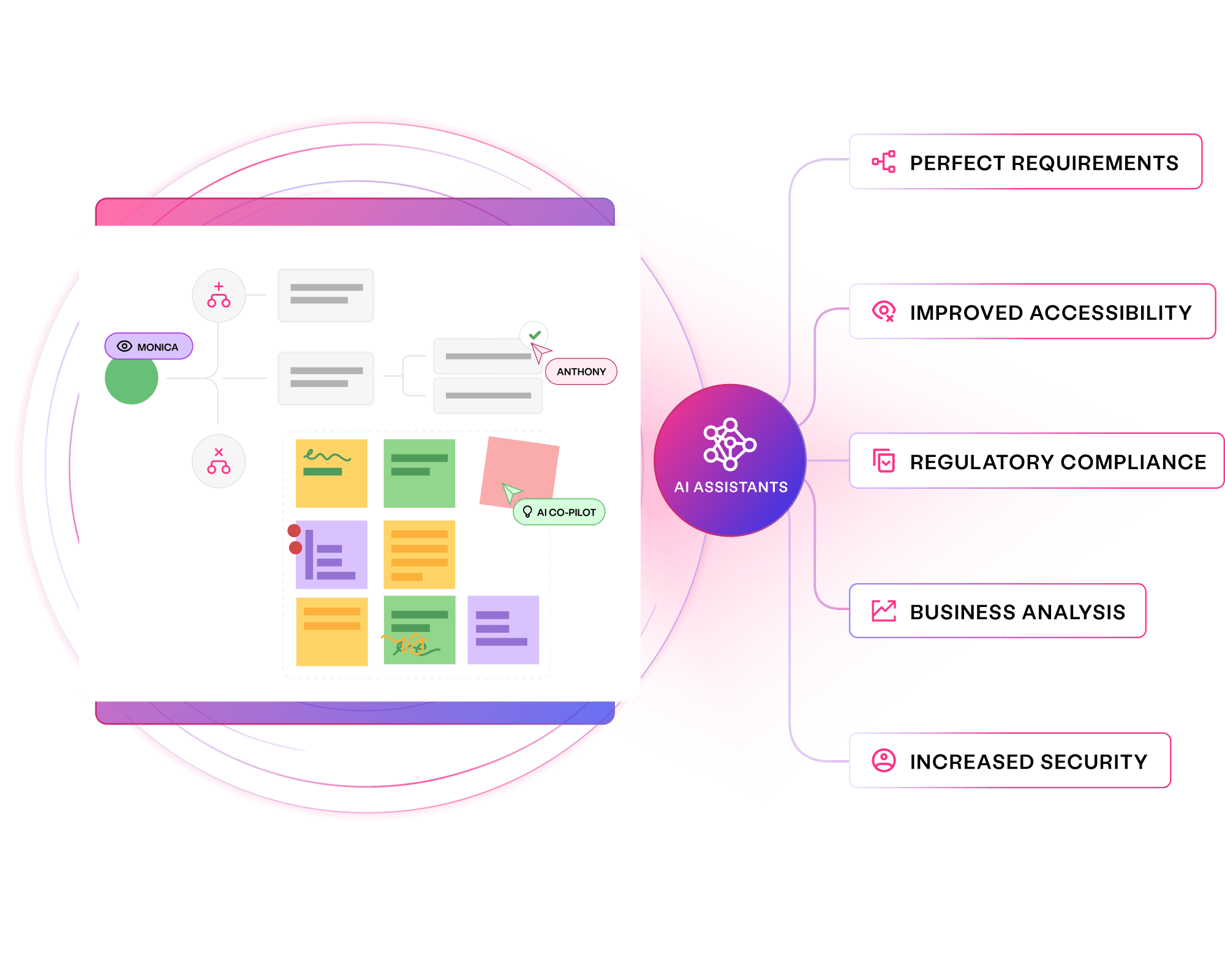

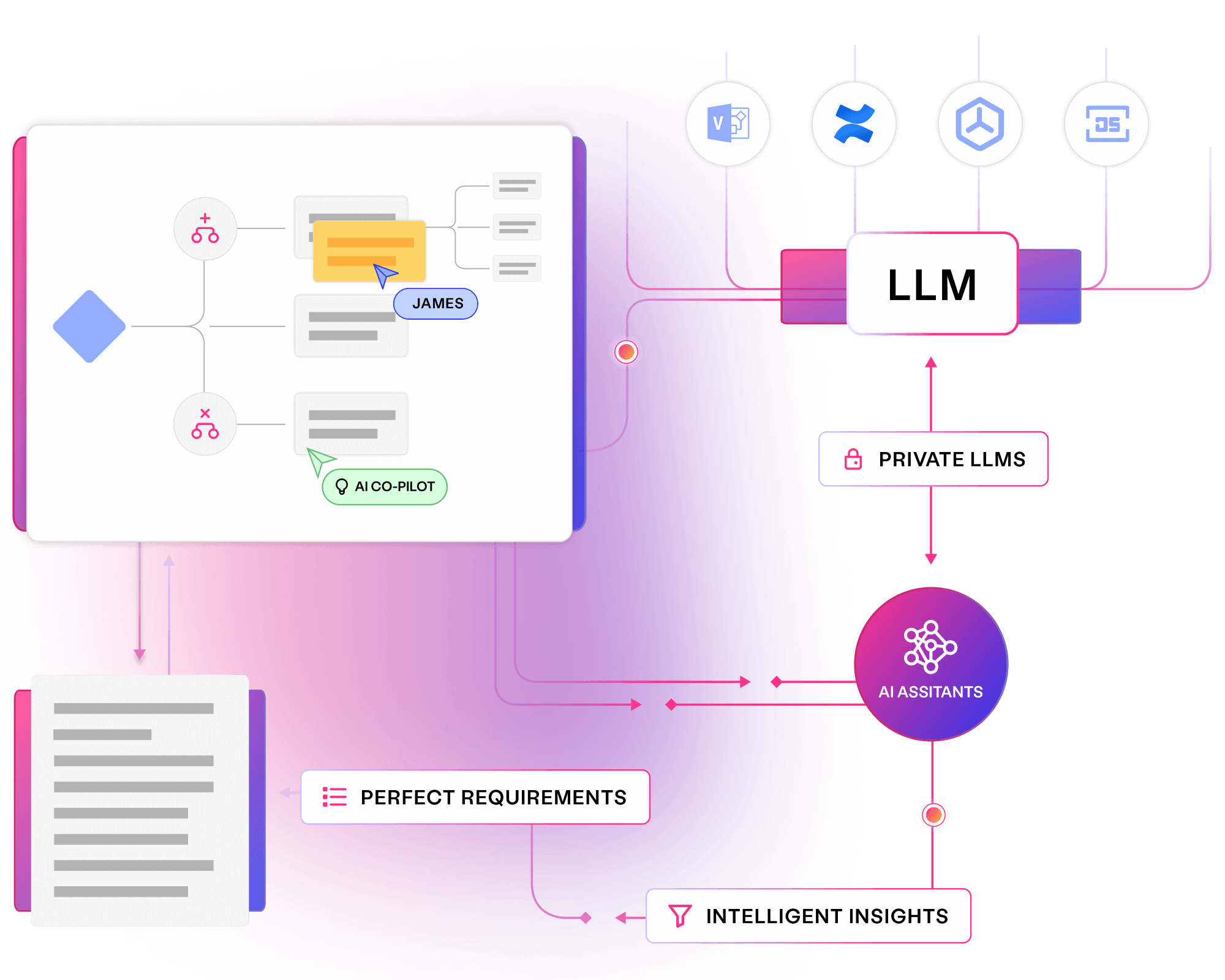

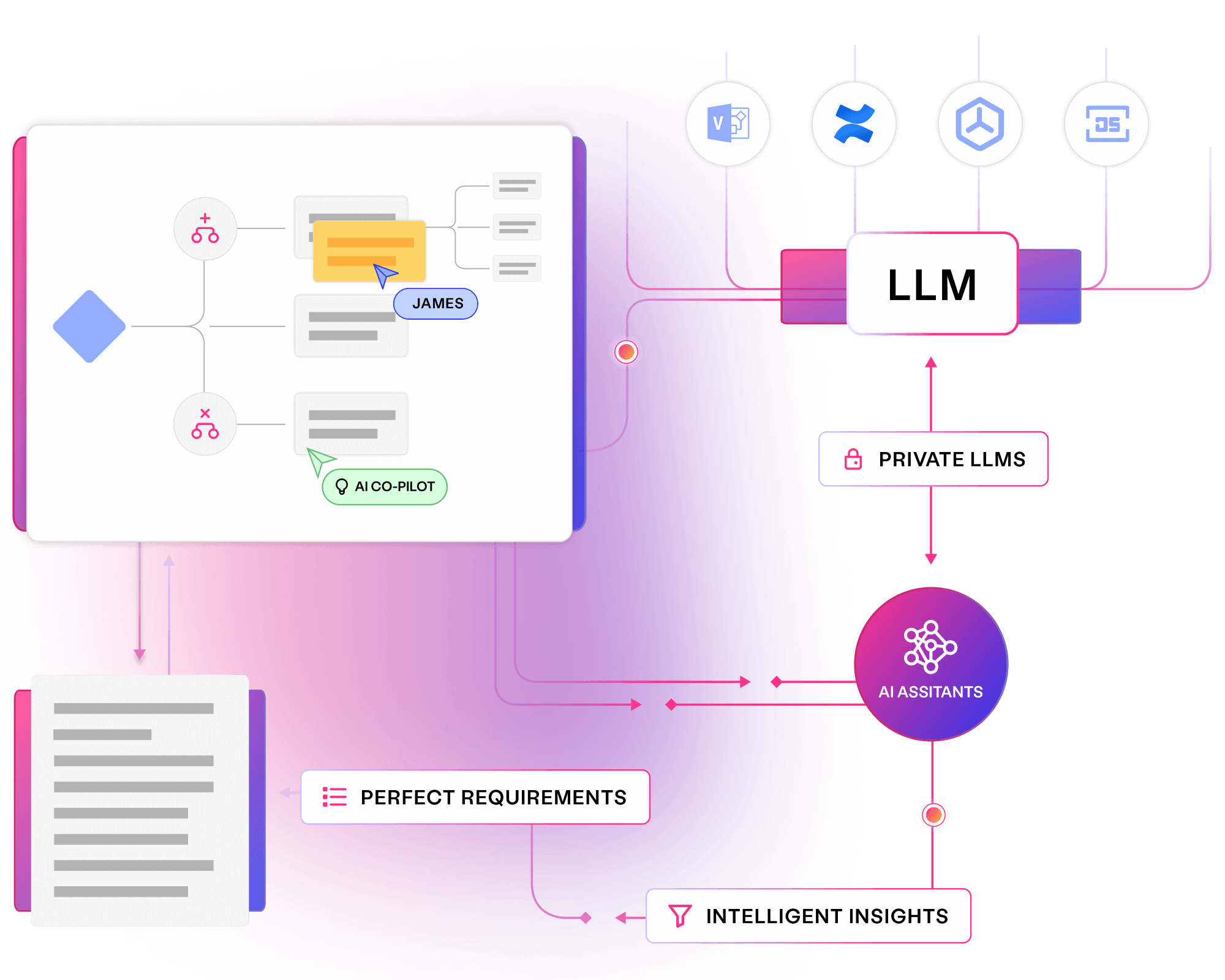

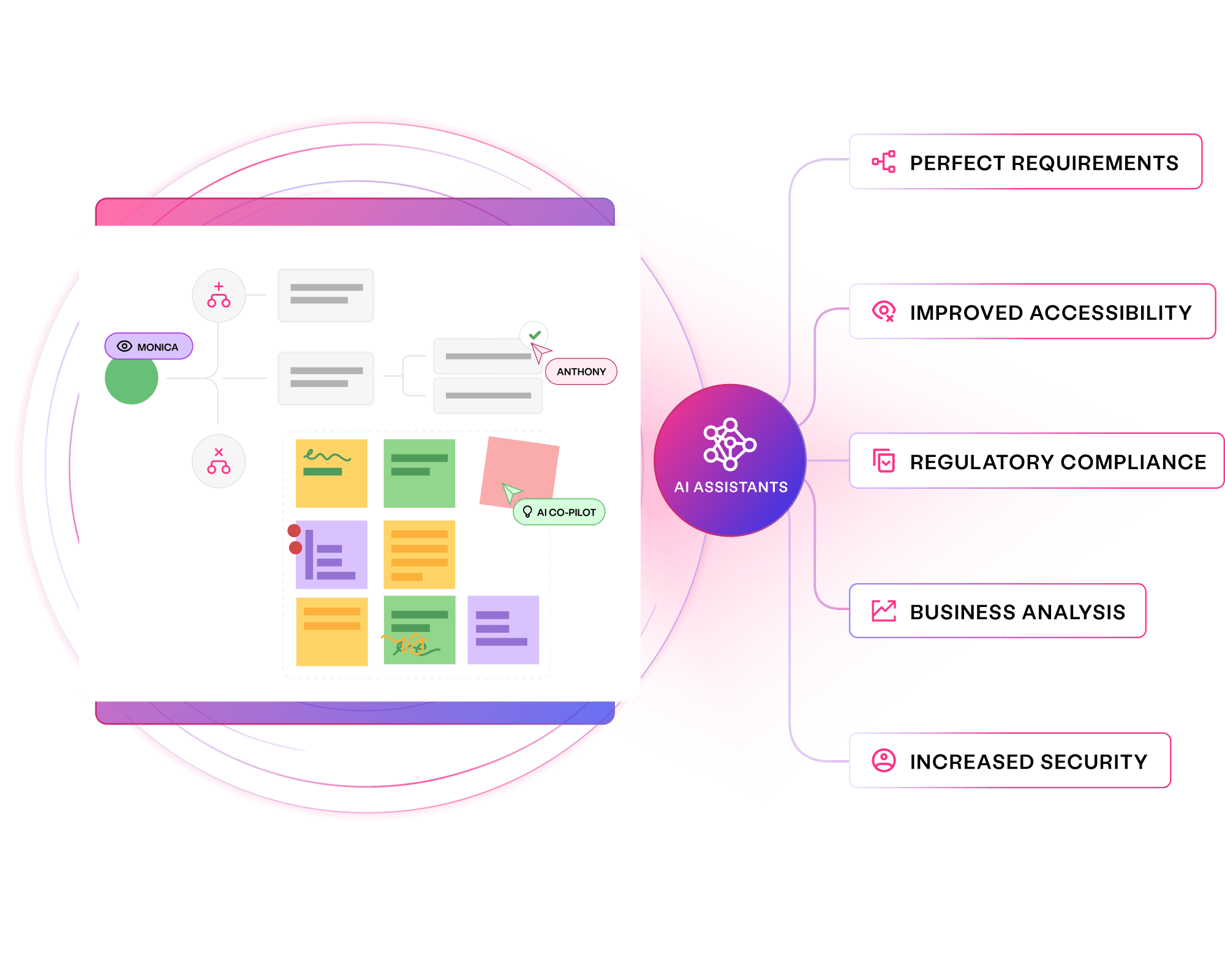

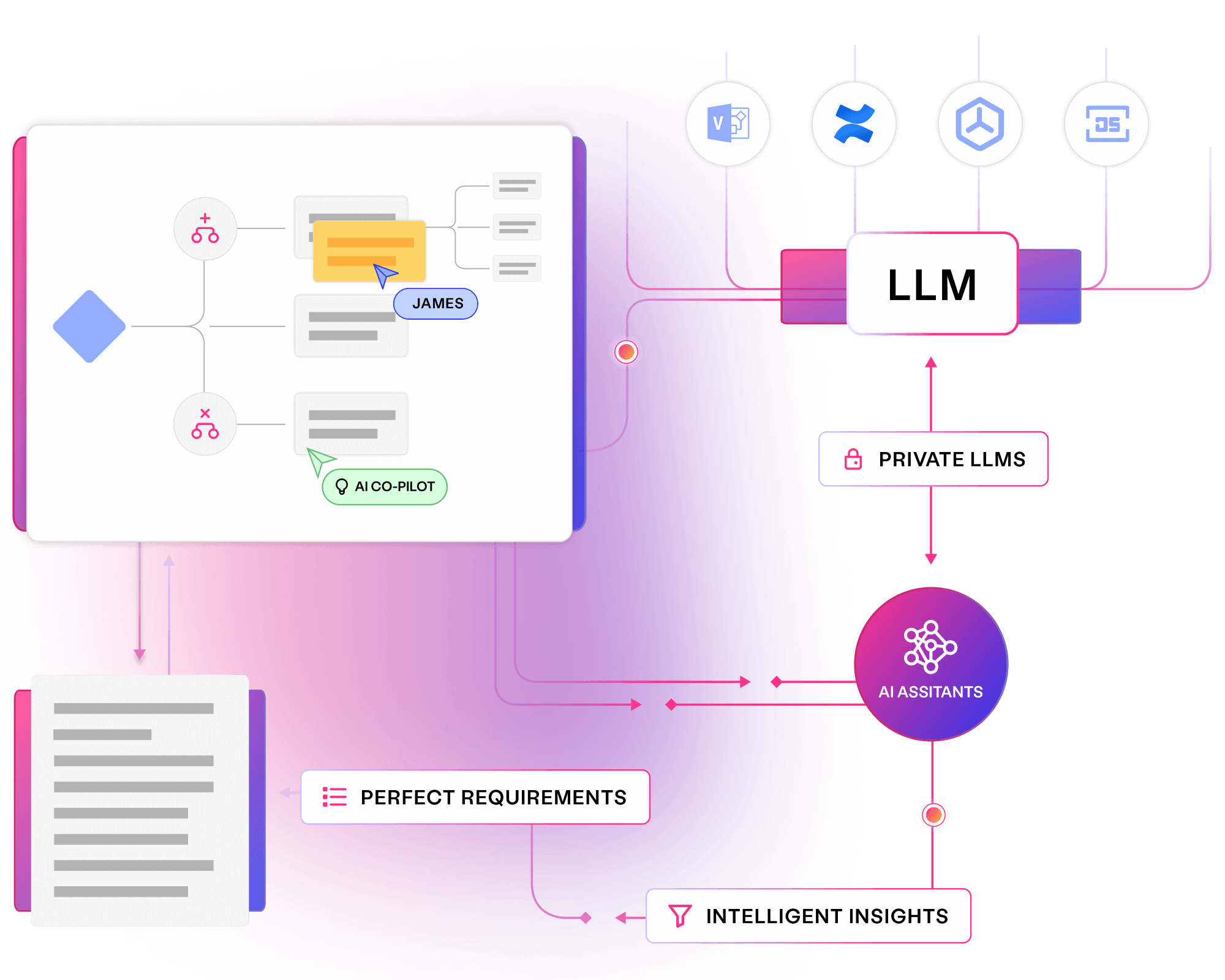

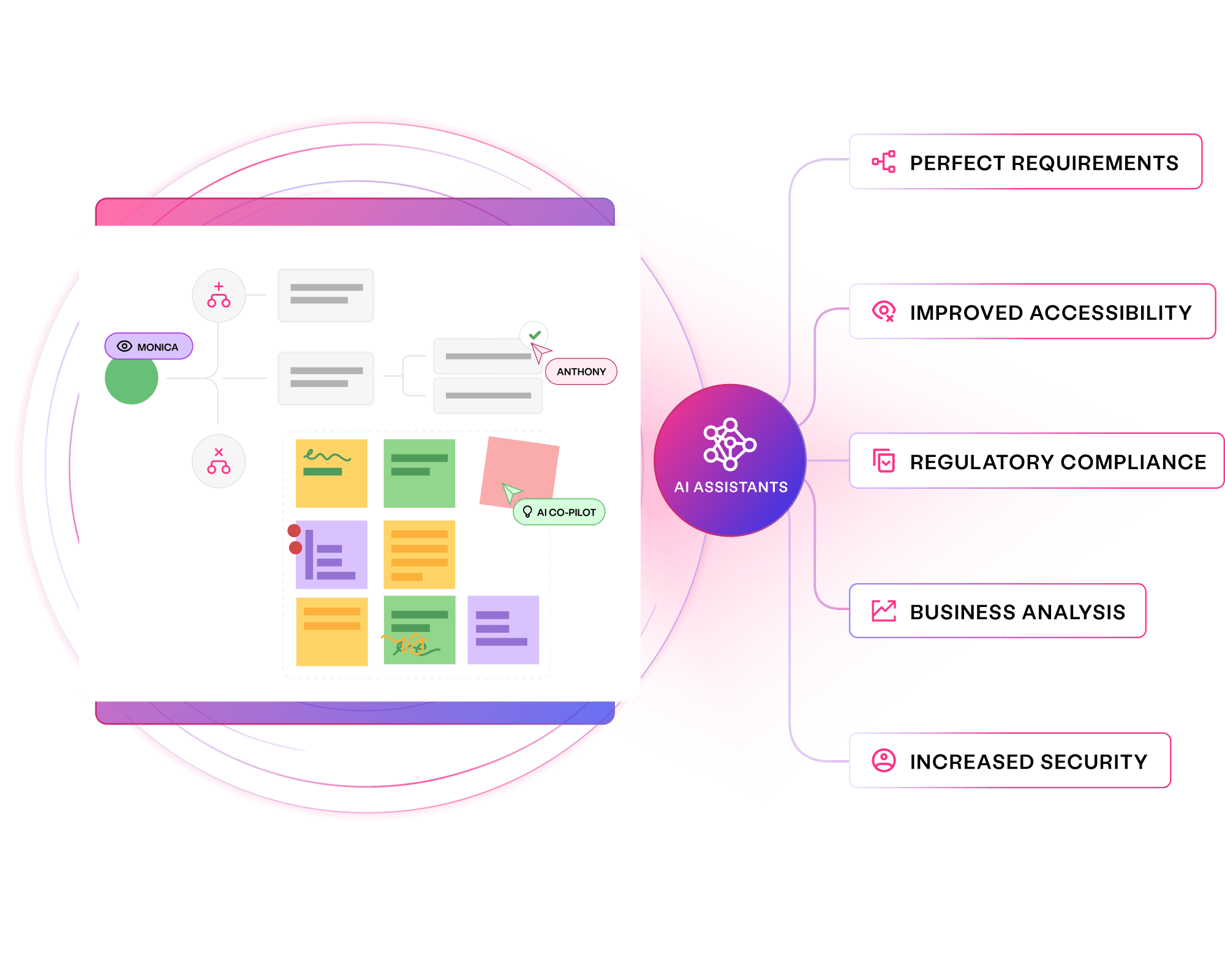

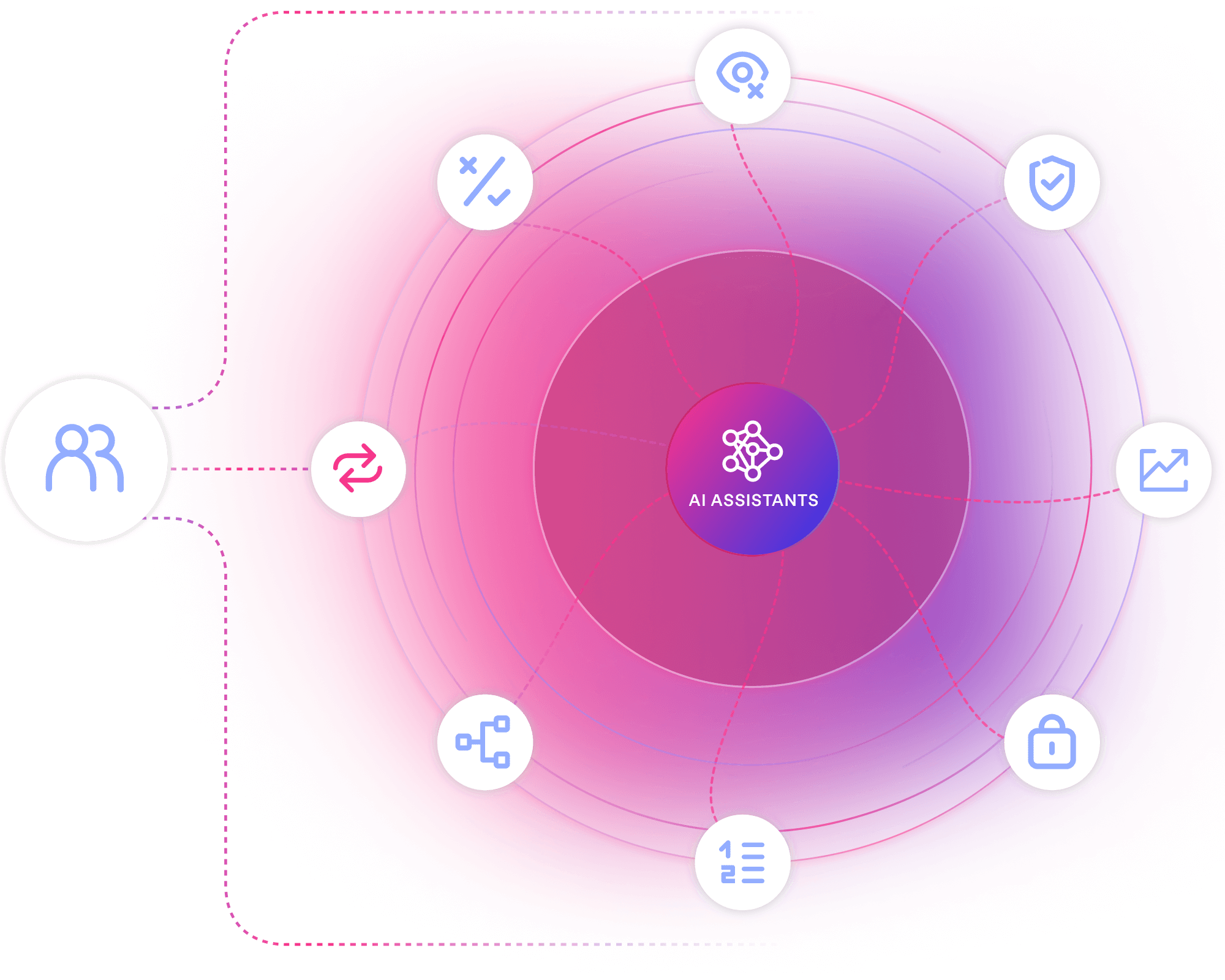

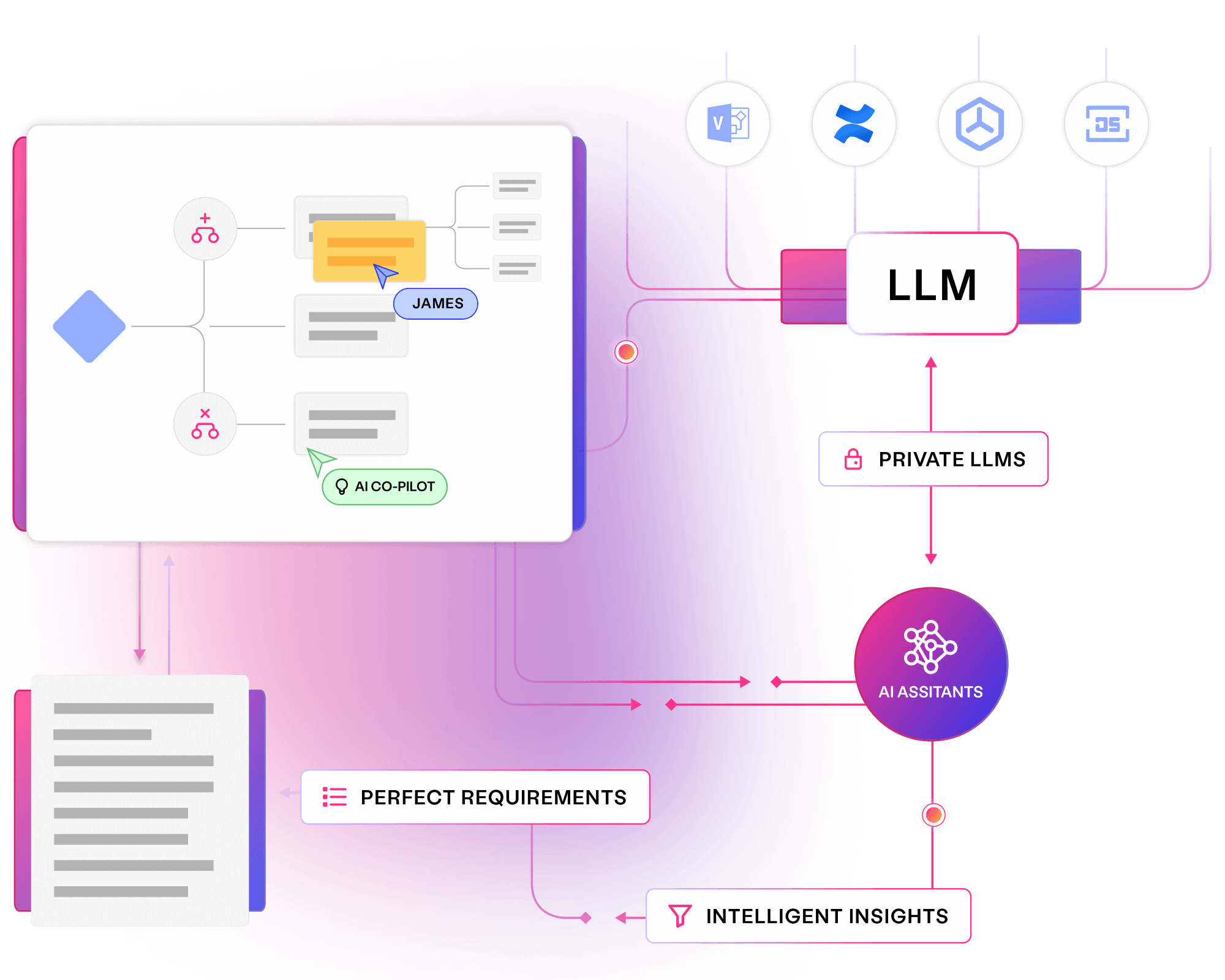

Provide the domain expertise and specialist feedback your teams need. AI assistants pay off technical debt, provide on-demand clarity, and augment your application landscape.

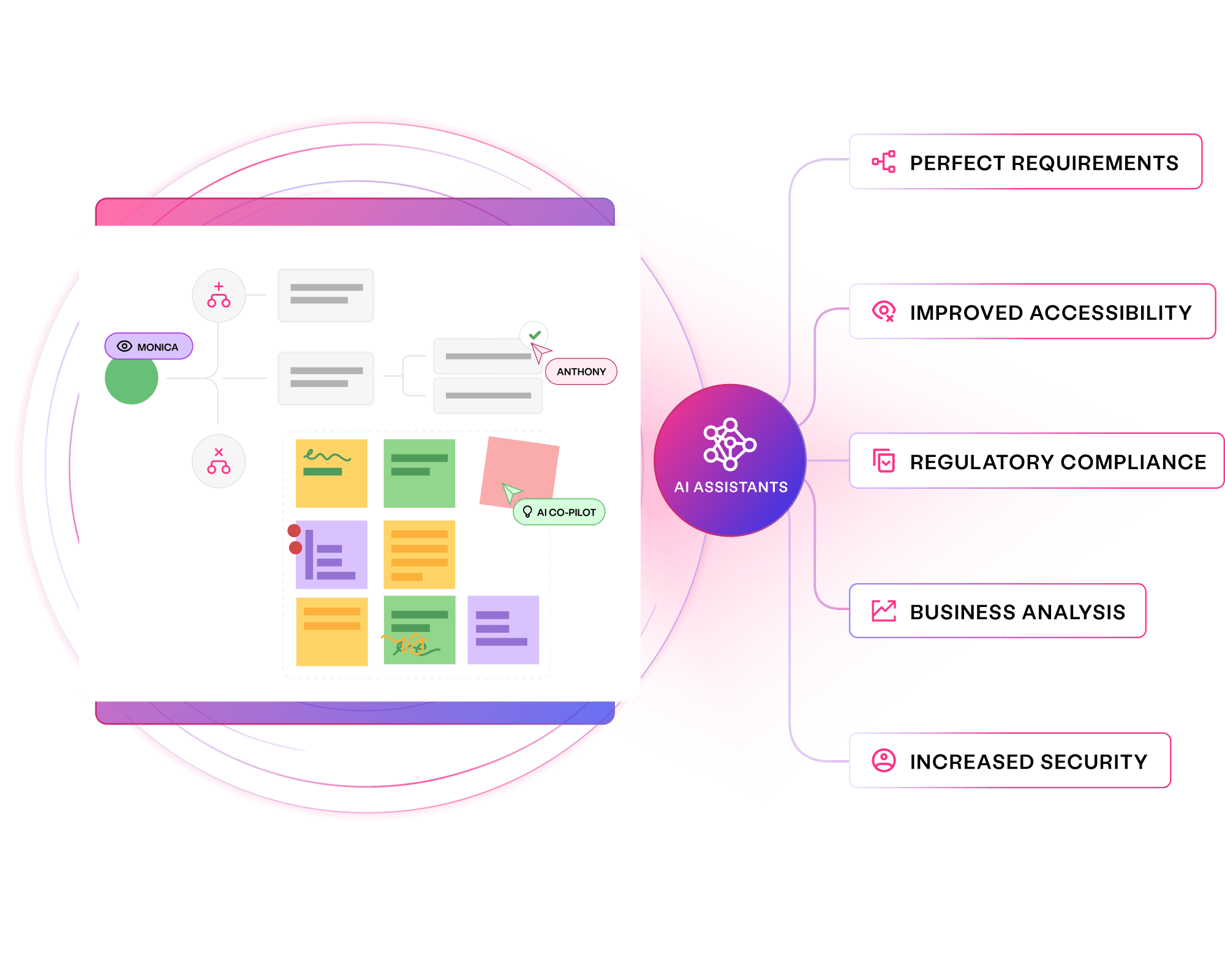

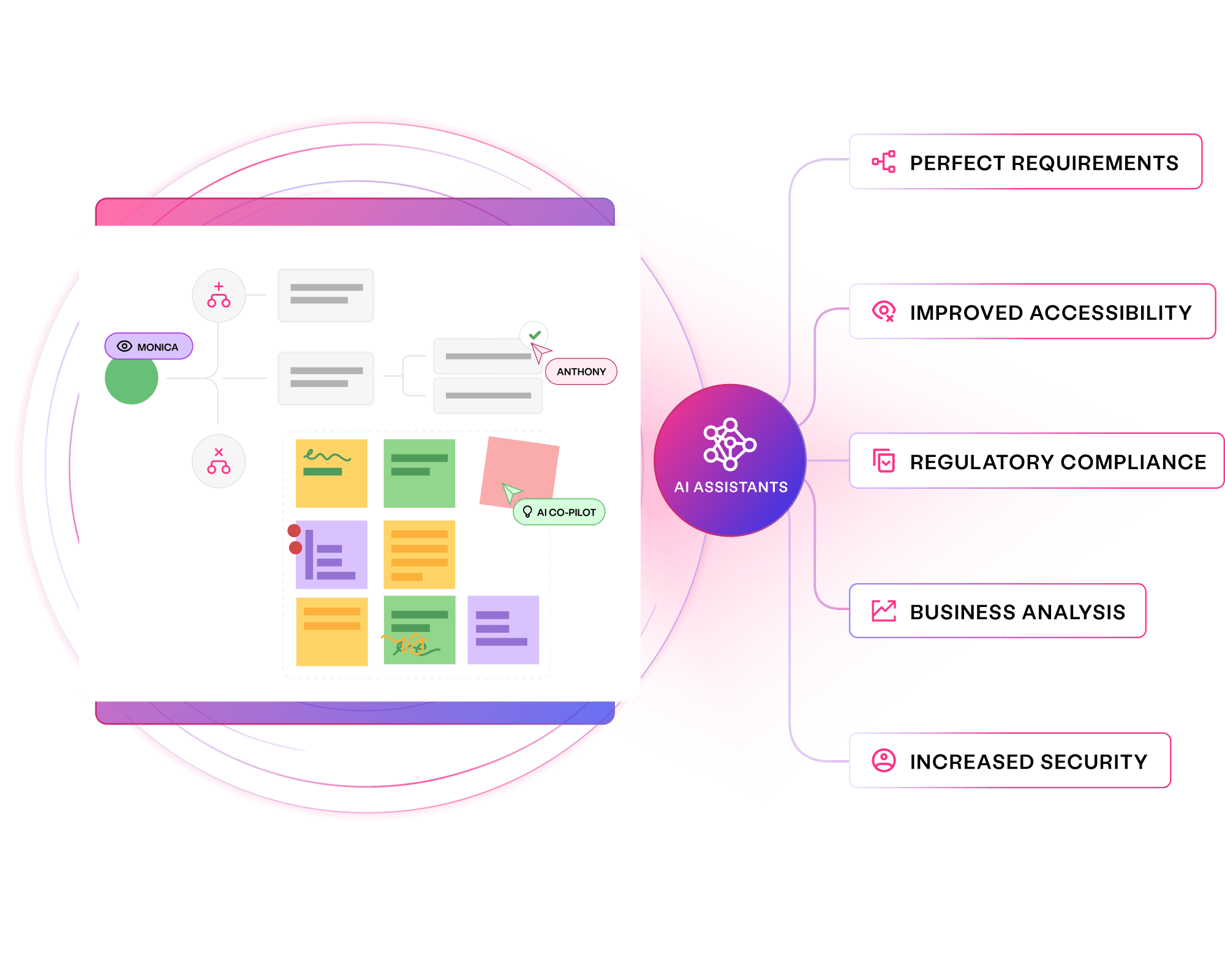

Collaborate with virtual experts to drive quality

Provide specialist knowledge on demand

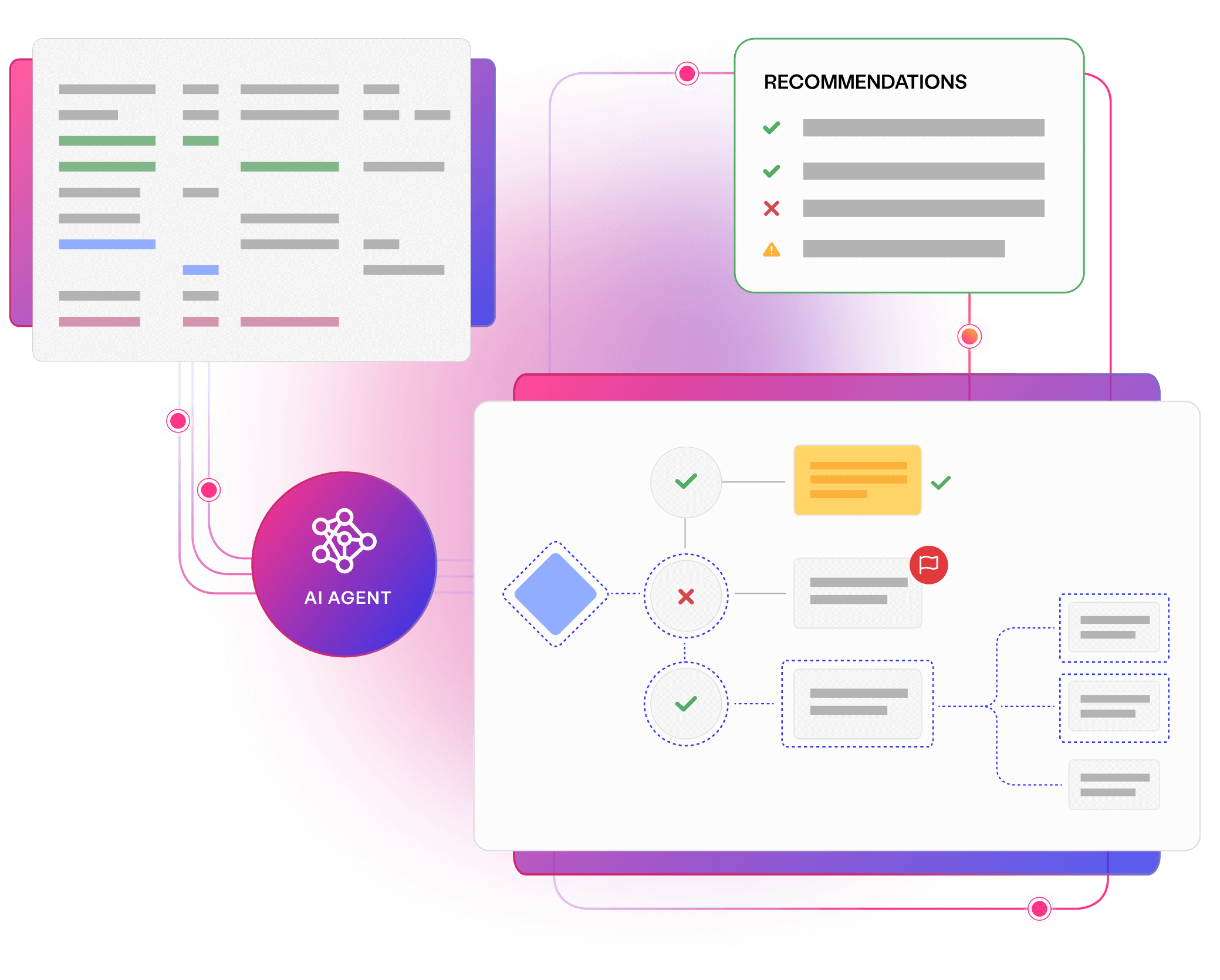

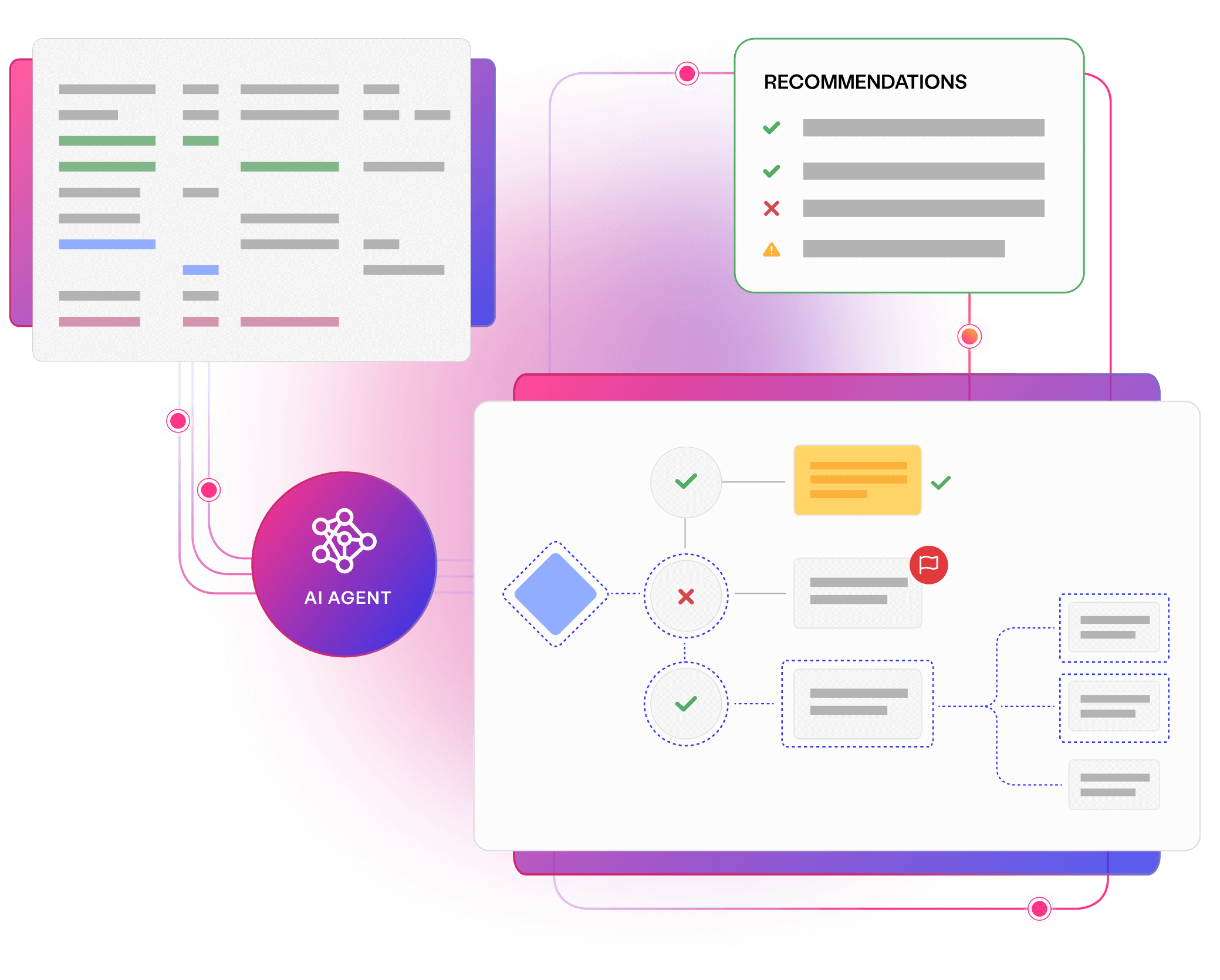

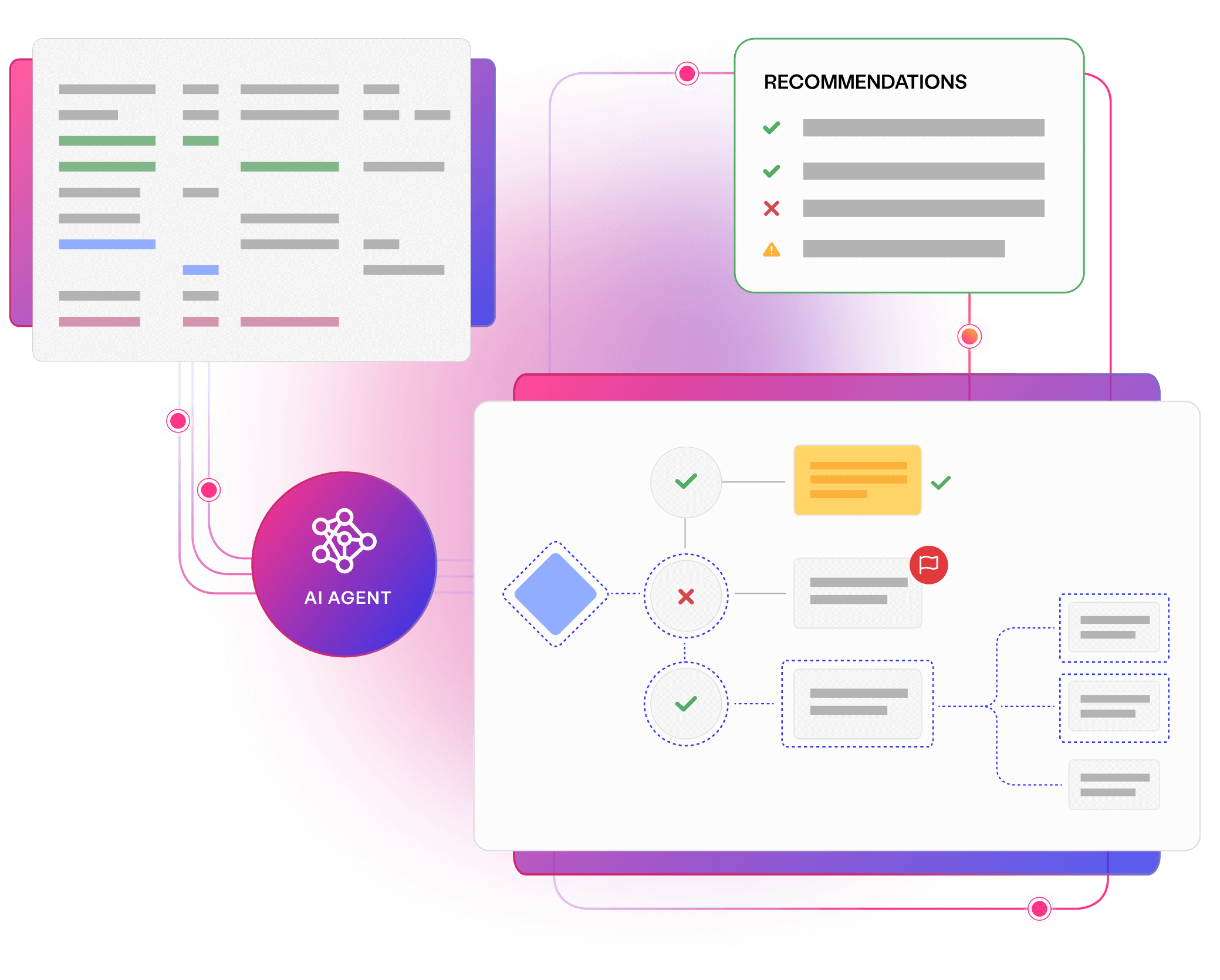

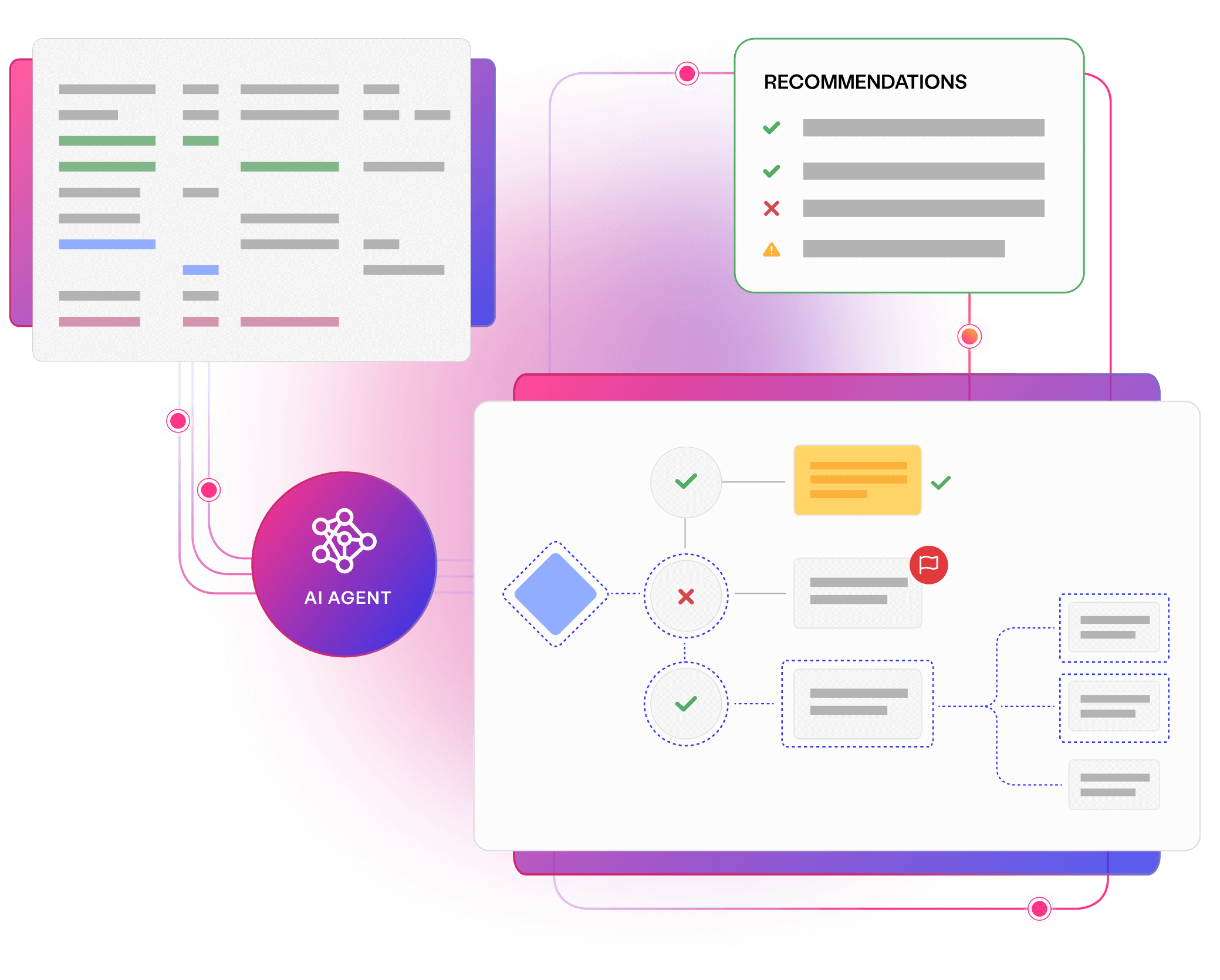

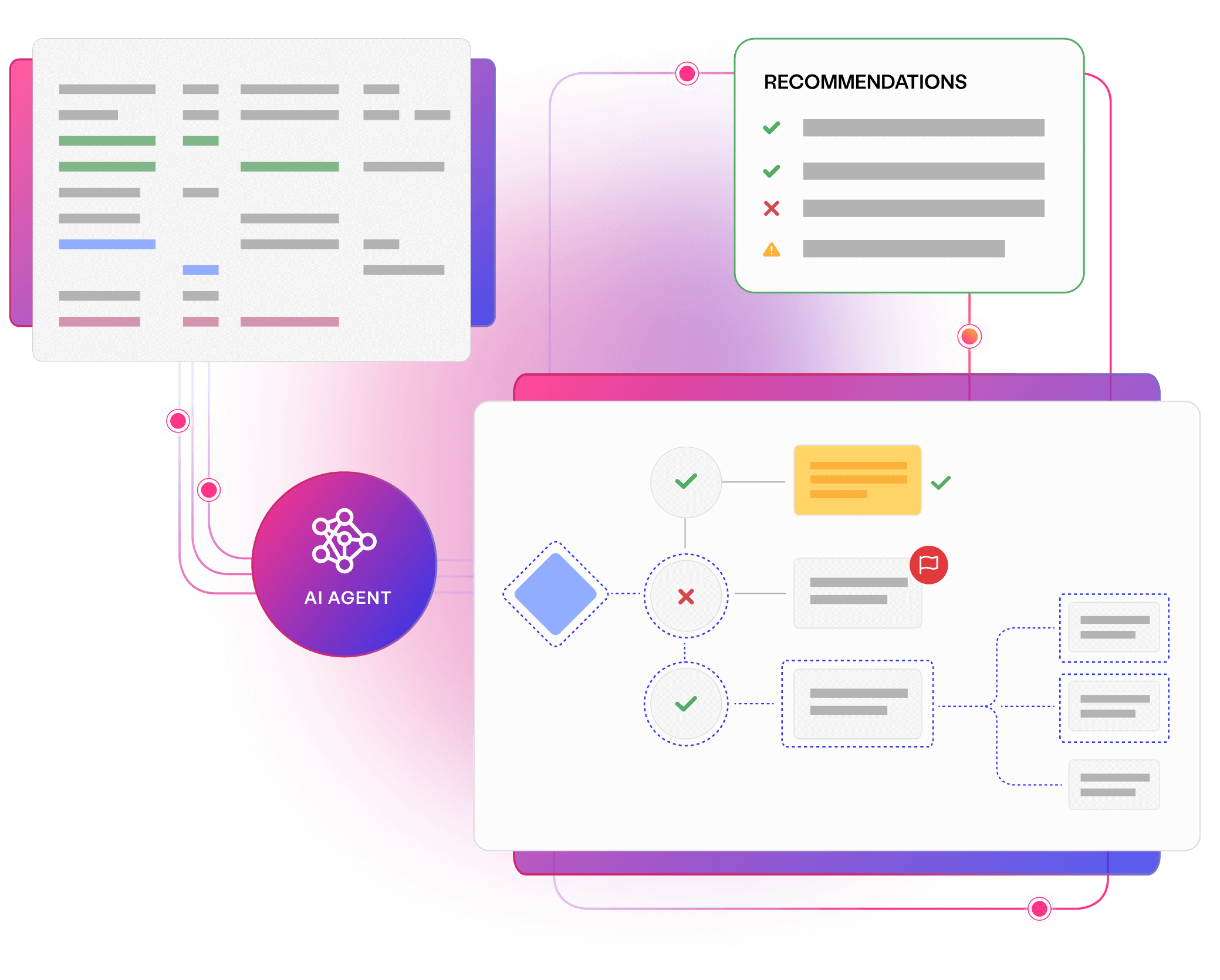

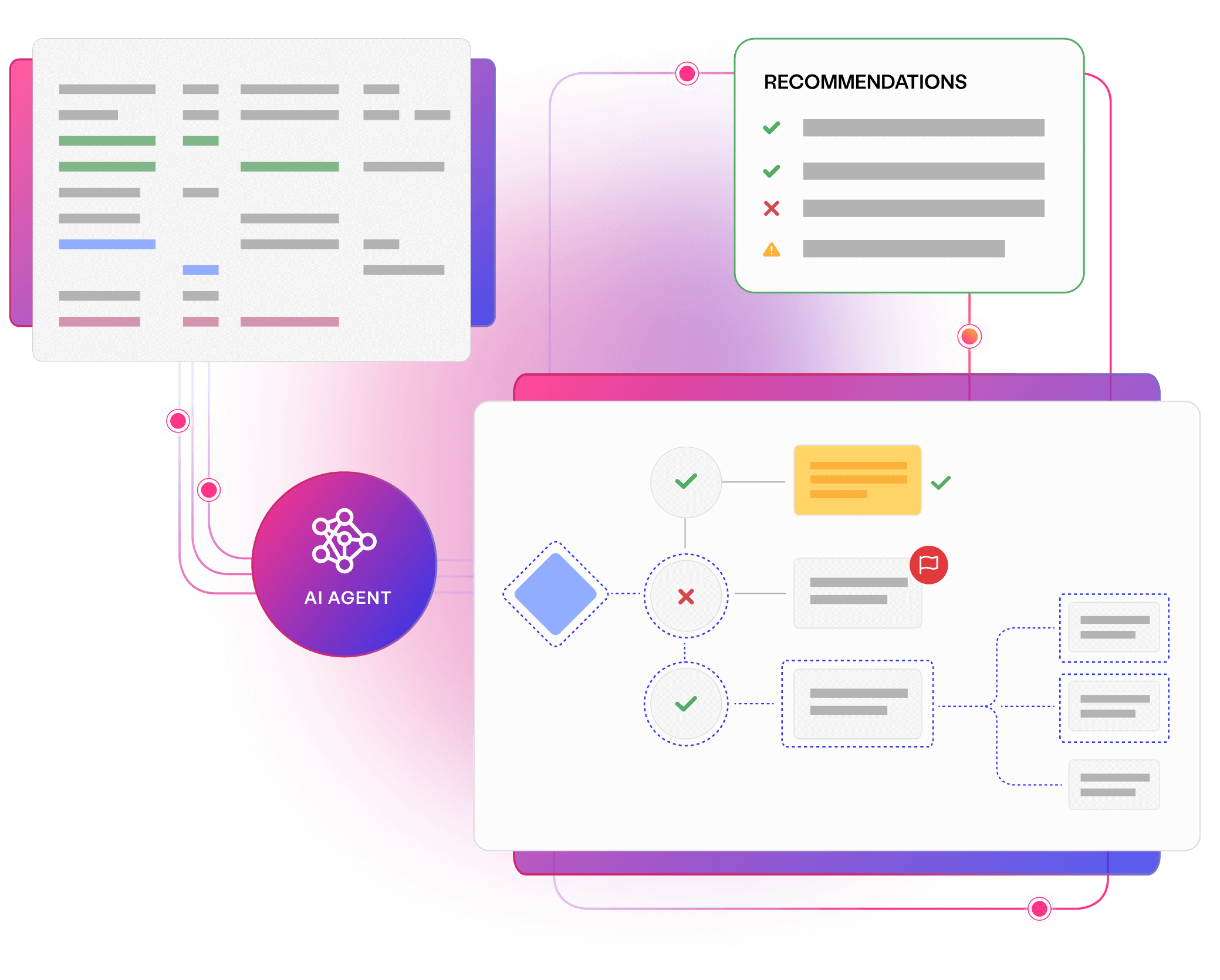

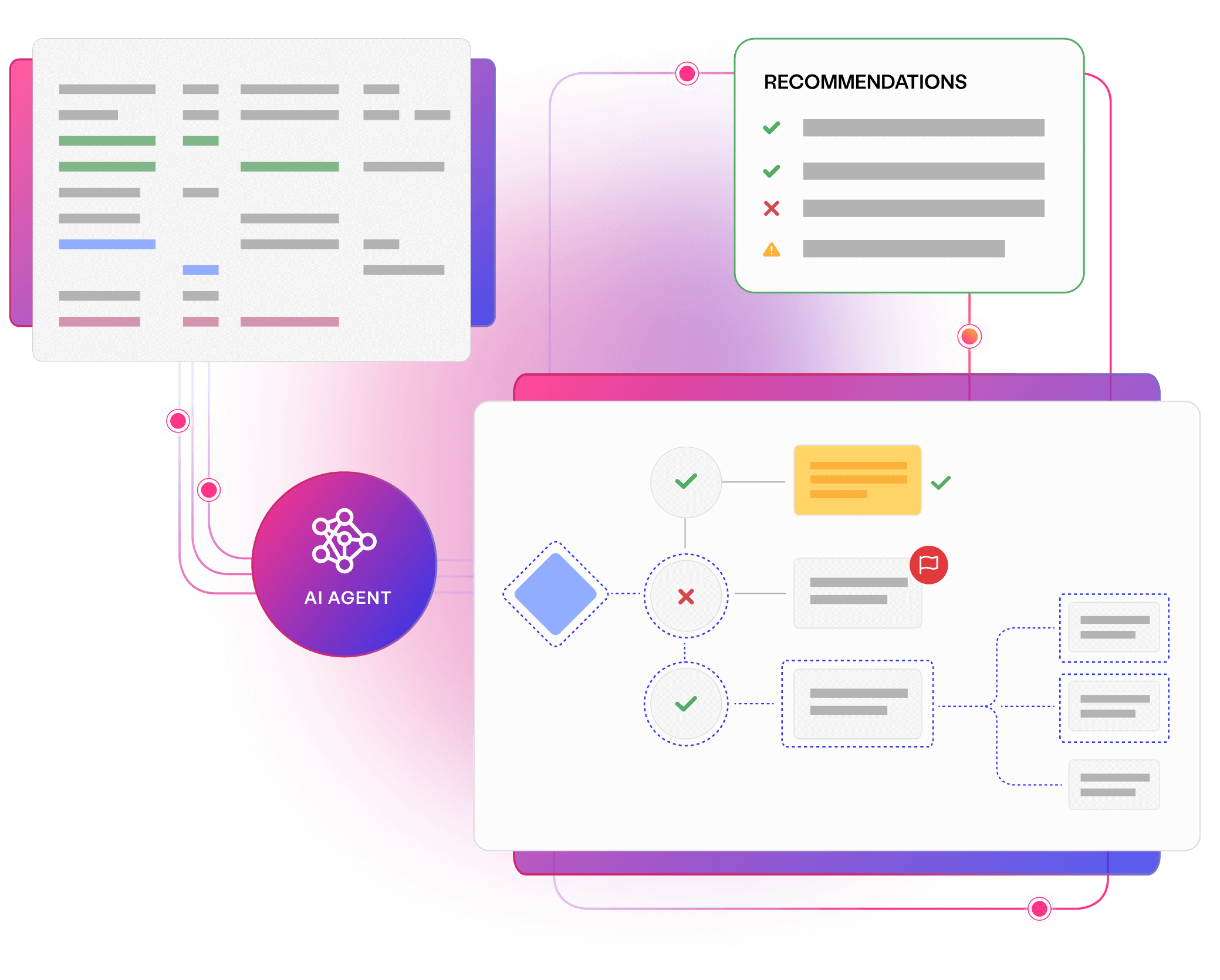

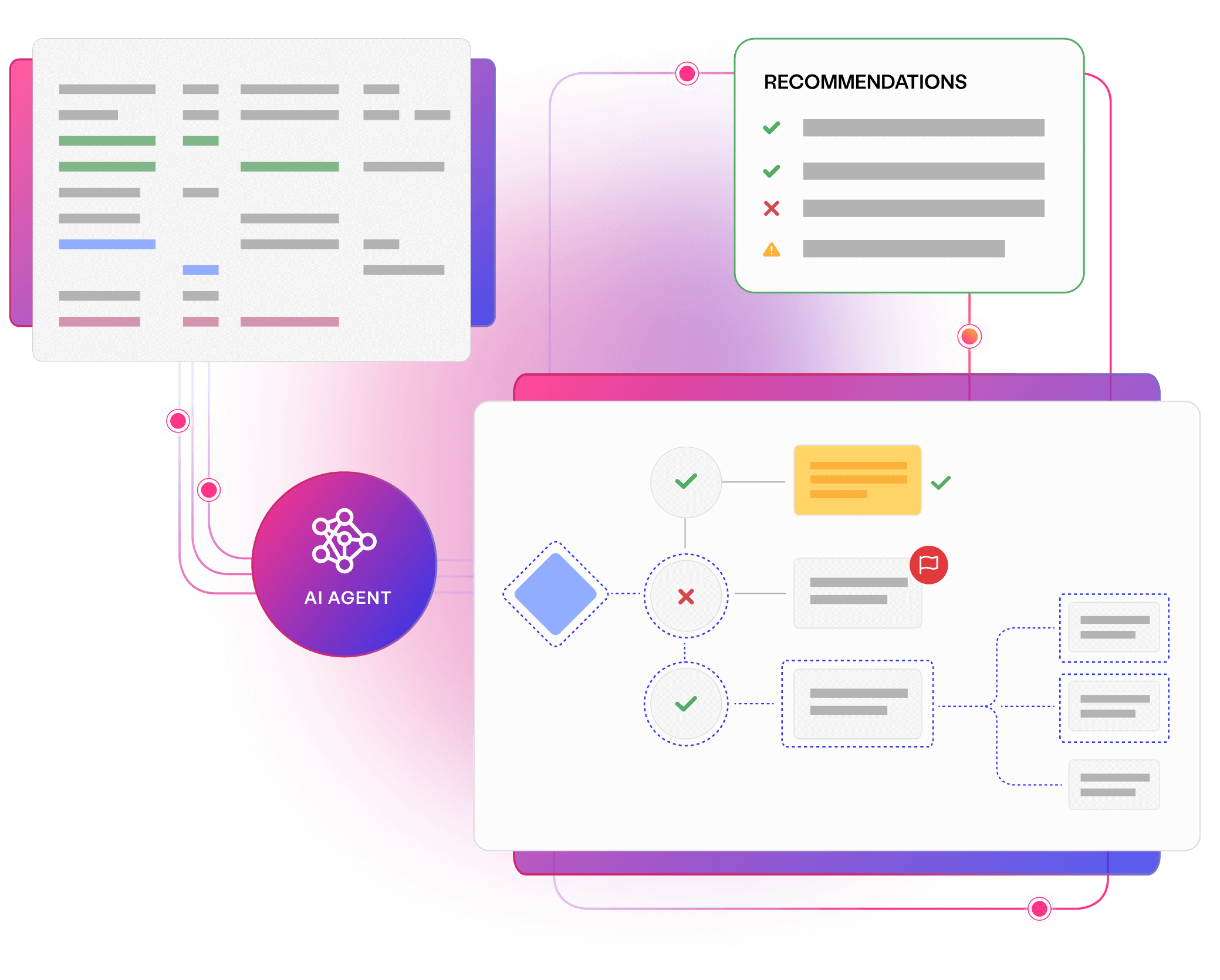

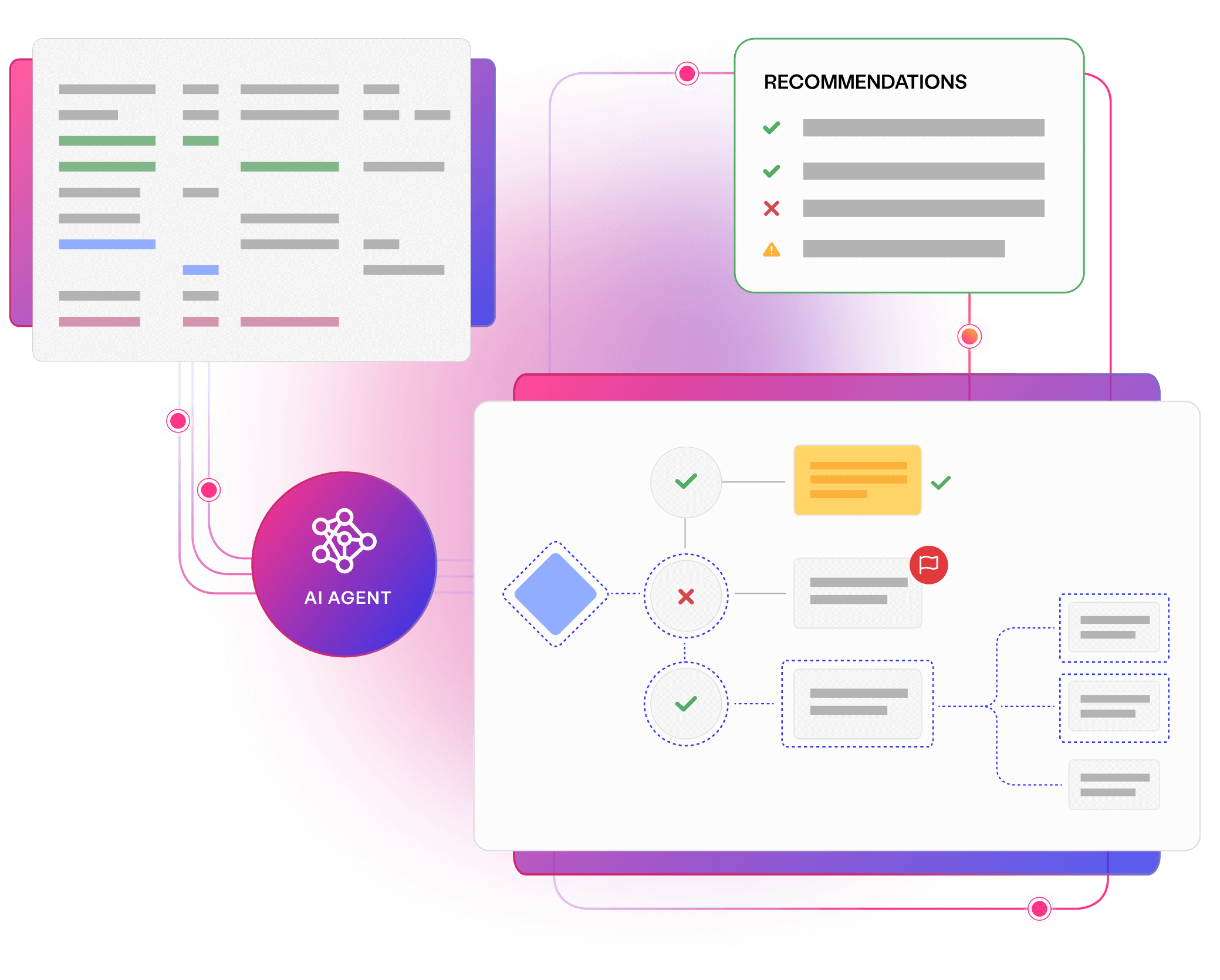

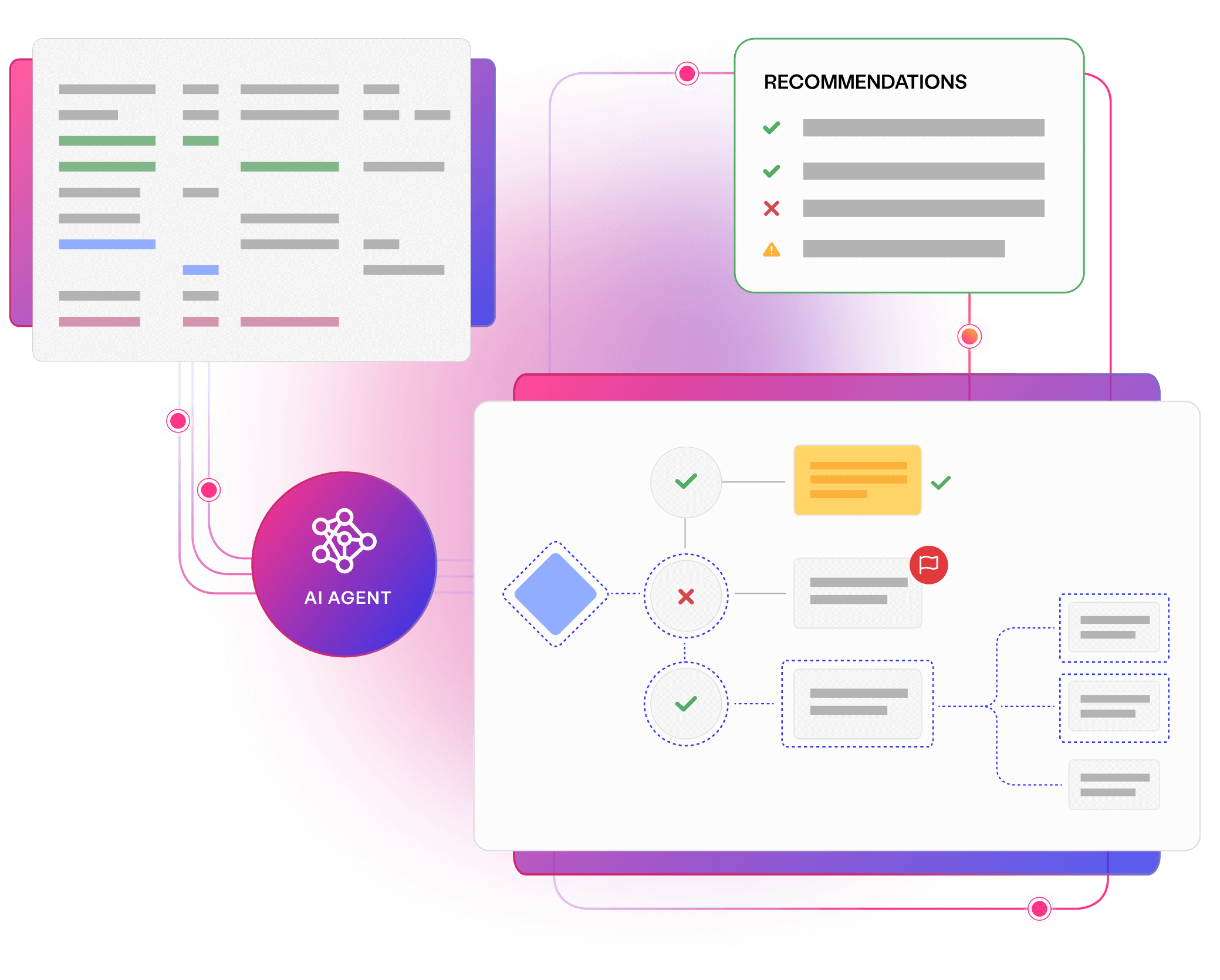

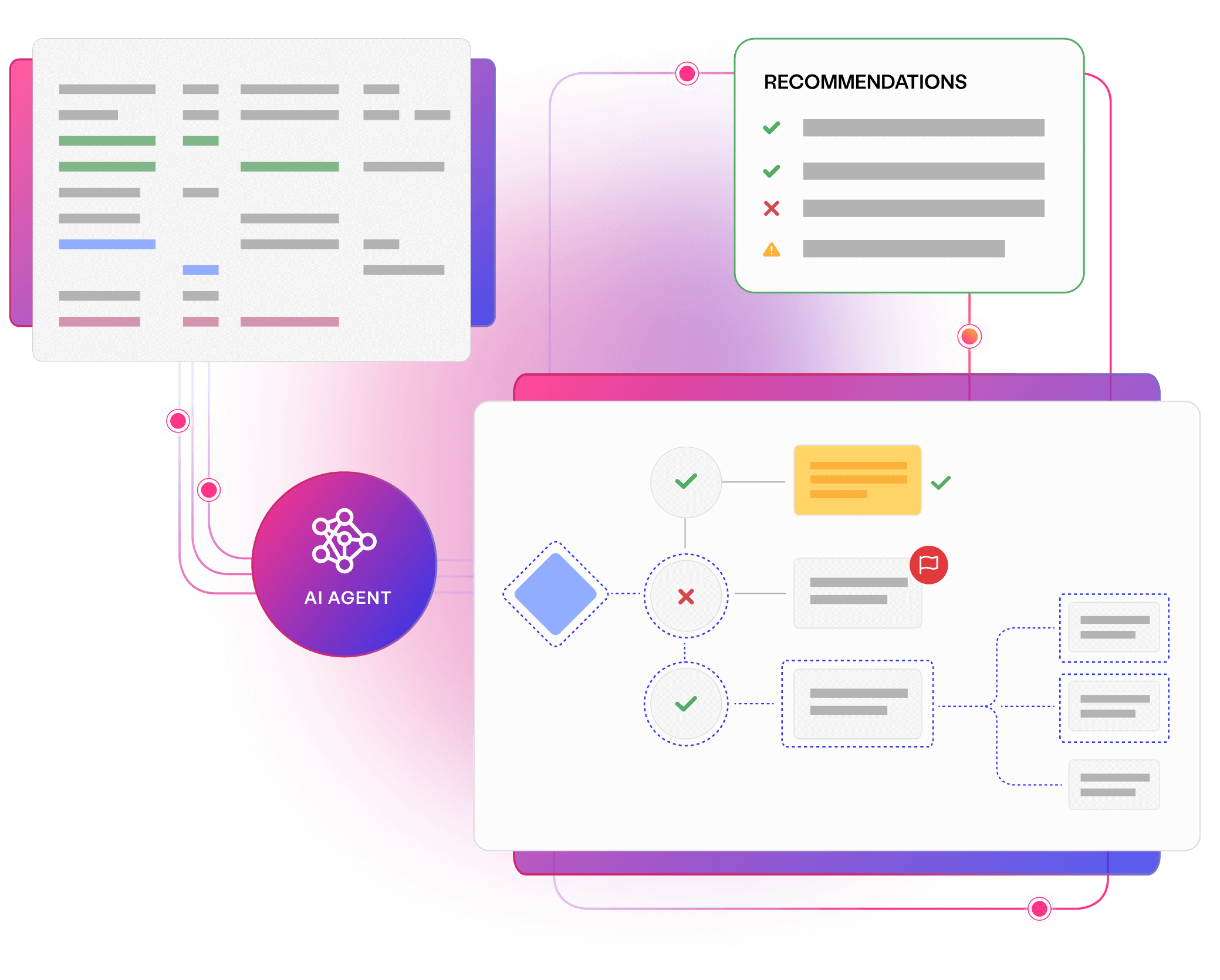

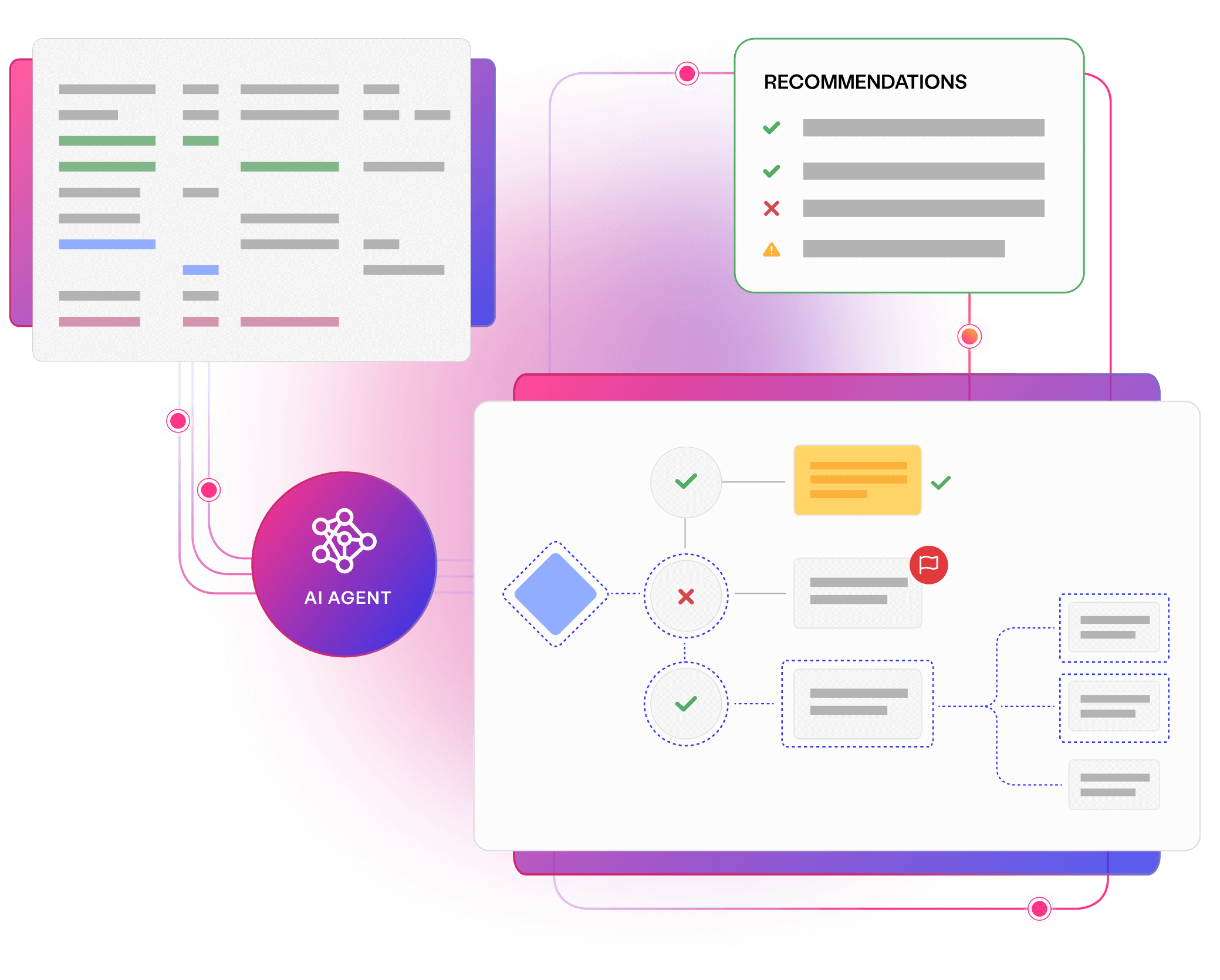

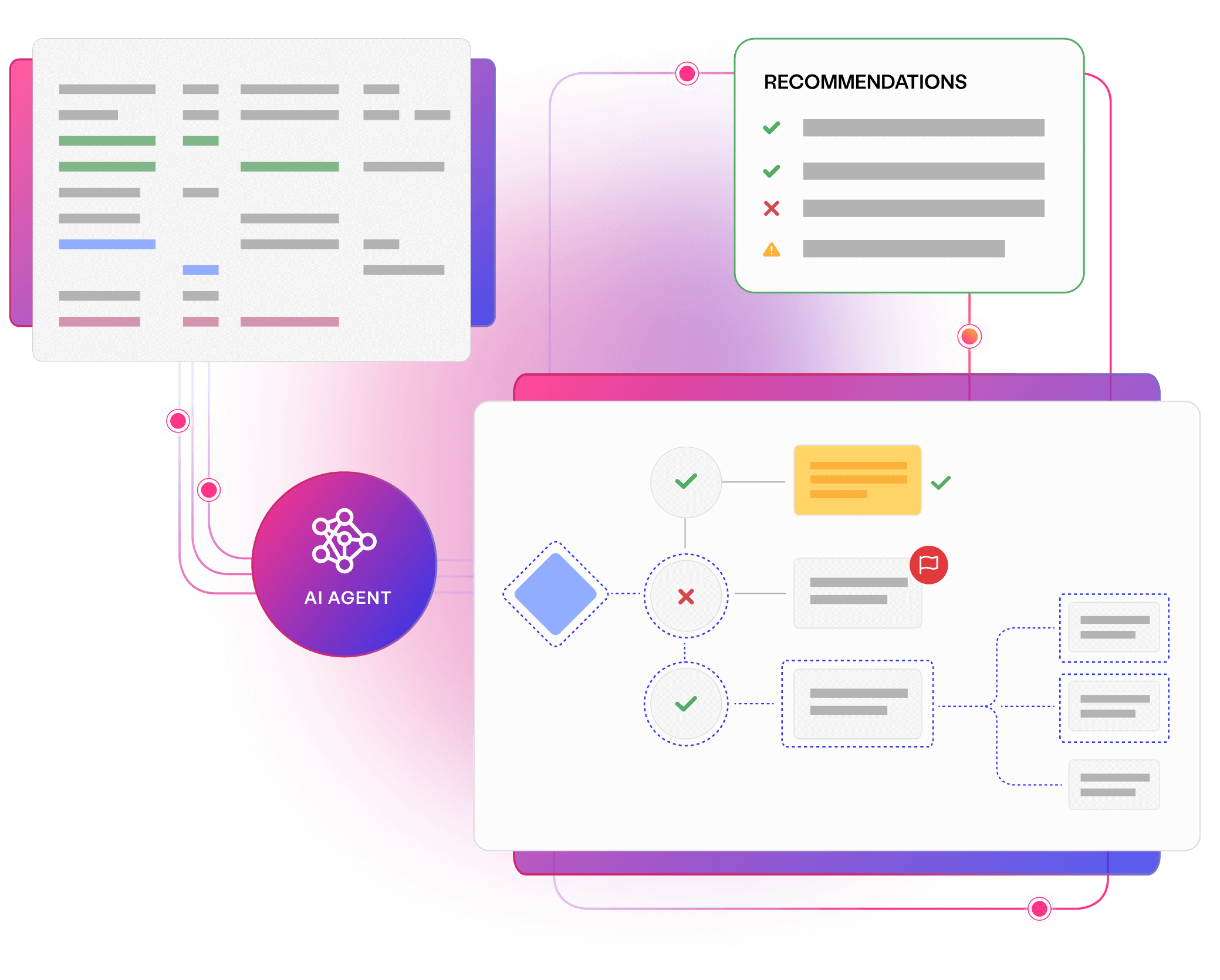

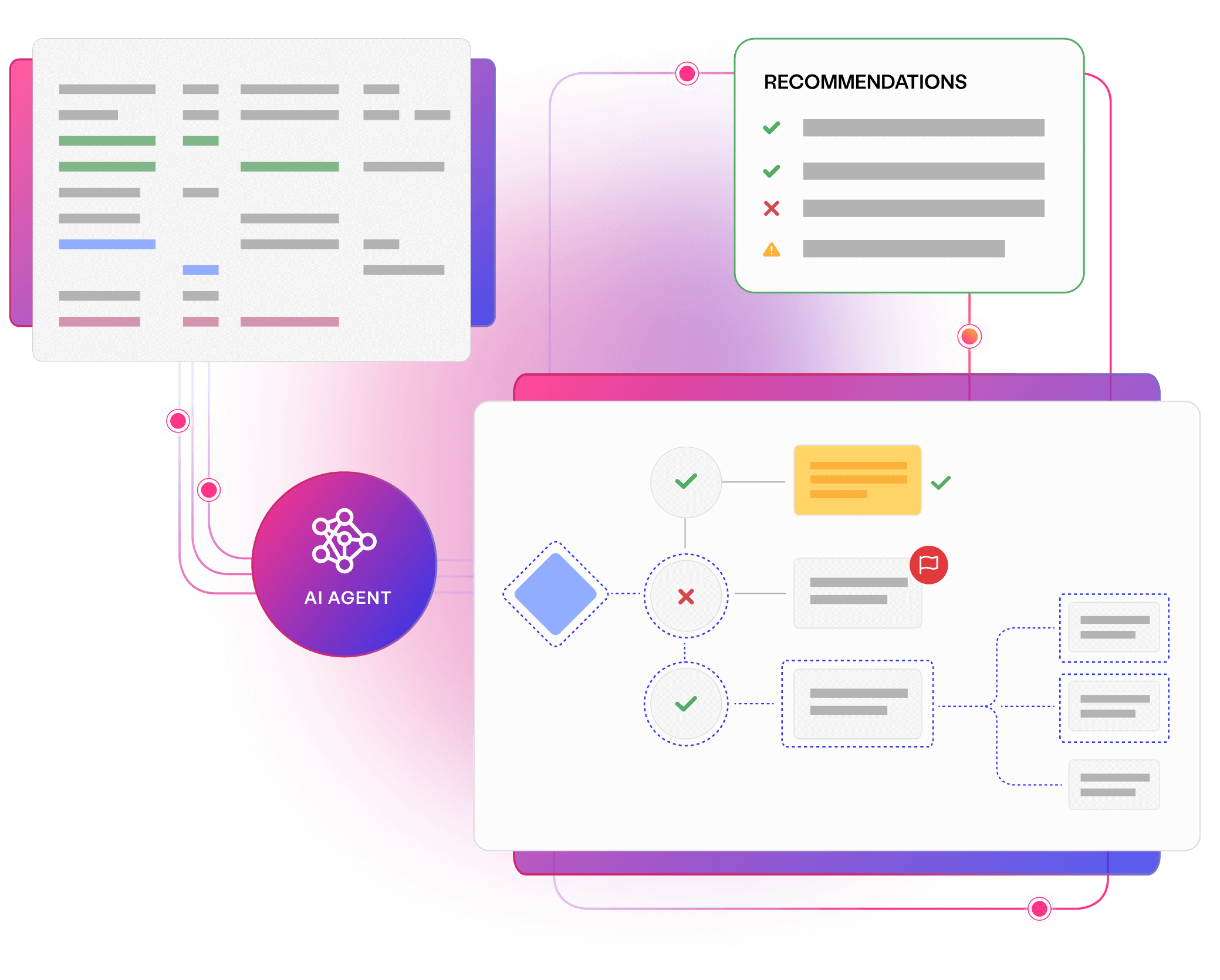

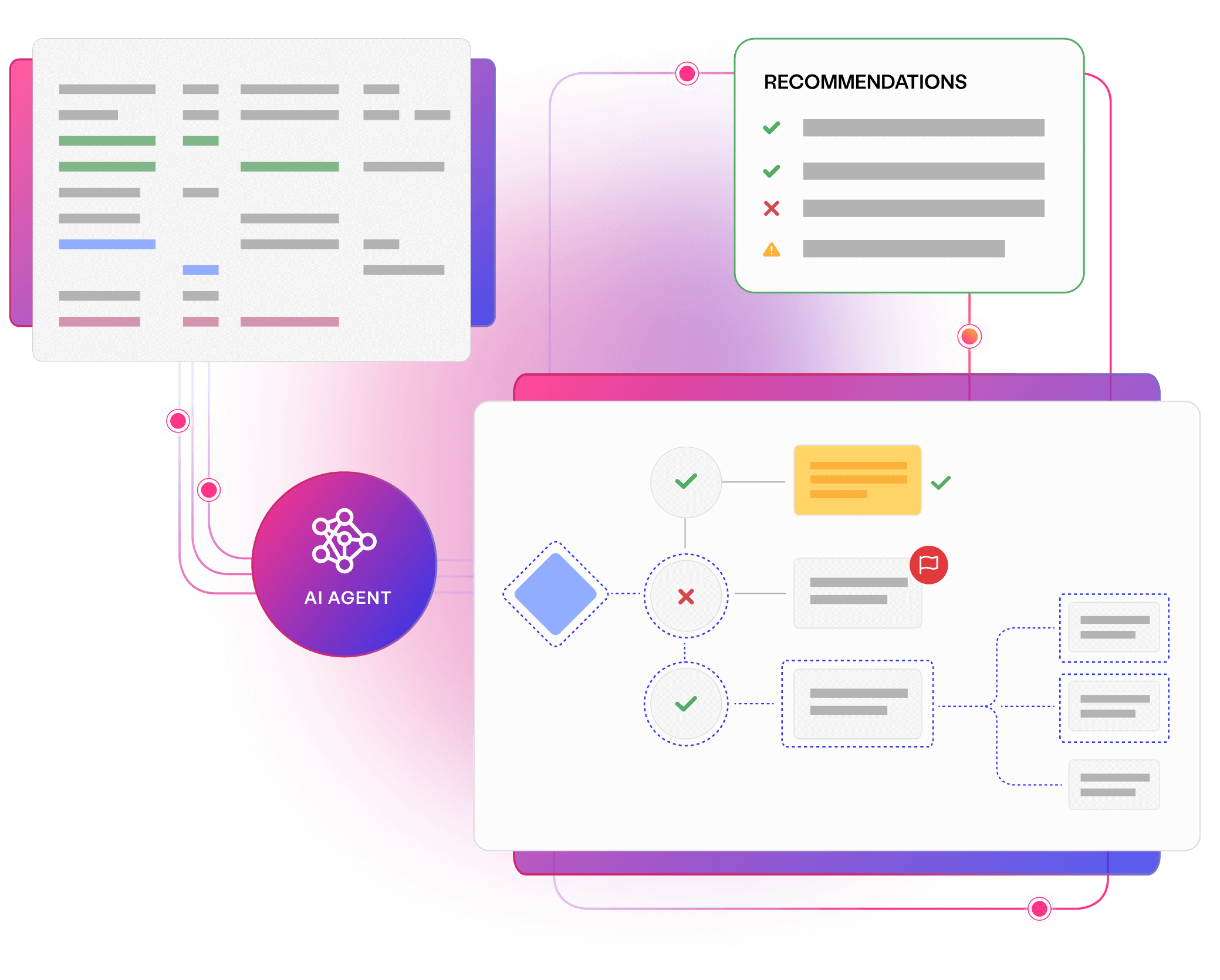

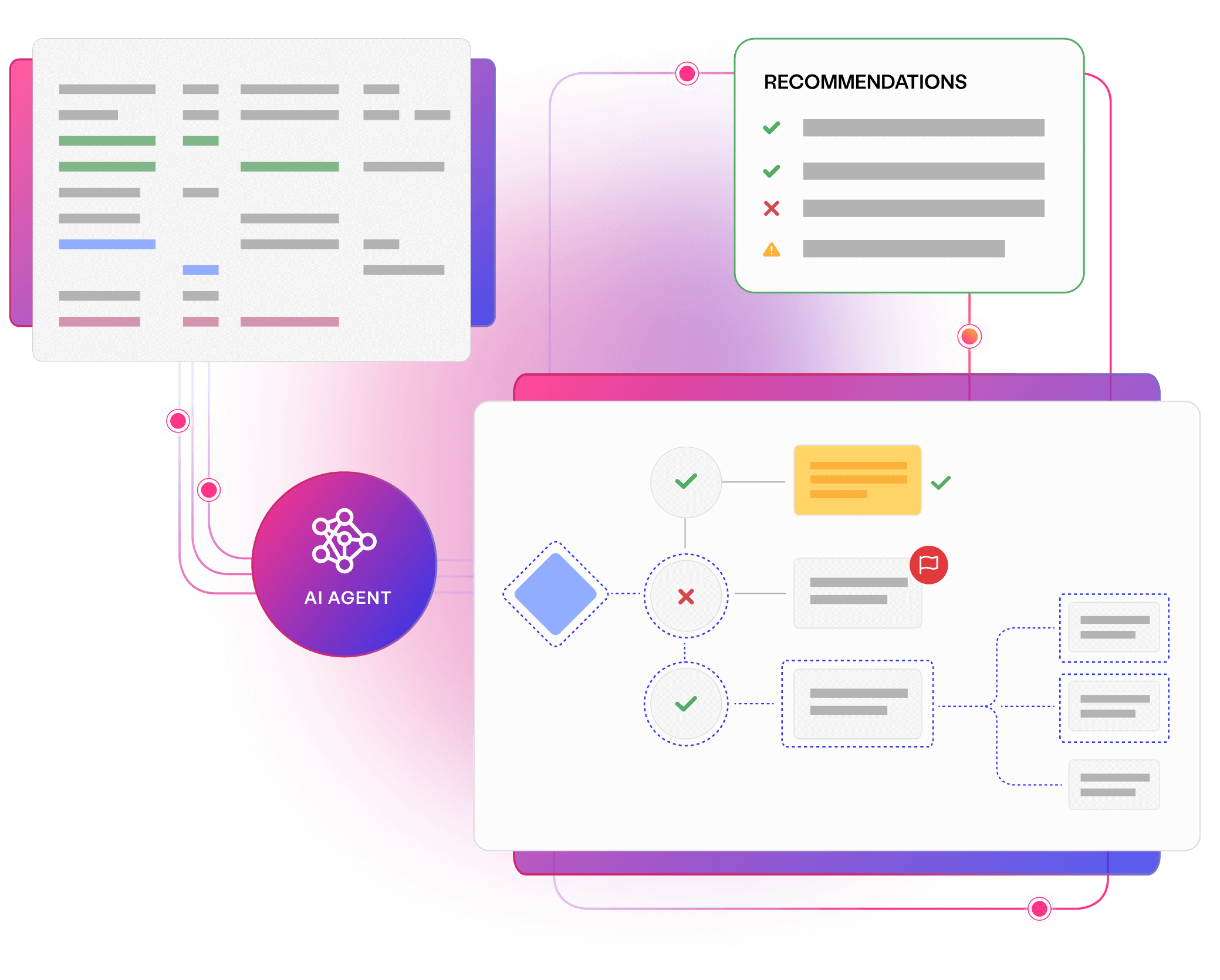

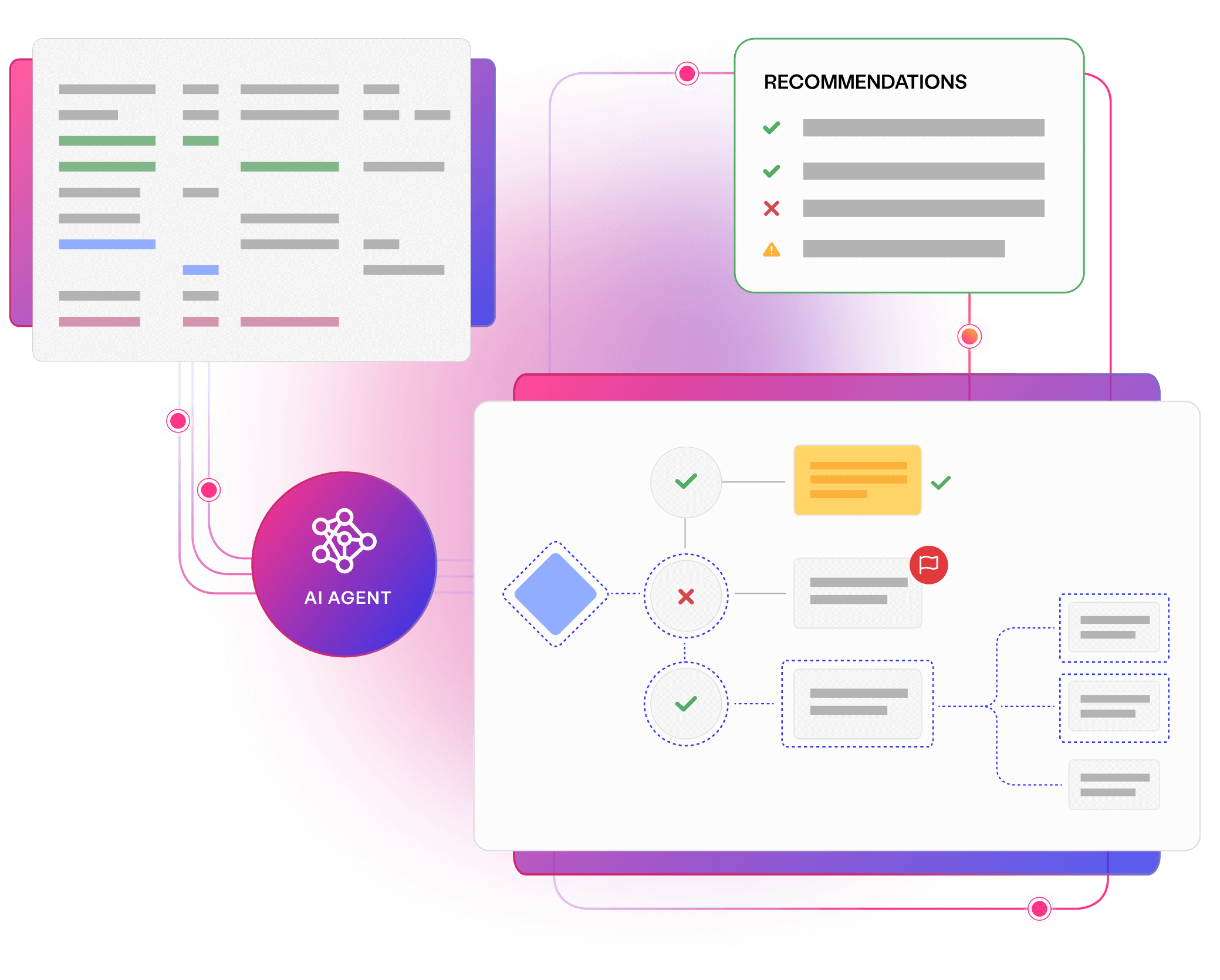

Generate autonomous test data and insights

Provide the skills, knowledge and assets your teams need to deliver superior software. Virtual domain experts provide feedback on your test data landscape, answer questions about your systems, and generate compliant data.

Introducing AI assistants provides knowledge not found in your team, answering questions about complex systems without waiting for human input.

AI-augmented visualisation converts data journeys into clear specifications, with specialist feedback to improve quality, accessibility, security, and more.

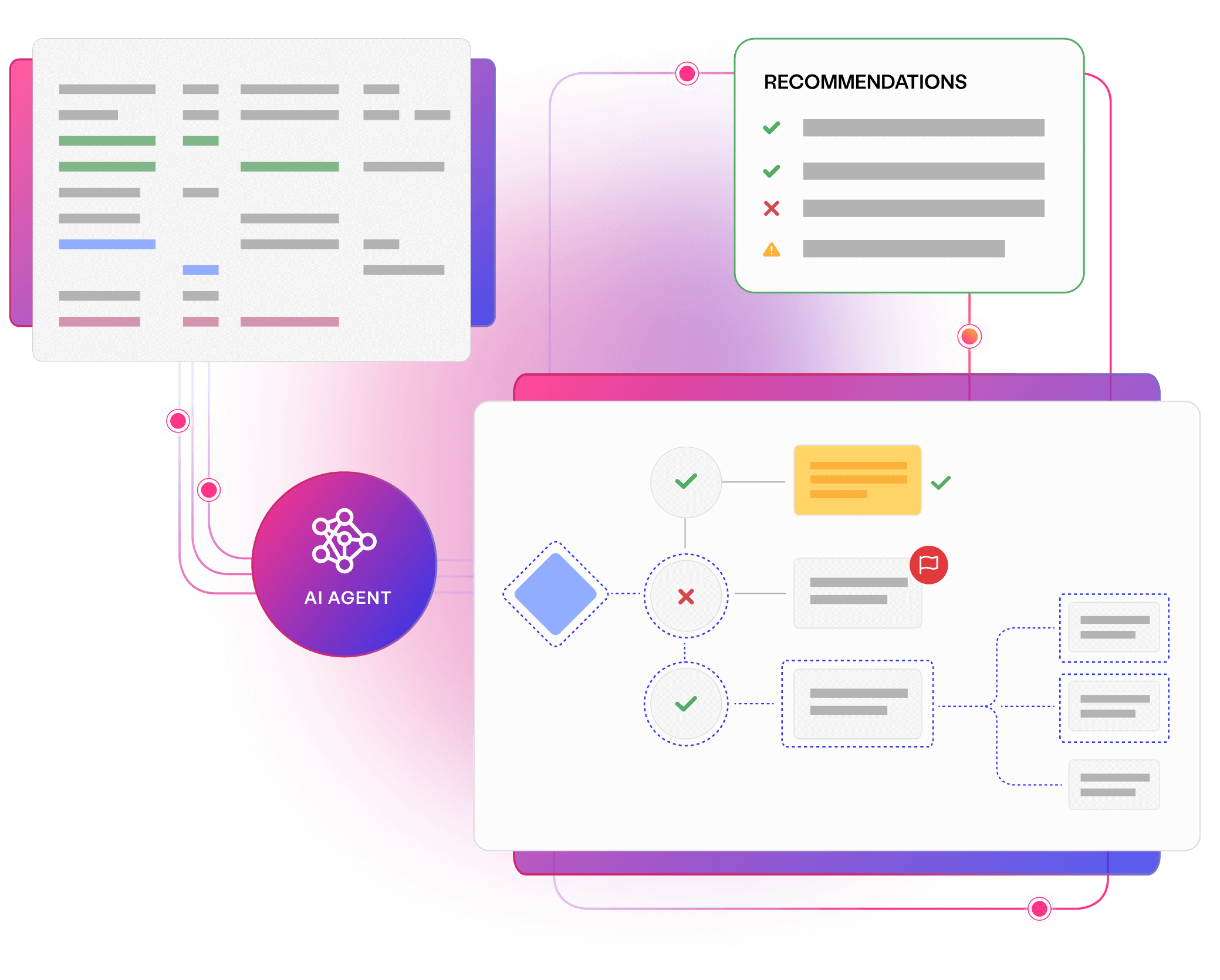

Analysing complex applications and generating data with AI pinpoints the changes to make, while ensuring your systems remain fully documented and secure.

Providing AI assistants and componentising data requirements in clear models allows everyone to comprehend systems at the level of detail they need.

Uncover smarter test data with Curiosity’s all-in-one, AI-accelerated platform, which offers integrated, secure, and intuitive tools to simplify complex data landscapes and overcome test data management challenges.

The world’s largest enterprises use Curiosity's platform to ensure that AI delivers value for their test data management strategies.

AI is making system data more complex than ever. Developing AI-driven, AI-built systems requires diverse data that reflects intricate relationships, trends and hierarchies. Model-based data generation is perfect for overcoming this complexity.

Principal Test Data Engineer

Read a resource, or meet with an expert, to learn how AI can introduce new expertise and autonomous data generation to your teams.

Simplify your complex data landscape and provide confidence and clarity at every step of your data...

Discover Enterprise Test Data®: Learn more

Join Huw Price and James Walker for an eye-opening webinar, exploring an innovative, three-step...

3 steps to seamless, secure, smarter test data management: Watch now

Talk to us to learn how you can unlock the value of AI in enterprise software delivery.

Meet with a Curiosity expert: Book now