Enterprise Test Data brochure

Discover how you can generate complete and compliant data on demand for your entire enterprise.

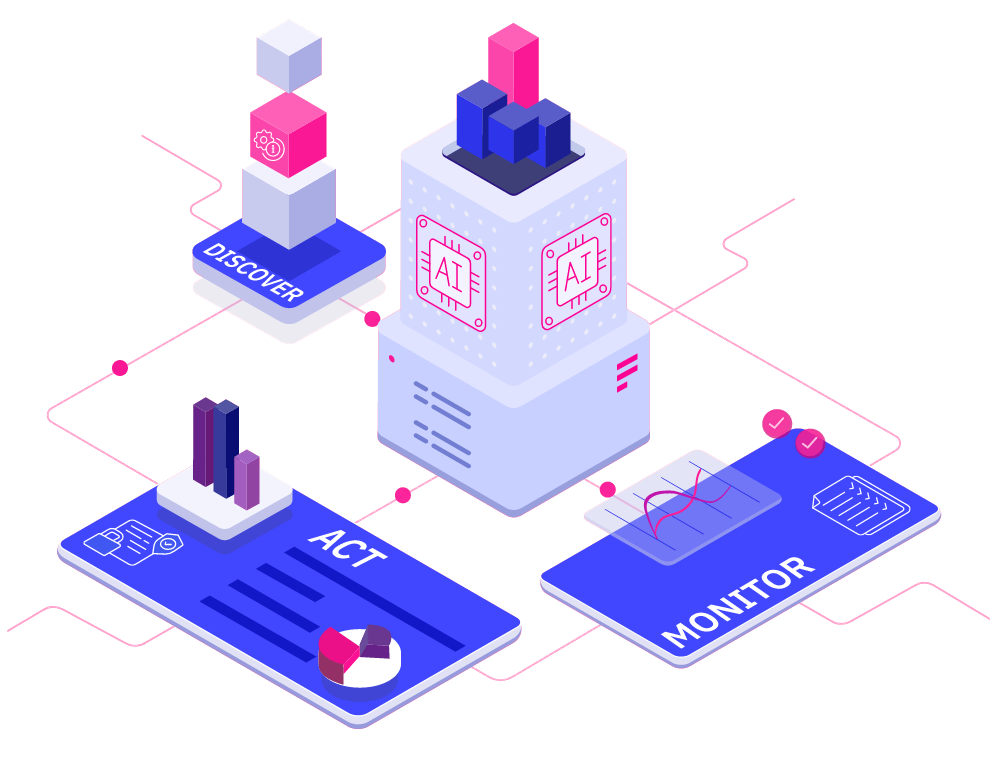

AI-powered. End-to-end. Your complete test data management platform.

Deliver superior test data and overcome the challenges of complexity, legacy, scale, and regulation with Curiosity Software.

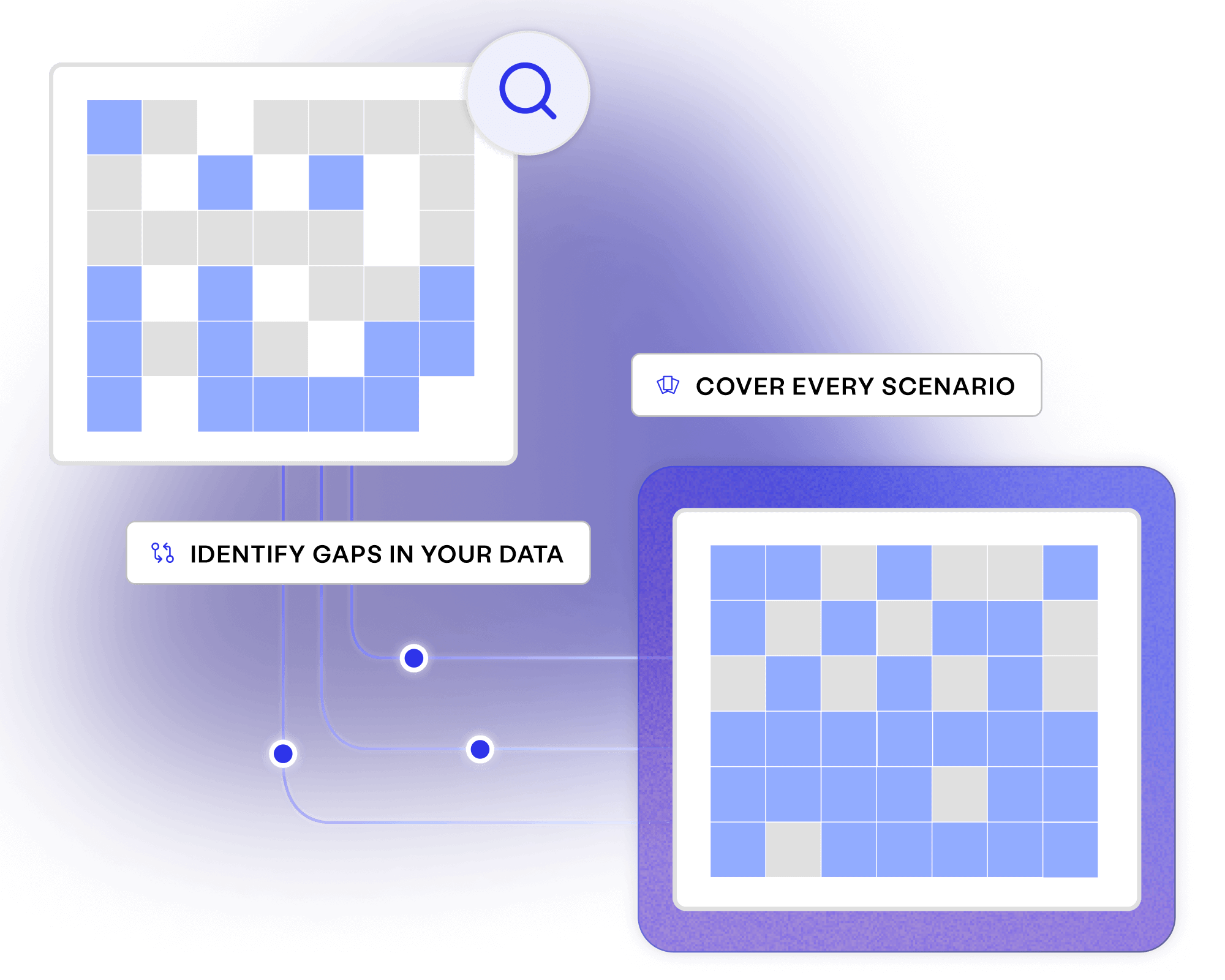

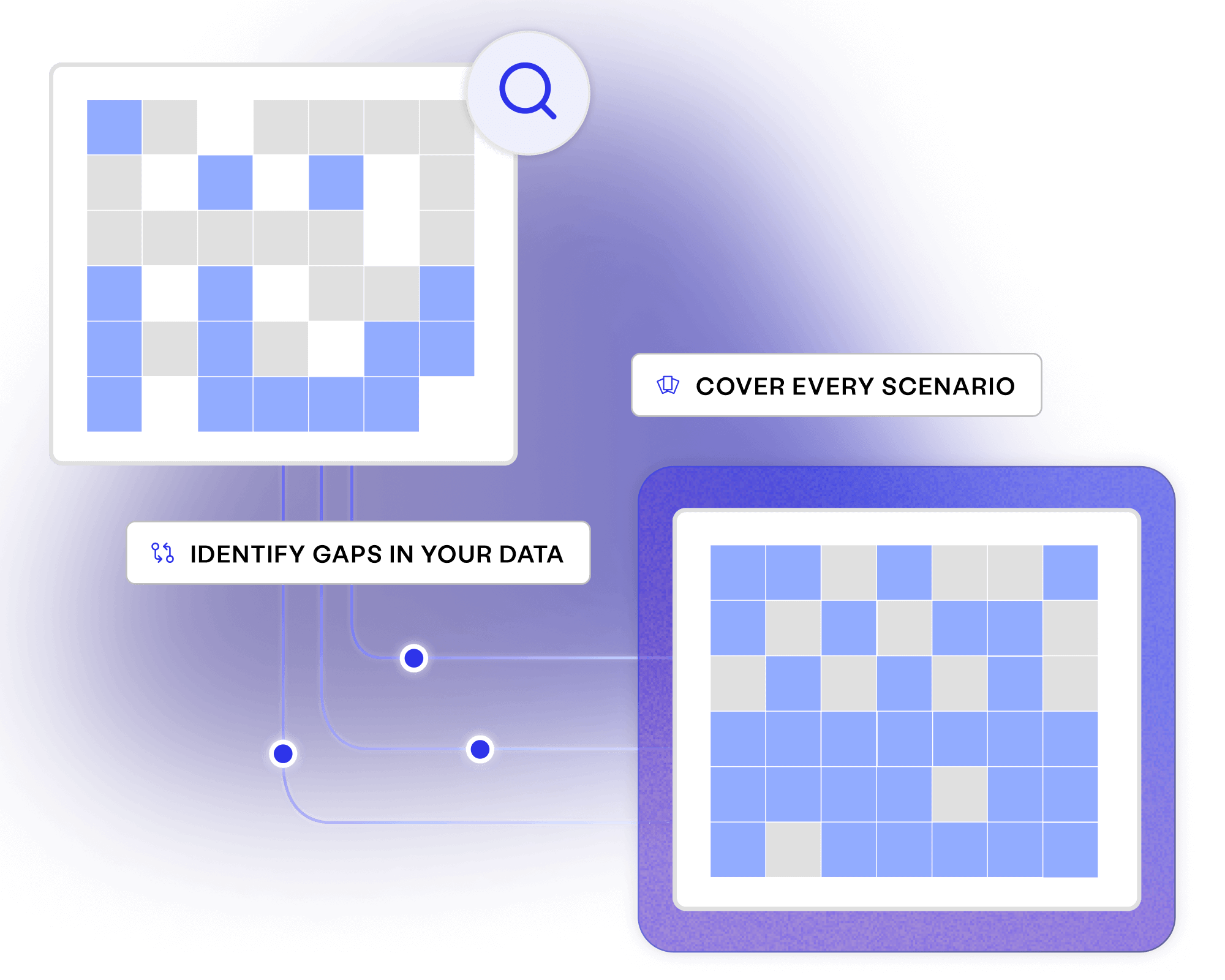

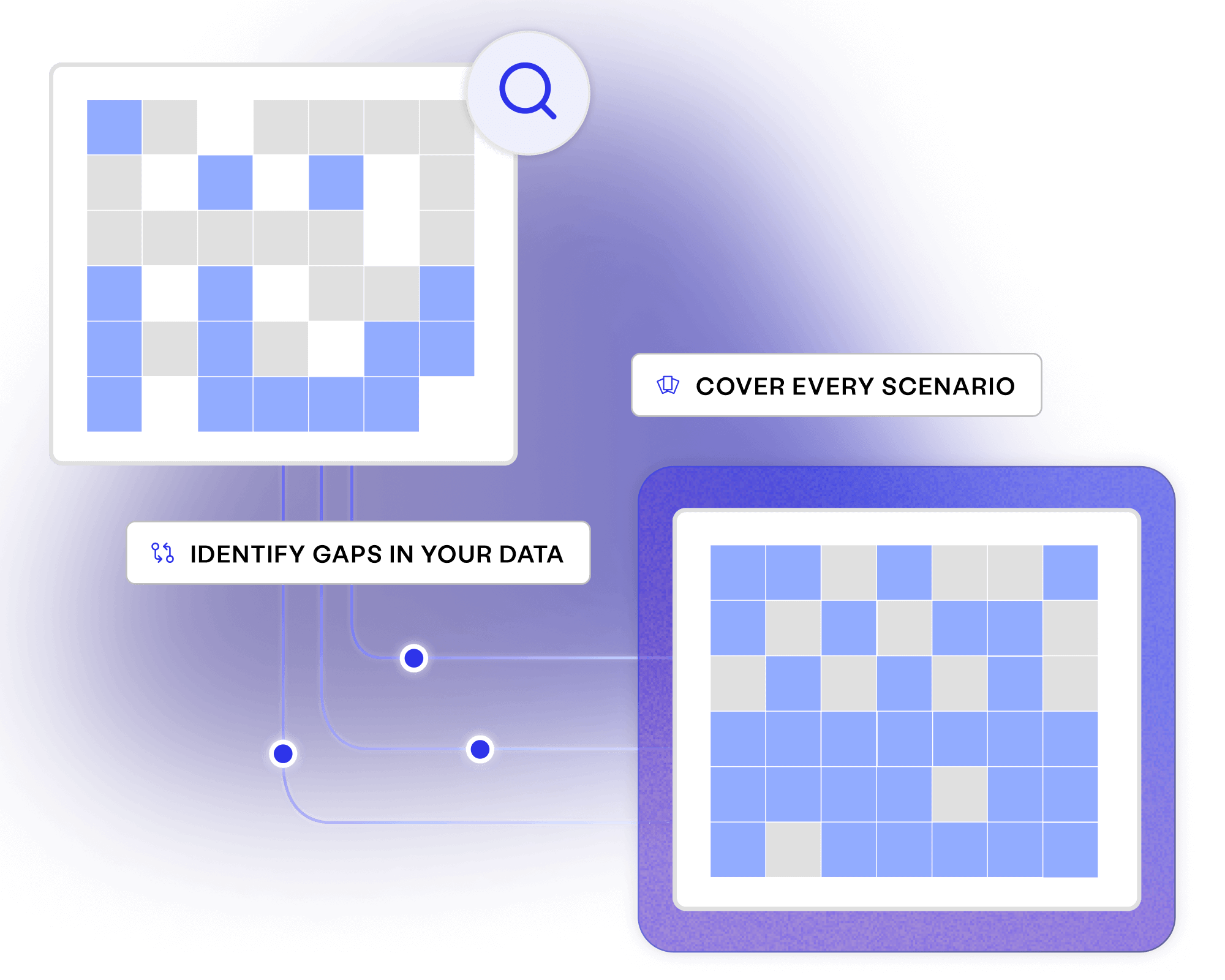

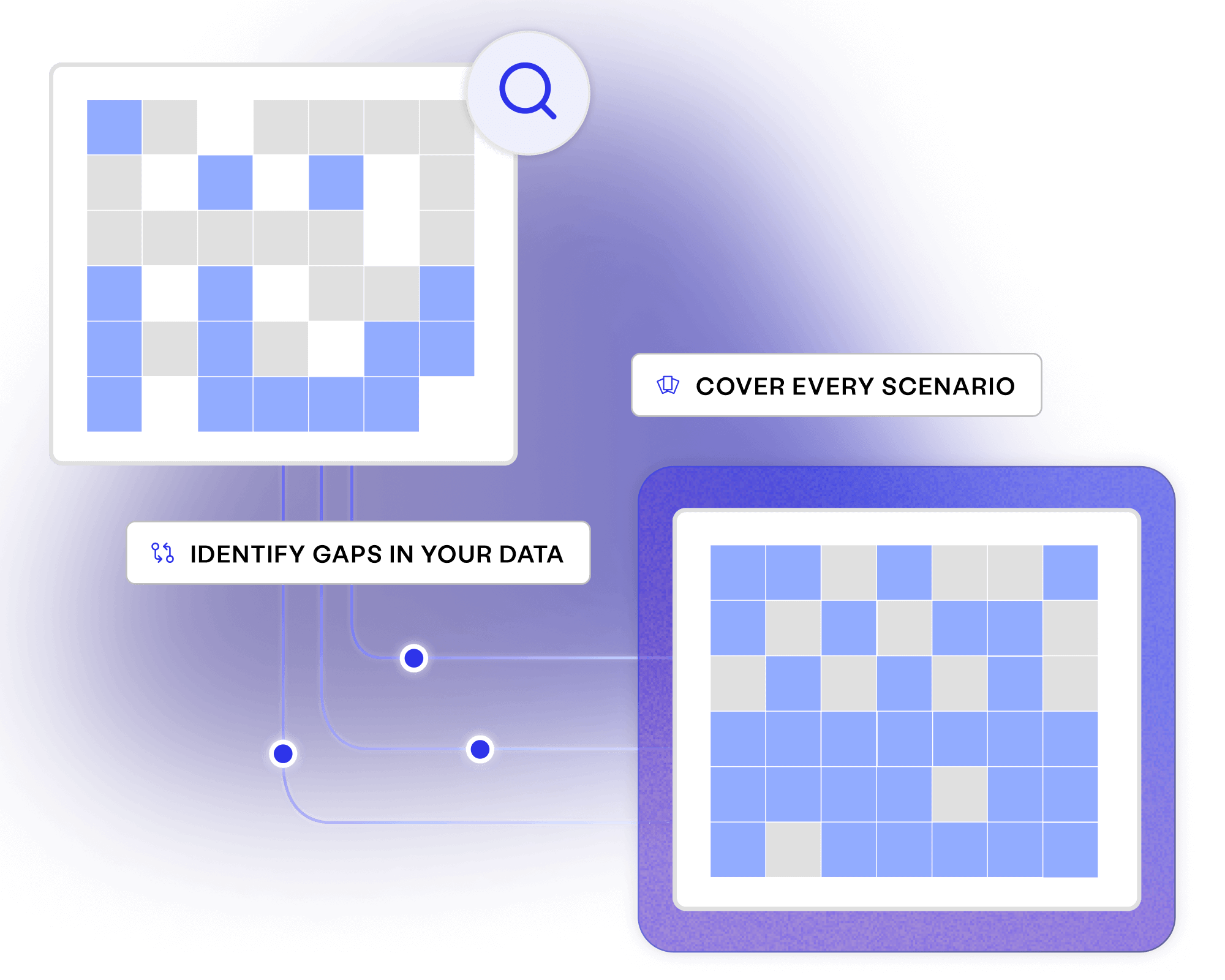

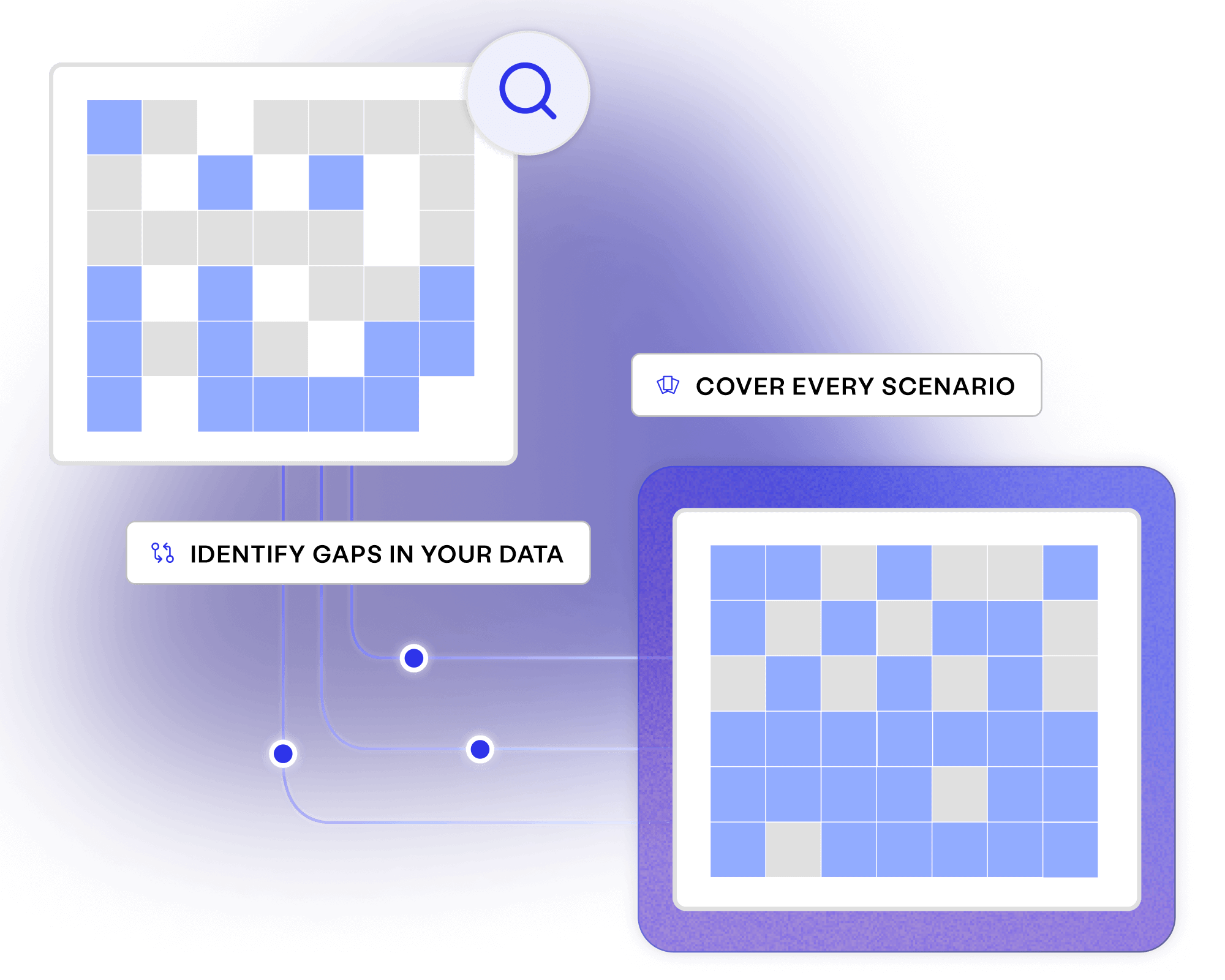

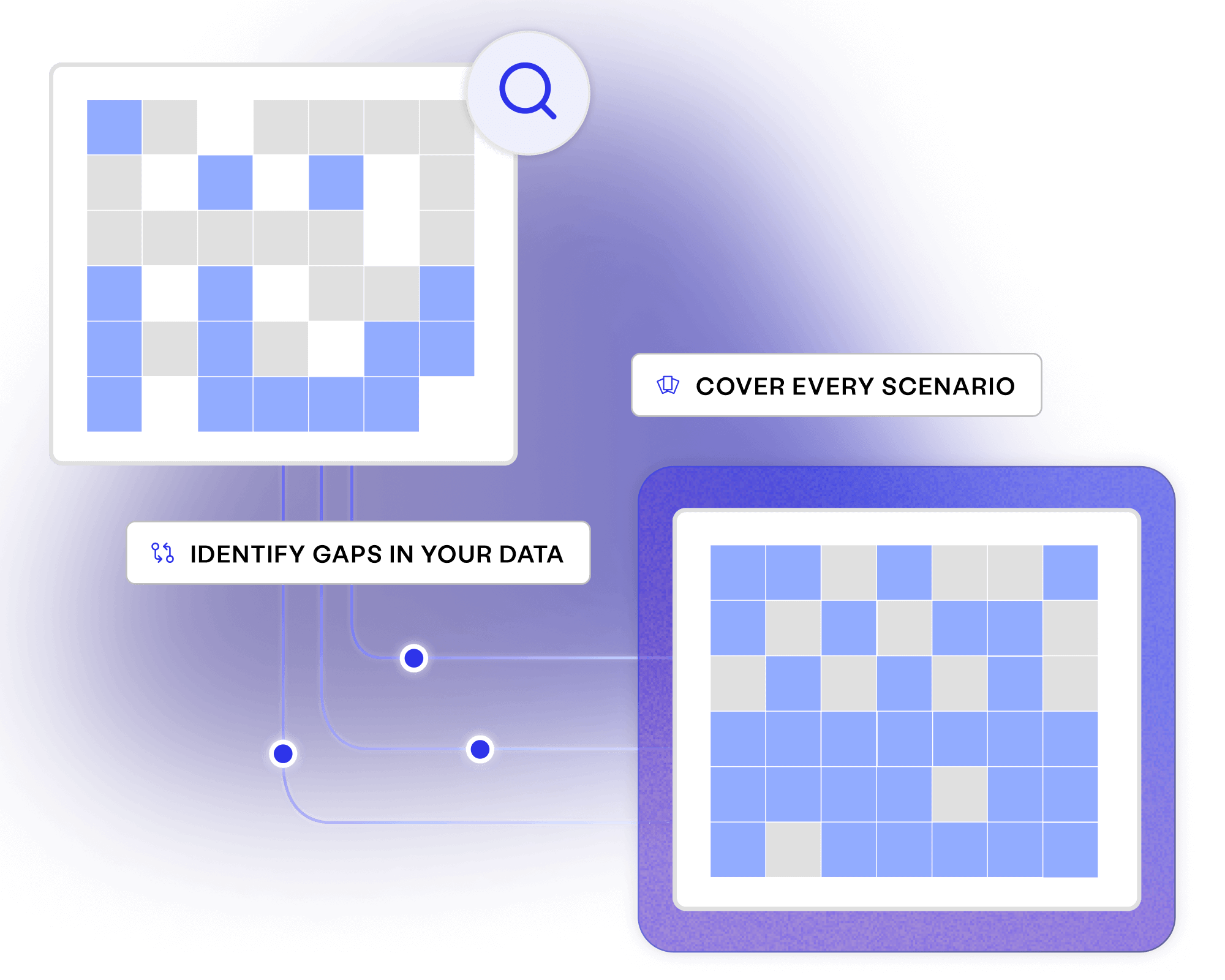

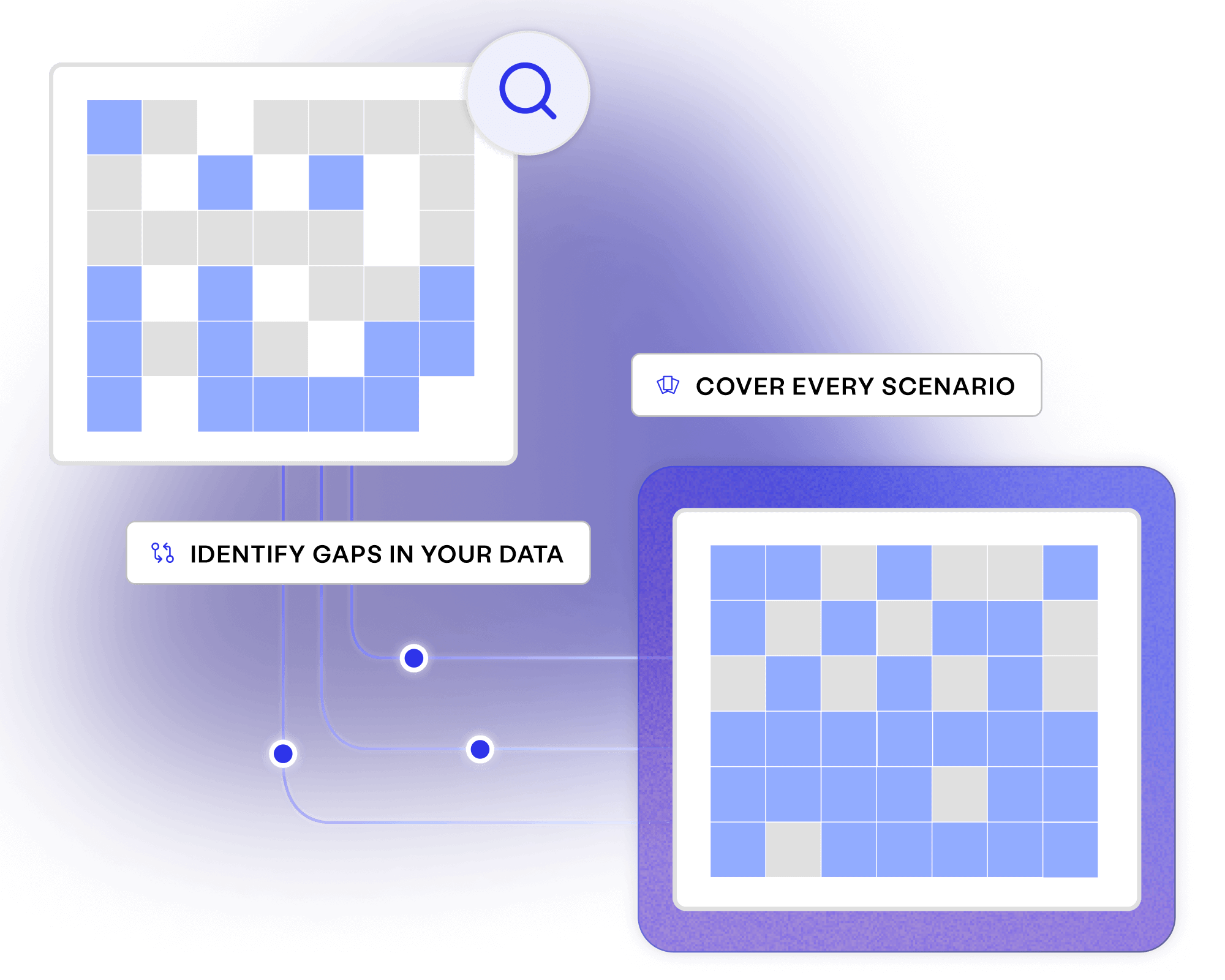

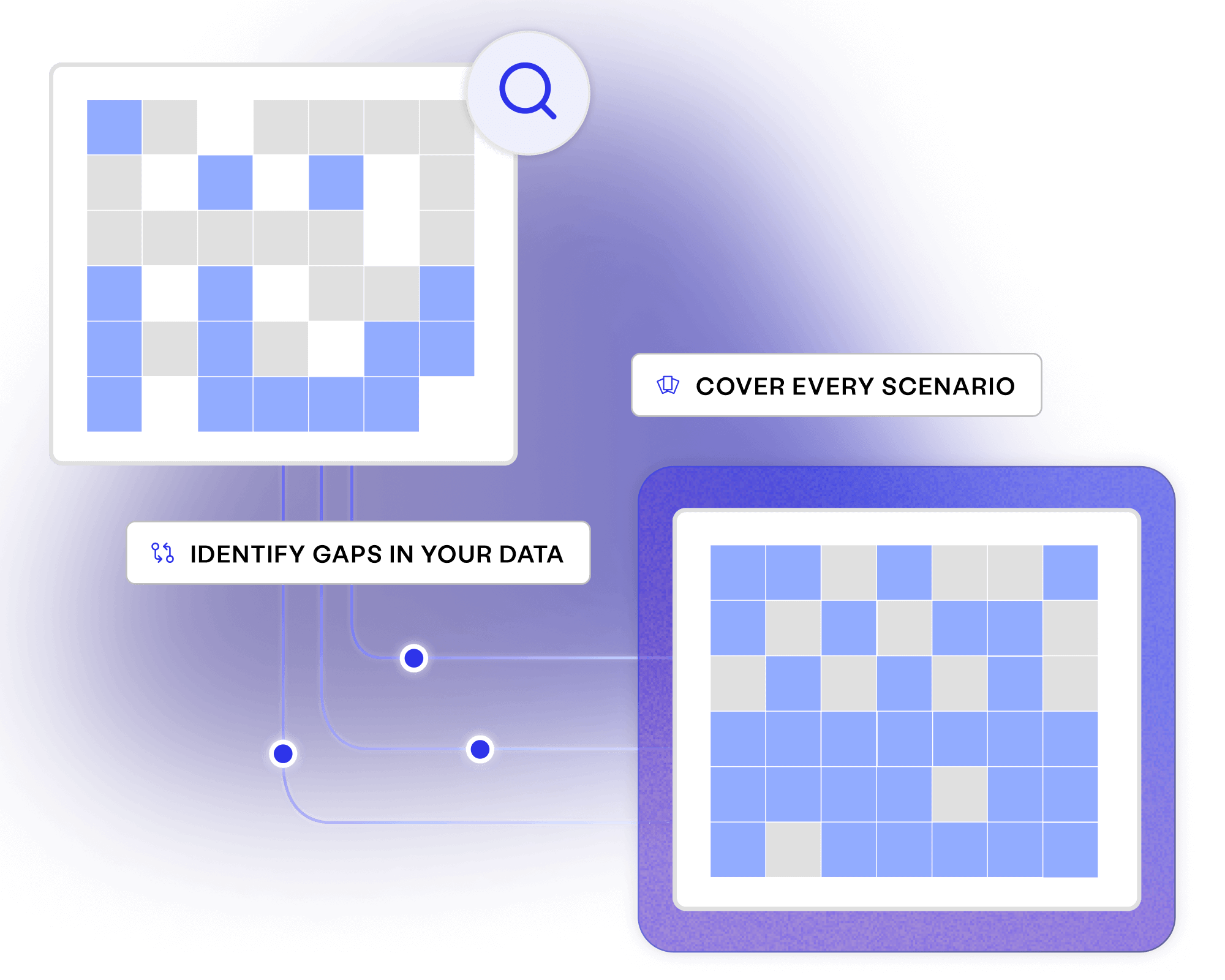

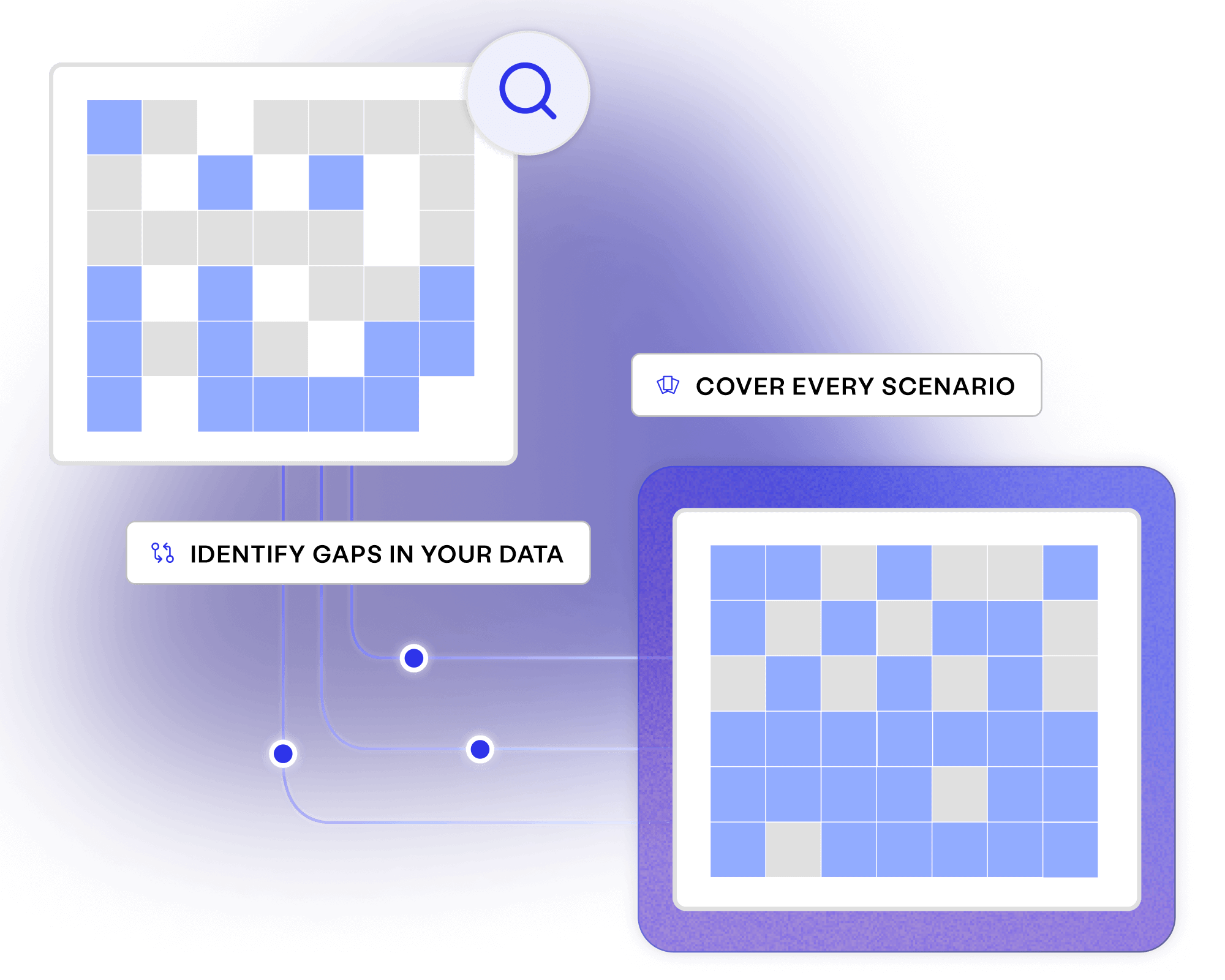

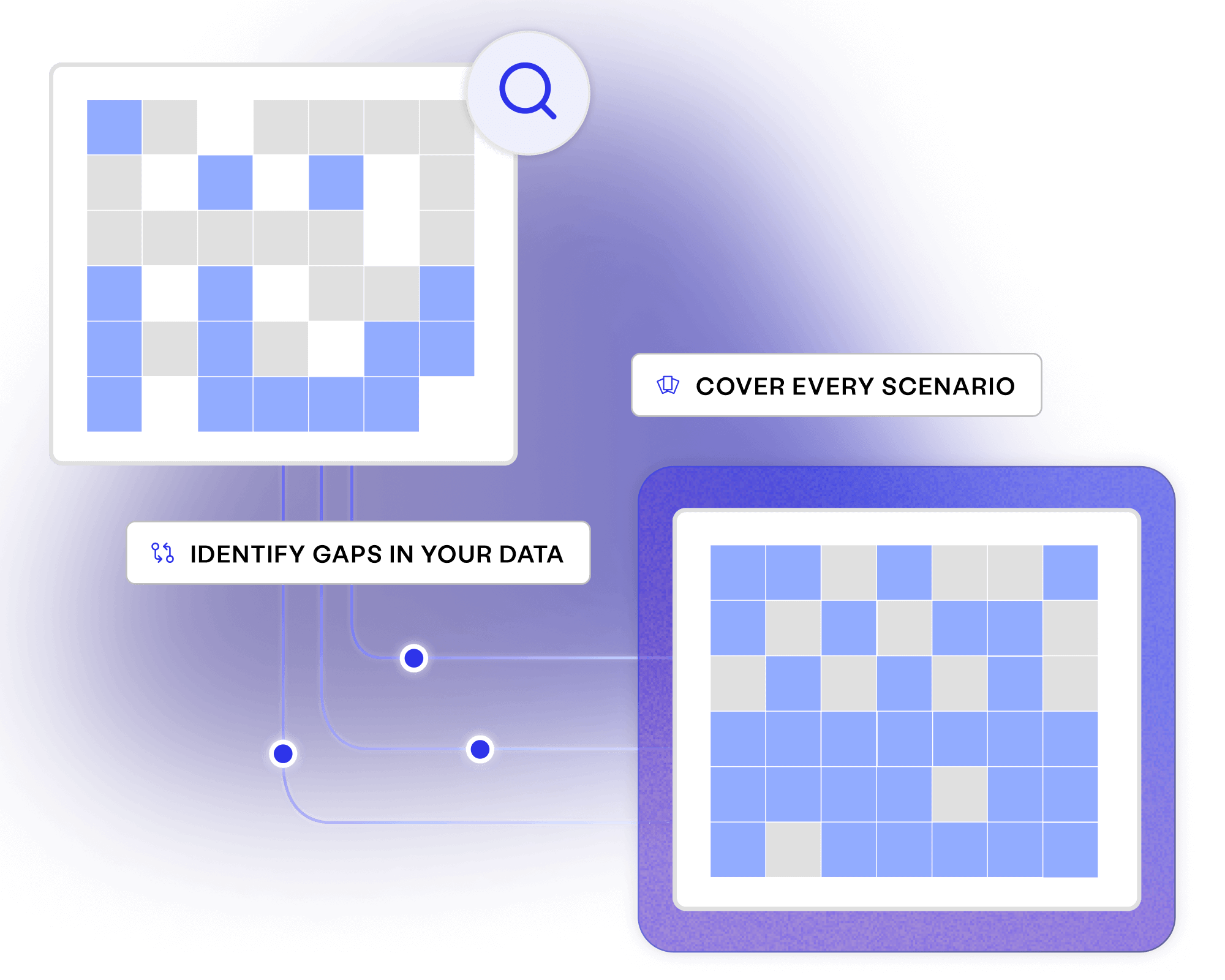

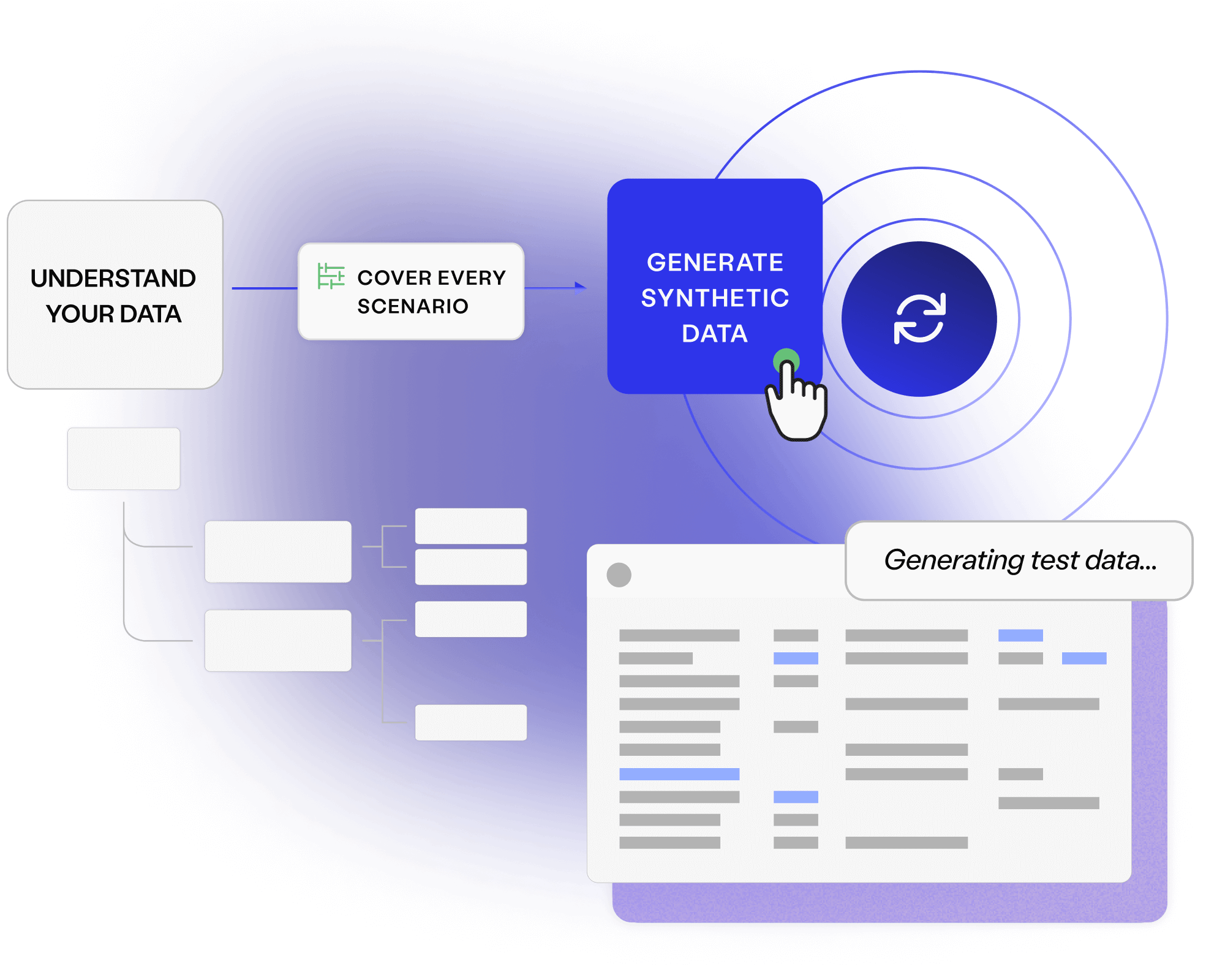

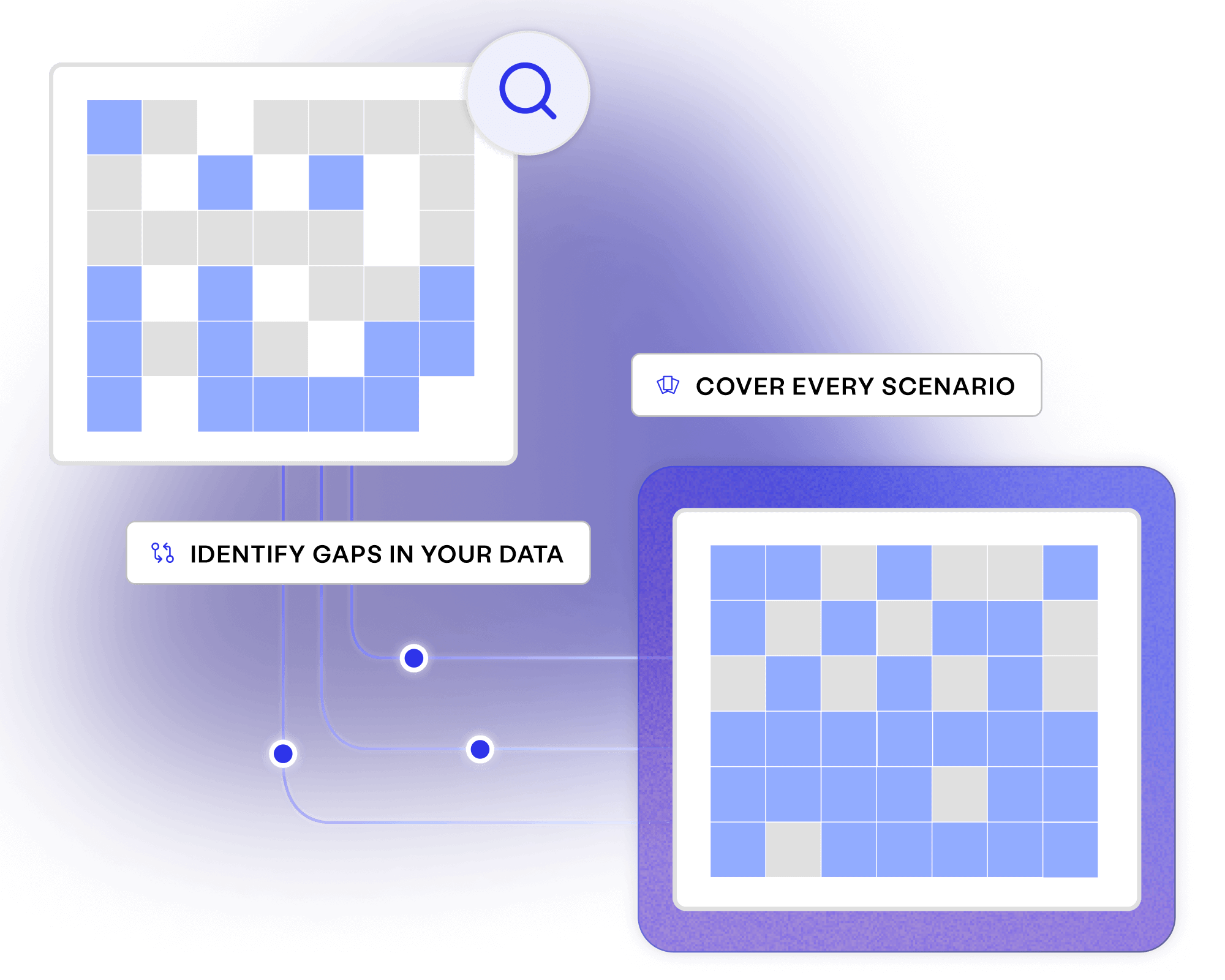

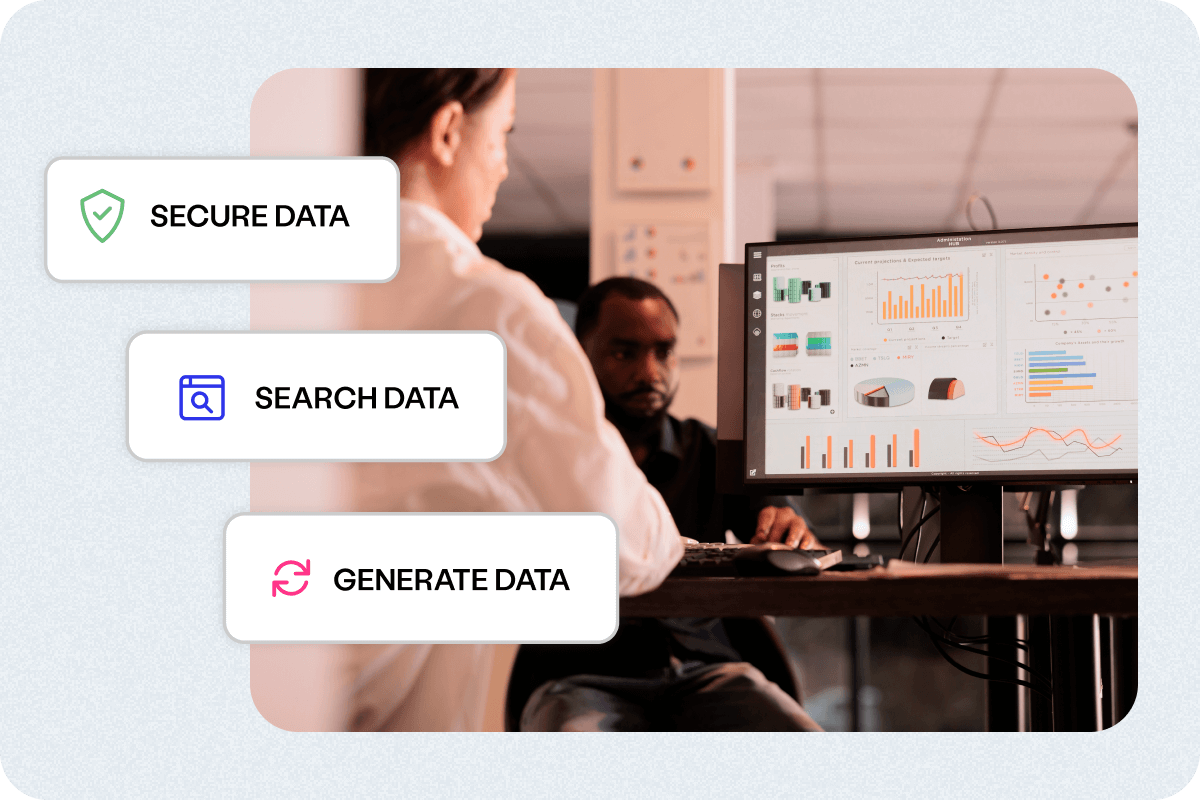

Gain a clear, visual understanding of your data flows and relationships to create high-quality synthetic data while ensuring compliance and eliminating risk.

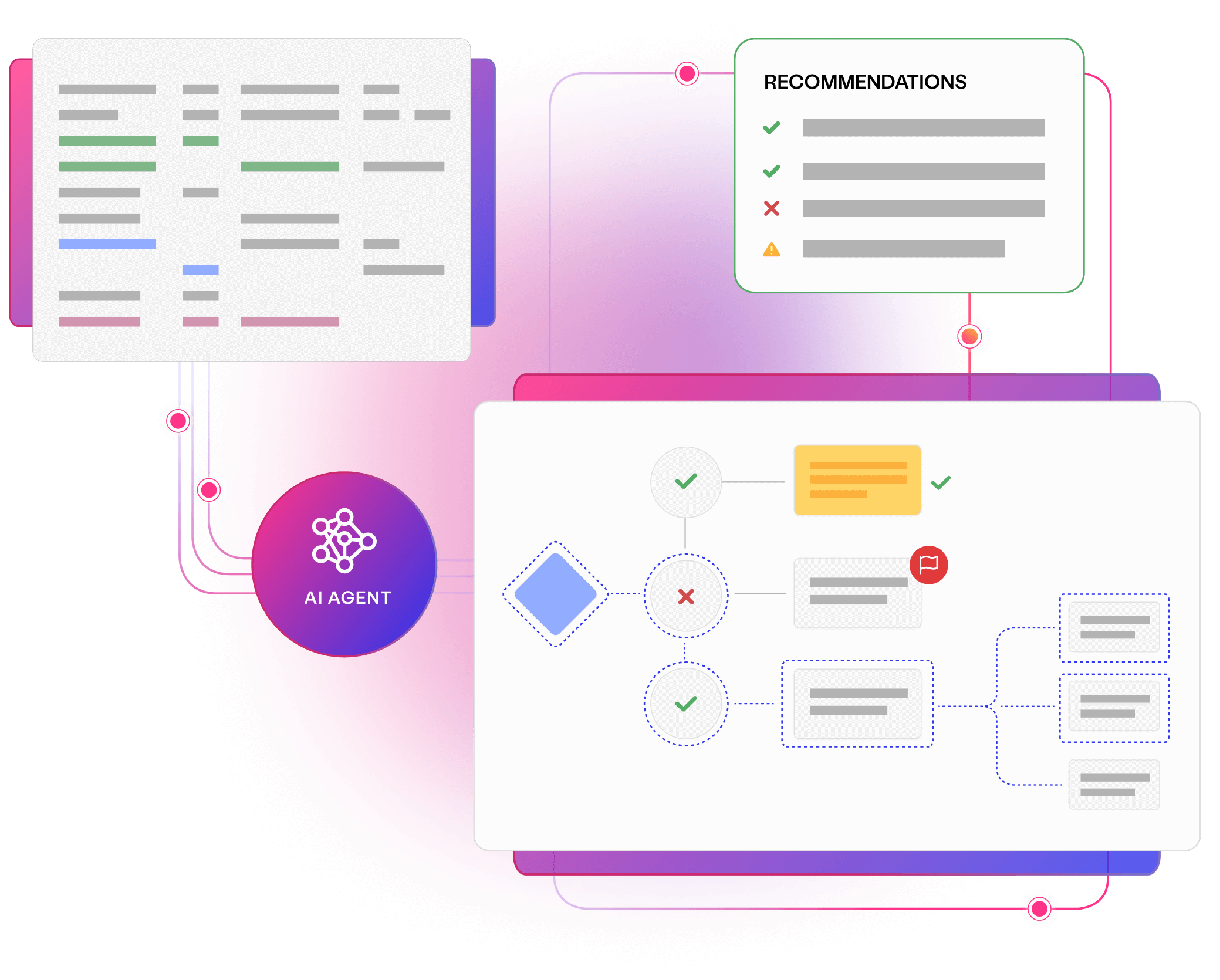

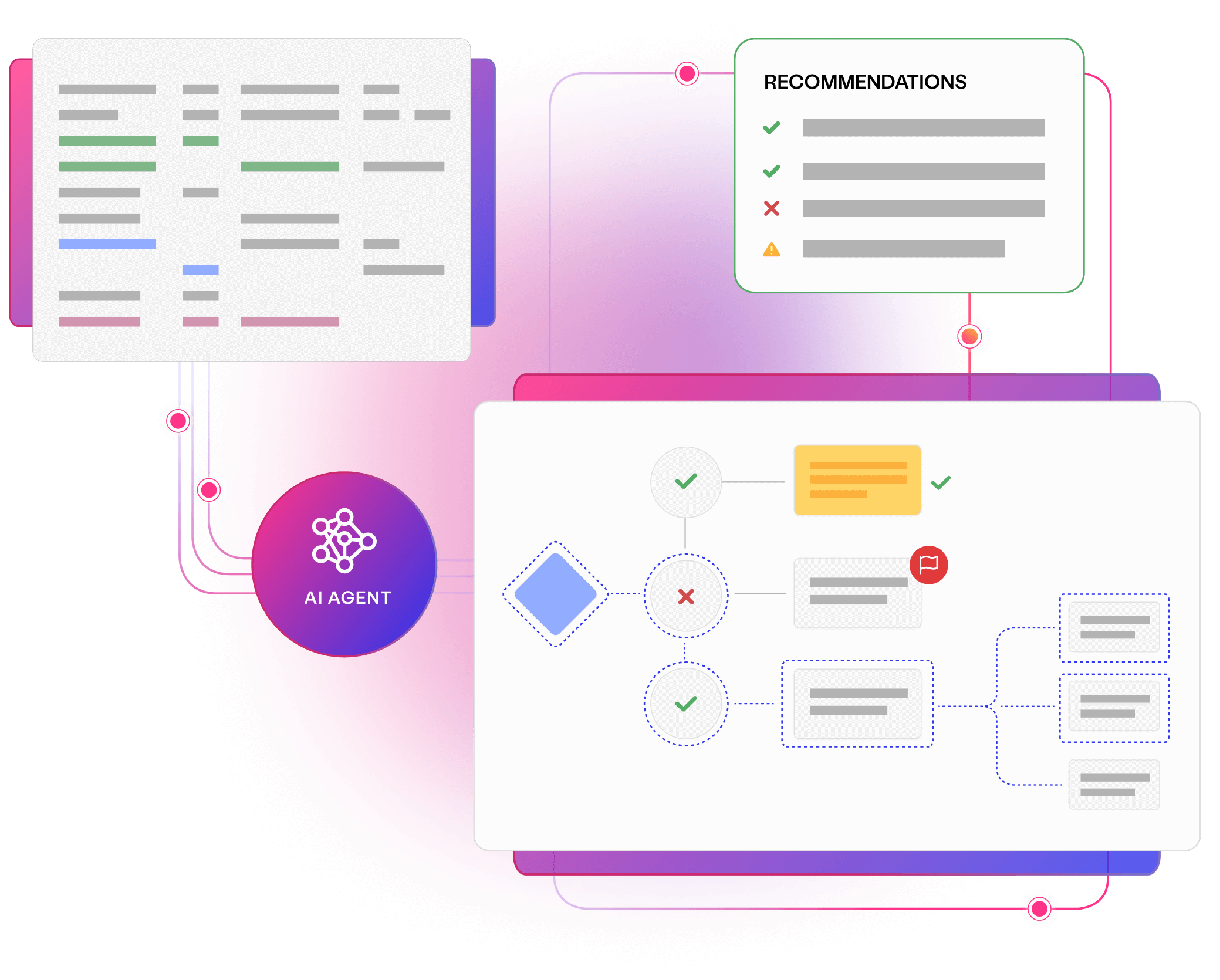

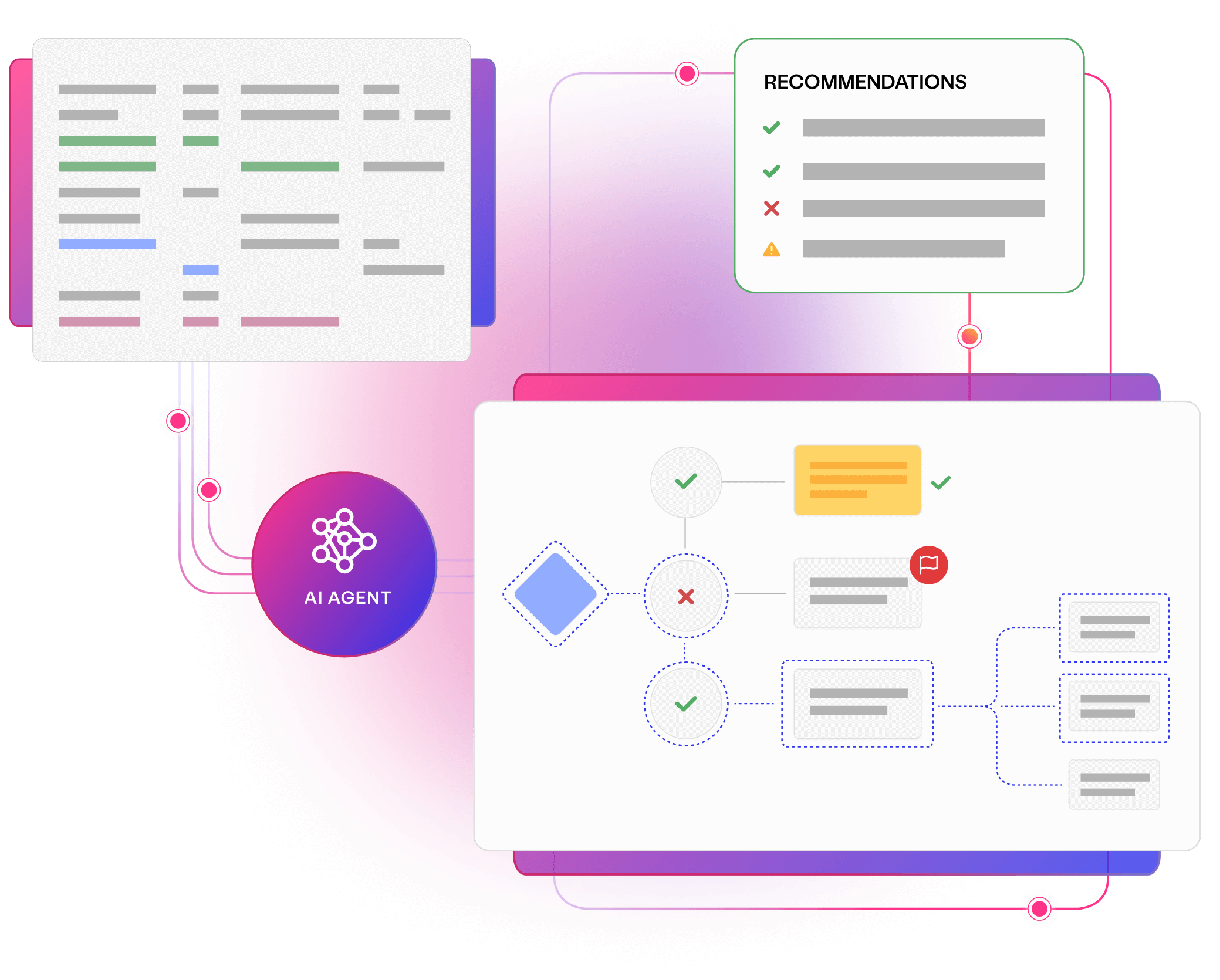

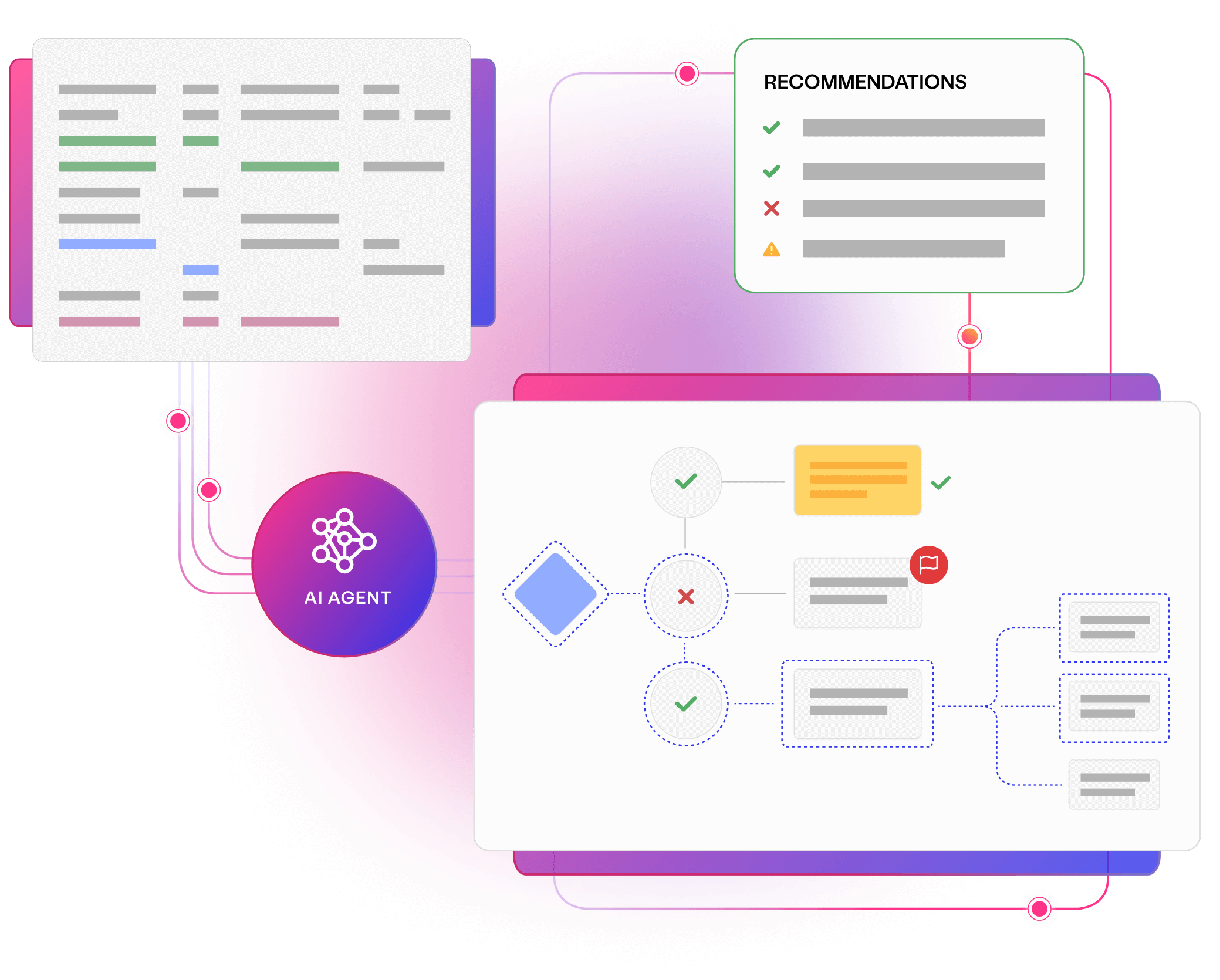

With complete visibility, ensure that sensitive information is correctly managed and that compliance risks are proactively addressed across your data landscape.

Minimise errors and inconsistencies by visualising dependencies and flows, ensuring that every piece of data is correctly identified and interpreted.

Gain a complete view of your test data landscape so that you can discover, centralise, and use data from multiple sources, minimising delays and improving data accuracy.

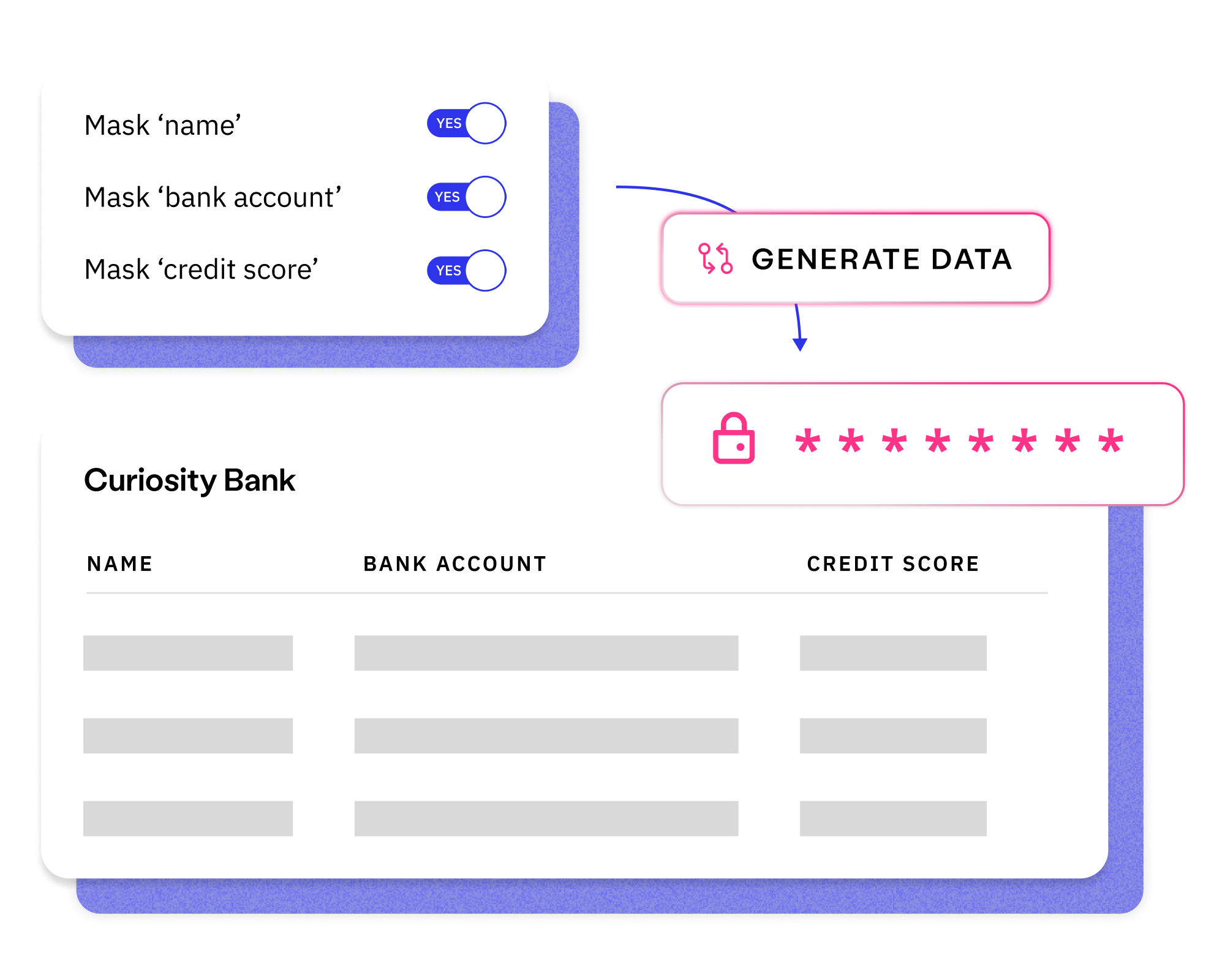

Use visual modelling to build a strong foundation for complex data management strategies like masking, synthetic data generation, and automated provisioning.

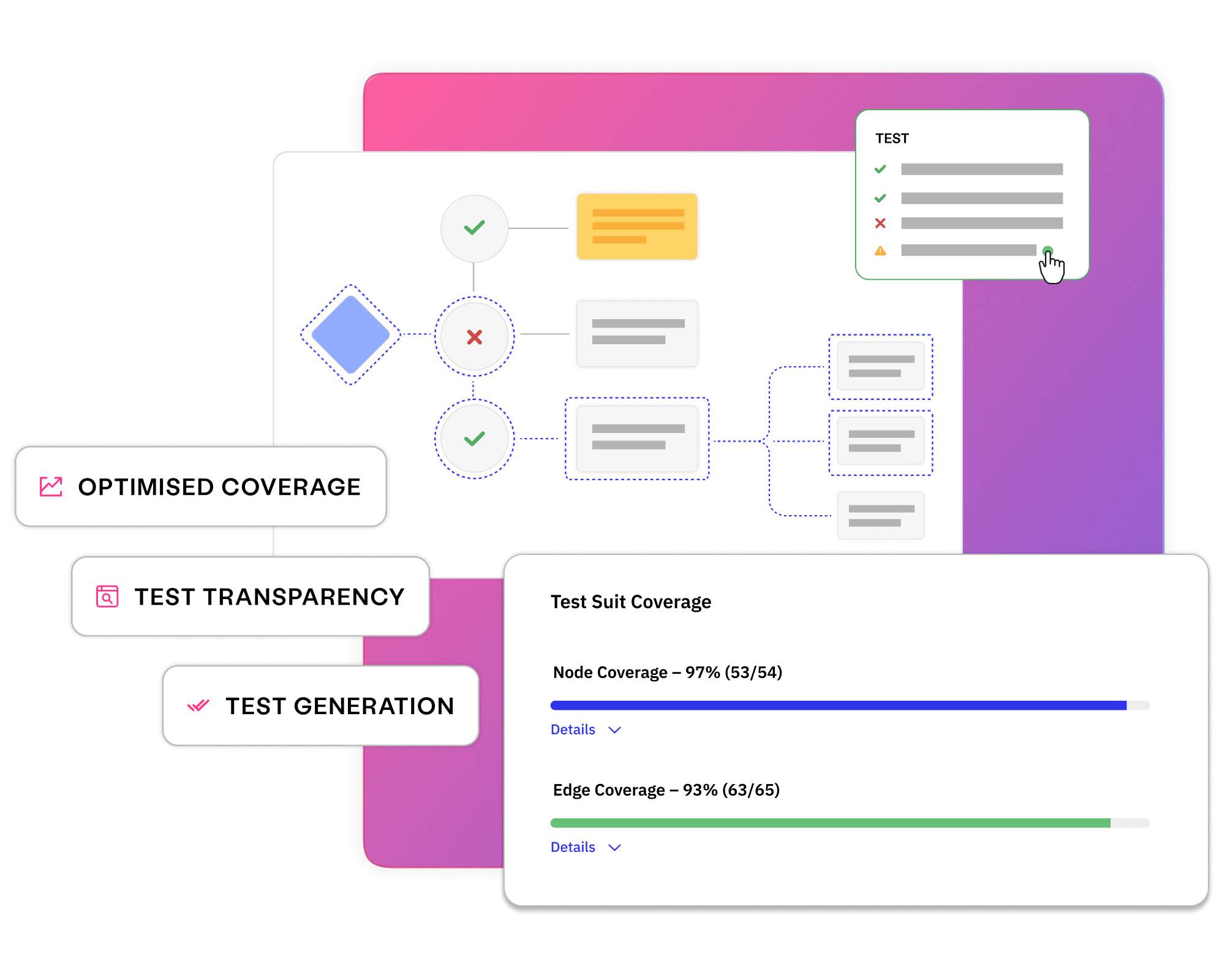

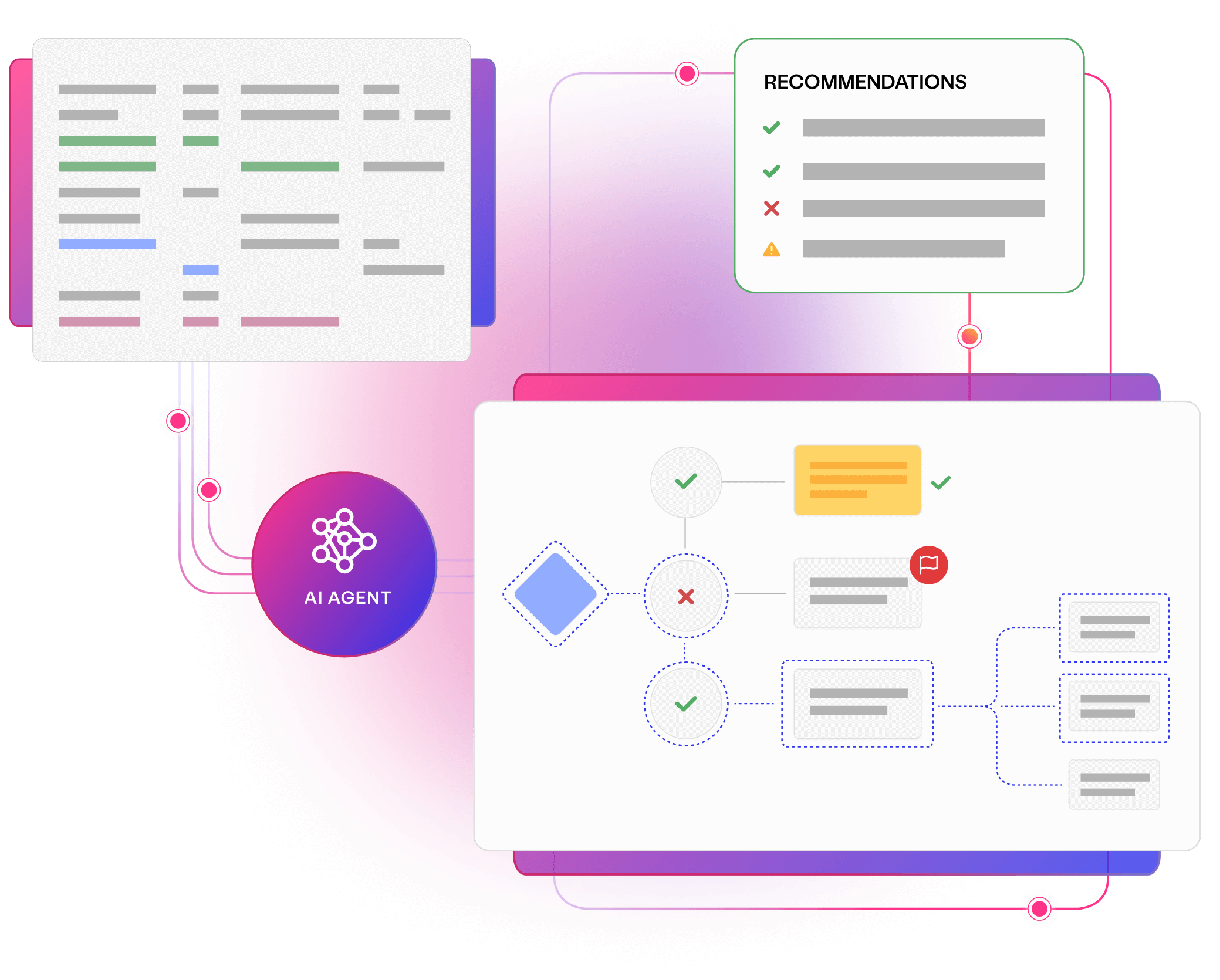

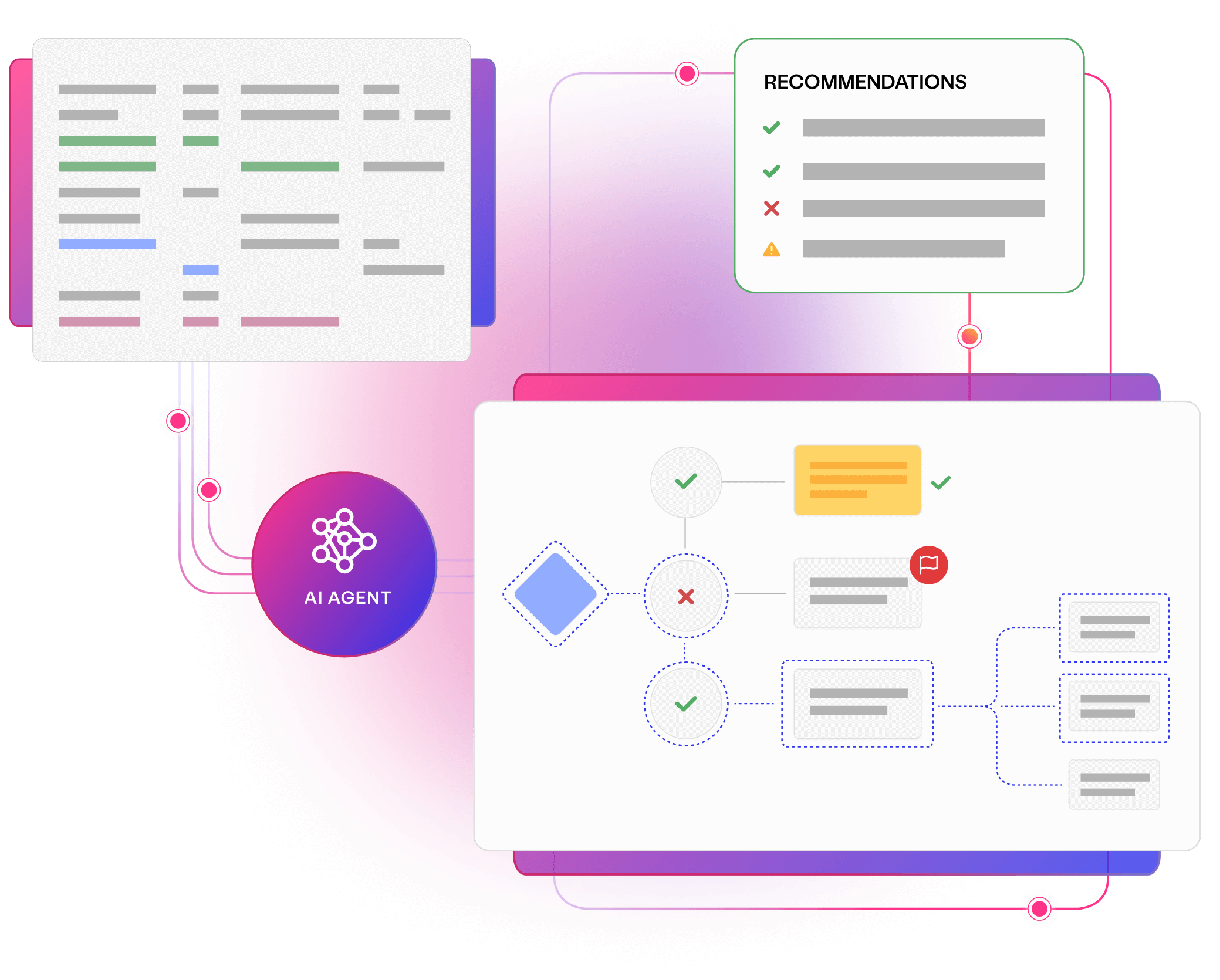

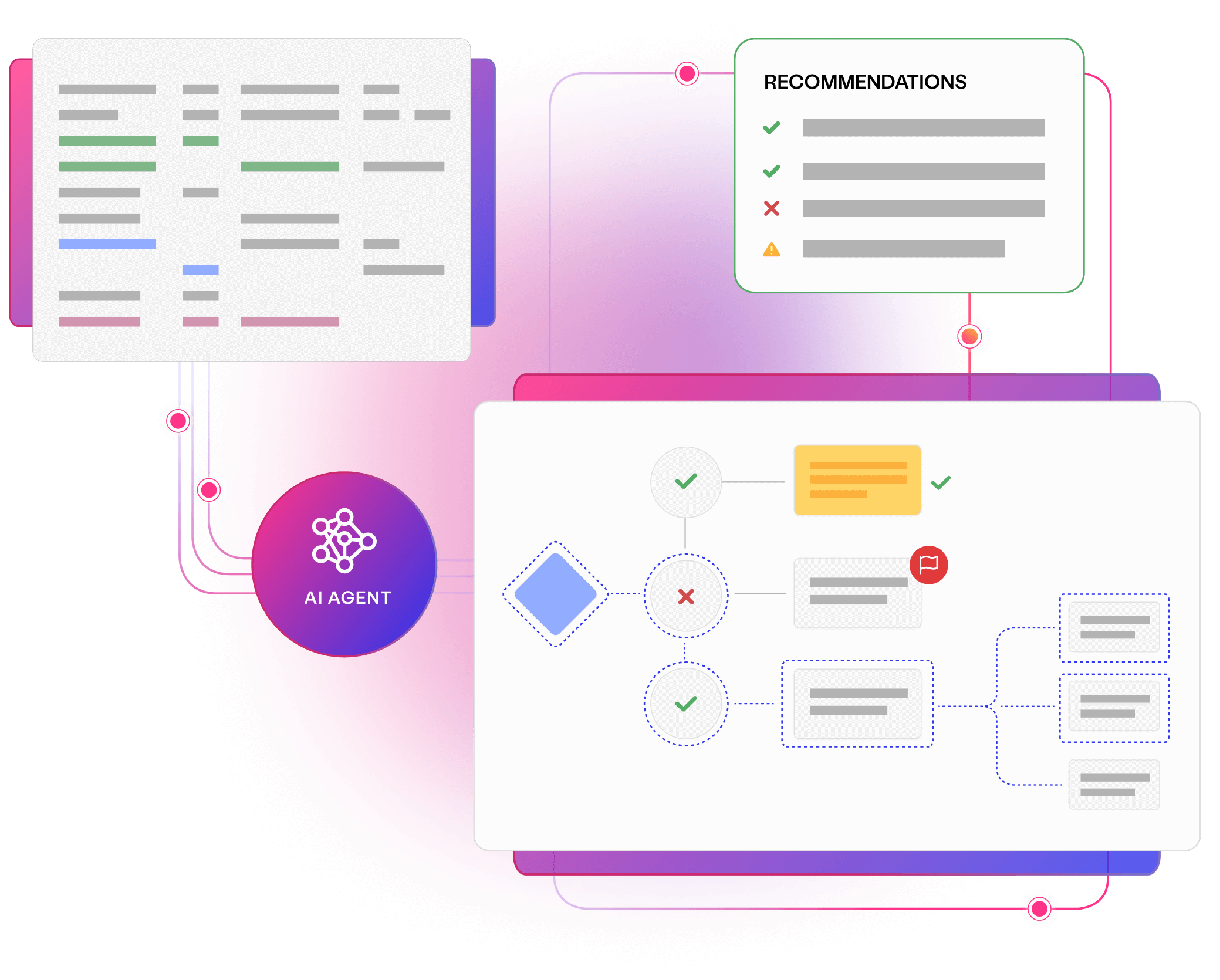

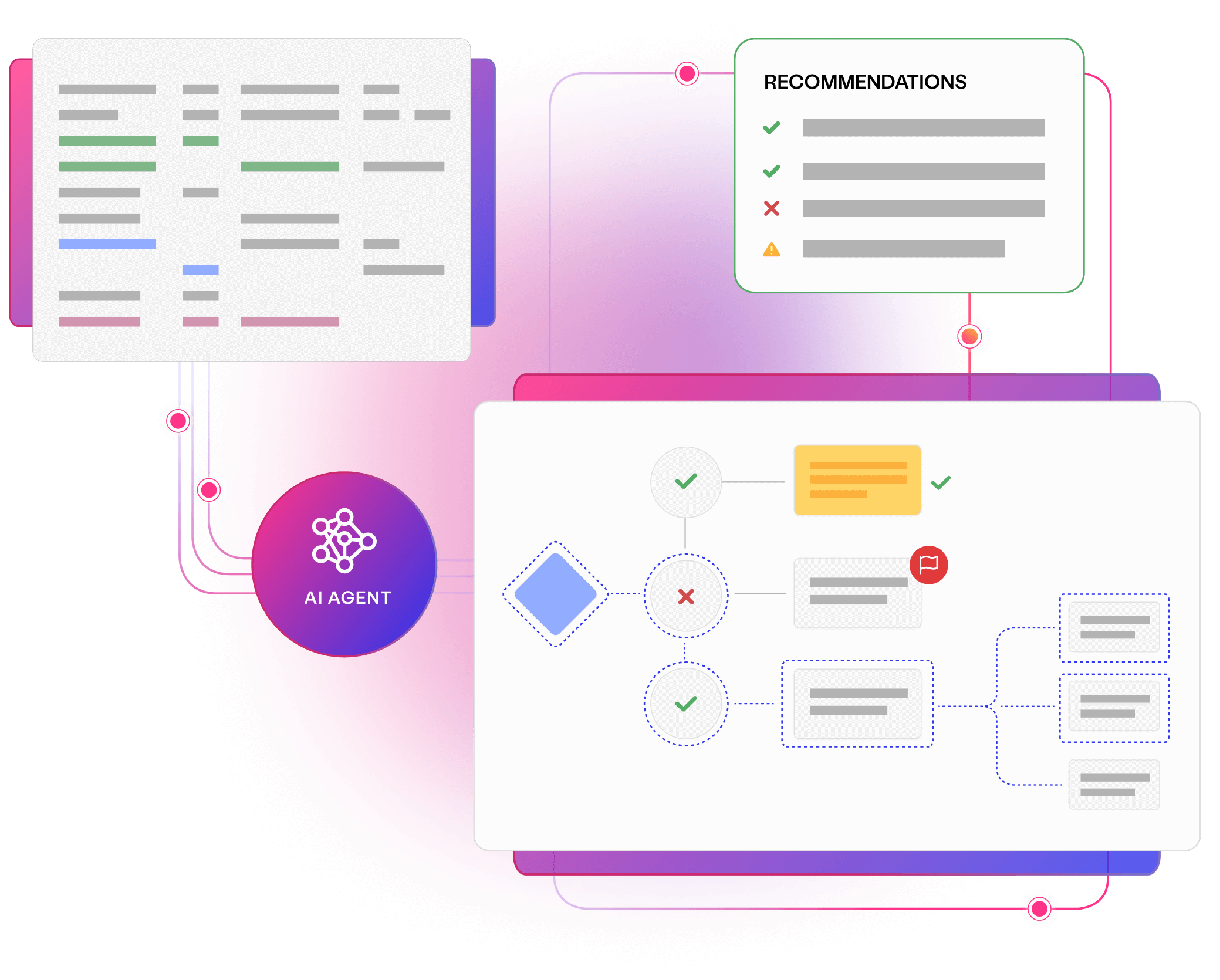

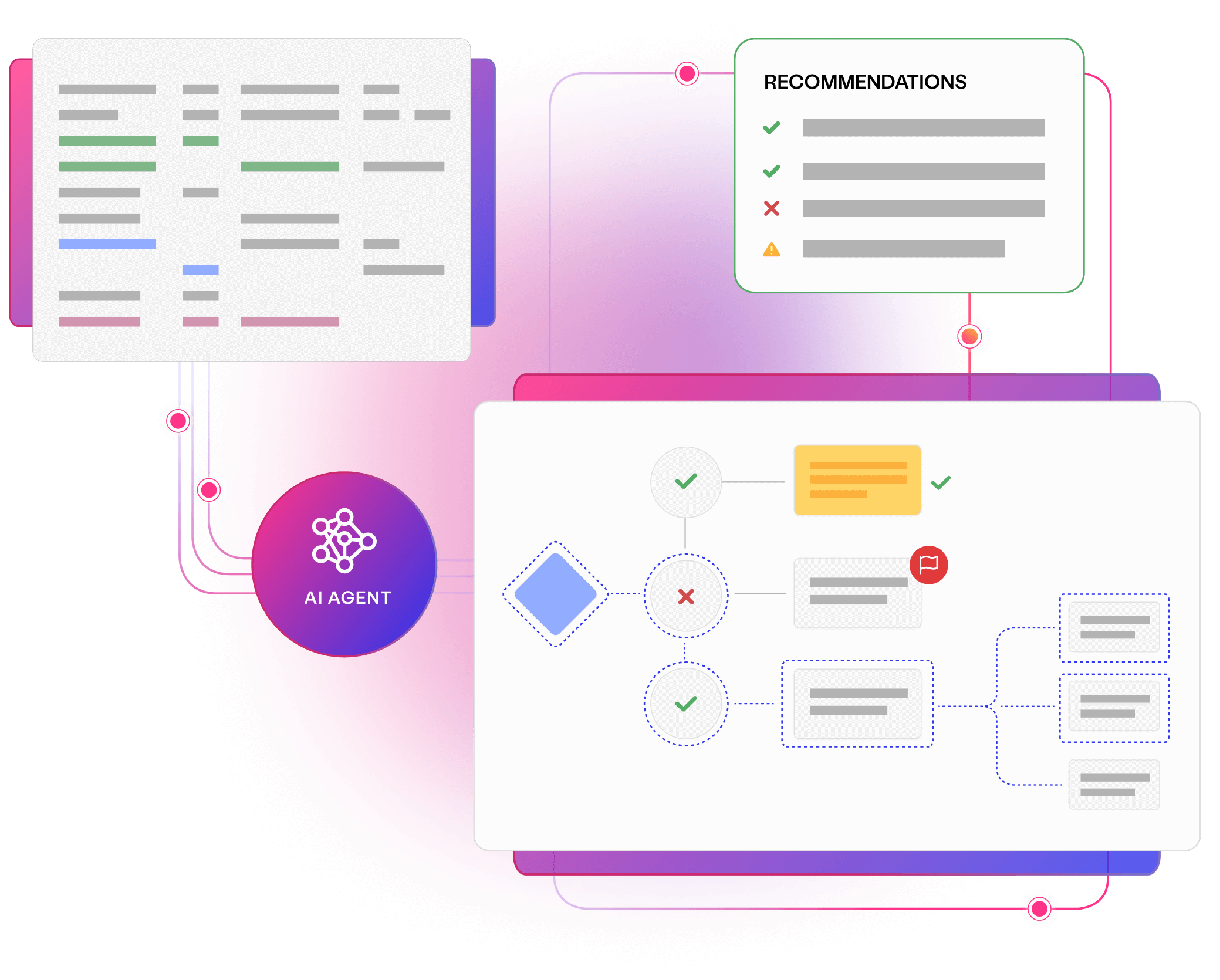

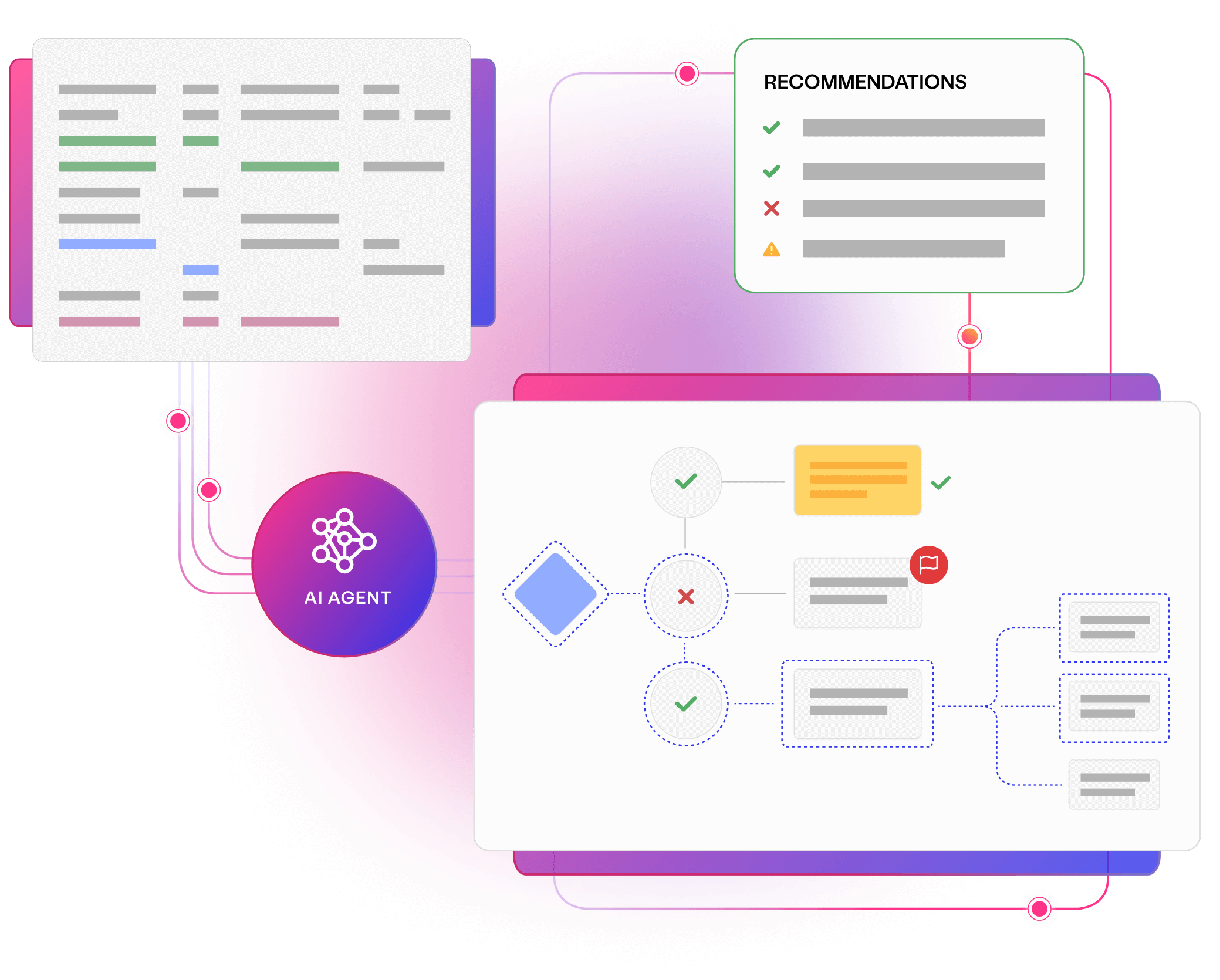

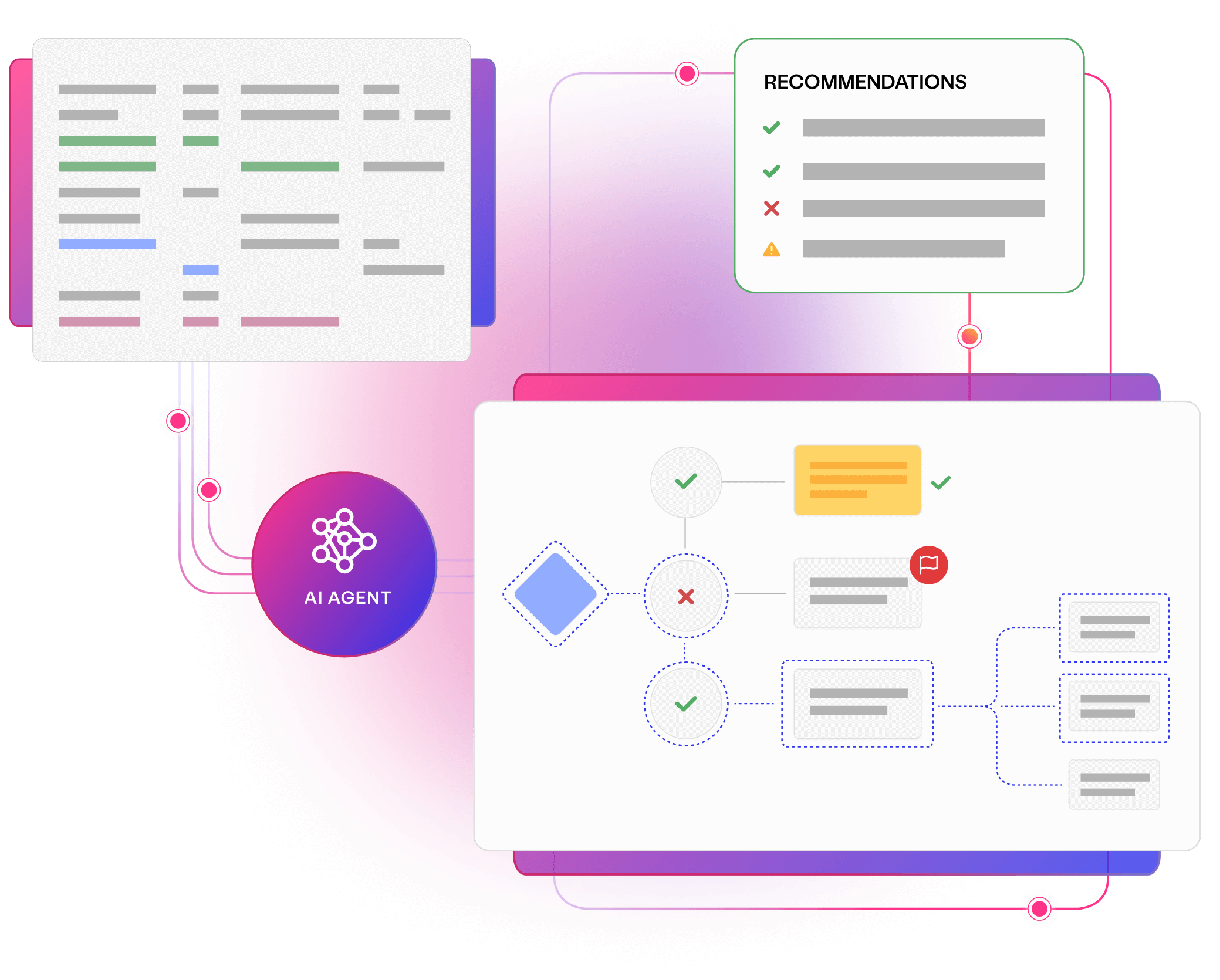

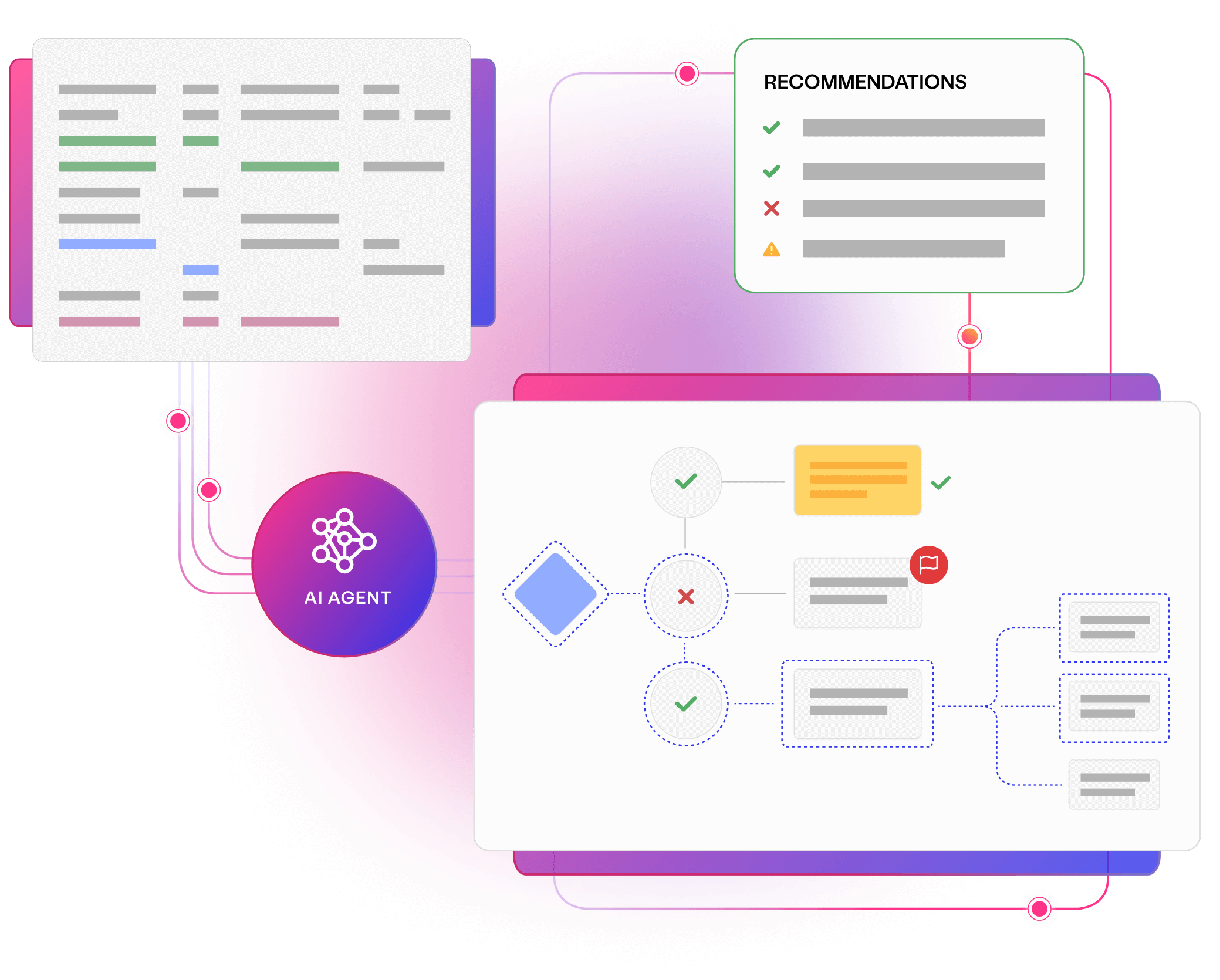

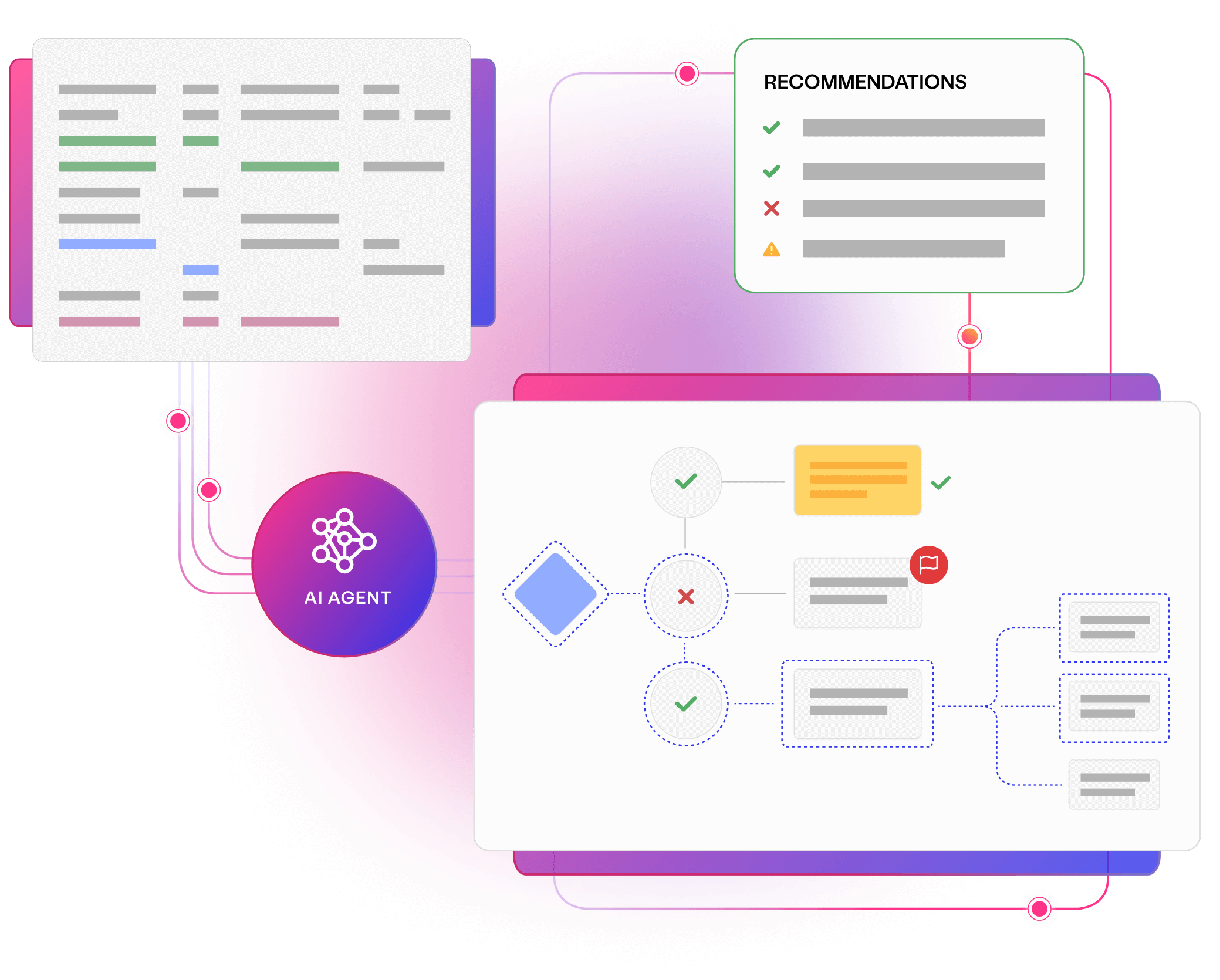

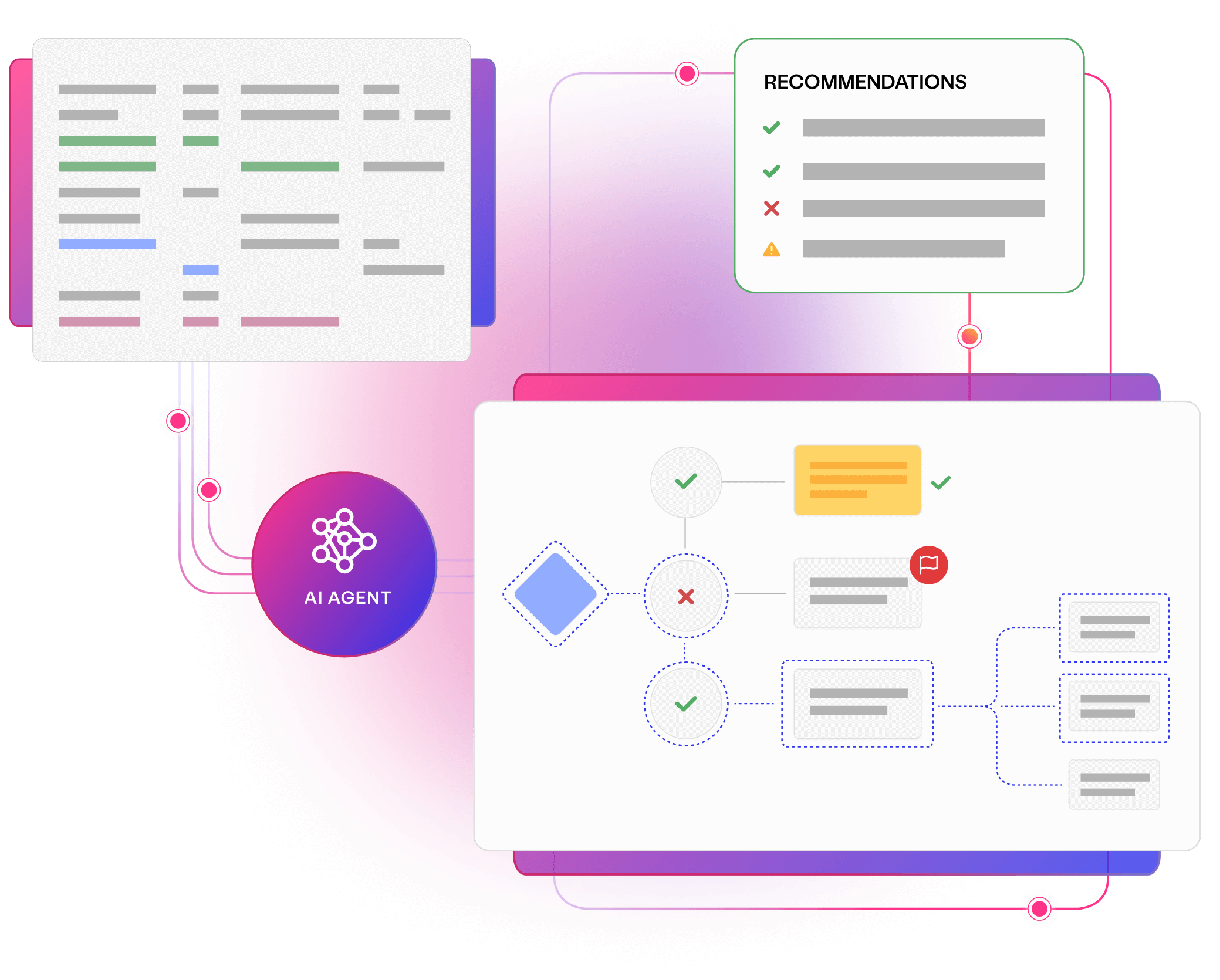

Uncover smarter test data with Curiosity’s all-in-one, AI-accelerated platform, which offers integrated, secure, and intuitive tools to simplify complex data landscapes and overcome test data management challenges.

Simplify your complex data landscape and provide confidence and clarity at every step of your data journey with Enterprise Test Data.

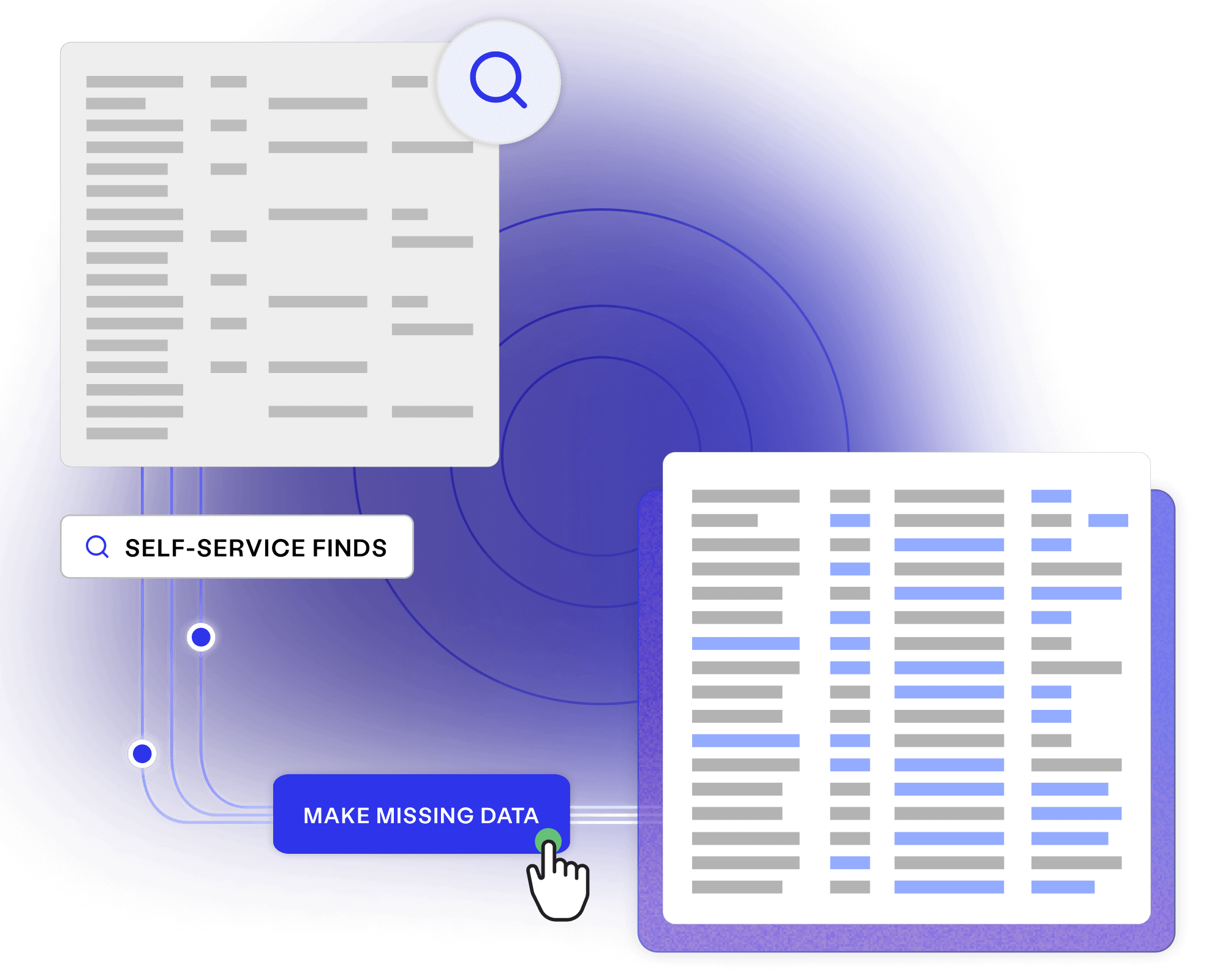

Manually finding and making data adds up to one of the most resource-intensive, time-consuming tasks in testing and development. Automating provisioning can make the difference when striving to hit release deadlines and stay on budget.

Senior Test Data Engineer

Read a resource, or meet with an expert, to discover how data modelling can maximise visibility and coverage throughout your entire enterprise.

Data generation at a large financial institution: Self-service generation removed 5-day provisioning bottlenecks, hitting the volumes and variety...

Meet with a Curiosity expert: Talk to us to learn how synthetic data generation can embed productivity, quality and privacy...