Enterprise Test Data brochure

Discover how you can deliver complete and compliant data on demand across the enterprise.

AI-powered. End-to-end. Your complete test data management platform.

Explore Curiosity's collection of webinars, podcasts, blogs and success stories, covering everything from visual modelling to artificial intelligence and test data management.

Deliver superior test data and overcome the challenges of complexity, legacy, scale, and regulation with Curiosity Software.

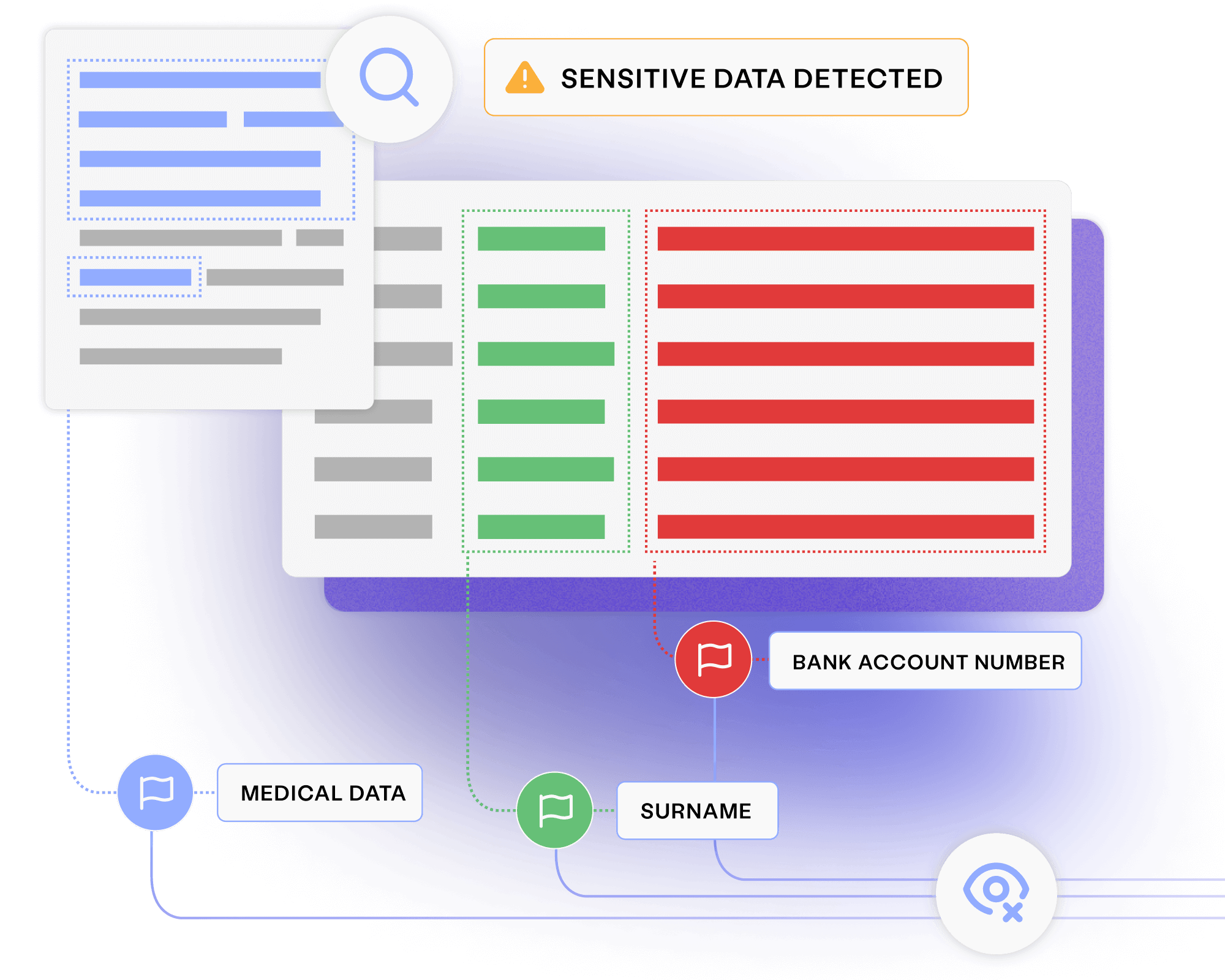

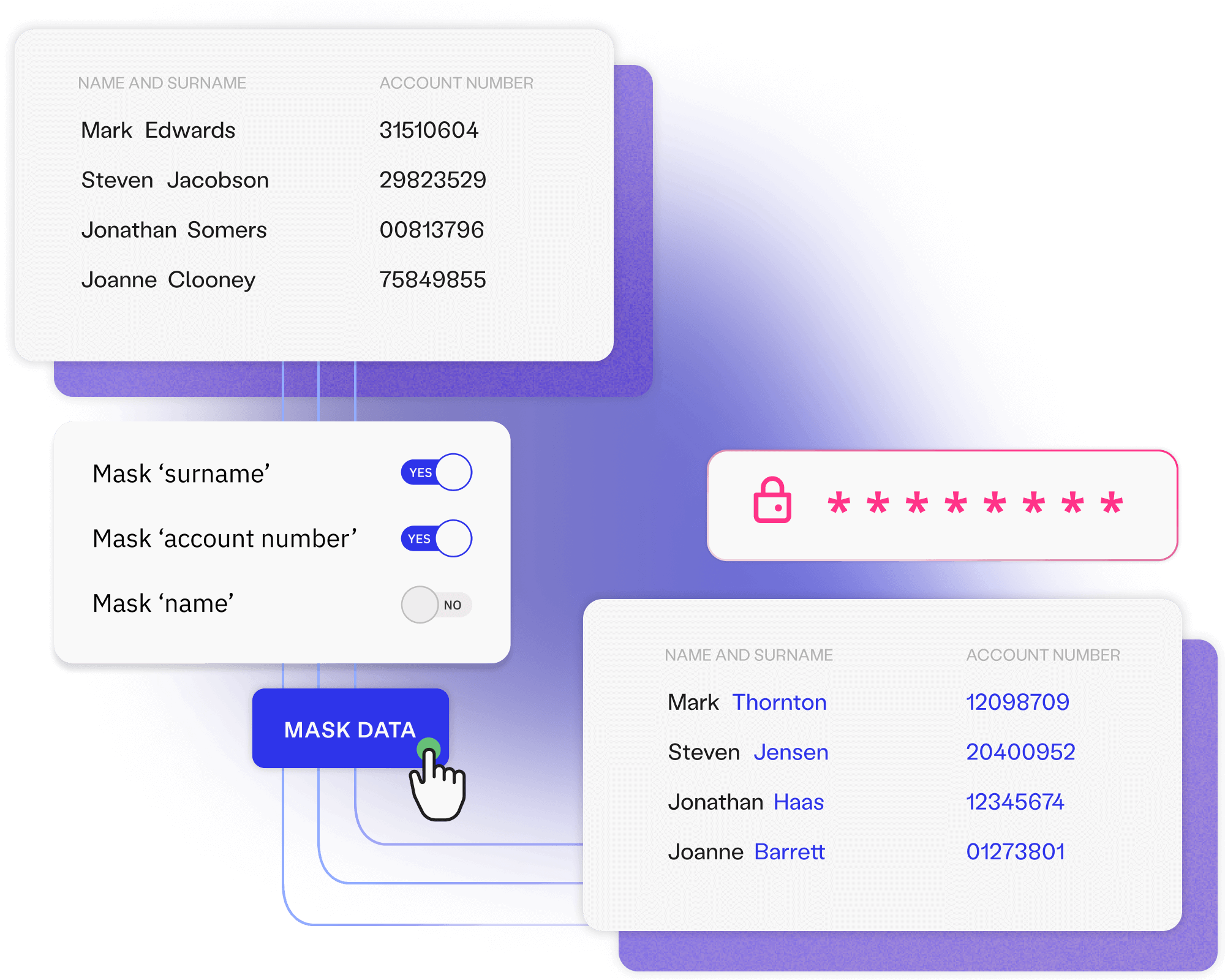

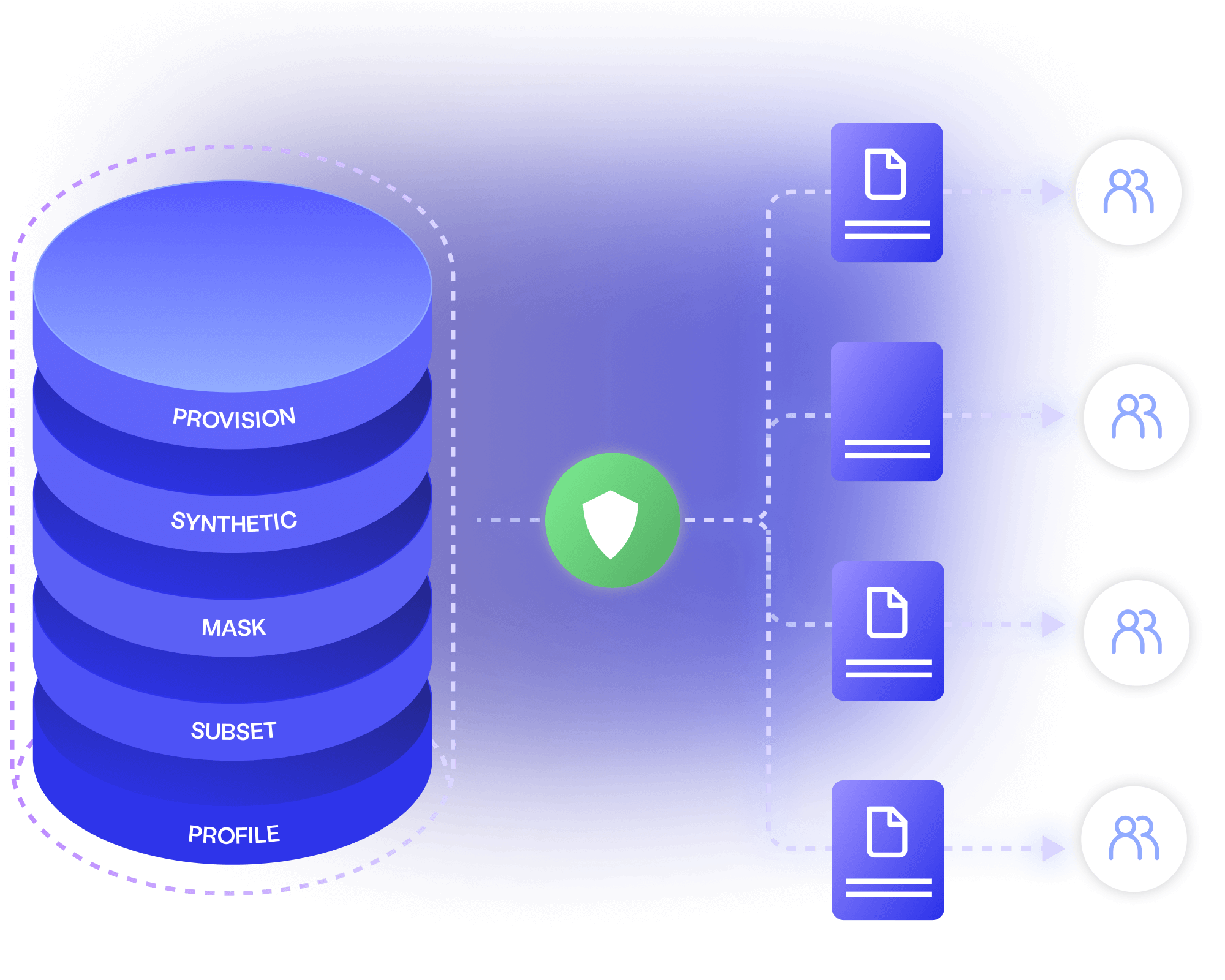

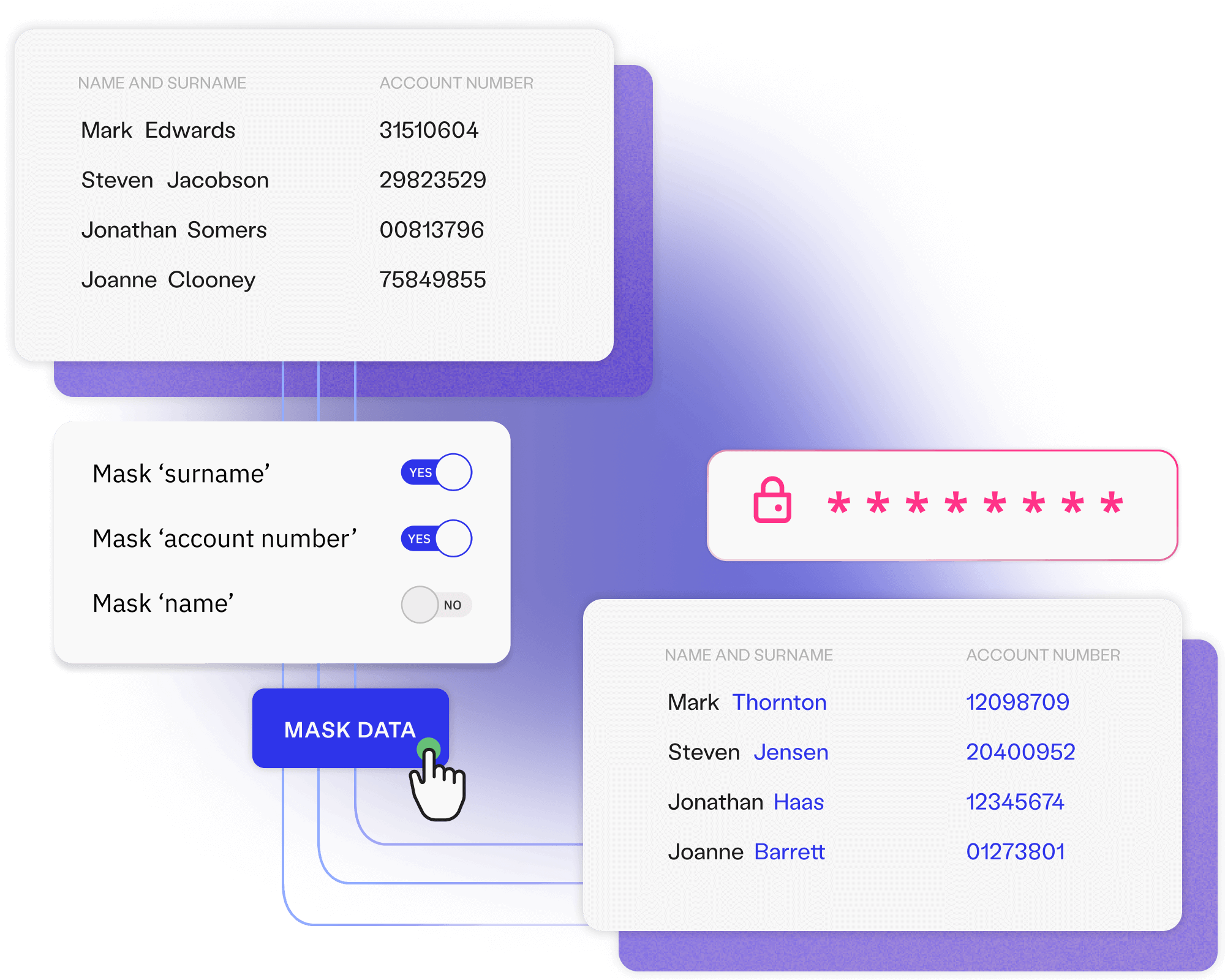

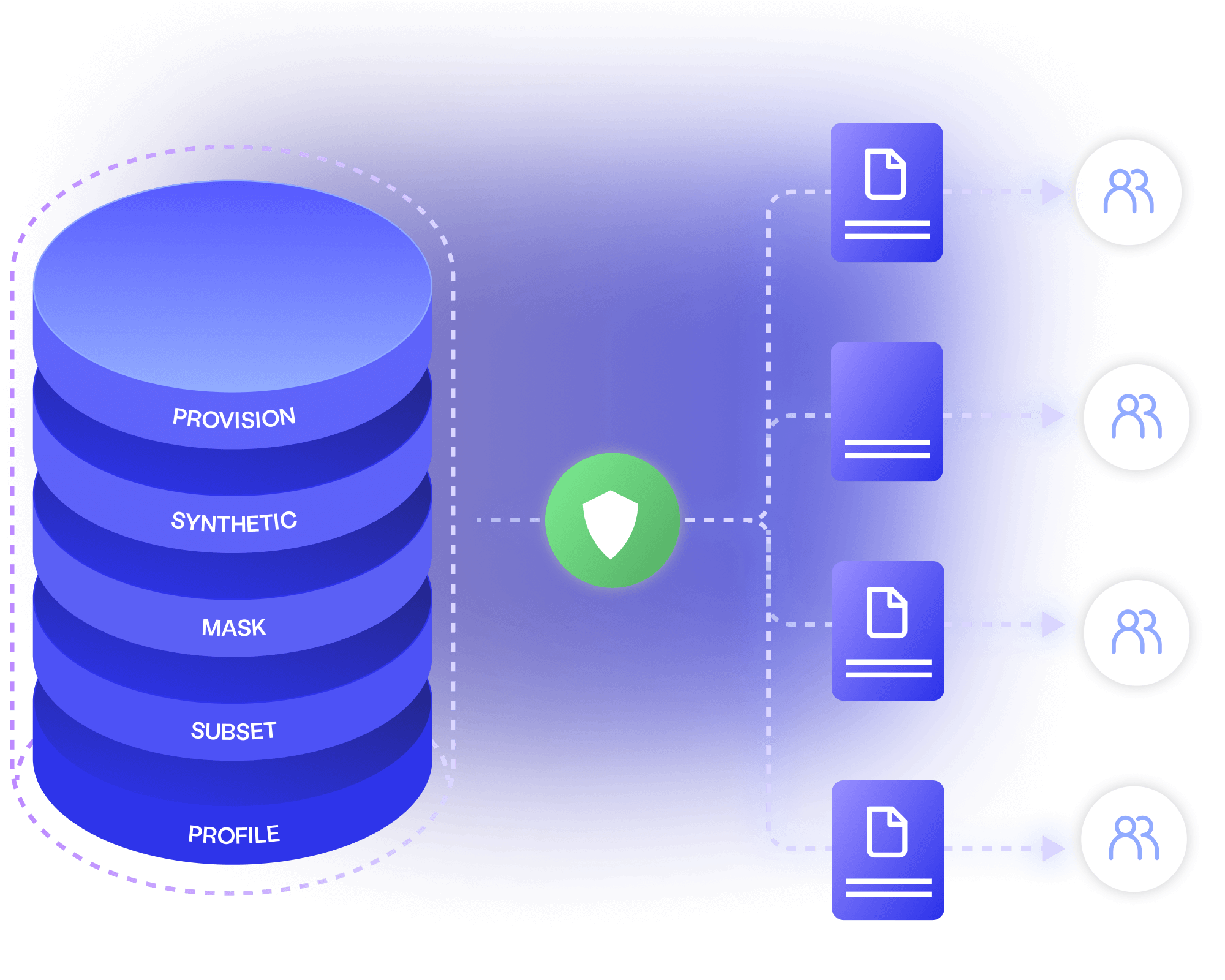

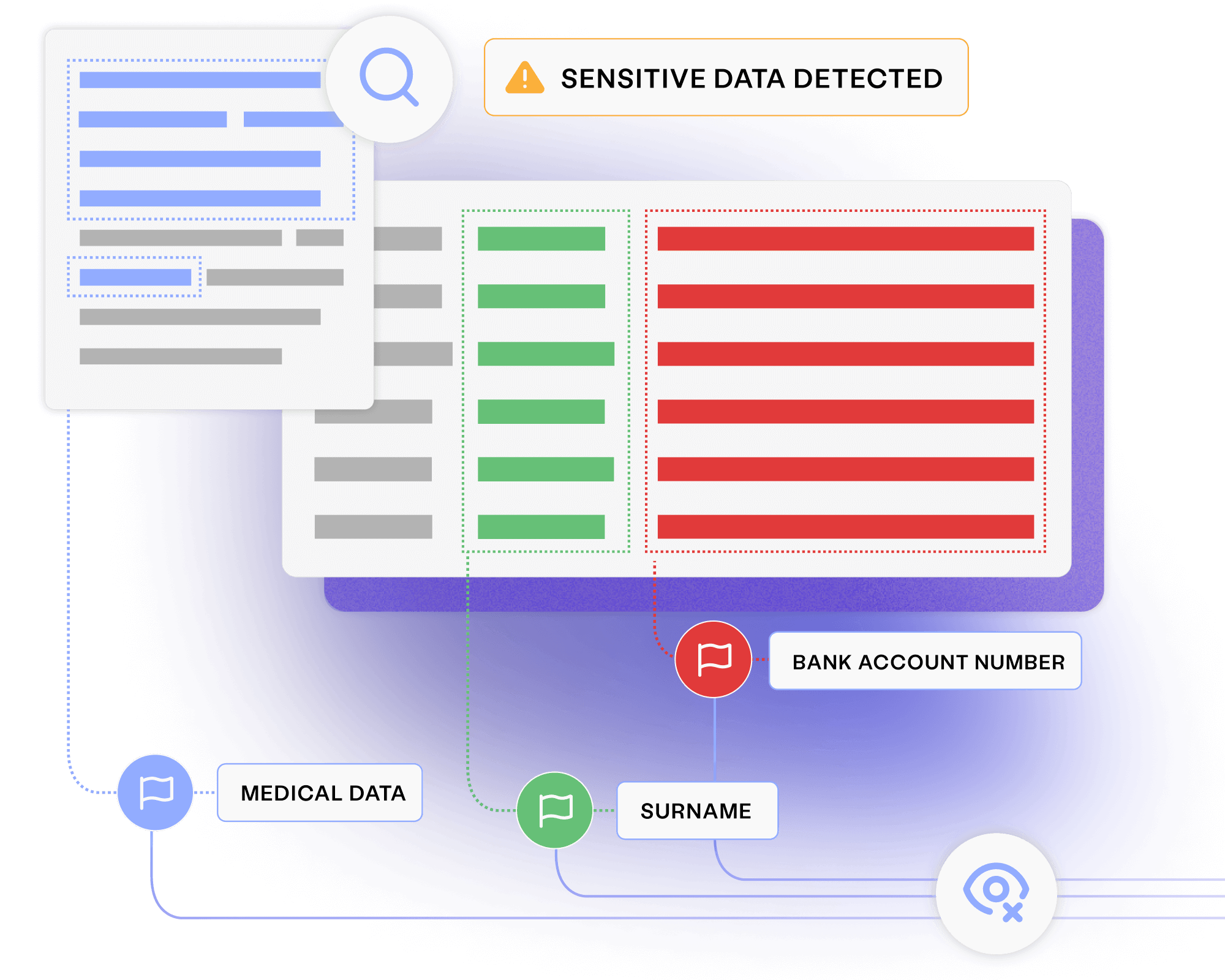

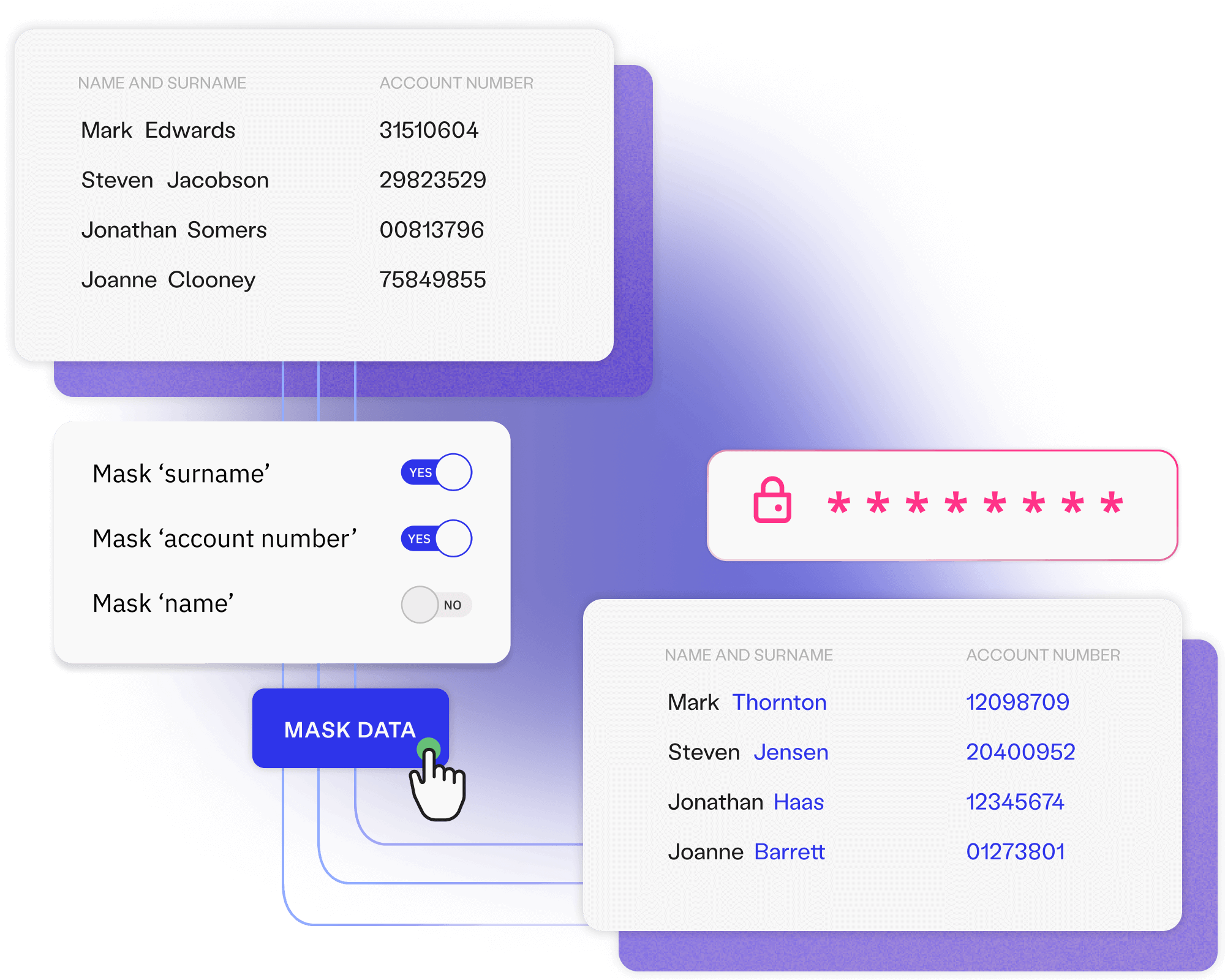

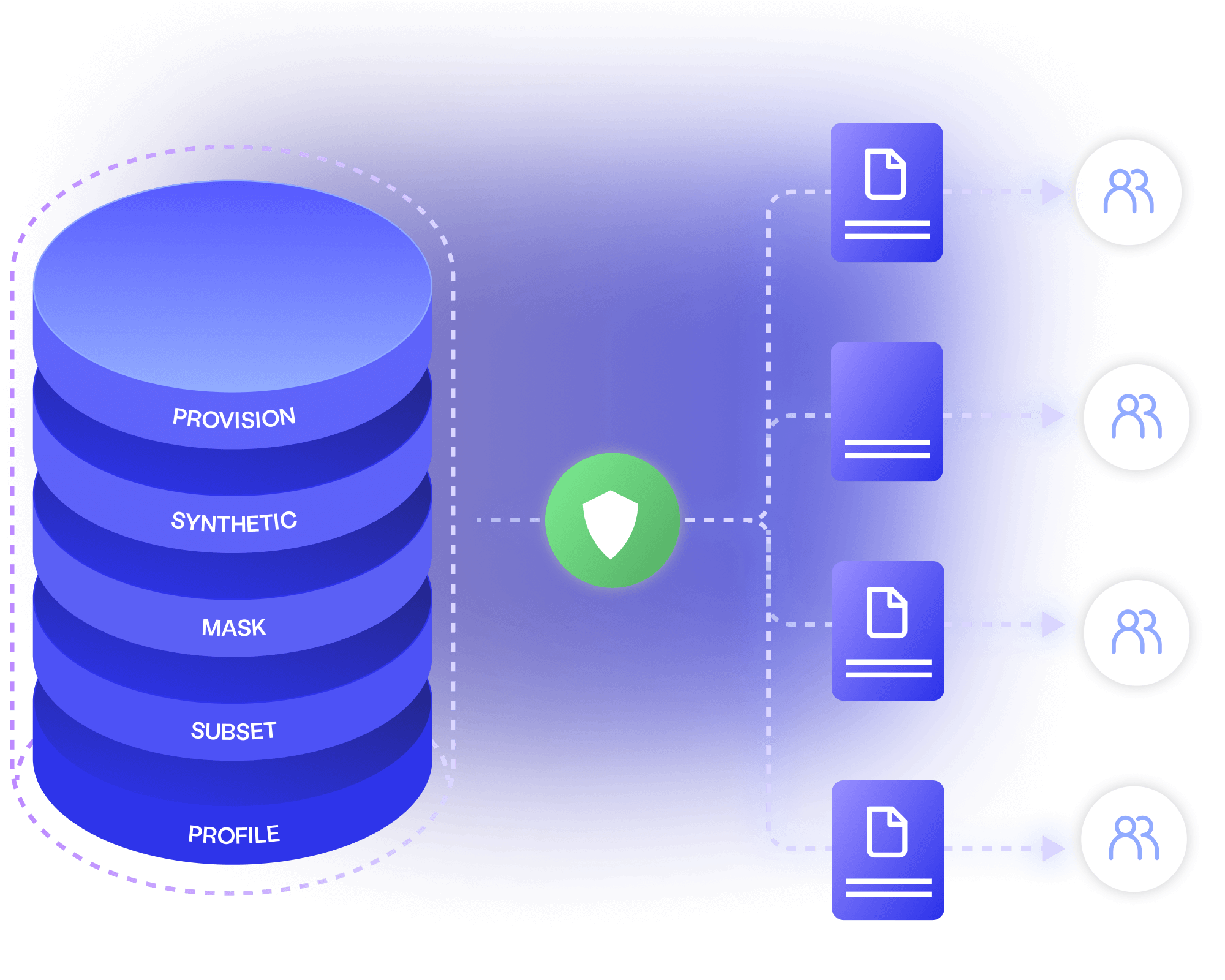

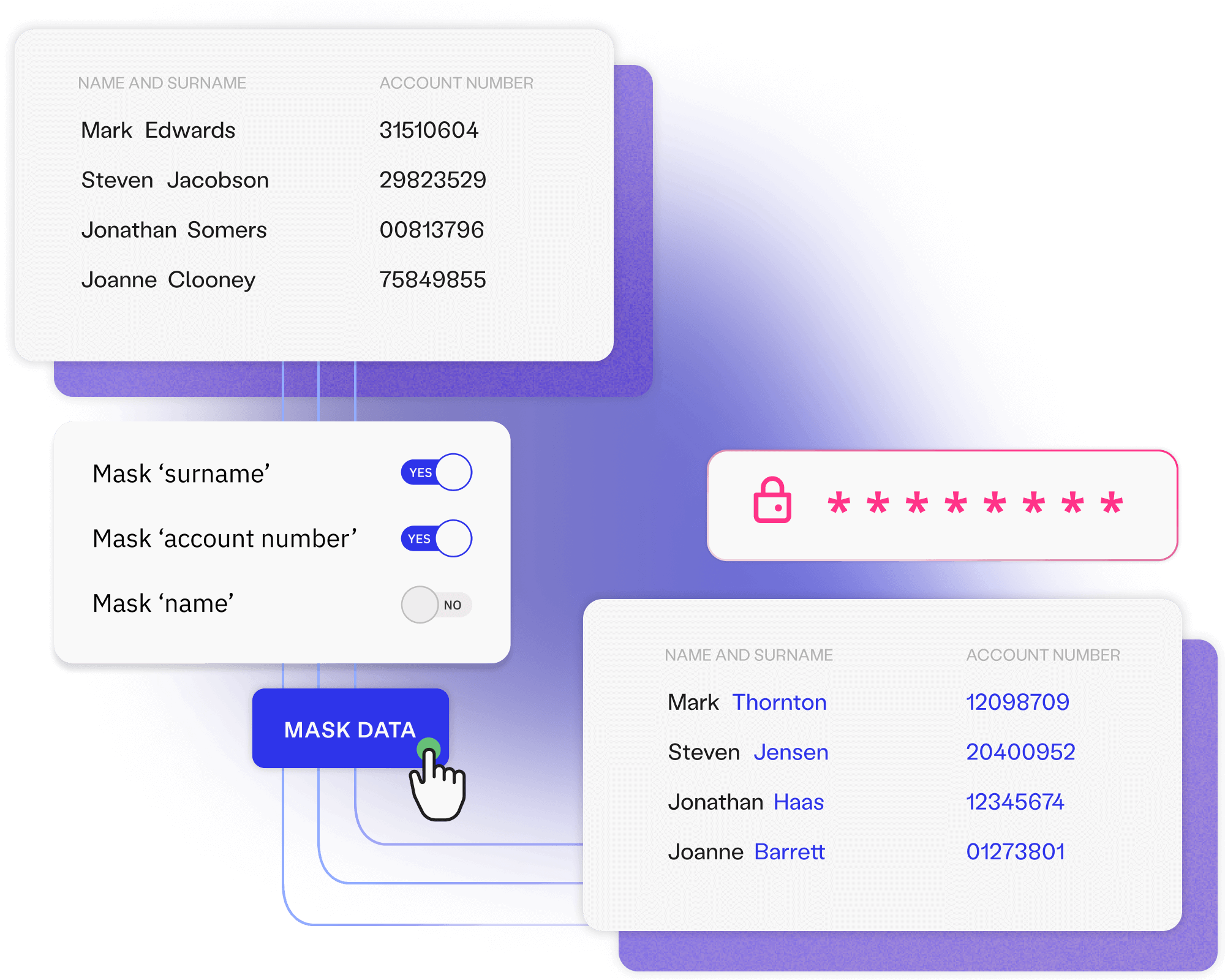

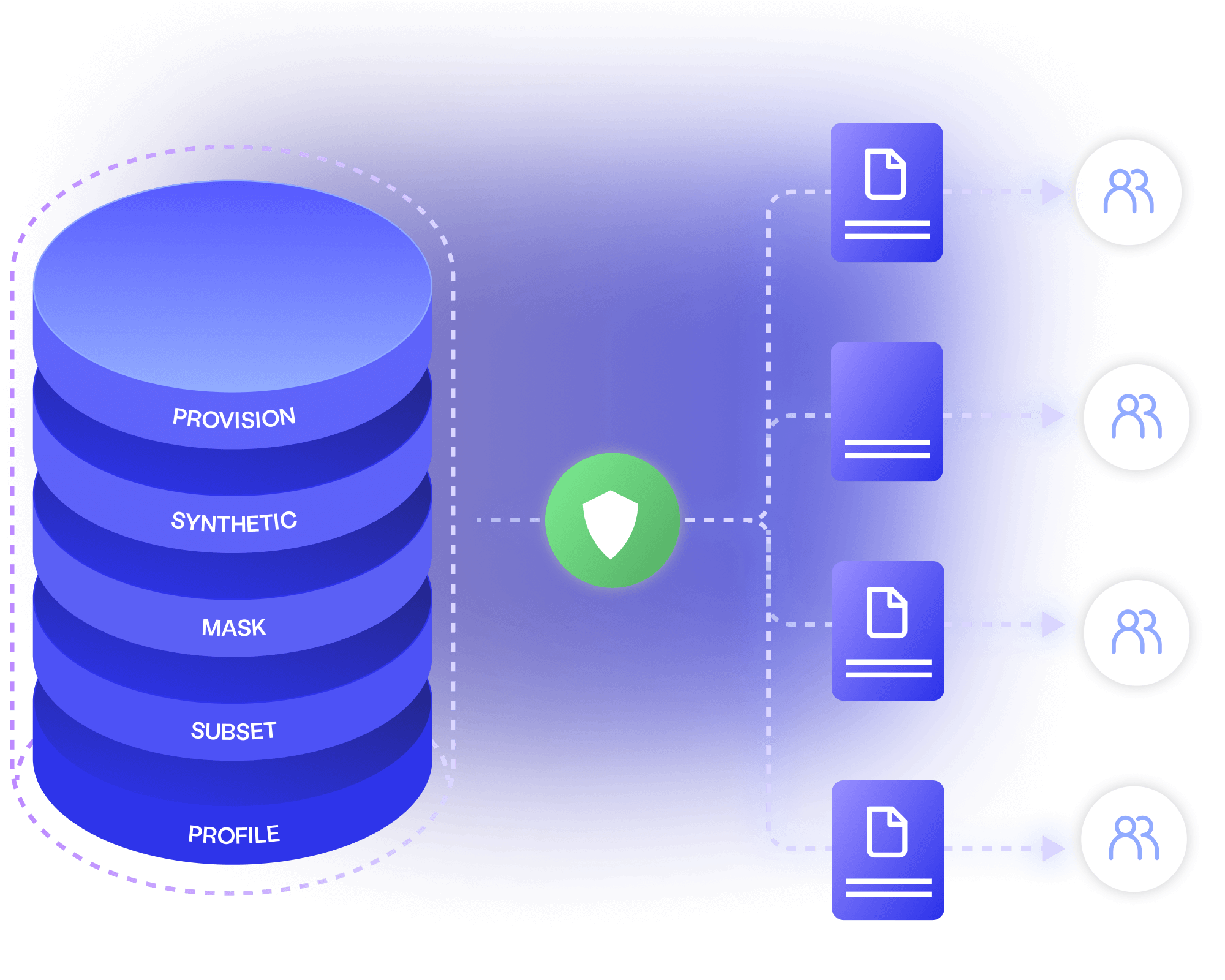

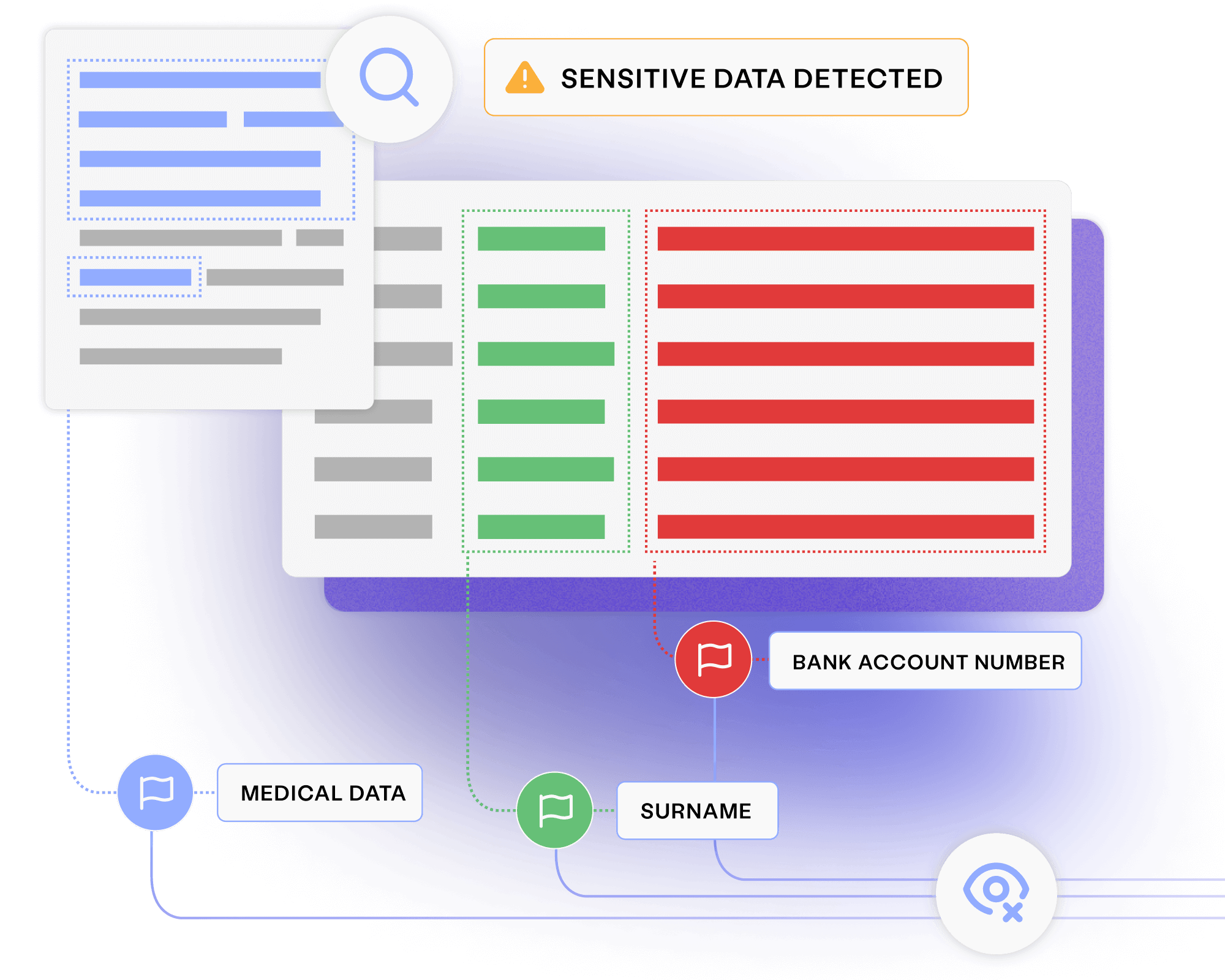

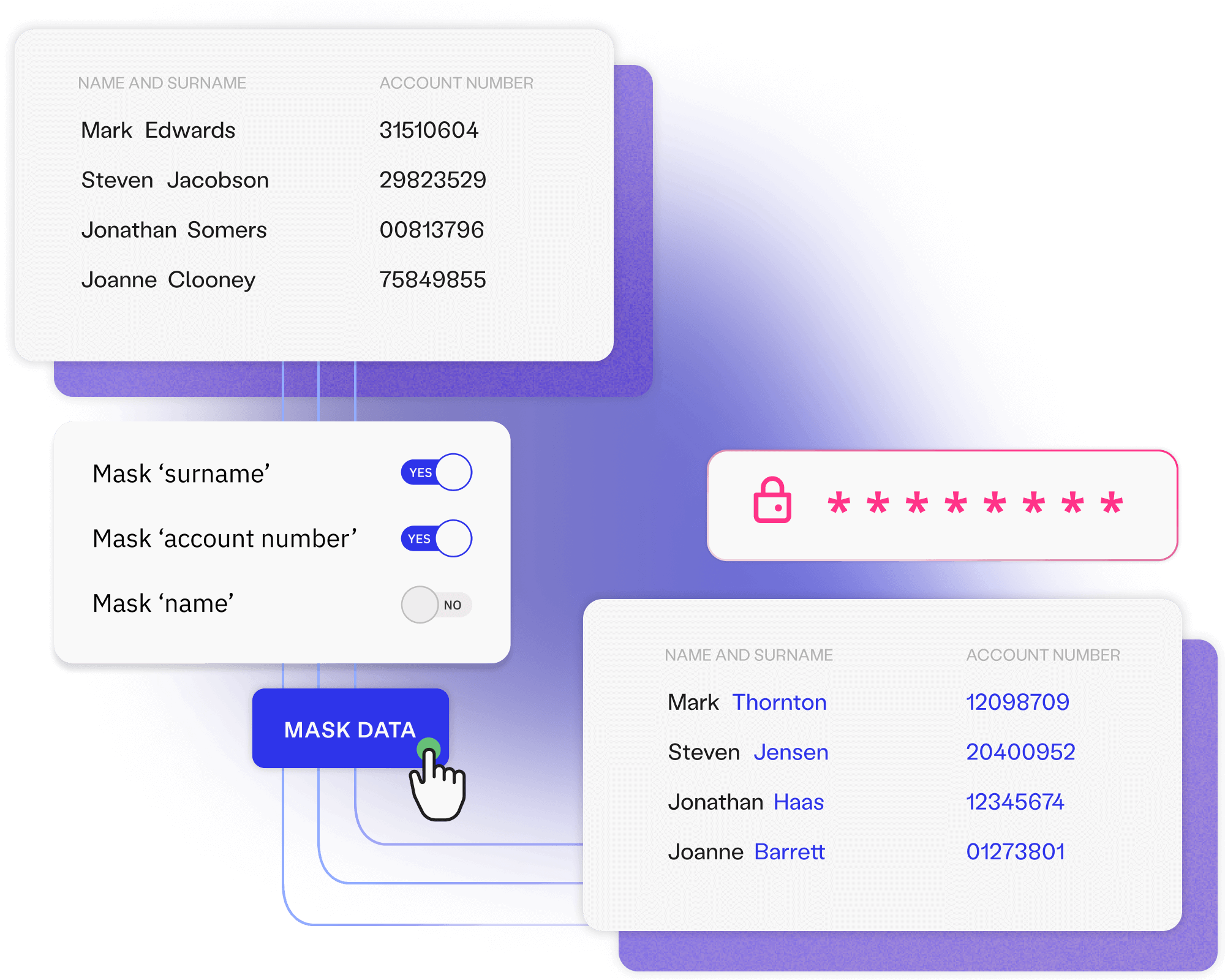

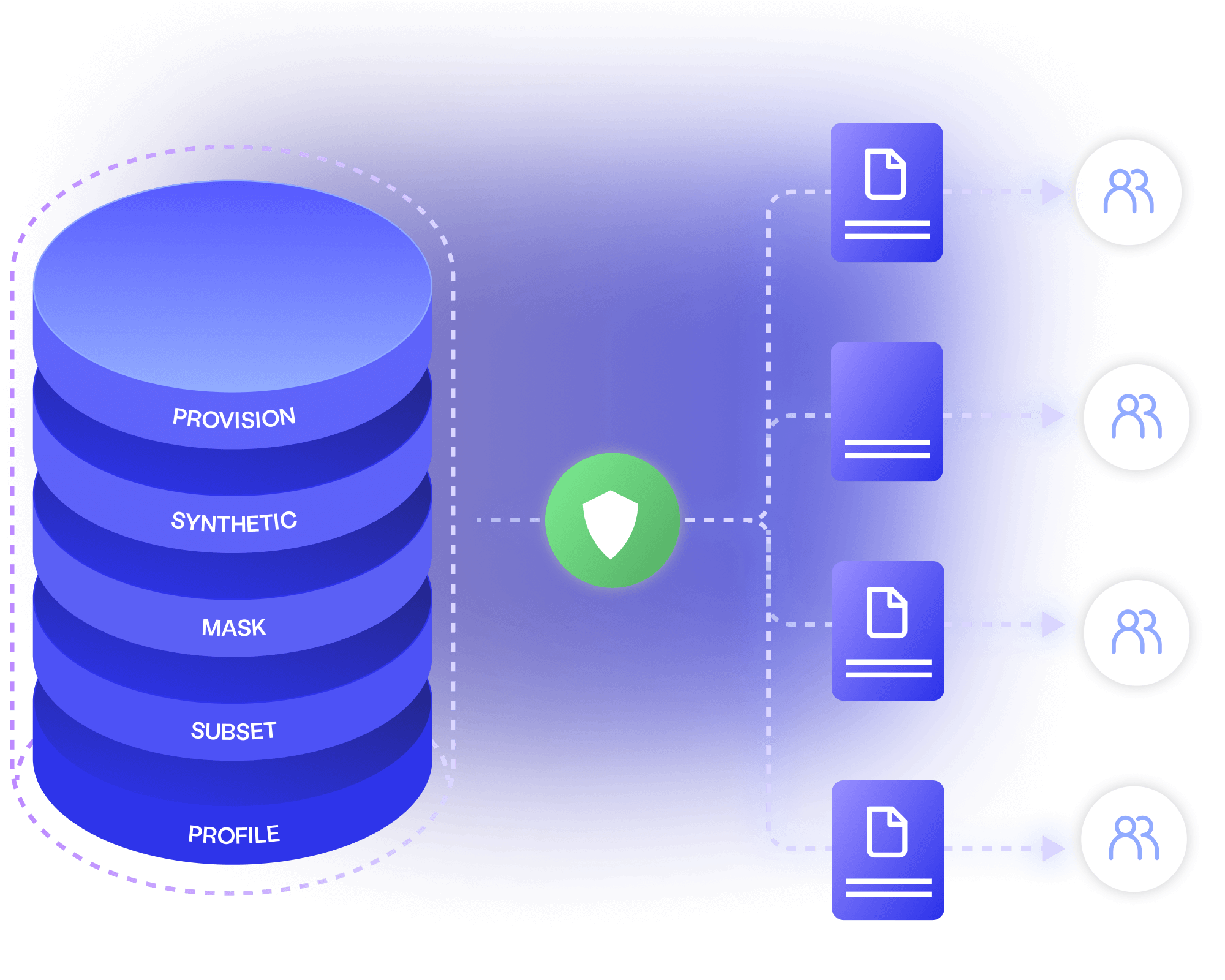

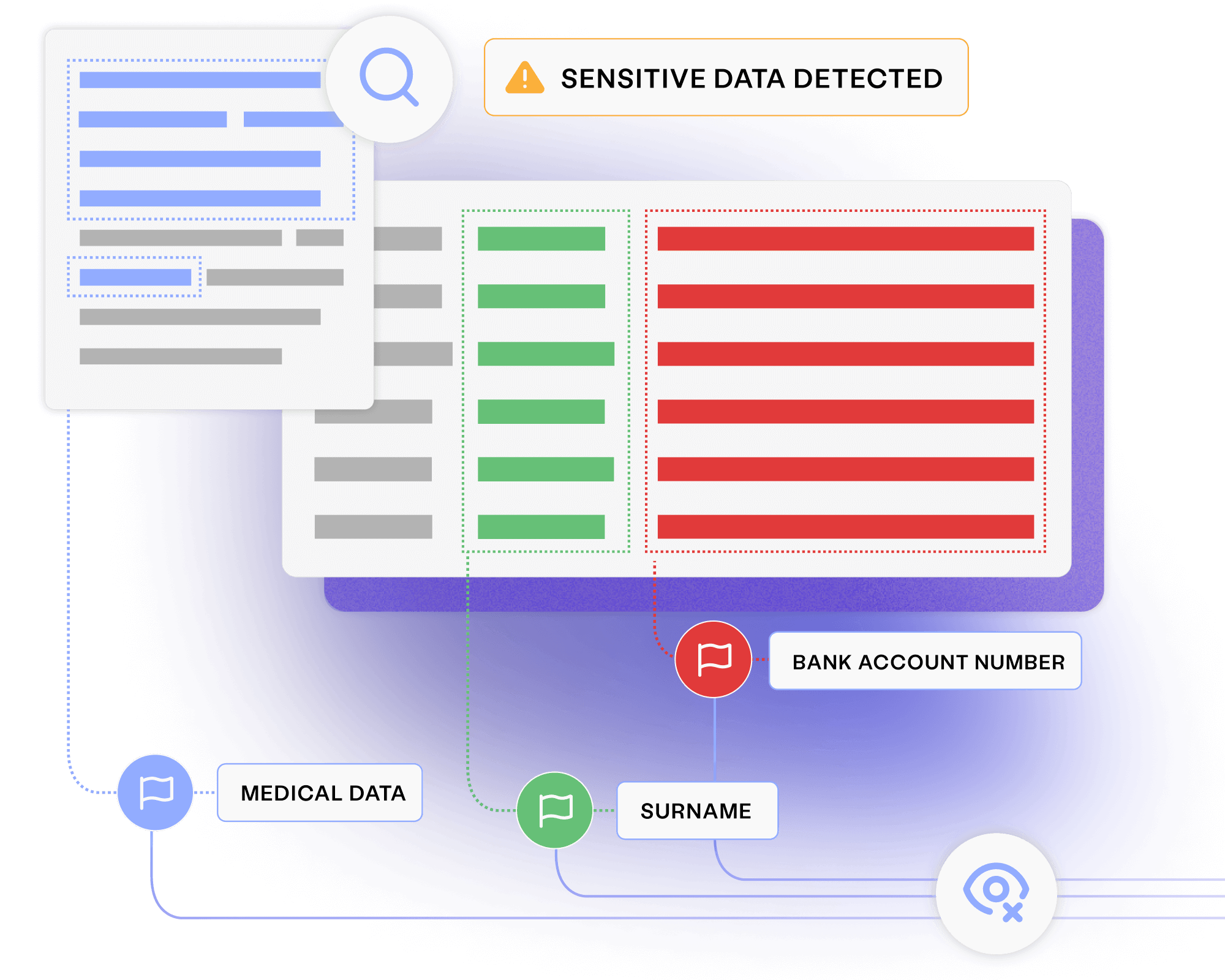

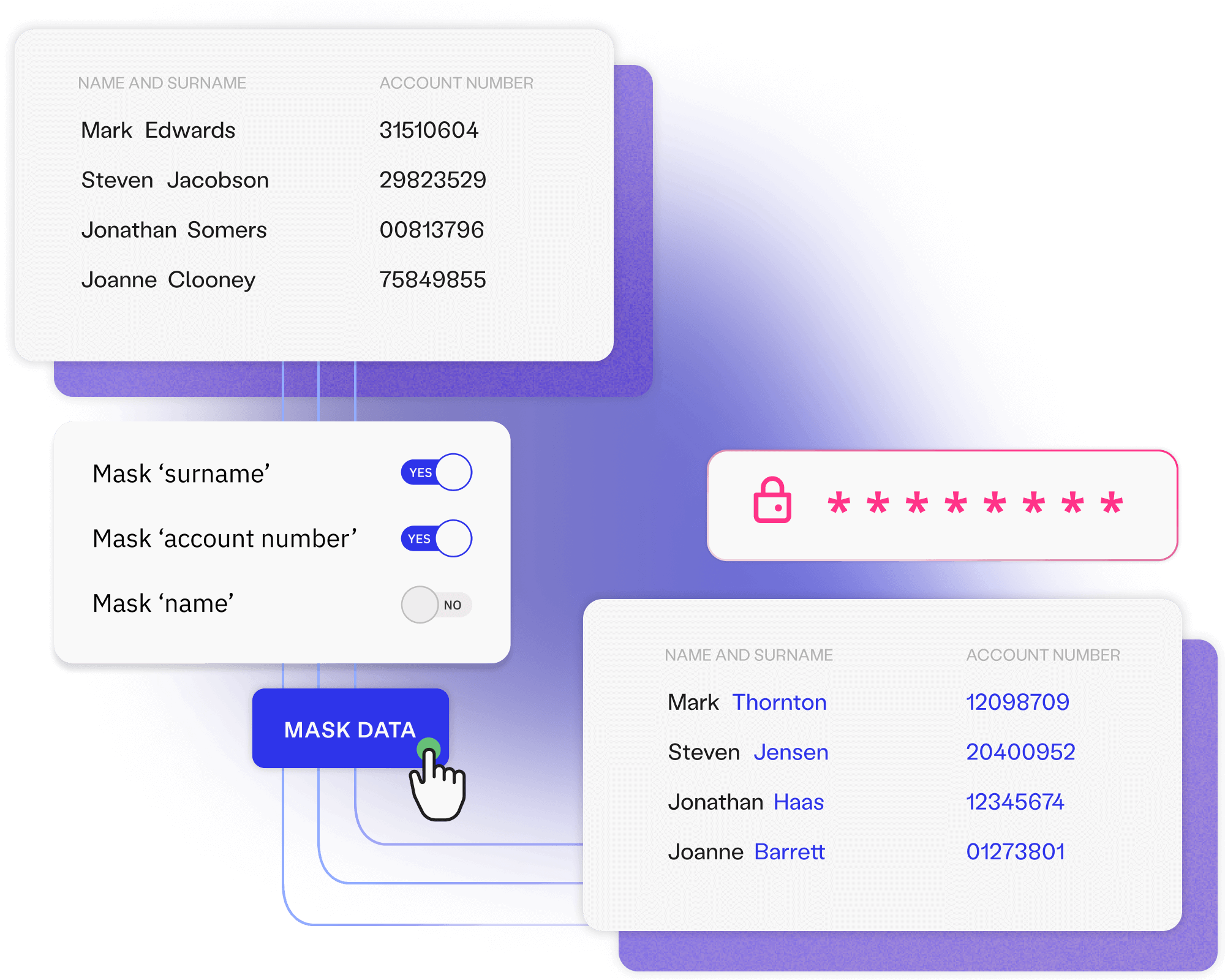

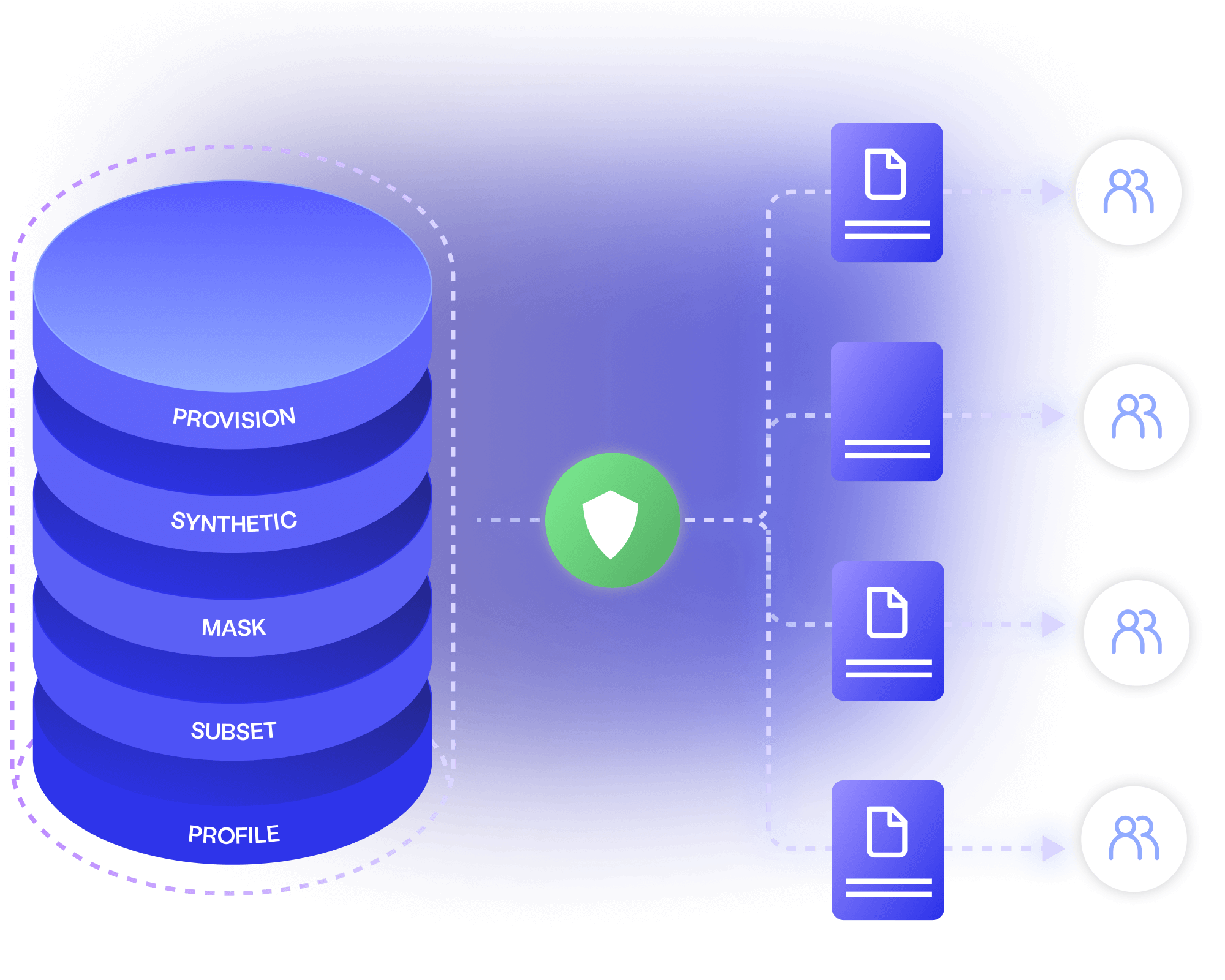

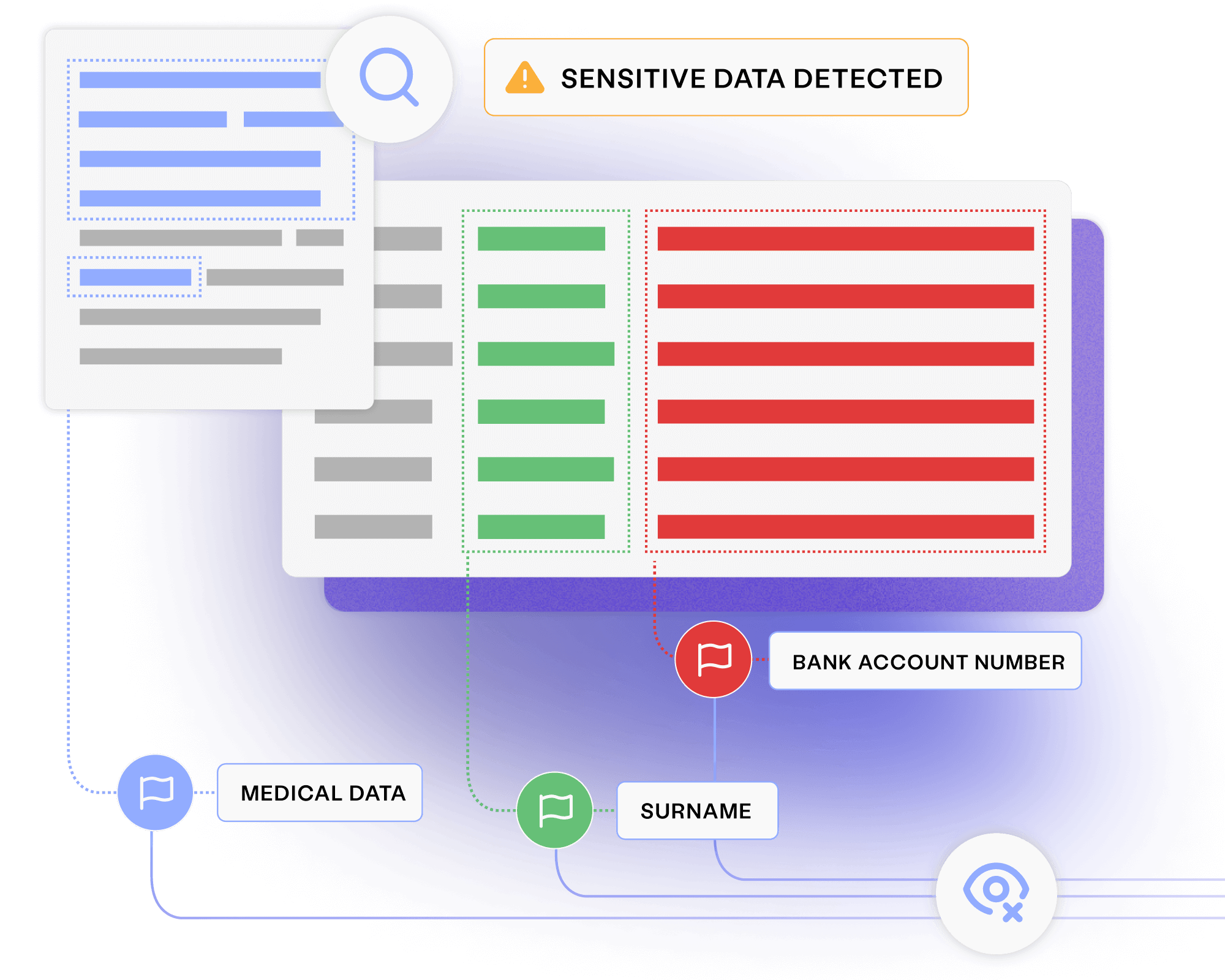

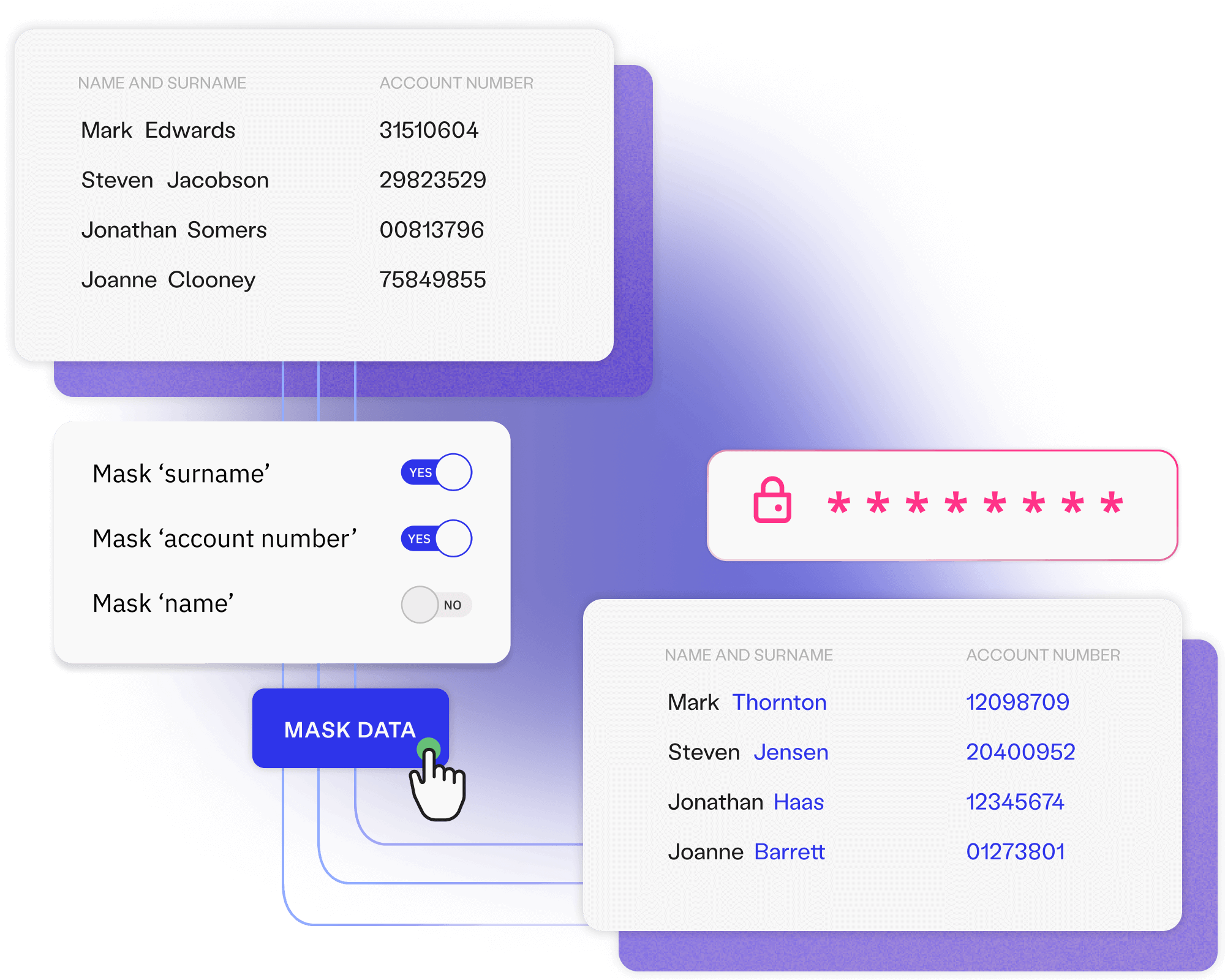

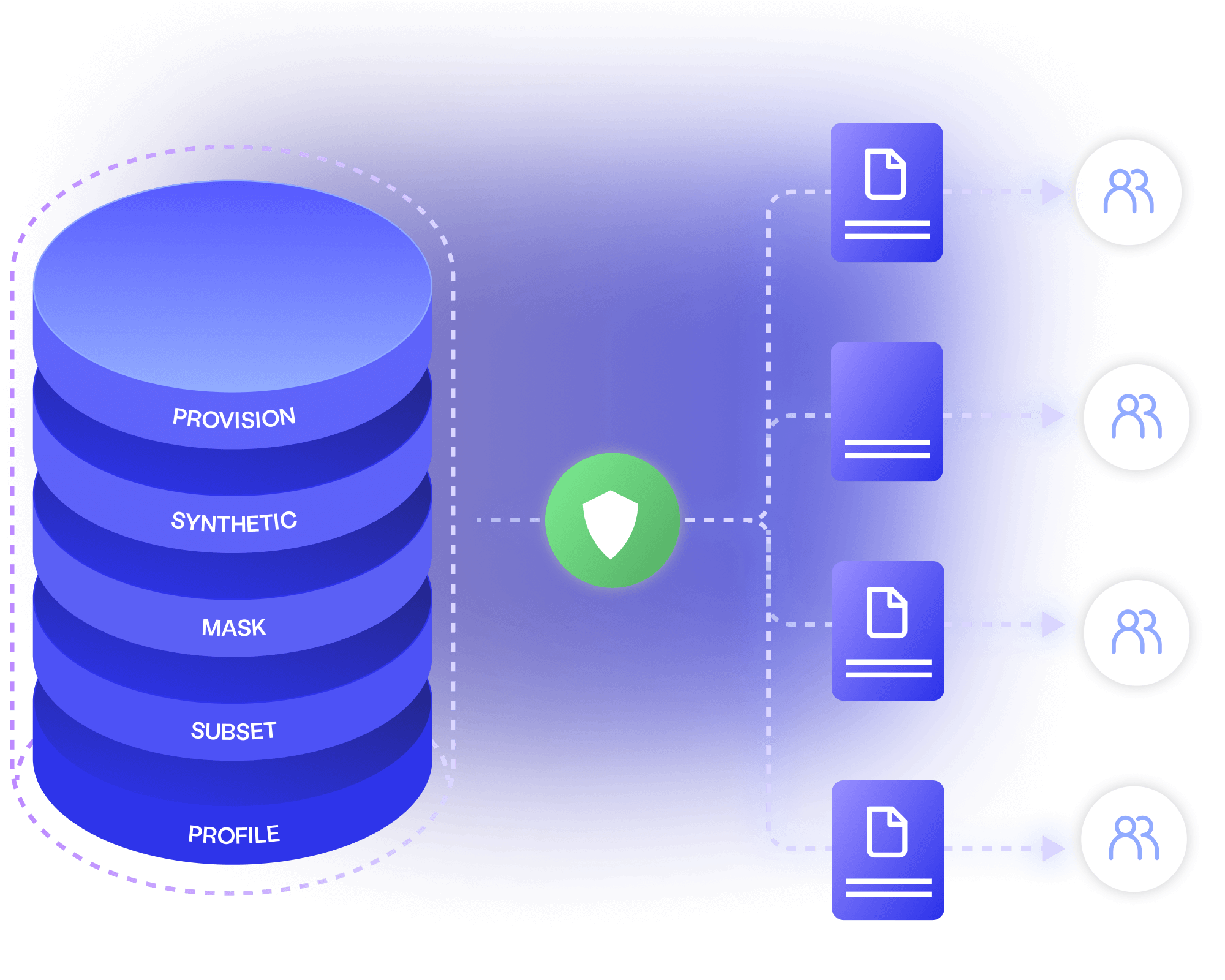

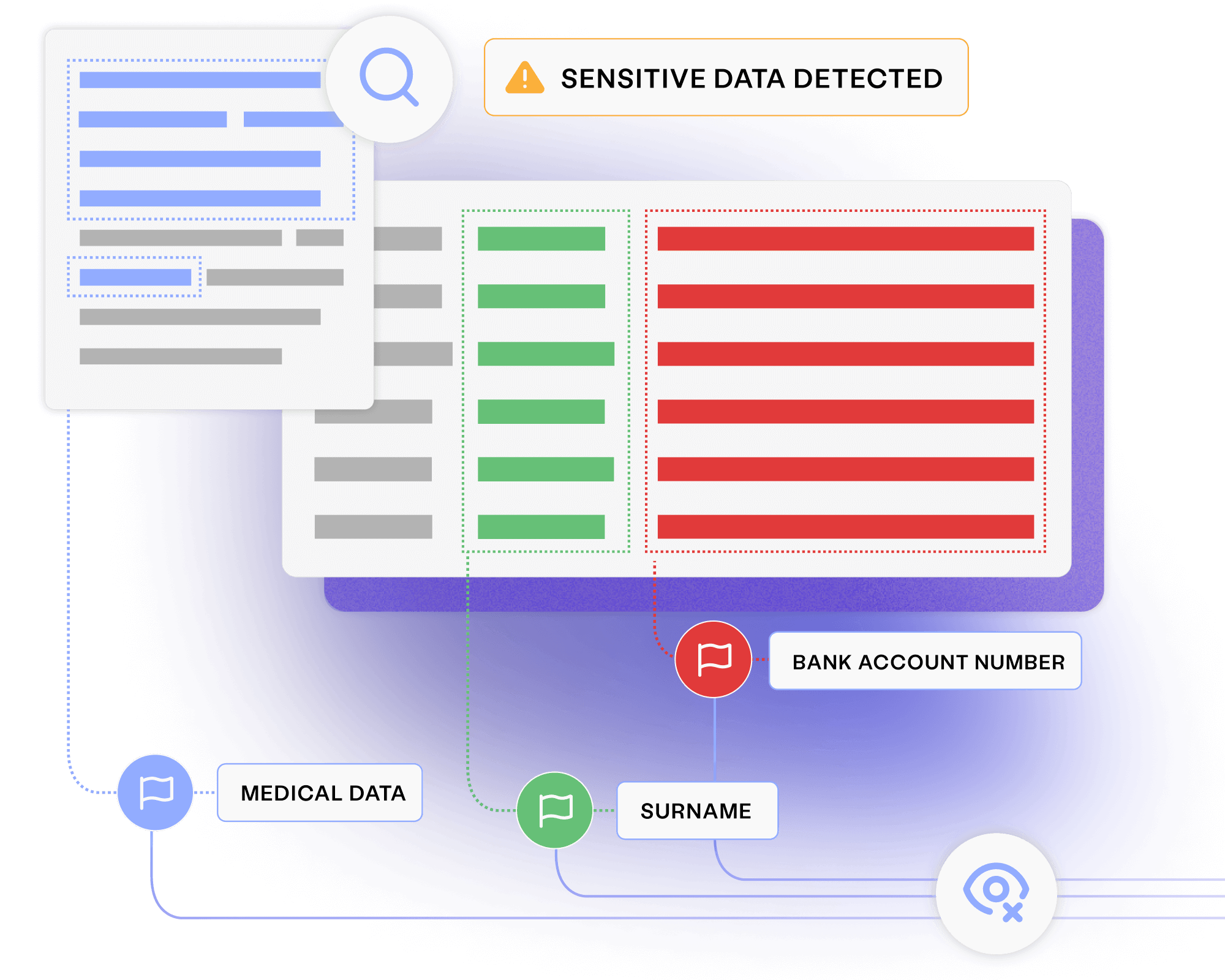

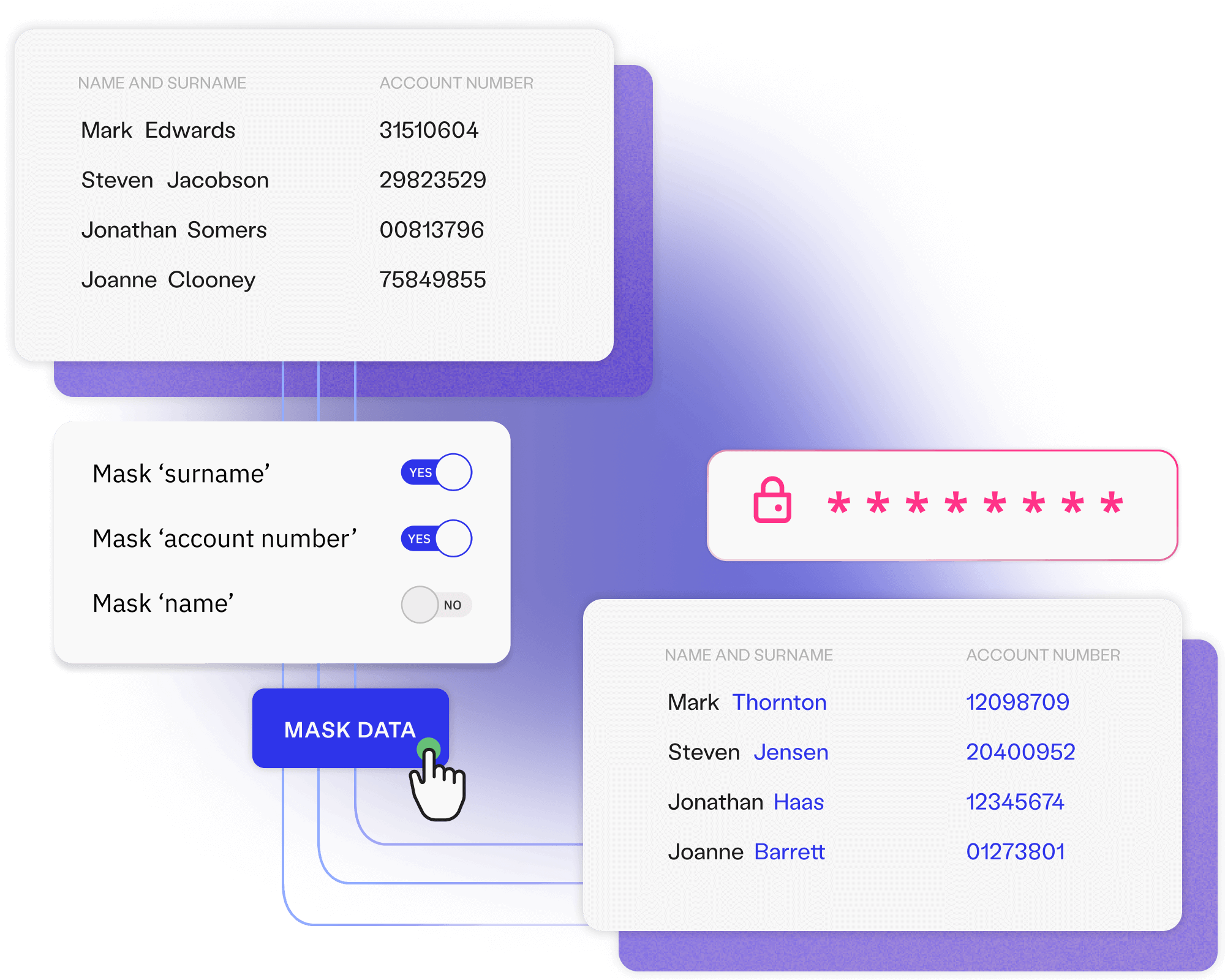

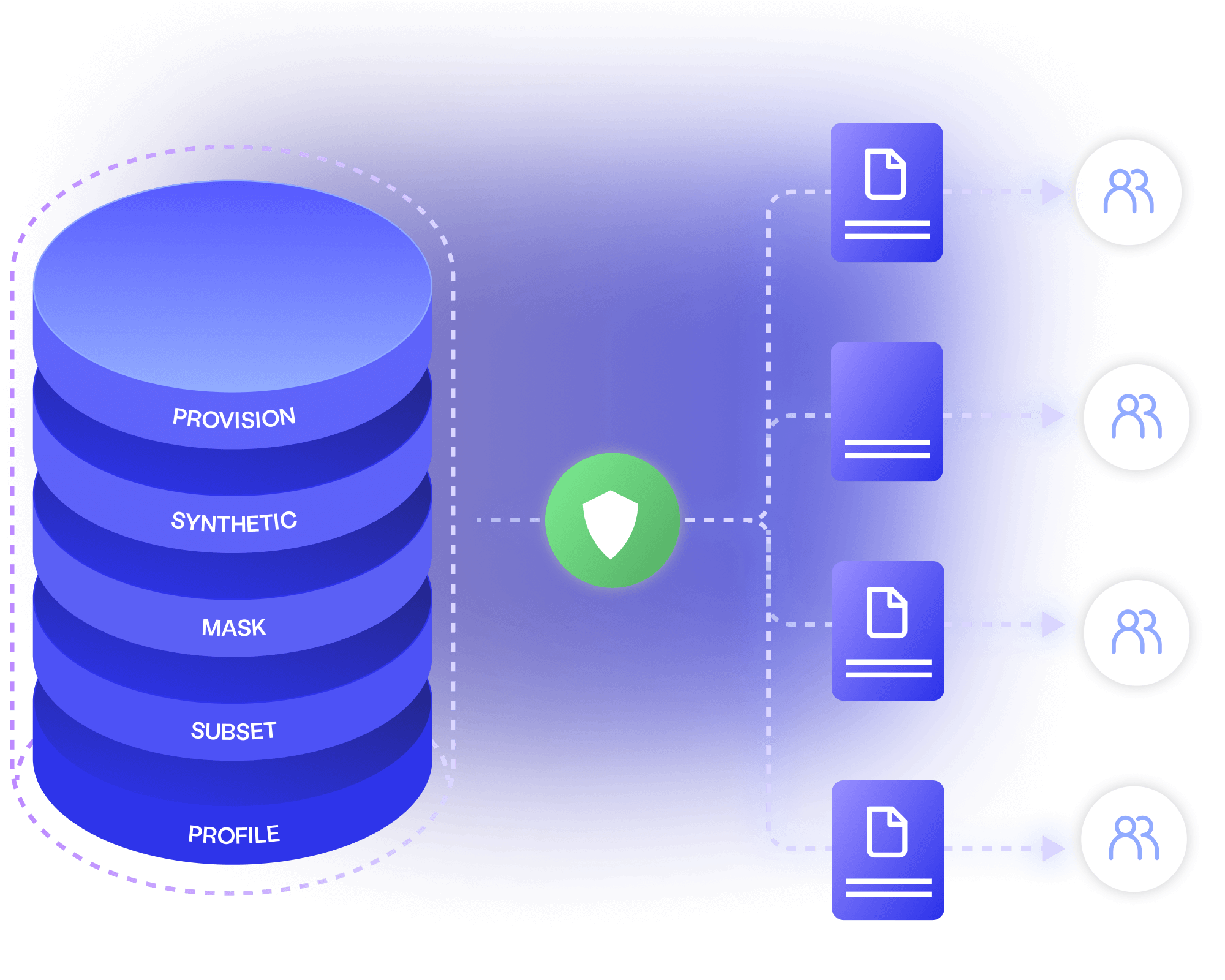

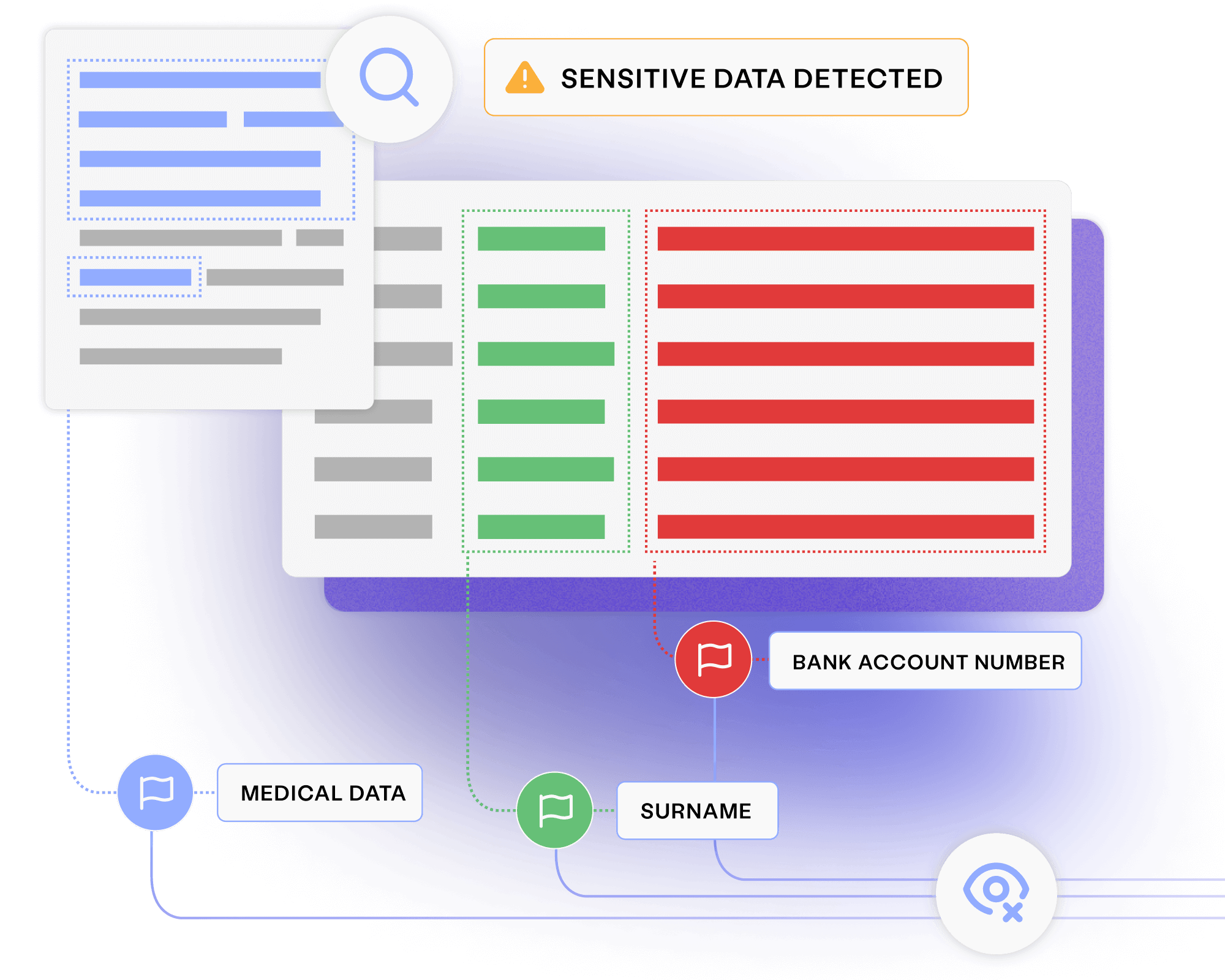

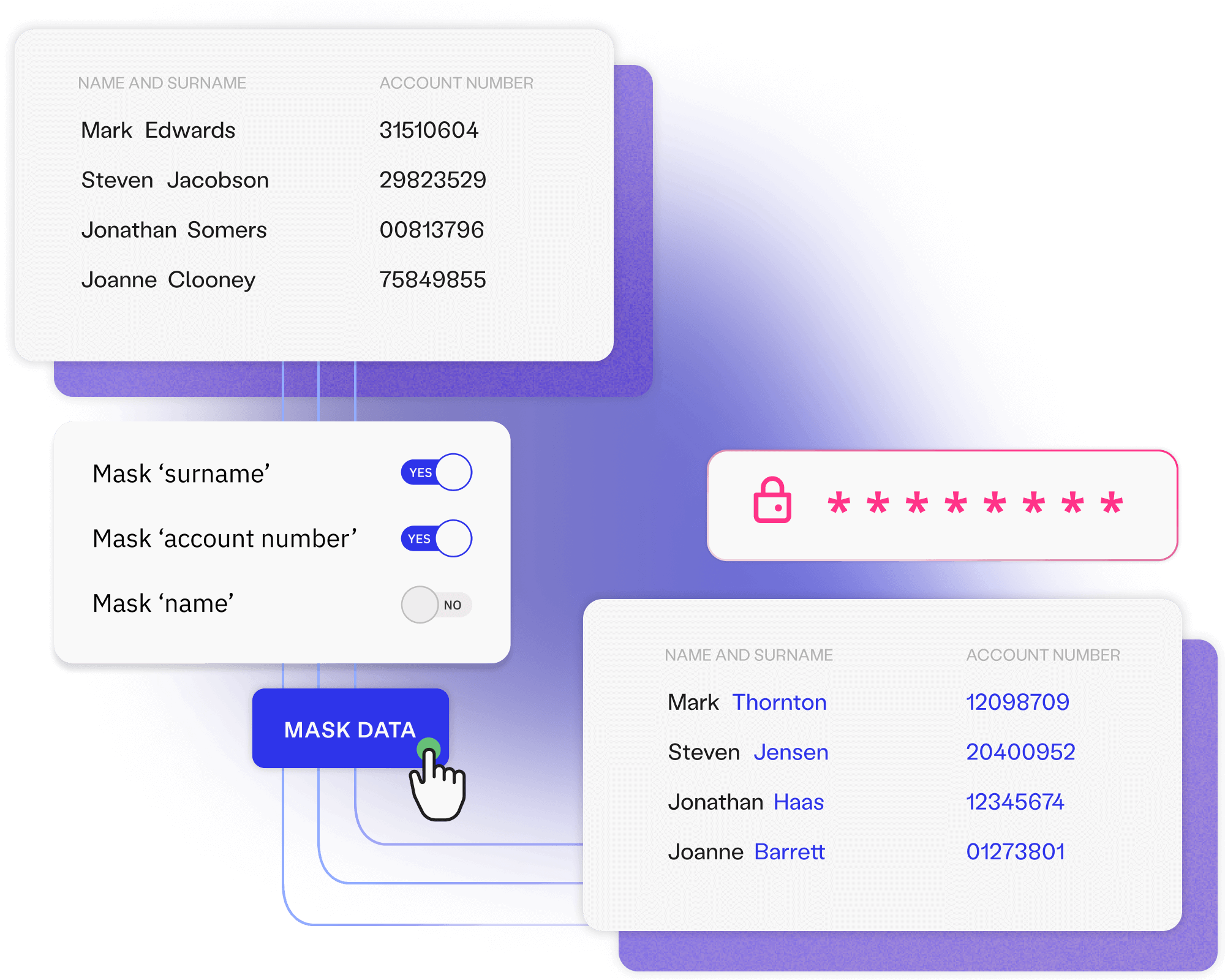

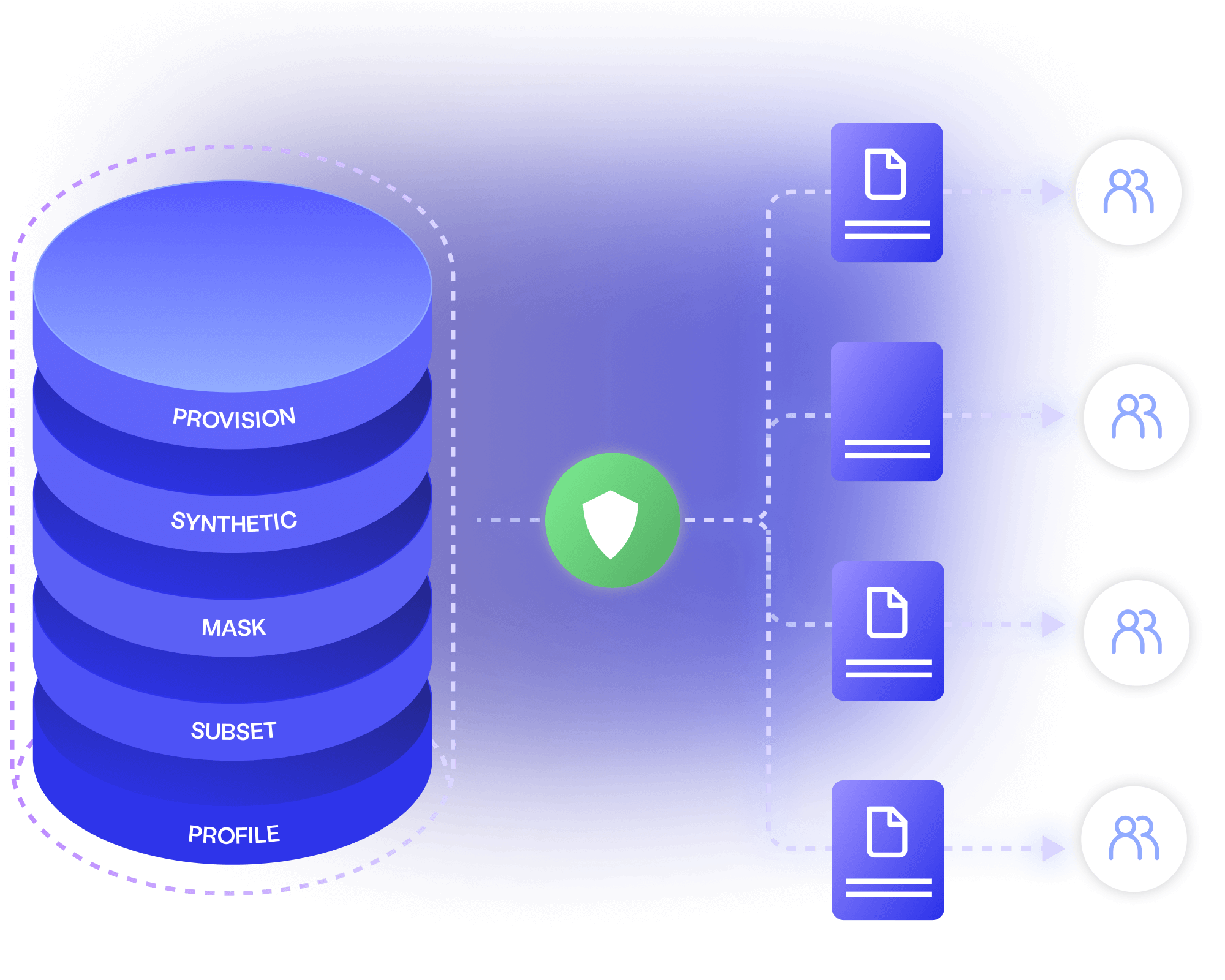

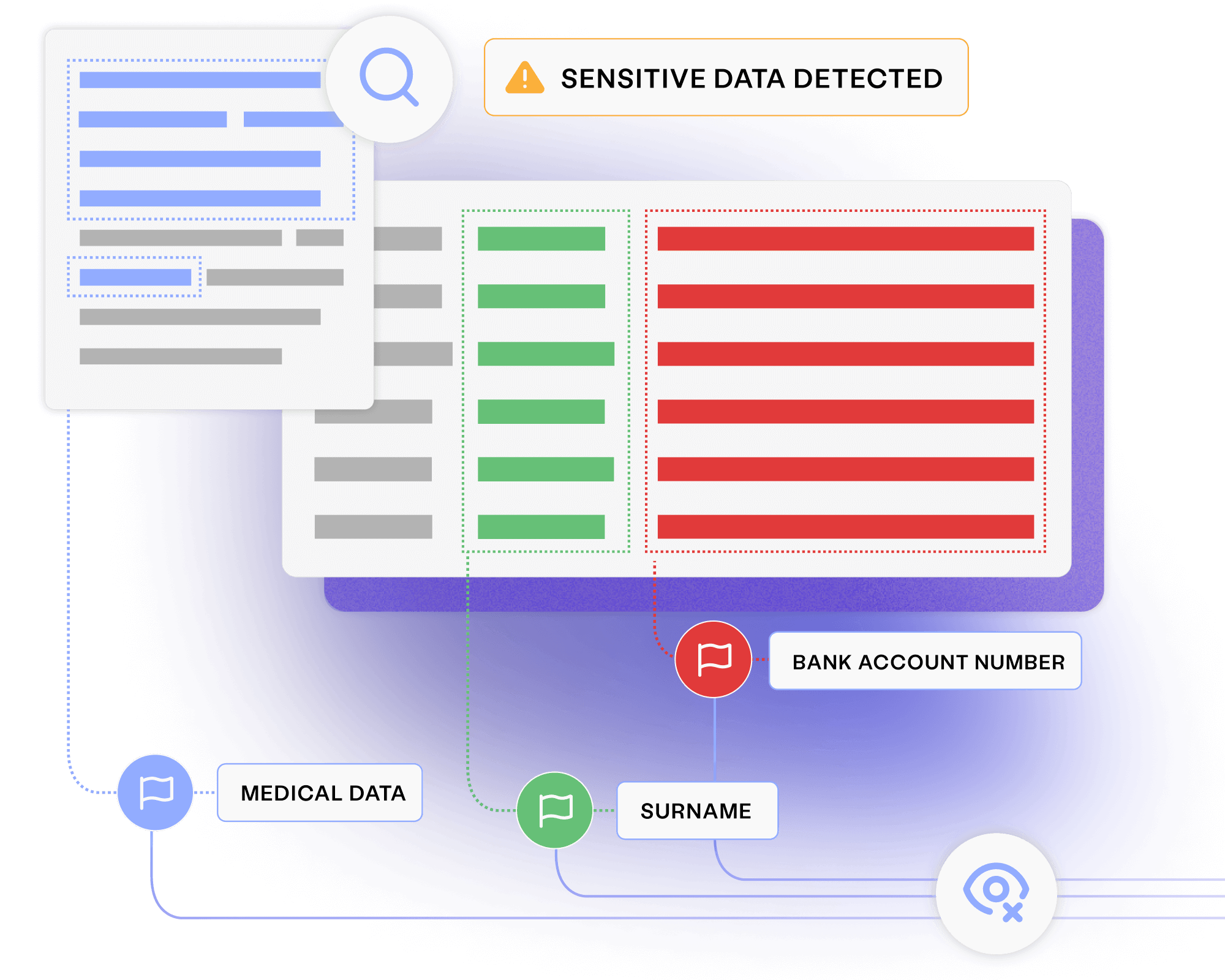

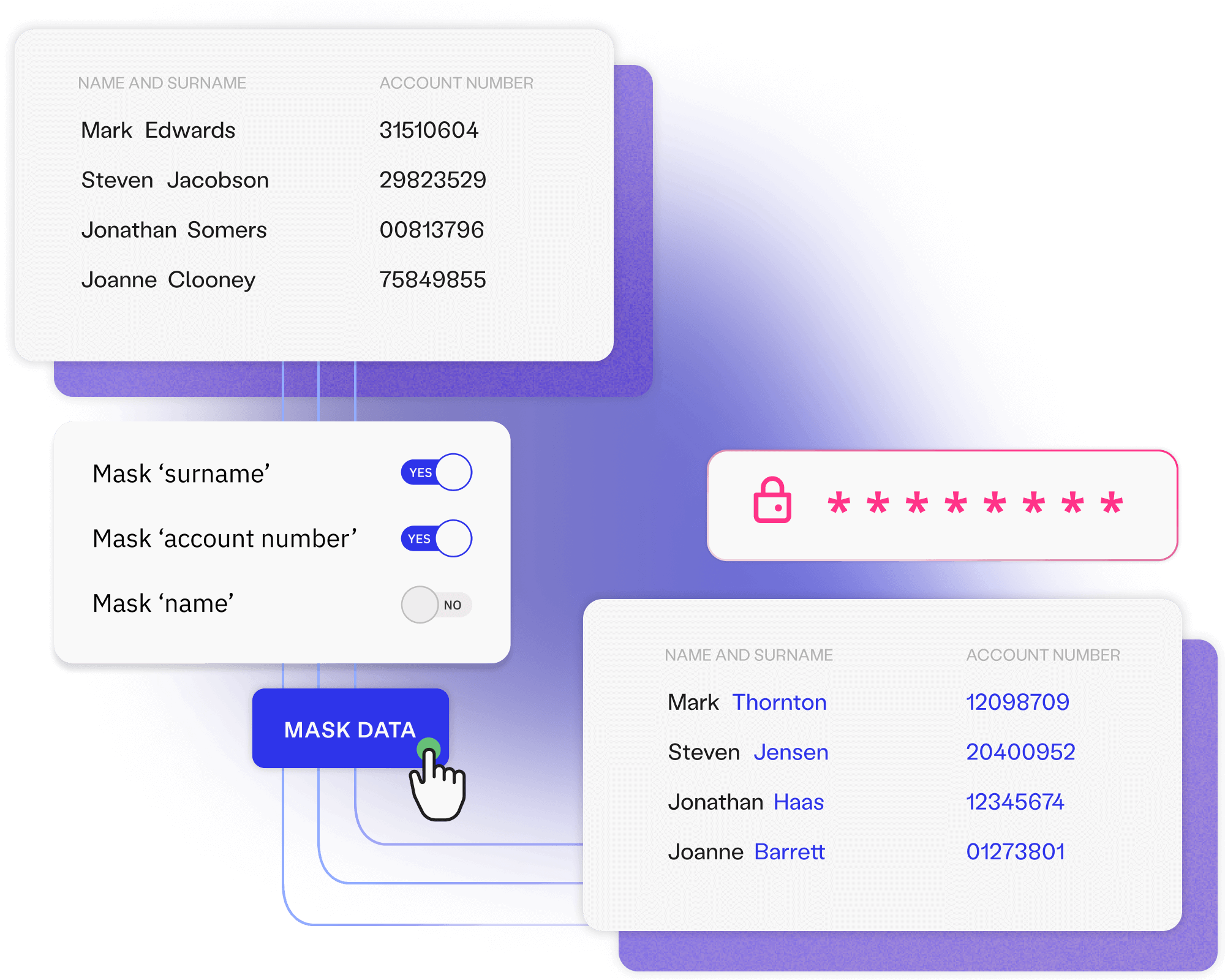

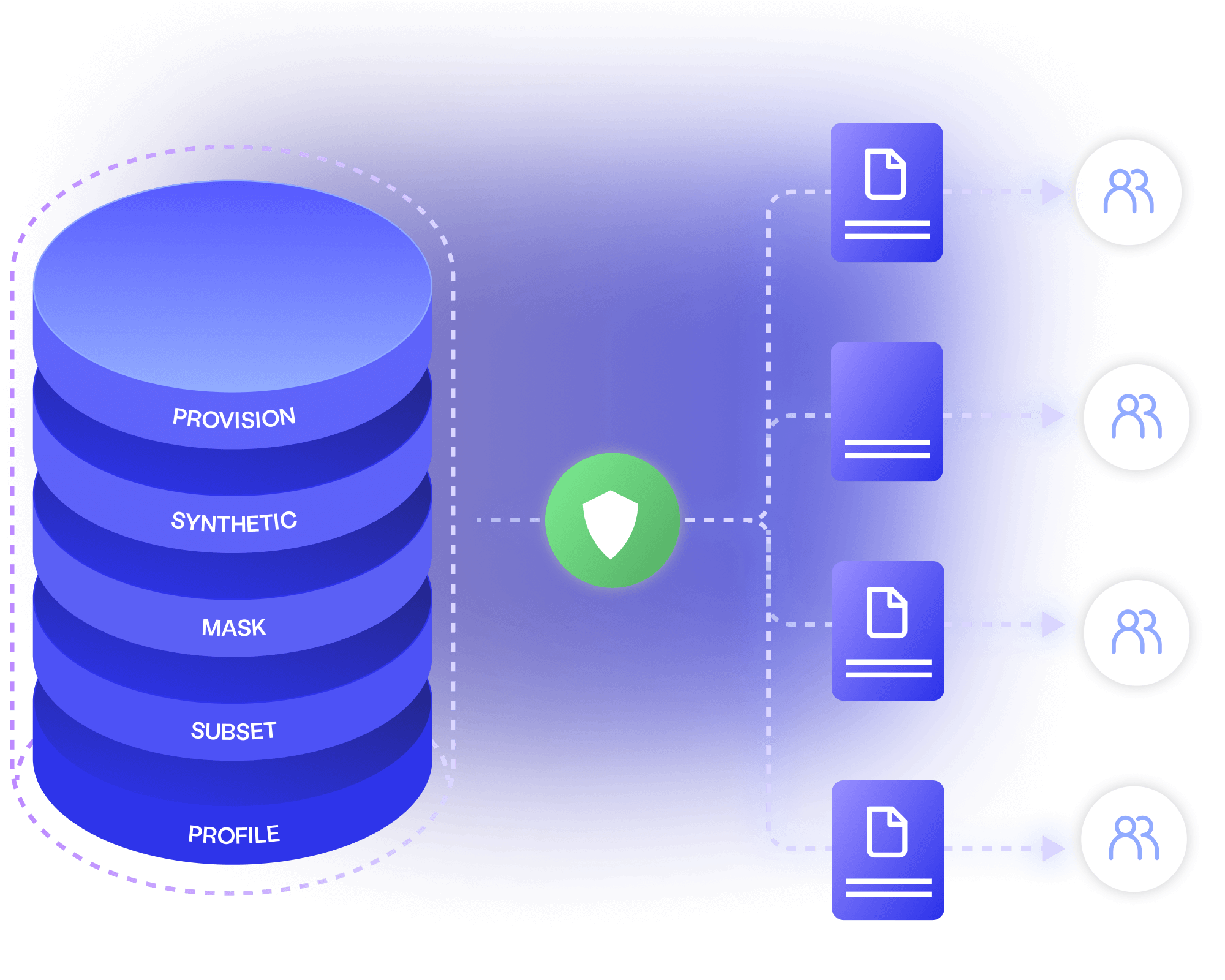

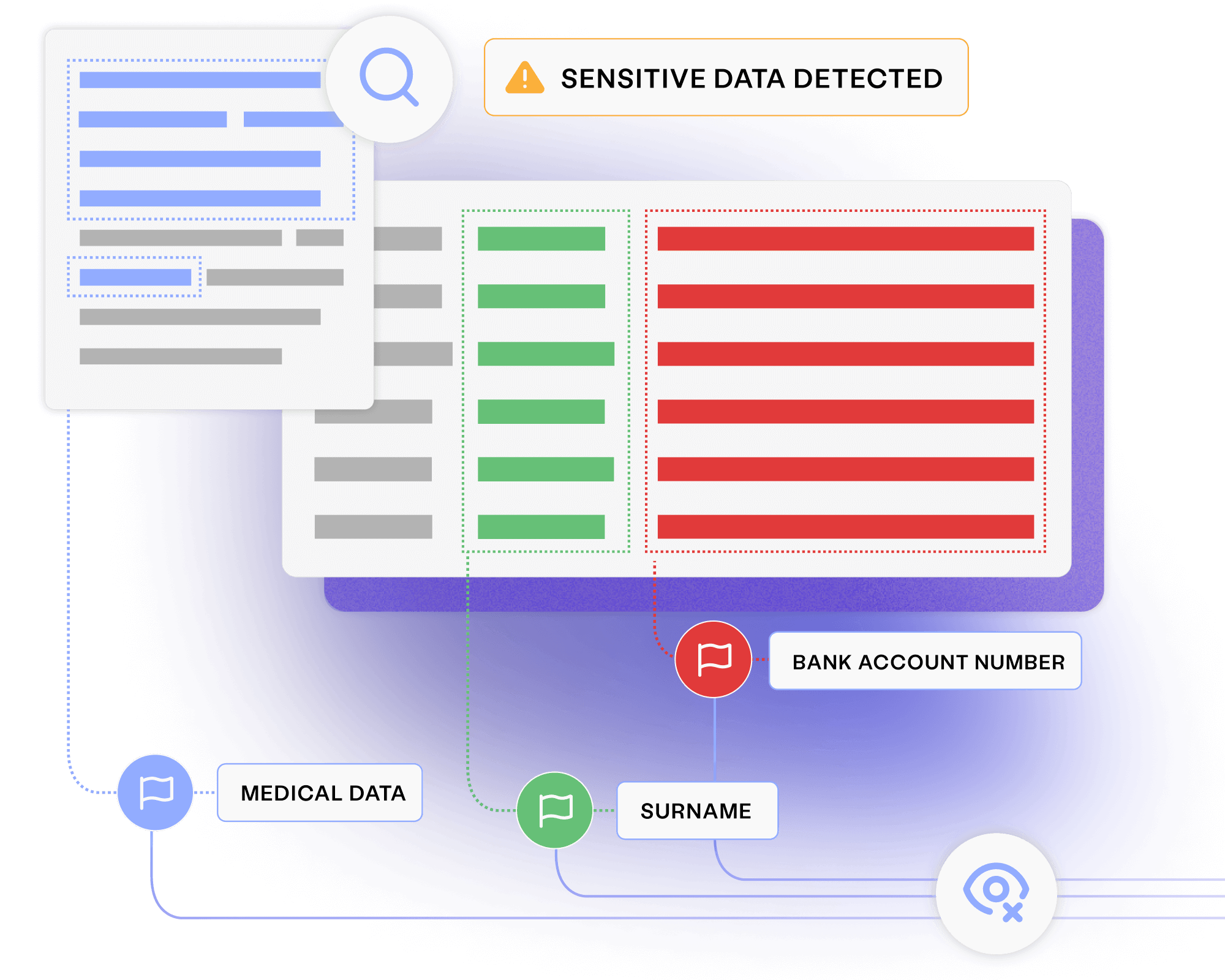

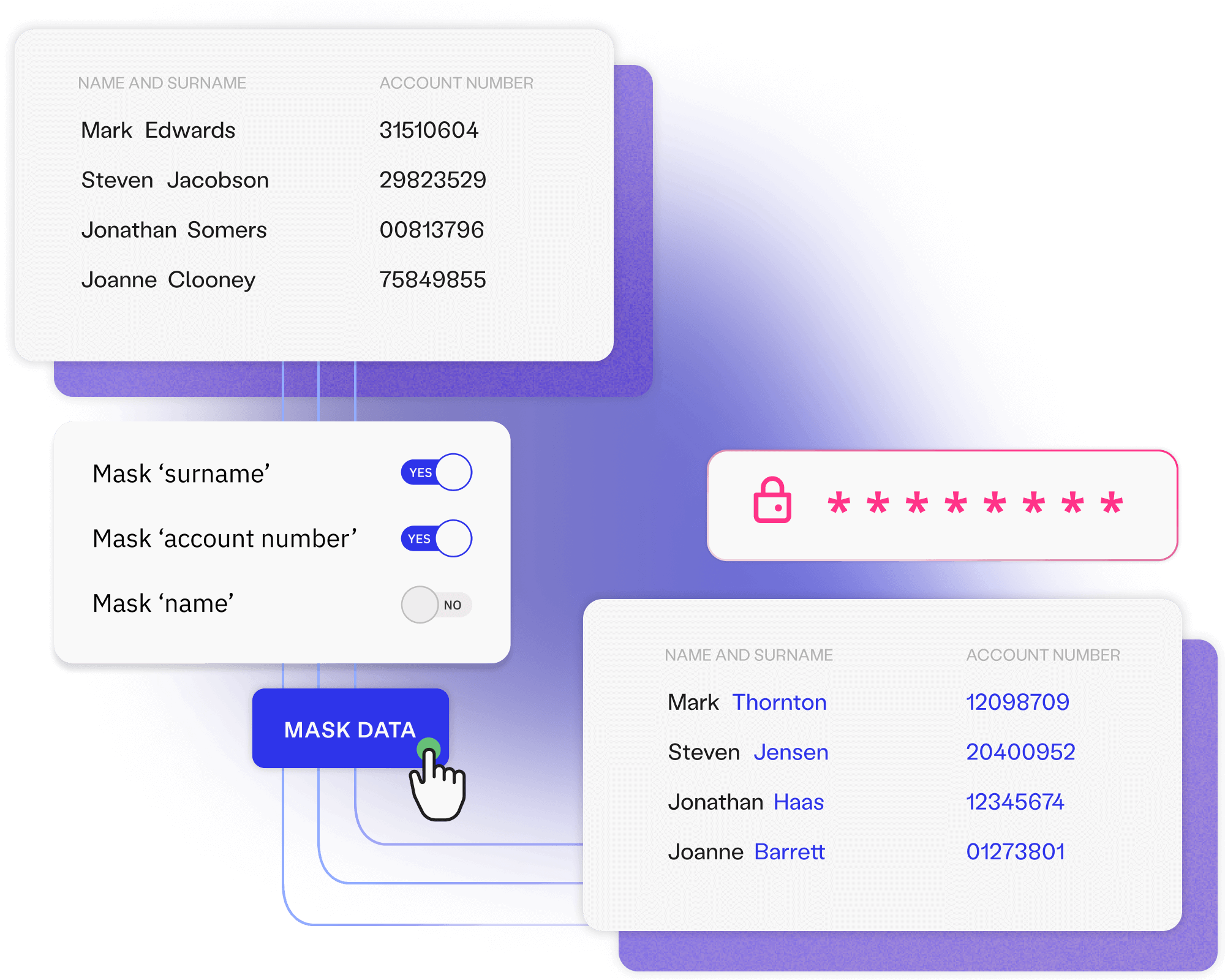

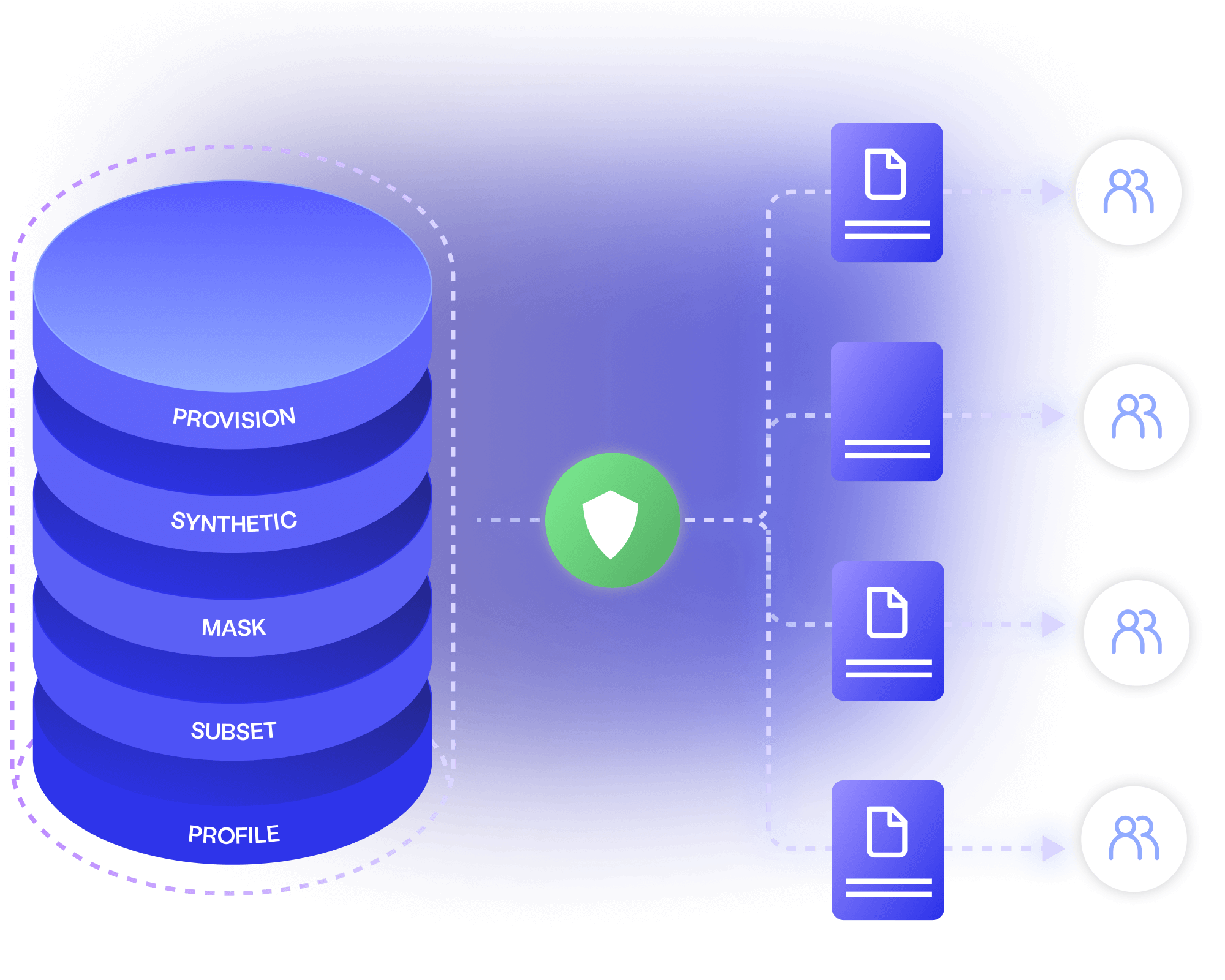

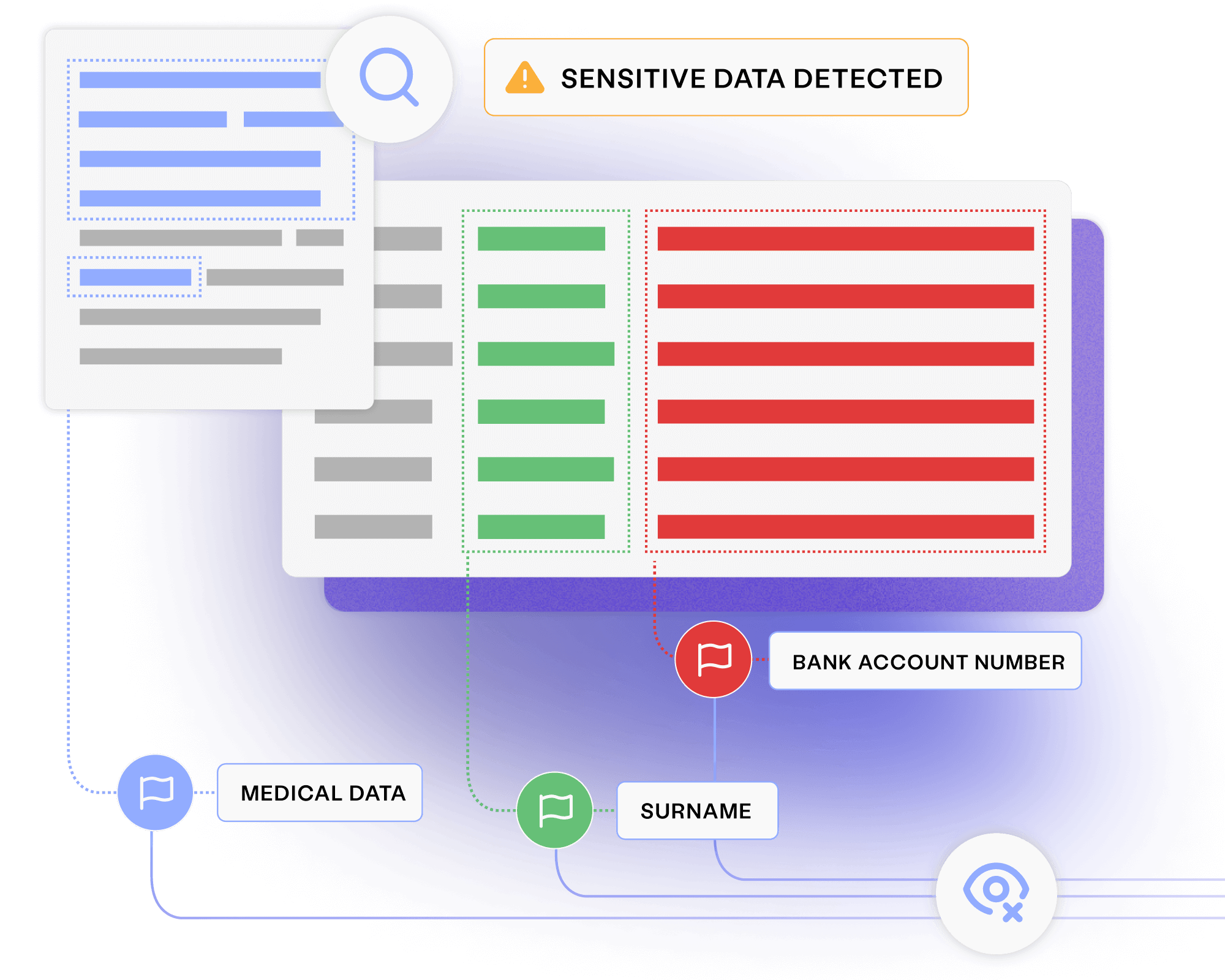

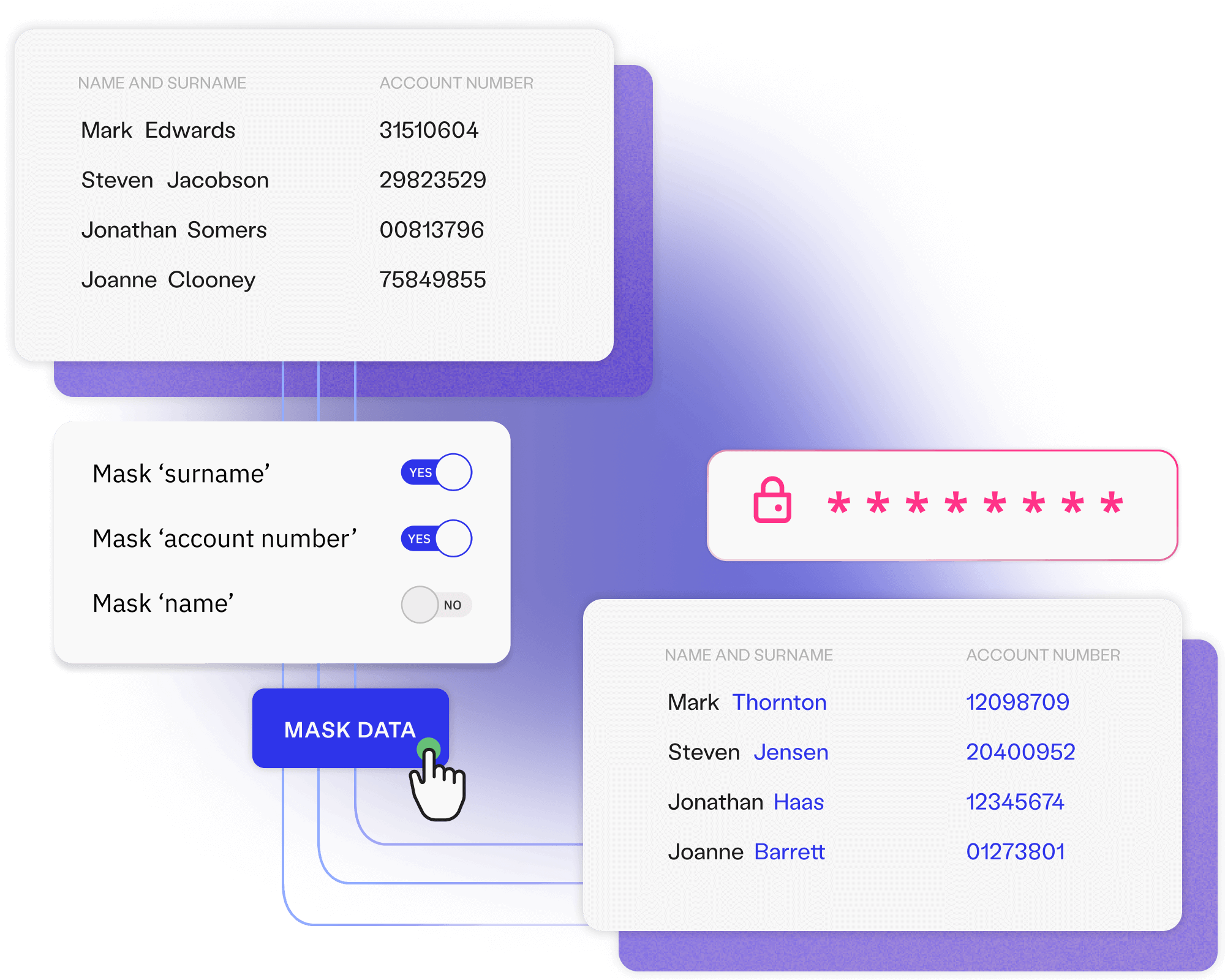

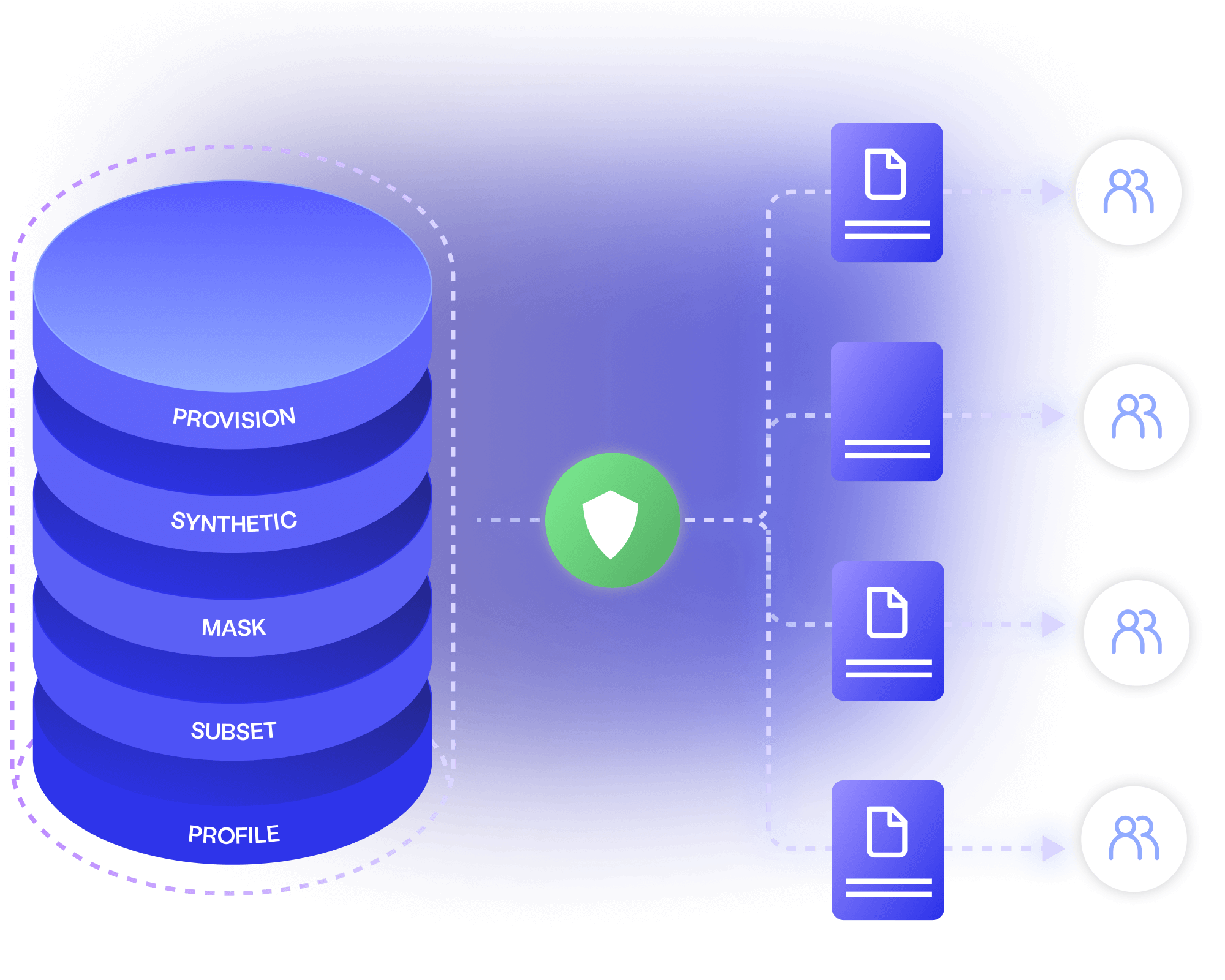

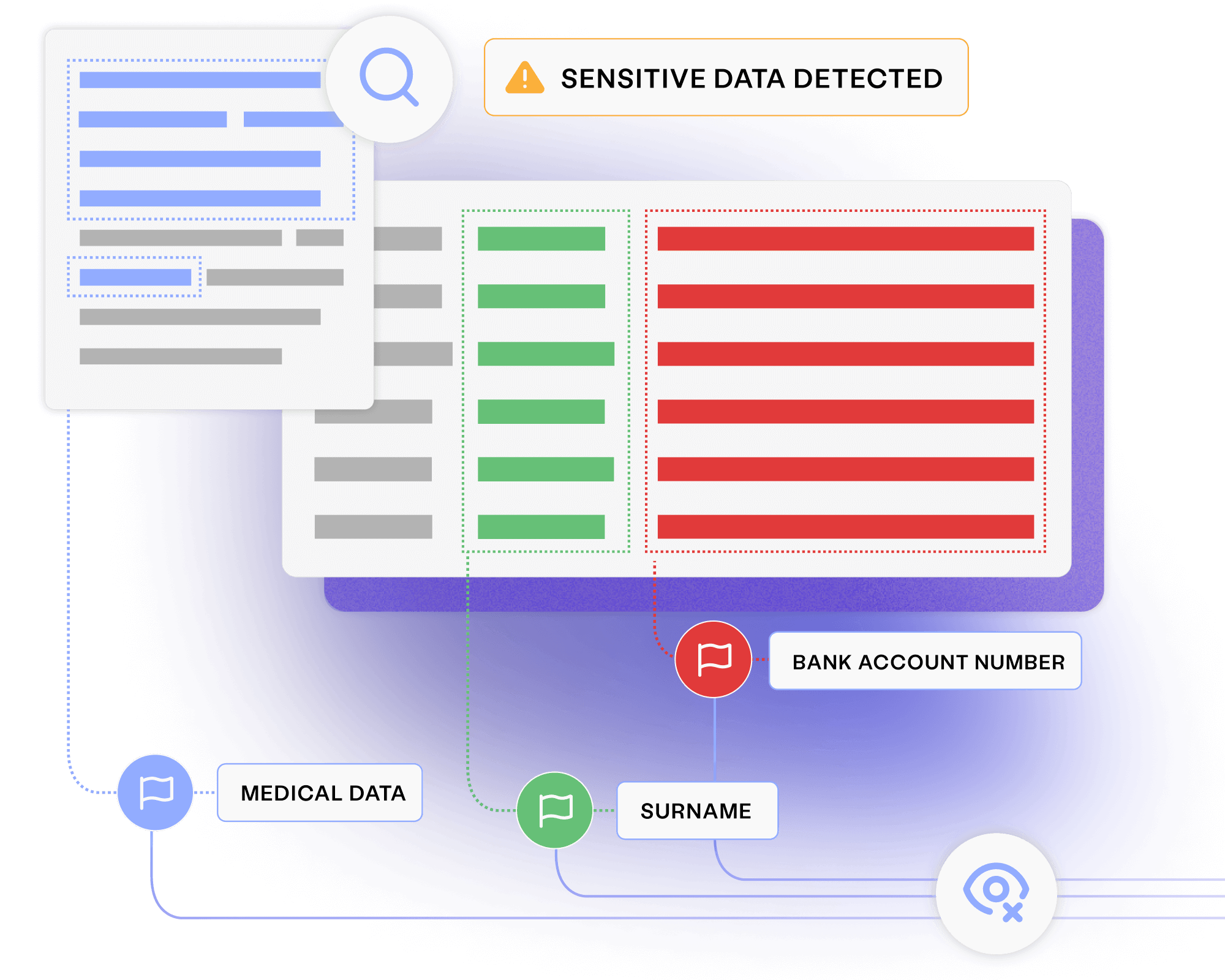

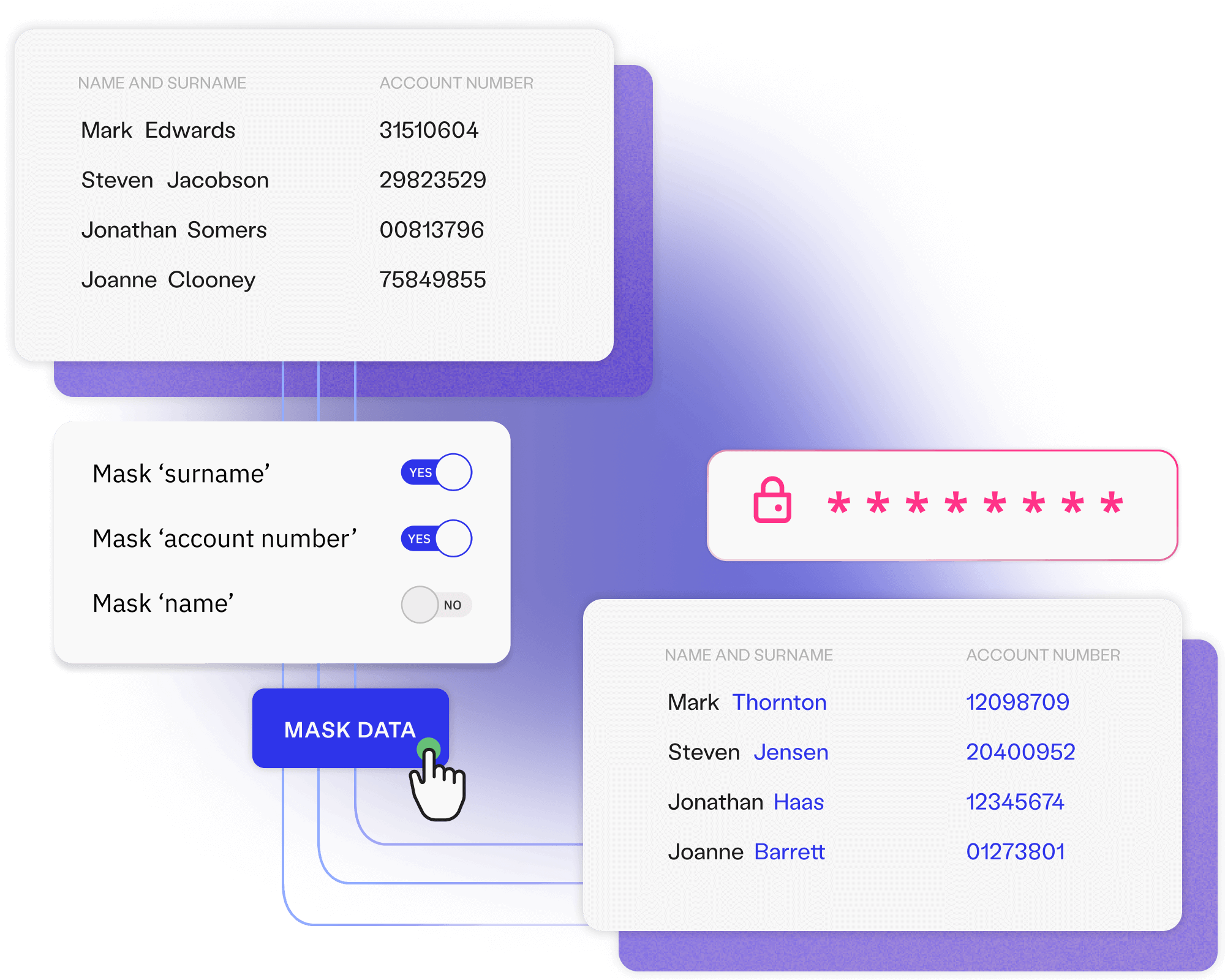

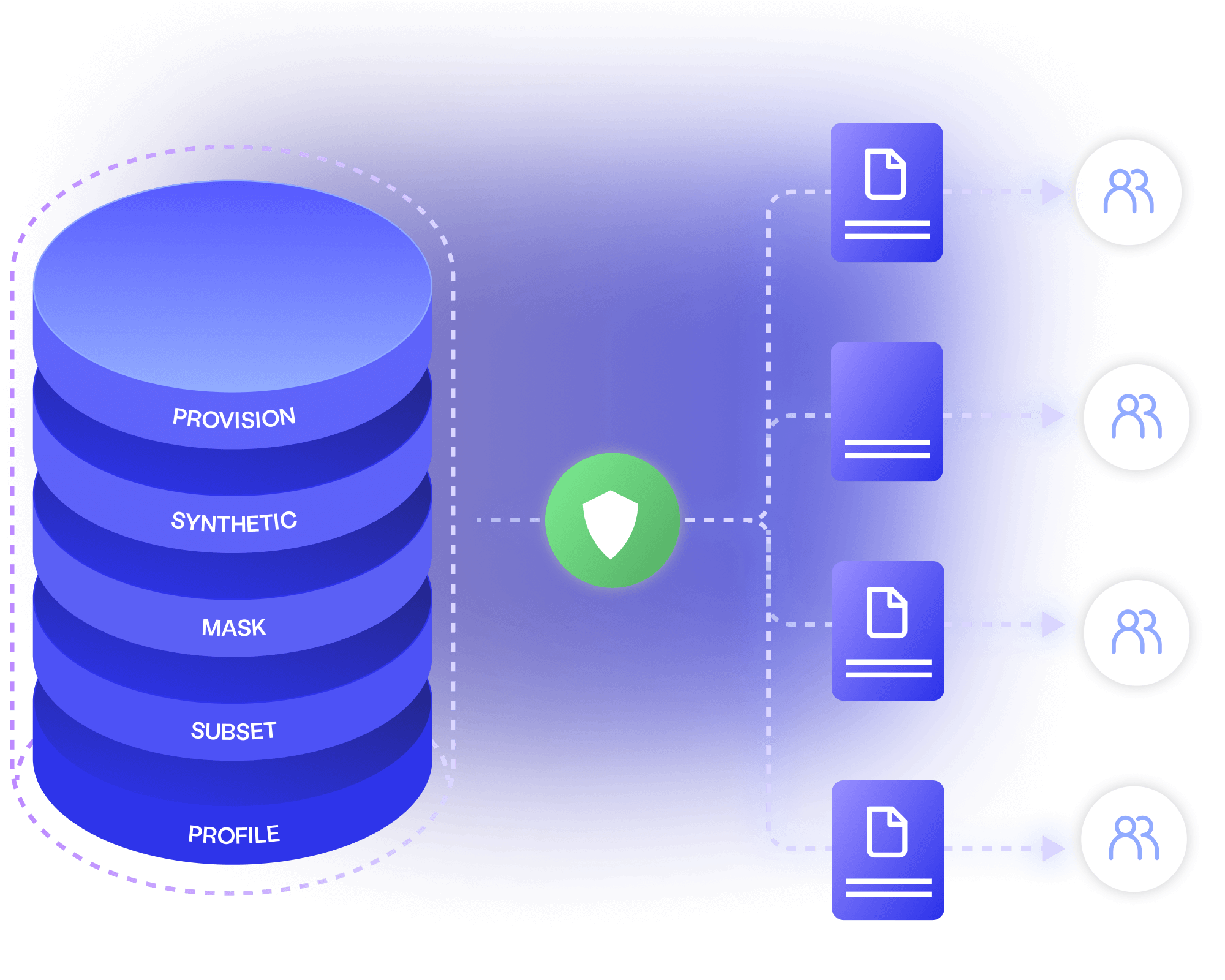

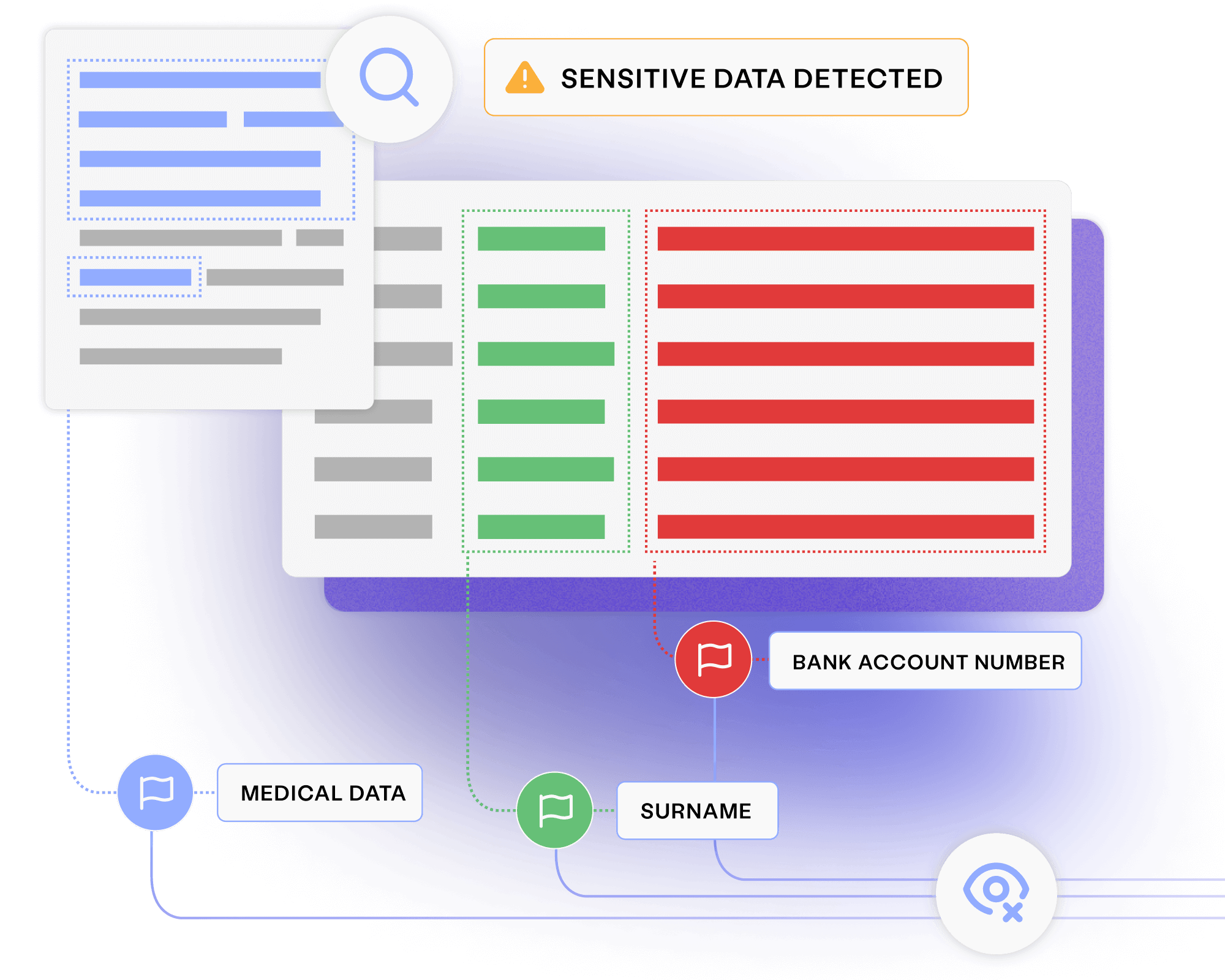

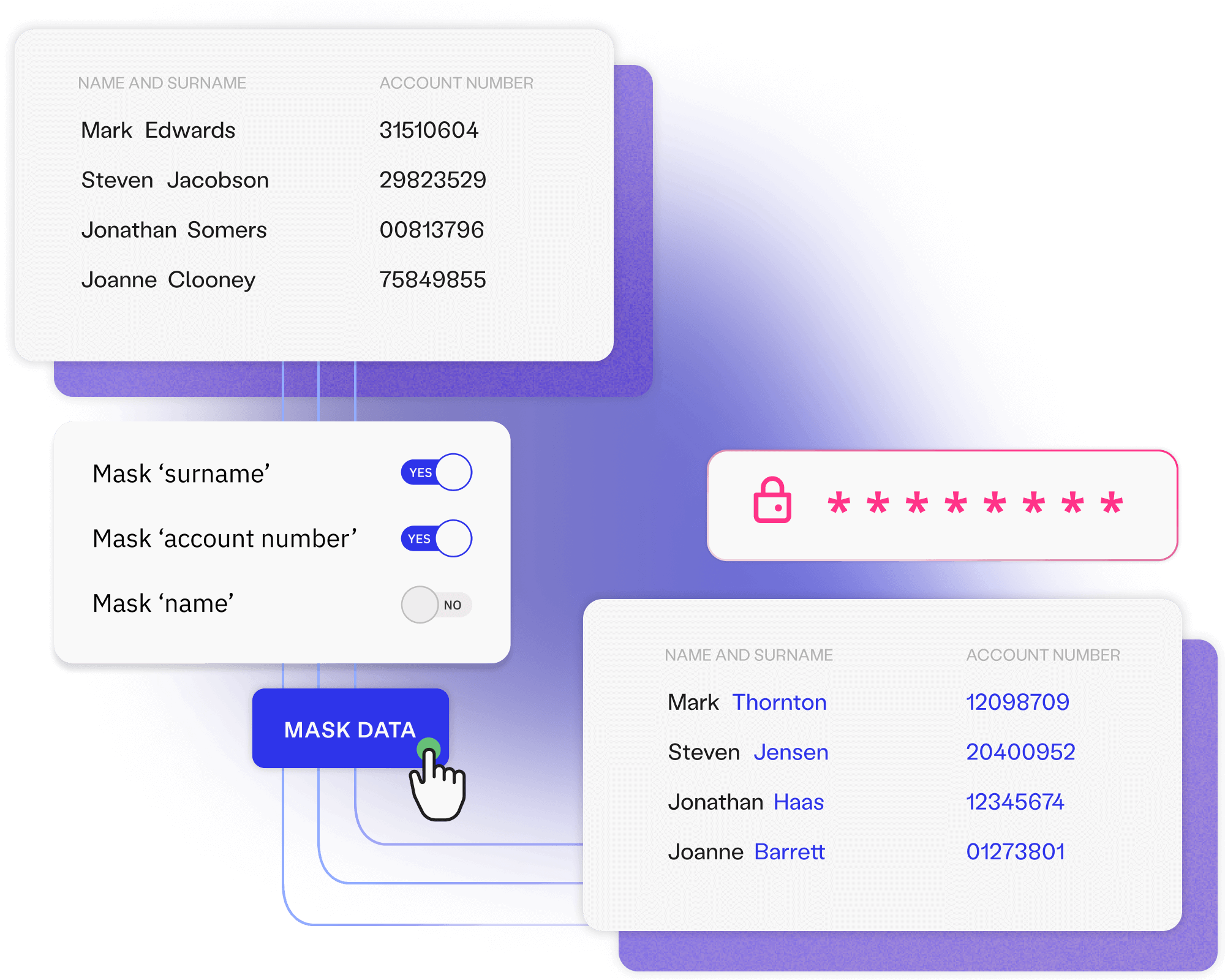

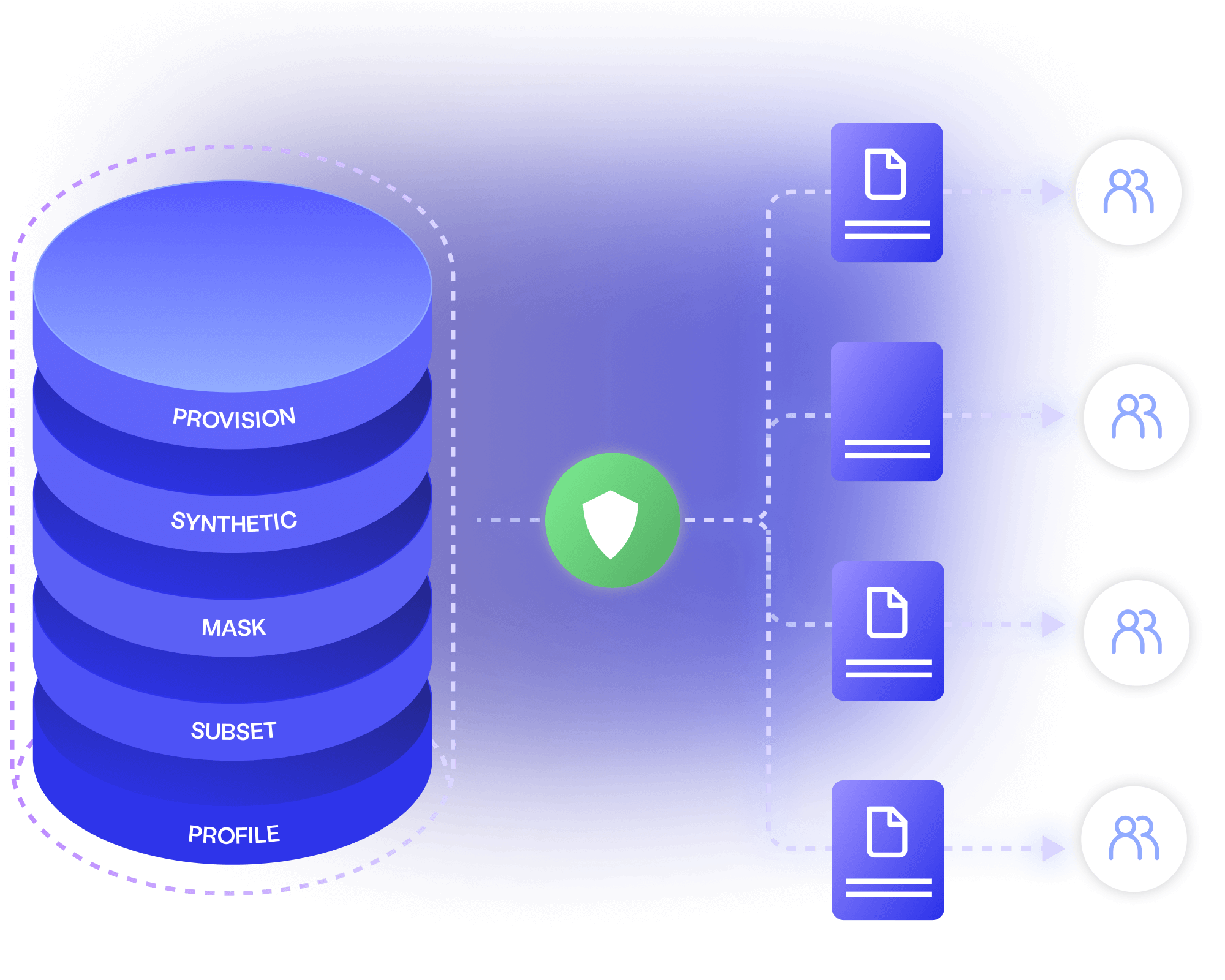

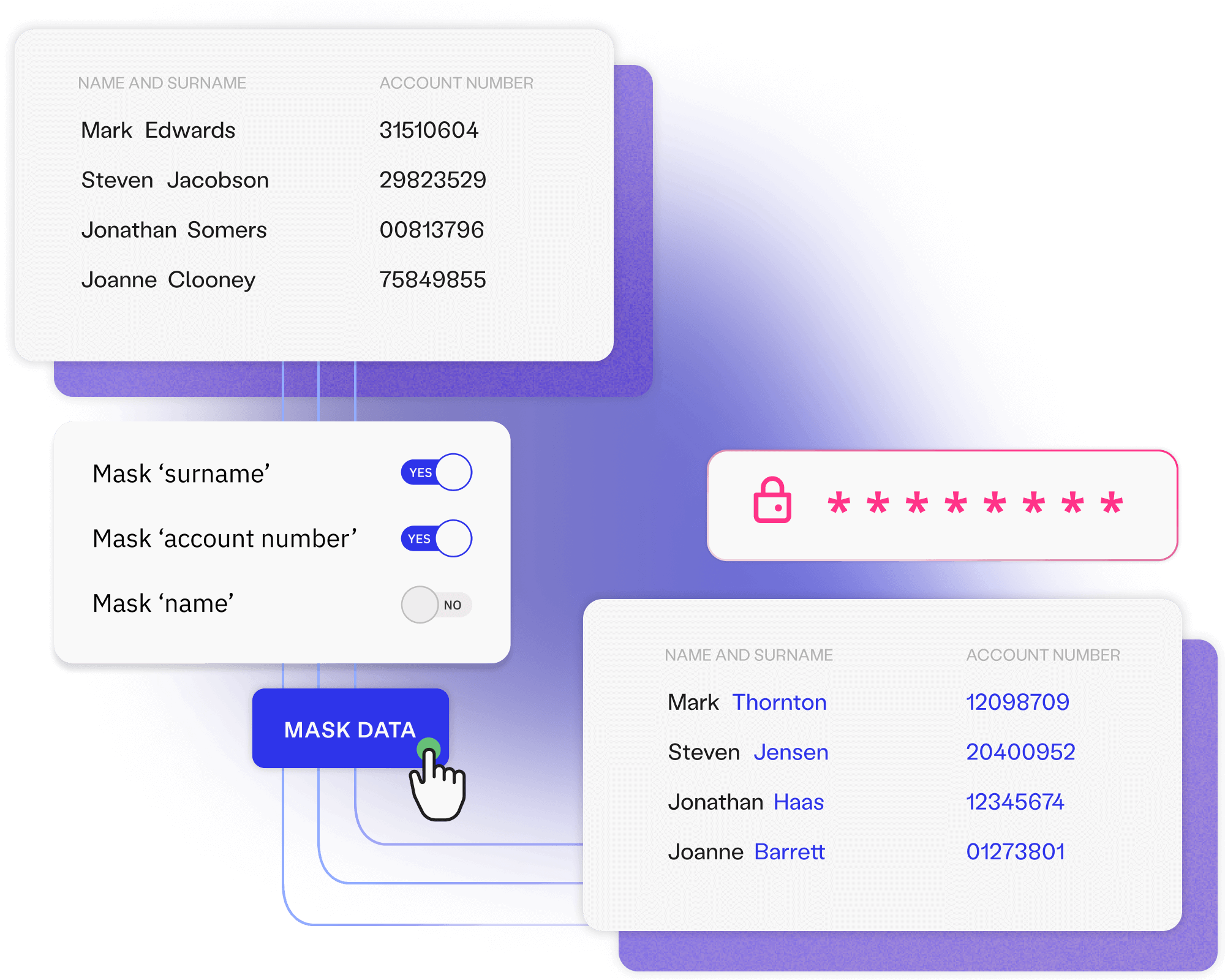

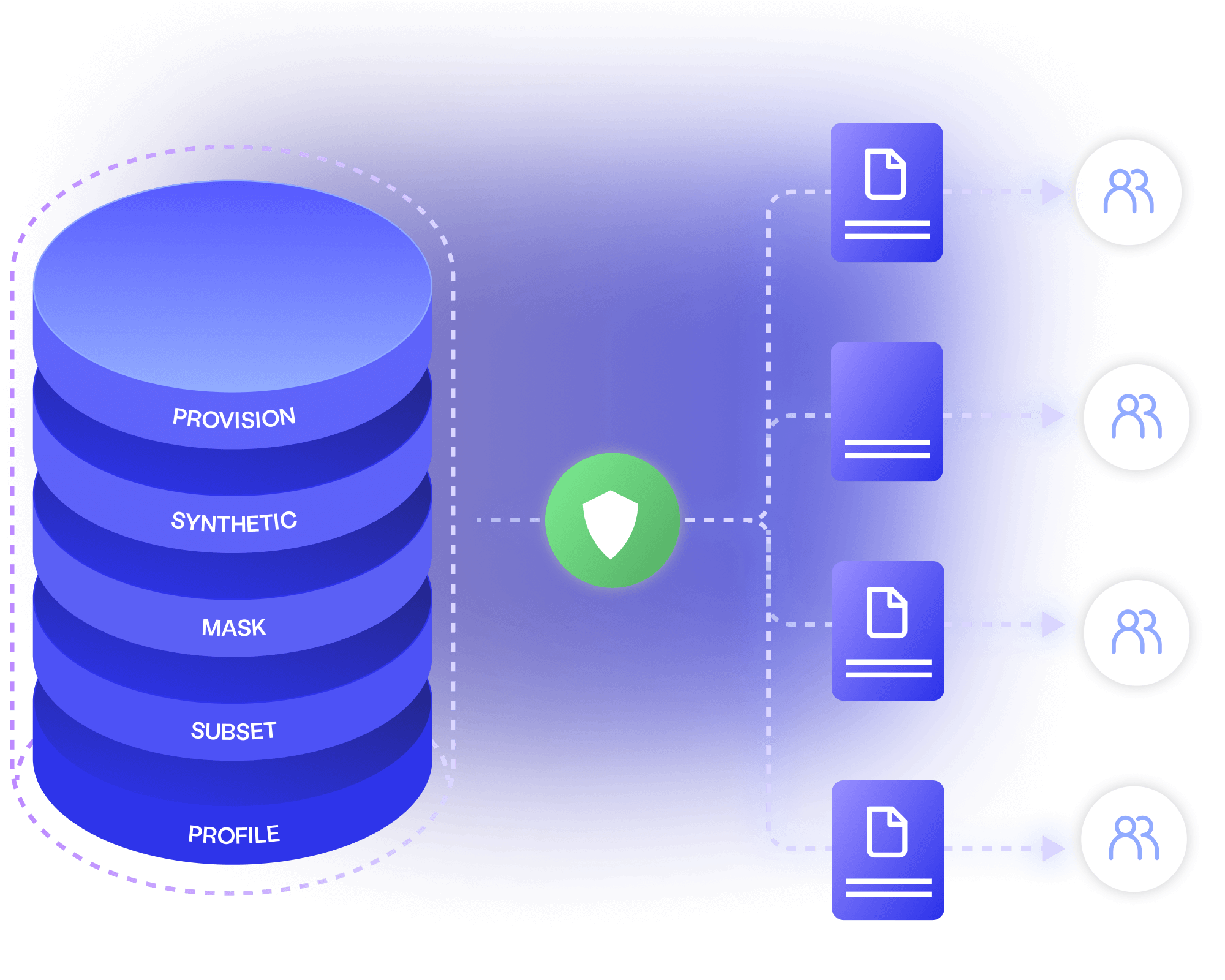

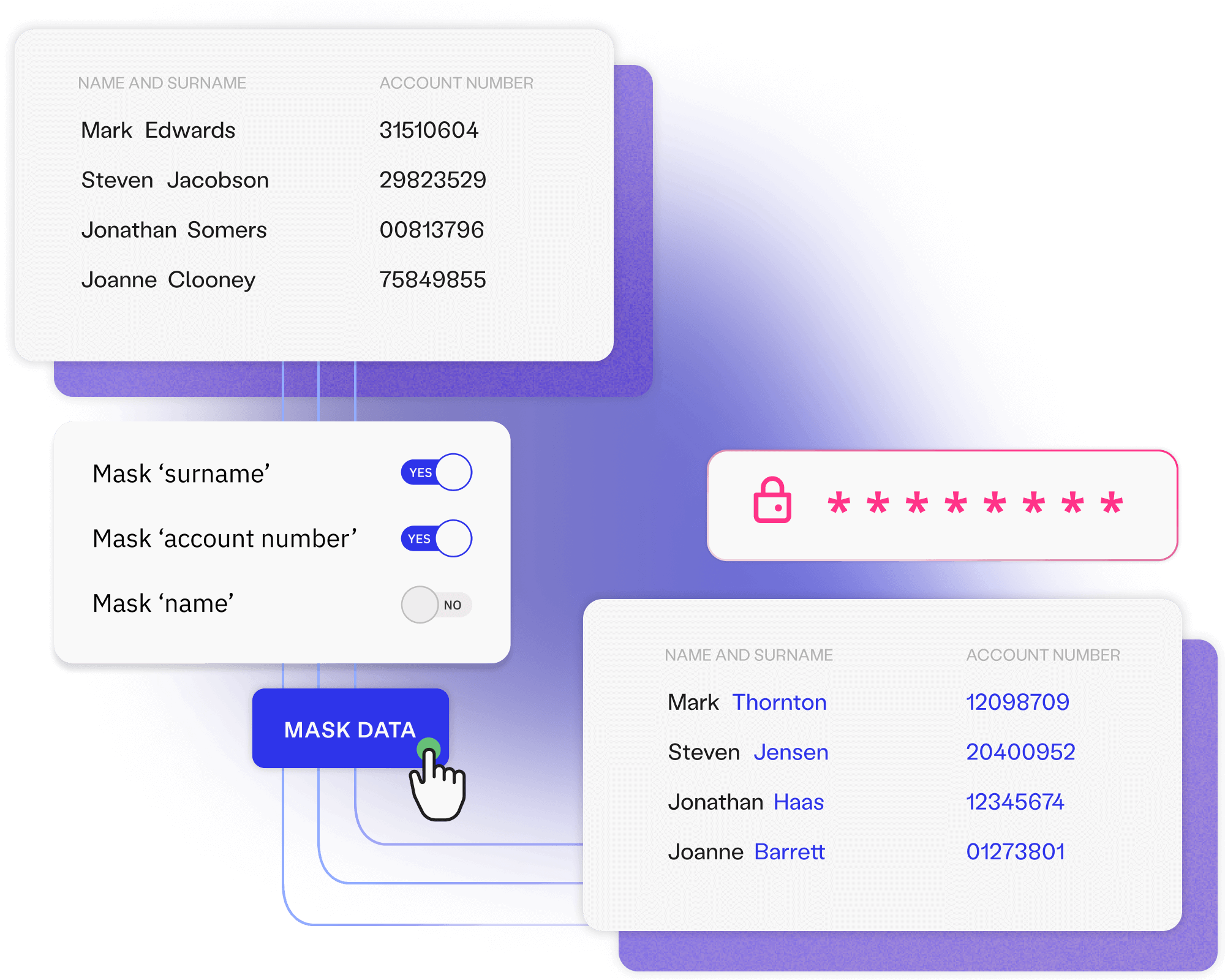

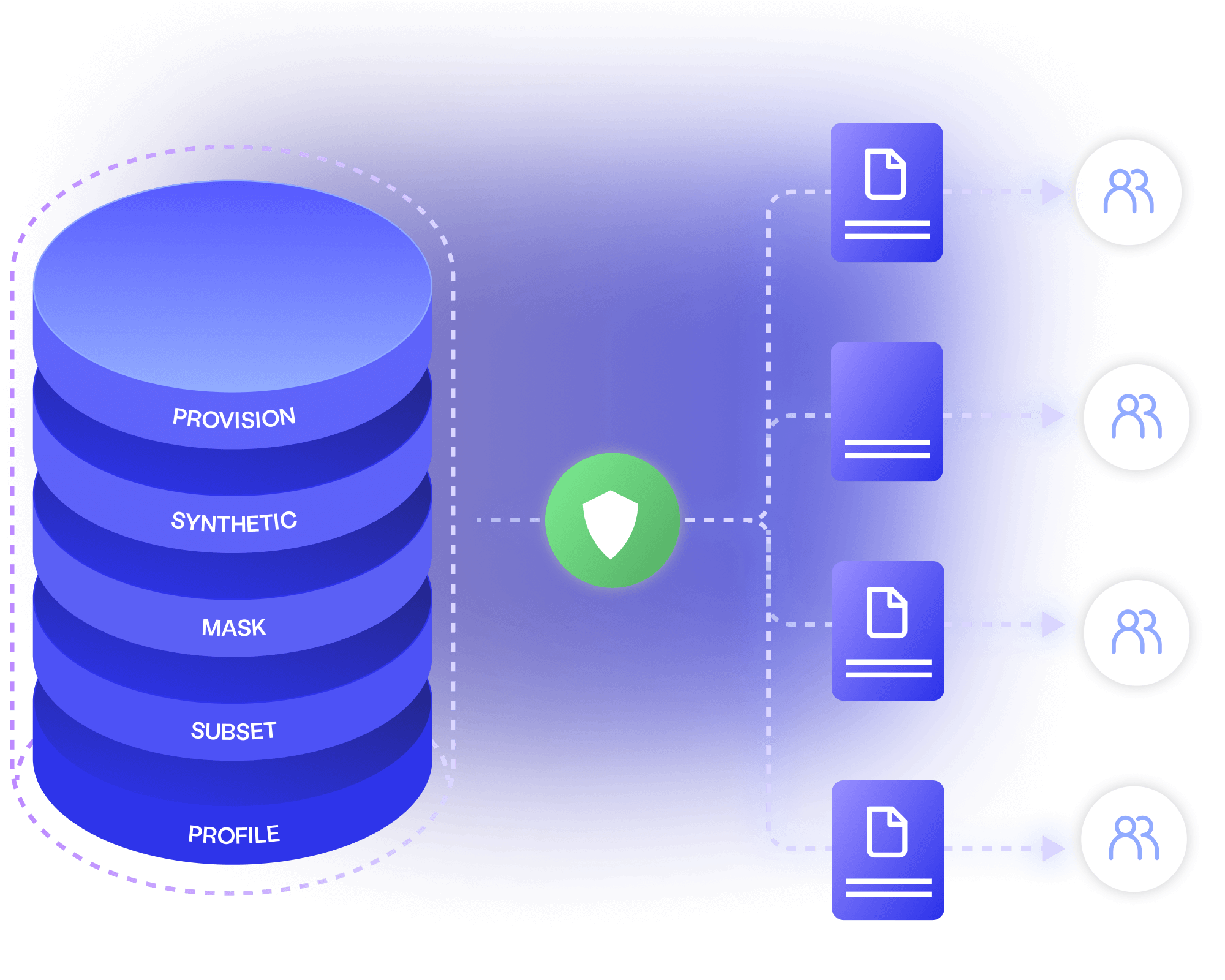

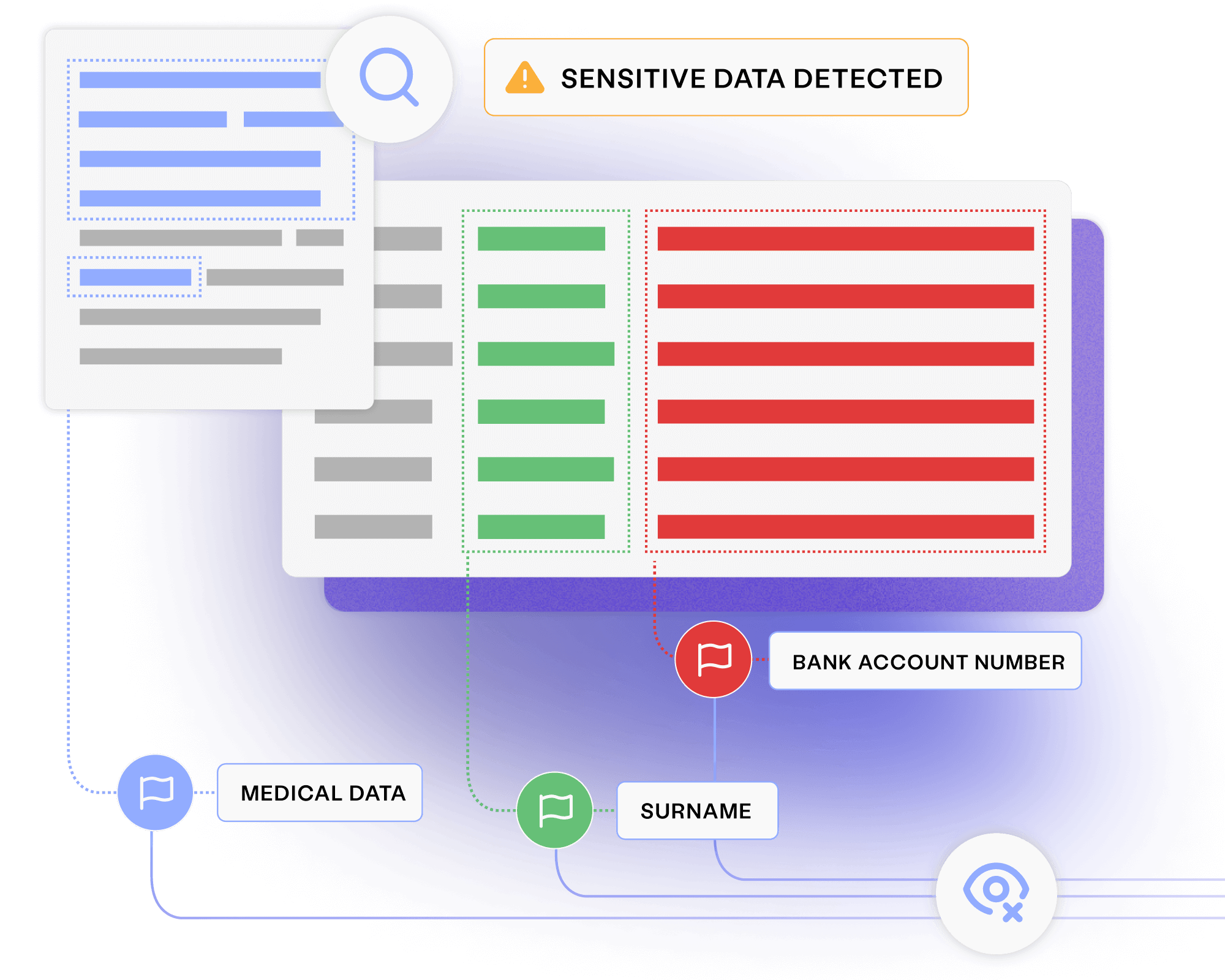

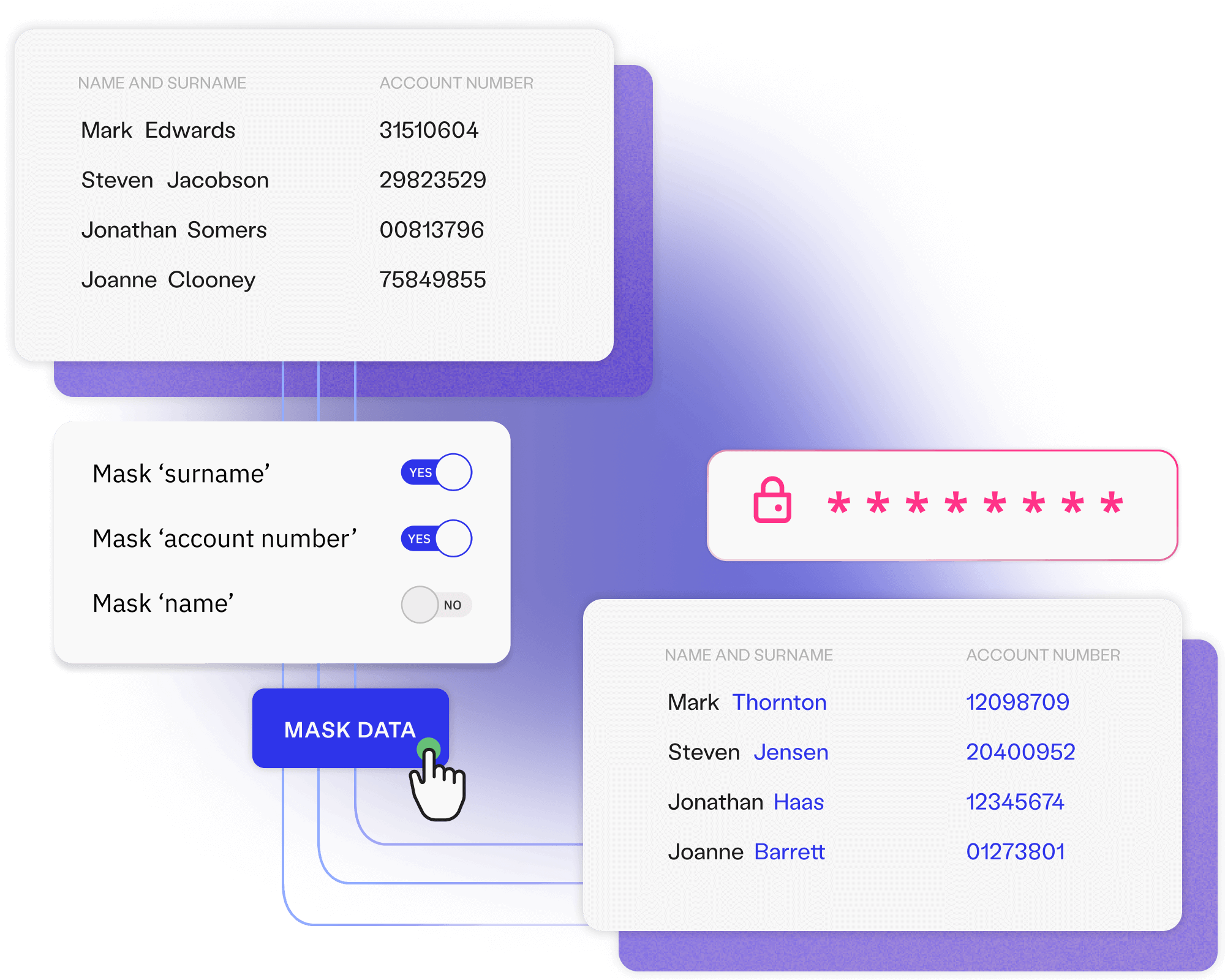

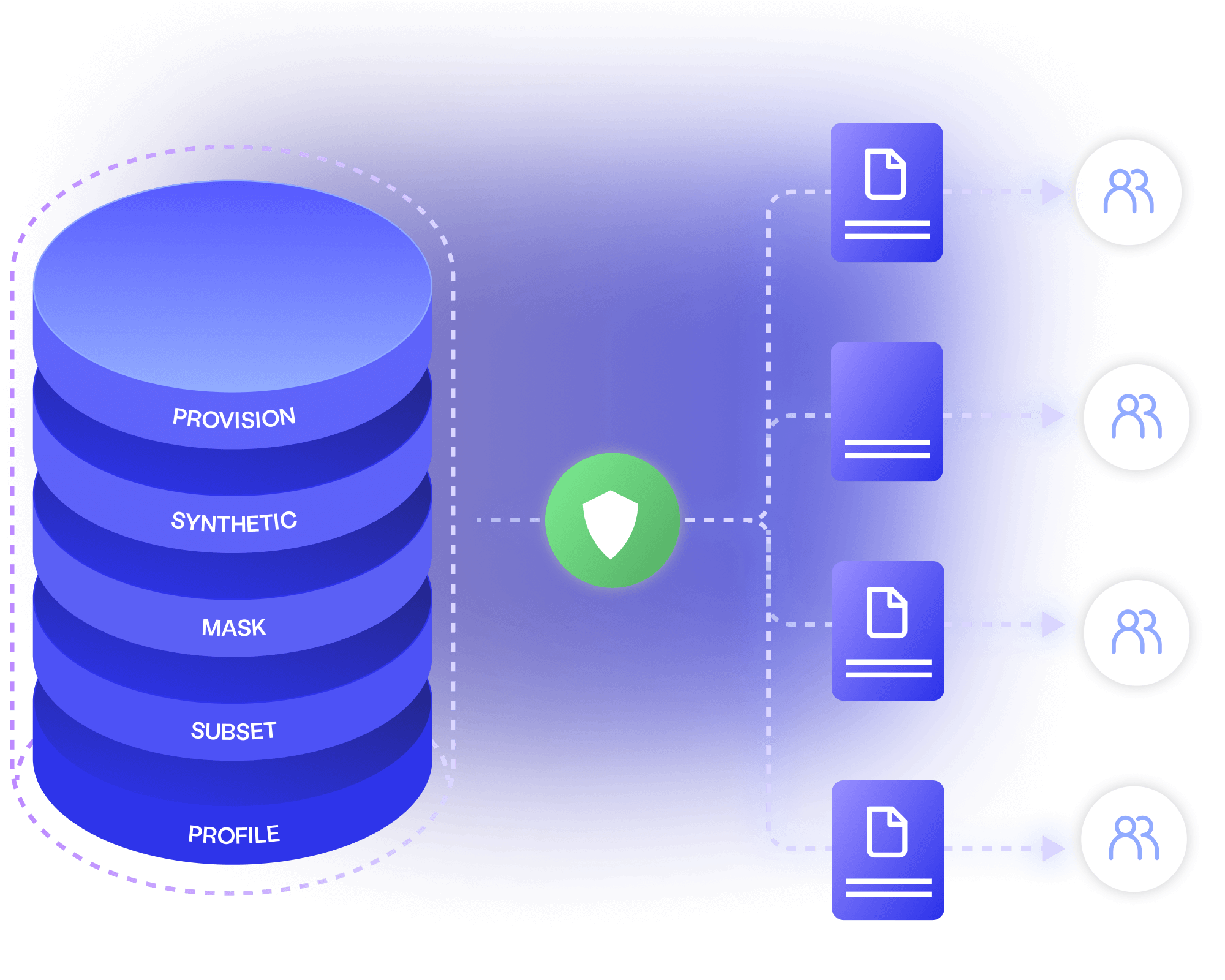

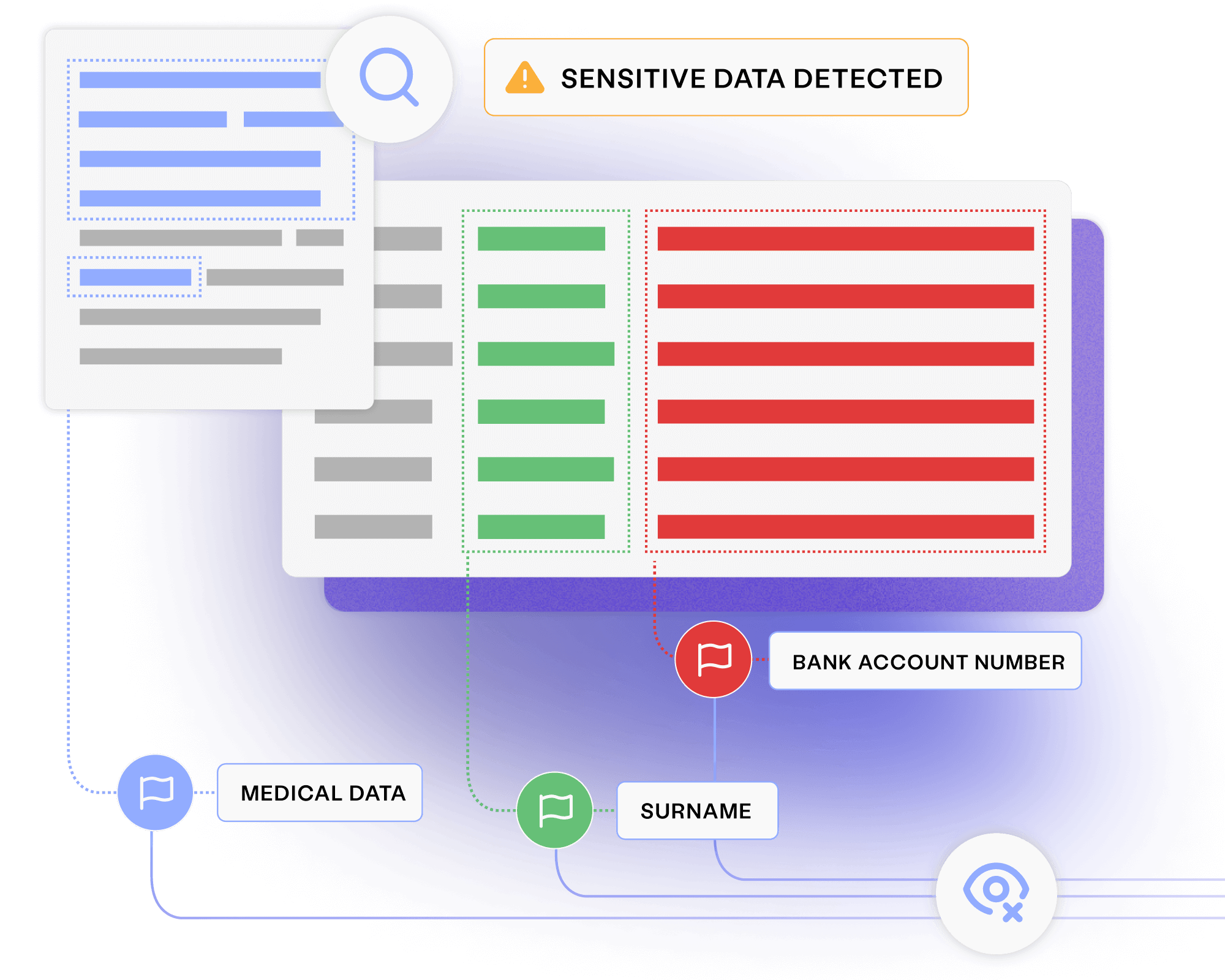

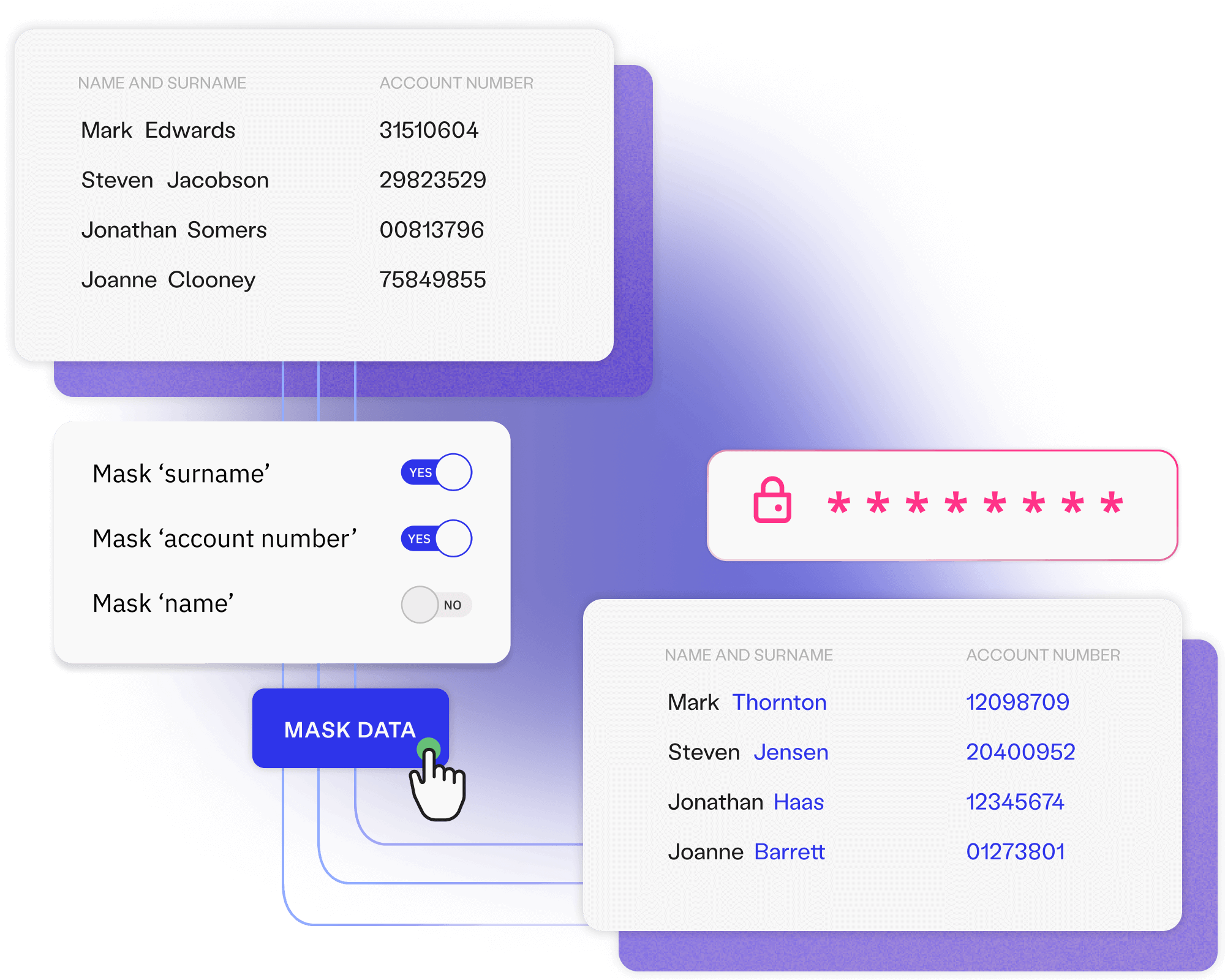

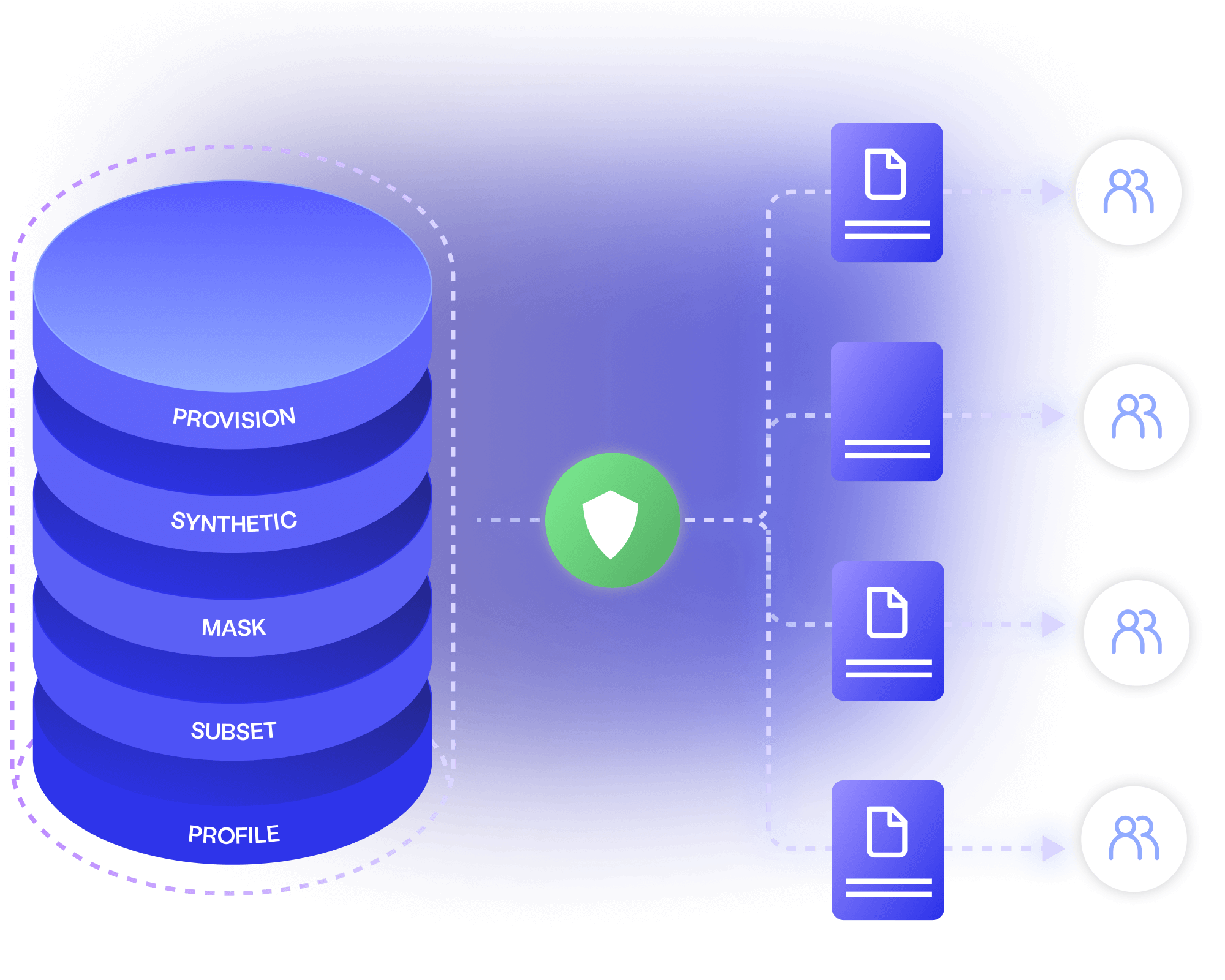

Remove sensitive data before it reaches less-secure non-production environments, while providing every tool and team with all the data they need.

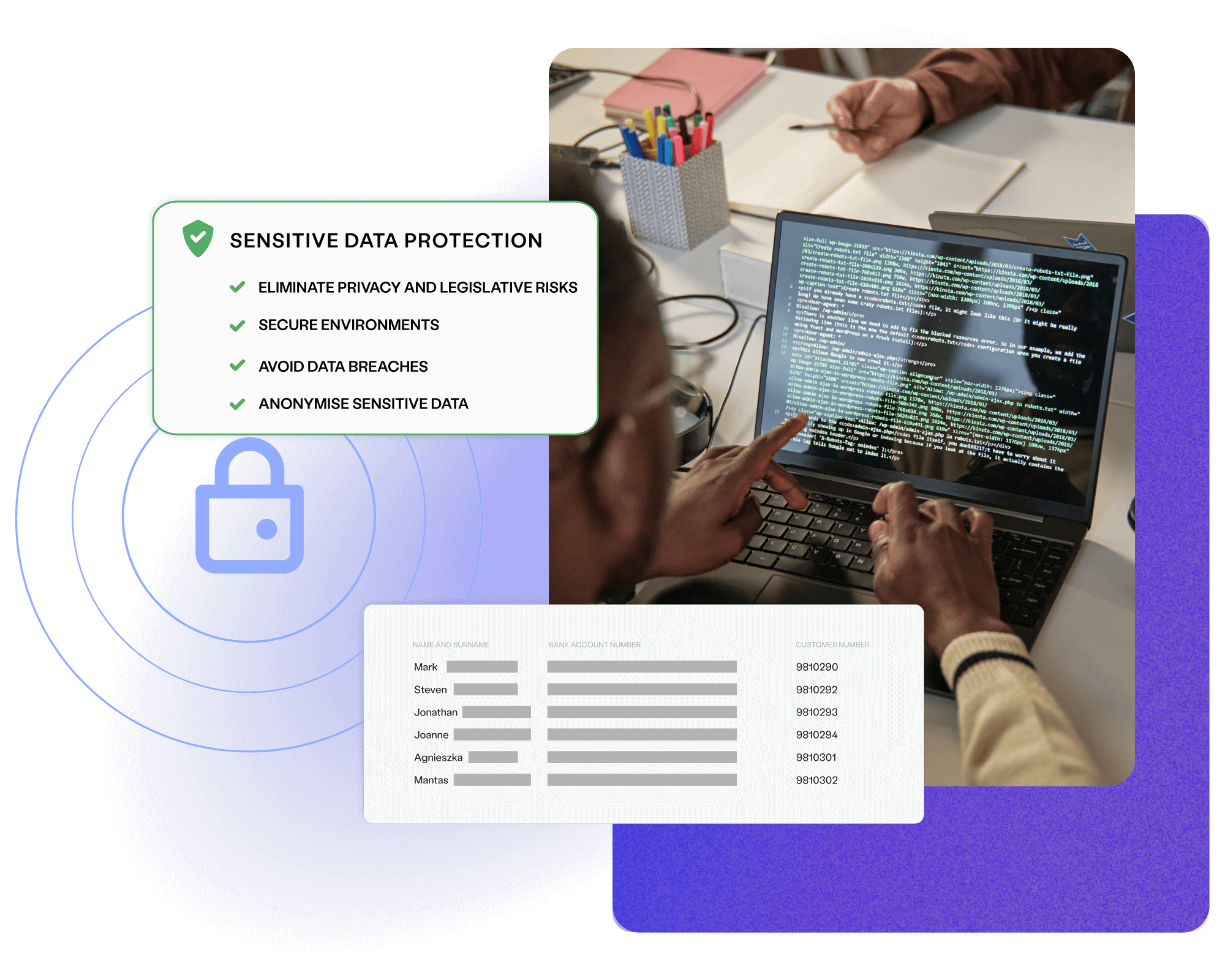

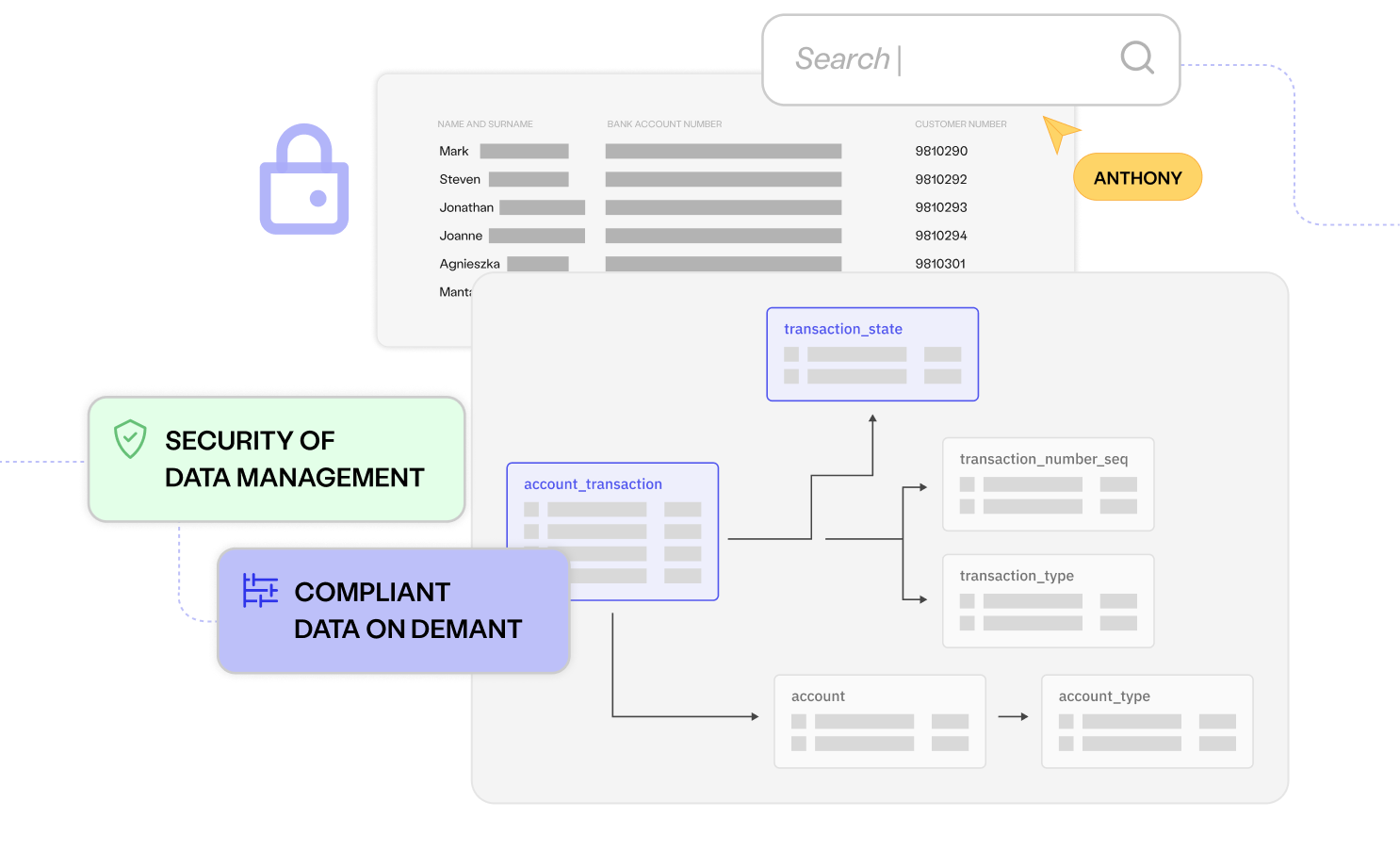

Removing sensitive data simplifies compliance with regulations like the EU & UK GDPRs, CCPA and HIPAA.

Avoiding the spread of sensitive data to less-secure environments reduces your attack surface and the risk of damaging data breaches.

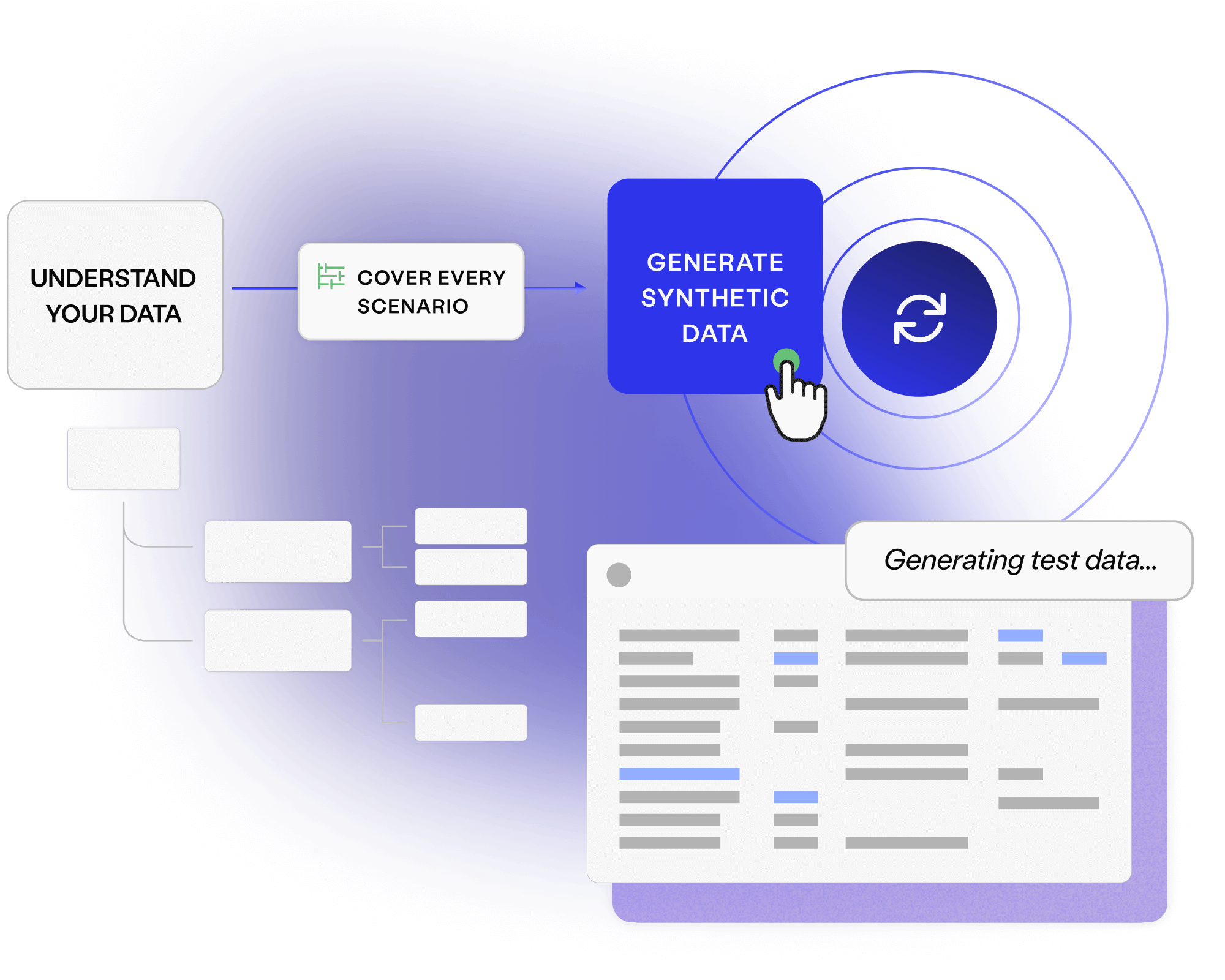

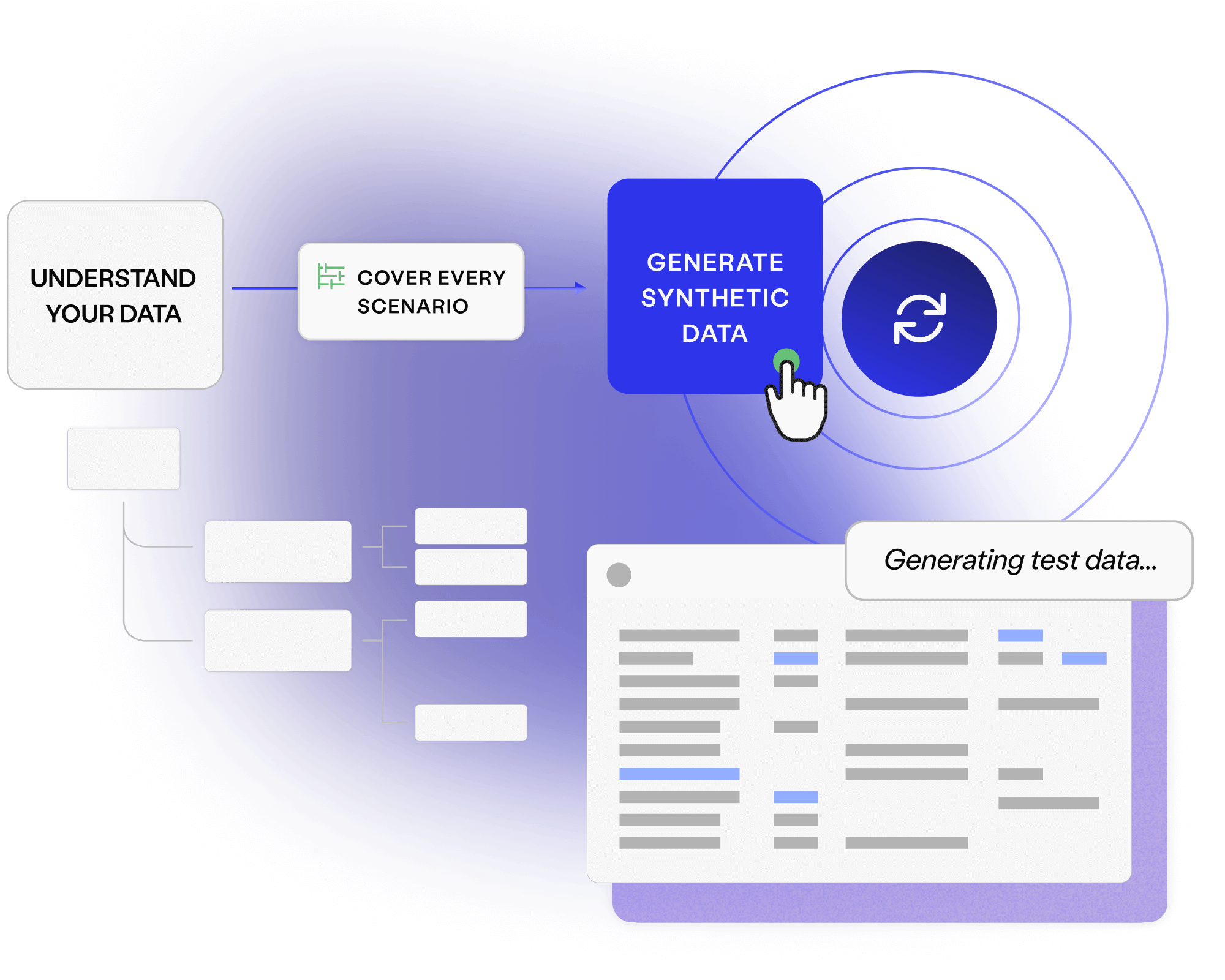

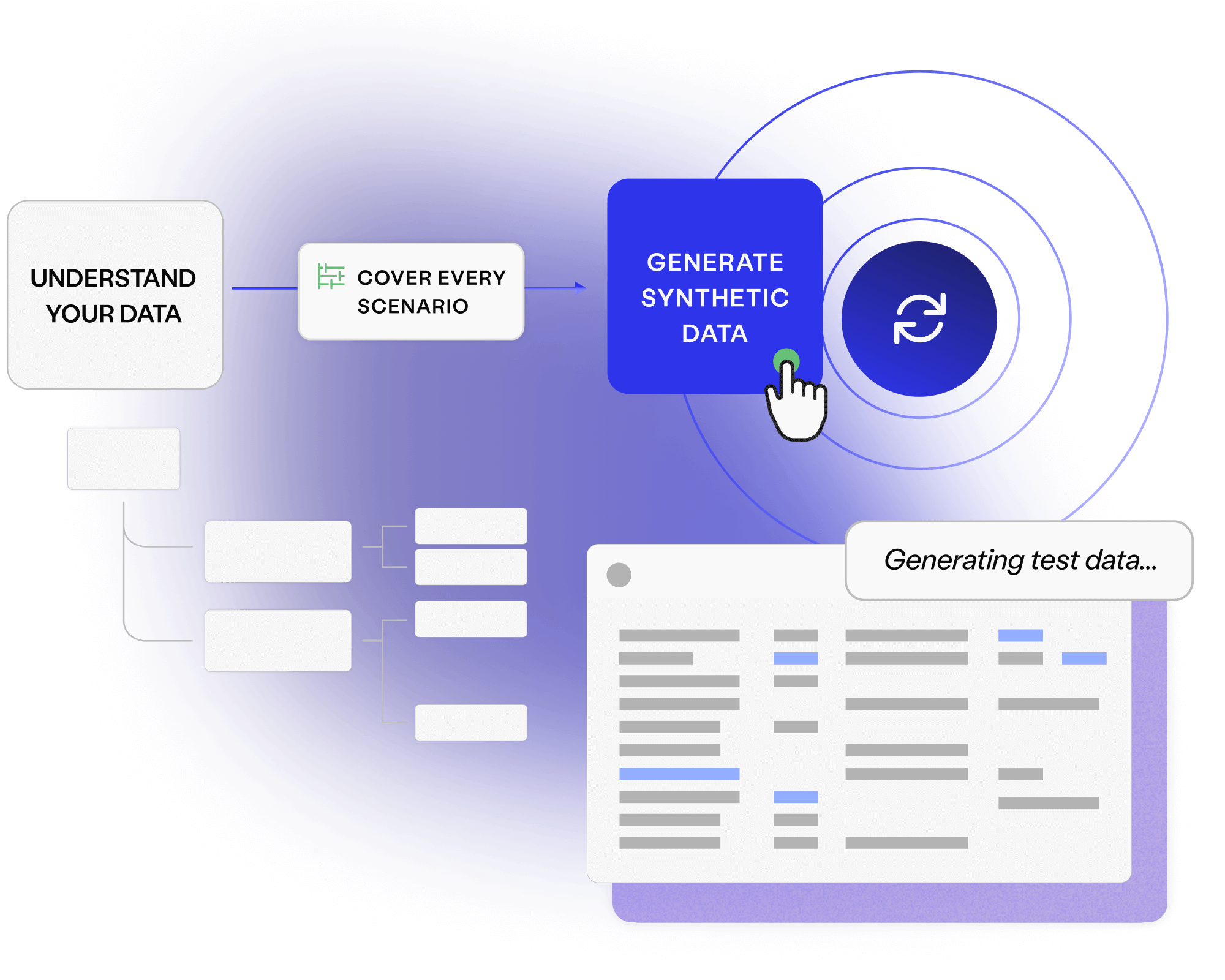

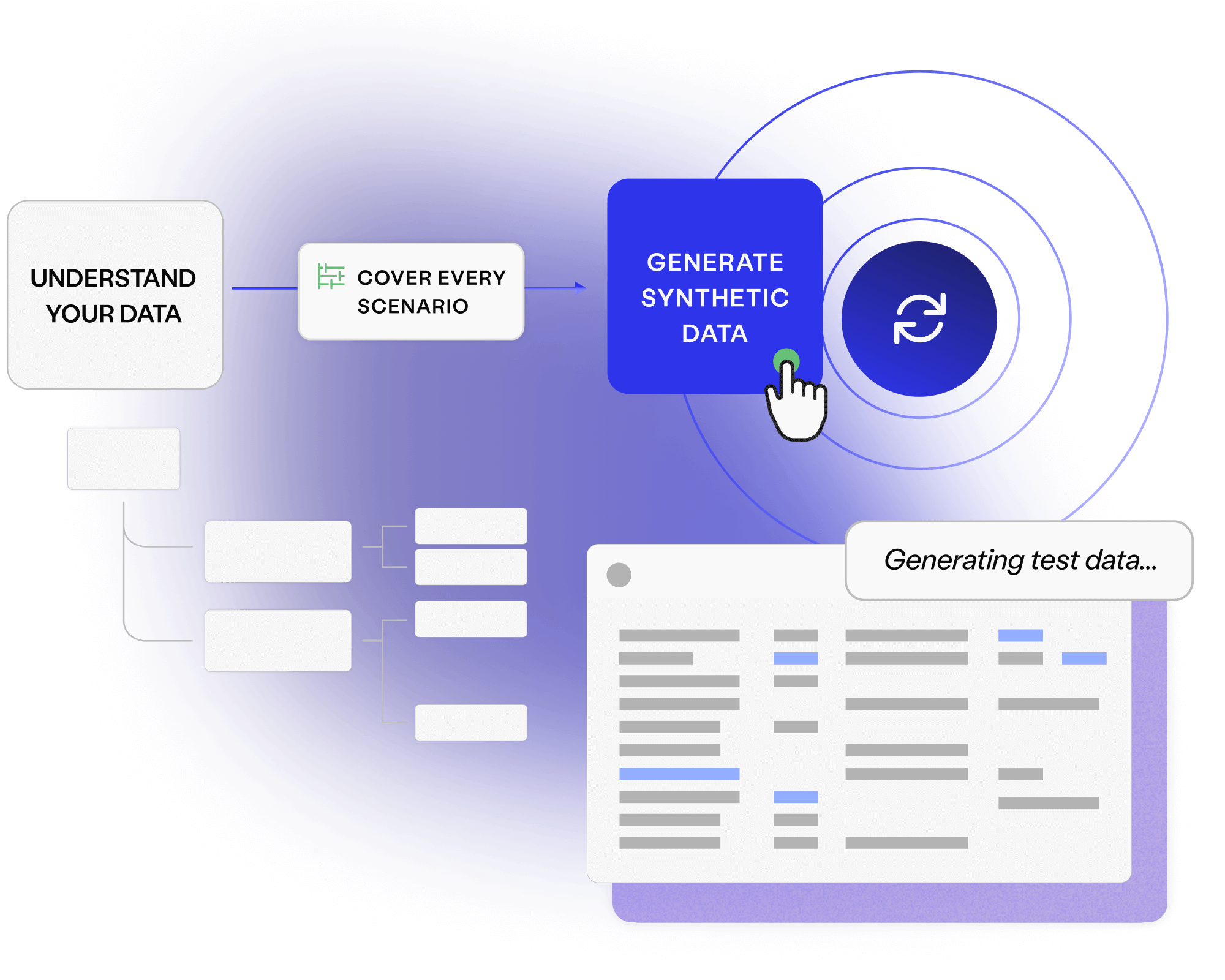

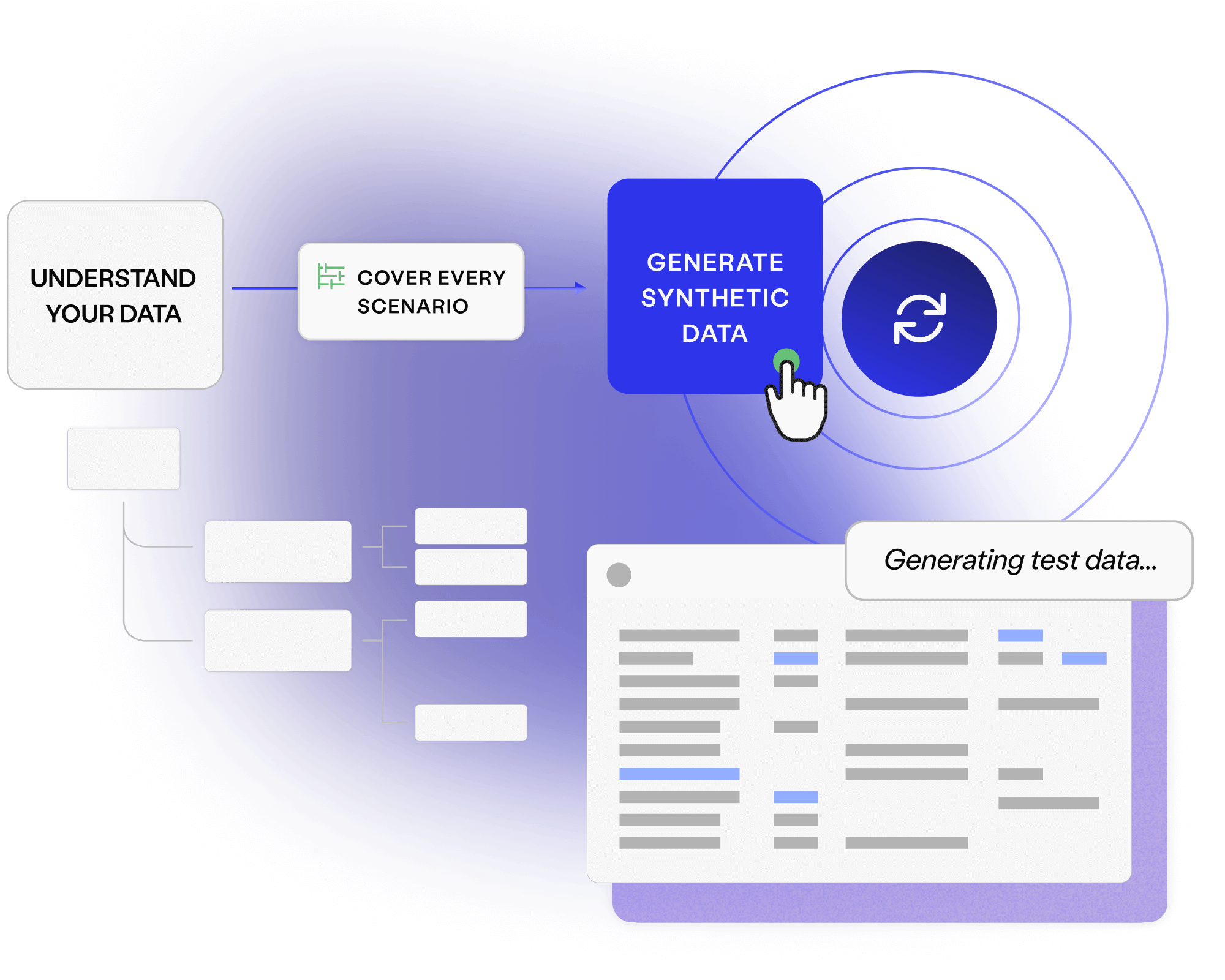

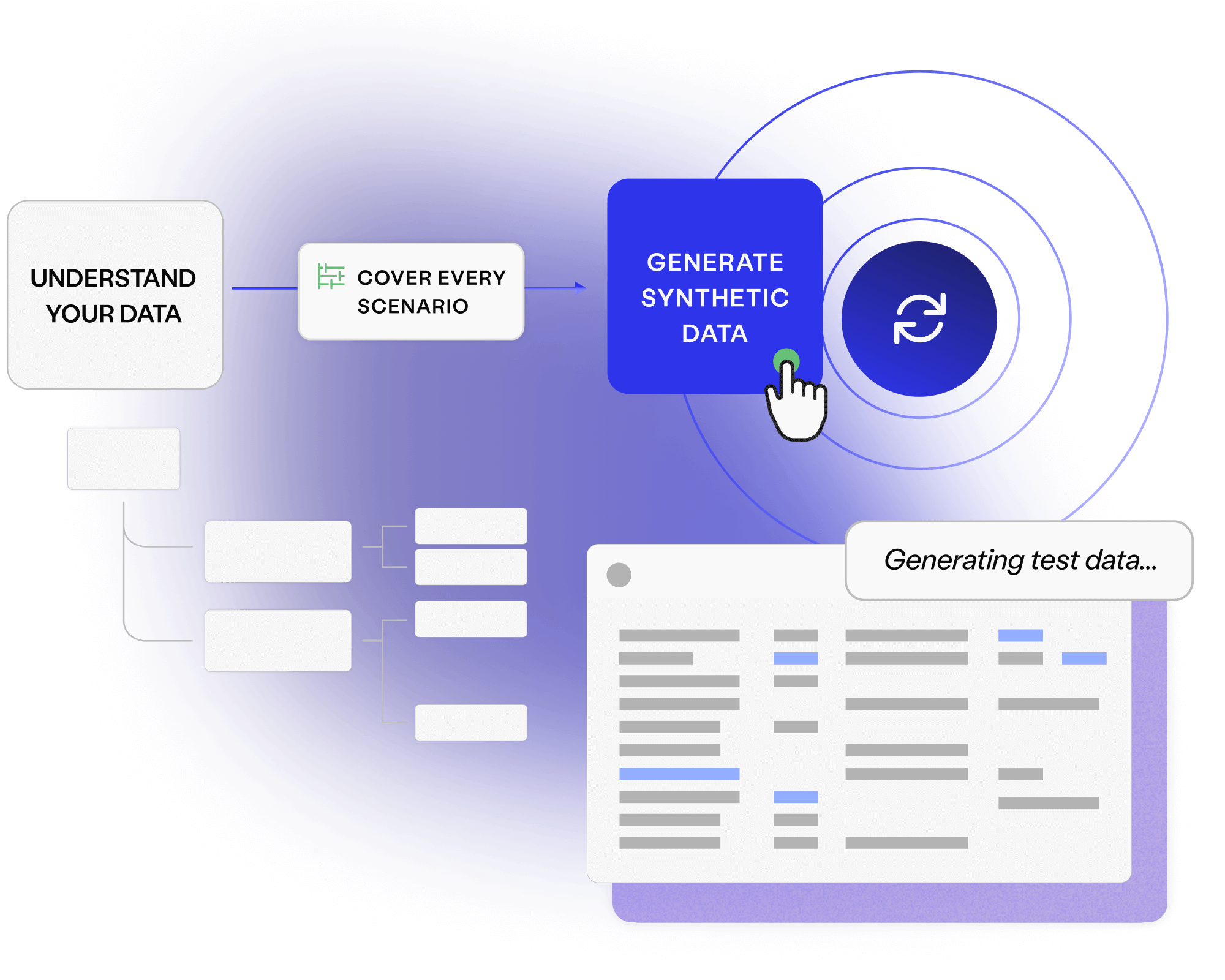

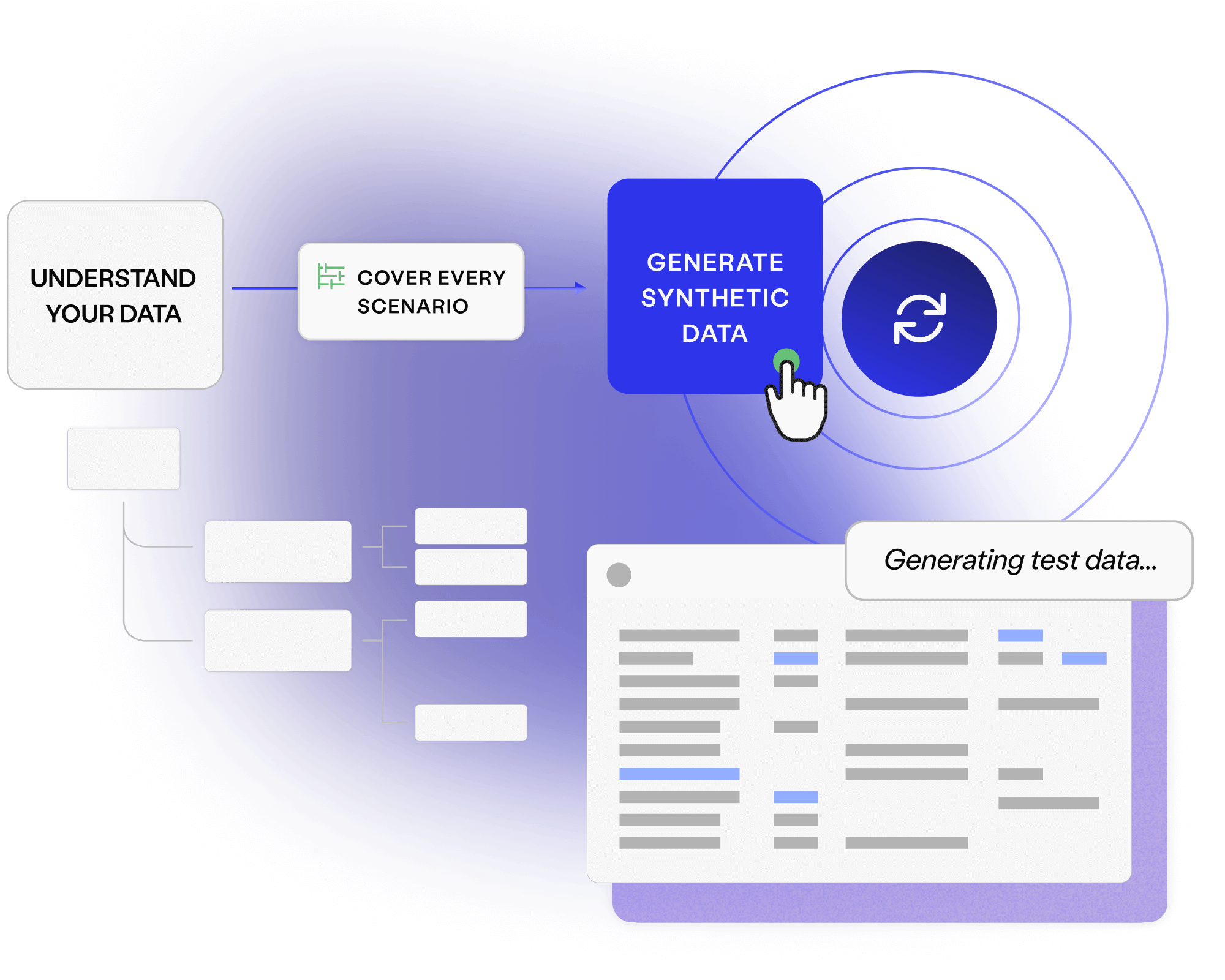

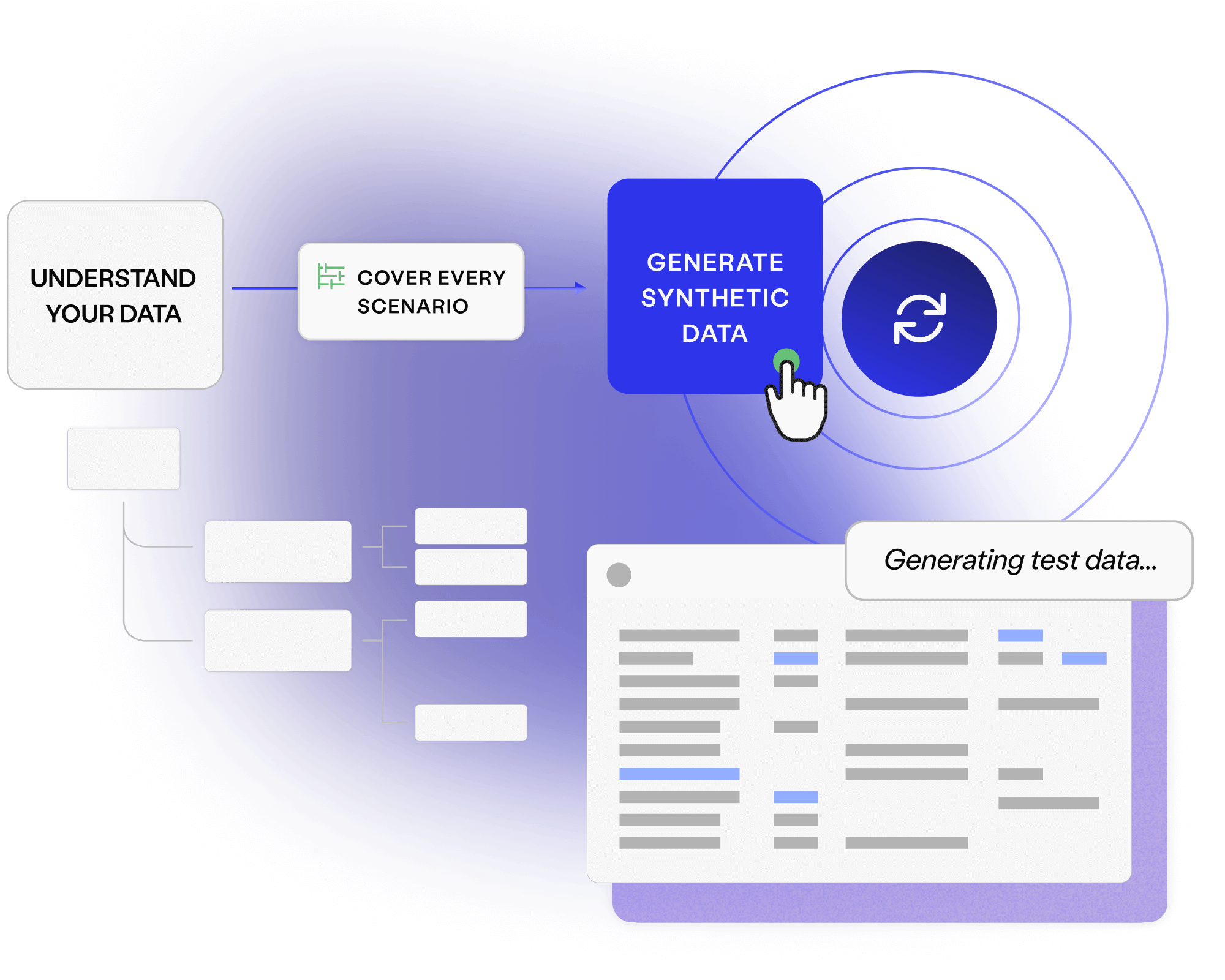

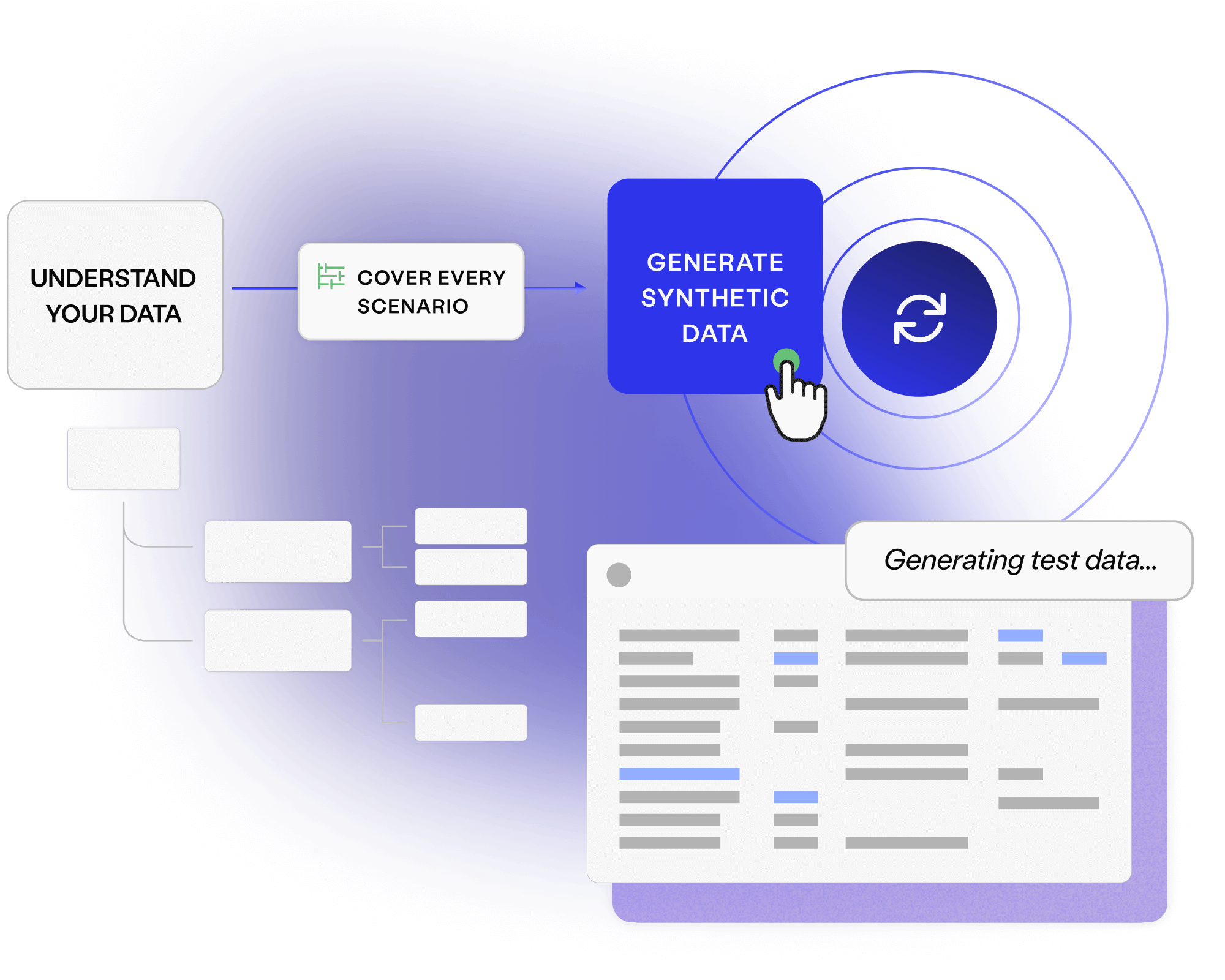

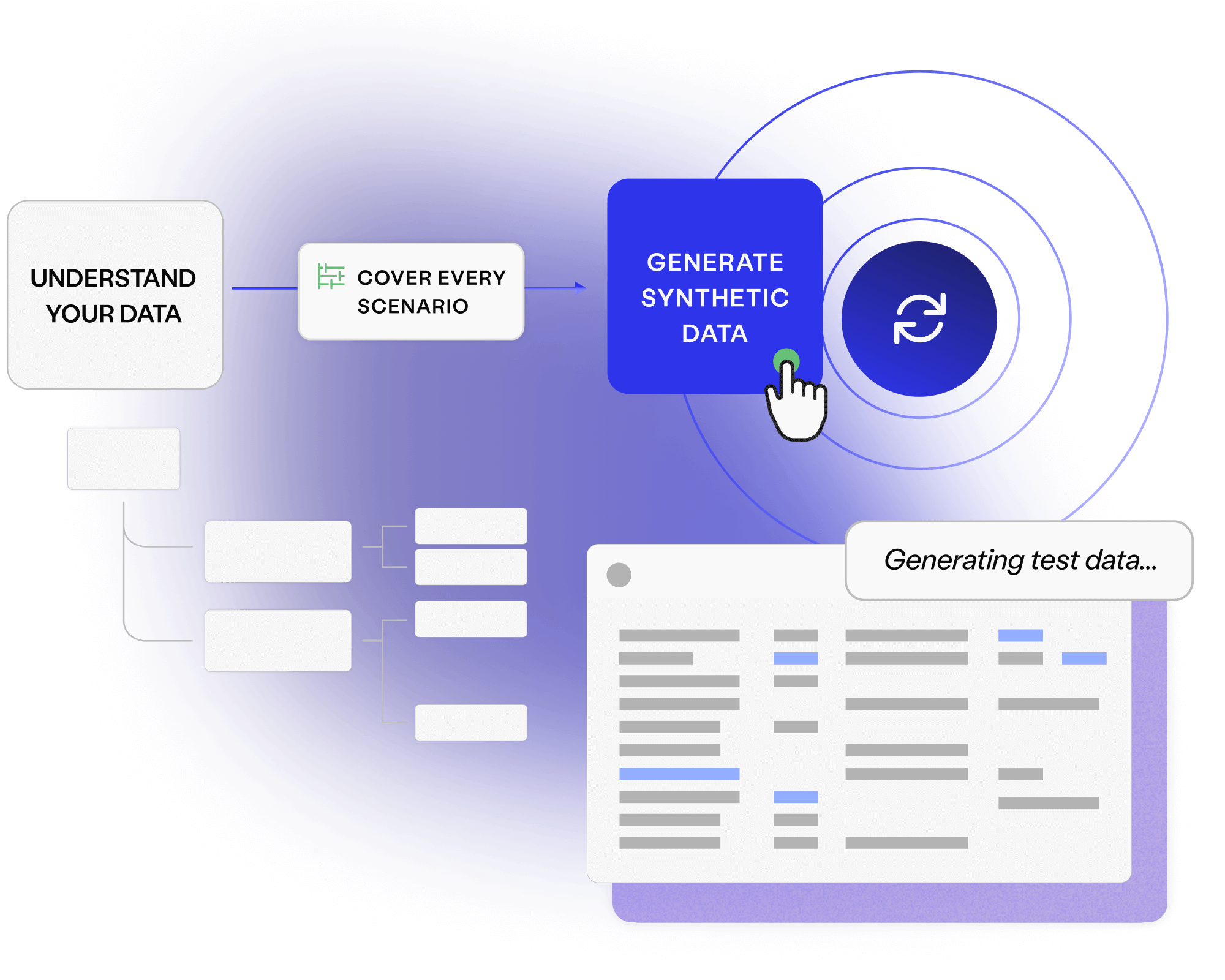

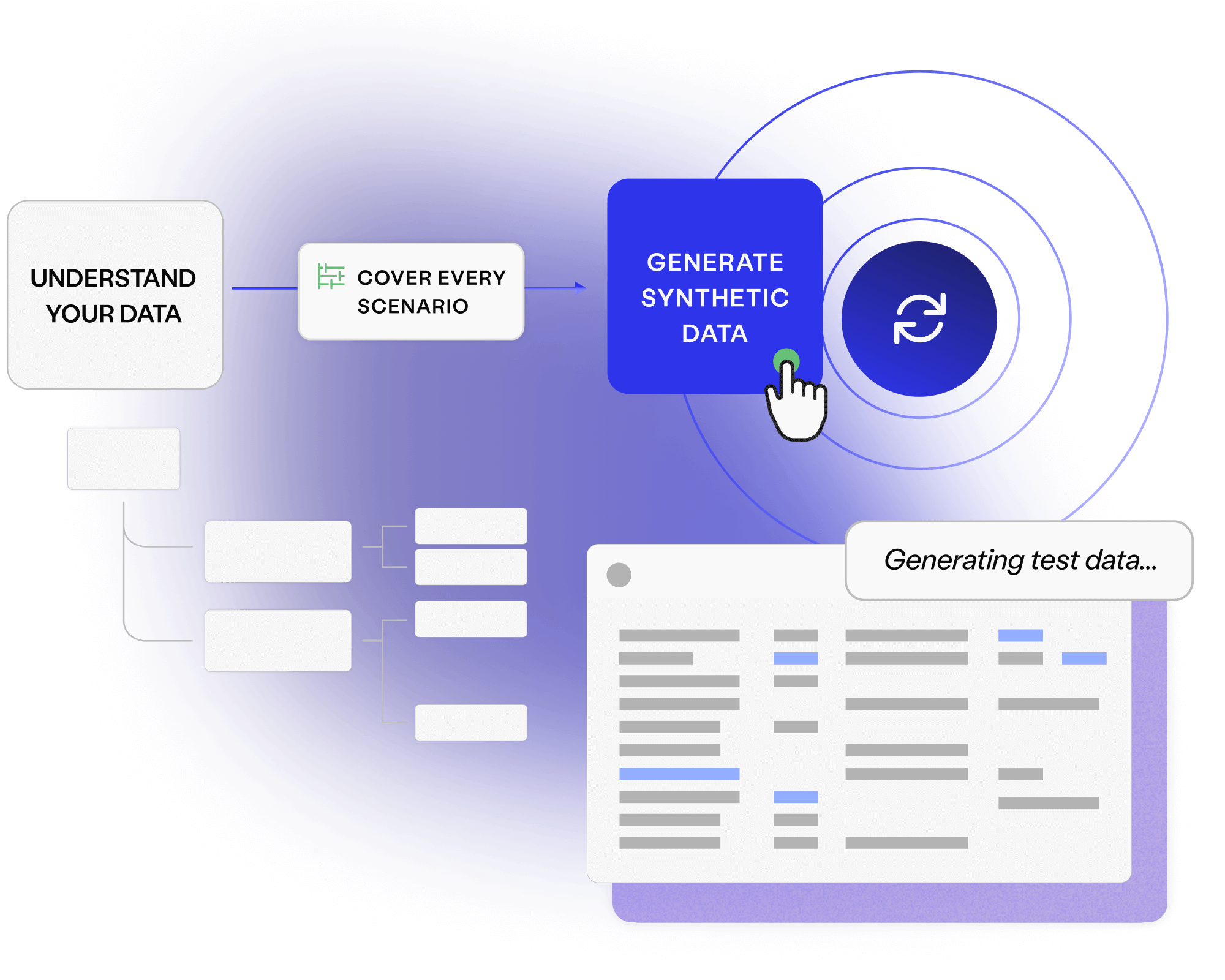

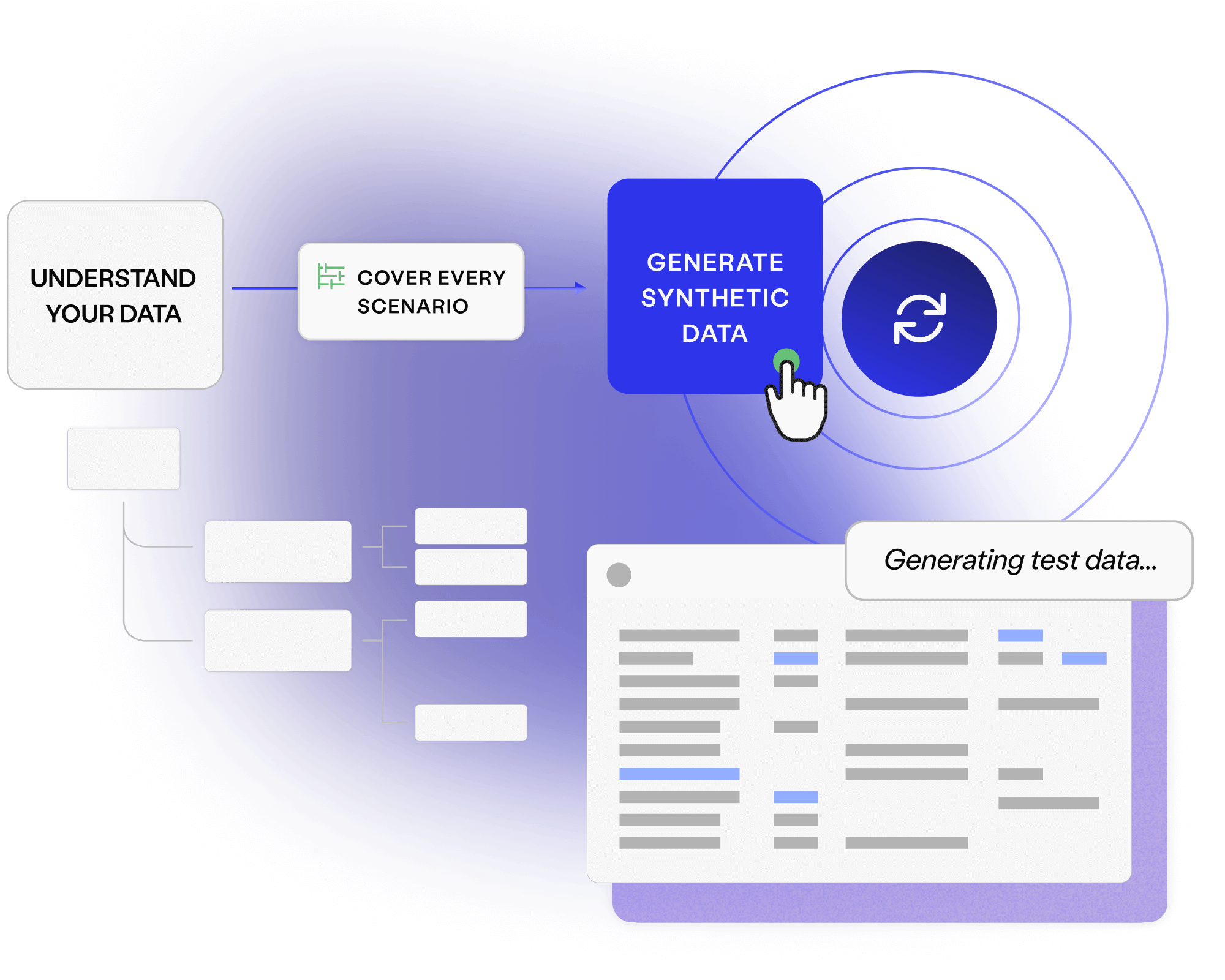

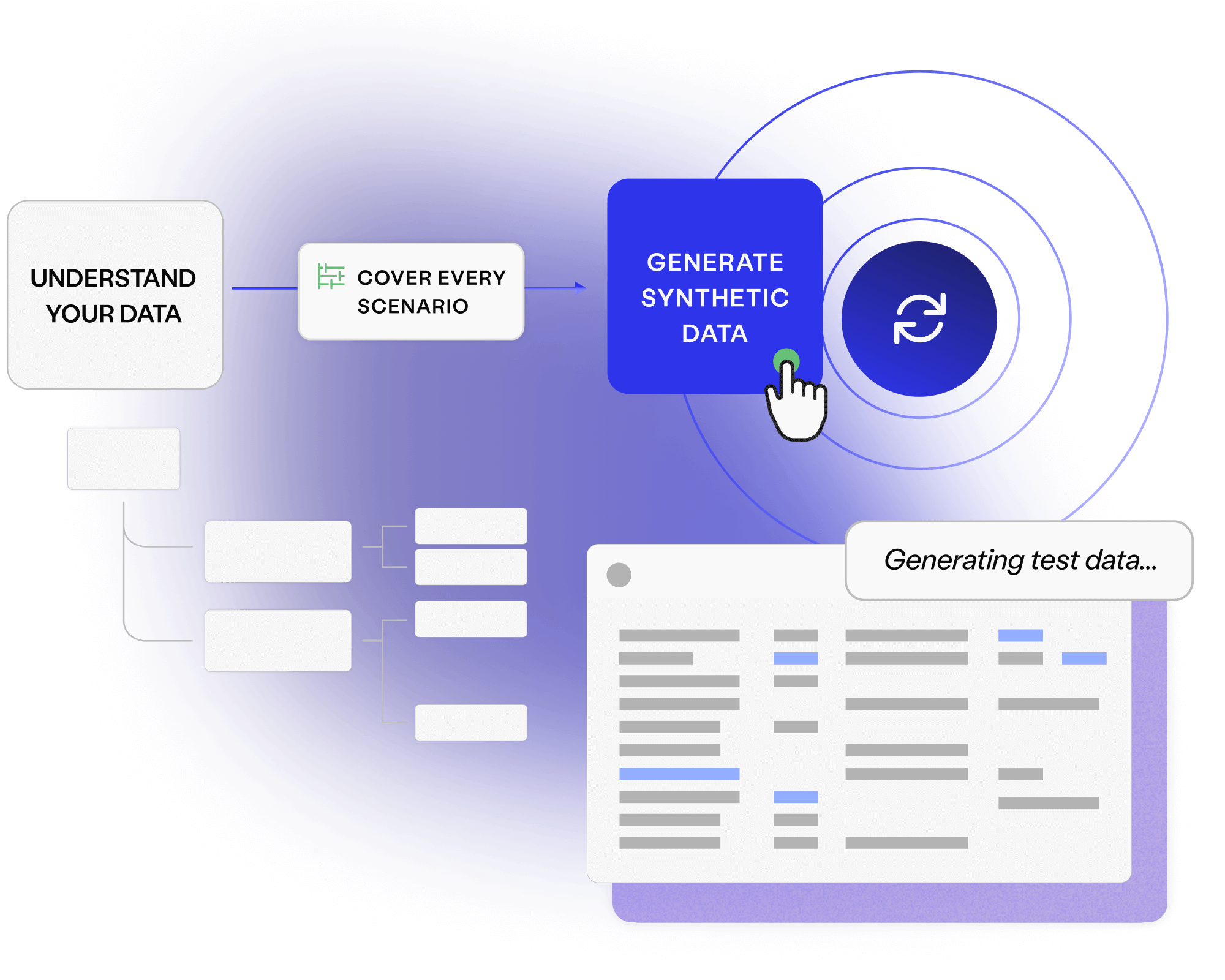

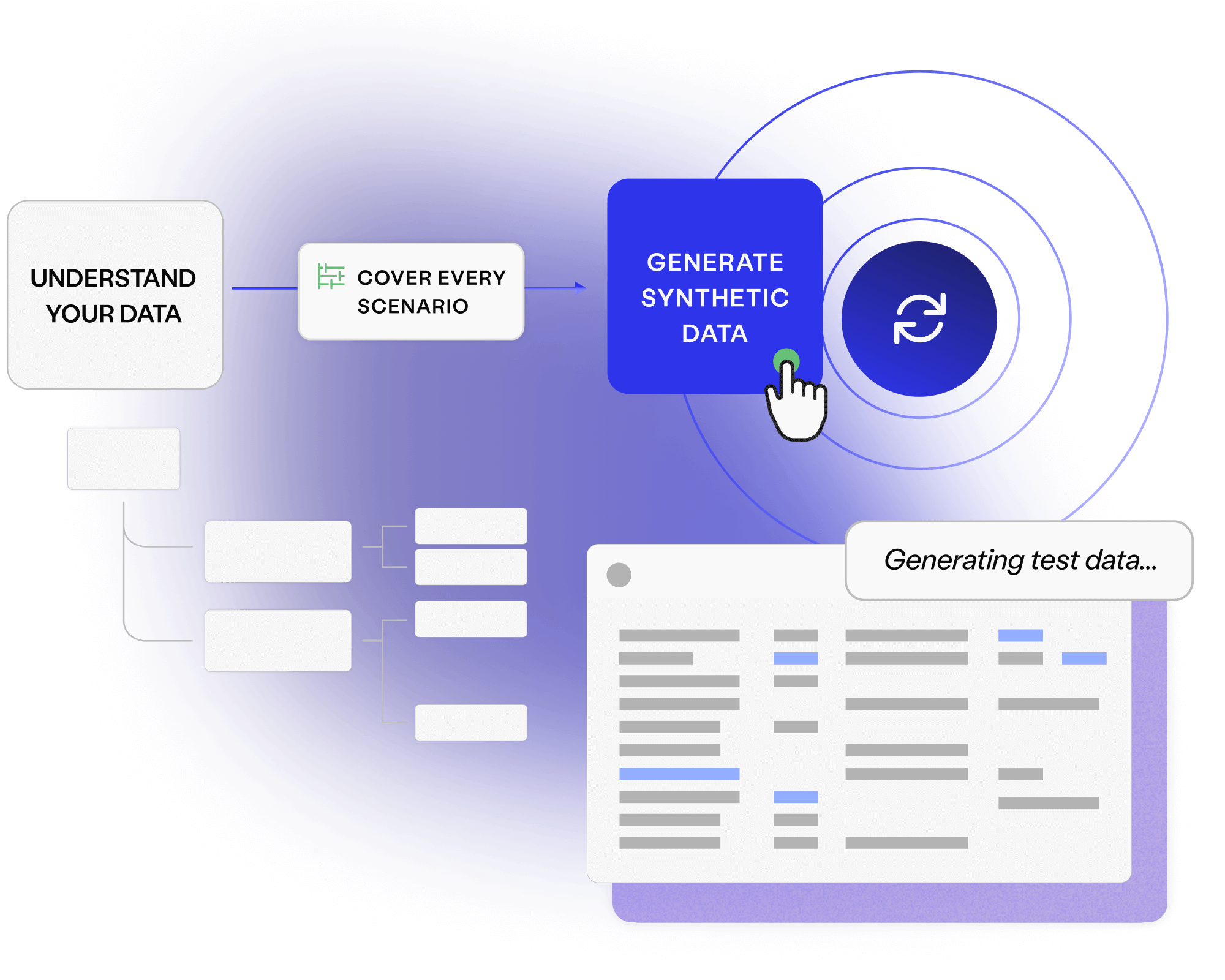

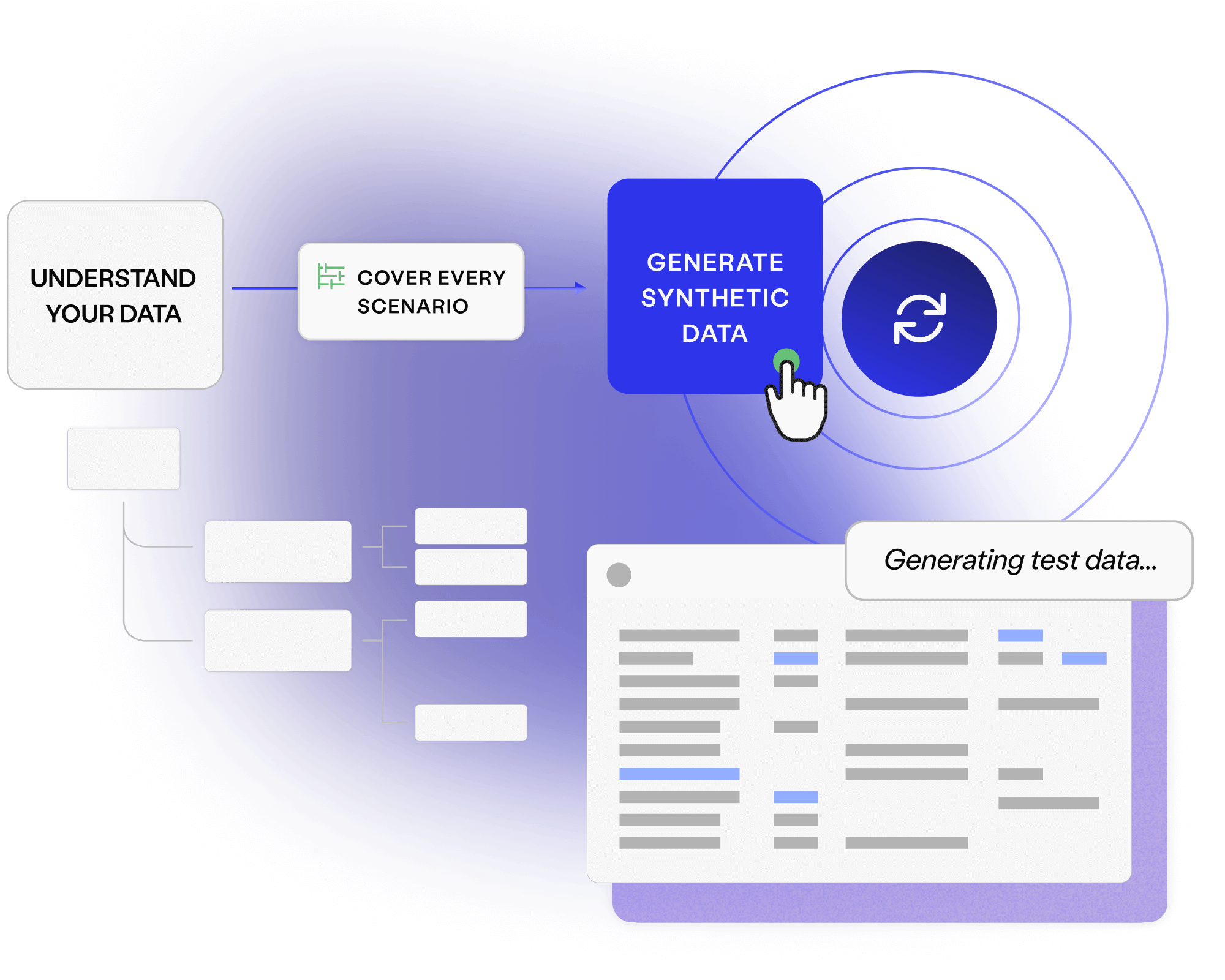

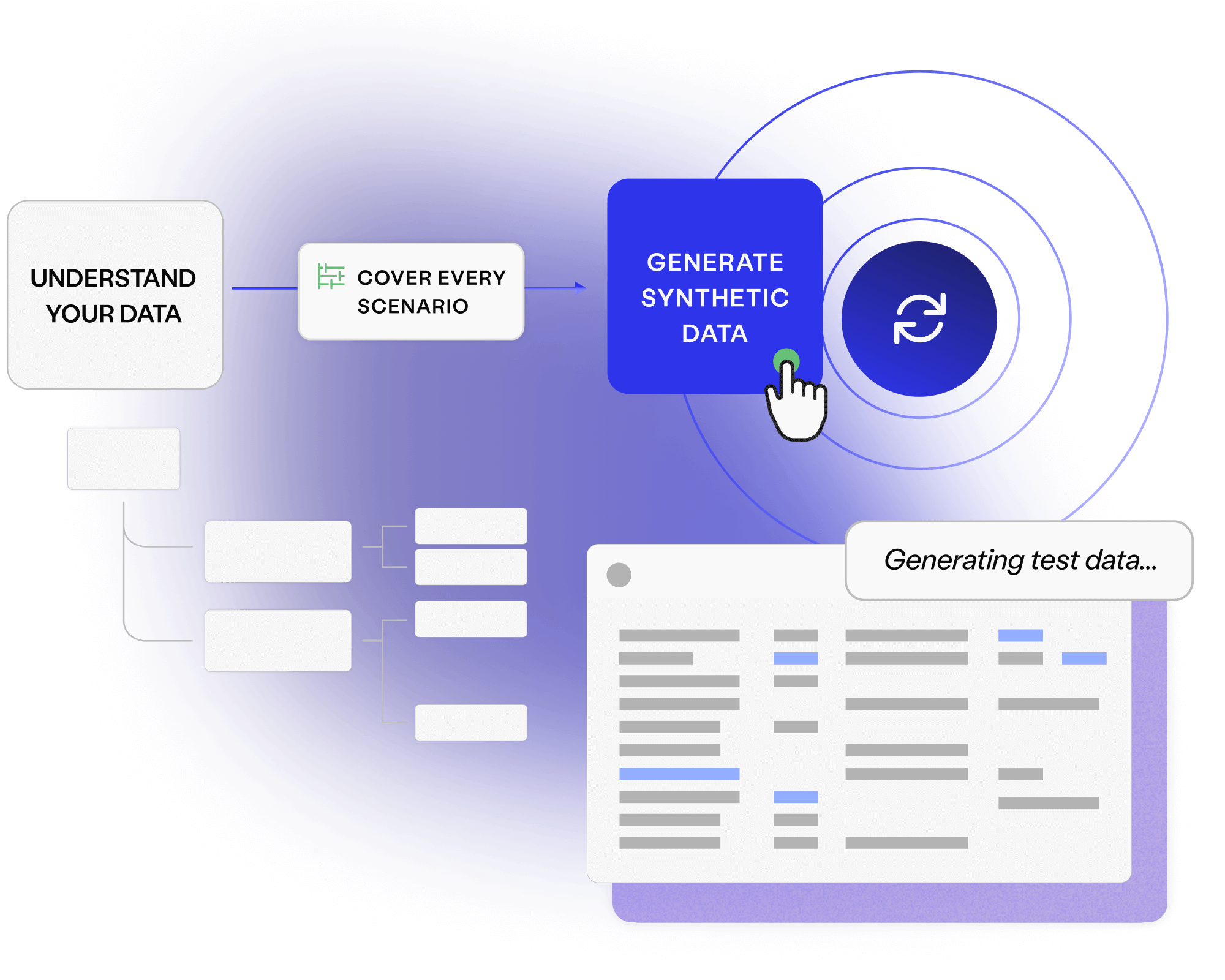

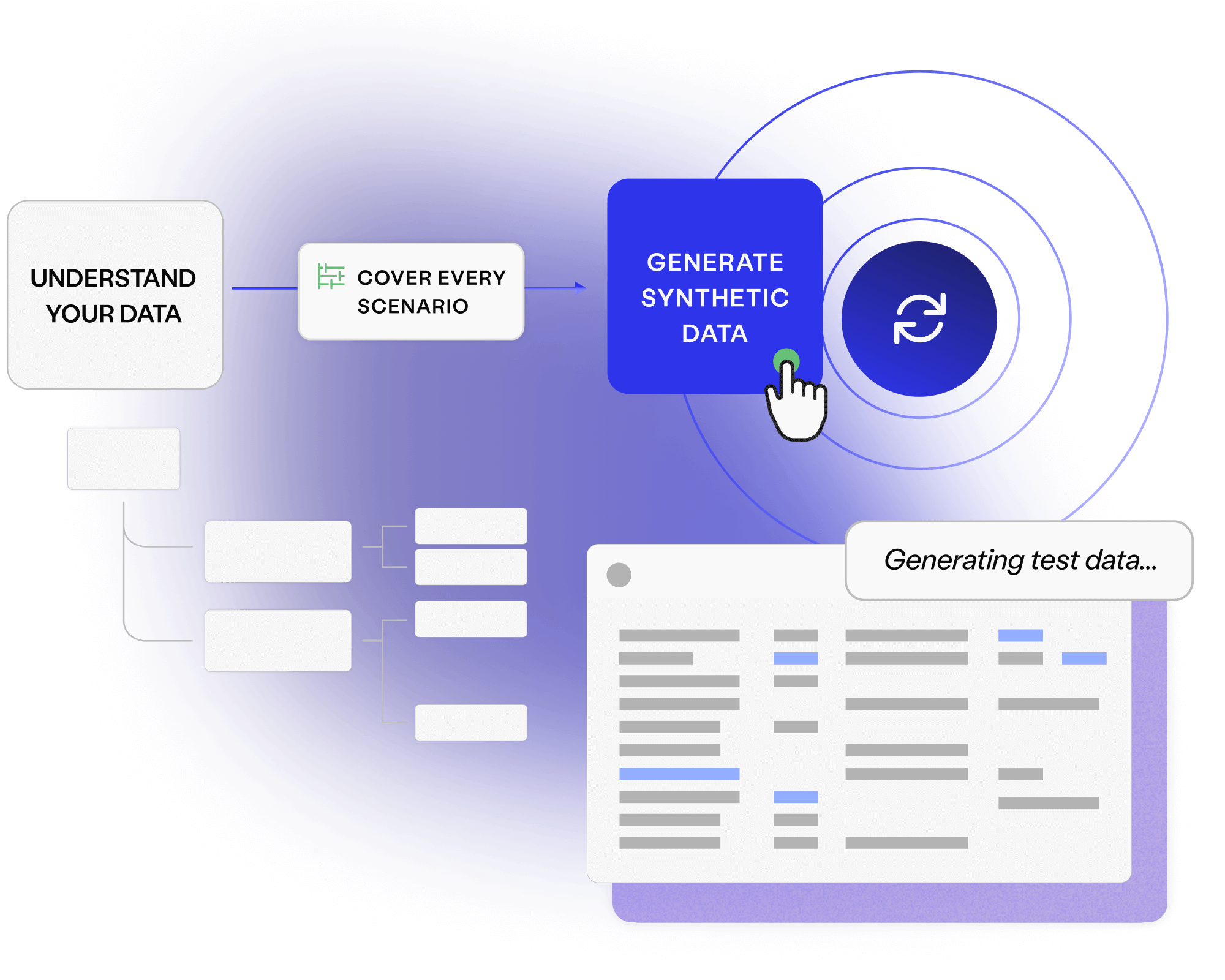

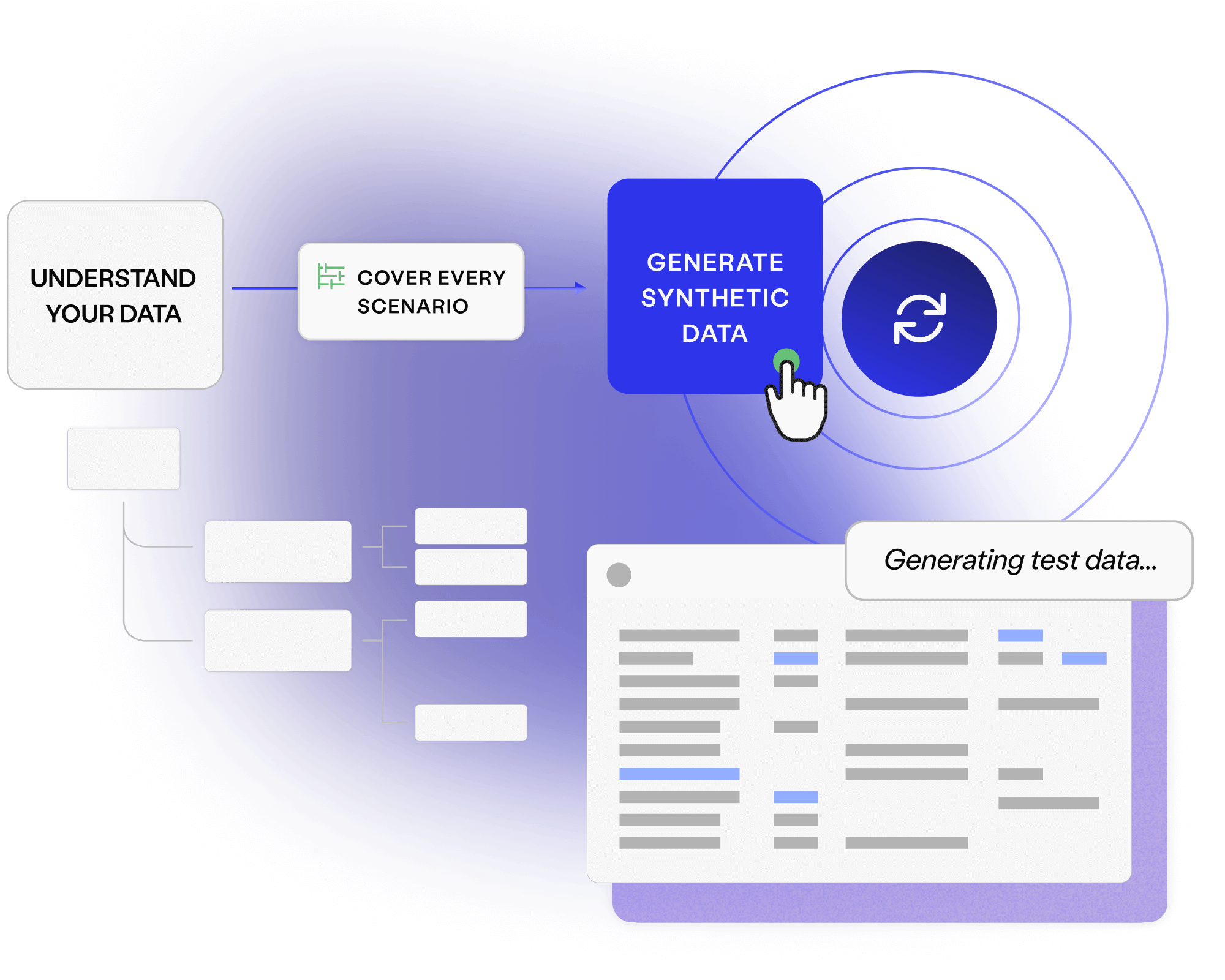

On-demand masking and generation replace the toil of finding, waiting for and making complex test data.

Generating data for every test scenario supports rigorous development and finds bugs while they remain low impact.

Uncover smarter test data with Curiosity’s all-in-one, AI-accelerated platform, which offers integrated, secure, and intuitive tools to simplify complex data landscapes and overcome test data management challenges.

In highly regulated industries, some of the world’s largest enterprises trust our platform to remove sensitive information.

Payment platforms and complex banking systems are perfect for model-based data generation. We can break their intricate logic down into intuitive visual models, applying algorithms that create the smallest message set needed to cover diverse combinations.

Program Manager, ISO 20022

Read a free resource, or talk to an expert, to learn how you can avoid fines, increase productivity and embed quality across your whole software delivery ecosystem.

Discover how you can deliver complete and compliant data on demand across the enterprise.

Read more about Enterprise Test Data brochure Download your copy

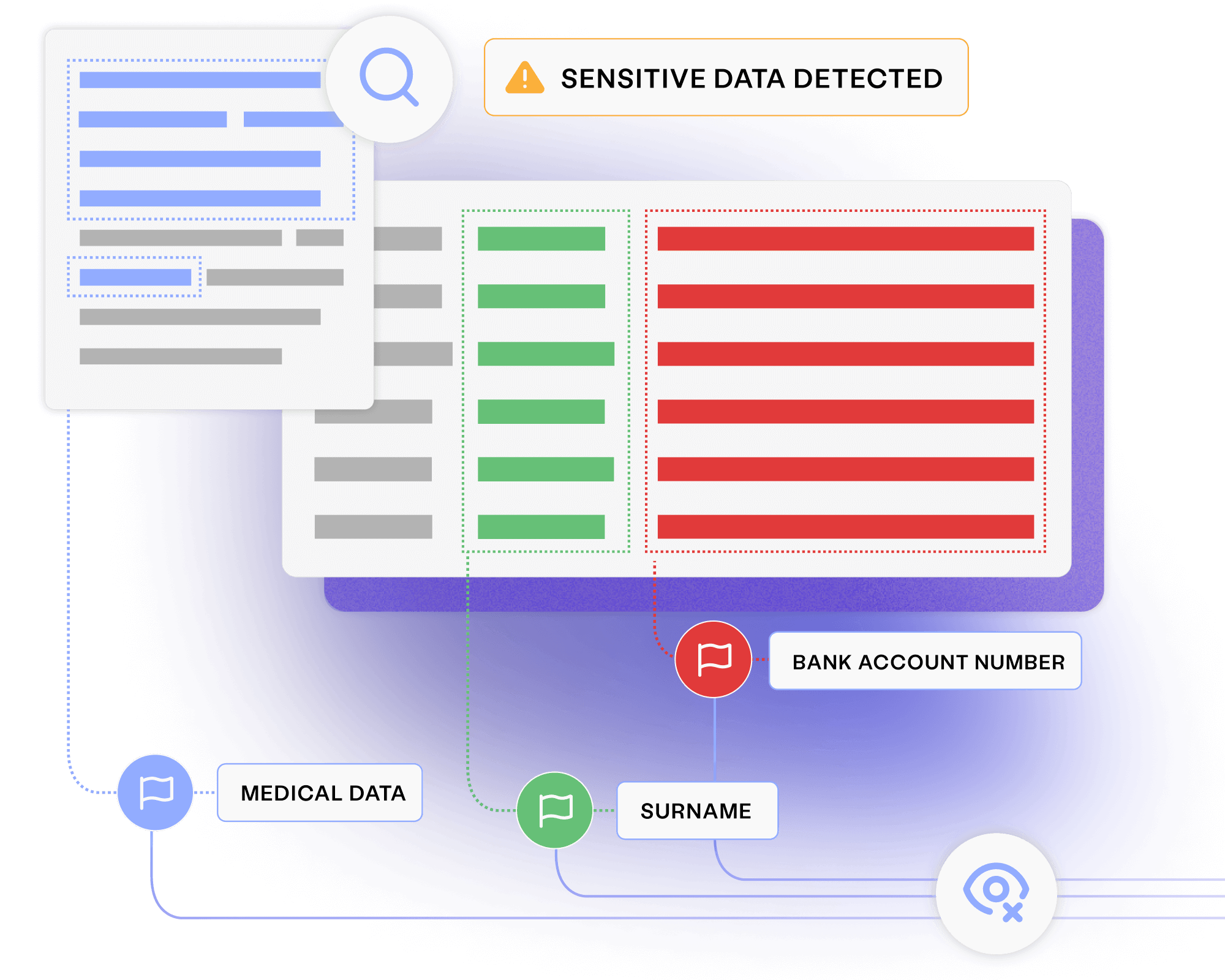

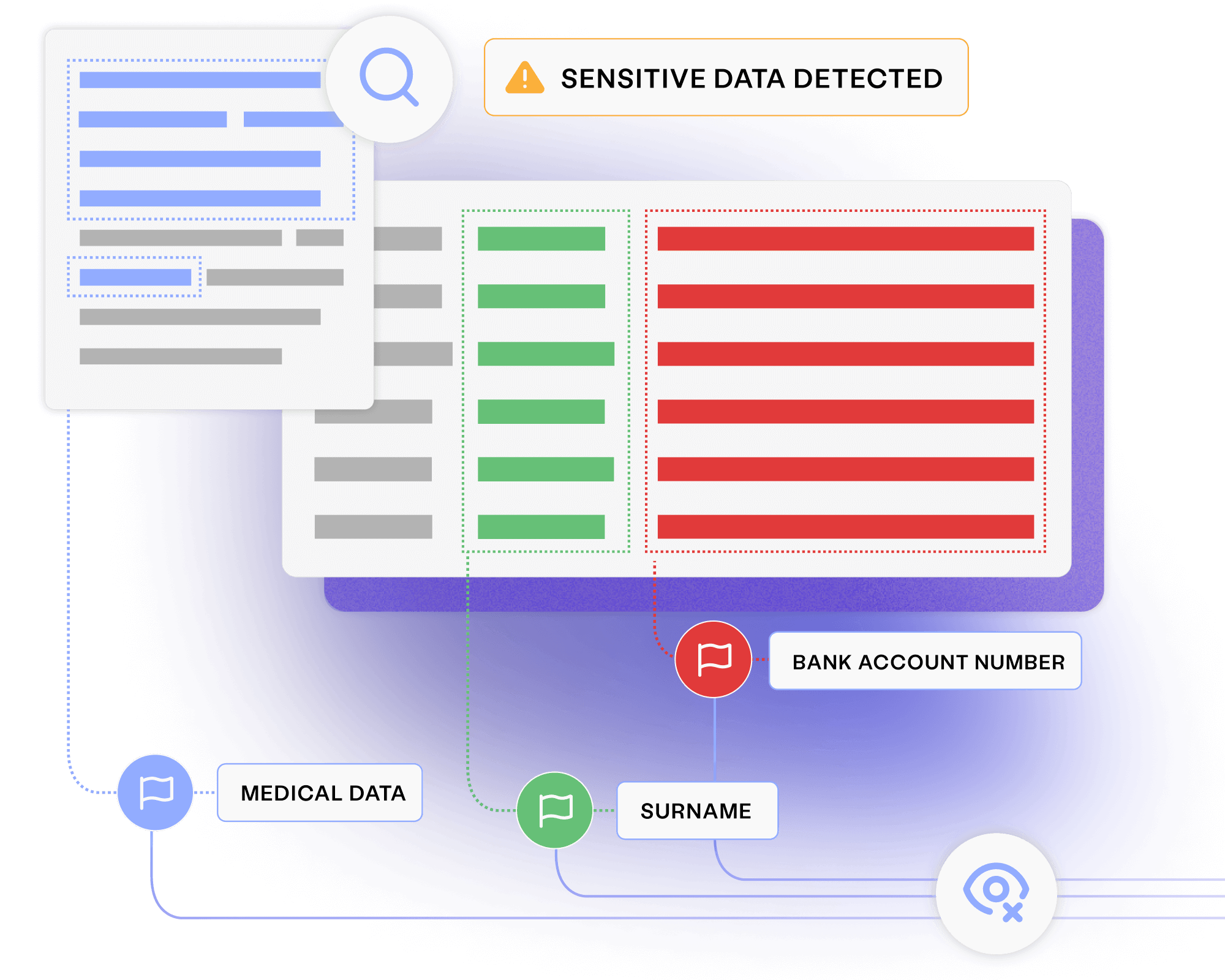

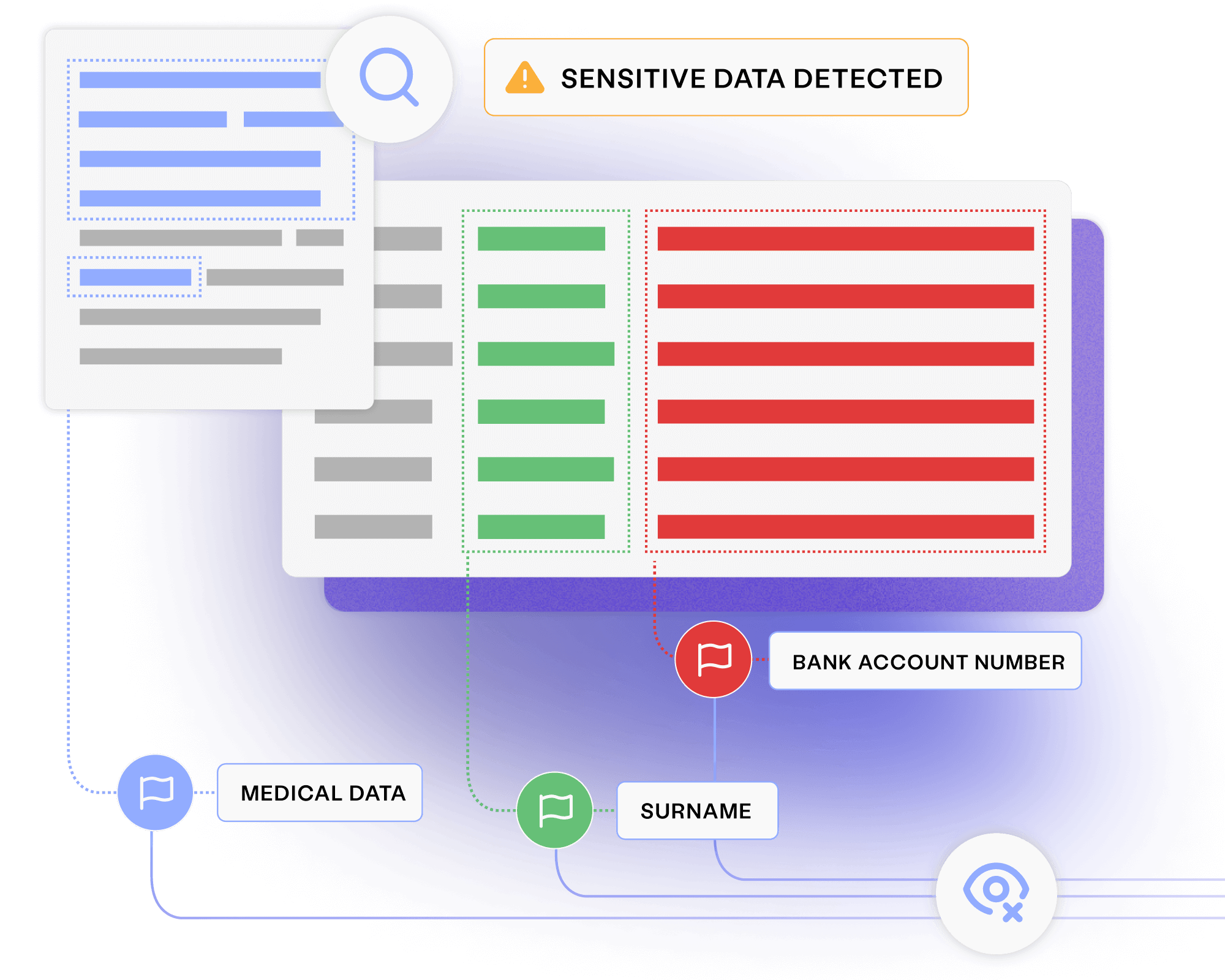

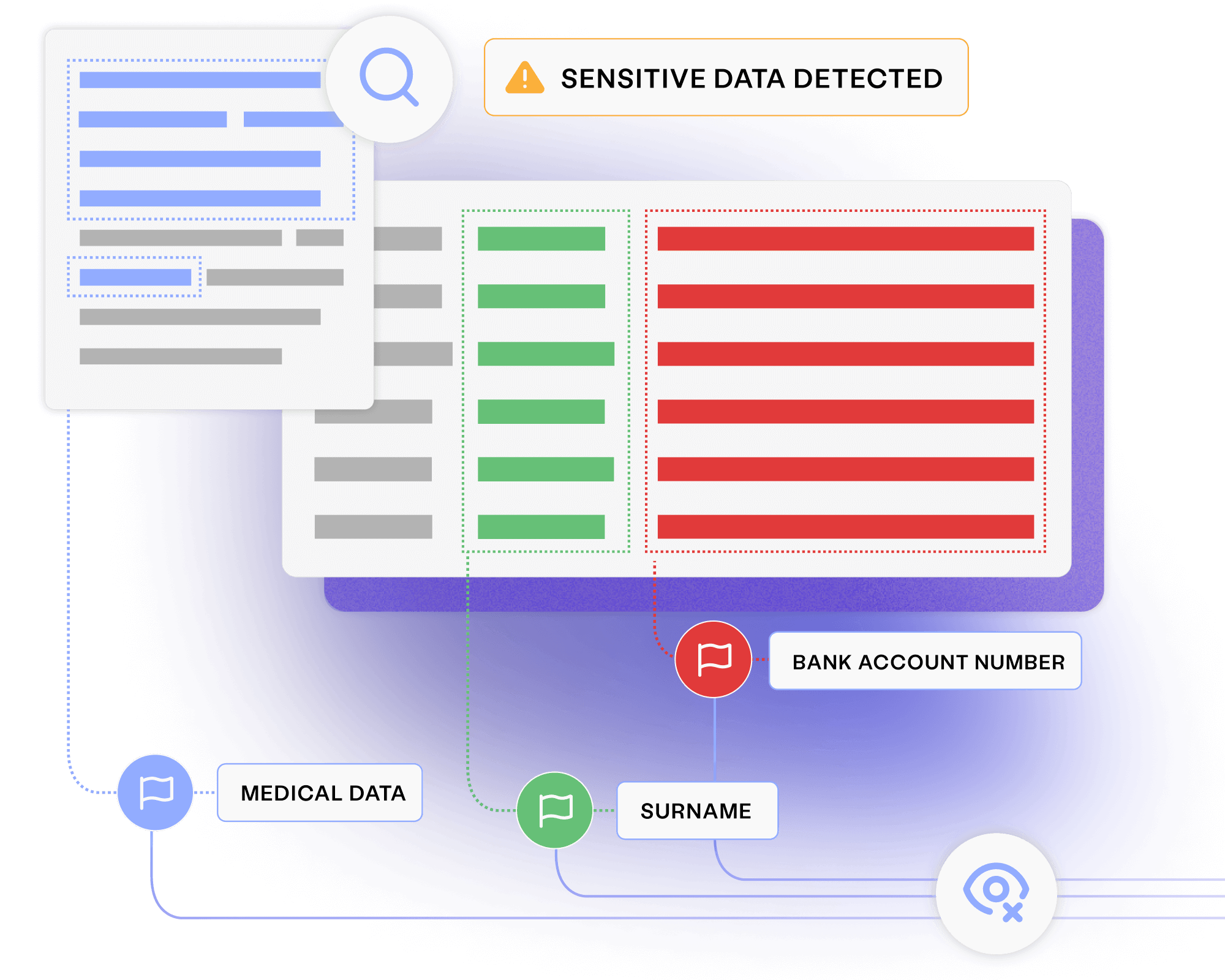

Discover how profiling, masking and generation reduce the risk of data breaches and costly fines.

Privacy and compliance data sheet

Talk to us to learn how masking and generation can enhance your software delivery and data privacy.

Meet with a Curiosity expert