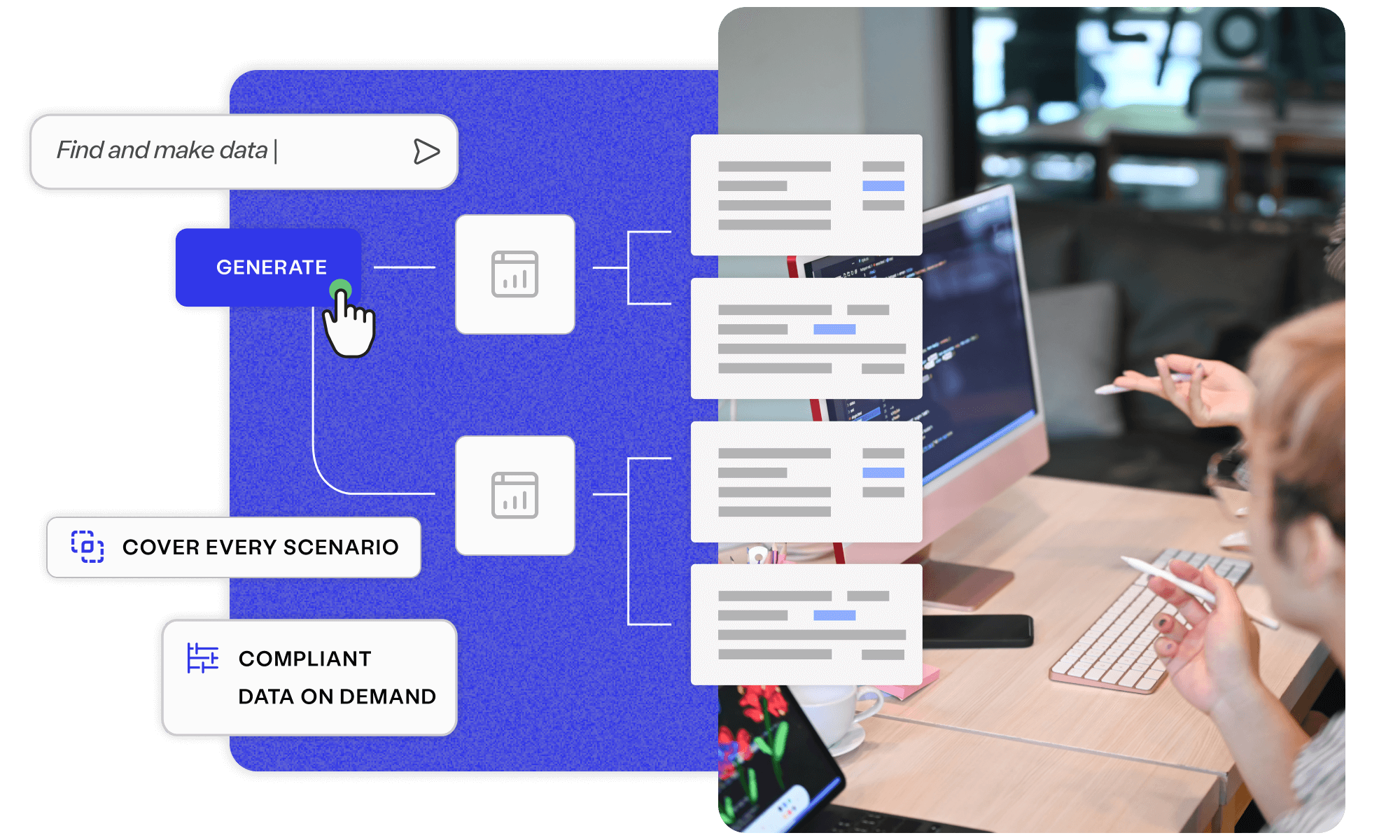

Discover Enterprise Test Data®

Simplify your complex data landscape and provide confidence and clarity at every step of your data...

Learn more about Discover Enterprise Test Data®.

AI-powered. End-to-end. Your complete test data management platform.

Explore Curiosity's collection of webinars, podcasts, blogs and success stories, covering everything from visual modelling to artificial intelligence and test data management.

Deliver superior test data and overcome the challenges of complexity, legacy, scale, and regulation with Curiosity Software.

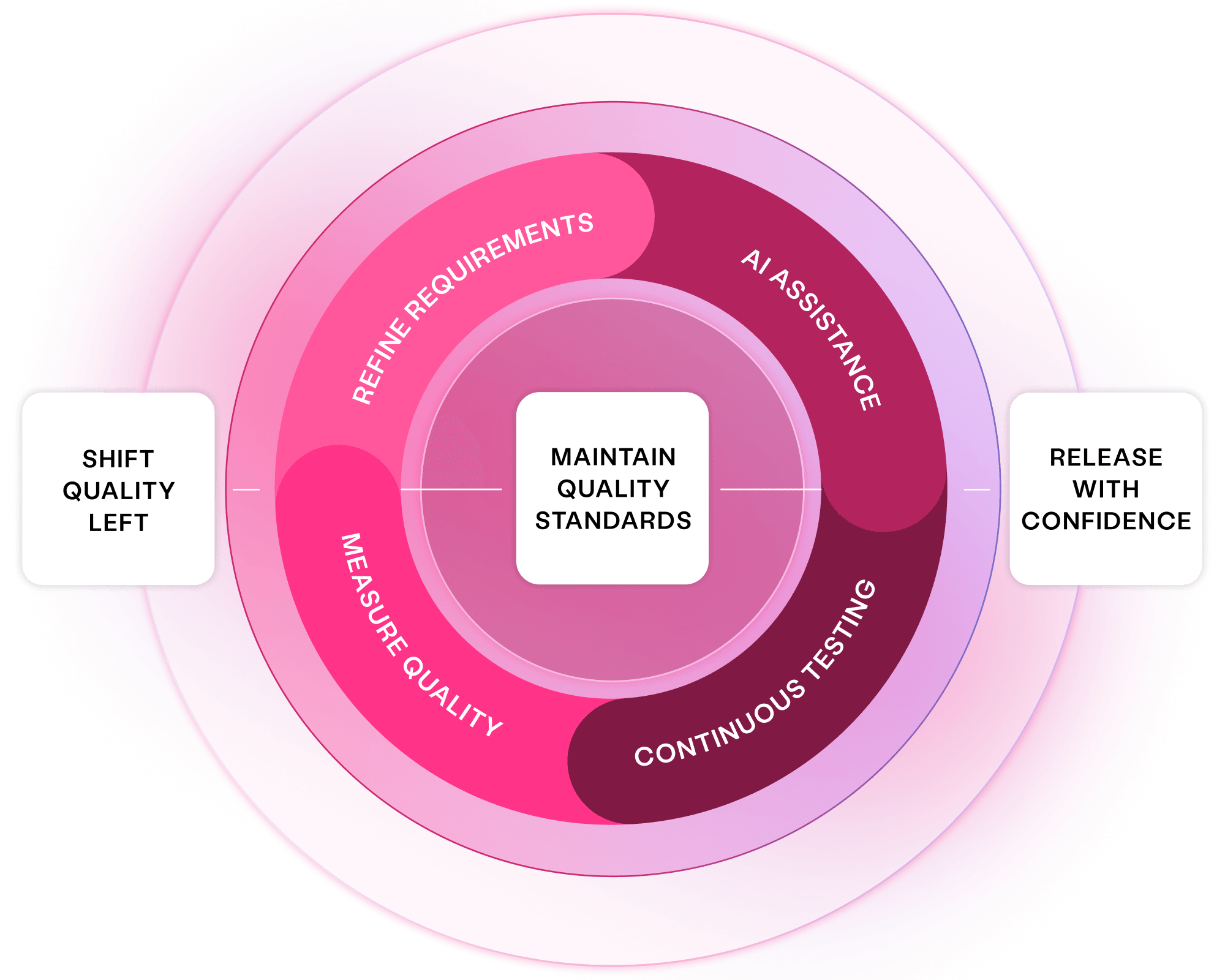

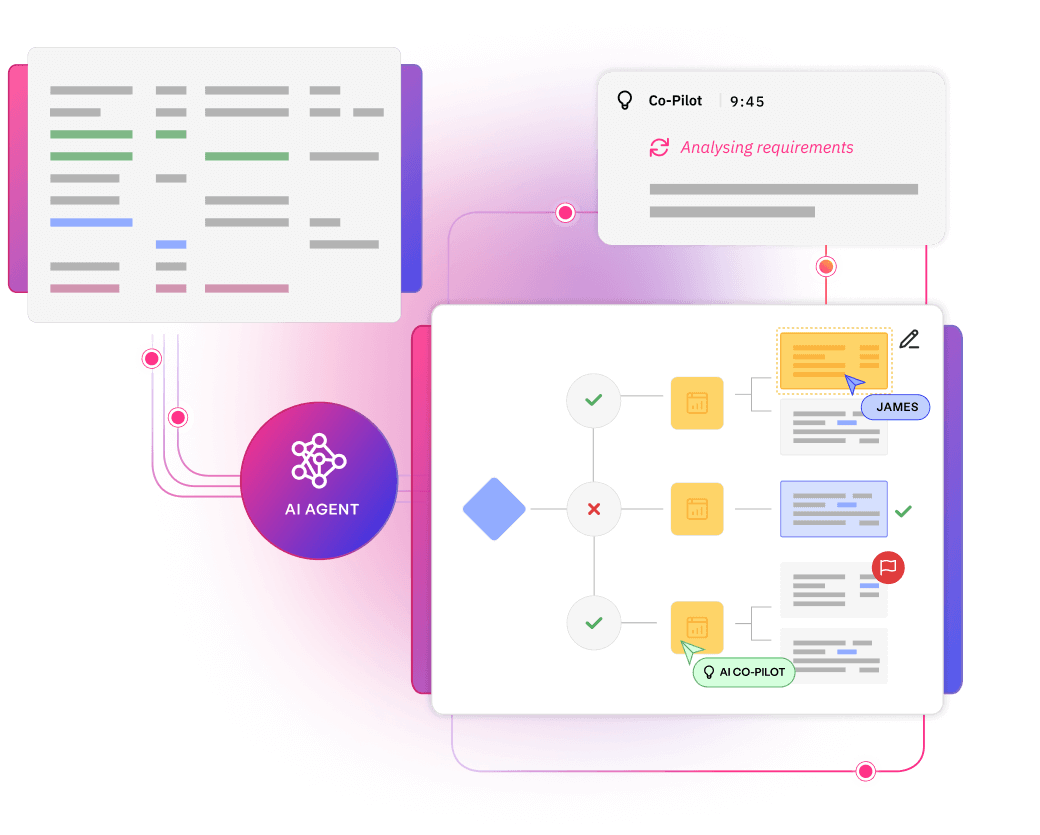

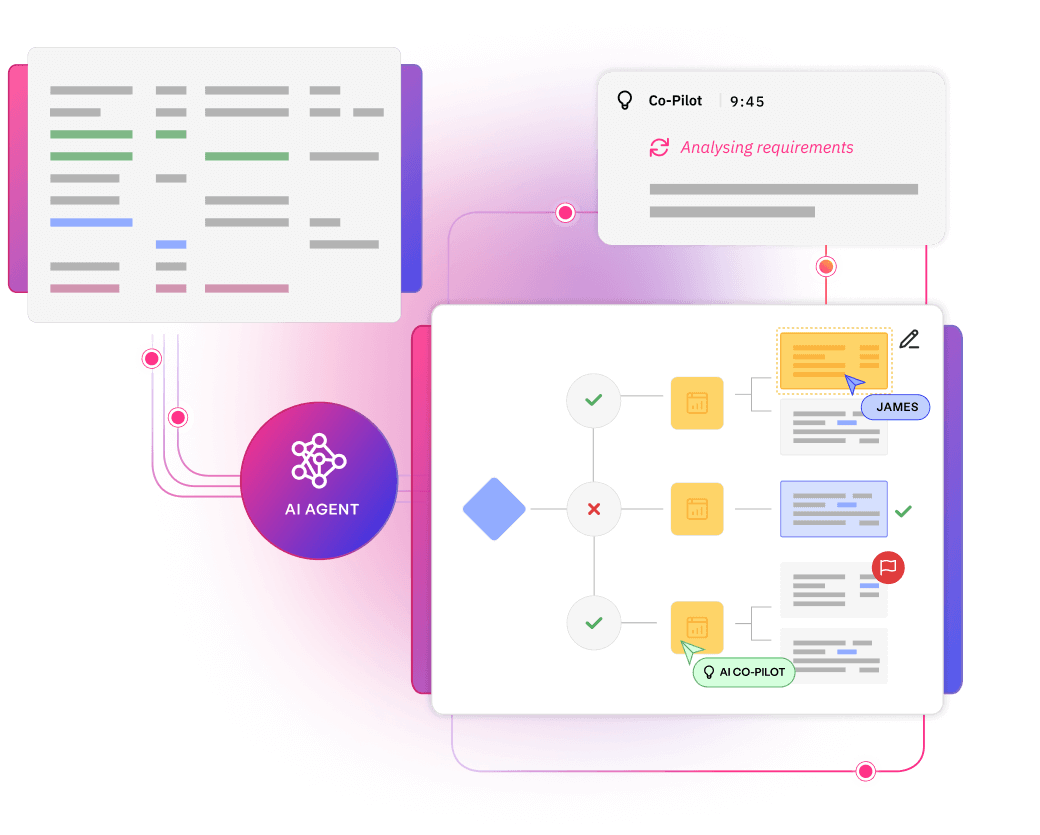

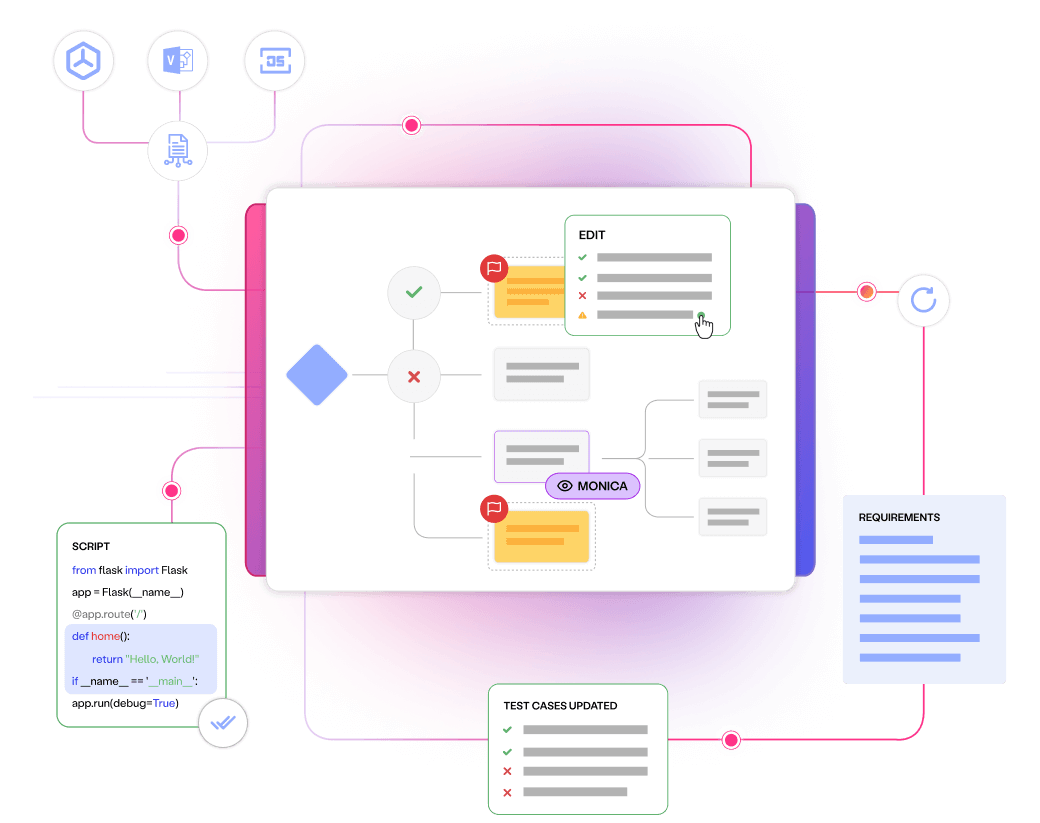

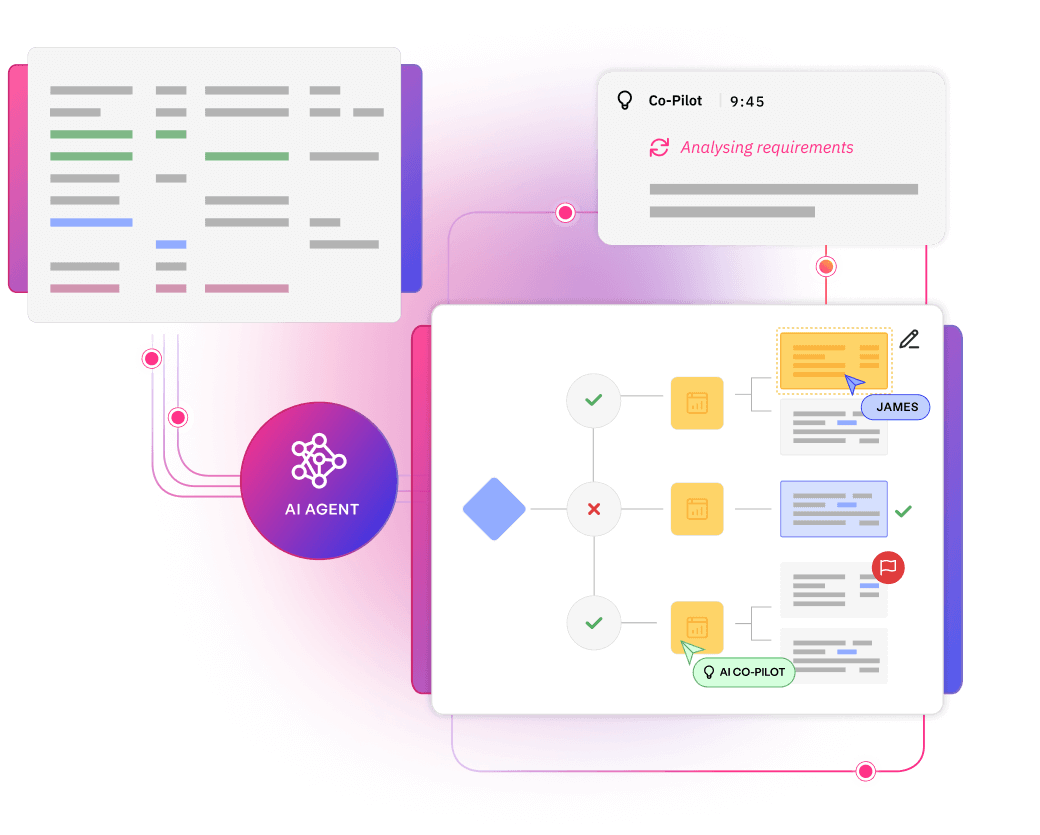

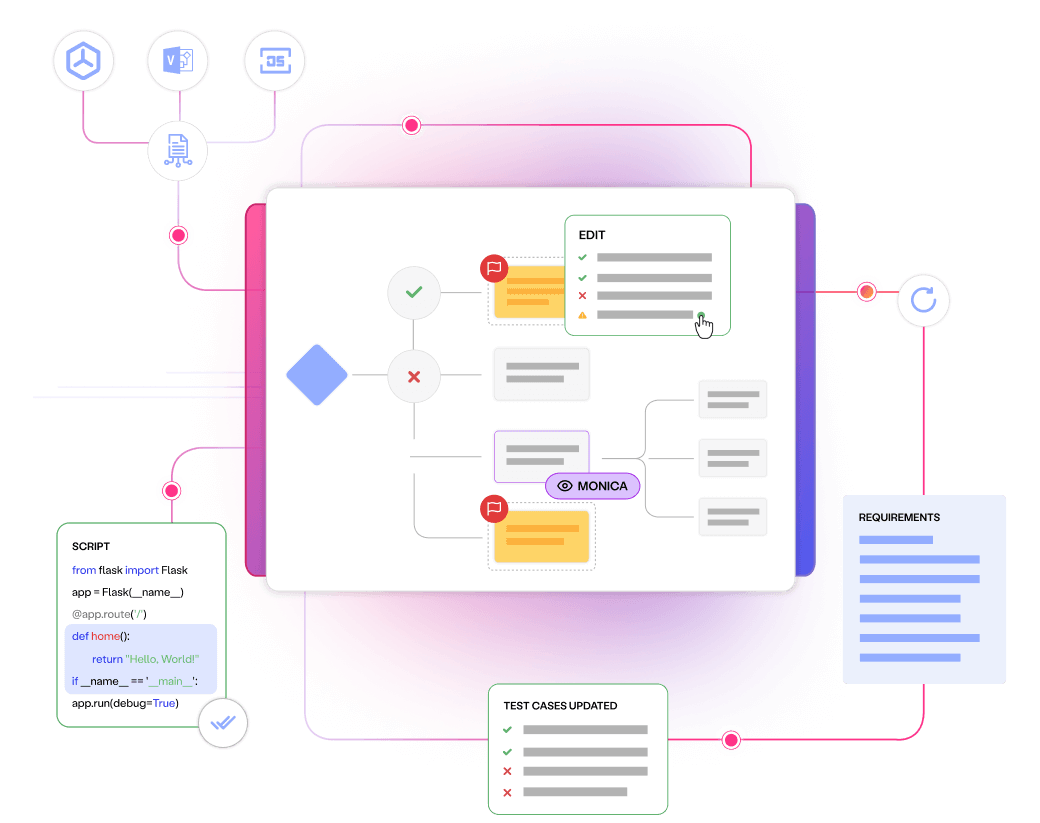

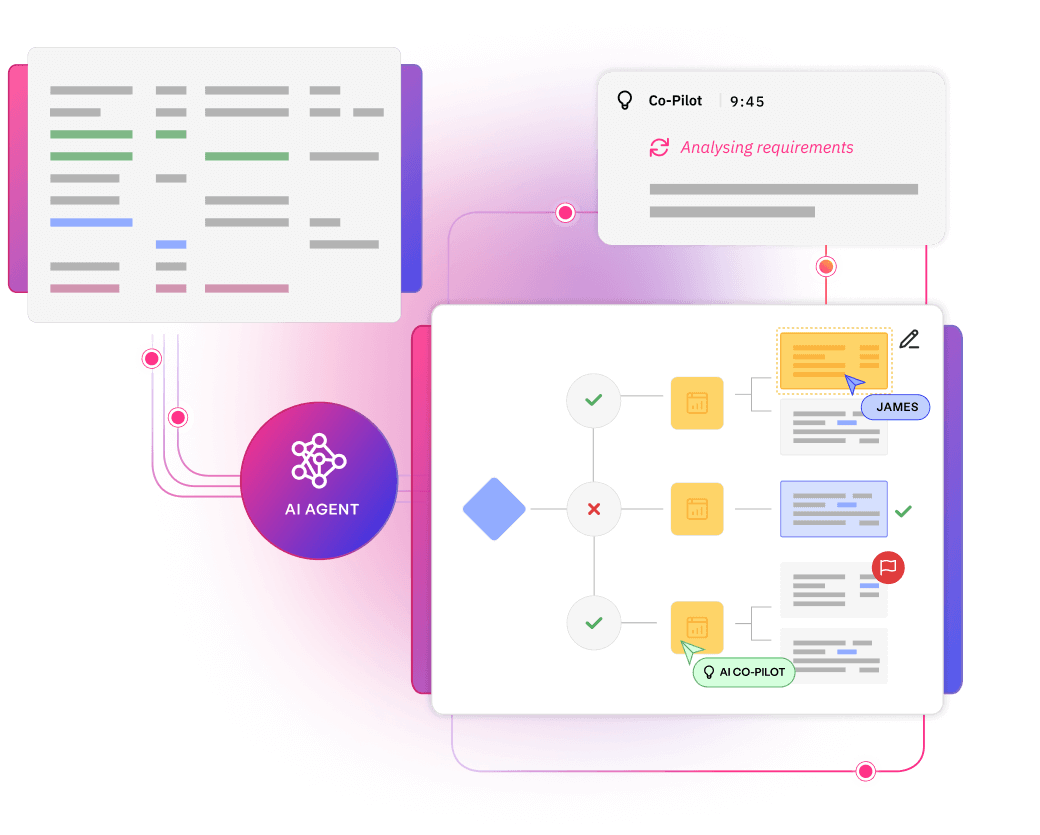

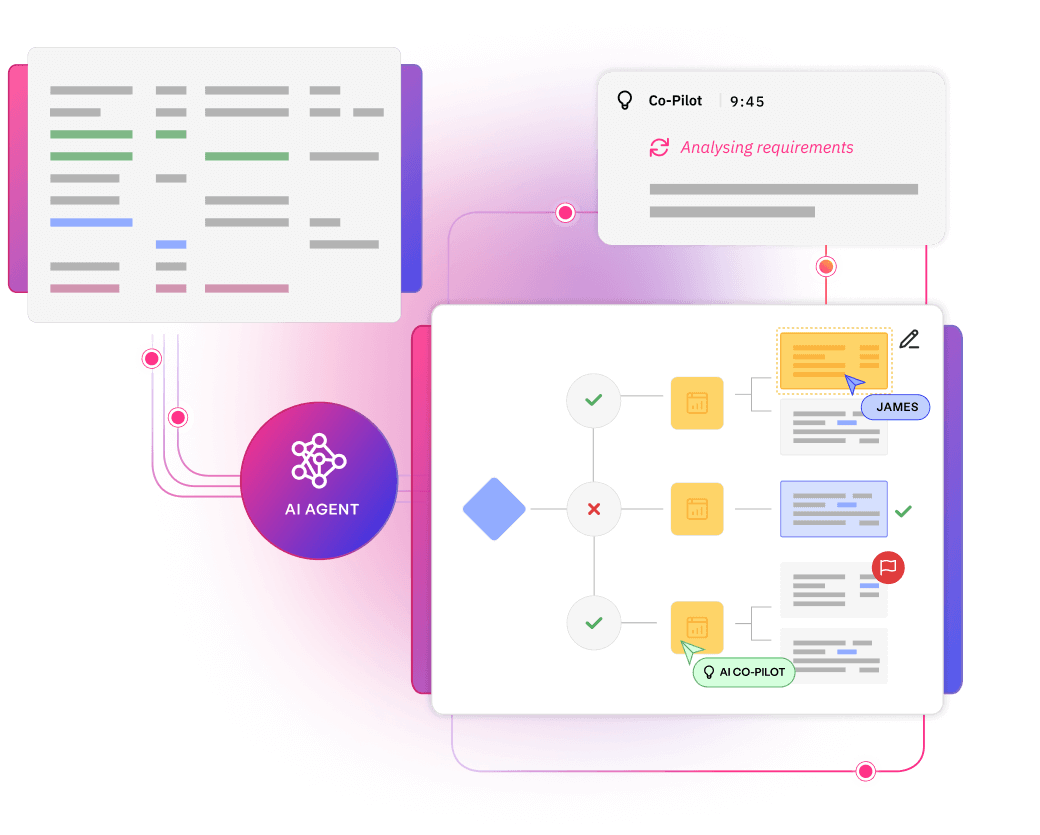

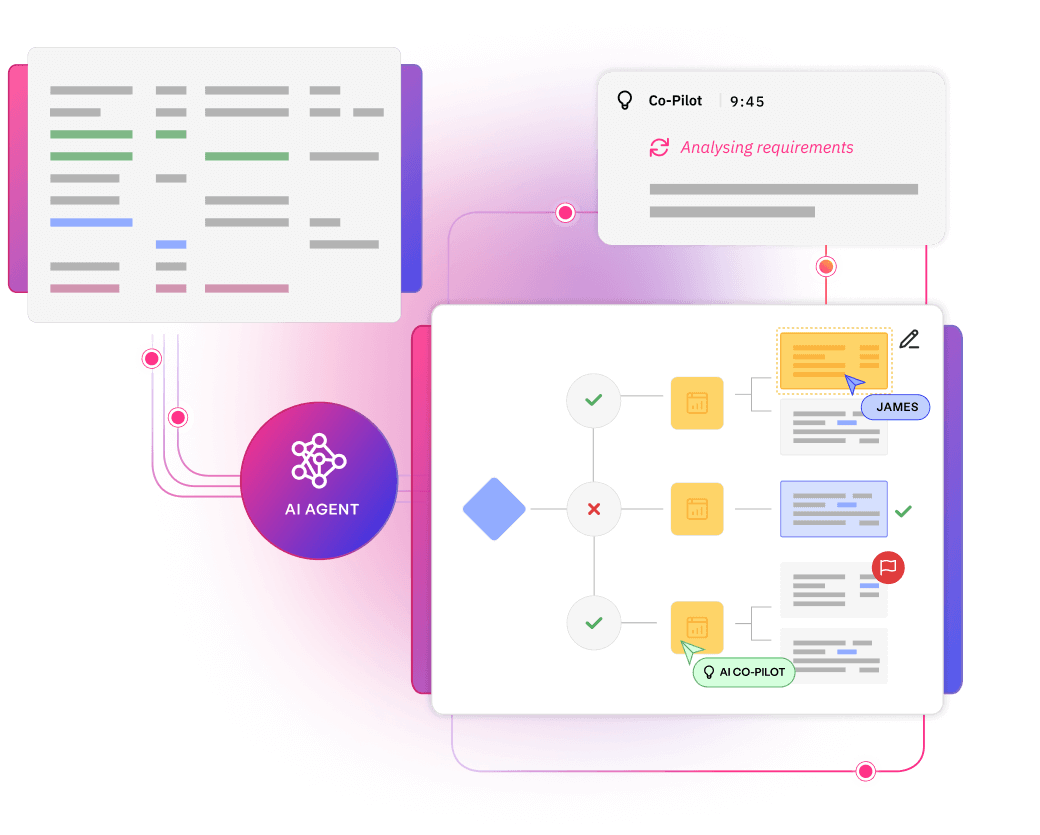

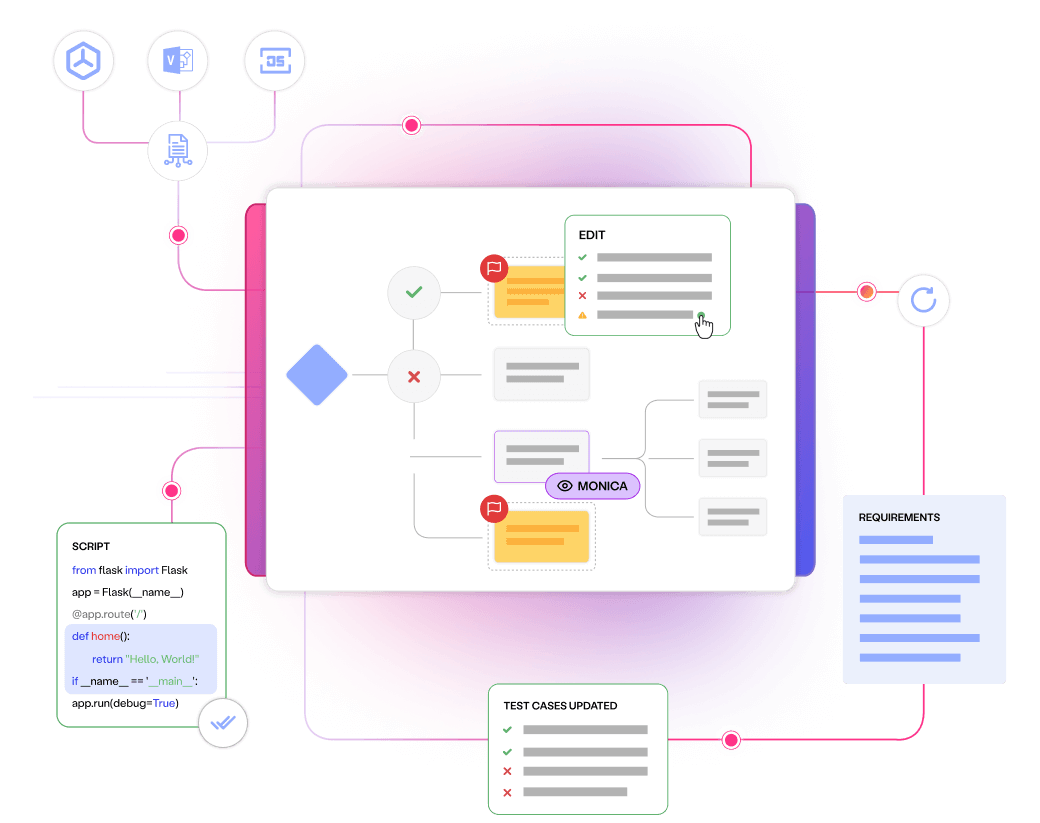

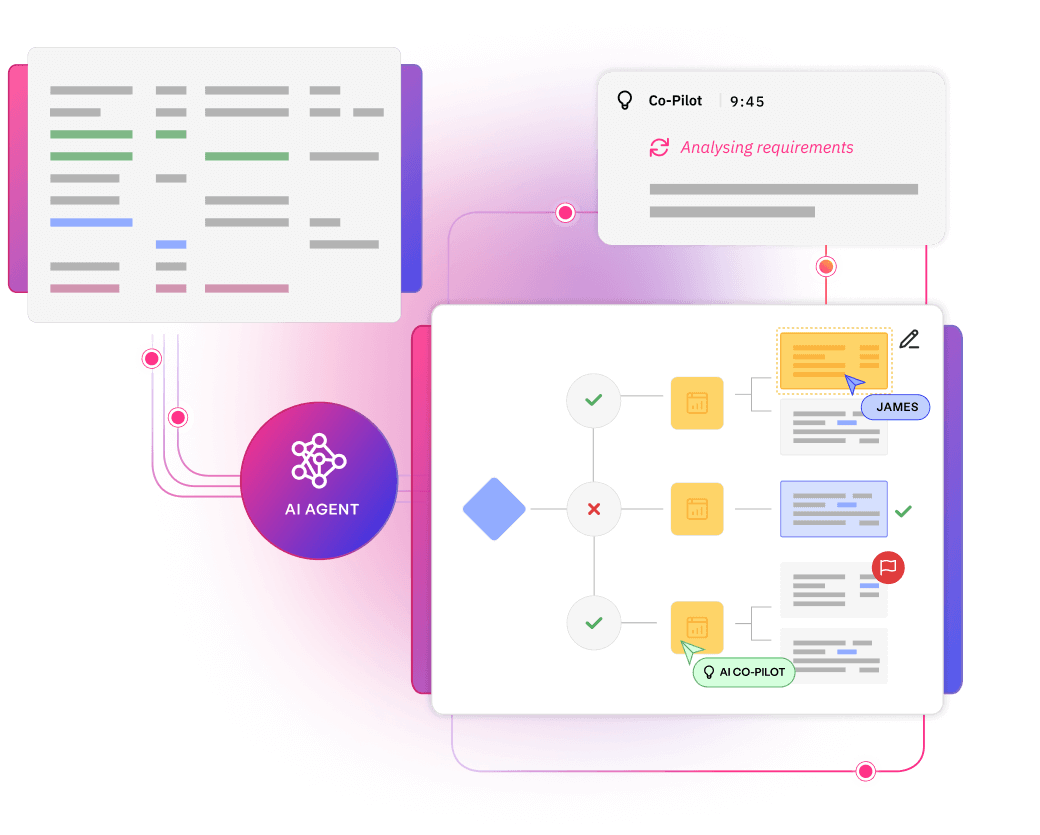

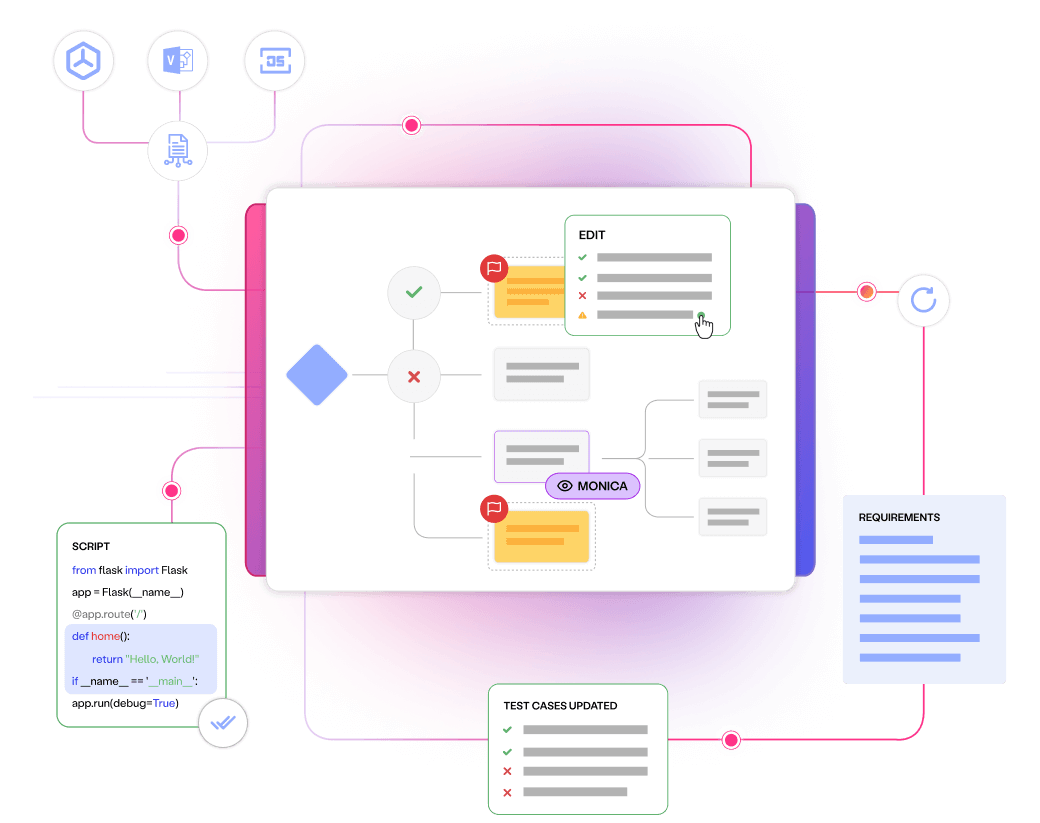

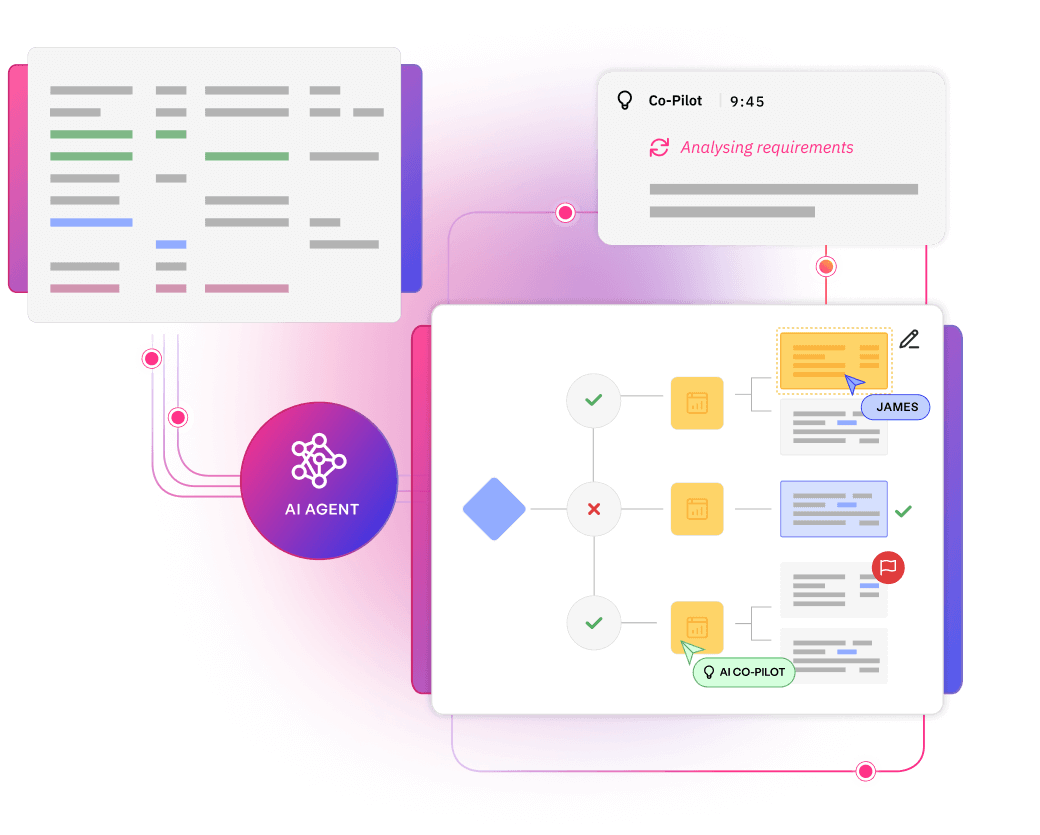

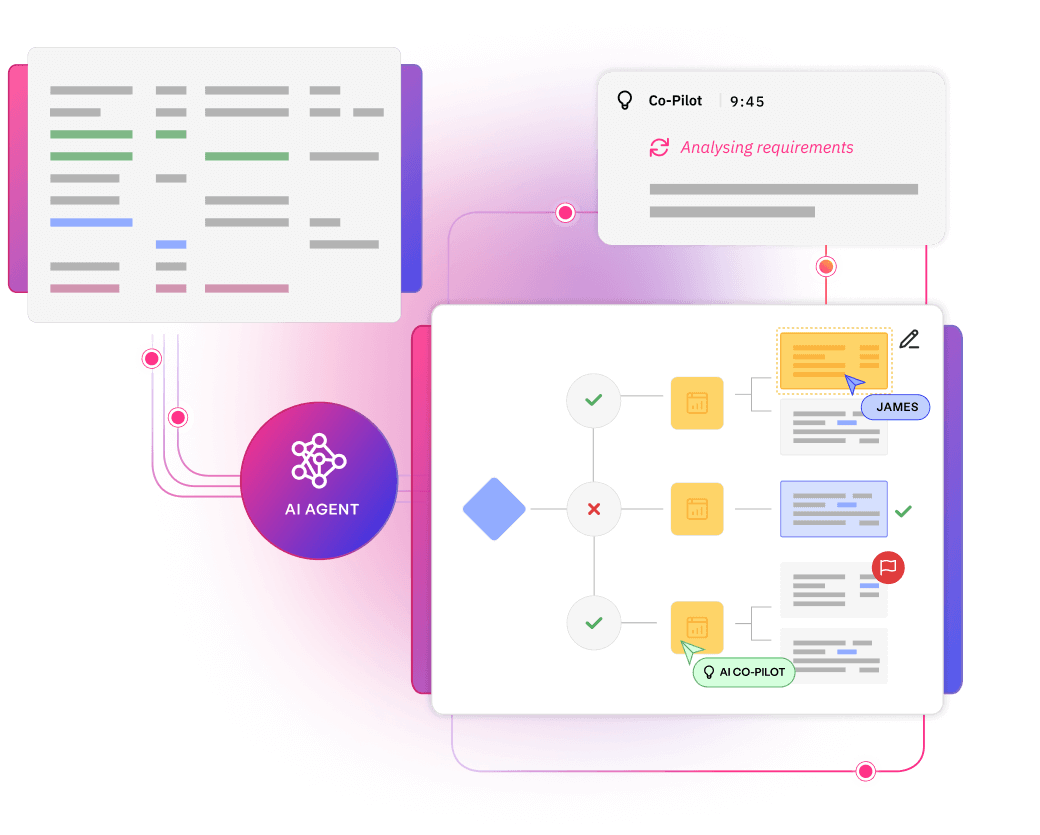

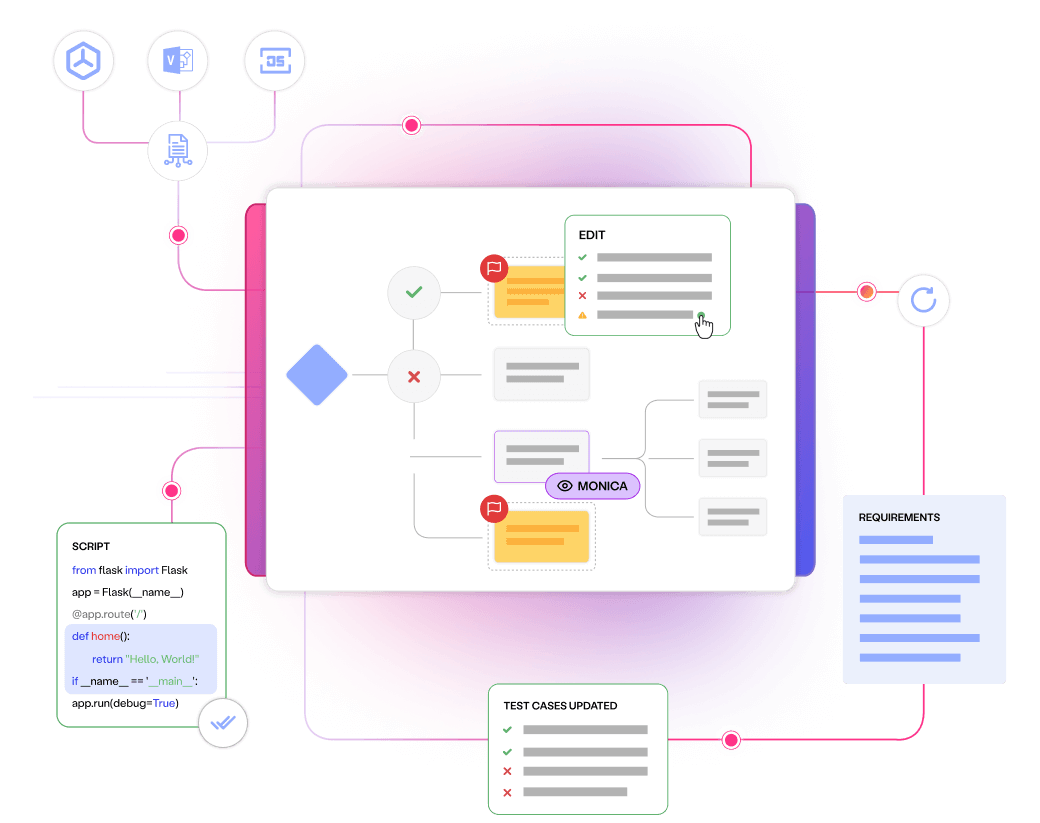

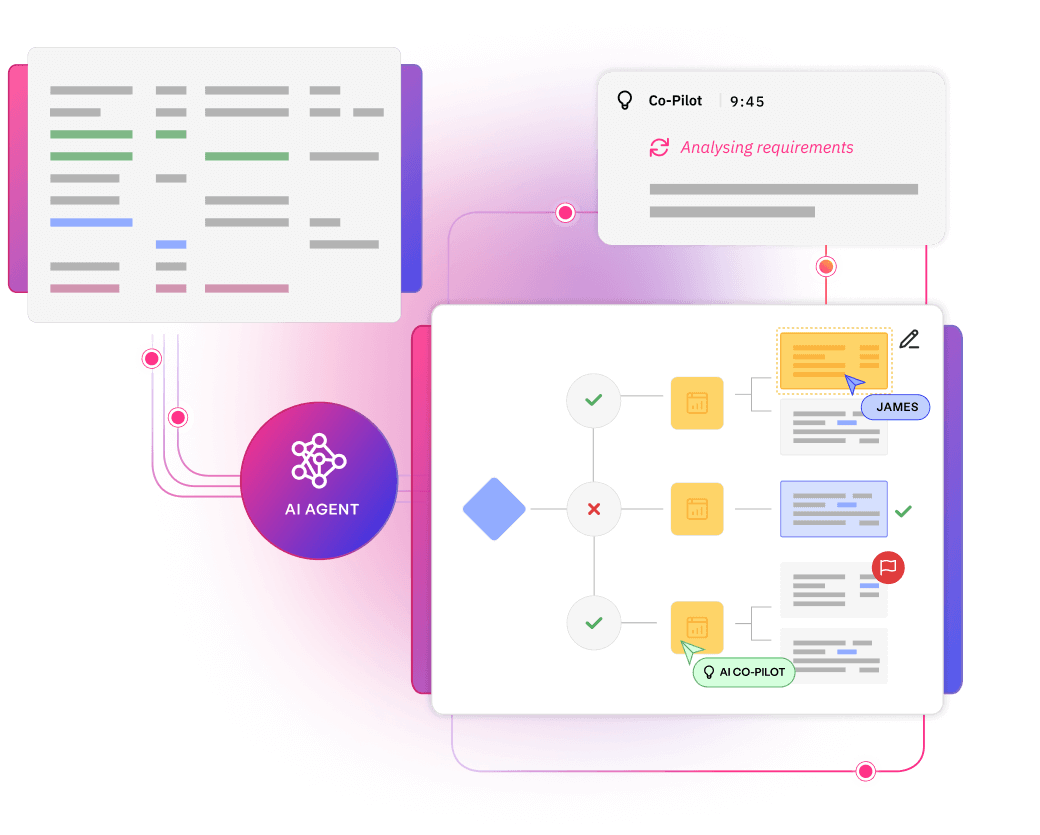

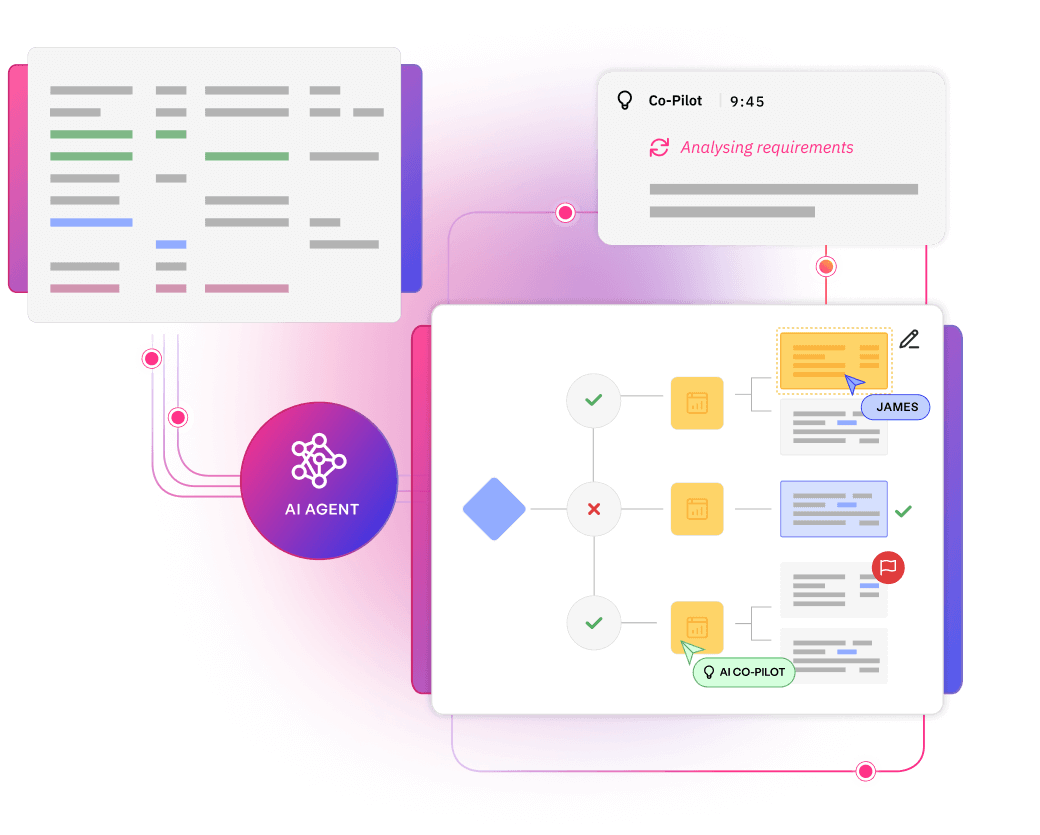

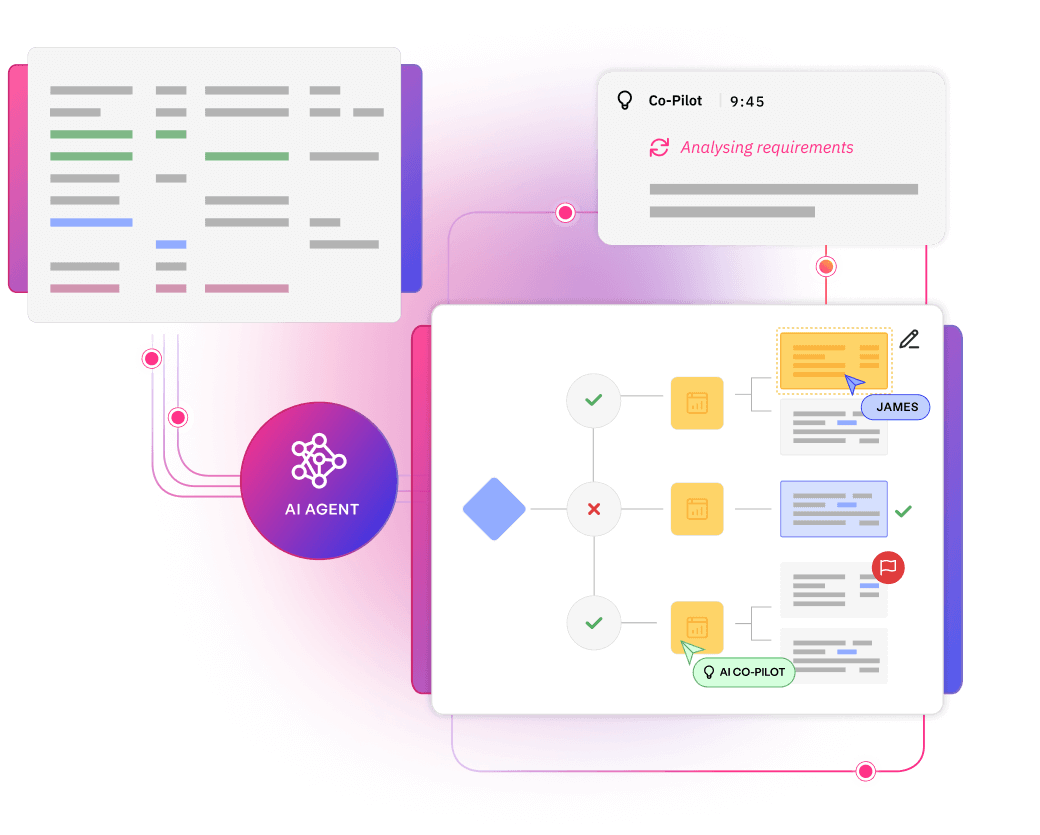

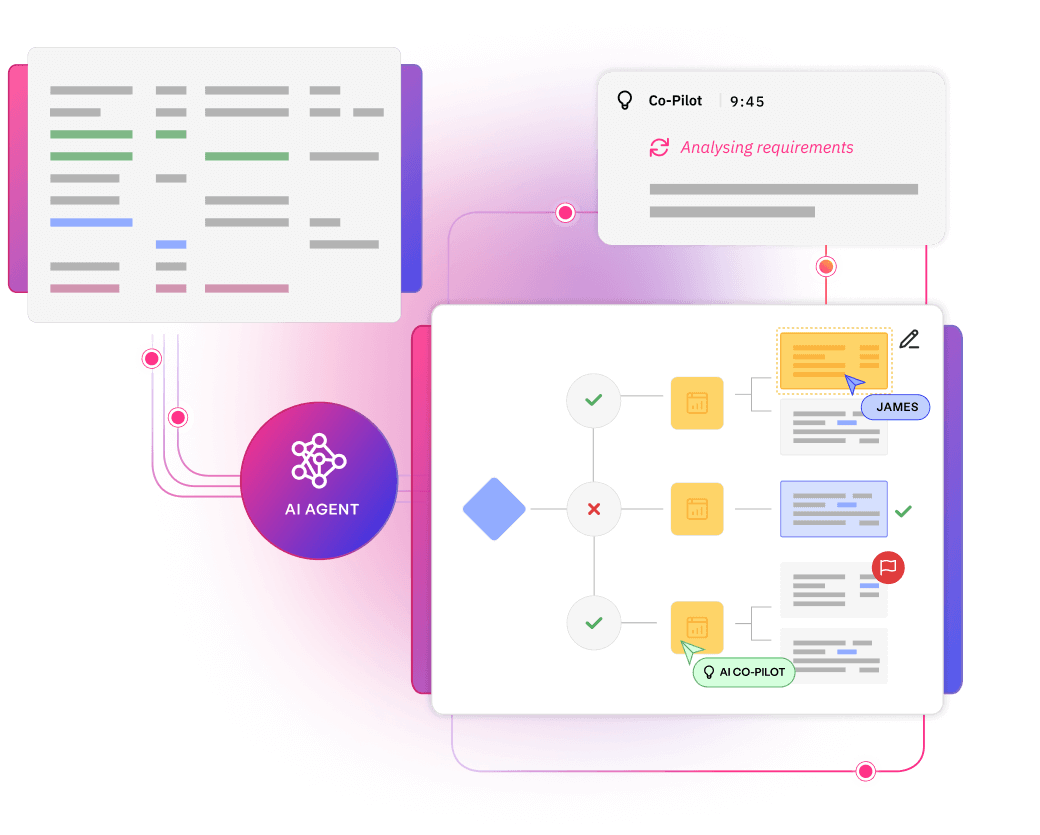

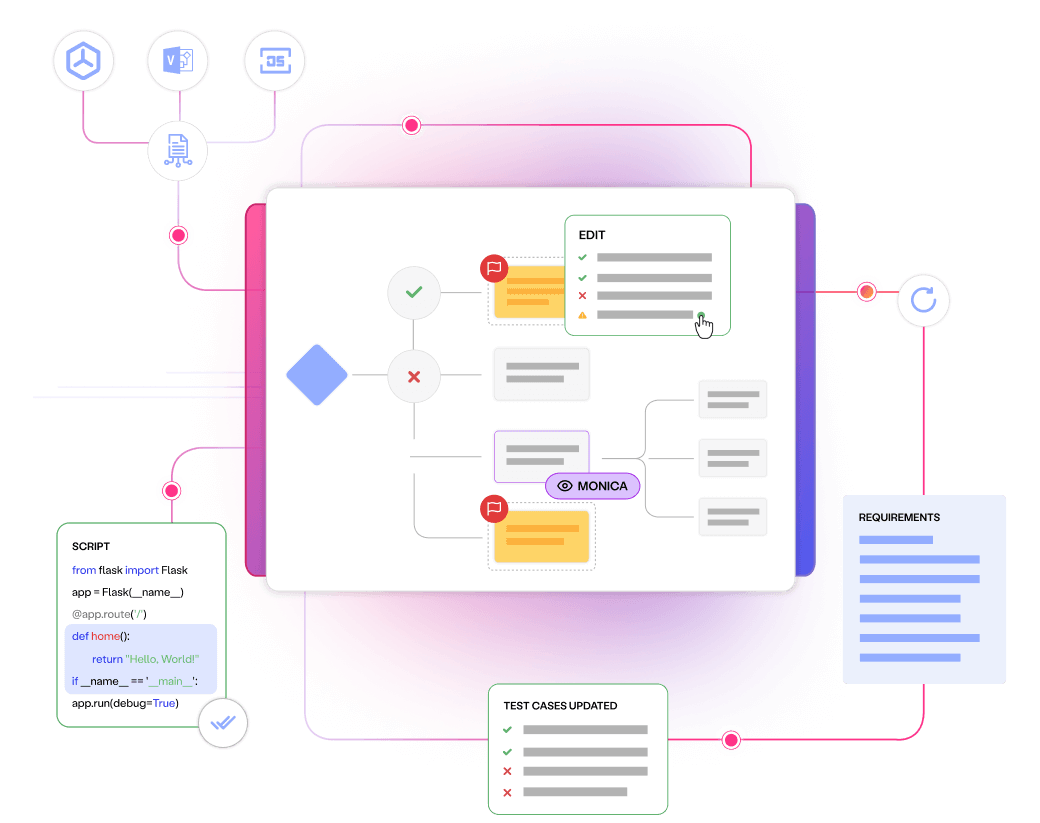

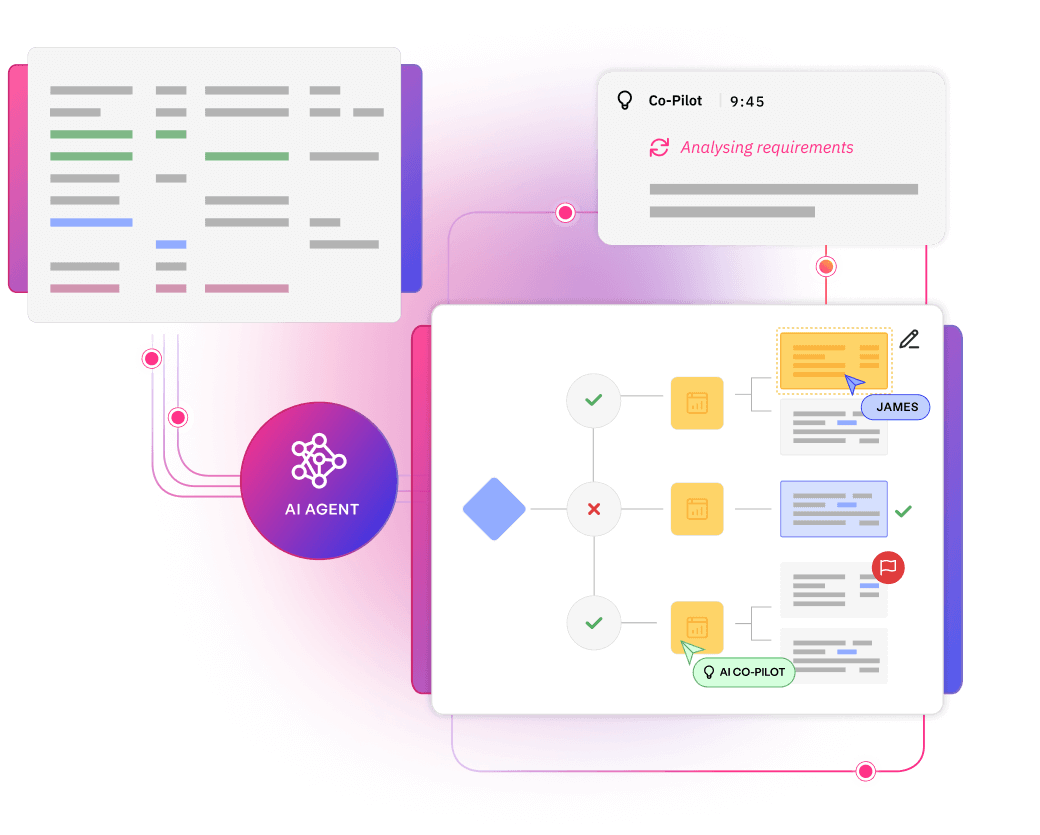

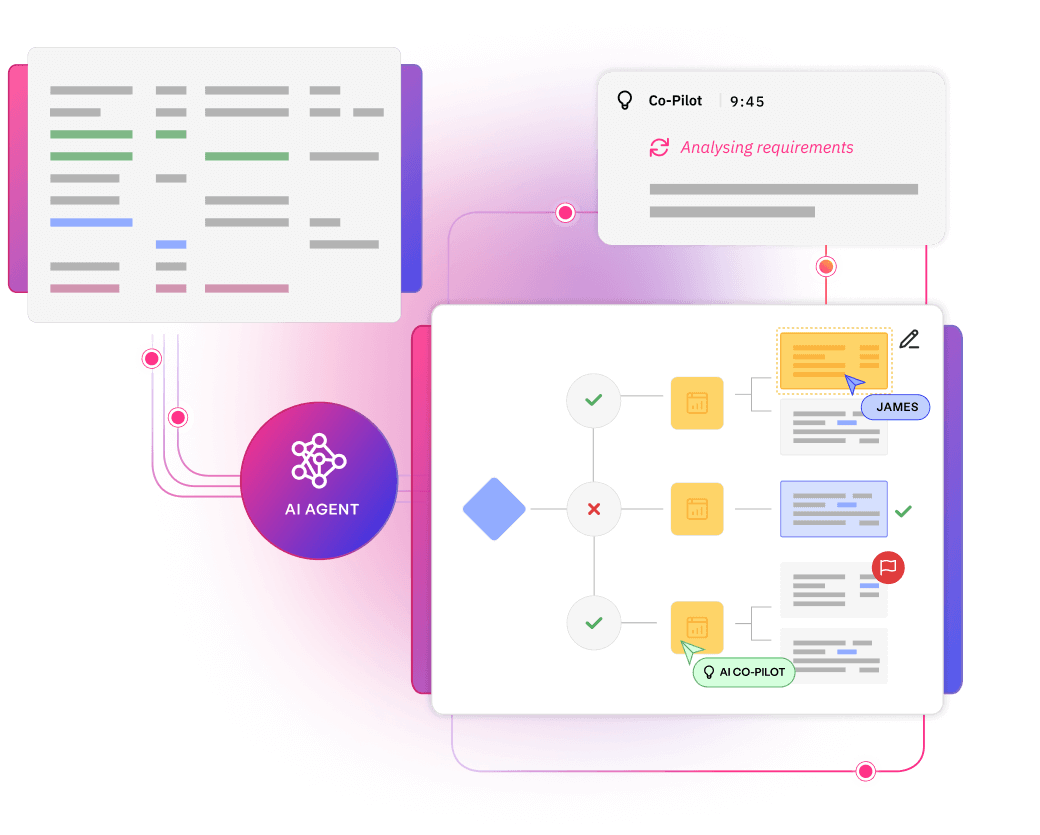

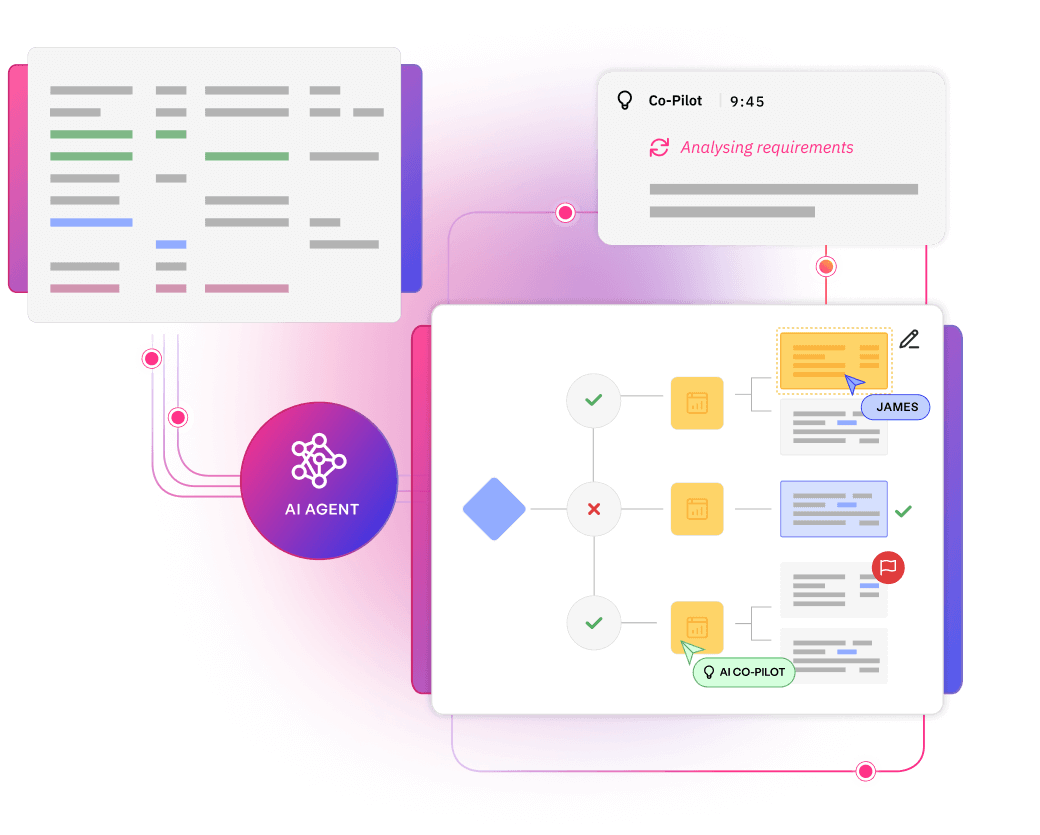

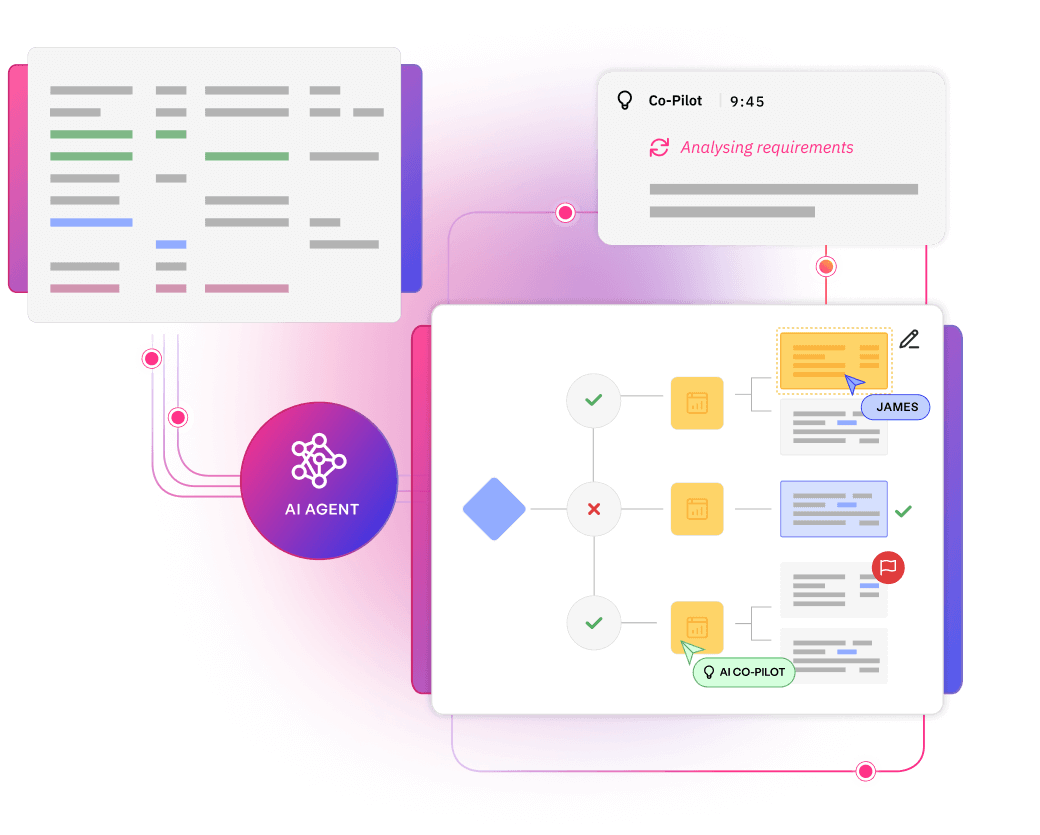

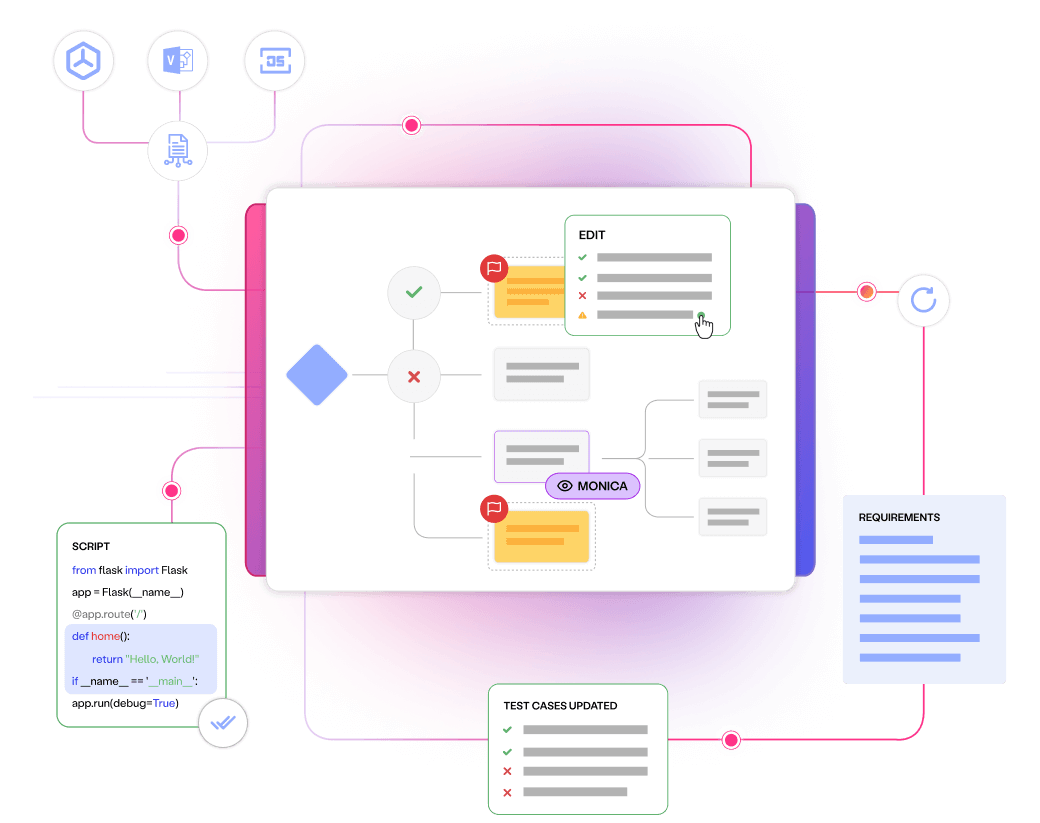

Align every stakeholder, requirement and test to measurable quality outcomes.

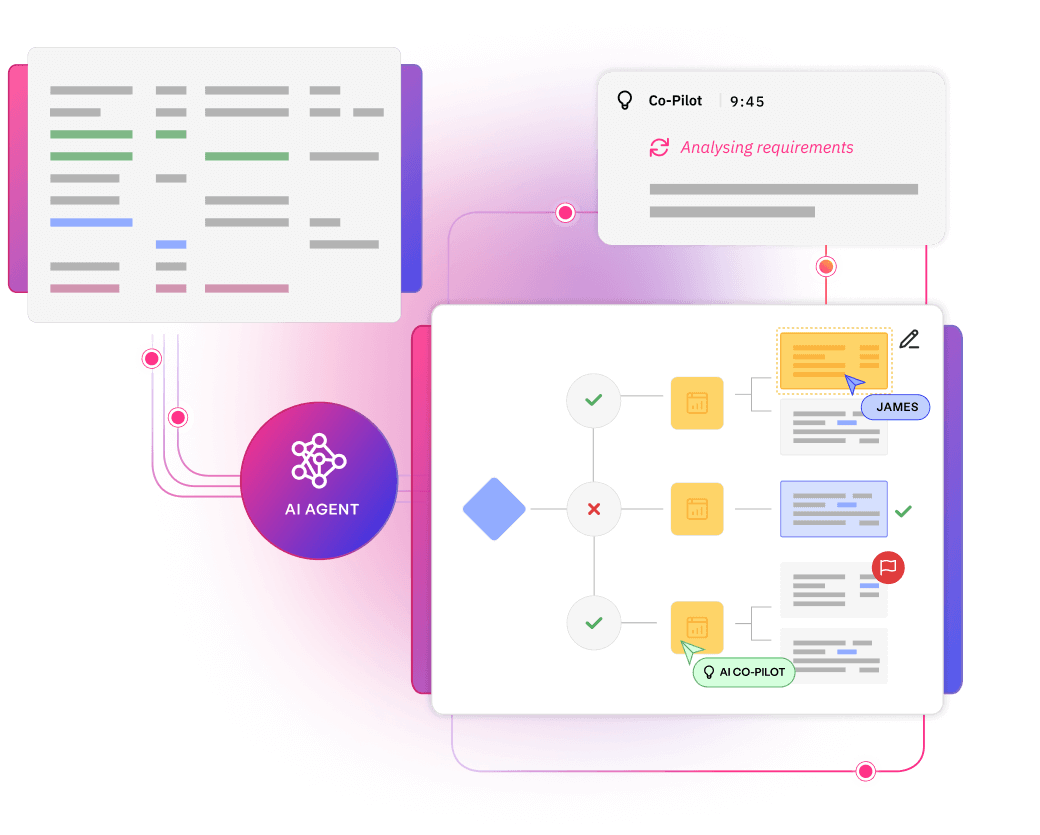

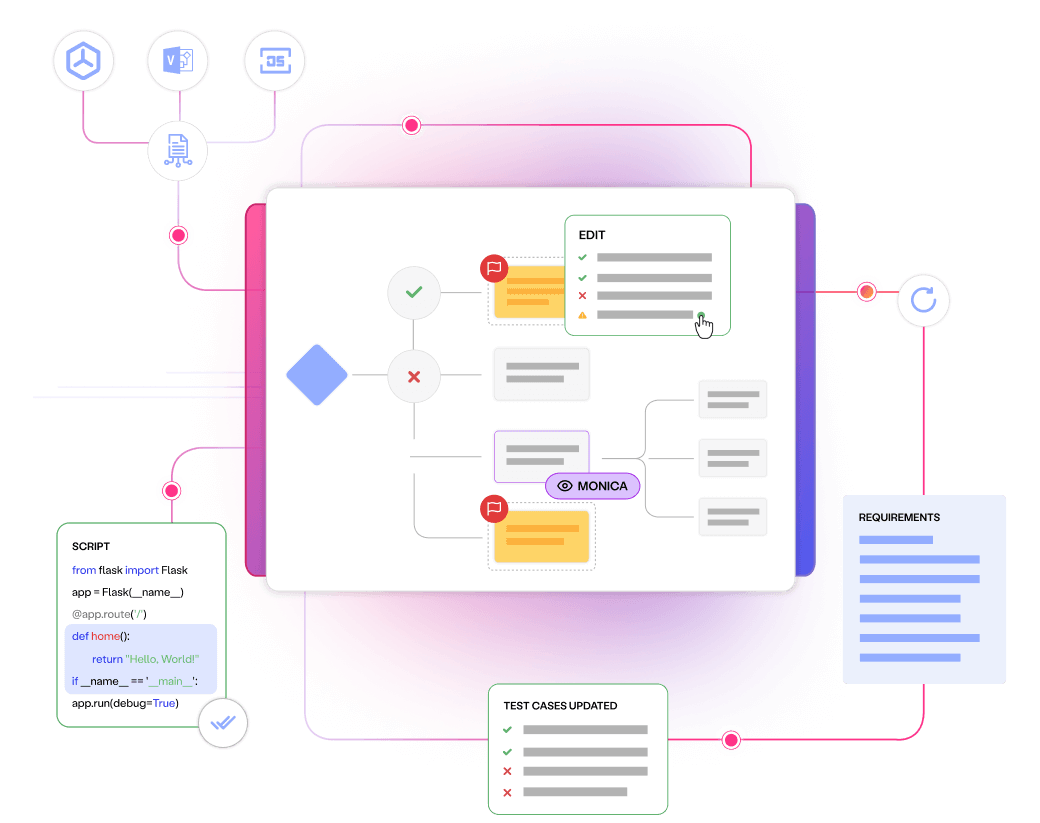

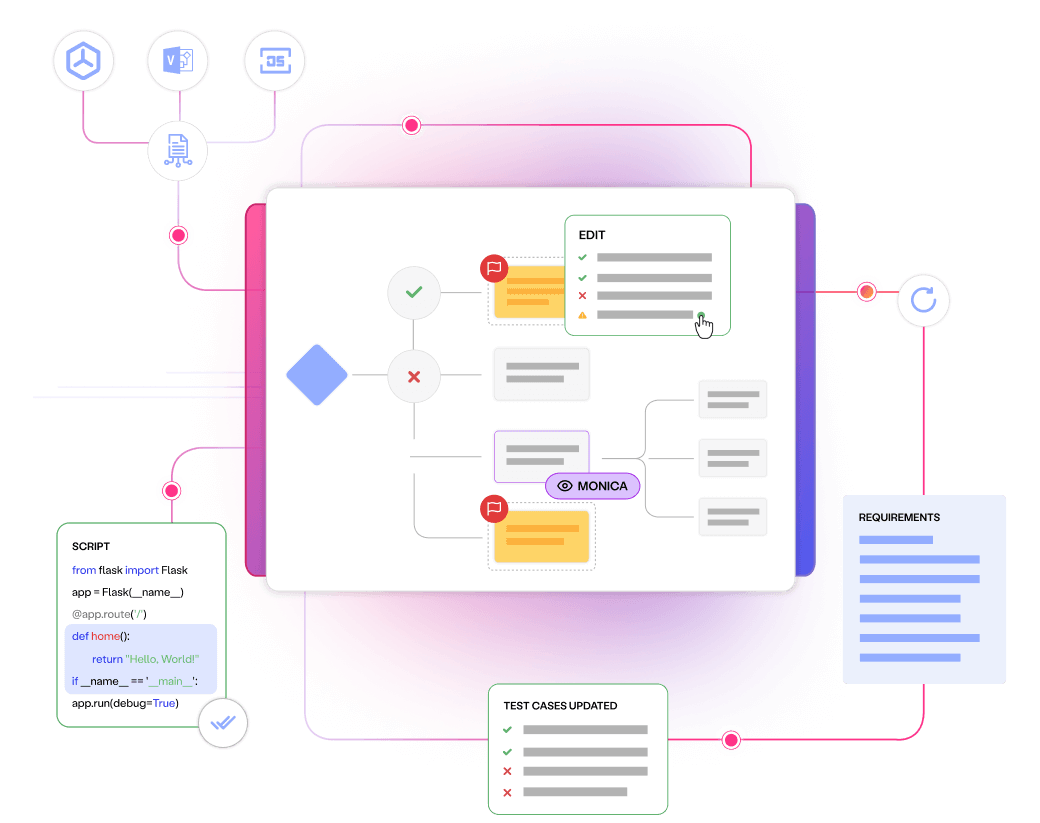

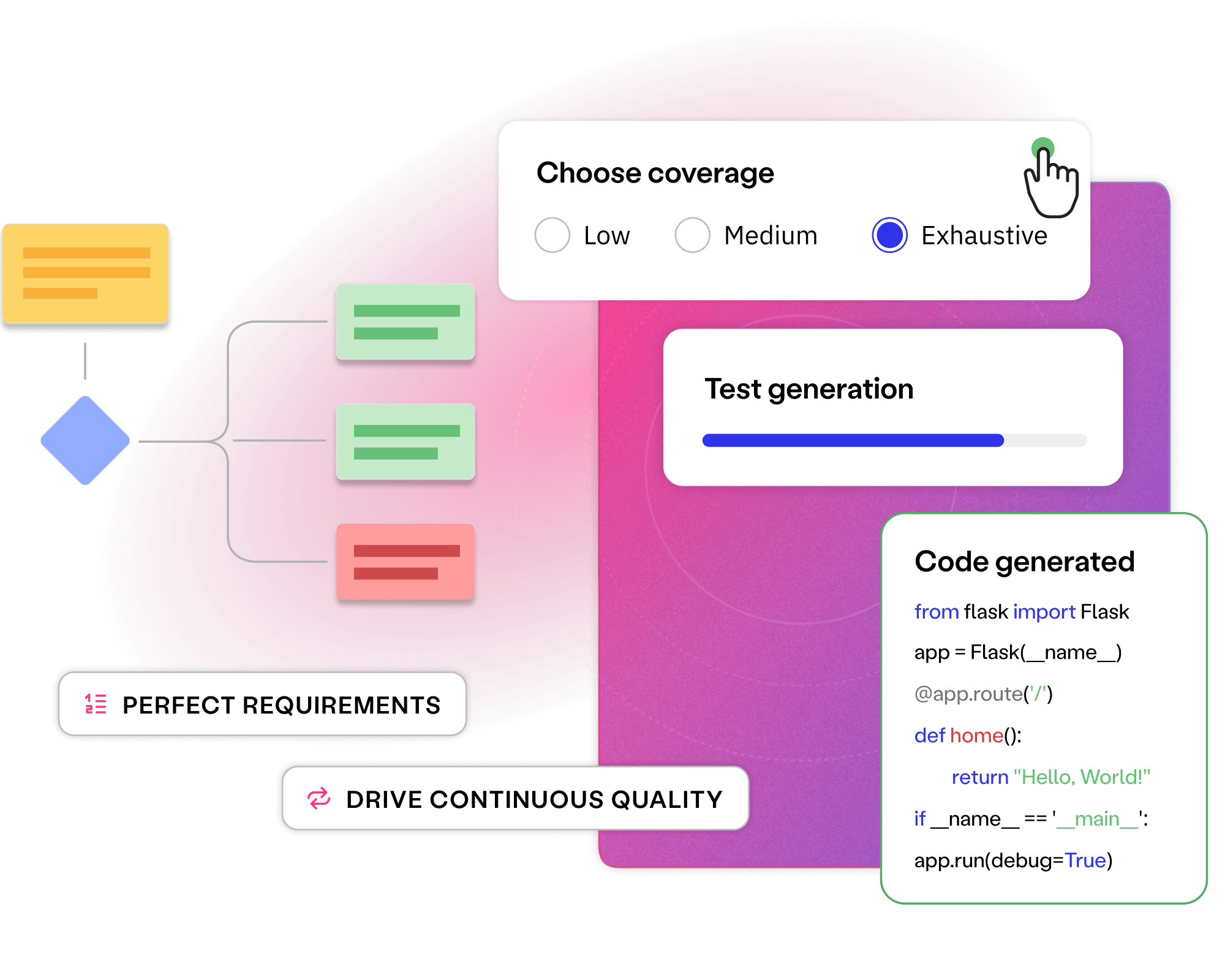

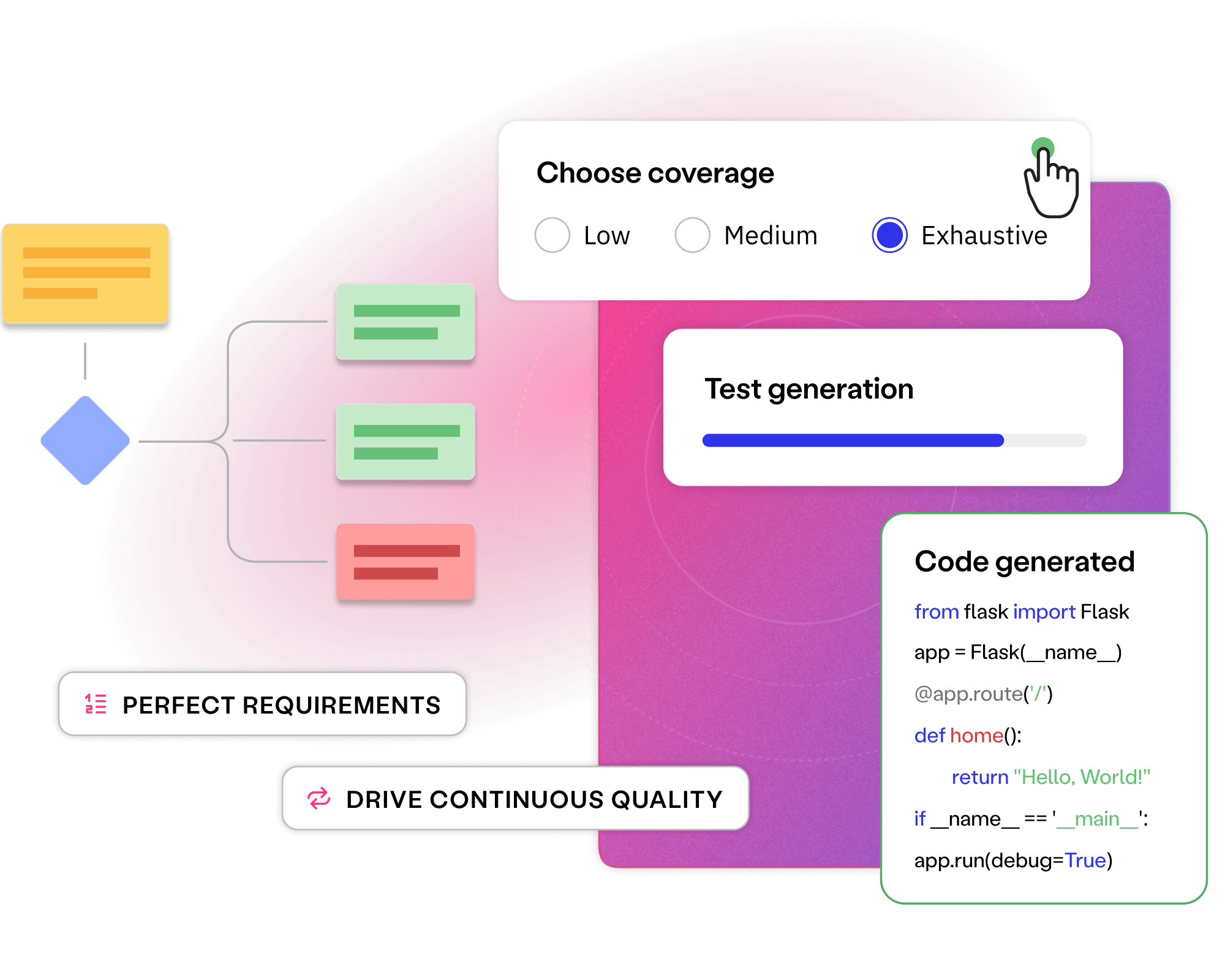

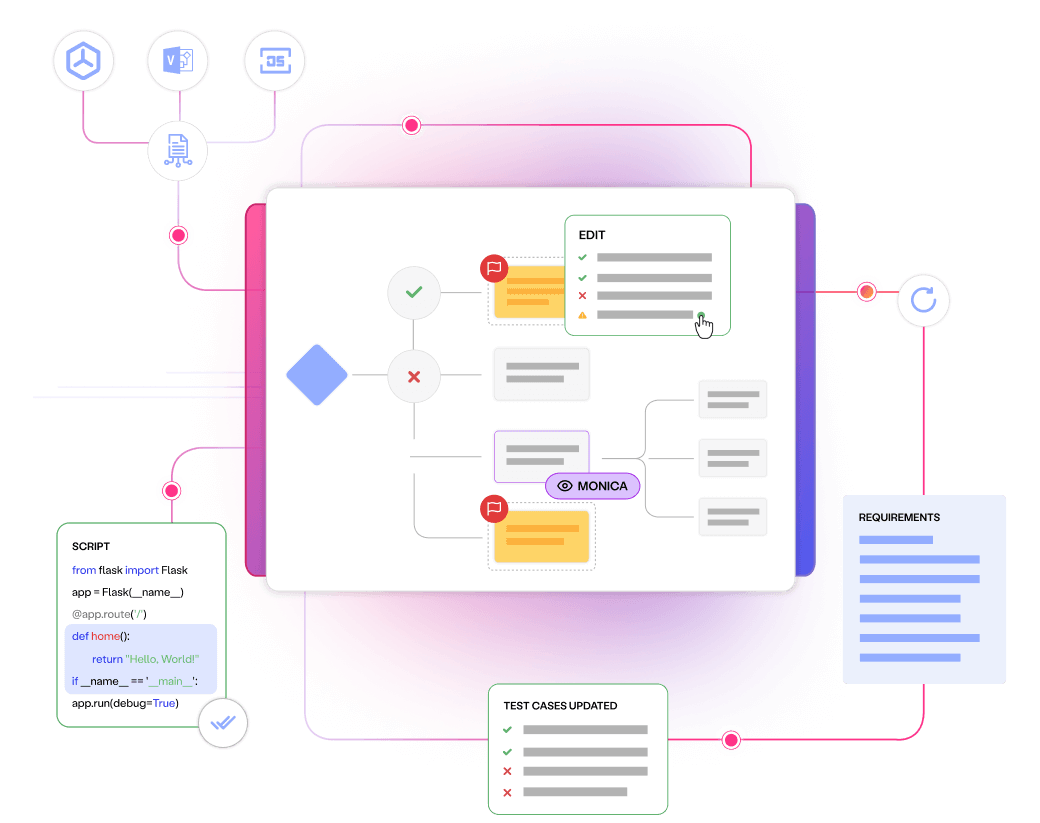

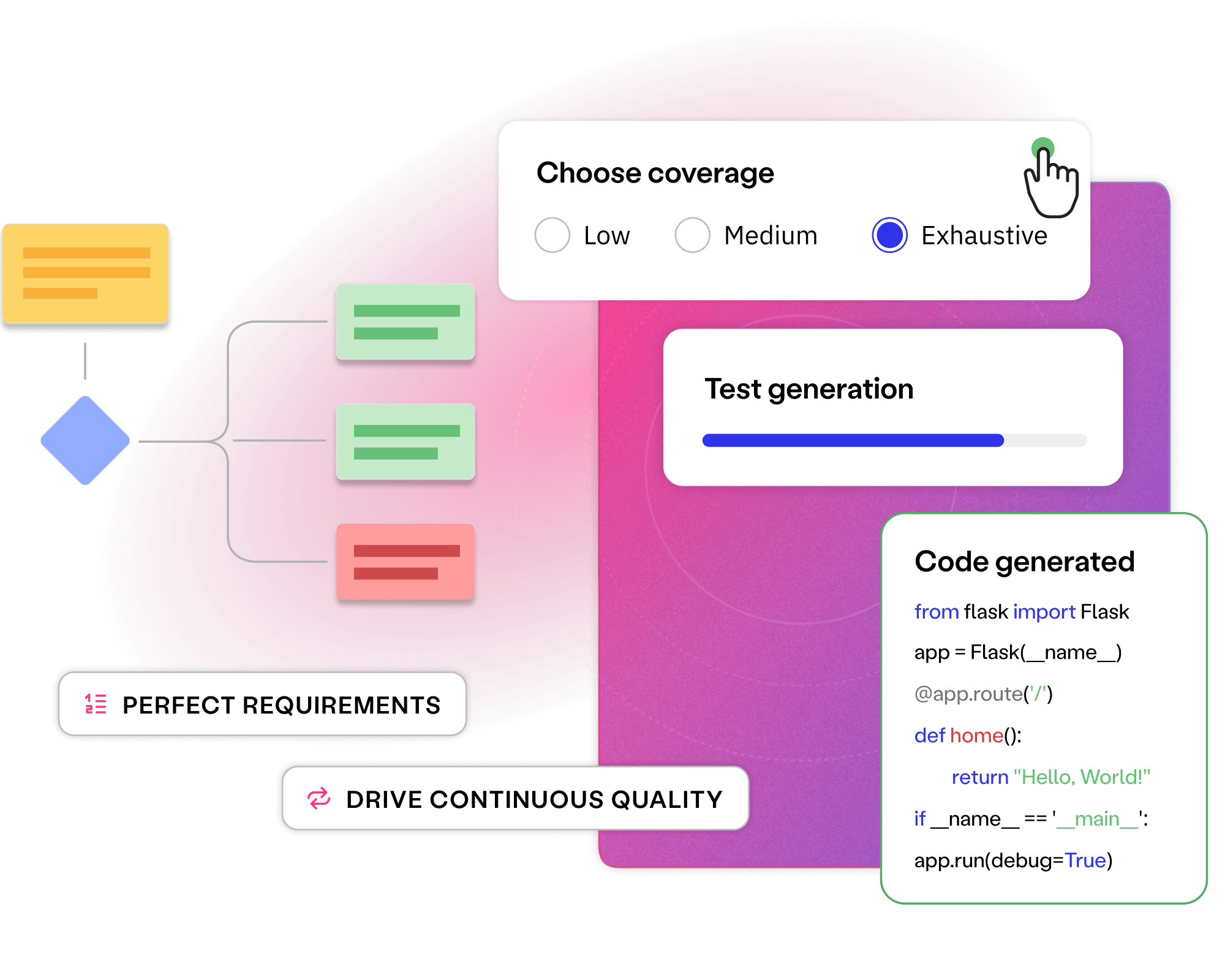

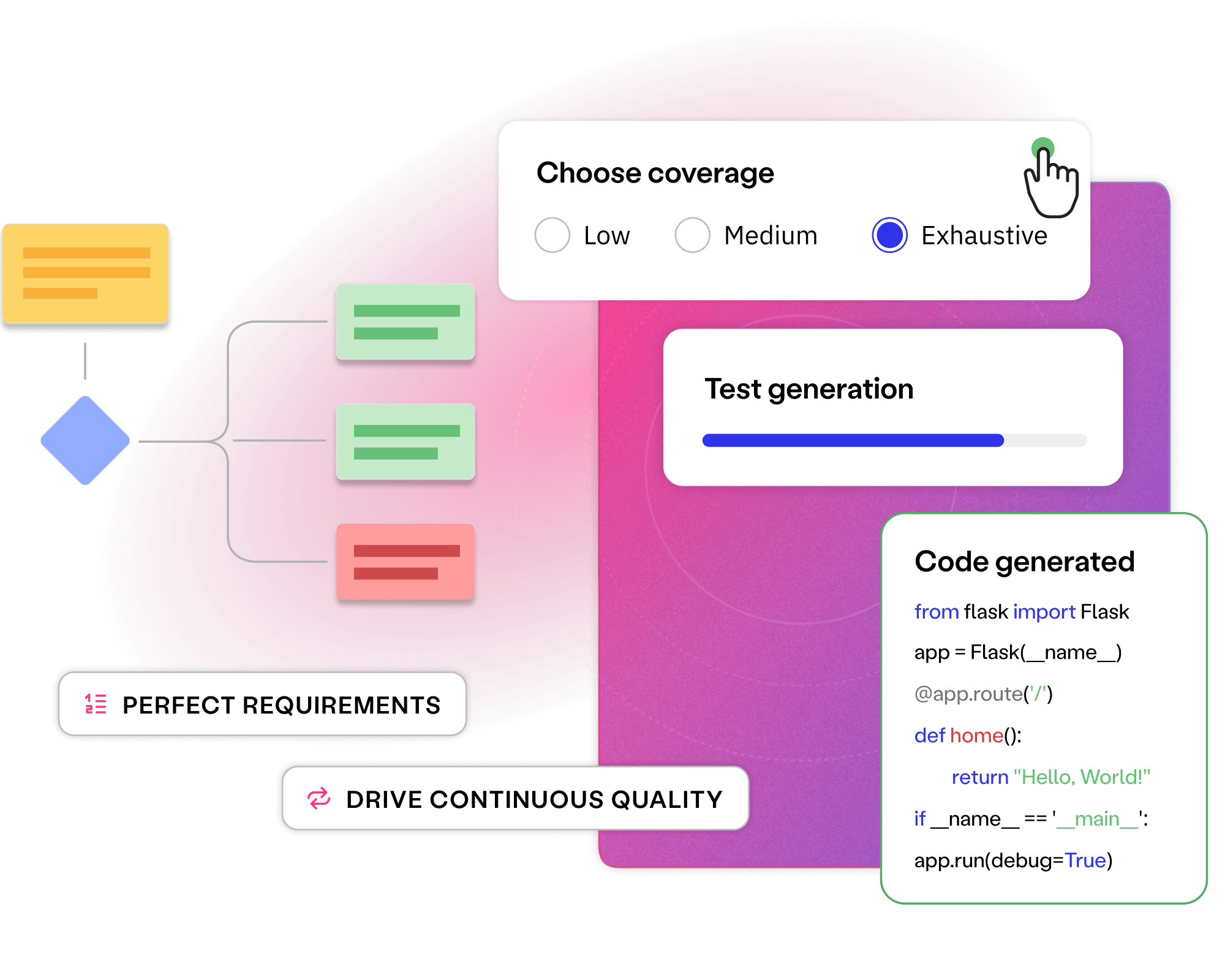

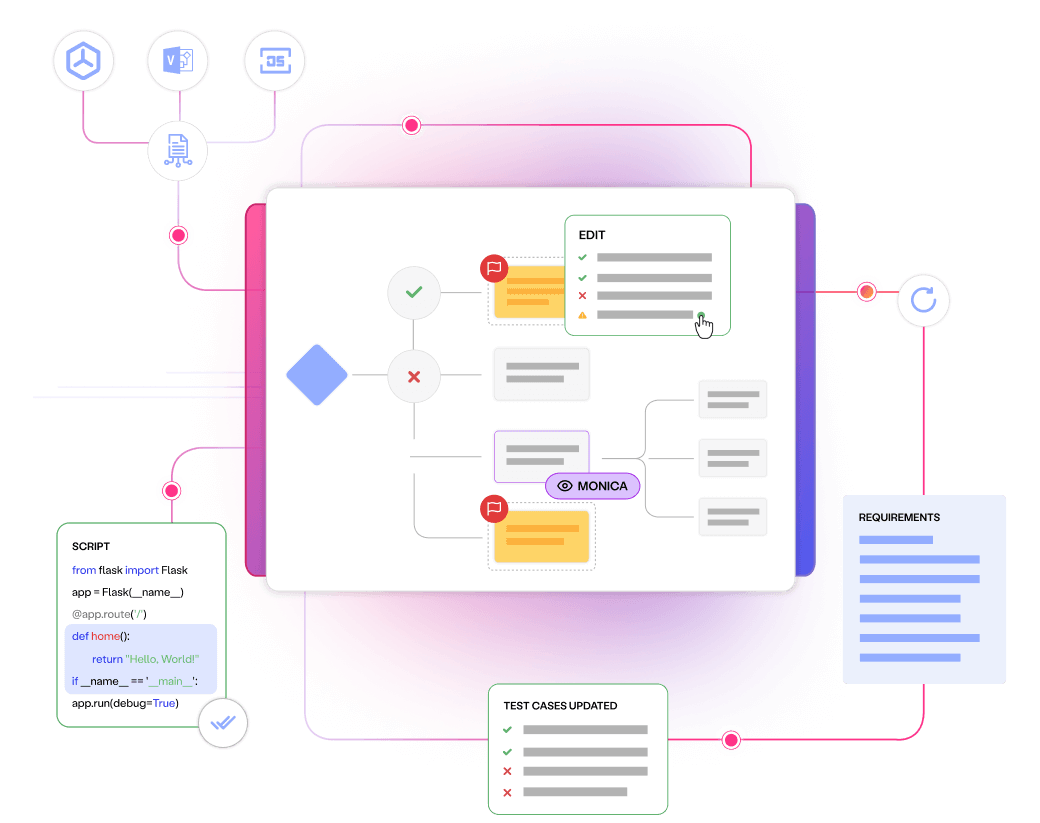

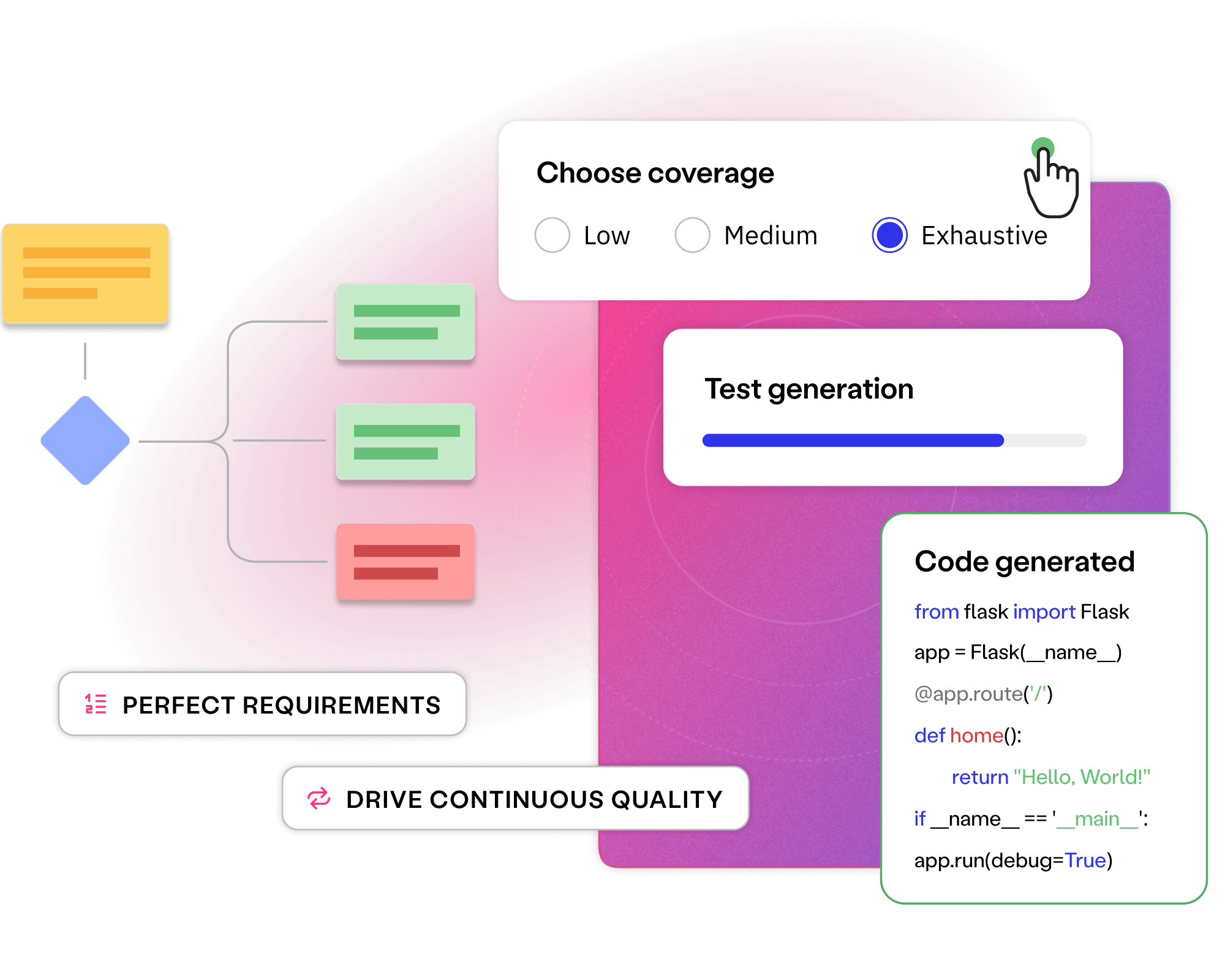

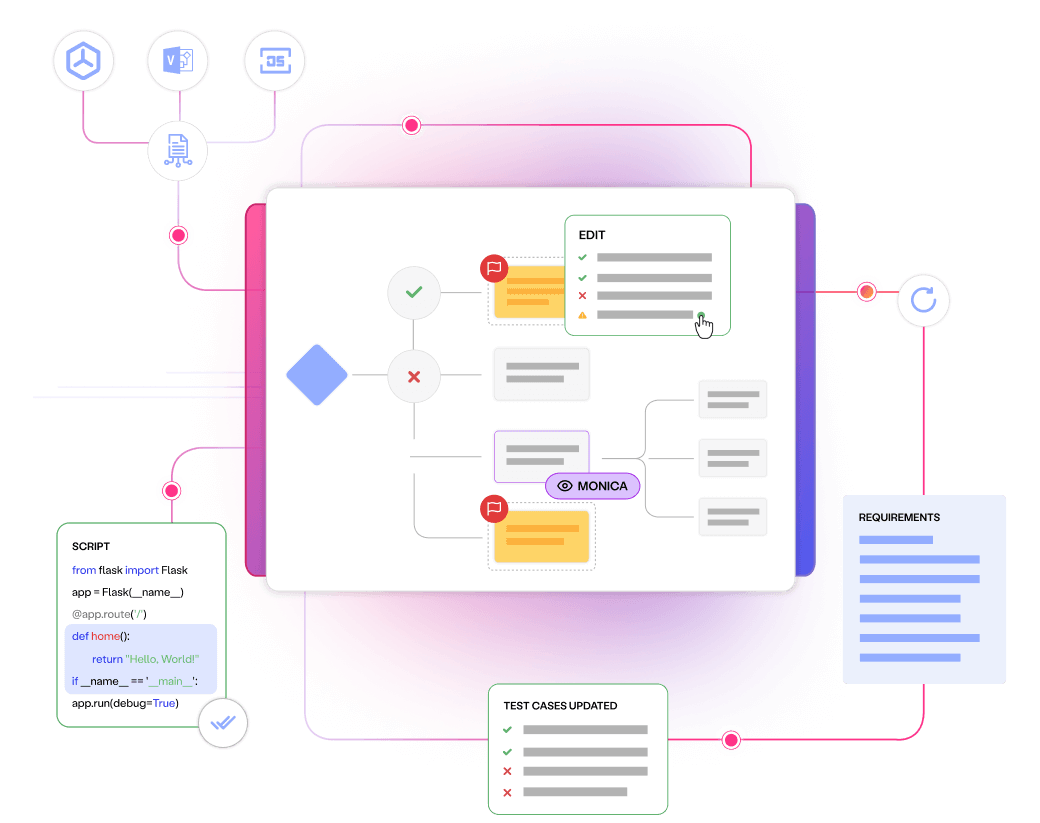

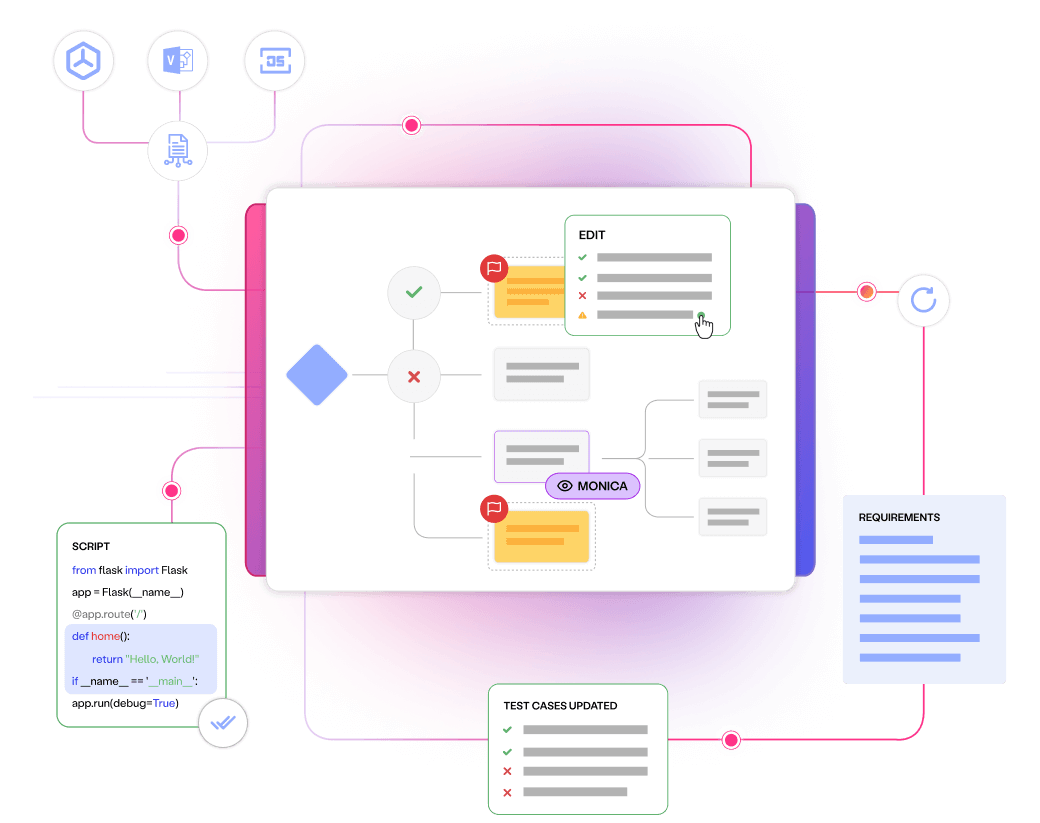

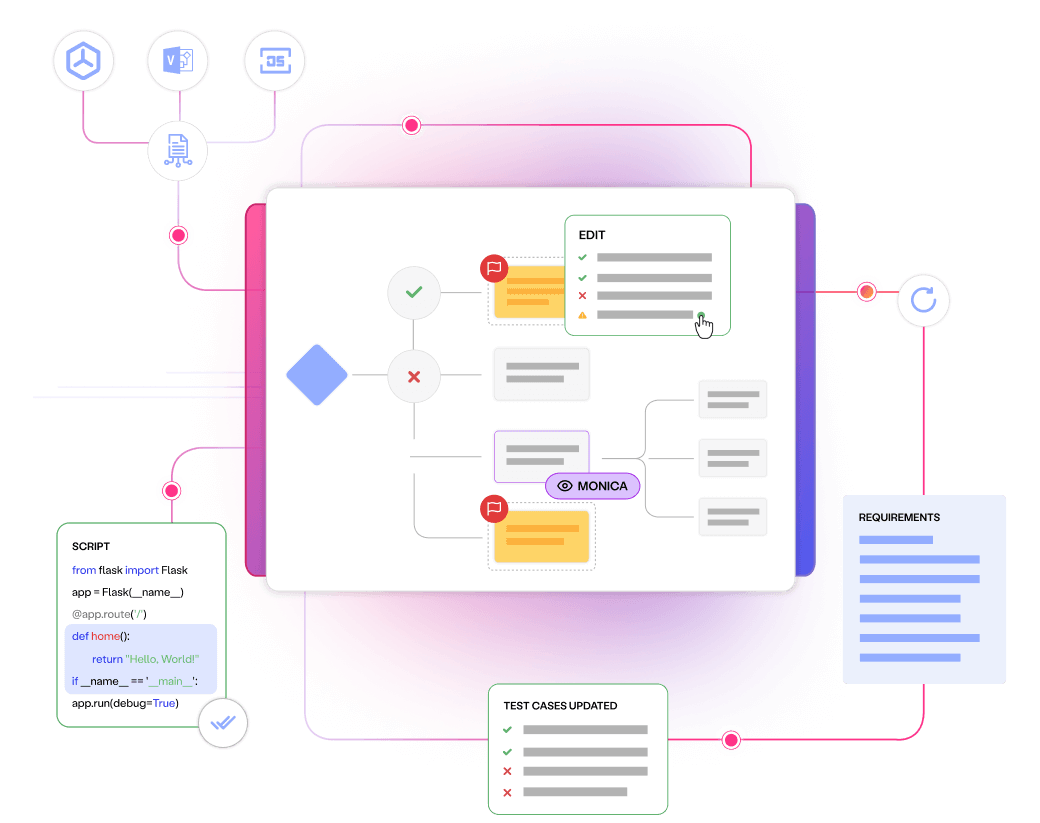

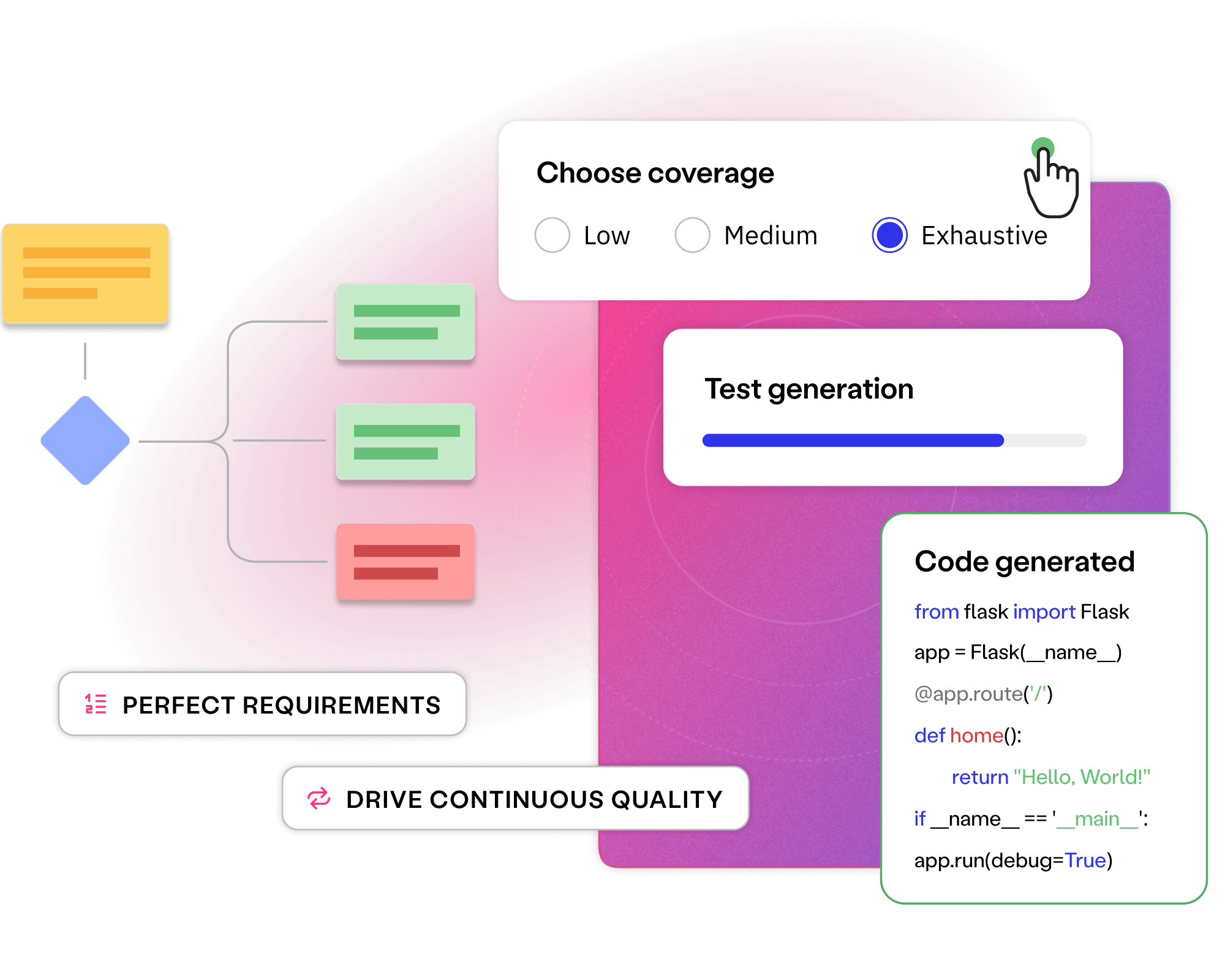

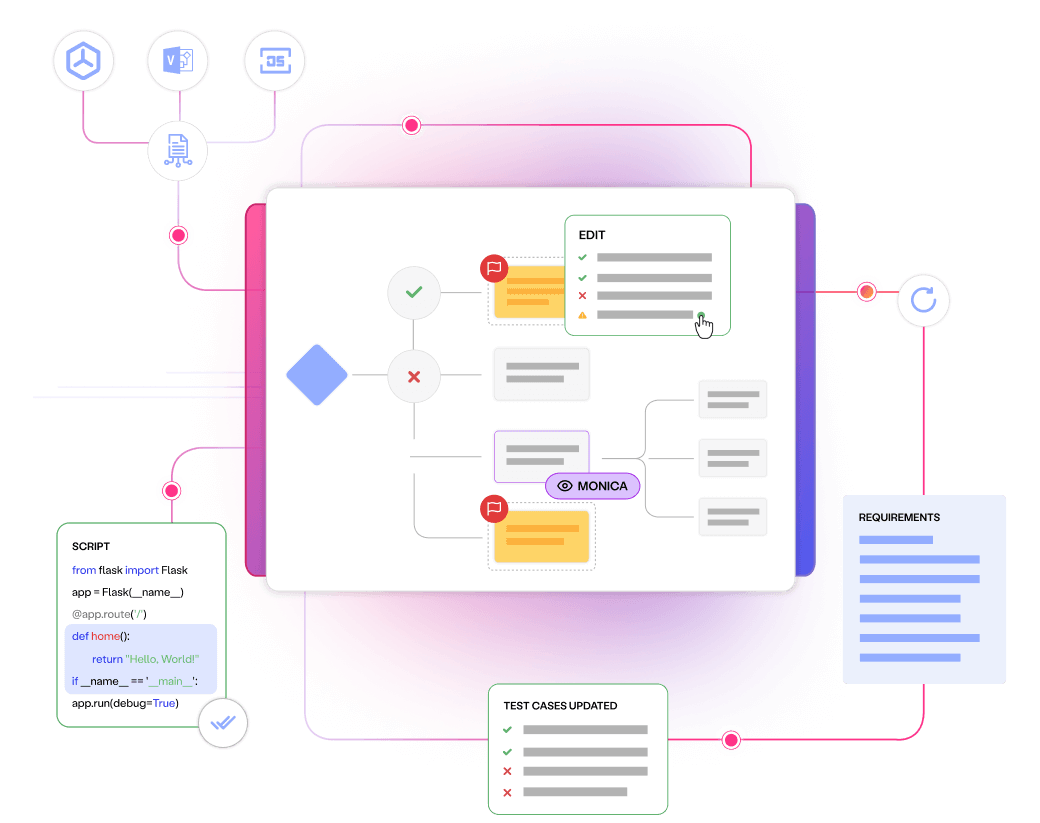

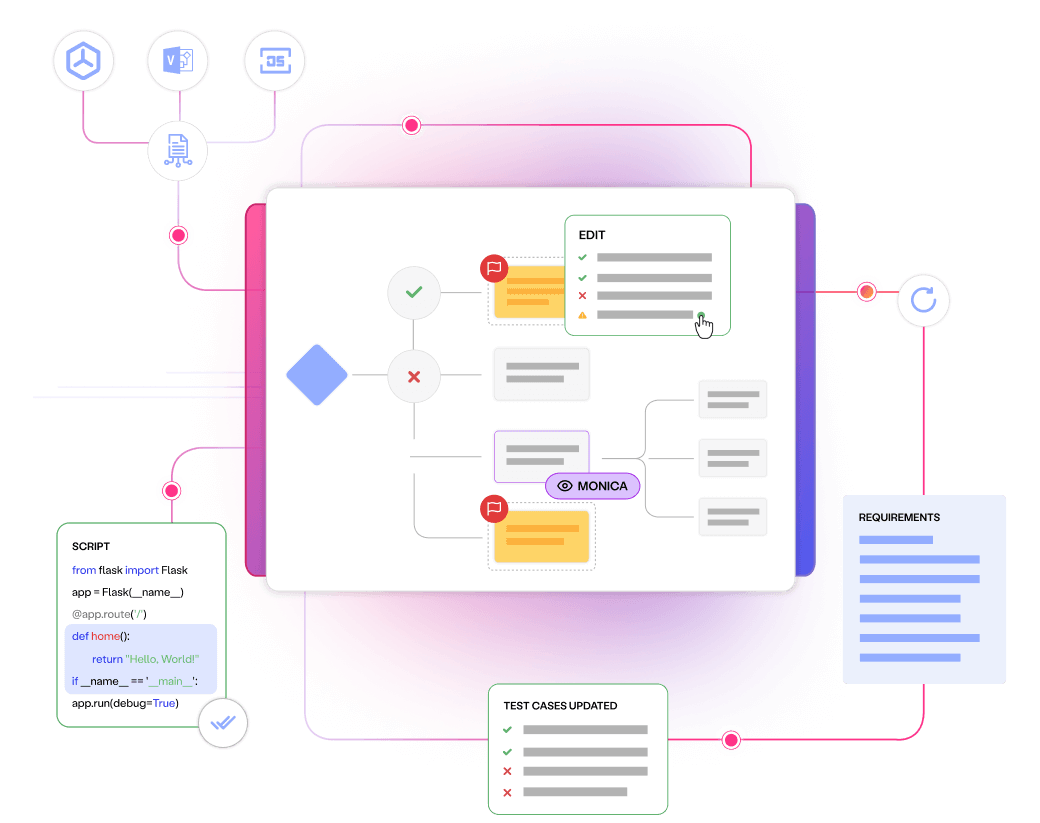

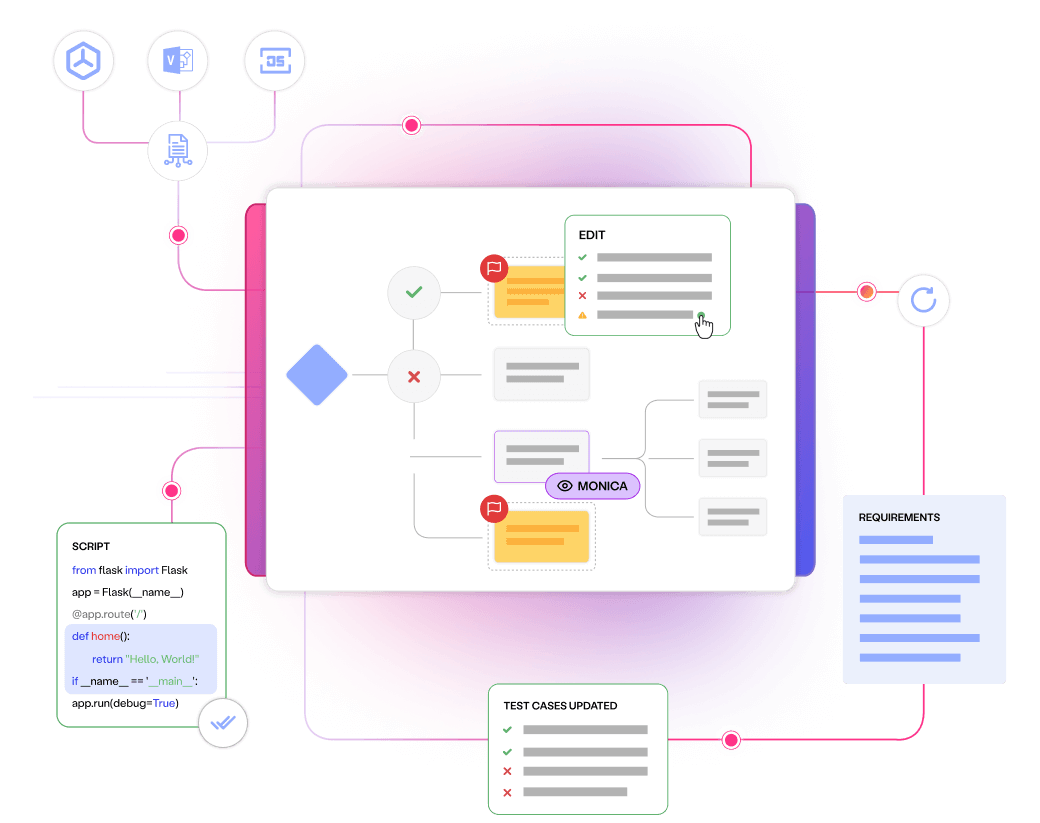

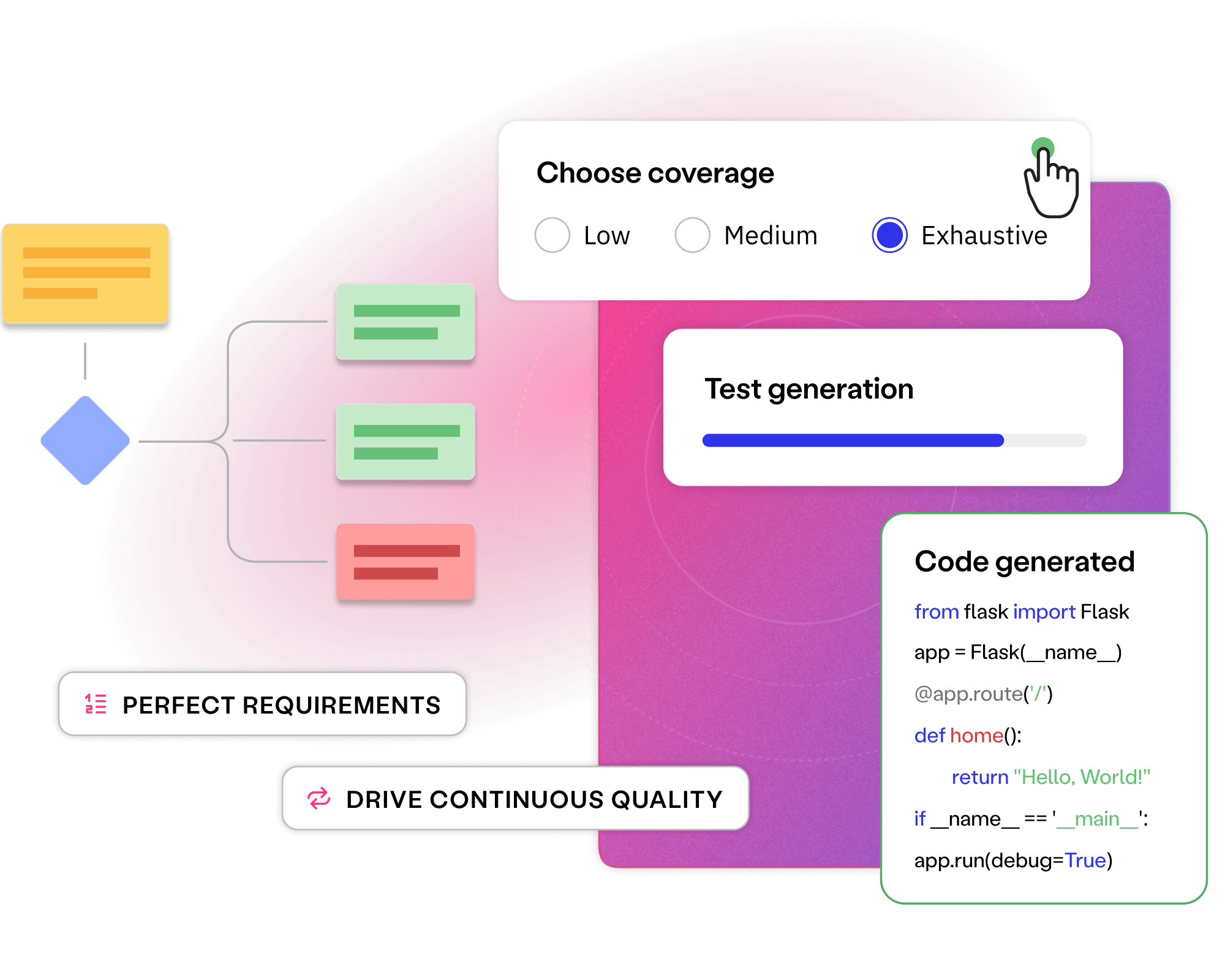

Fixing ambiguous and incomplete requirements avoids costly bugs, while enabling transparency and critical thinking throughout delivery.

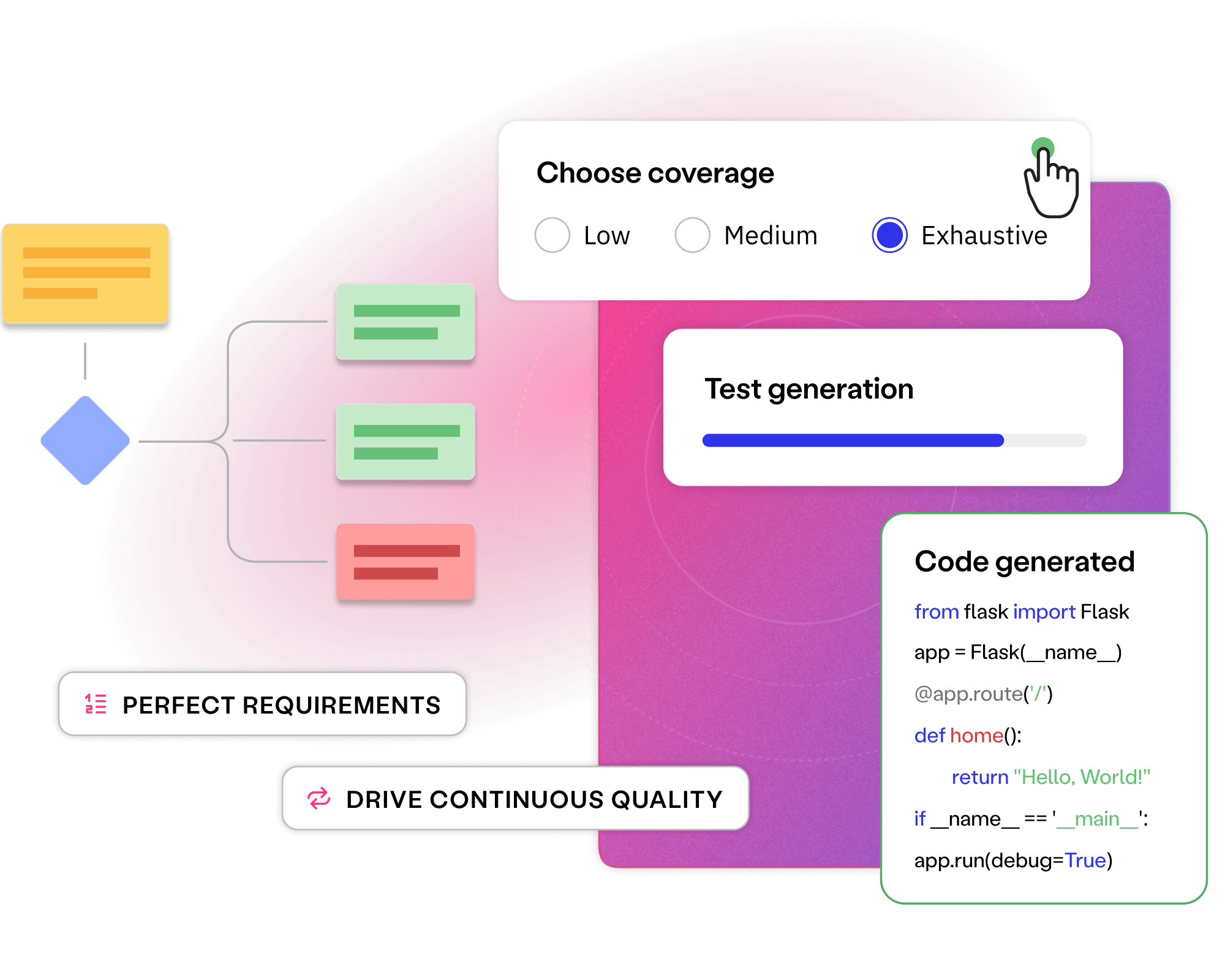

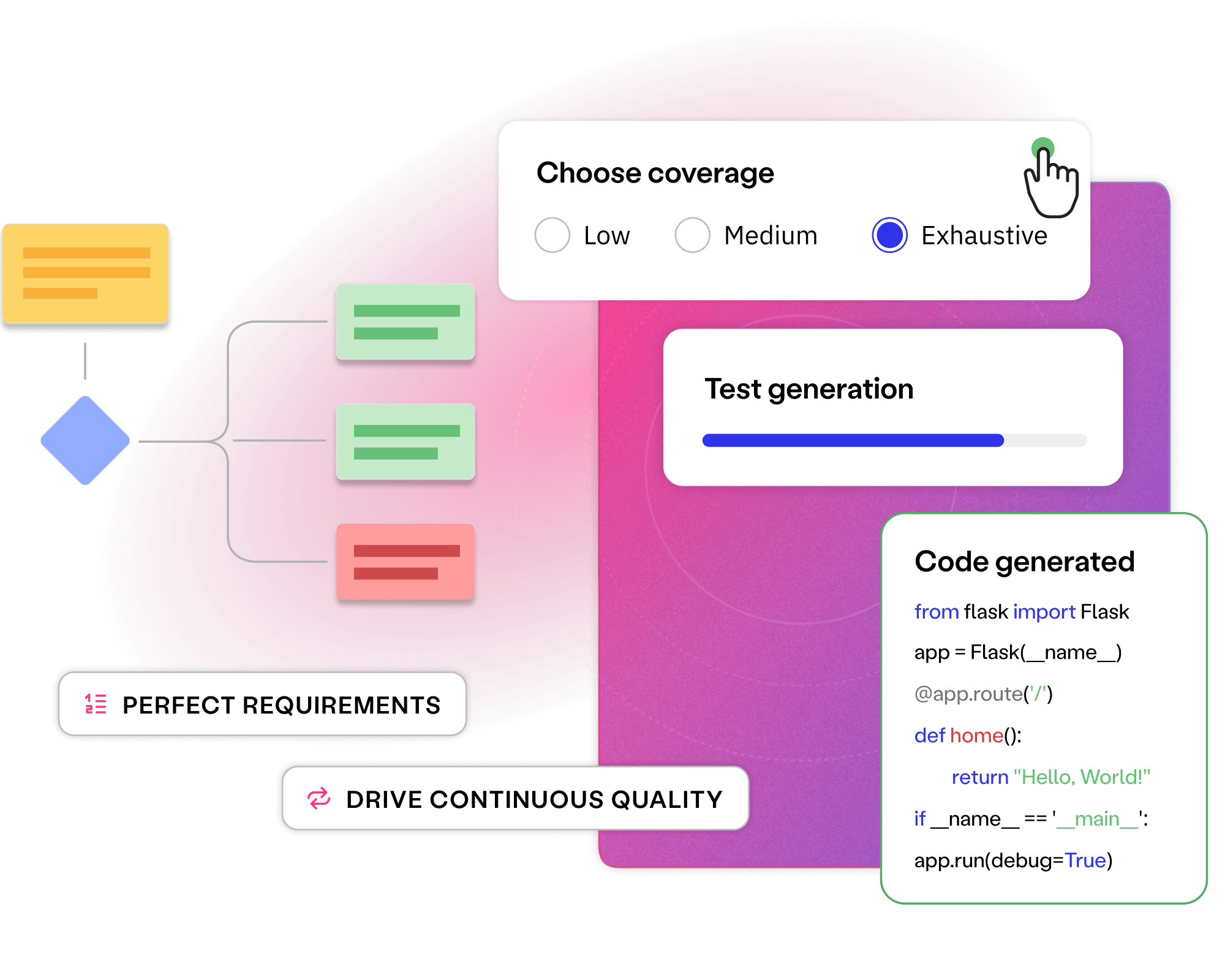

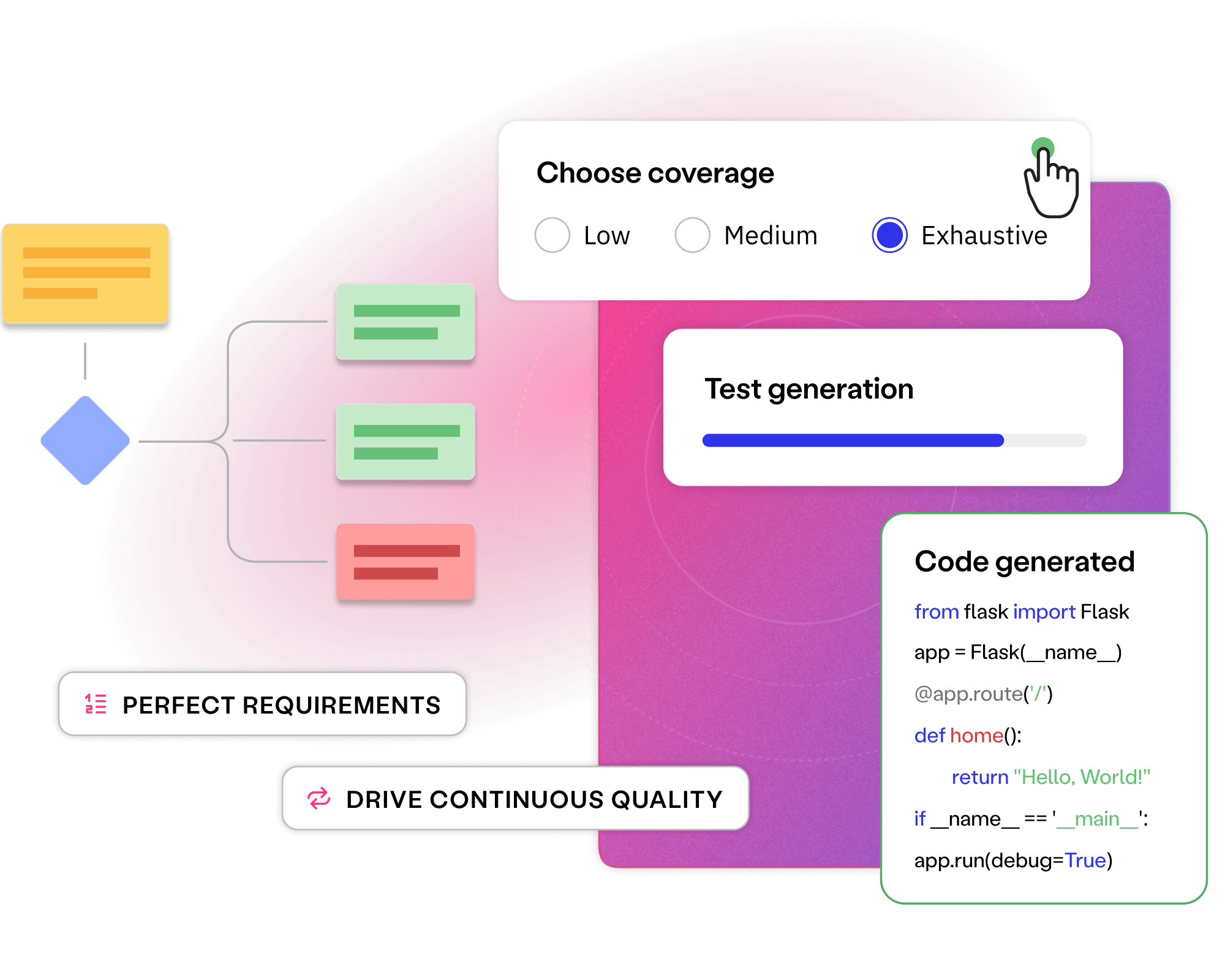

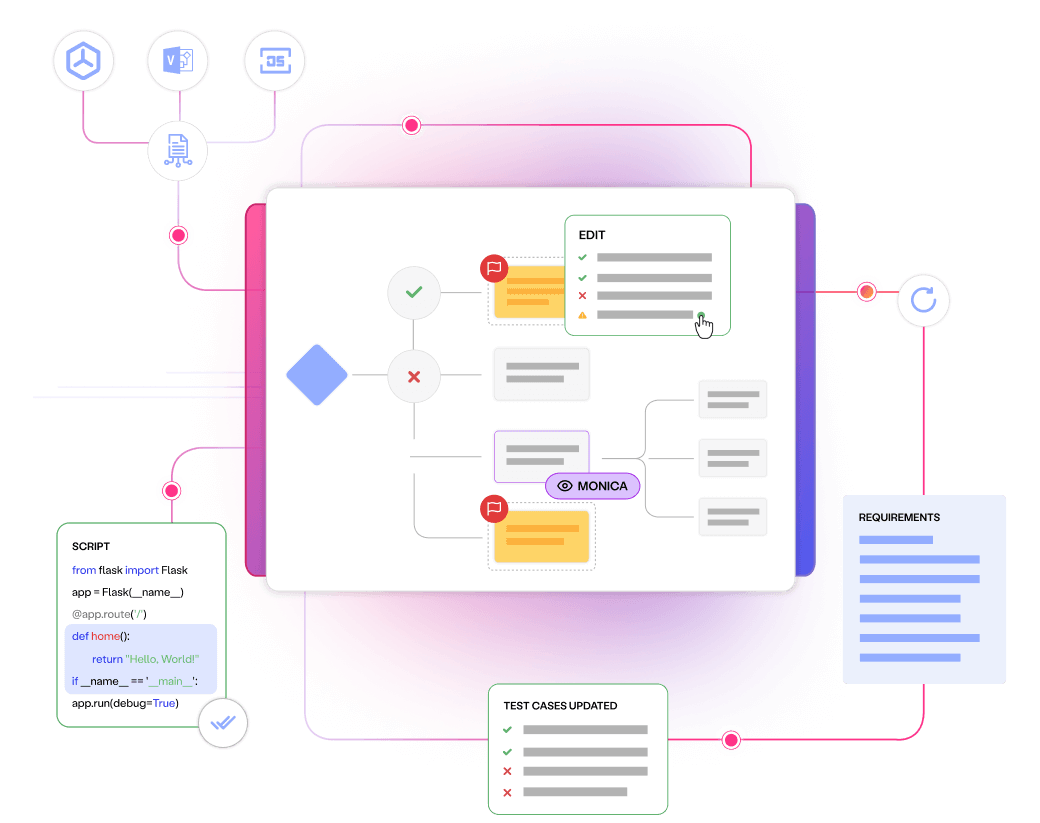

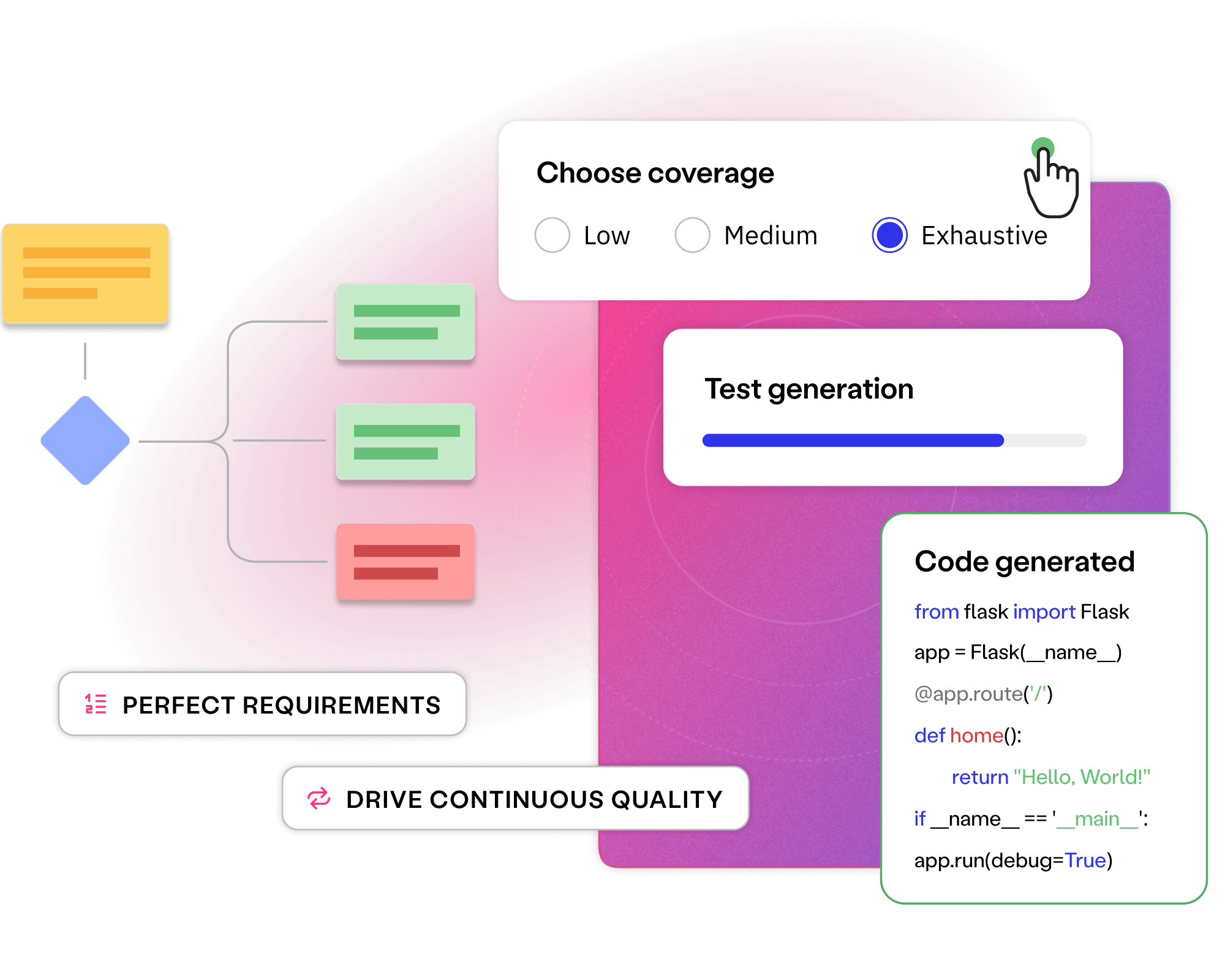

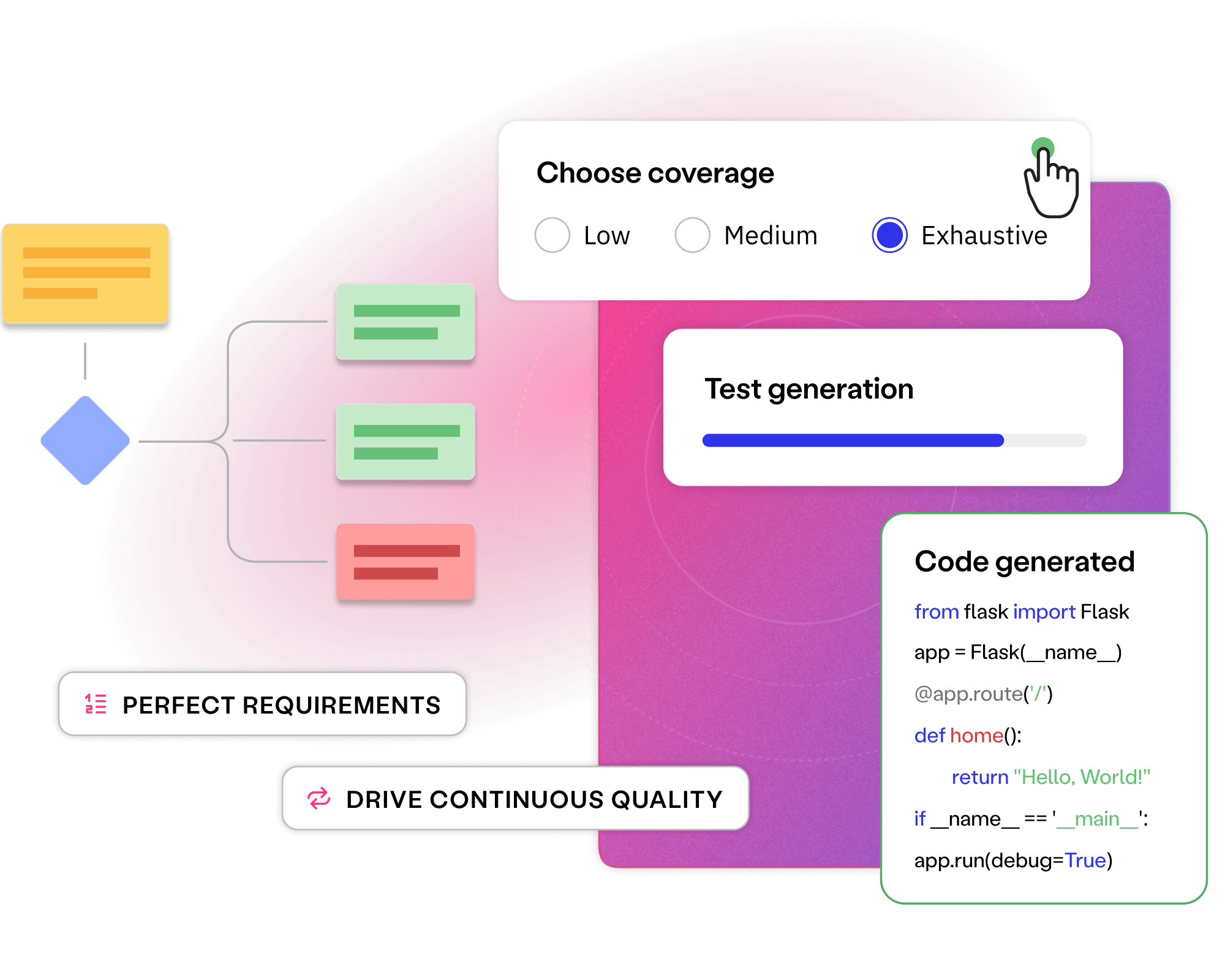

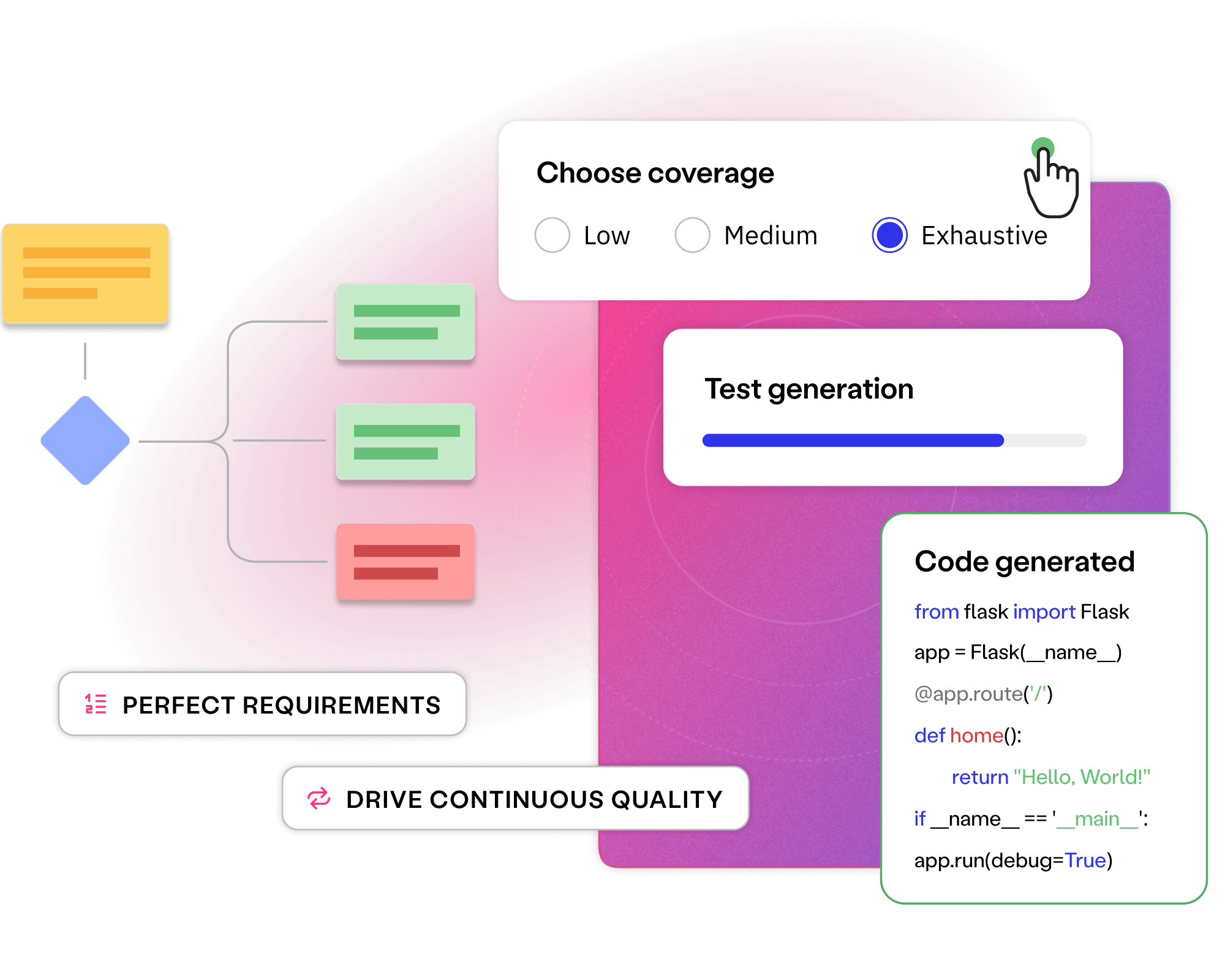

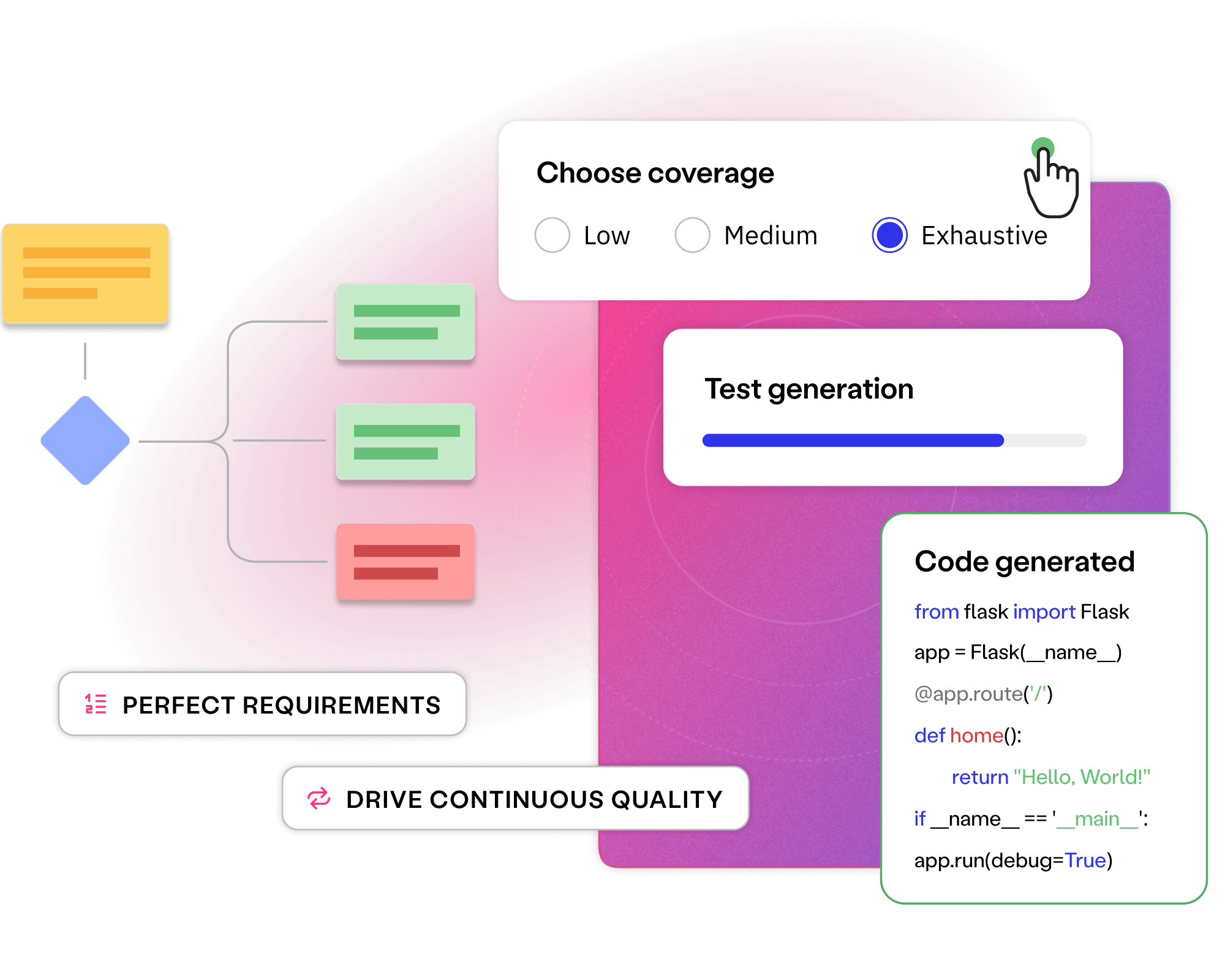

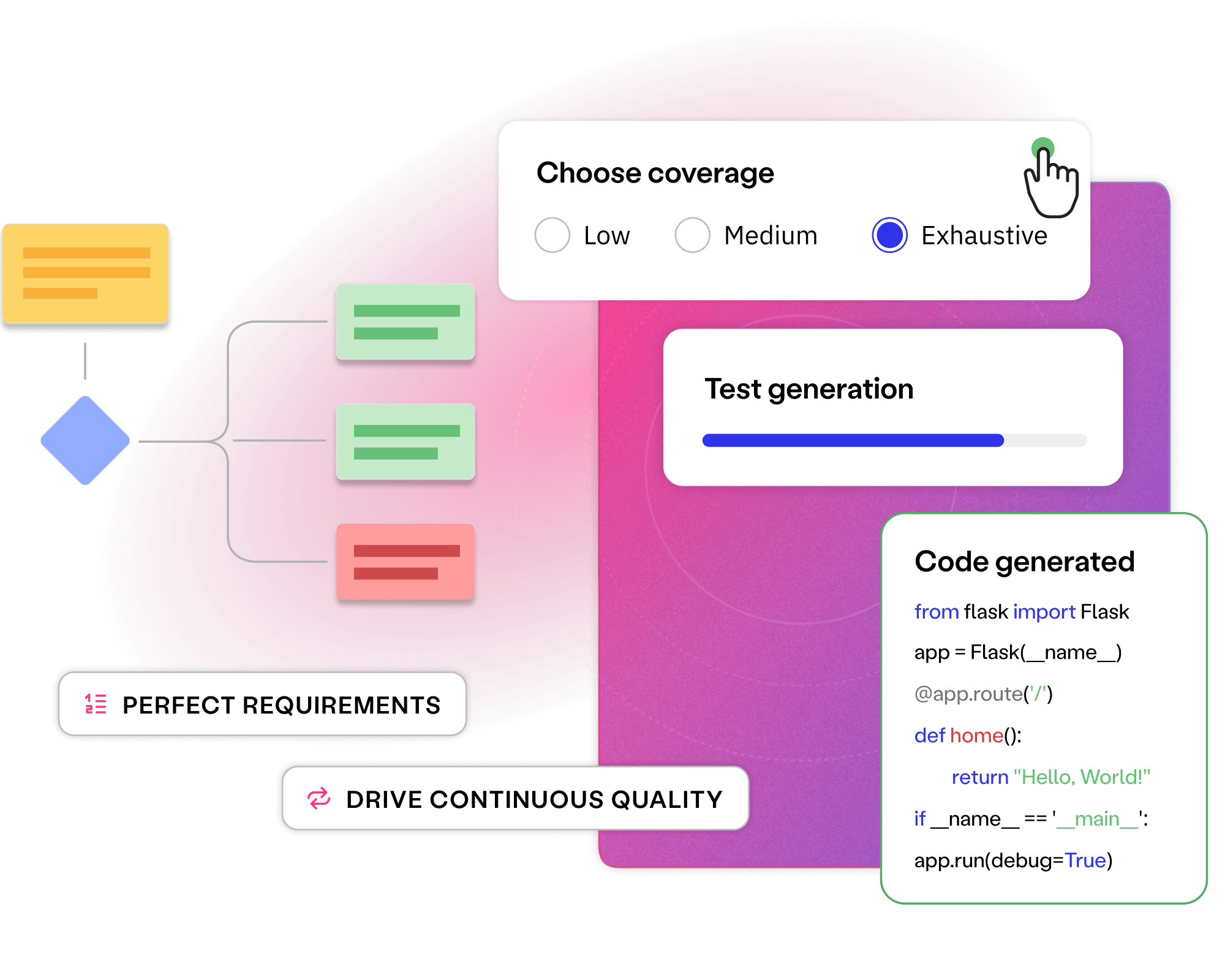

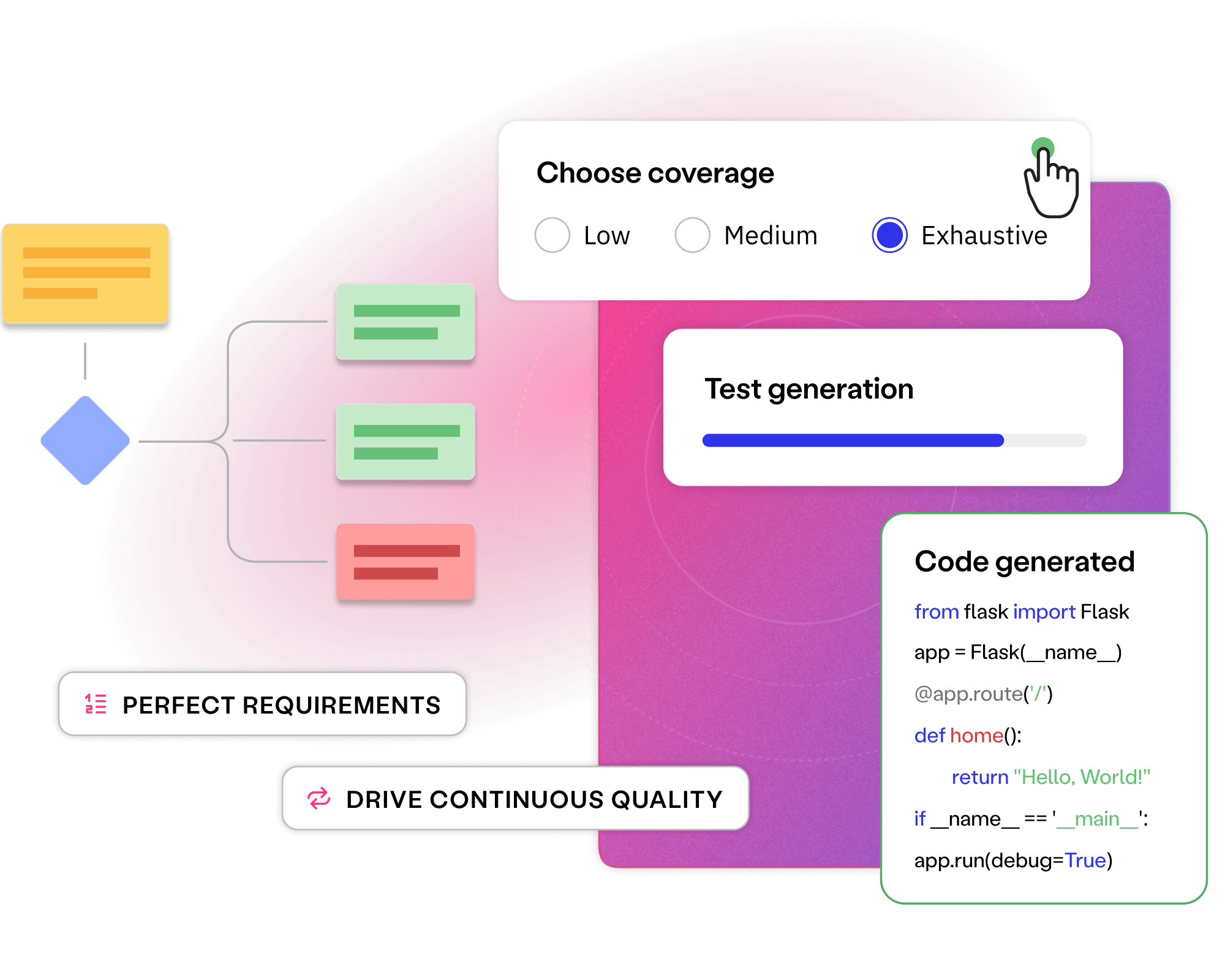

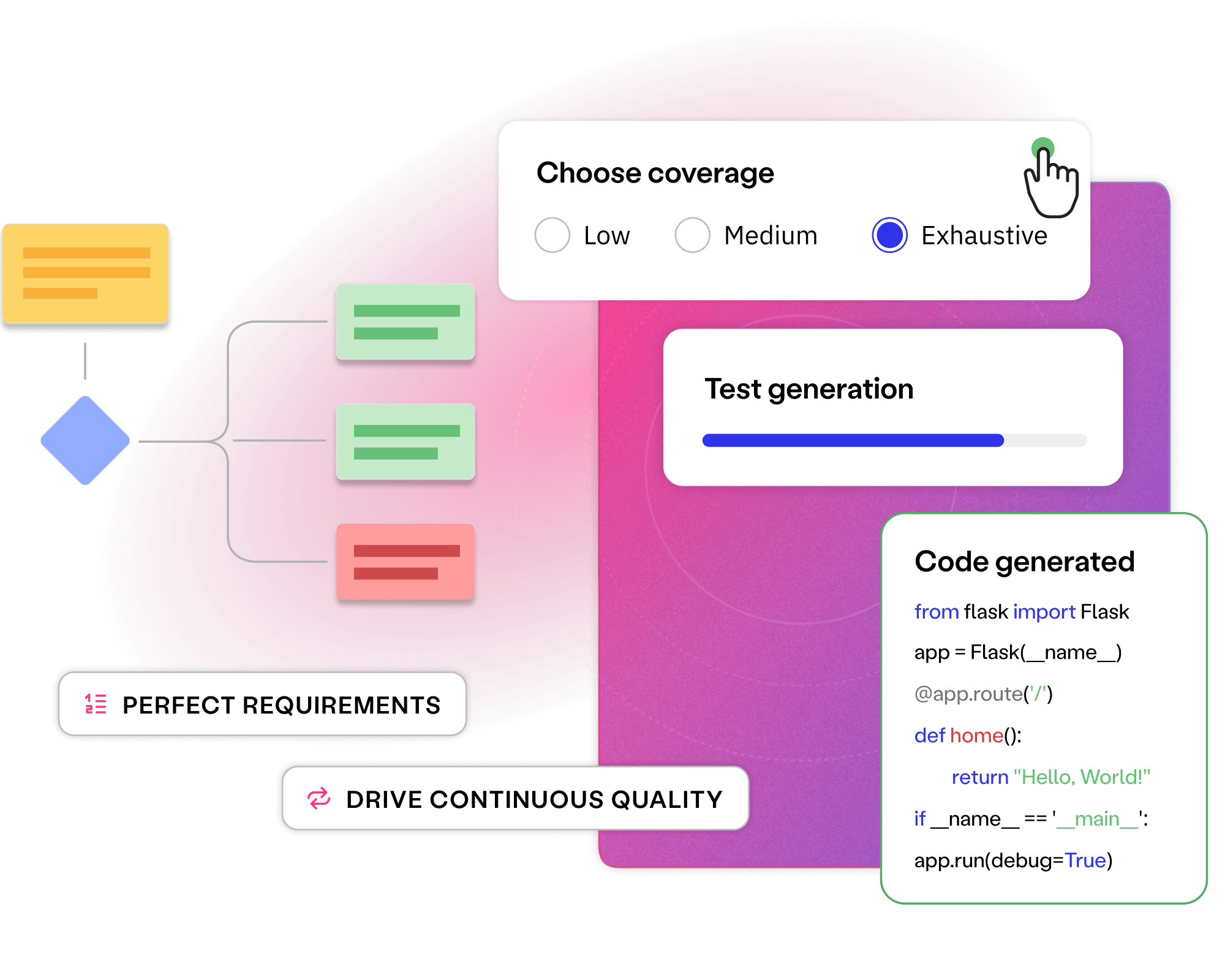

Collaboratively perfecting requirements and generating continuous tests finds bugs while they are low impact and affordable to fix.

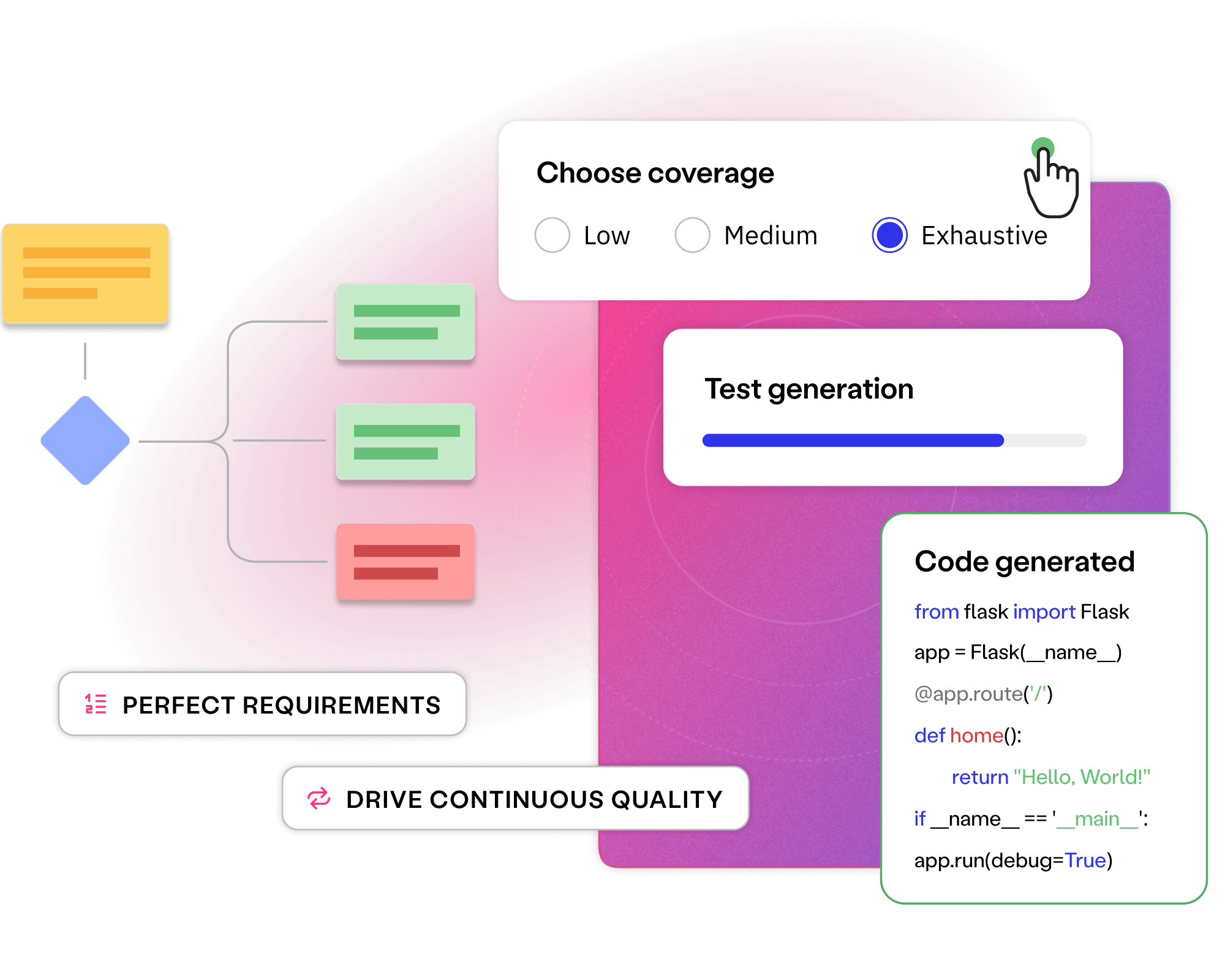

Automating repetitive test creation, requirements engineering and maintenance removes toil, enabling faster, higher-quality releases.

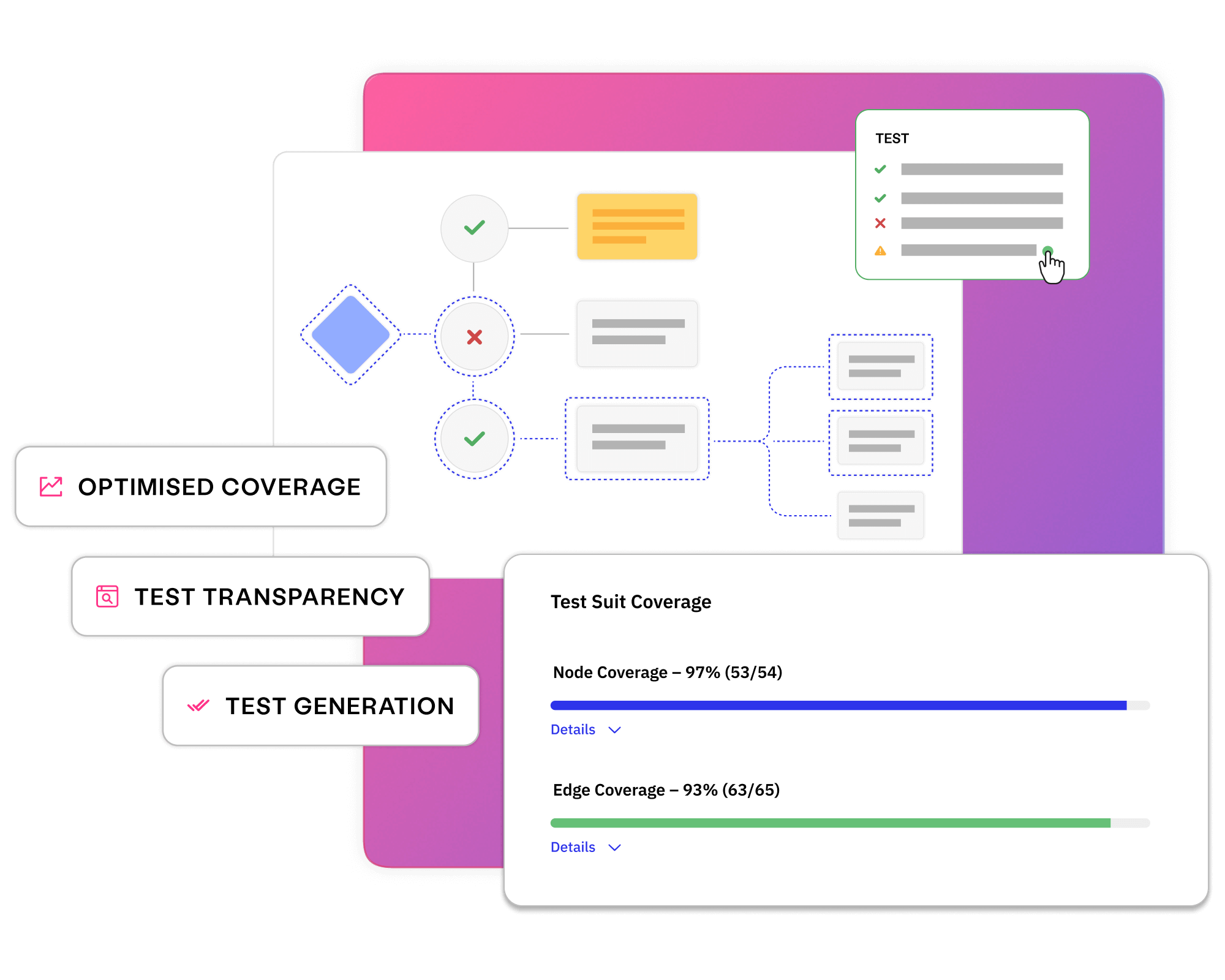

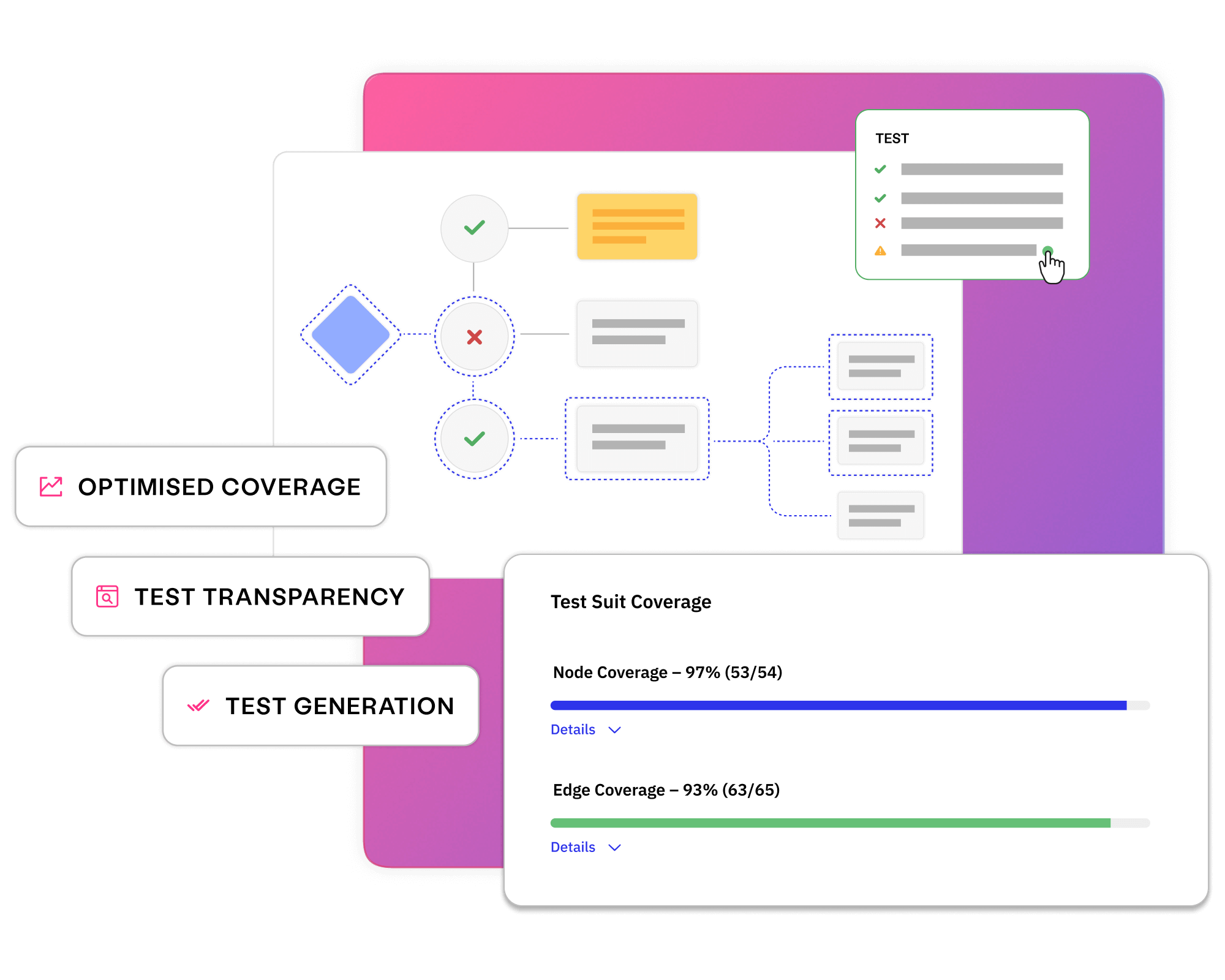

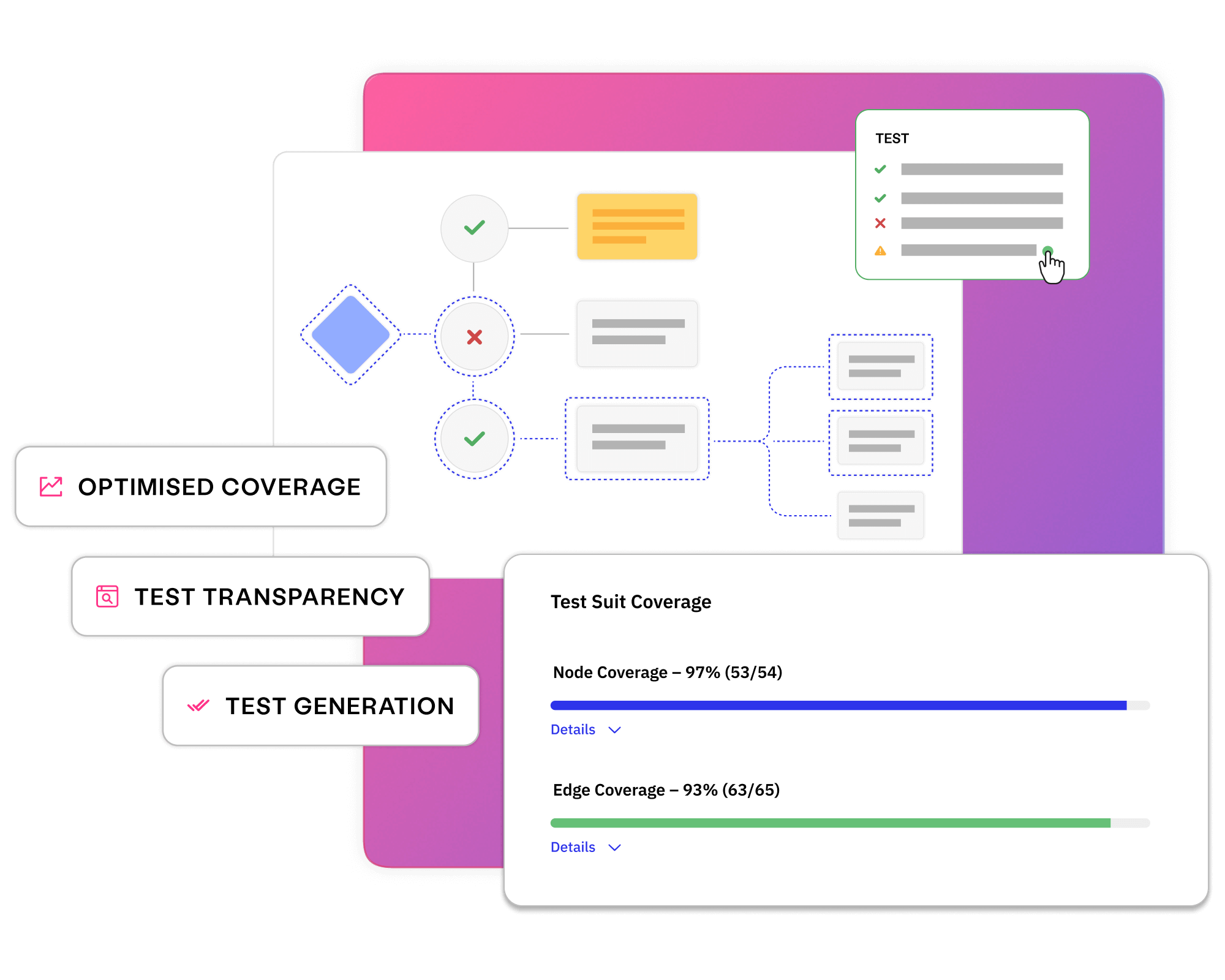

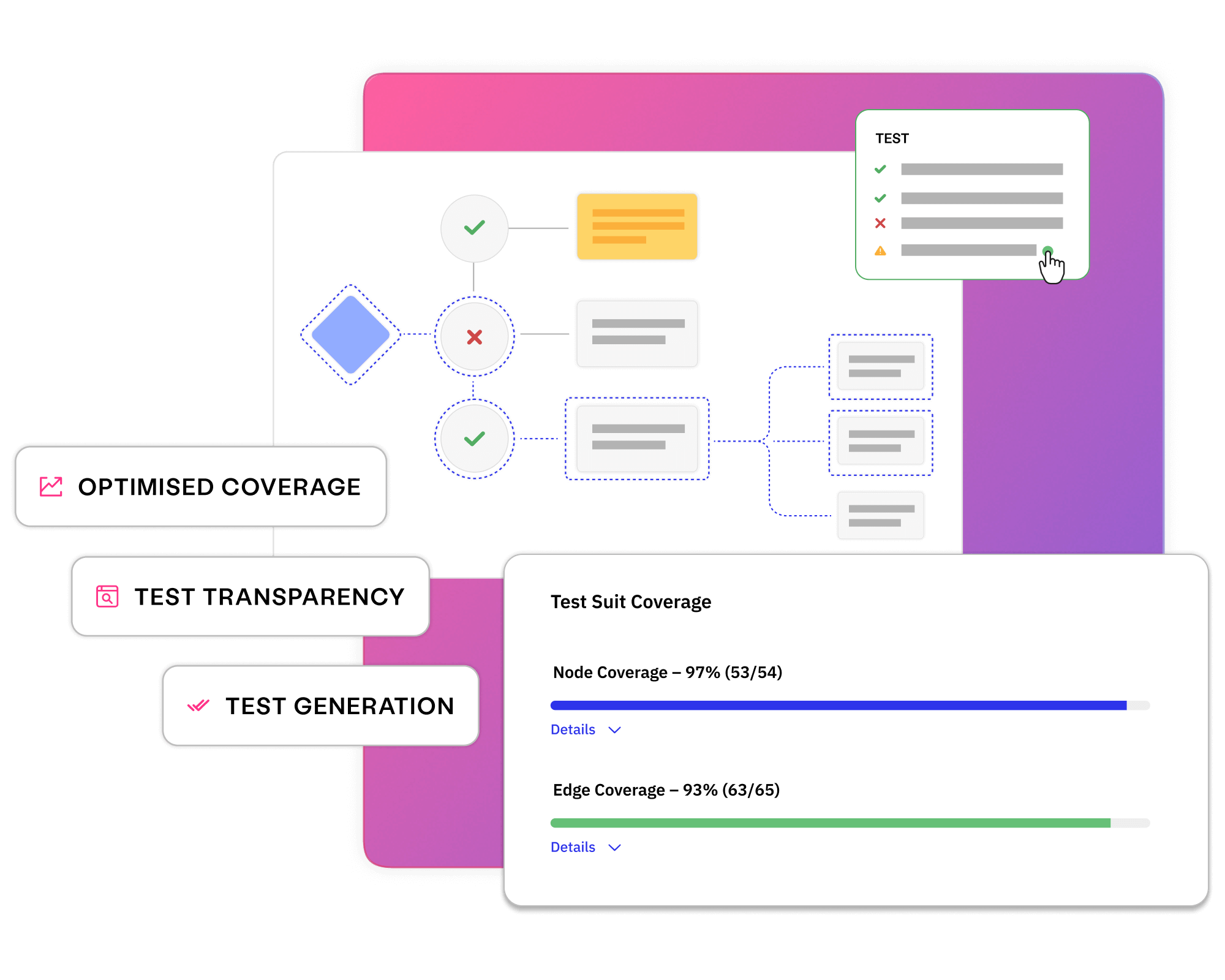

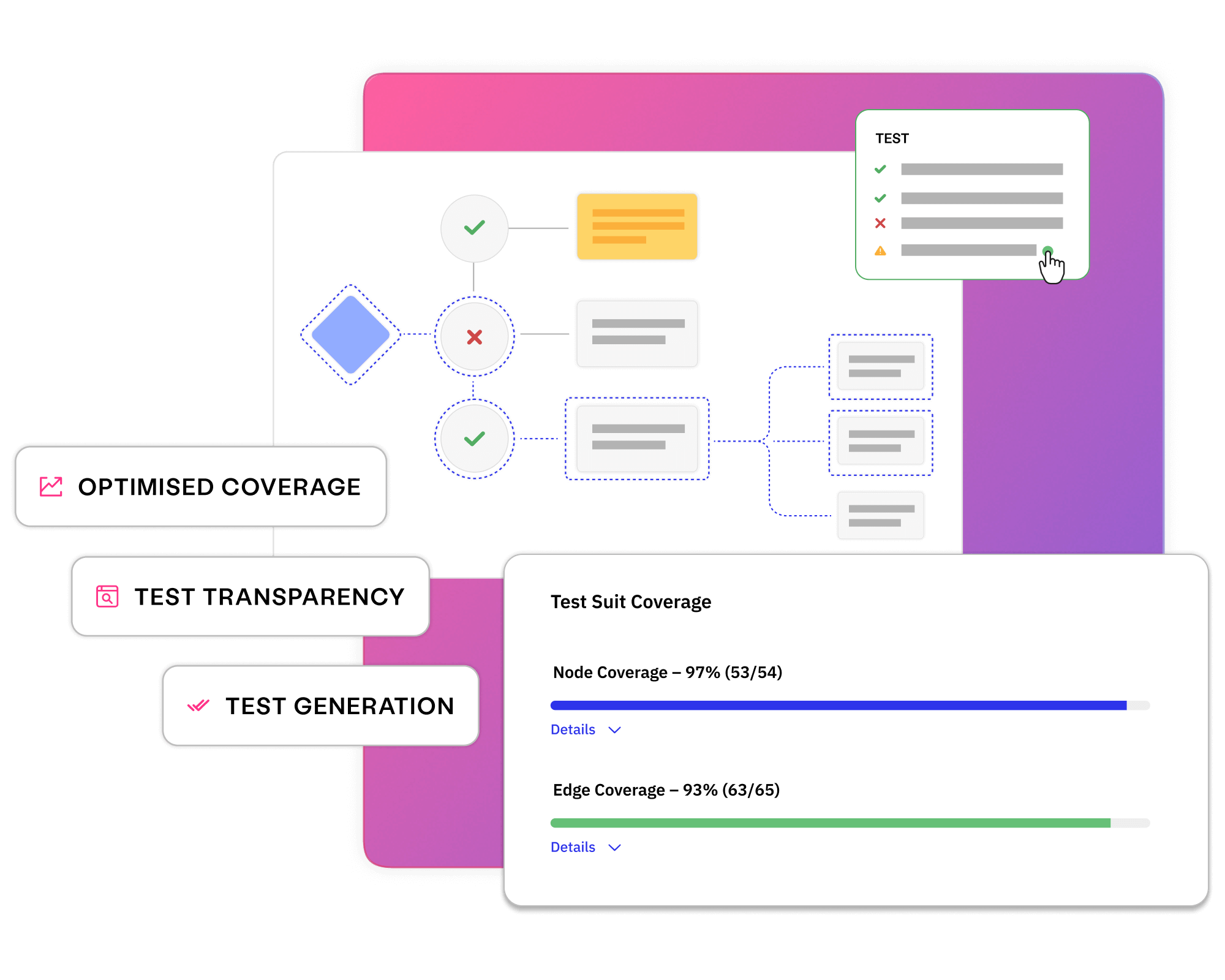

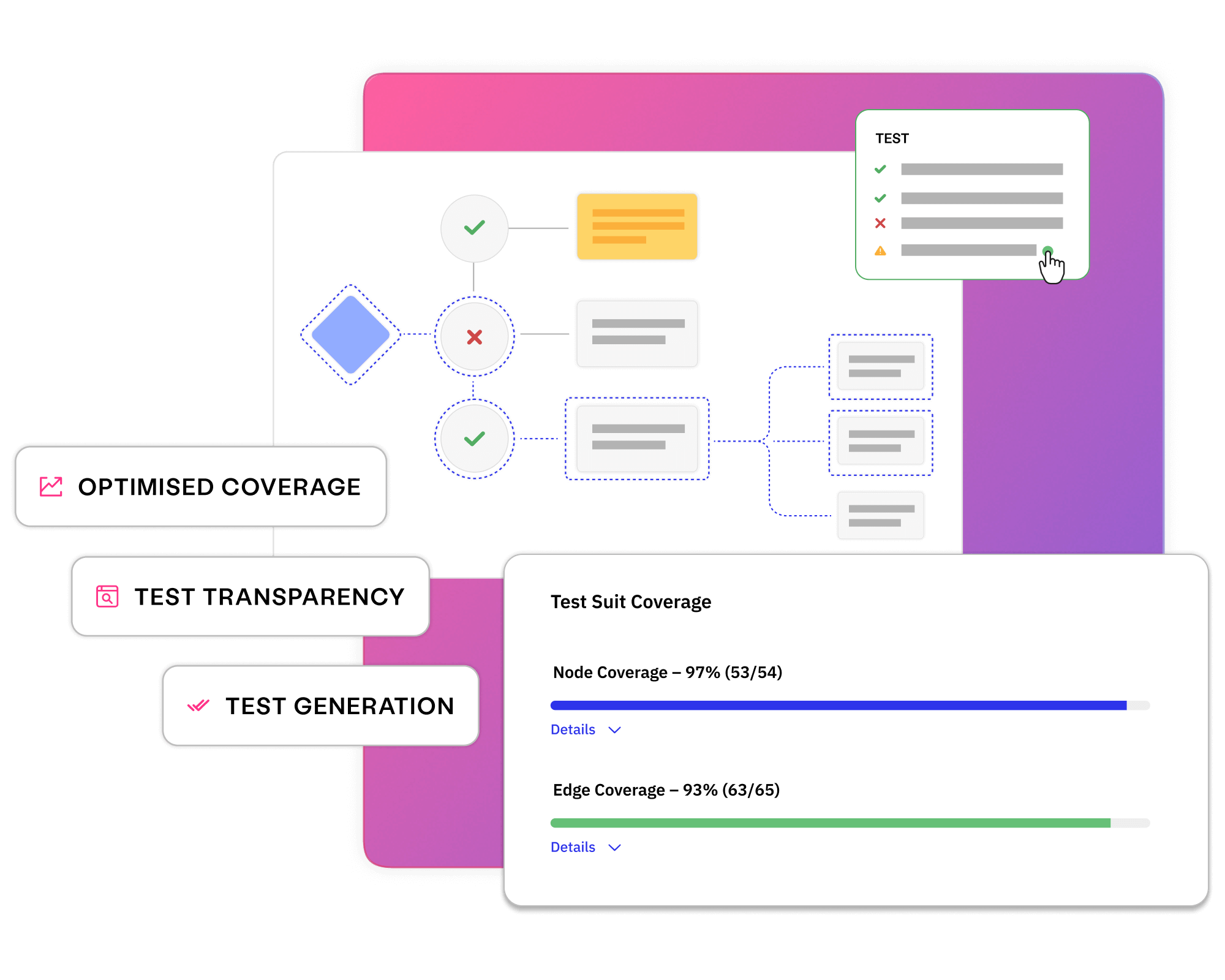

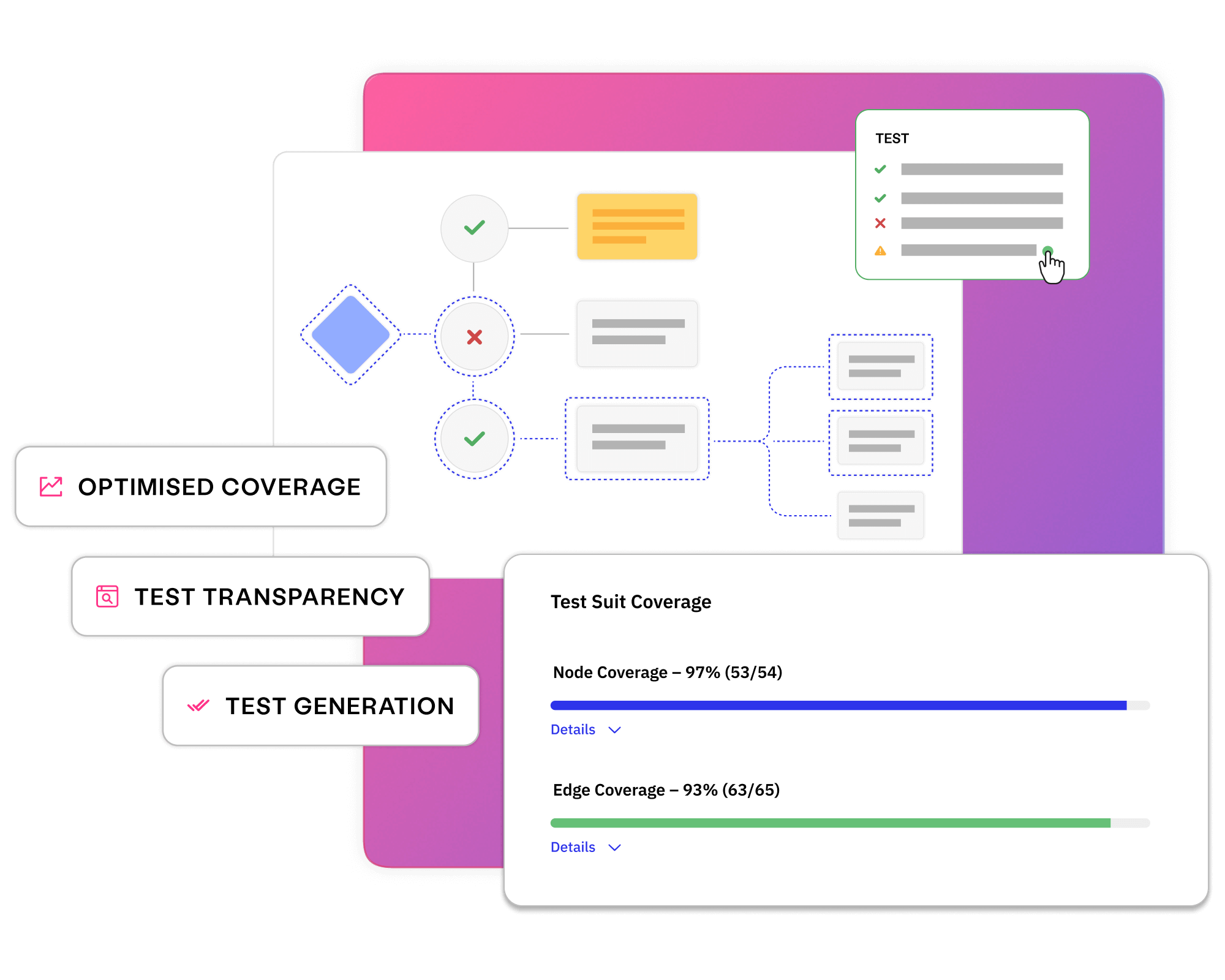

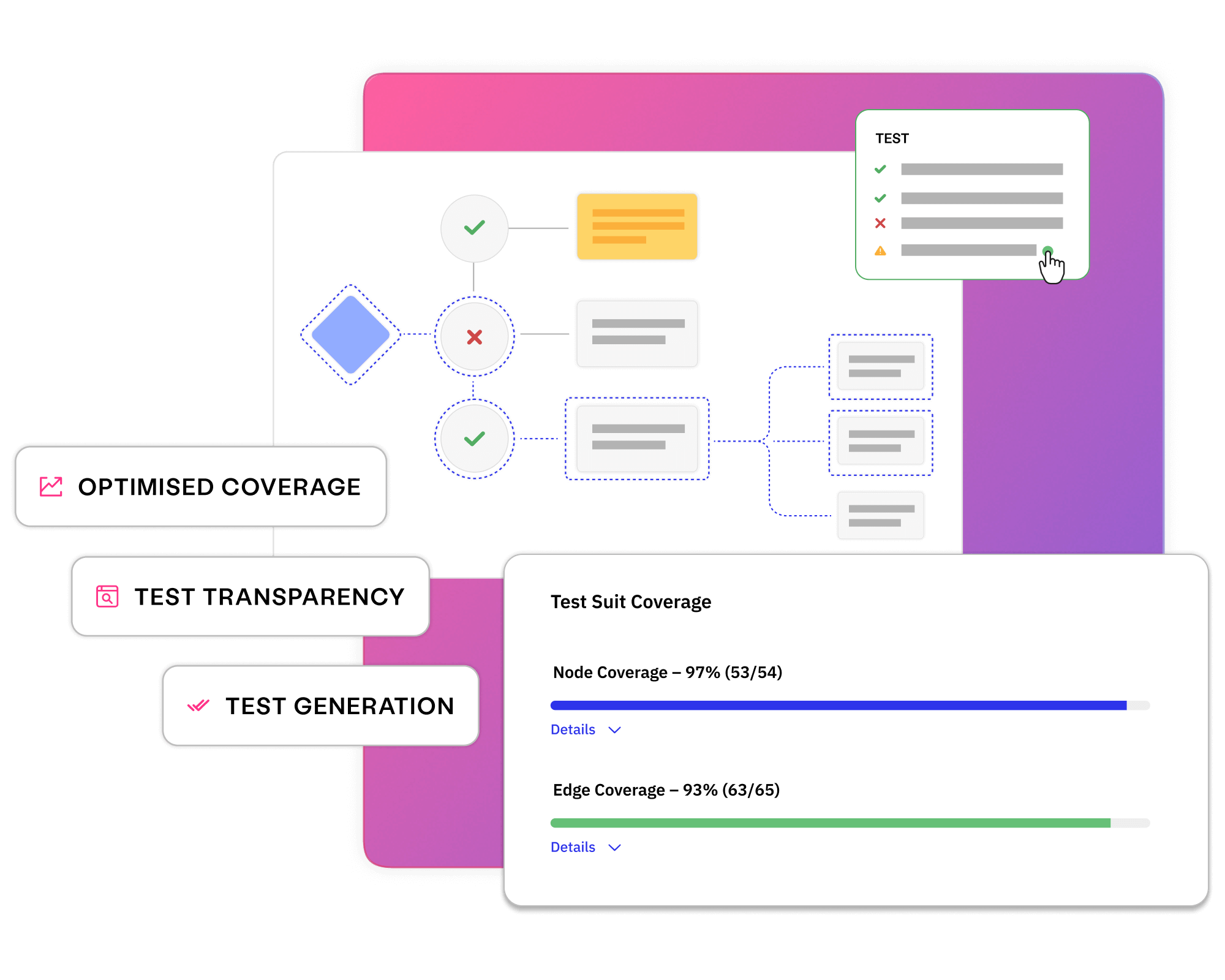

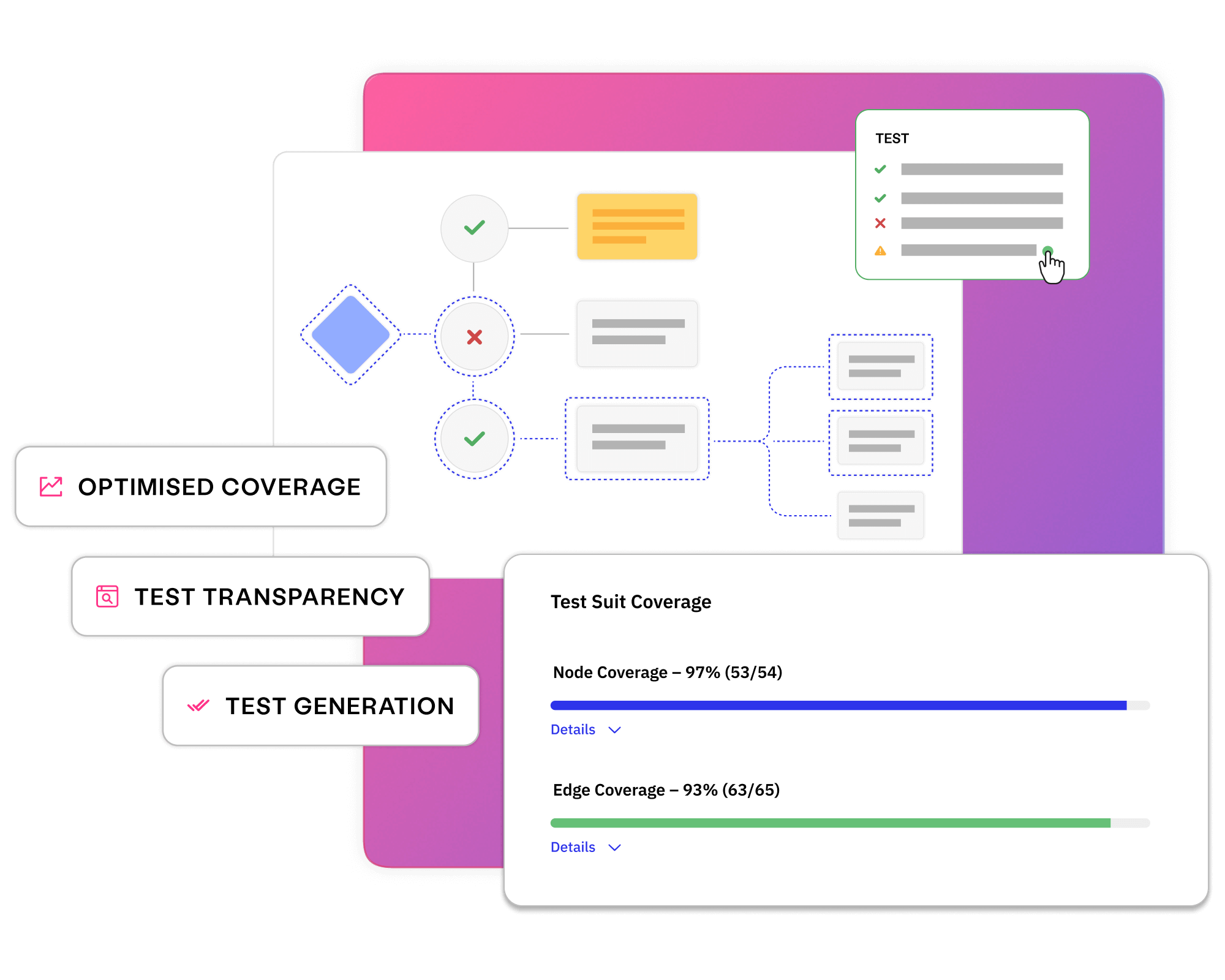

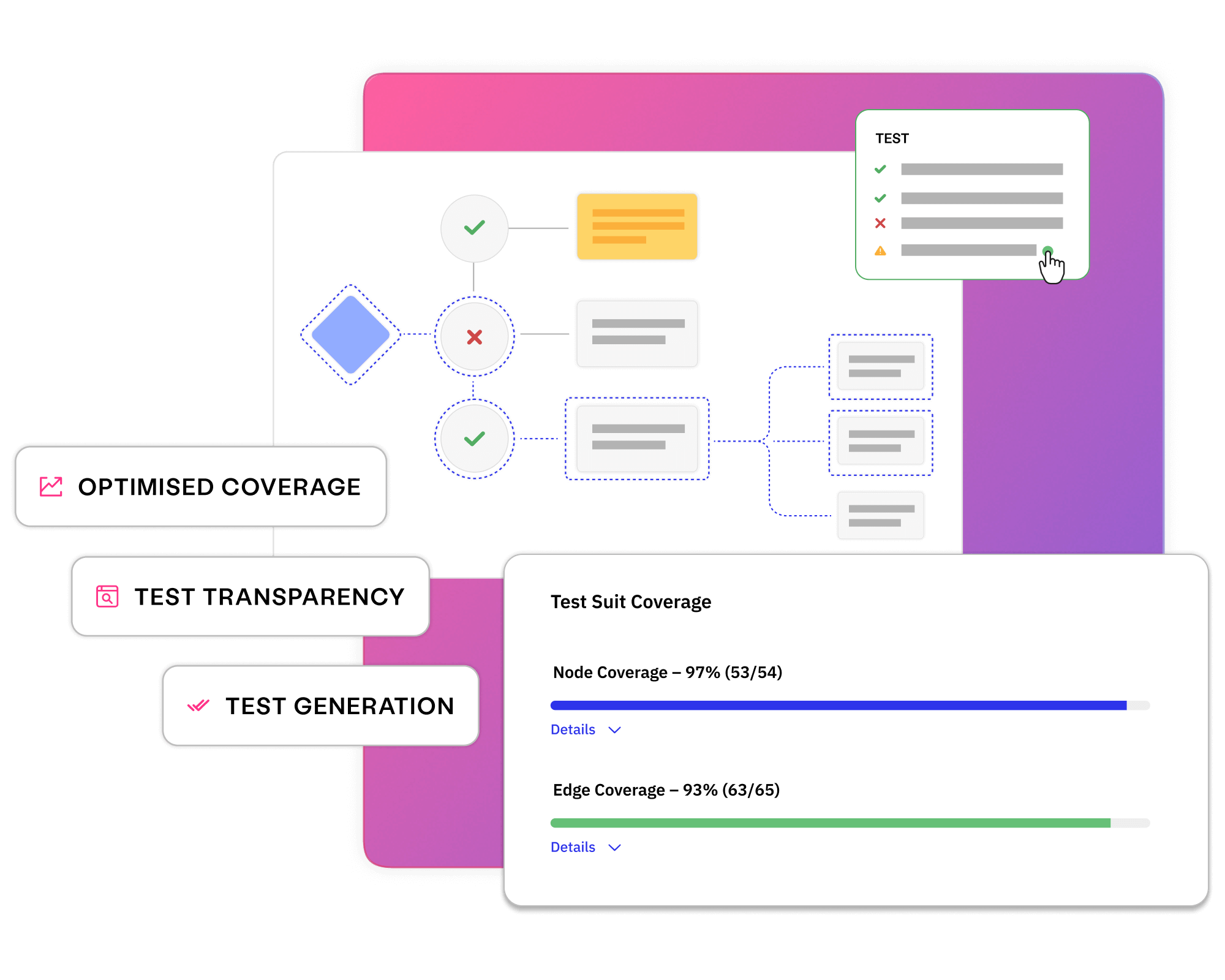

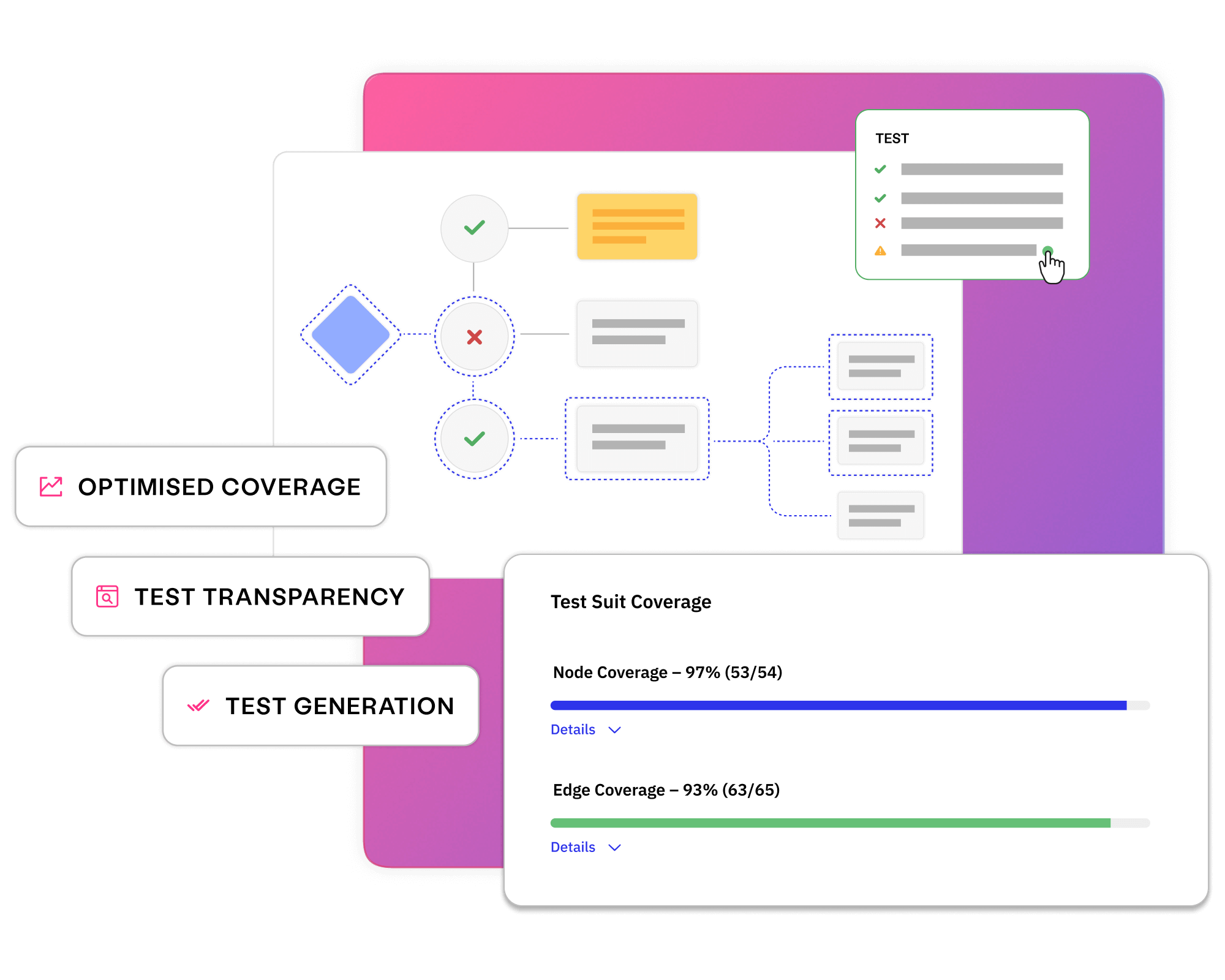

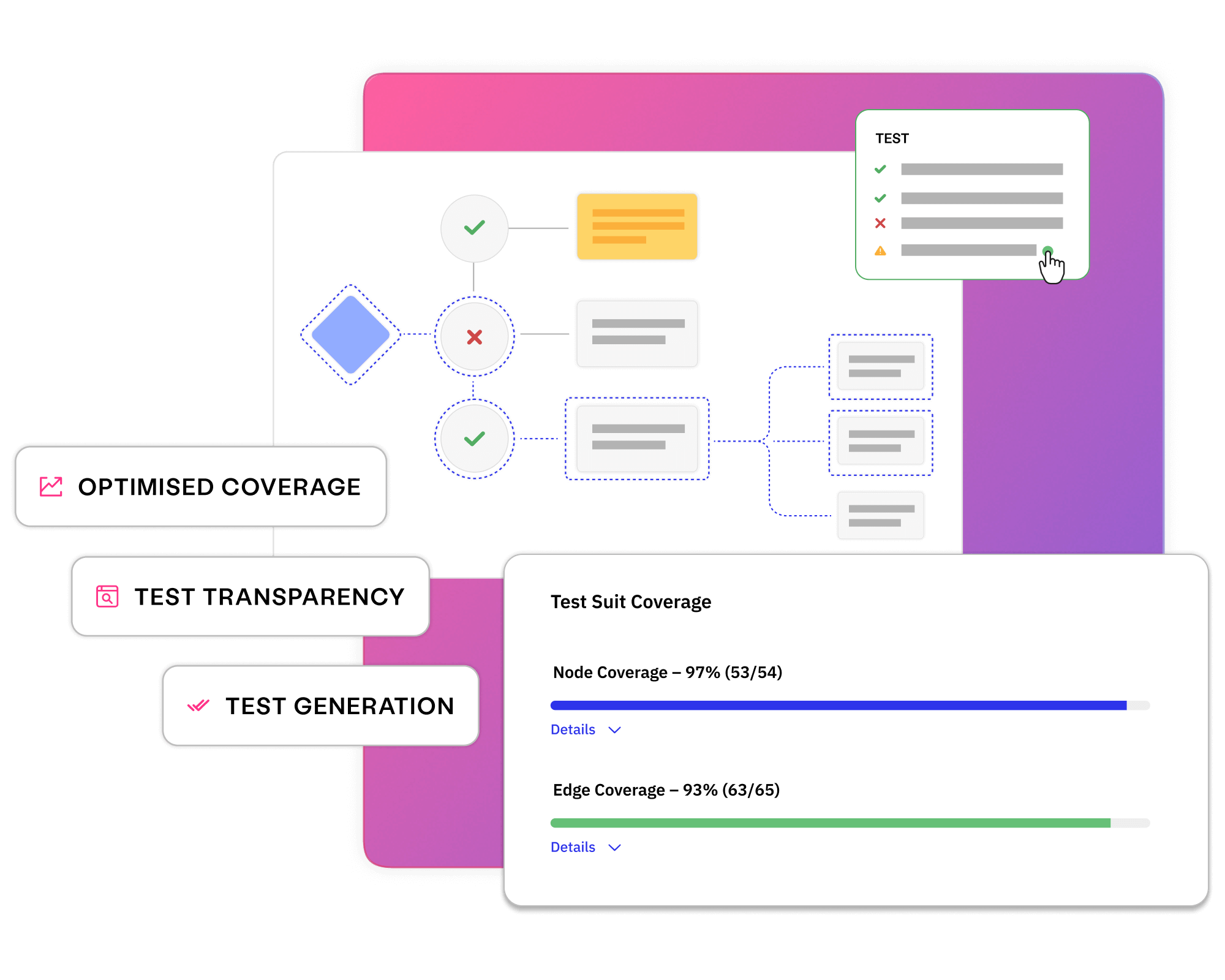

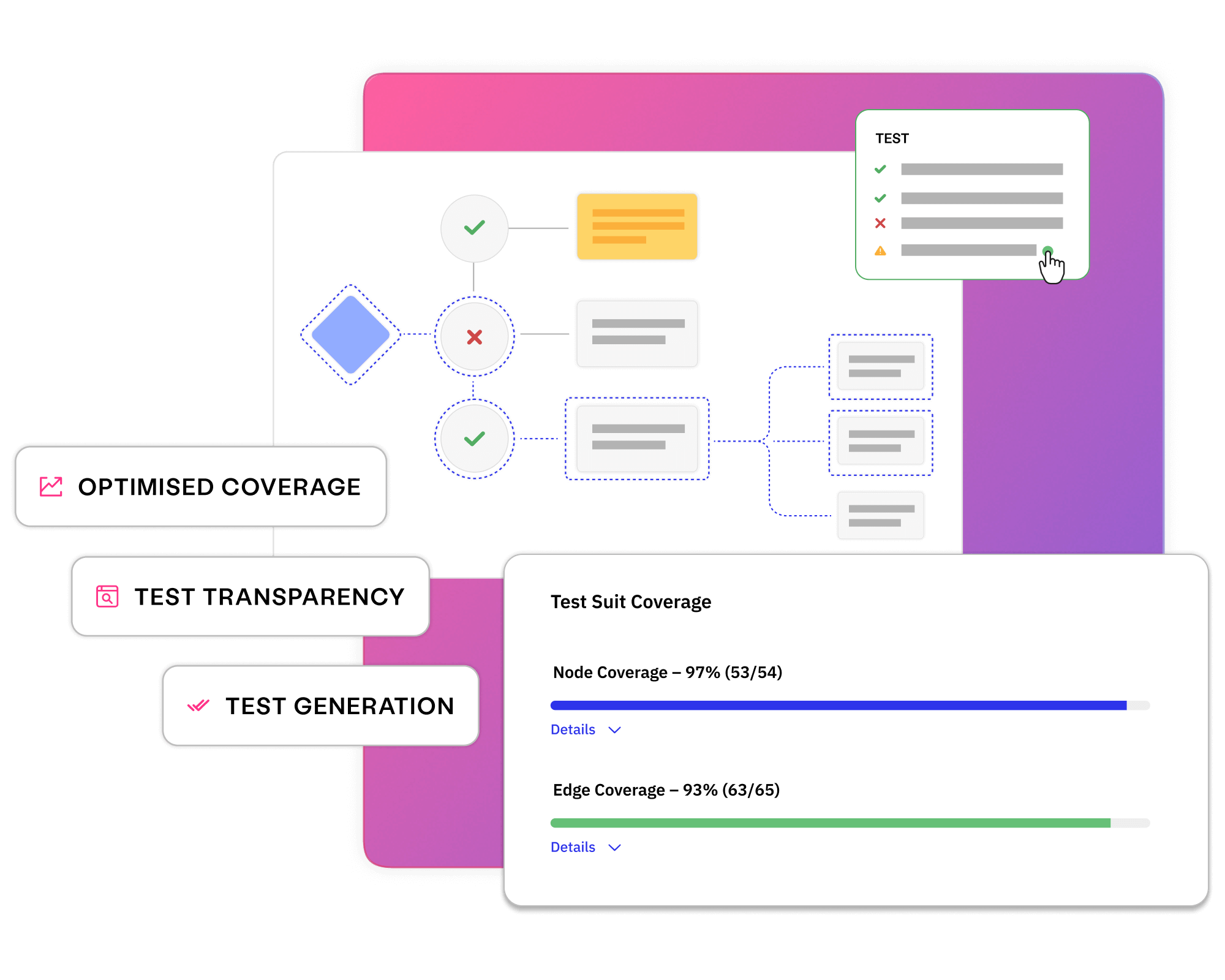

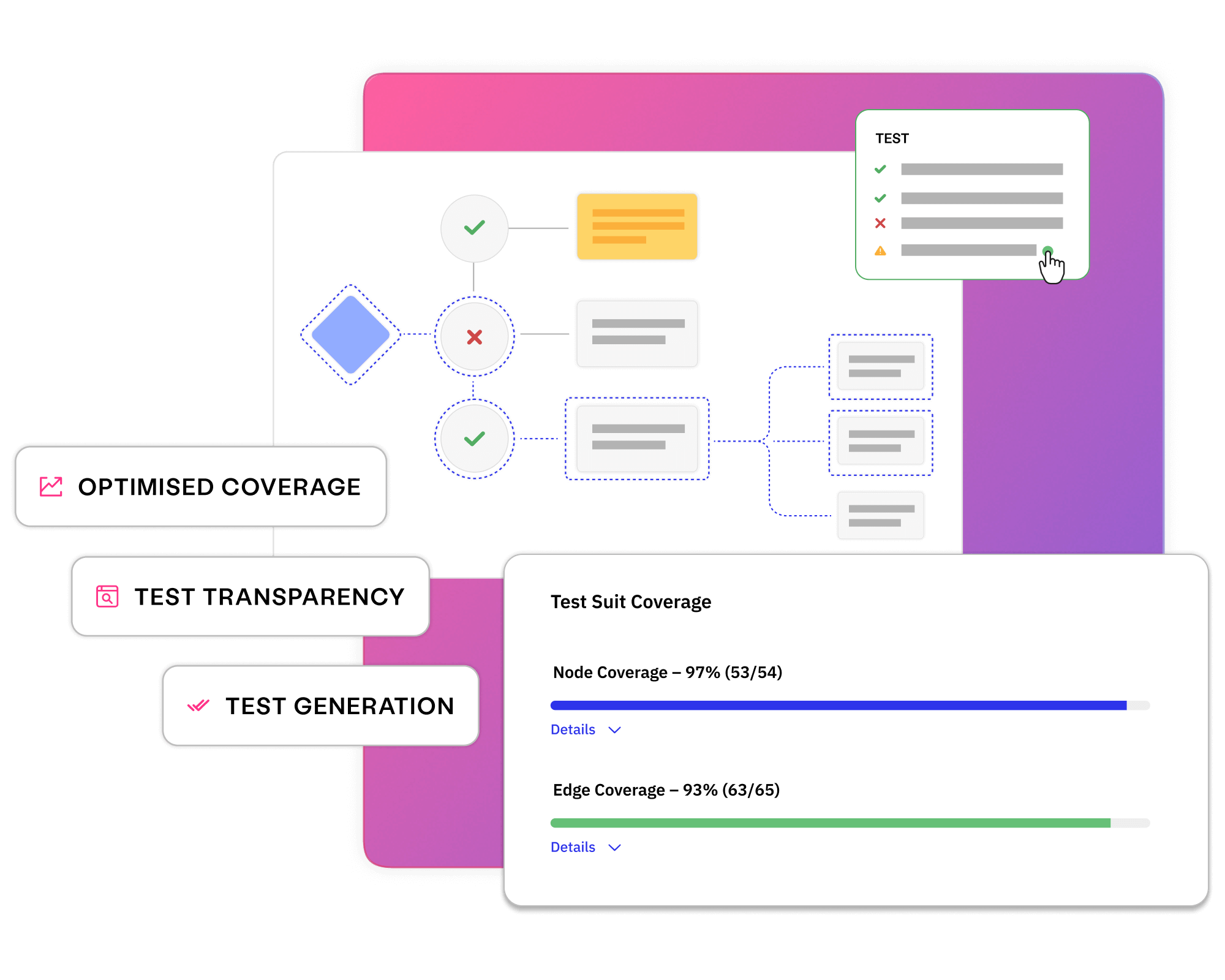

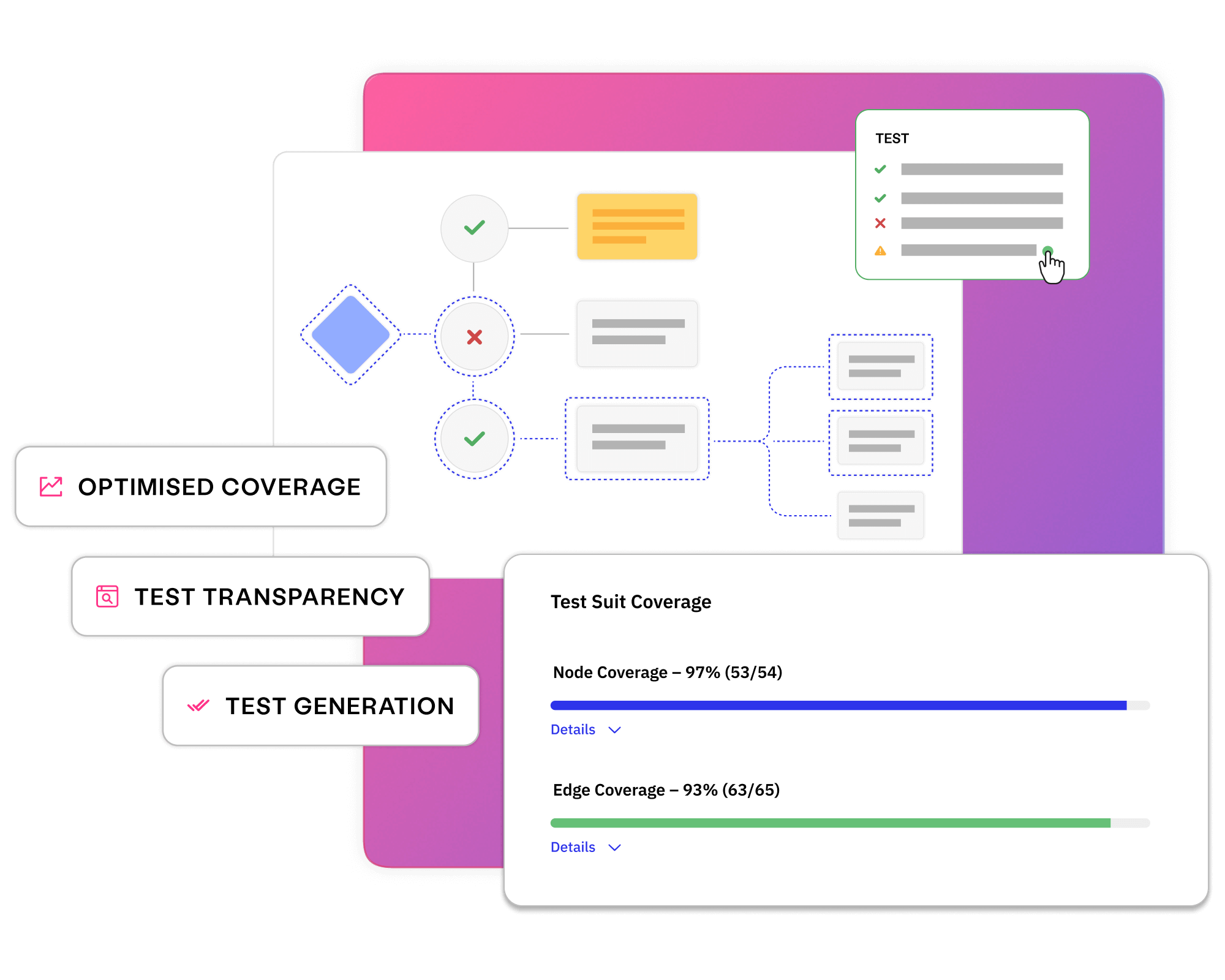

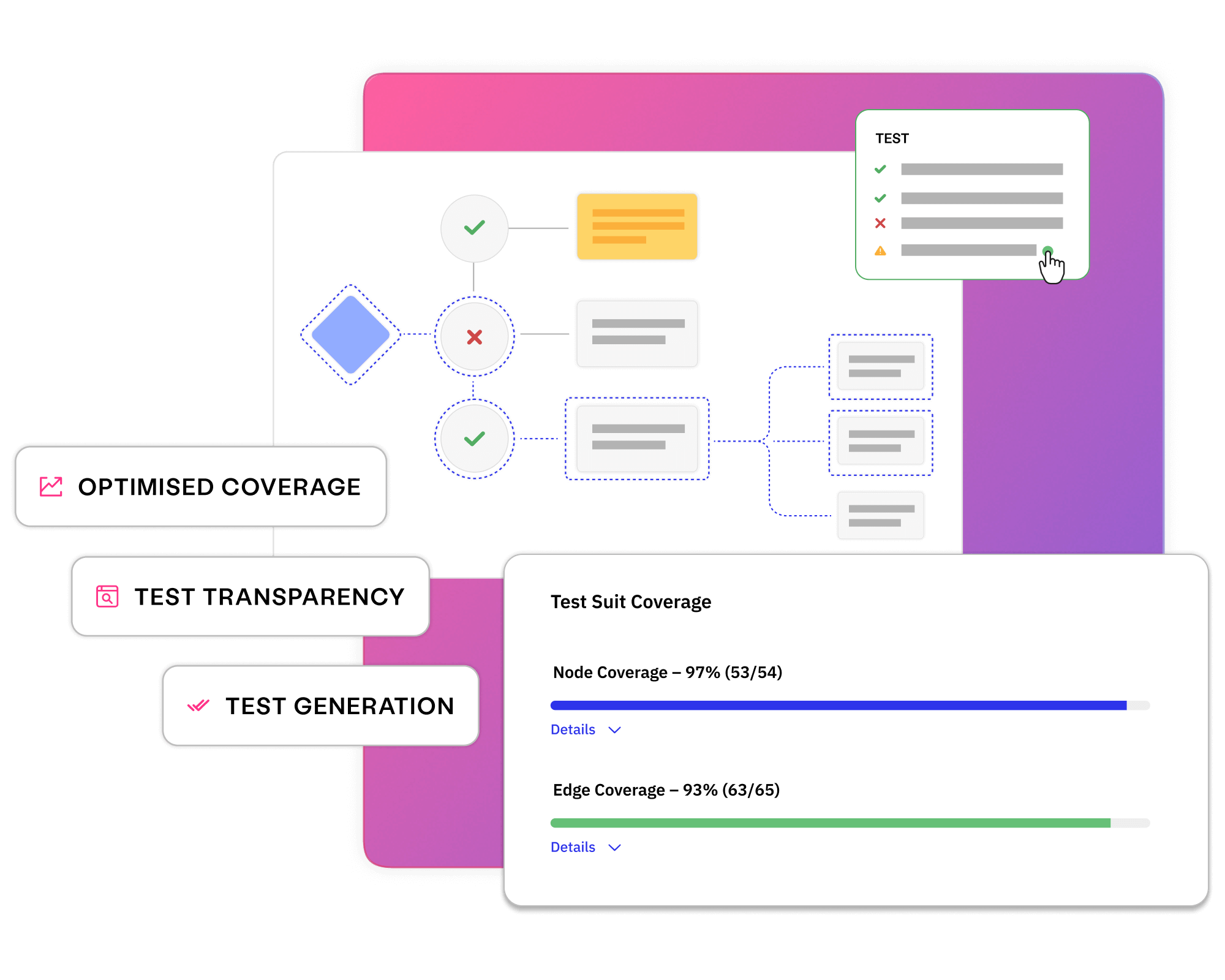

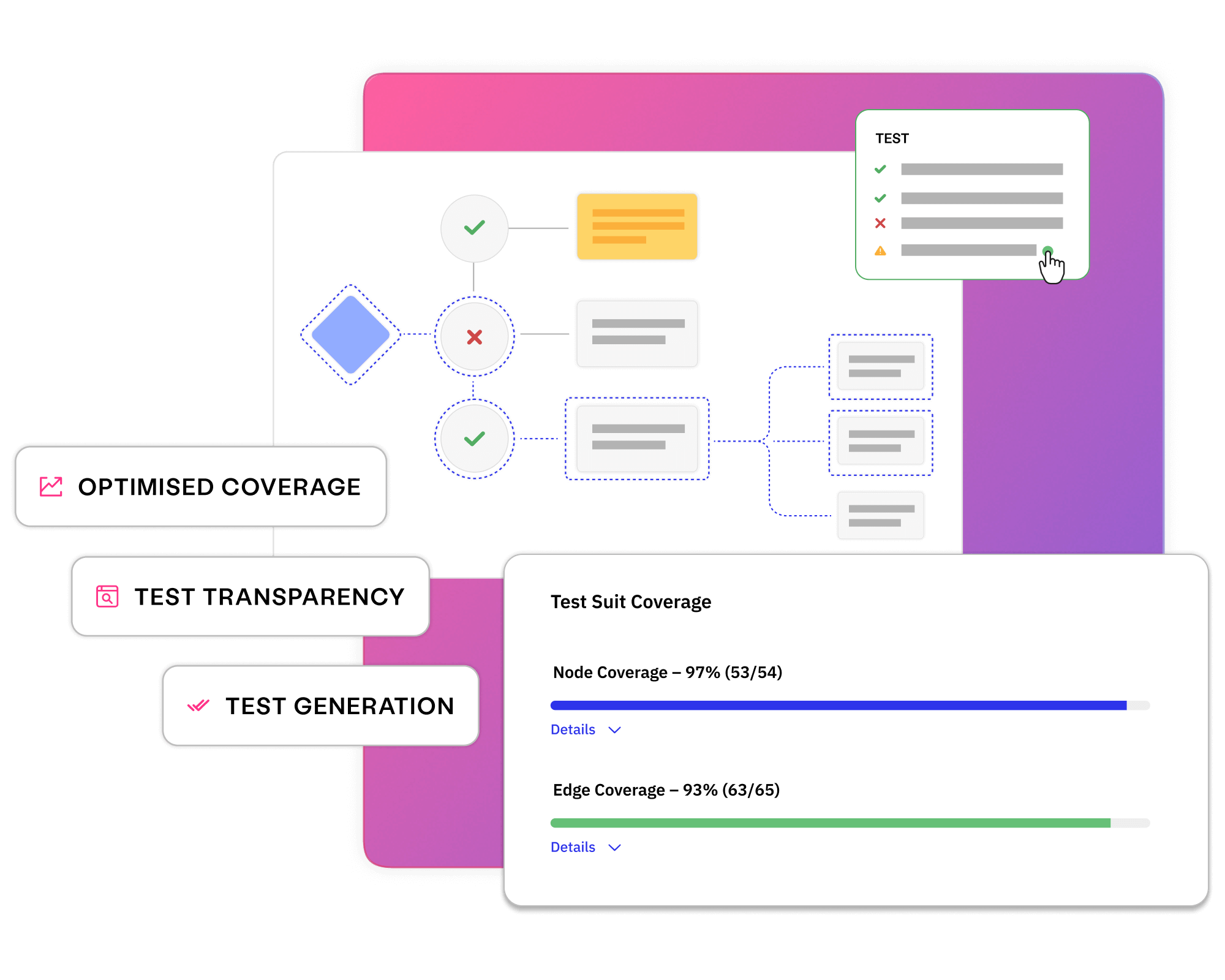

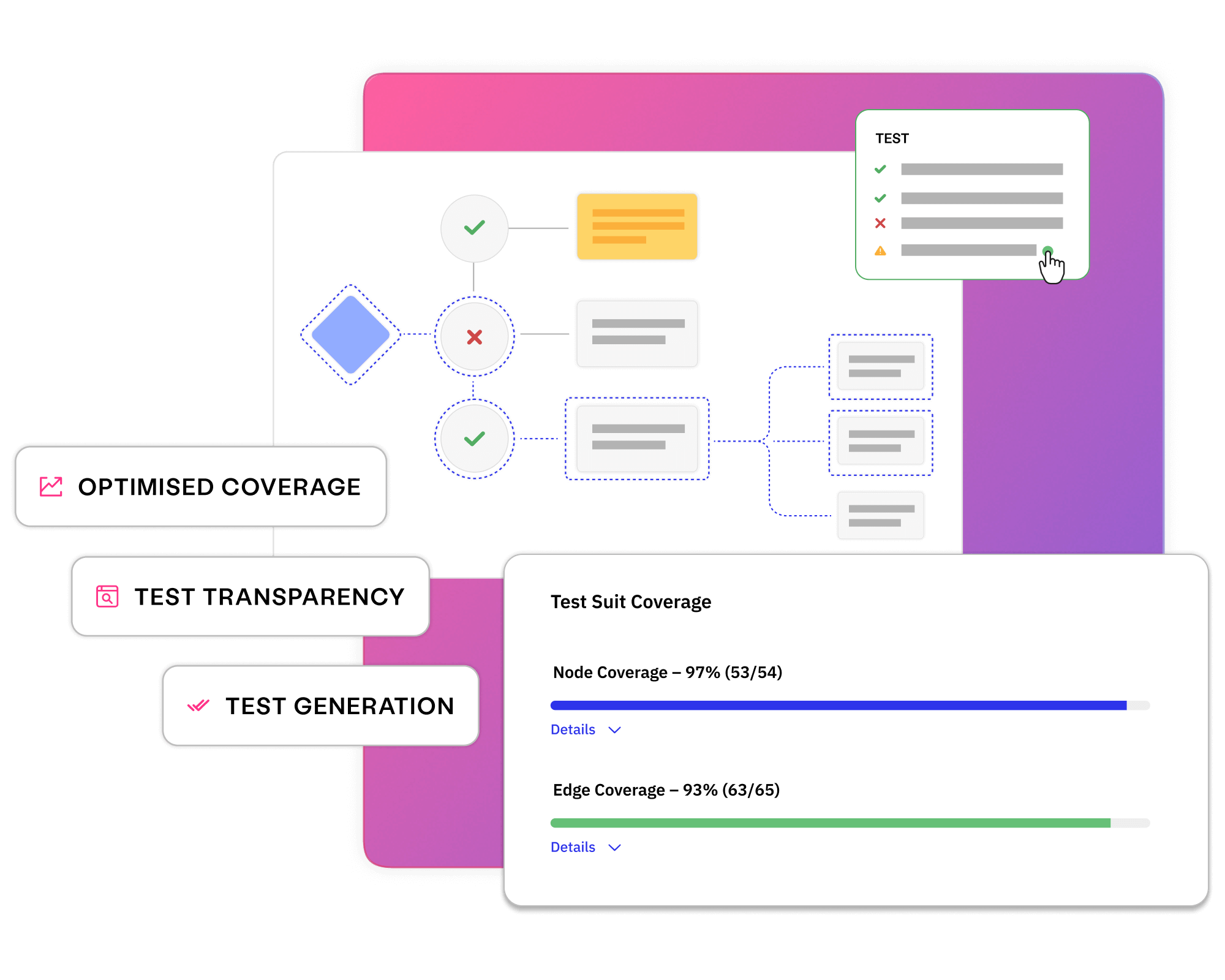

Measuring test coverage and knowing that every requirement has been covered provides release confidence and stability.

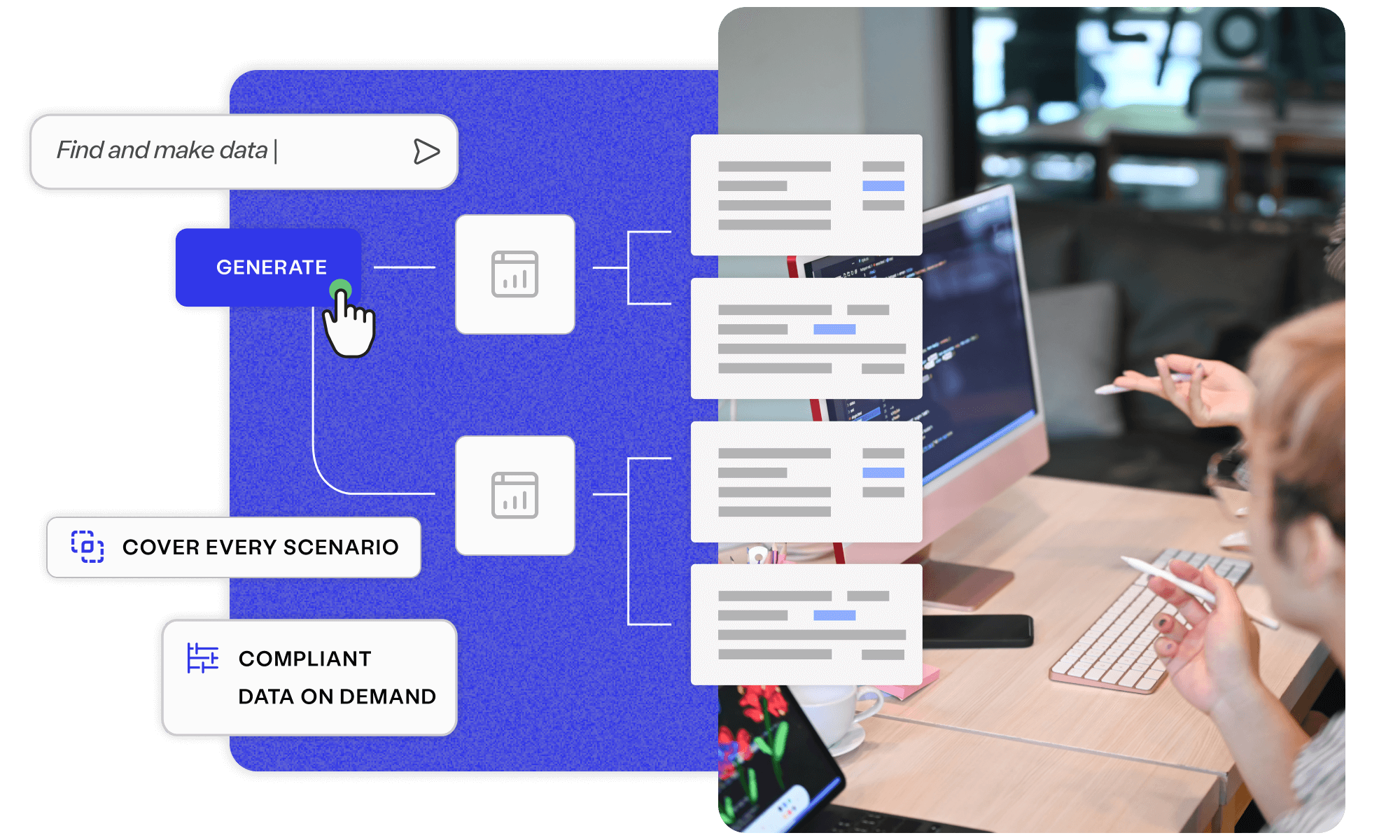

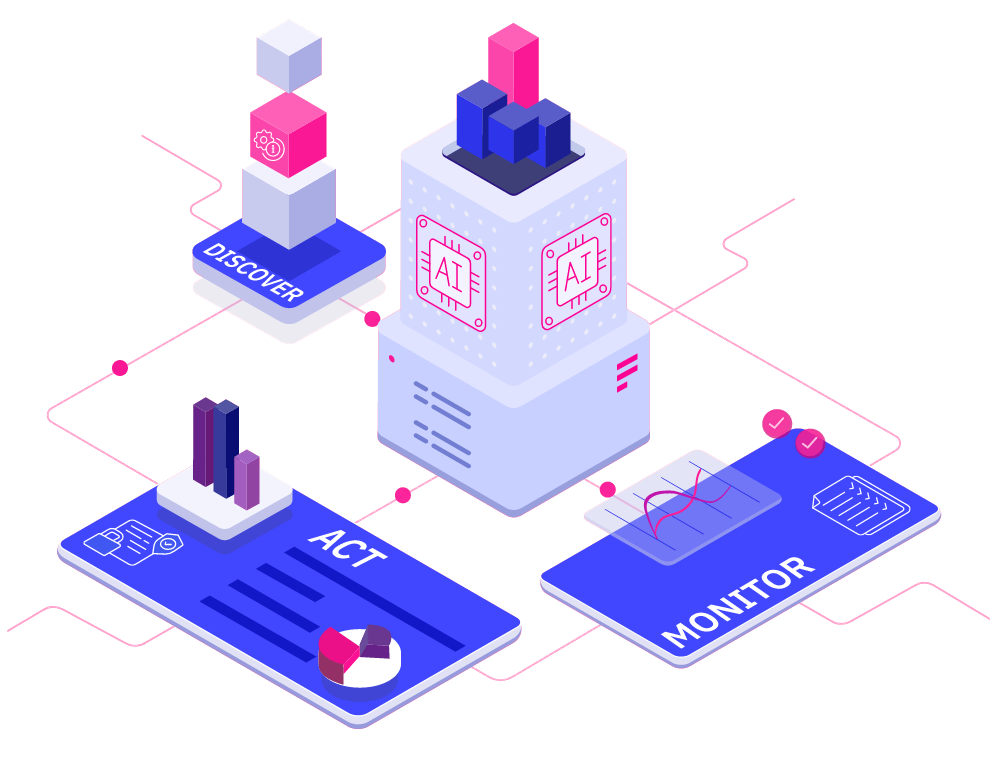

Uncover smarter test data with Curiosity’s all-in-one, AI-accelerated platform, which offers integrated, secure, and intuitive tools to simplify complex data landscapes and overcome test data management challenges.

Explore platform

Across industries, some of the world’s largest enterprises use the Curiosity platform to shorten the time they need to deliver superior software.

Visual models break our system down in reusable chunks that generate the functional and visual tests we need. We can further assemble these reusable building blocks automatically as new courses are checked in, generating rigorous tests.

Greg Sypolt

VP of Quality Engineering

Read a resource, or meet with an expert, to learn how you can test rigorously and develop rapidly from clear and collaborative requirements.

Discover how EVERFI generate new tests within minutes of check-ins, all documented in clear models.

Read more about continuous testing at EVERFI.

See how you can build quality into requirements and generate tests to release with confidence.

Meet with a Curiosity expert: book now.