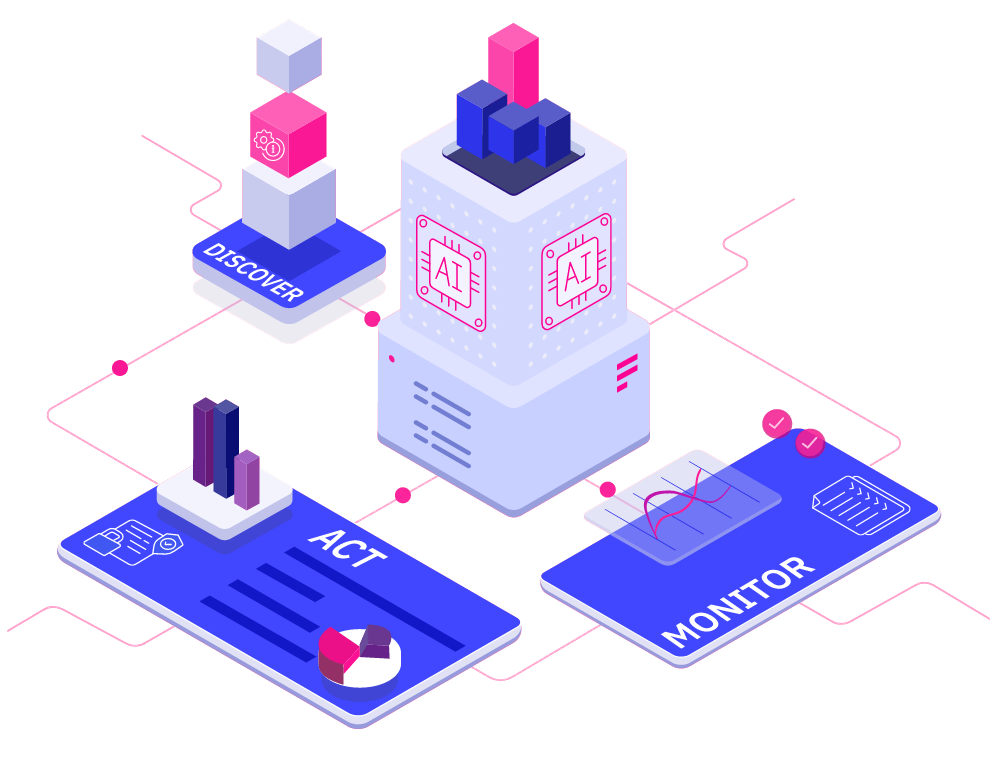

Discover Enterprise Test Data®

Simplify your complex data landscape and provide confidence and clarity at every step of your data...

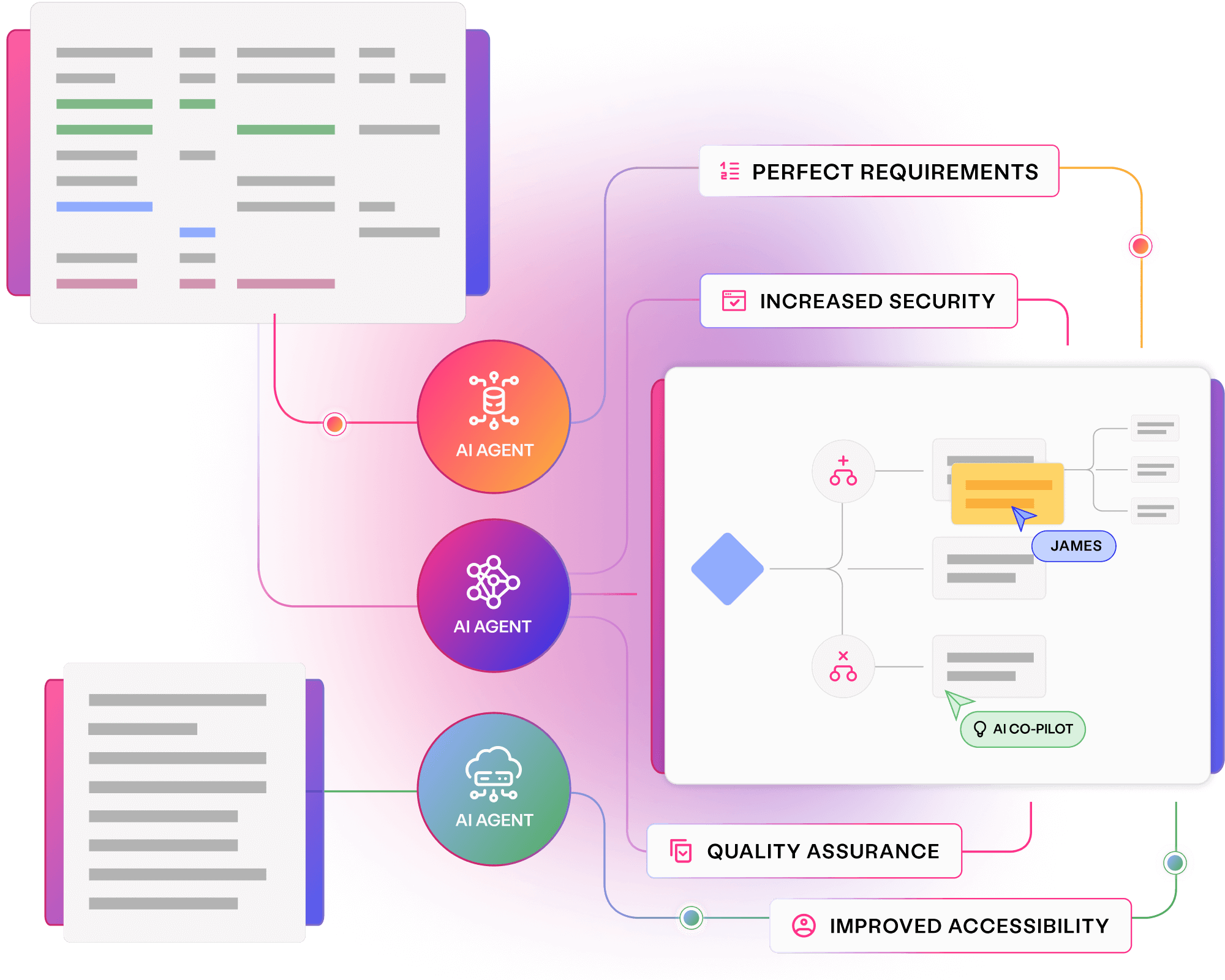

Read more about Discover Enterprise Test Data®.

AI-powered. End-to-end. Your complete test data management platform.

Explore Curiosity's collection of webinars, podcasts, blogs and success stories, covering everything from visual modelling to artificial intelligence and test data management.

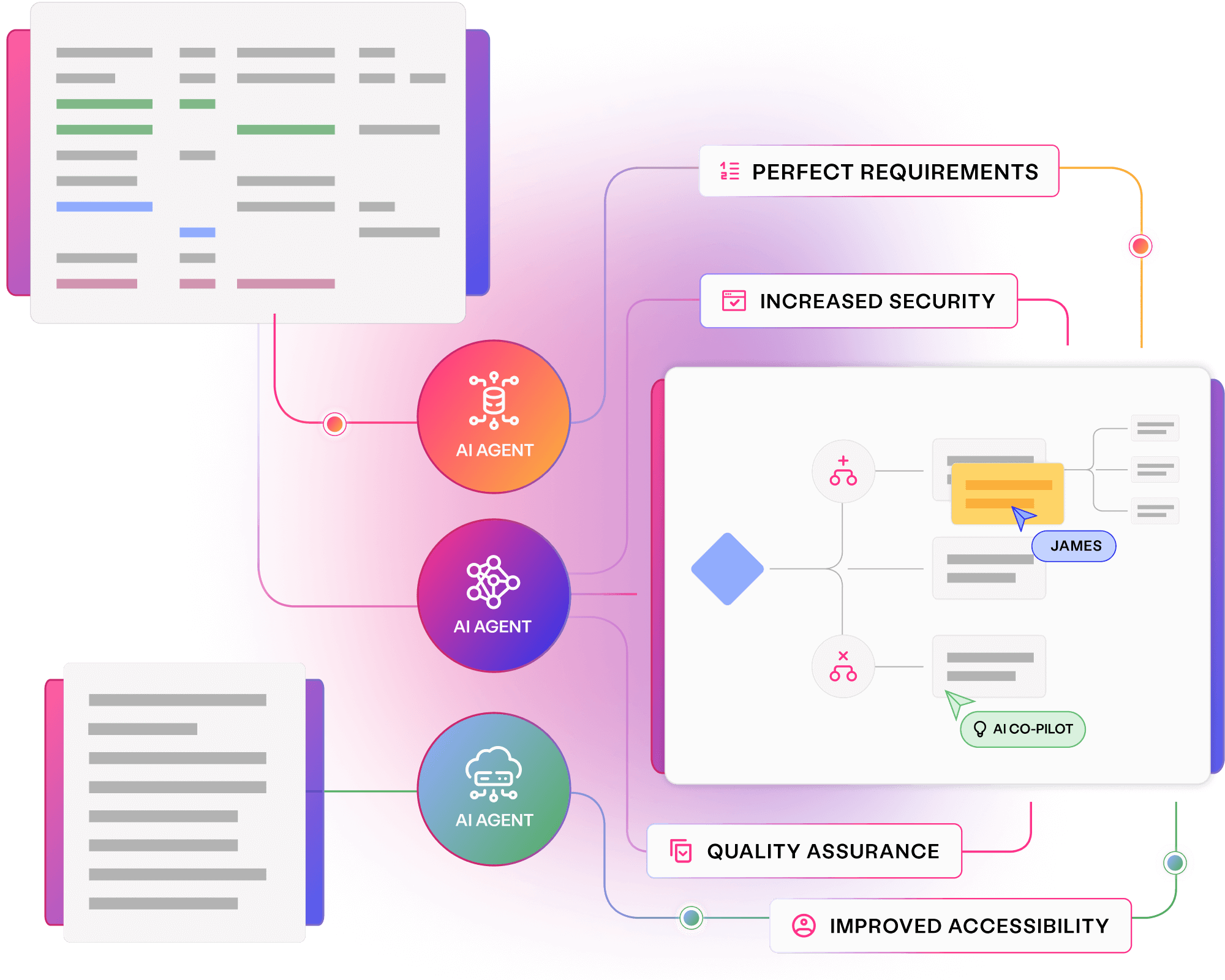

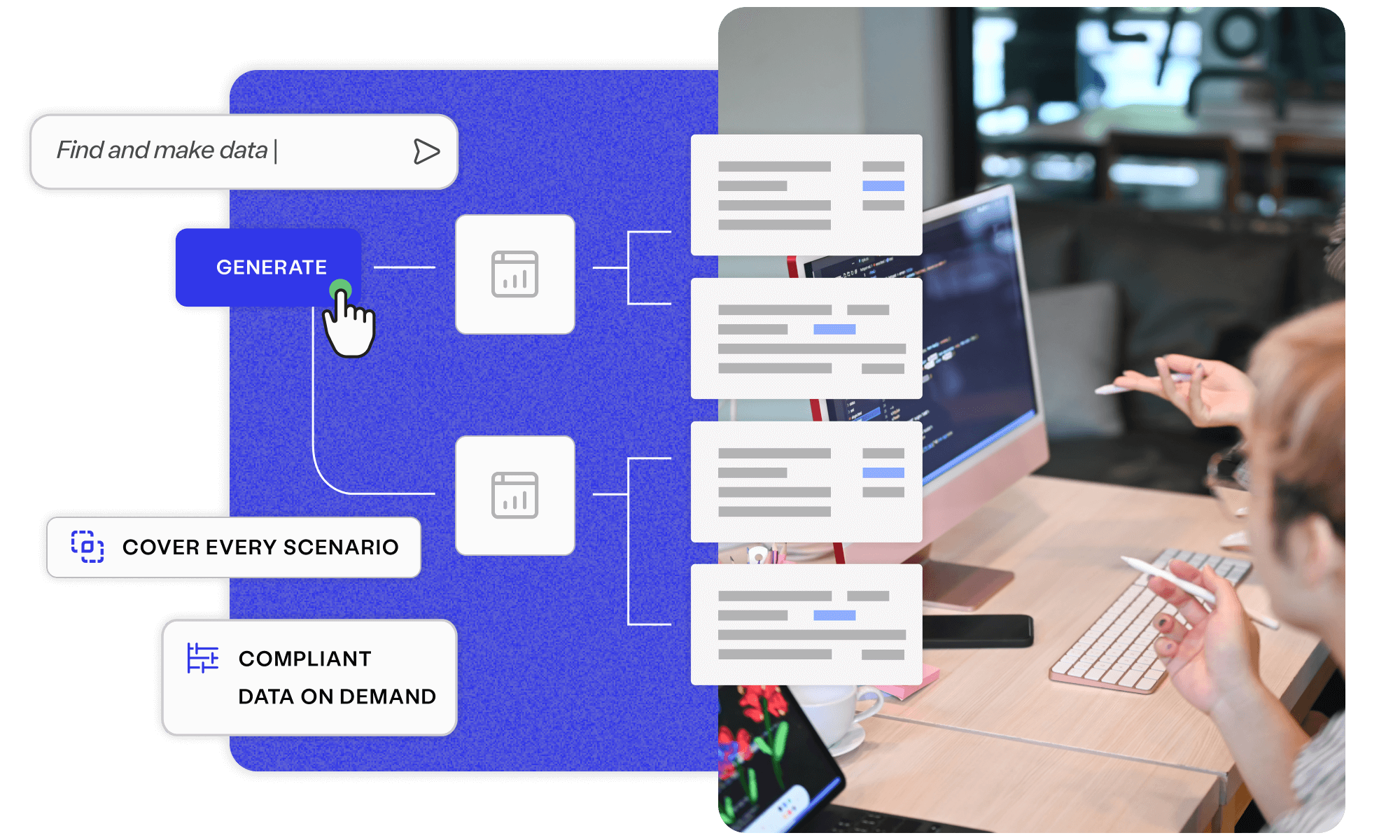

Deliver superior test data and overcome the challenges of complexity, legacy, scale, and regulation with Curiosity Software.

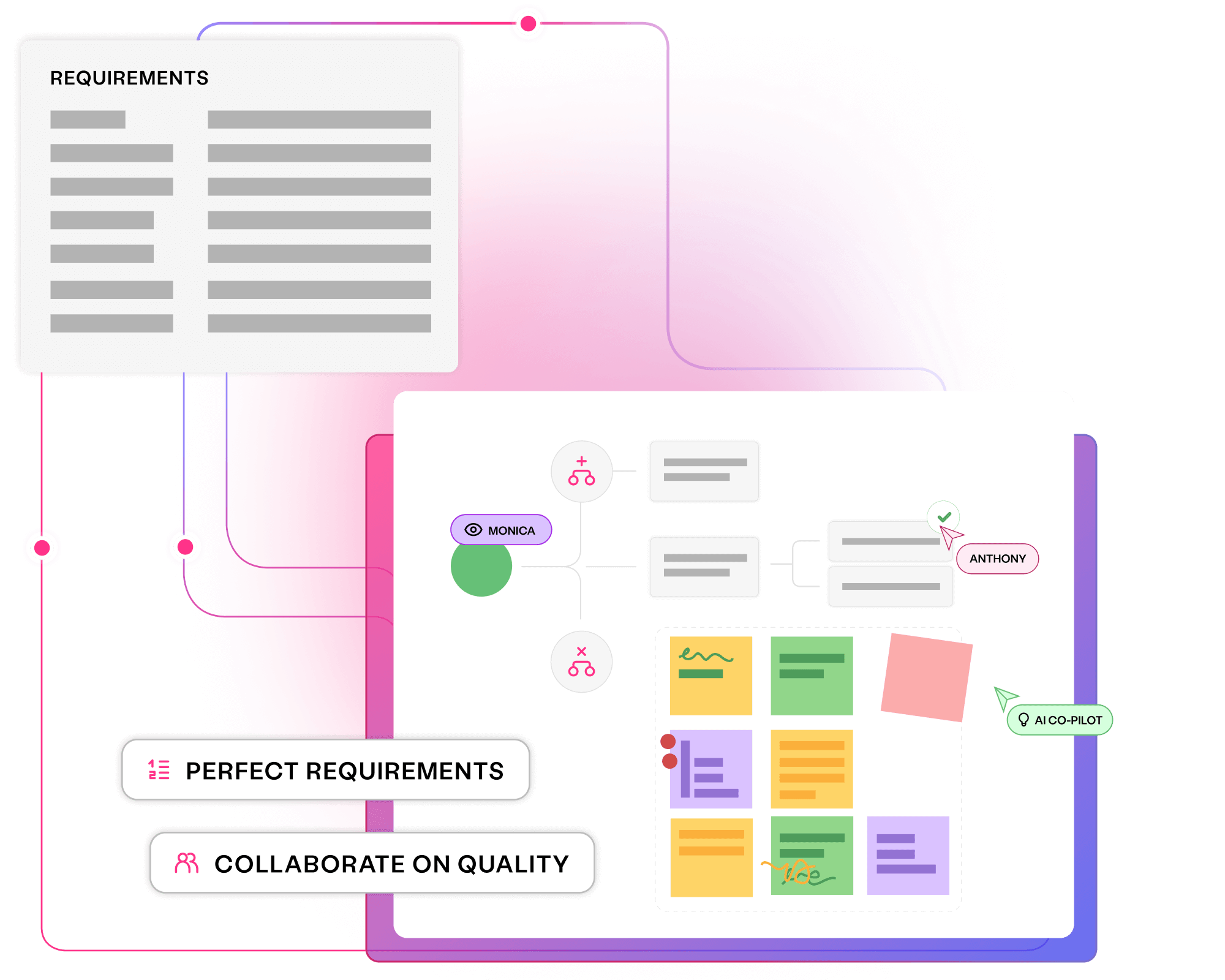

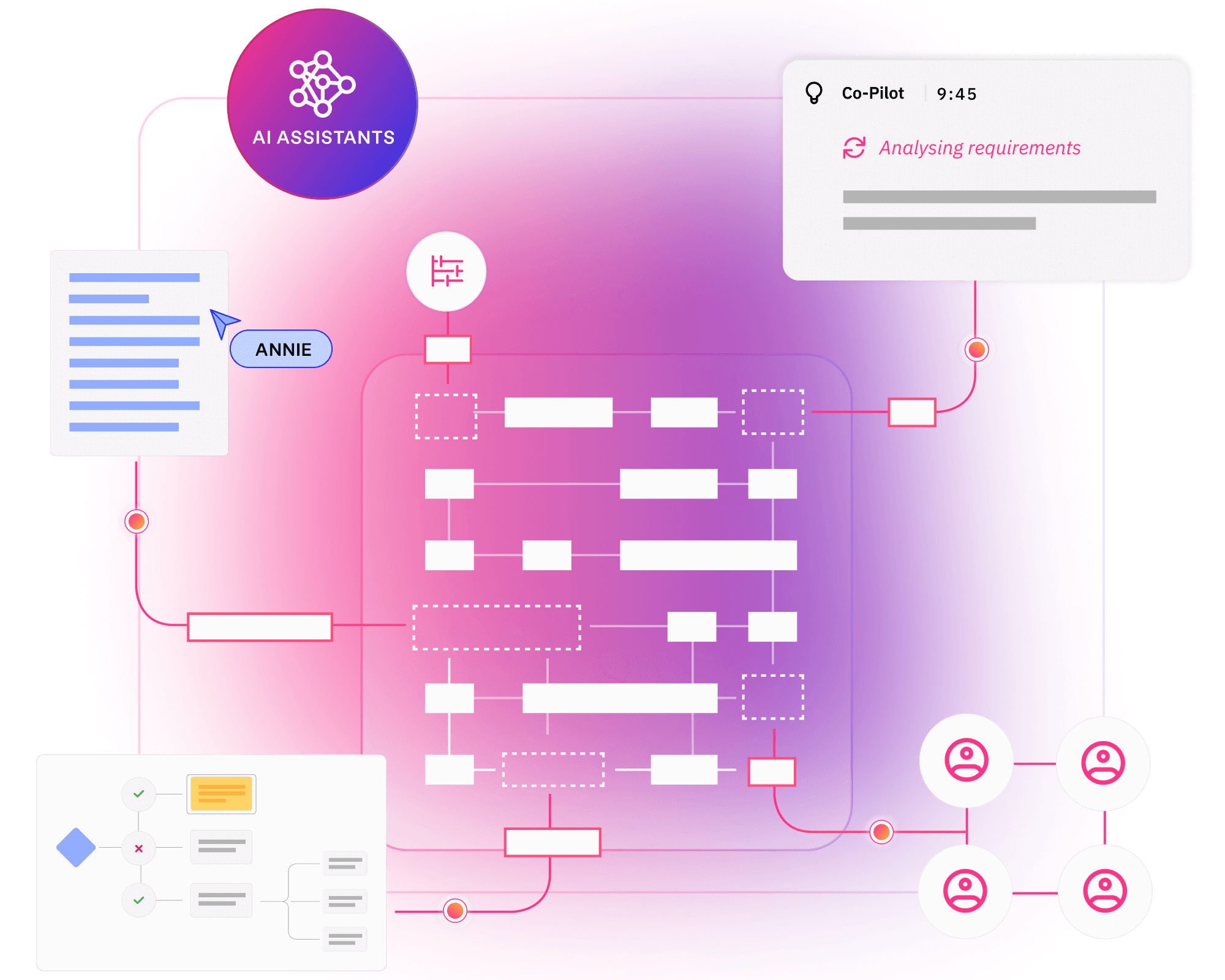

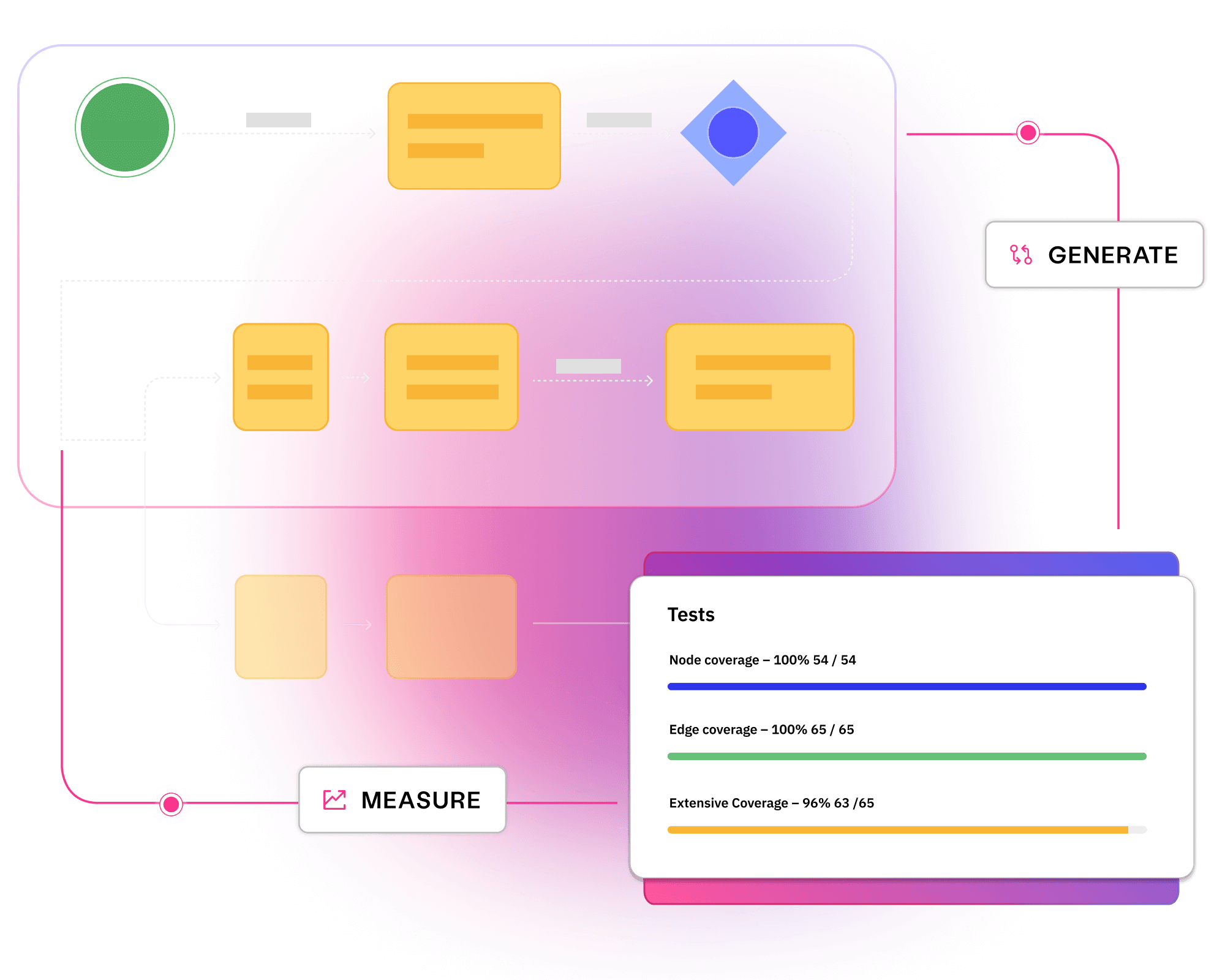

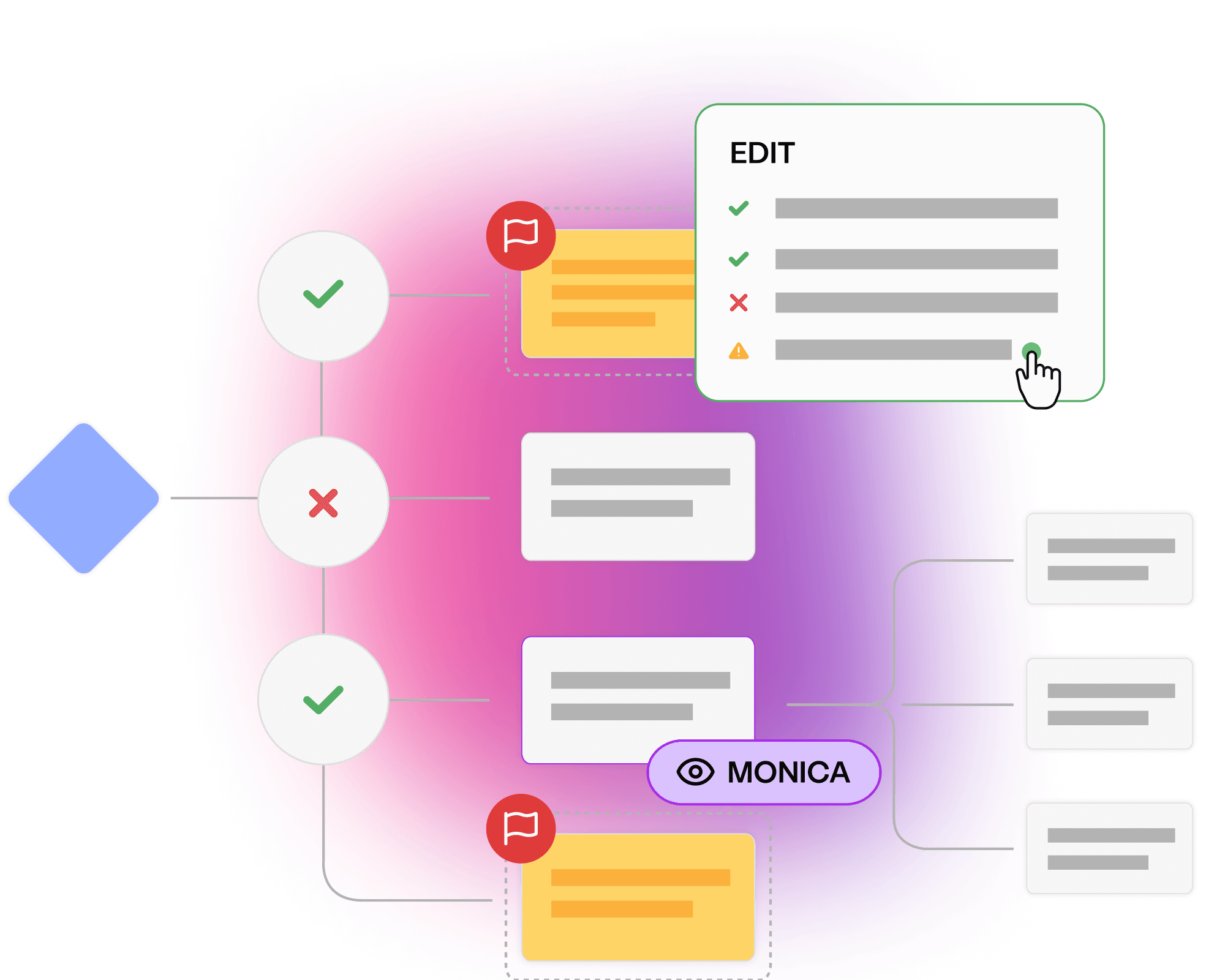

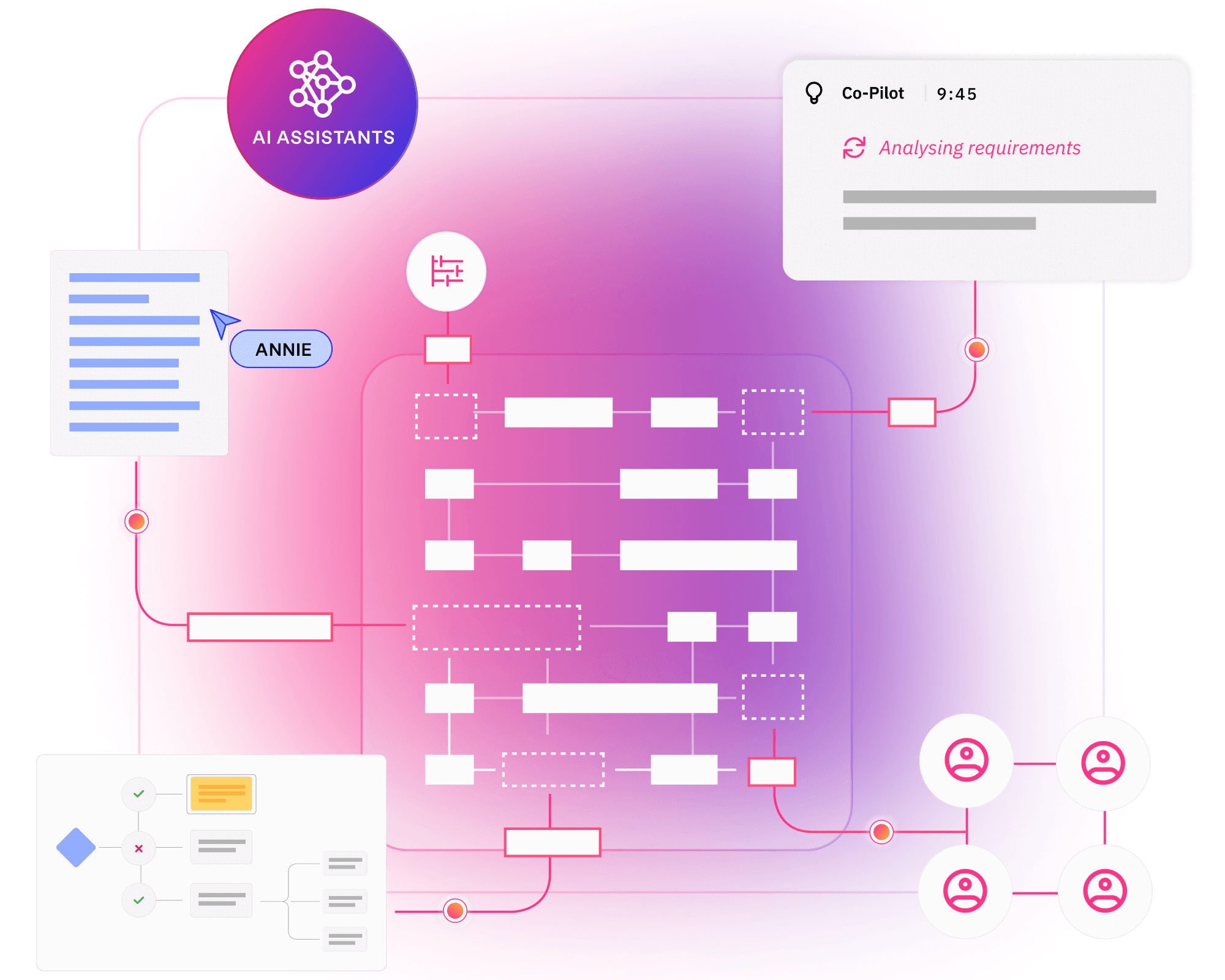

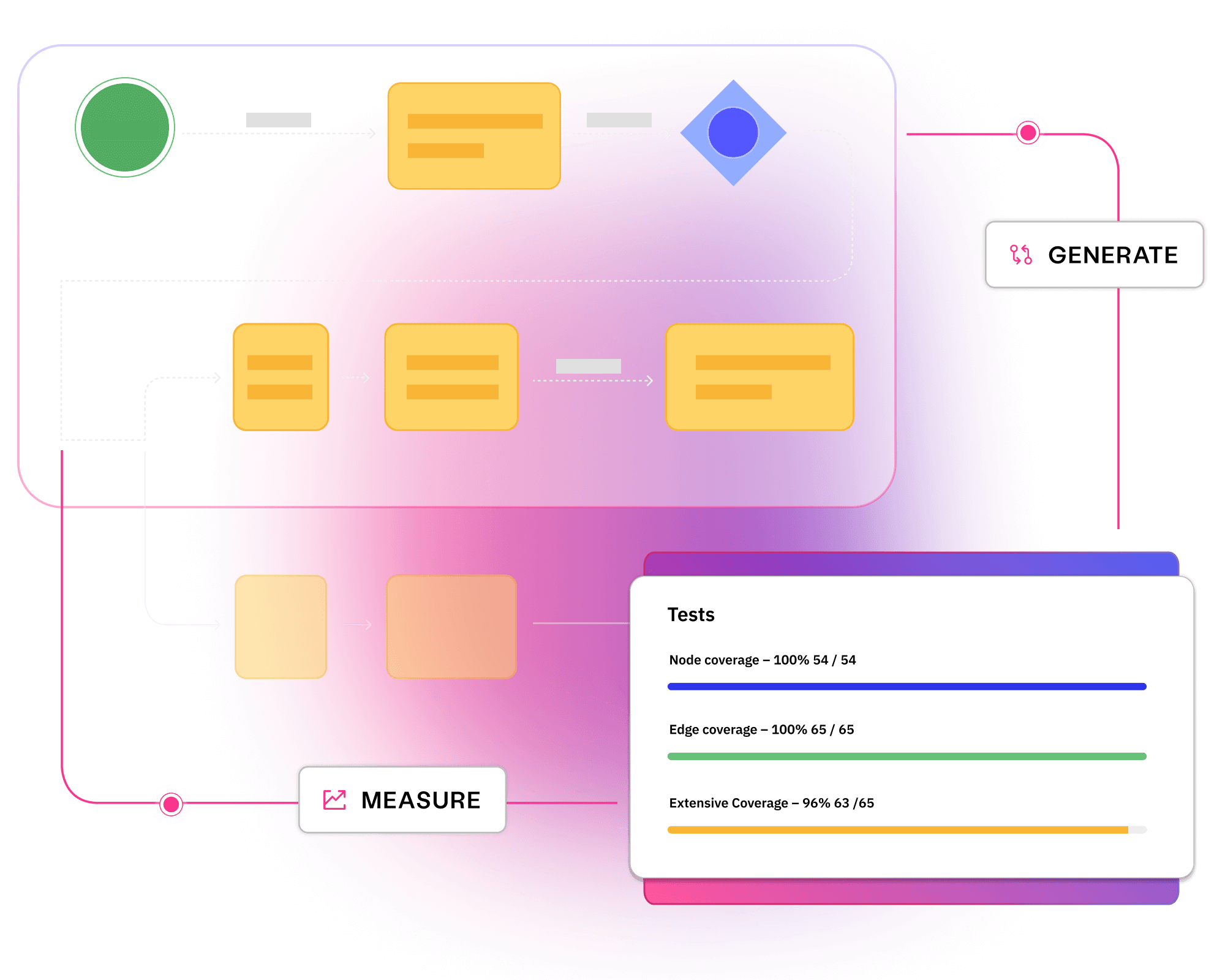

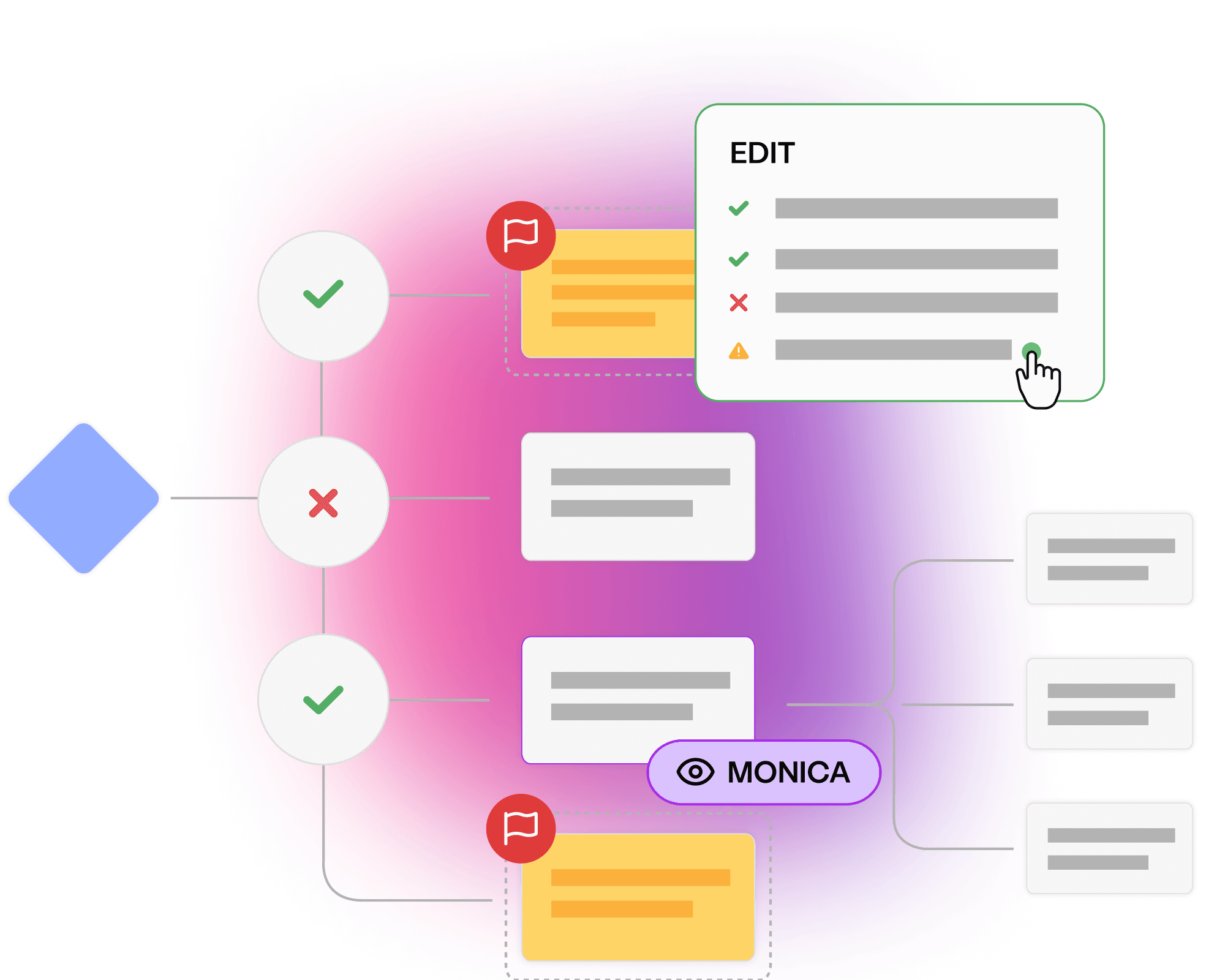

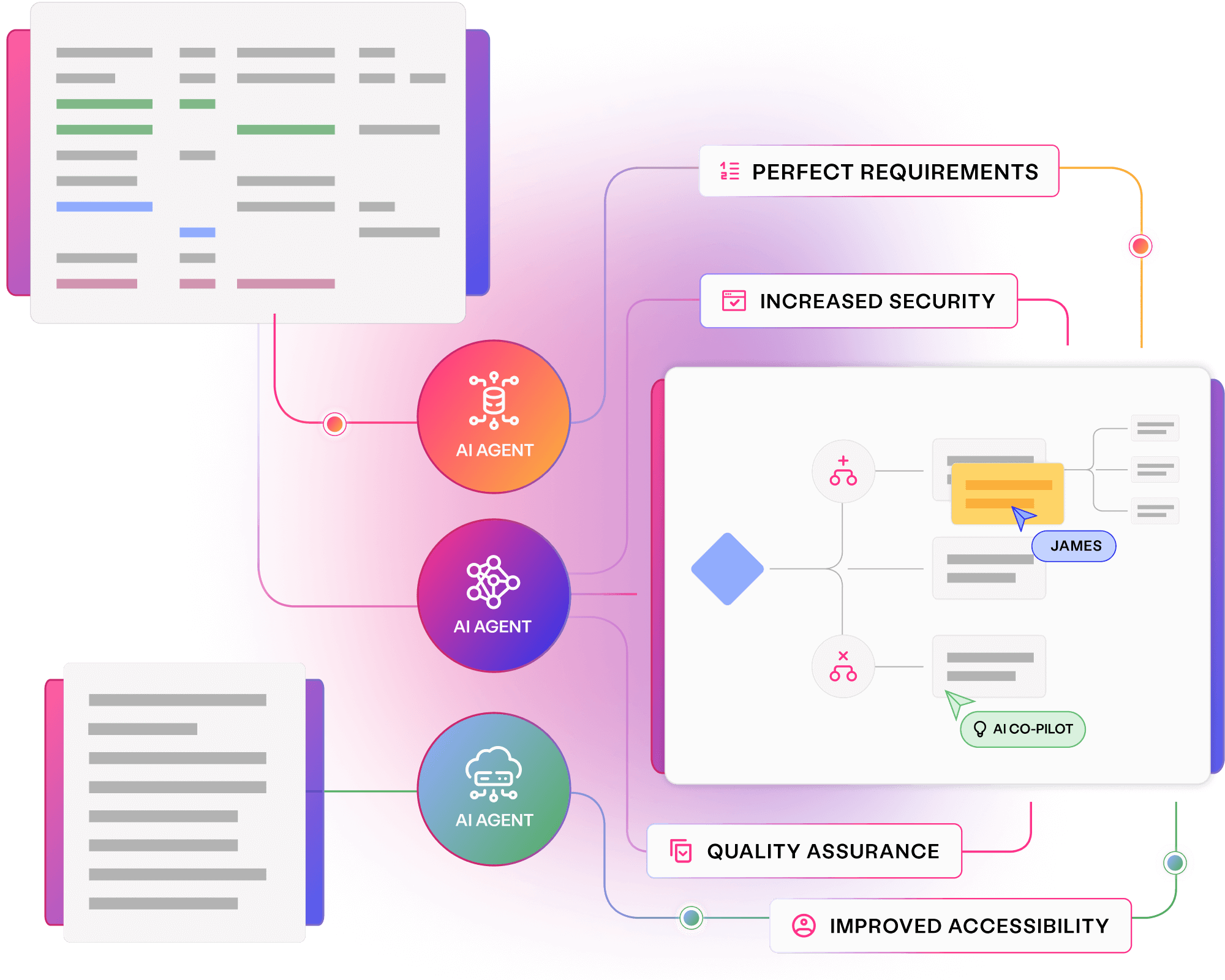

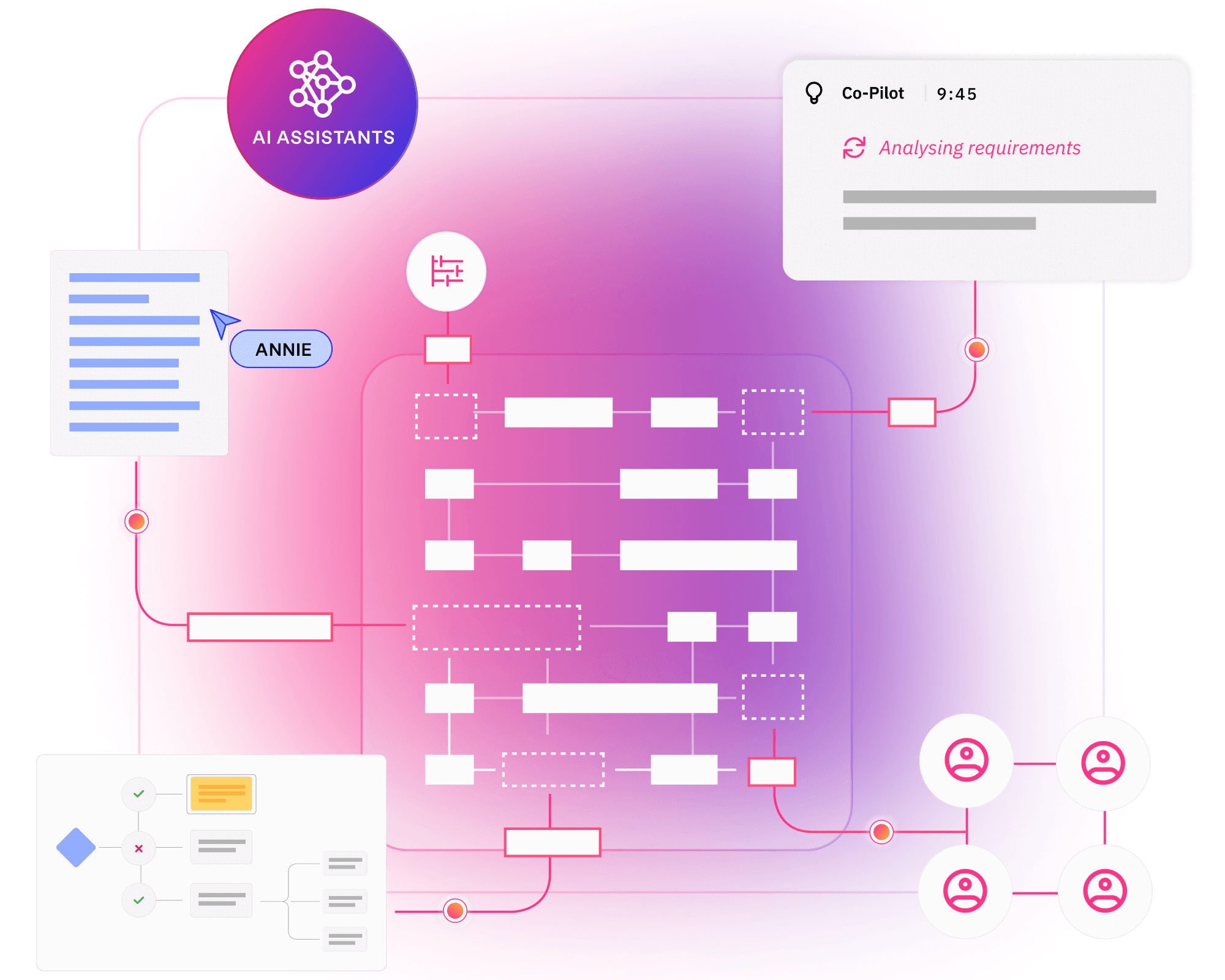

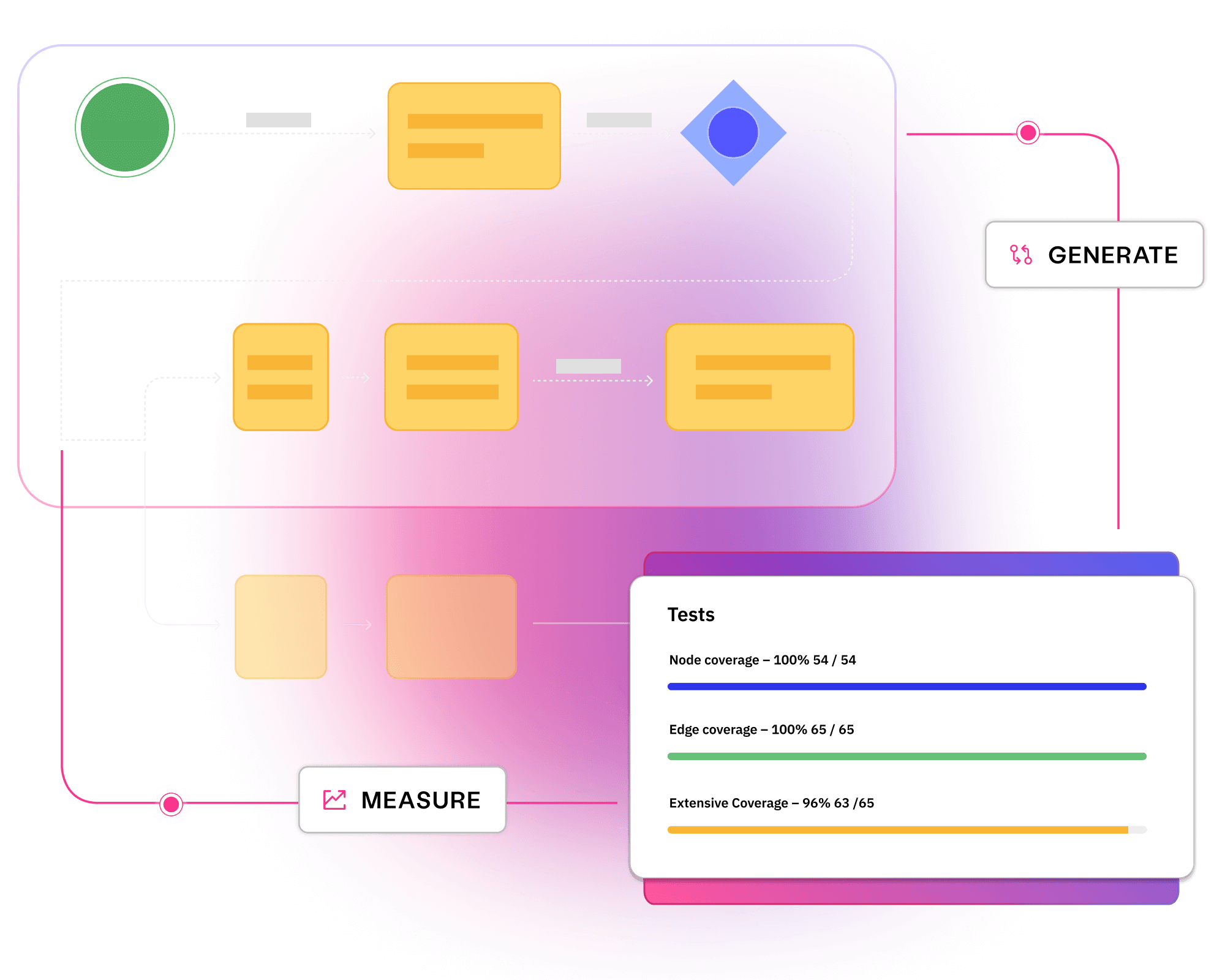

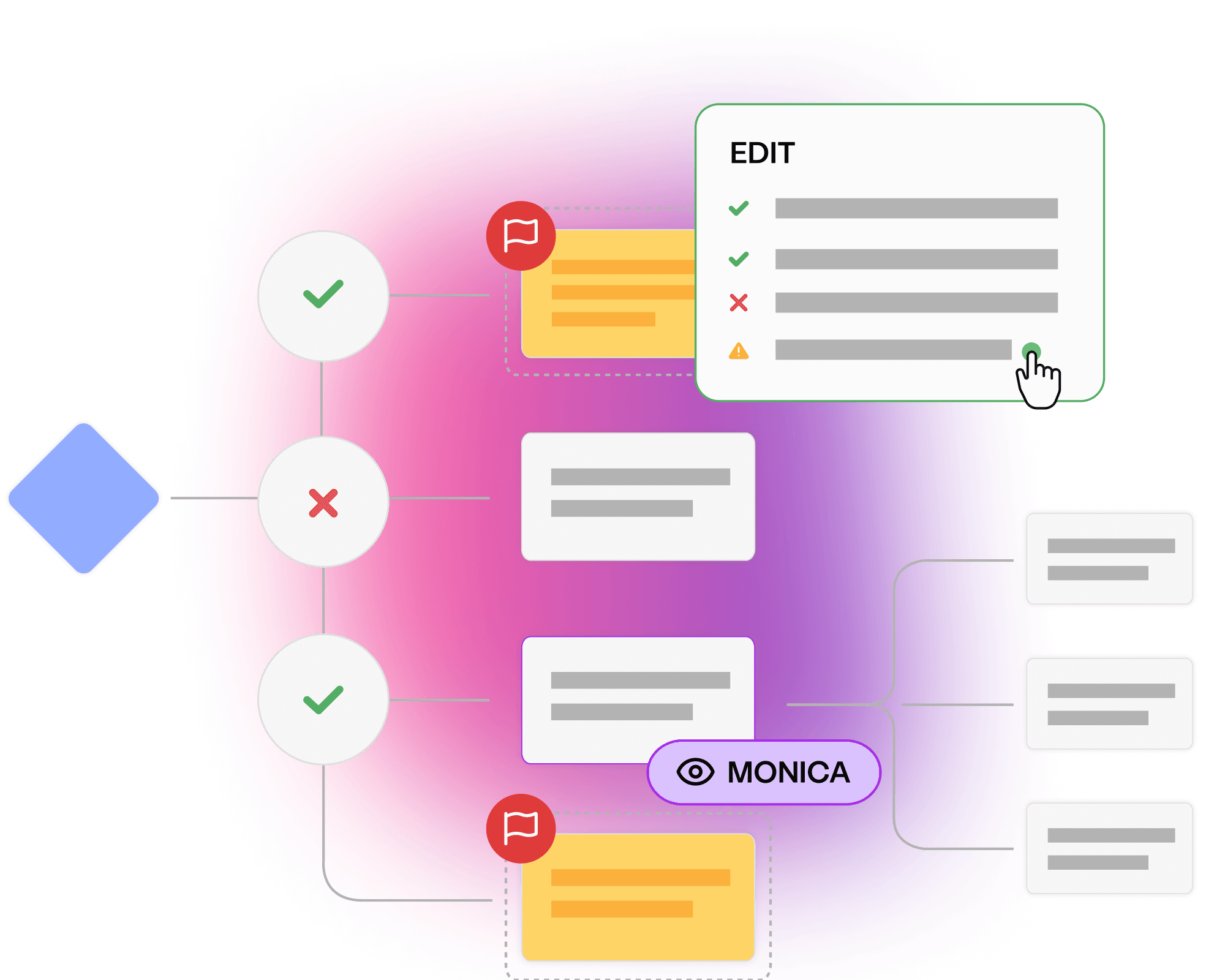

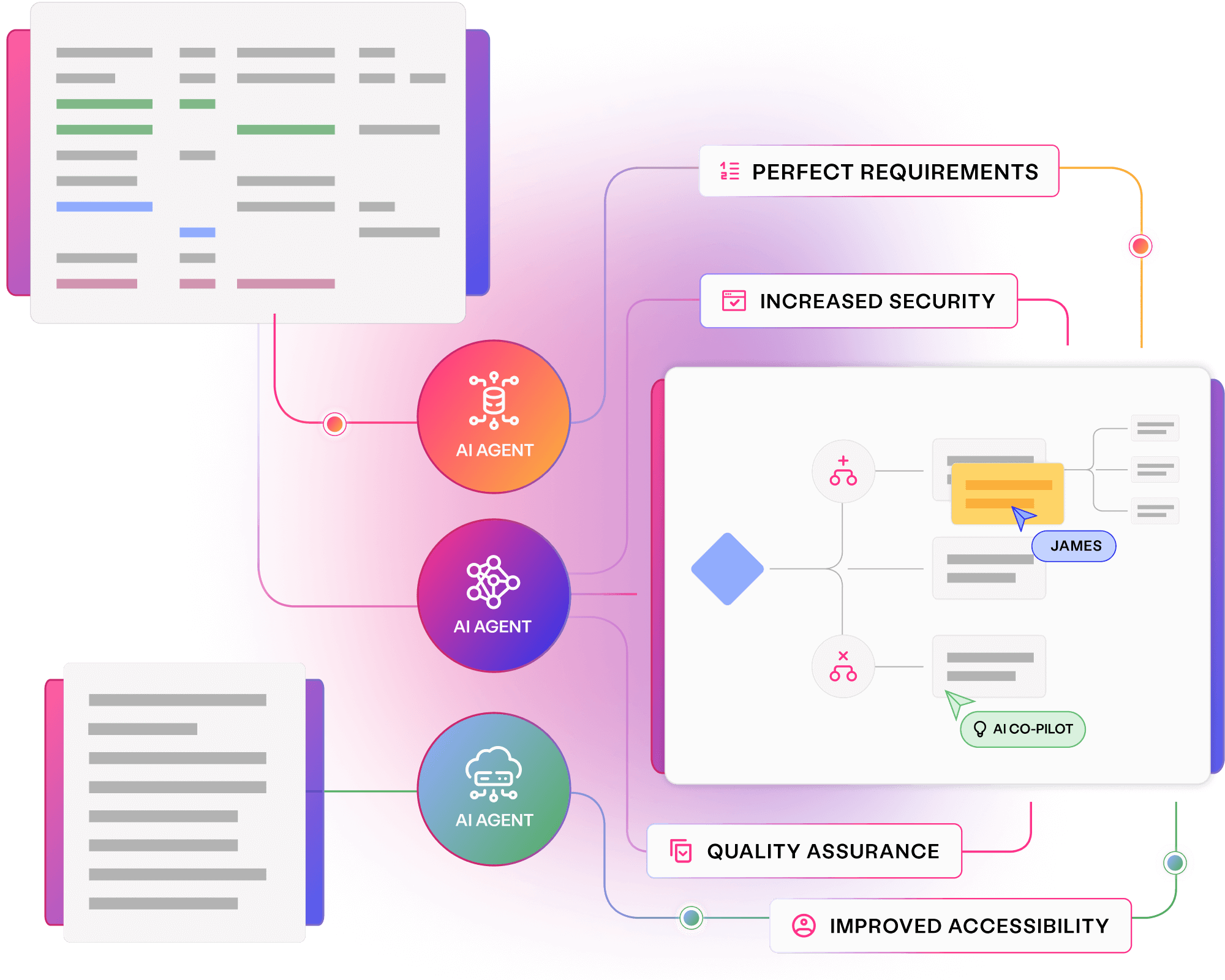

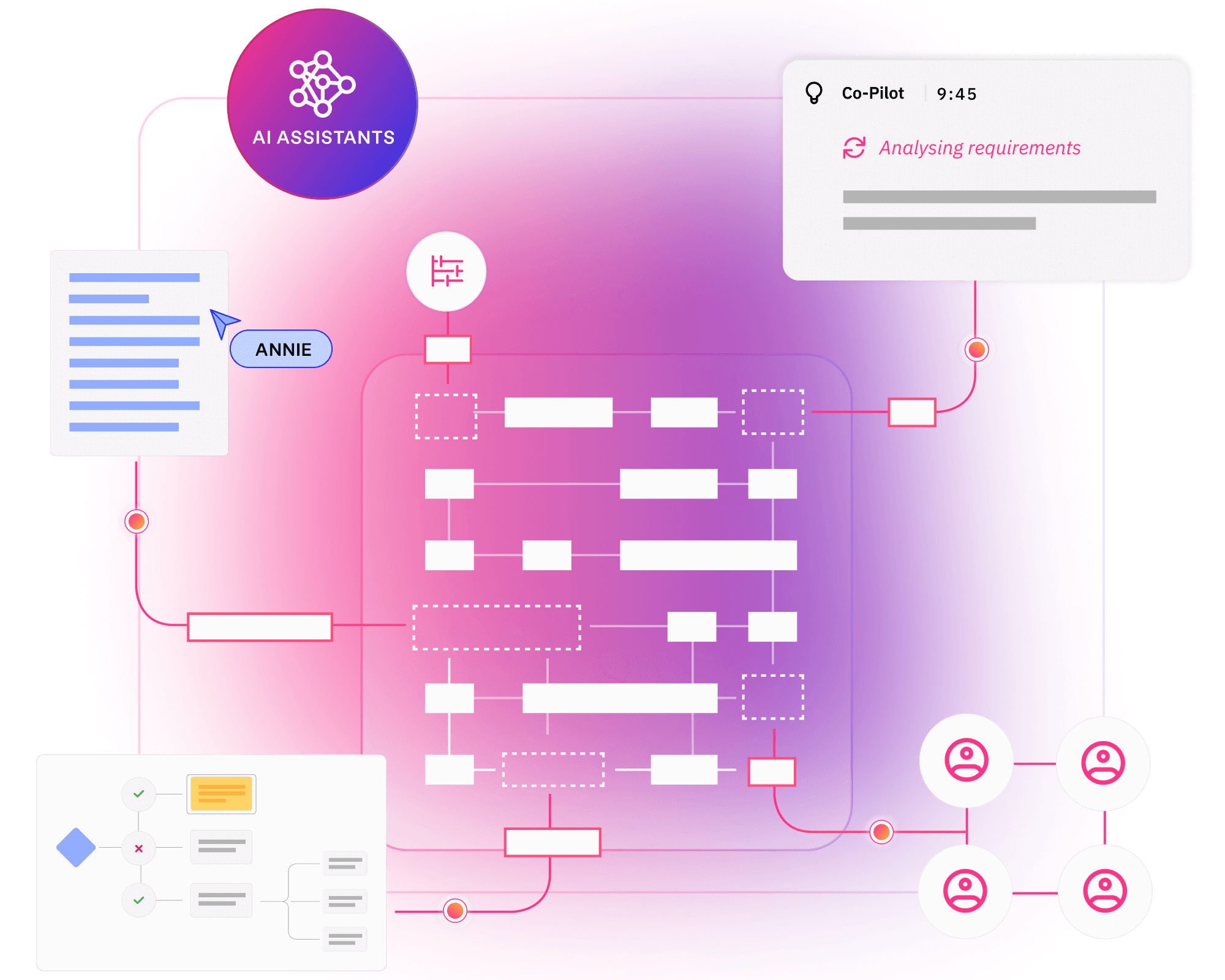

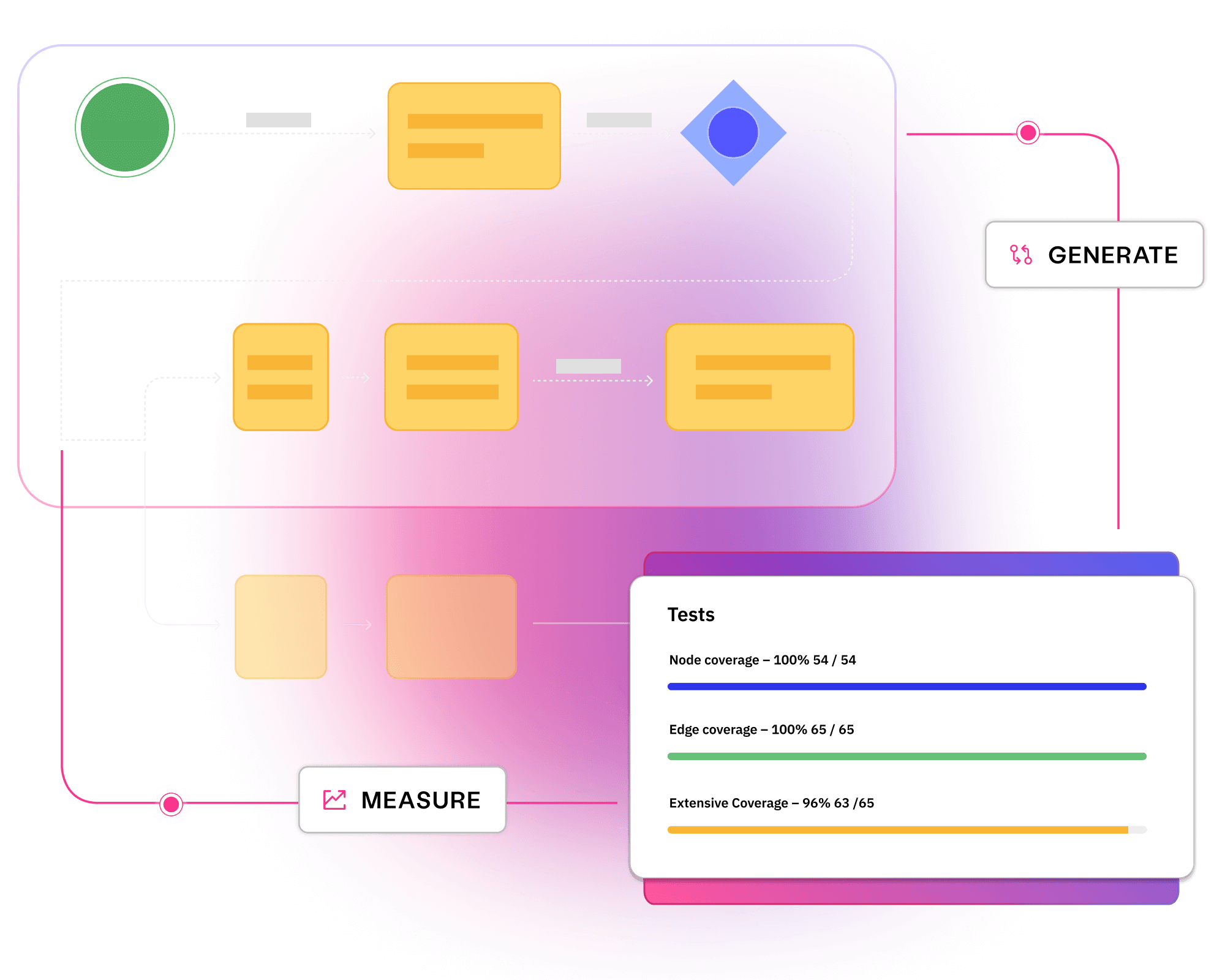

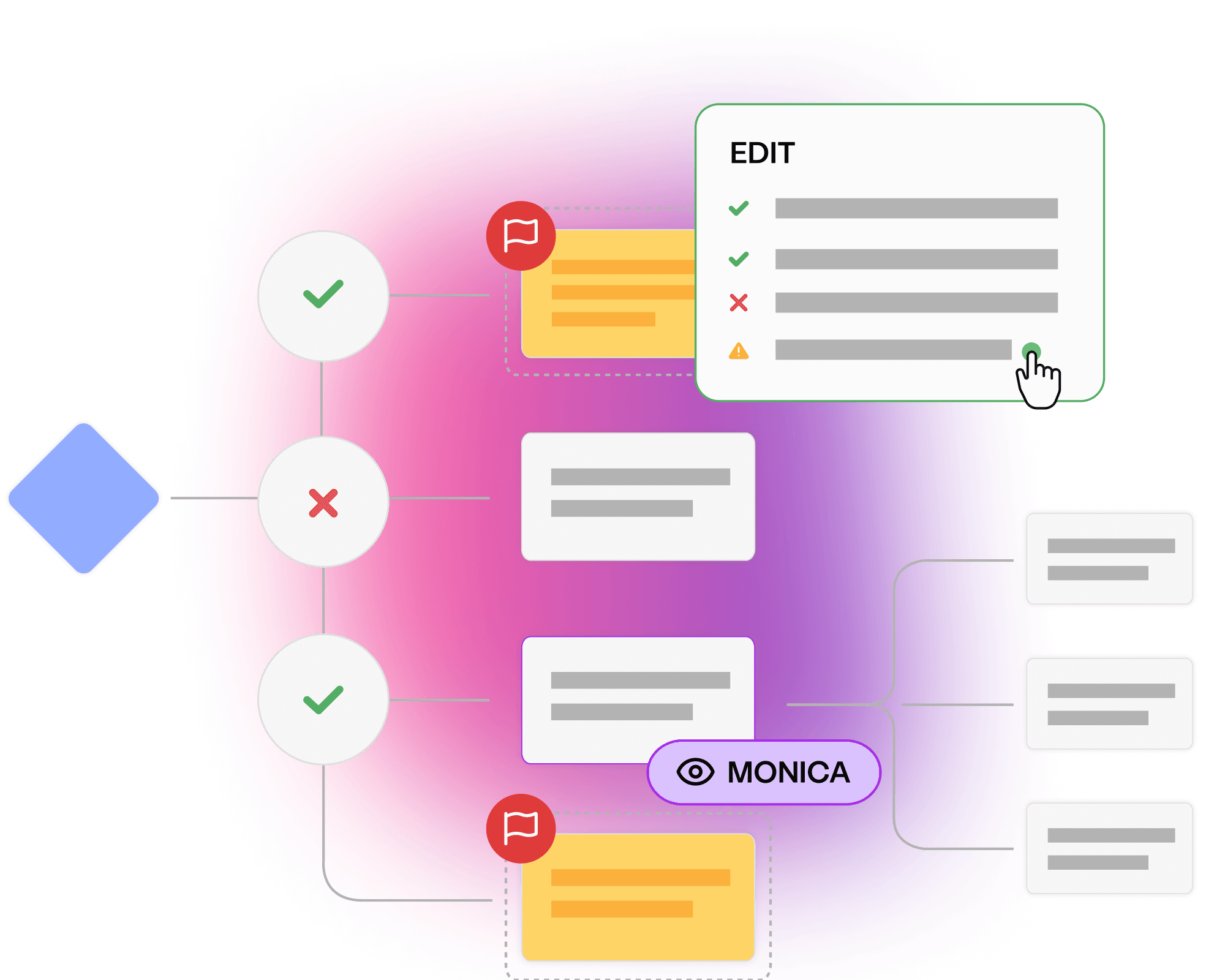

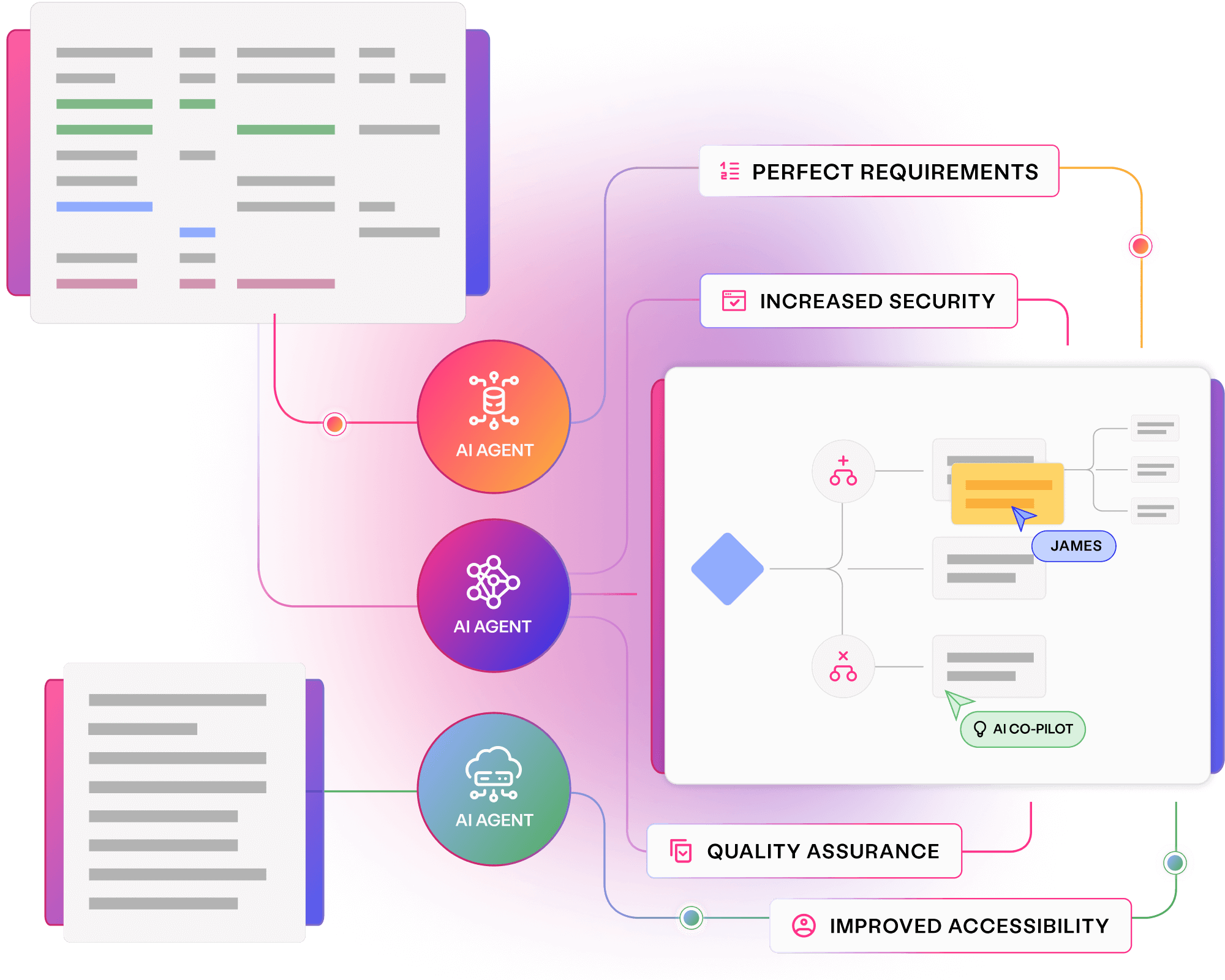

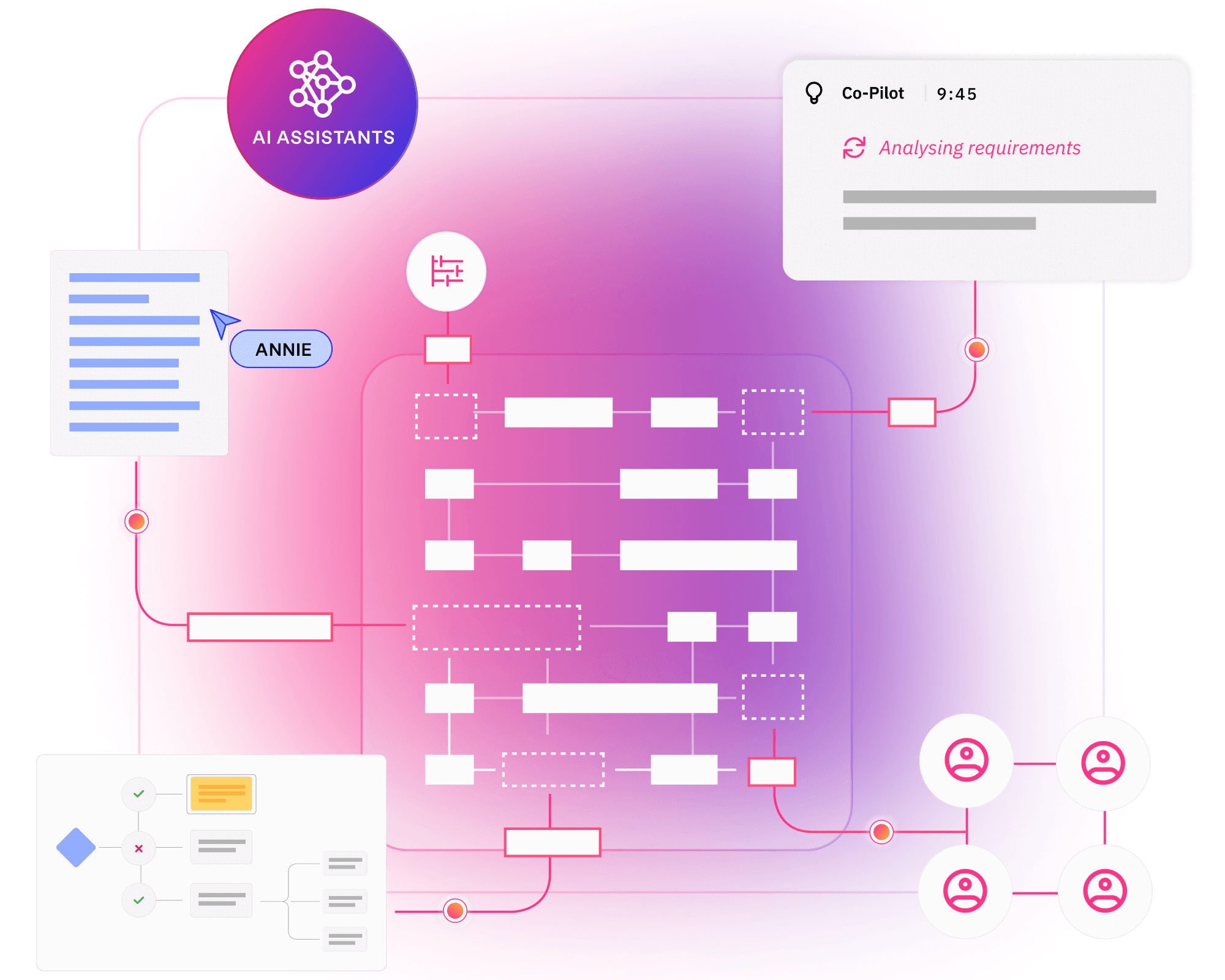

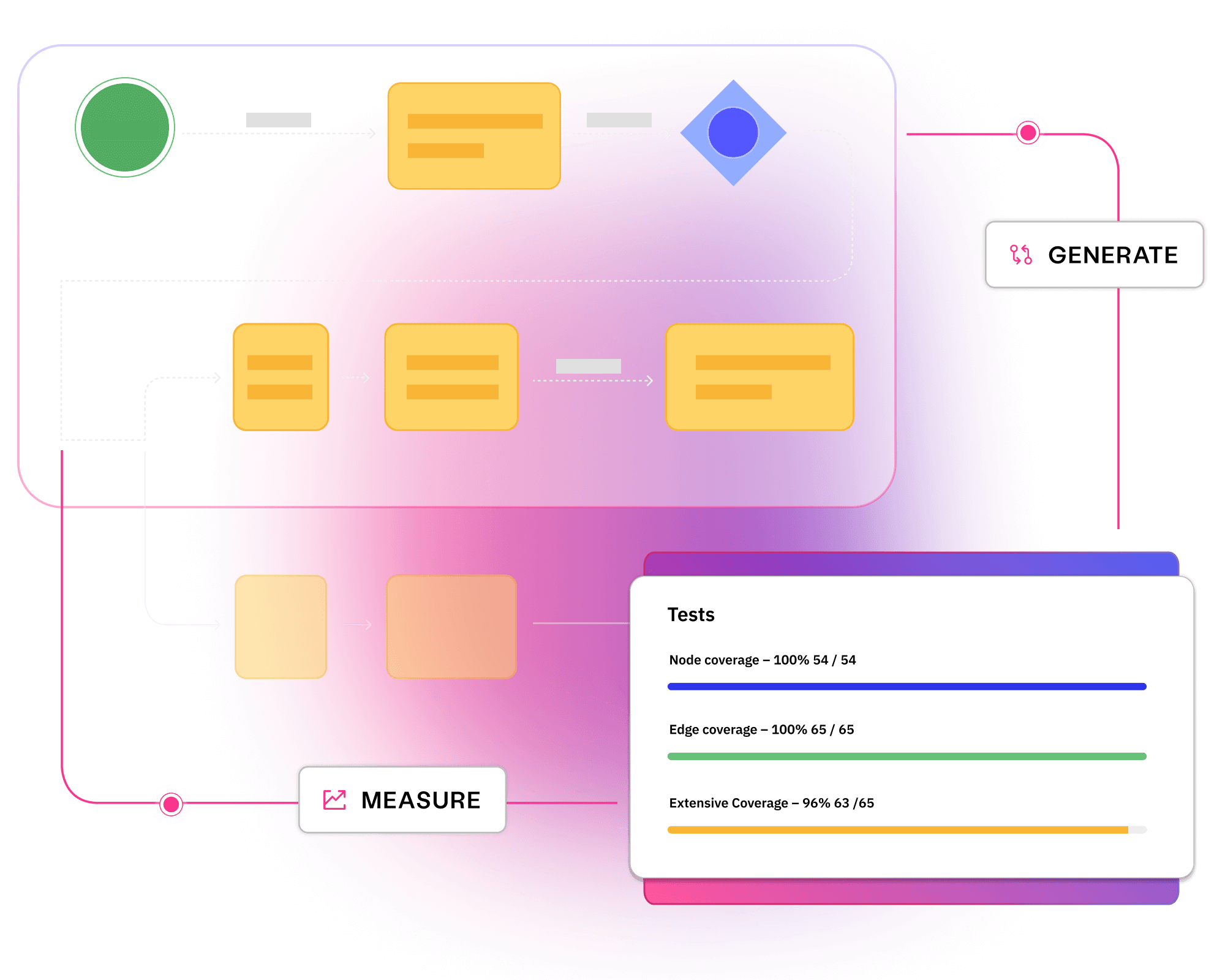

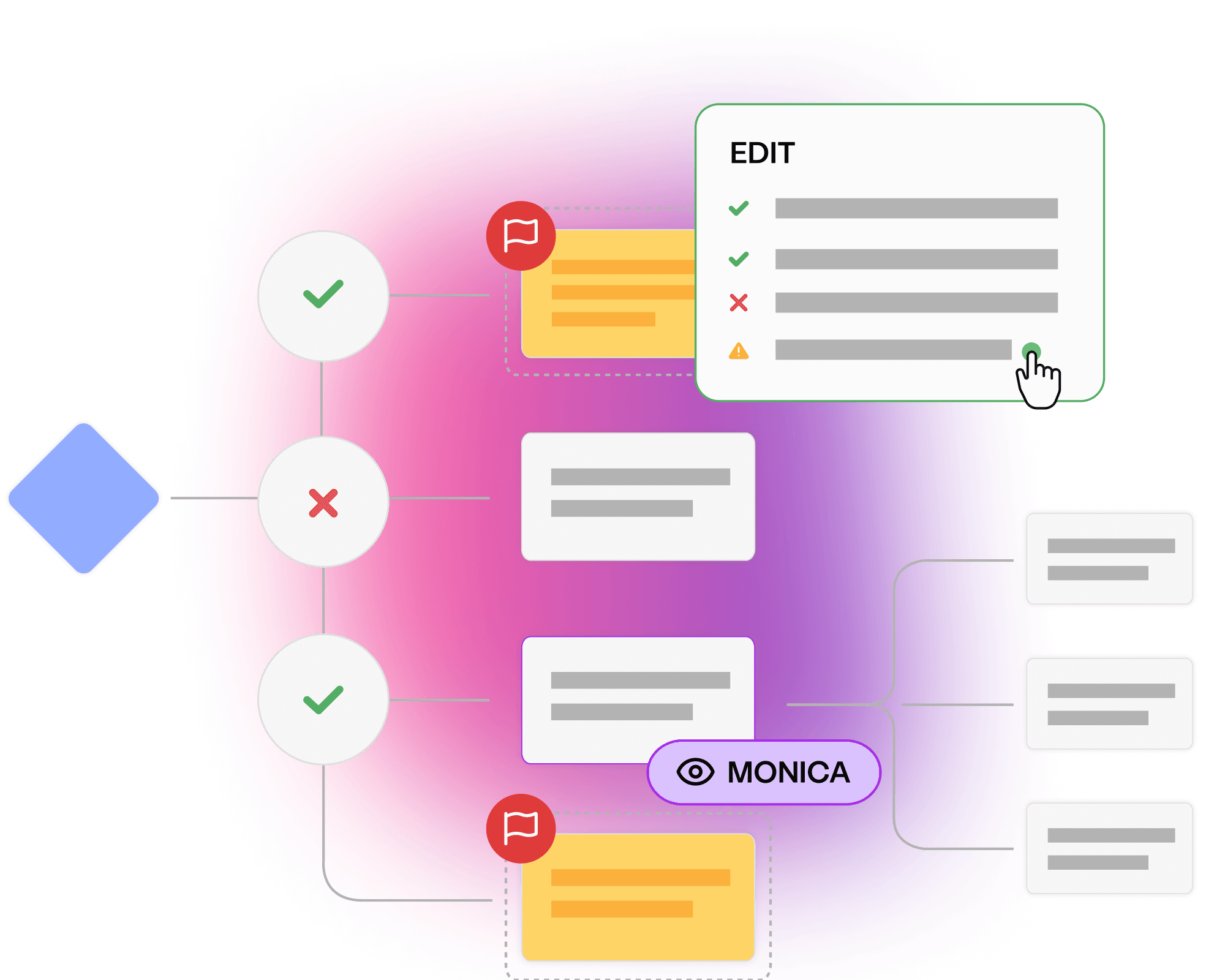

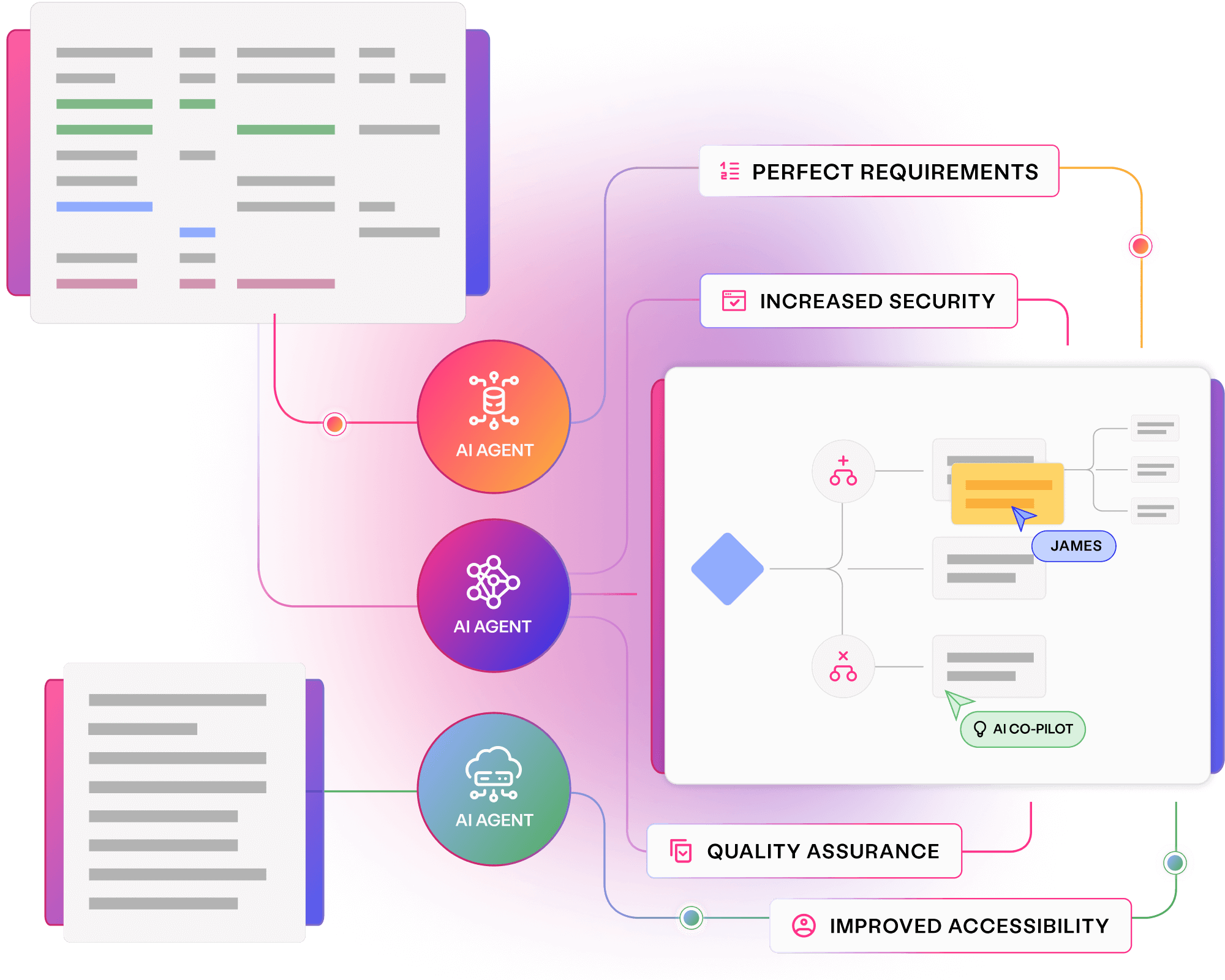

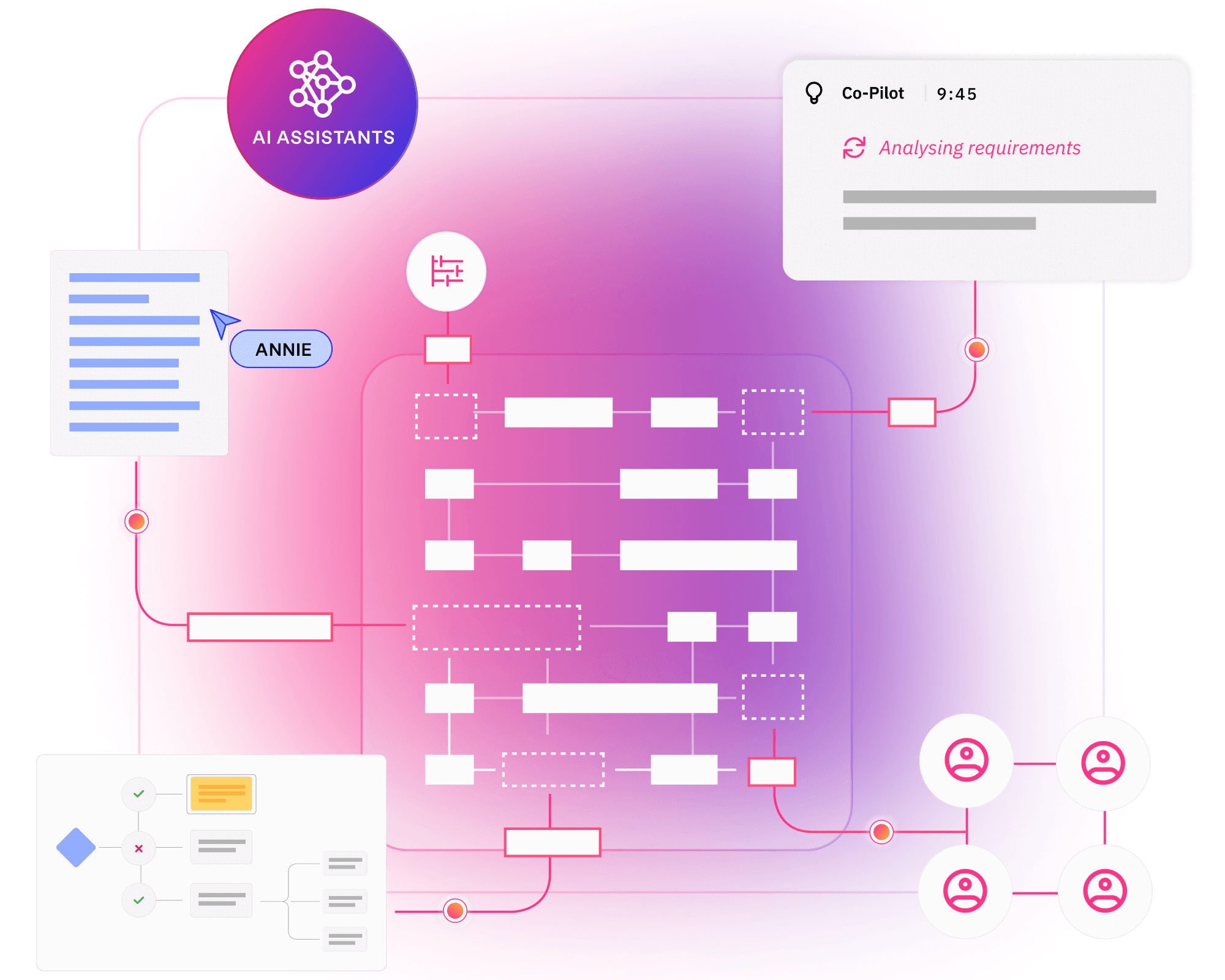

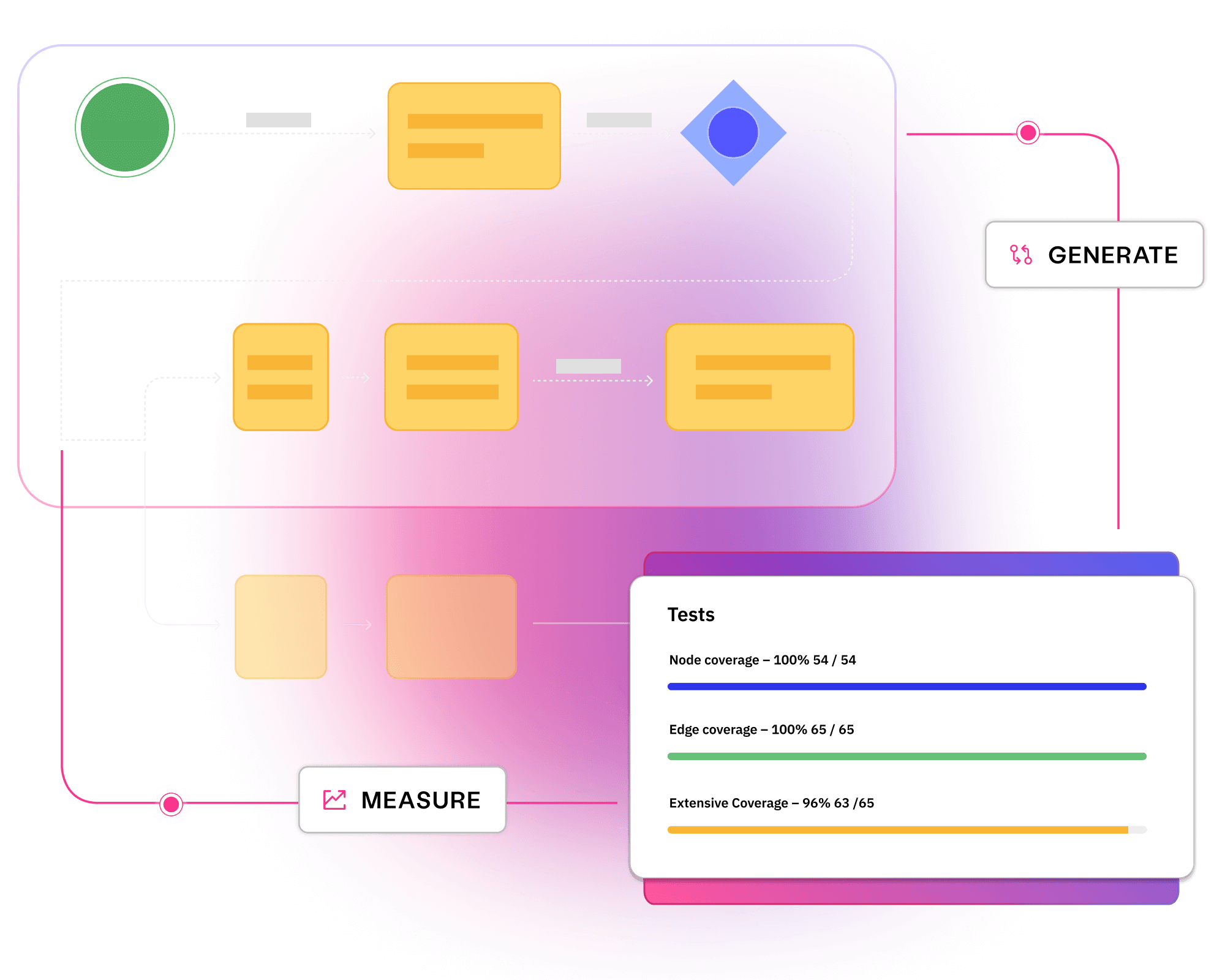

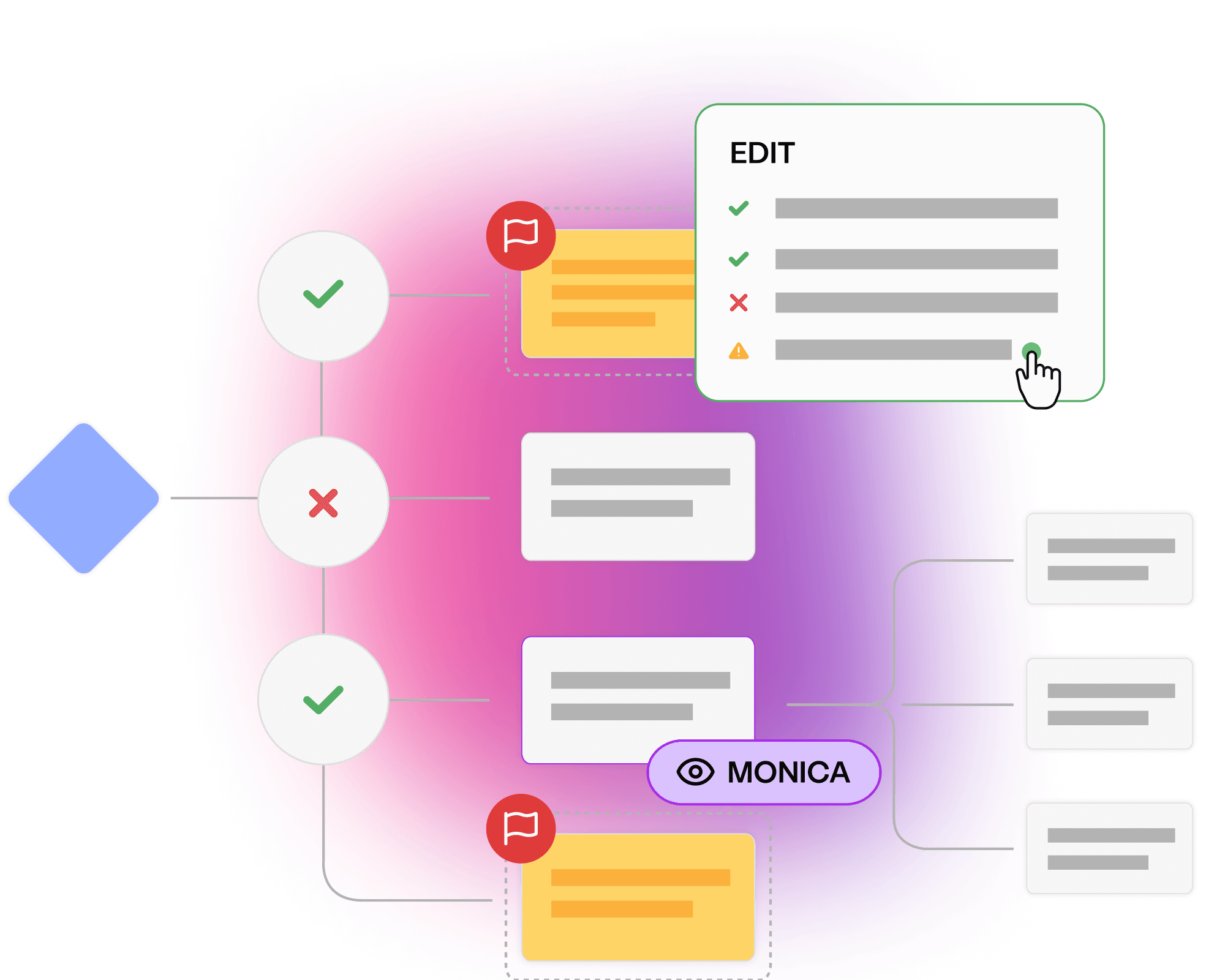

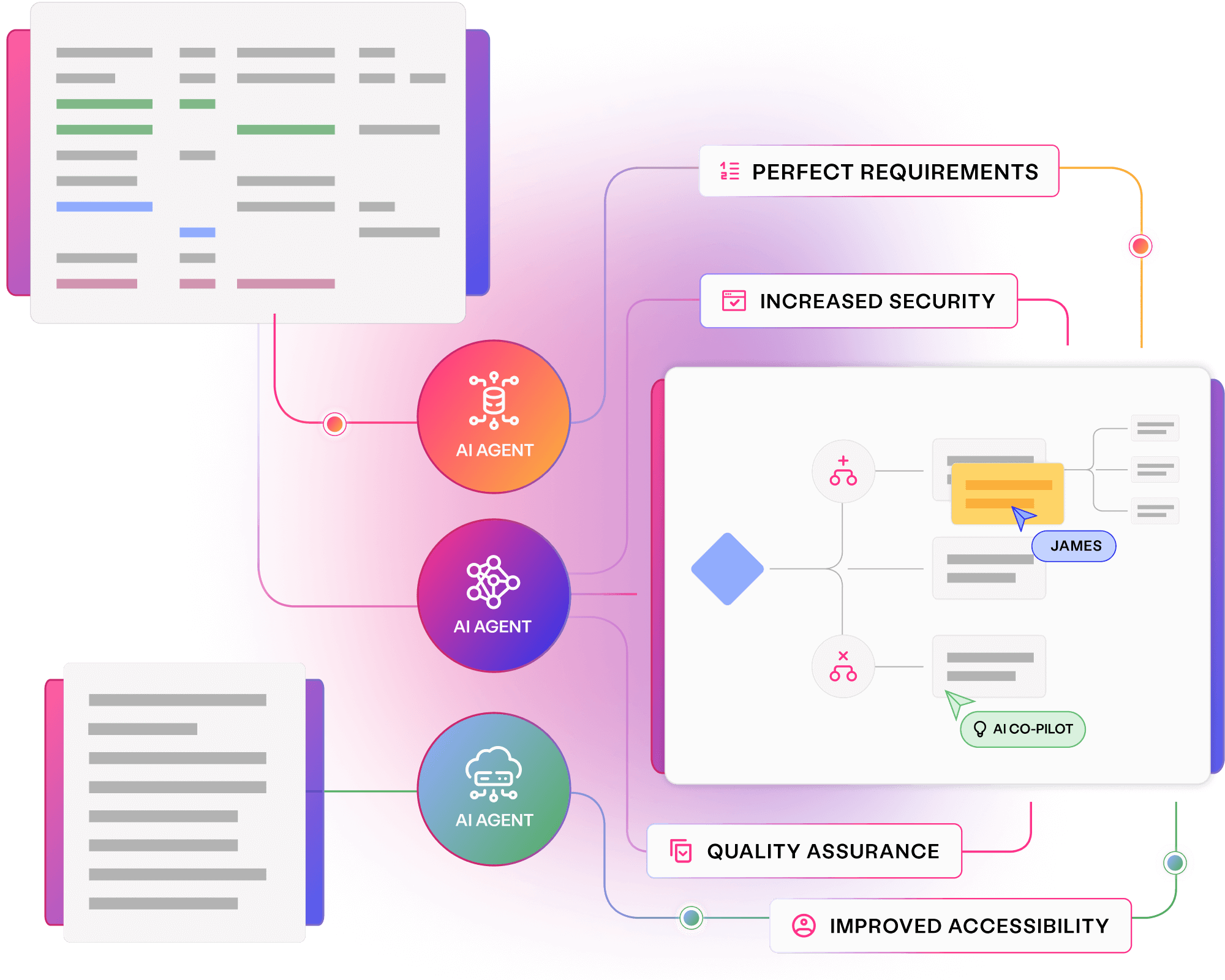

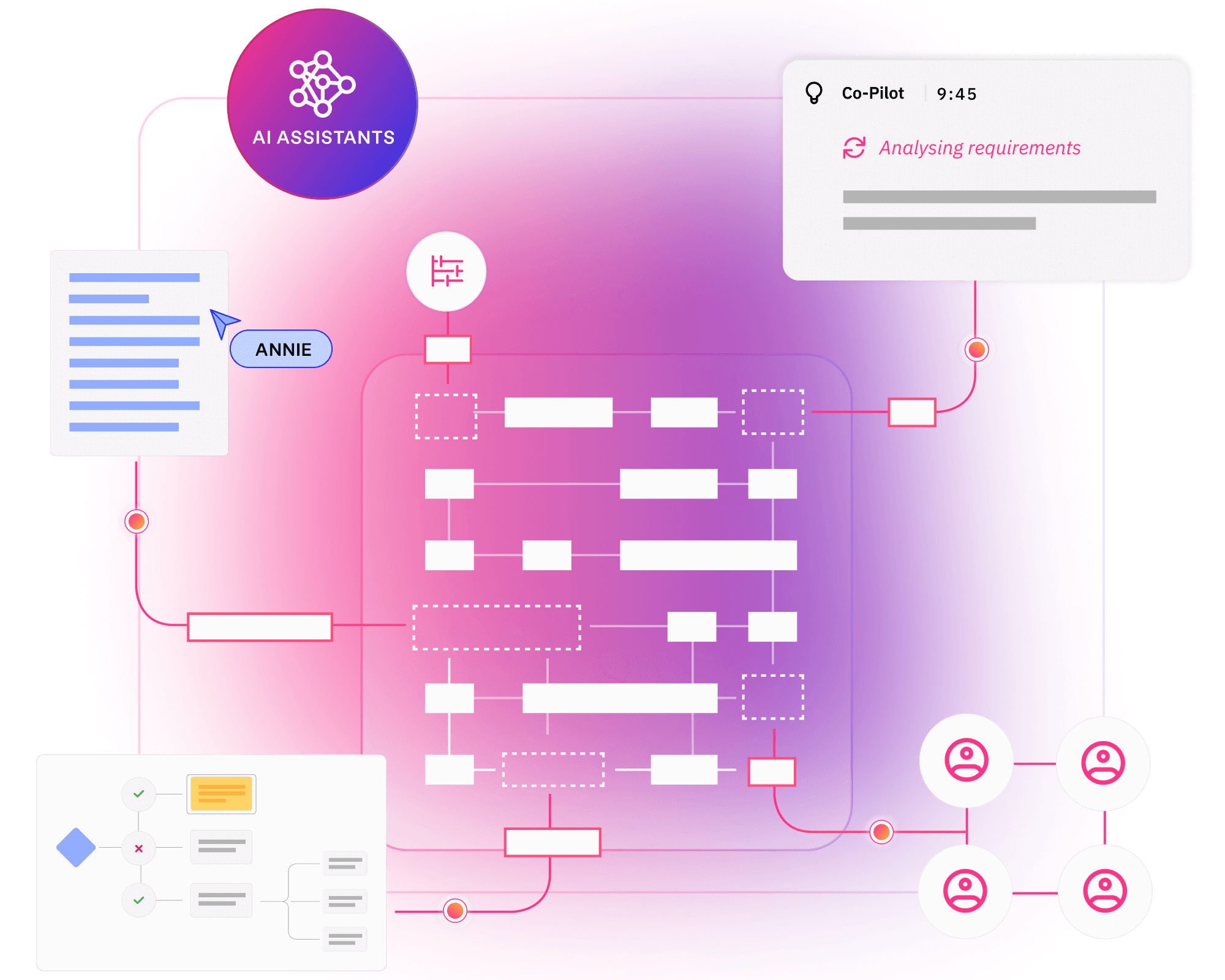

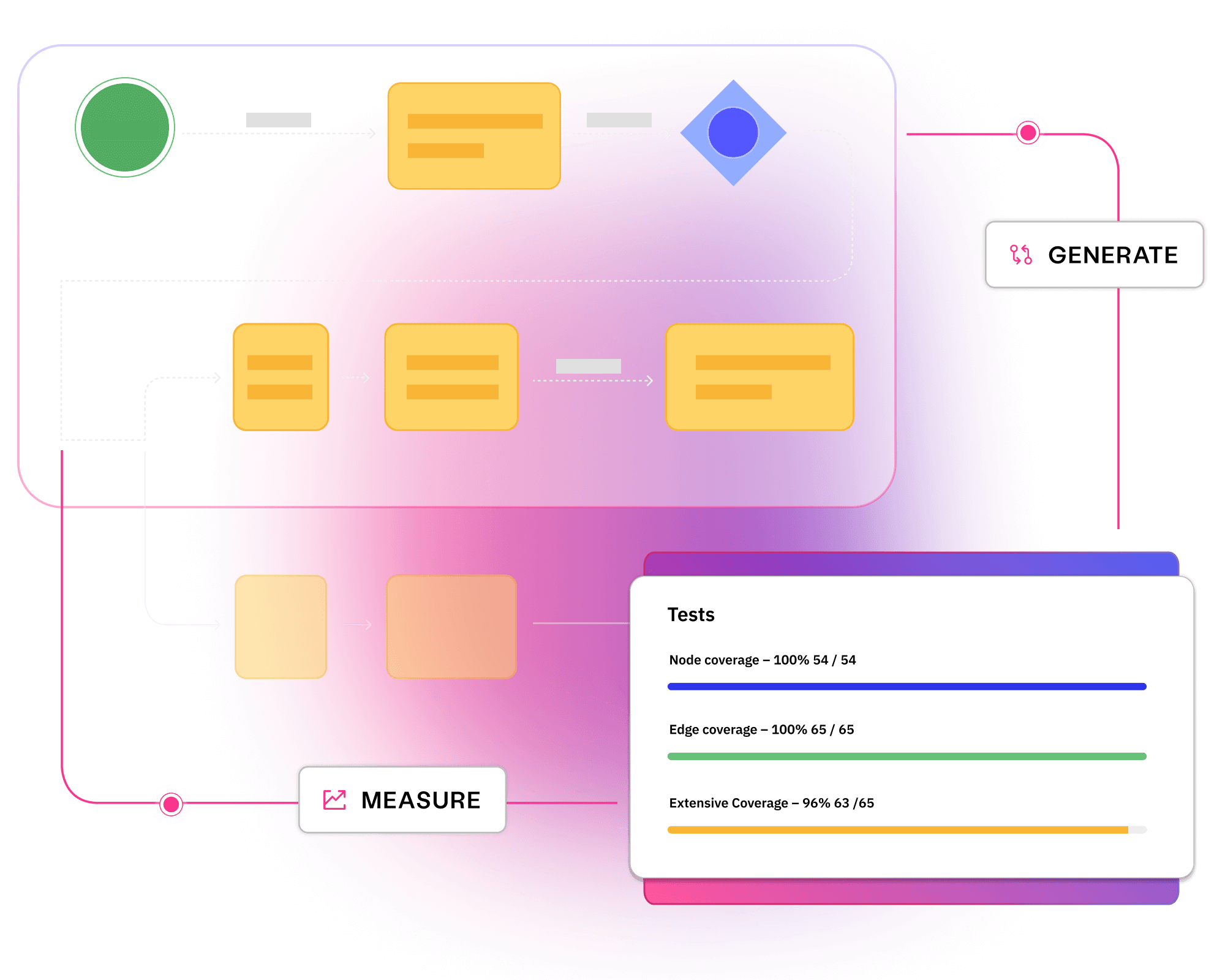

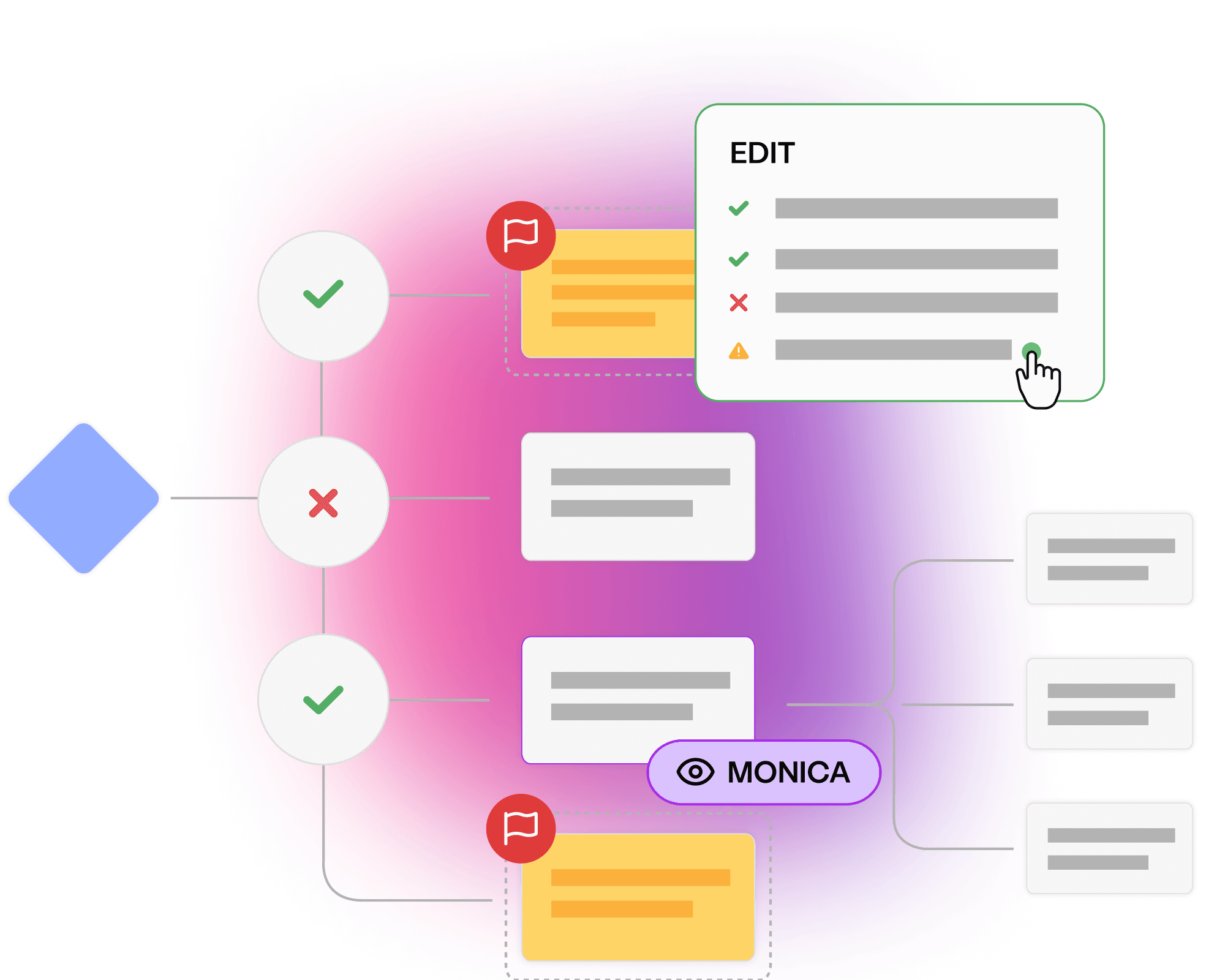

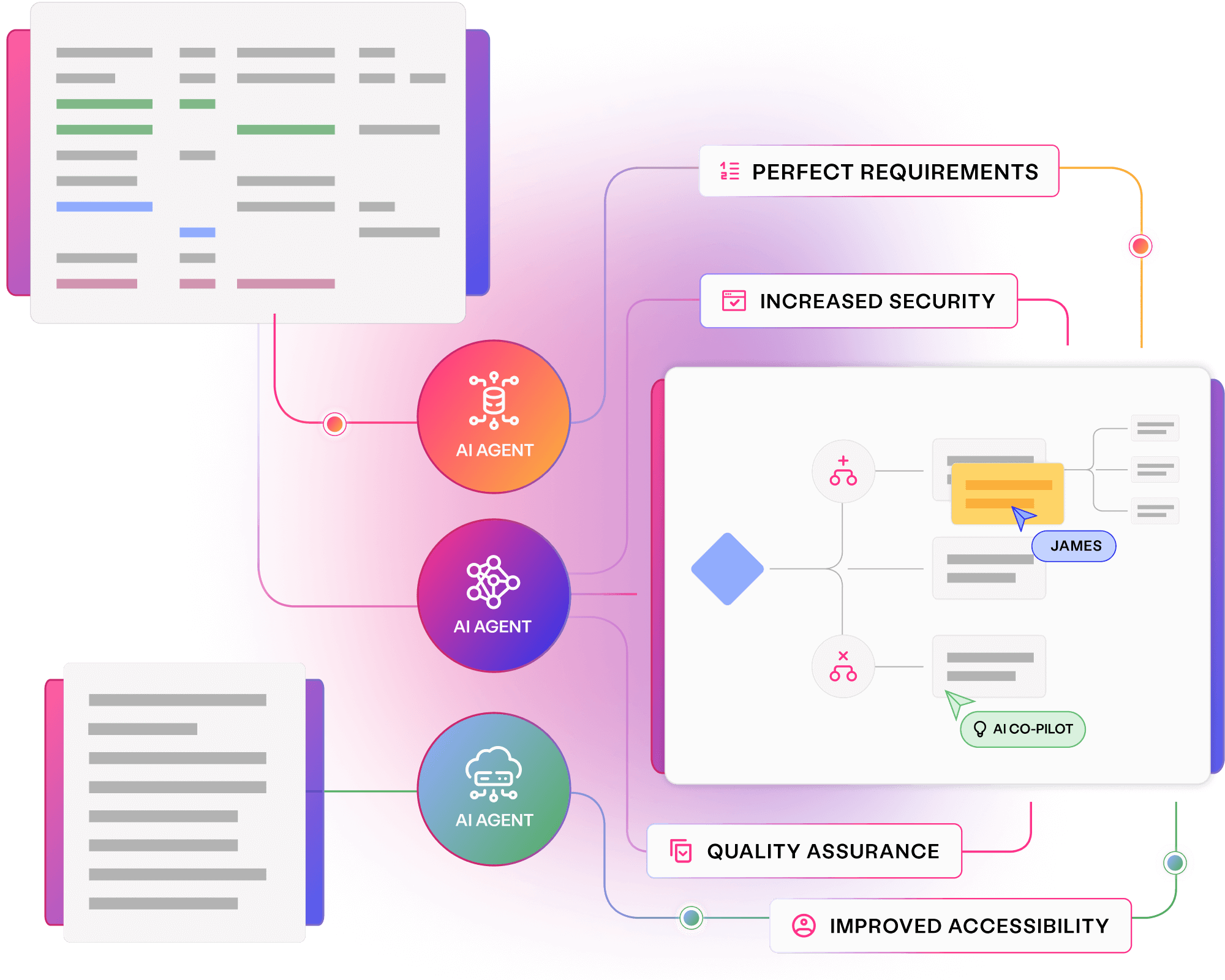

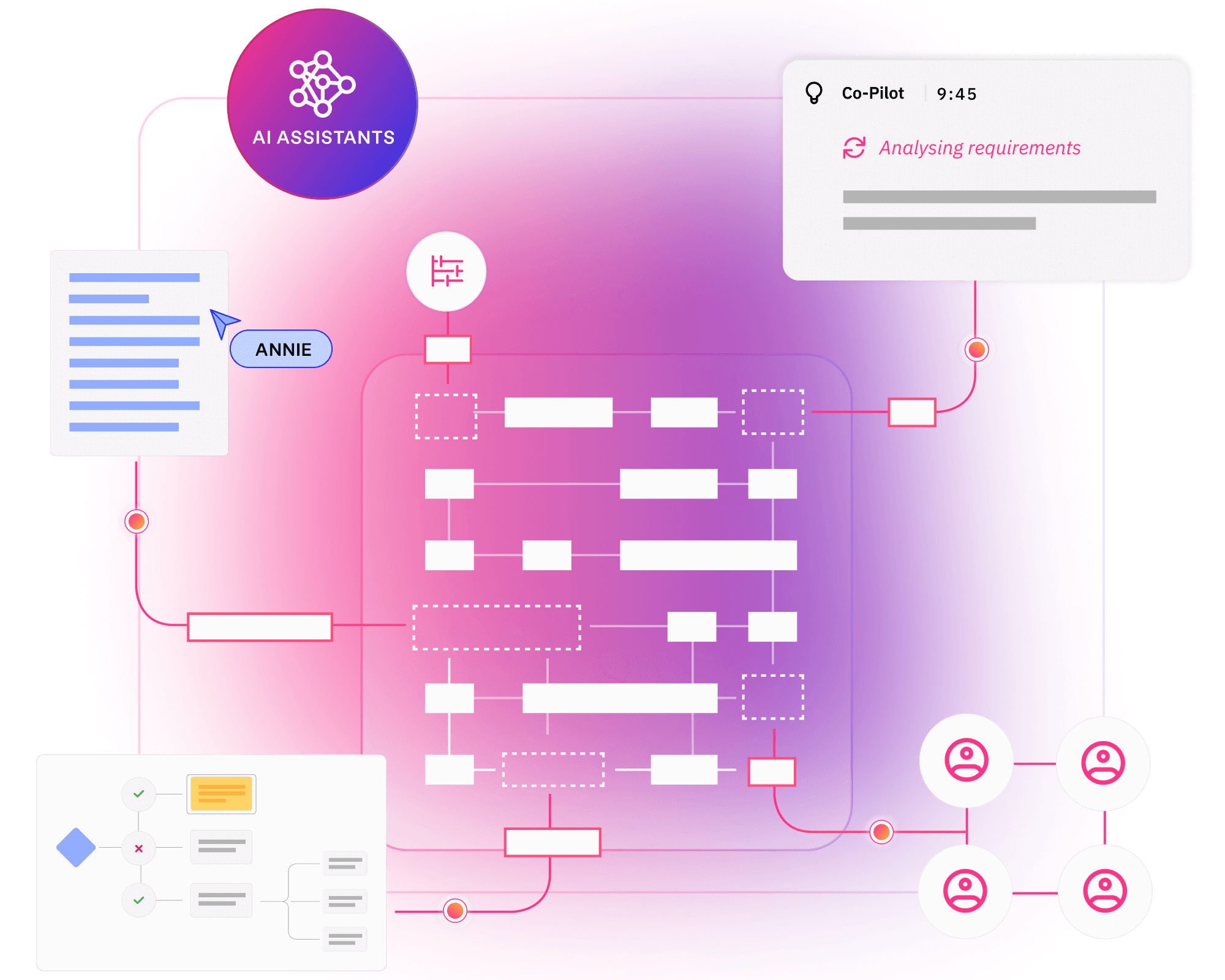

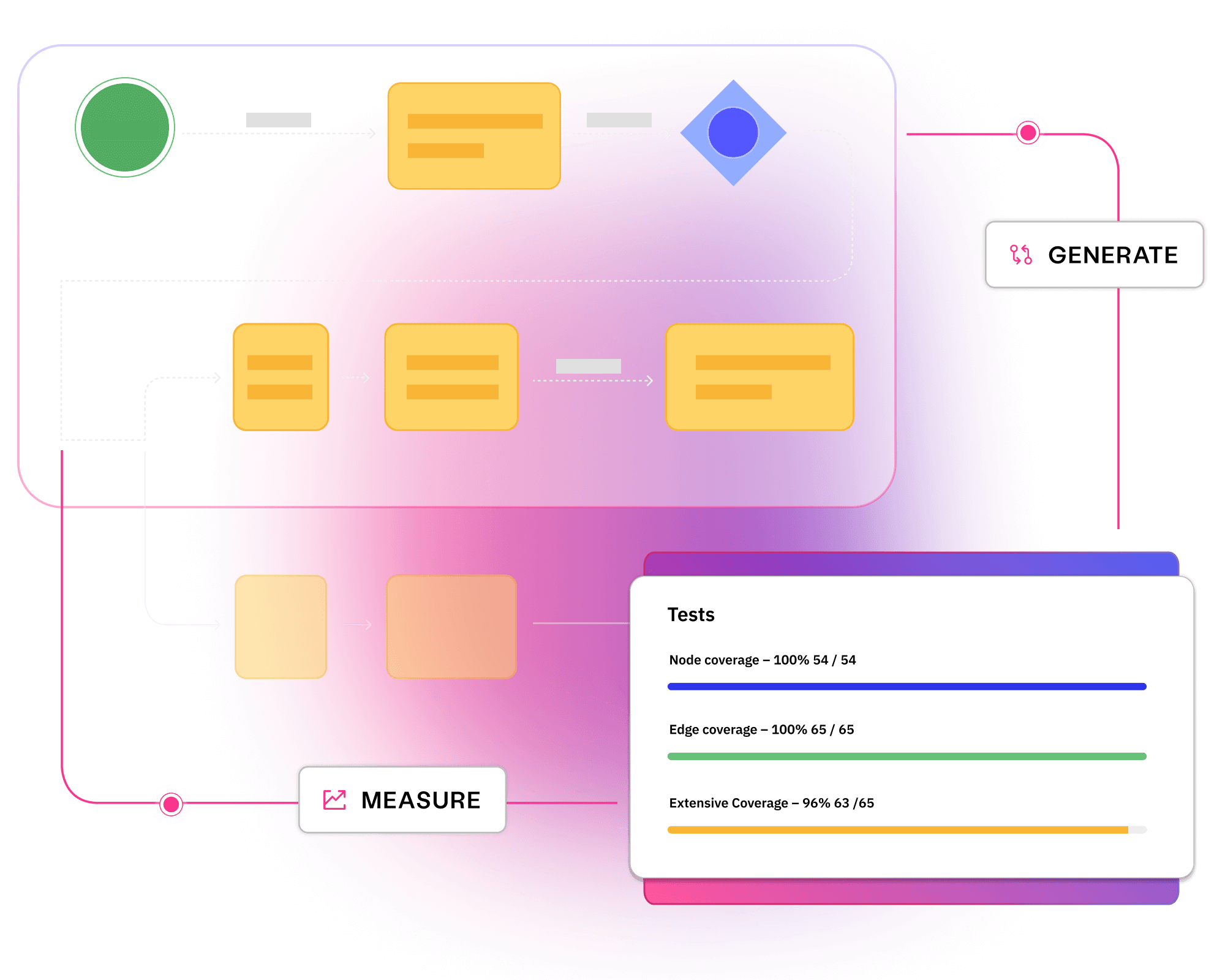

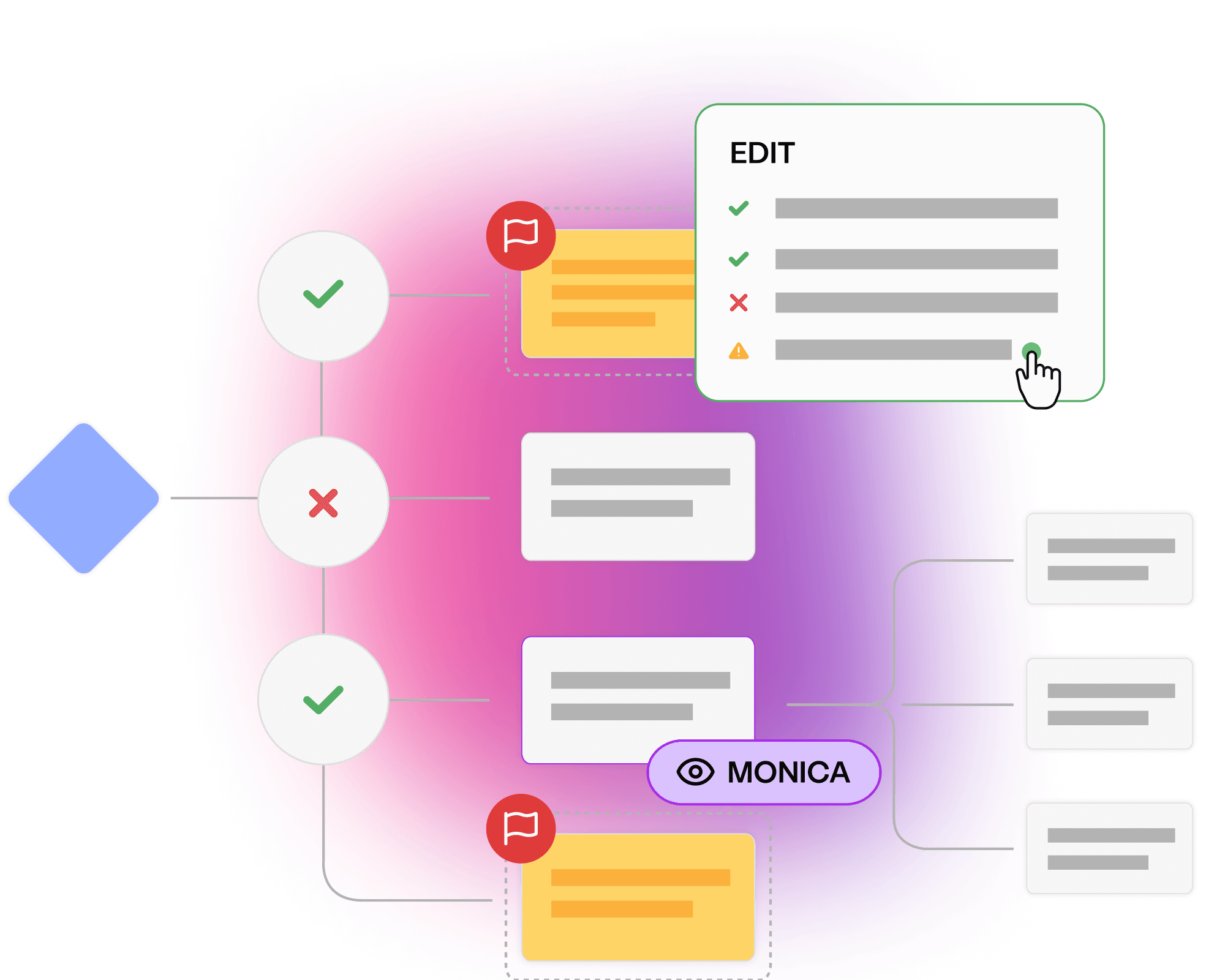

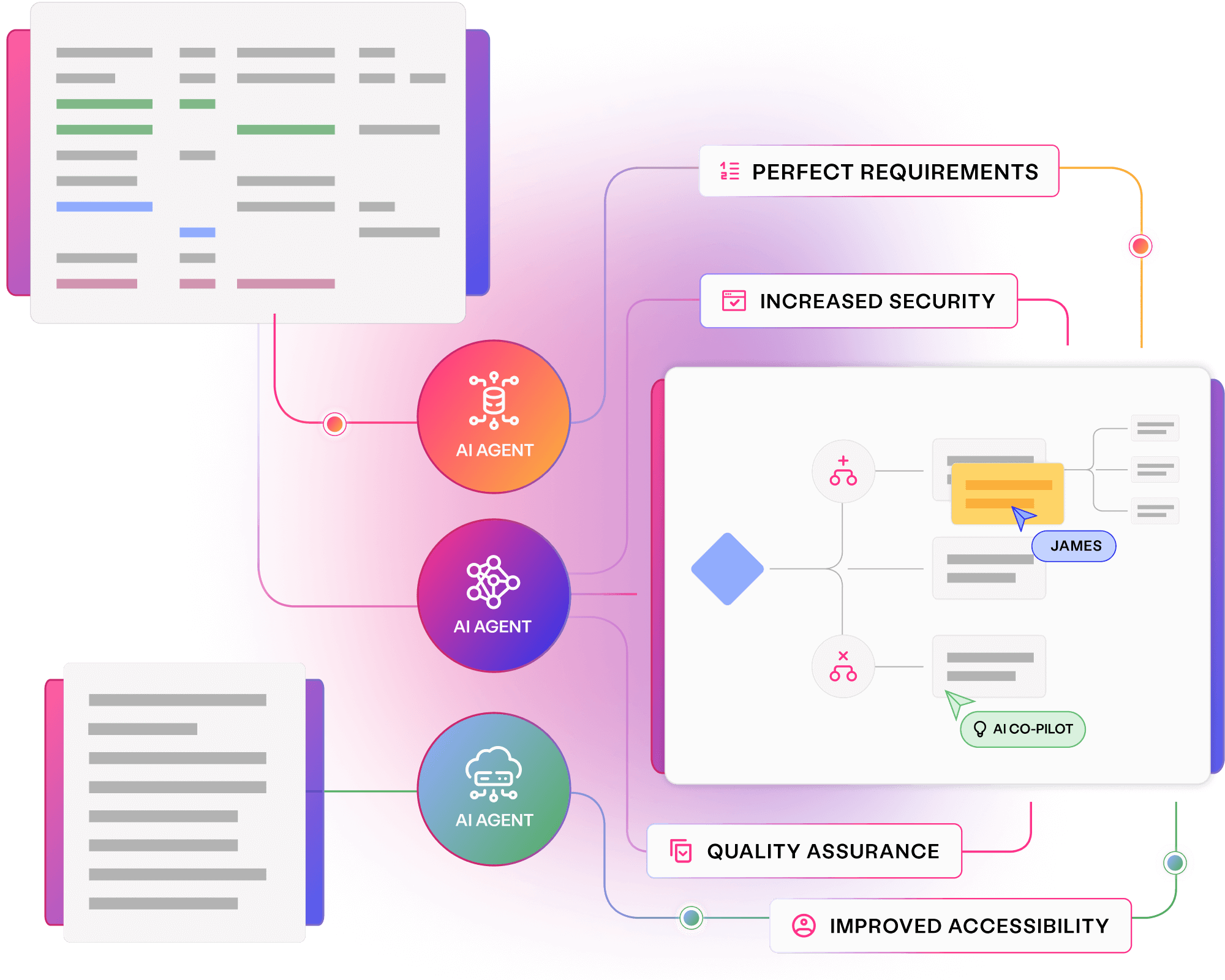

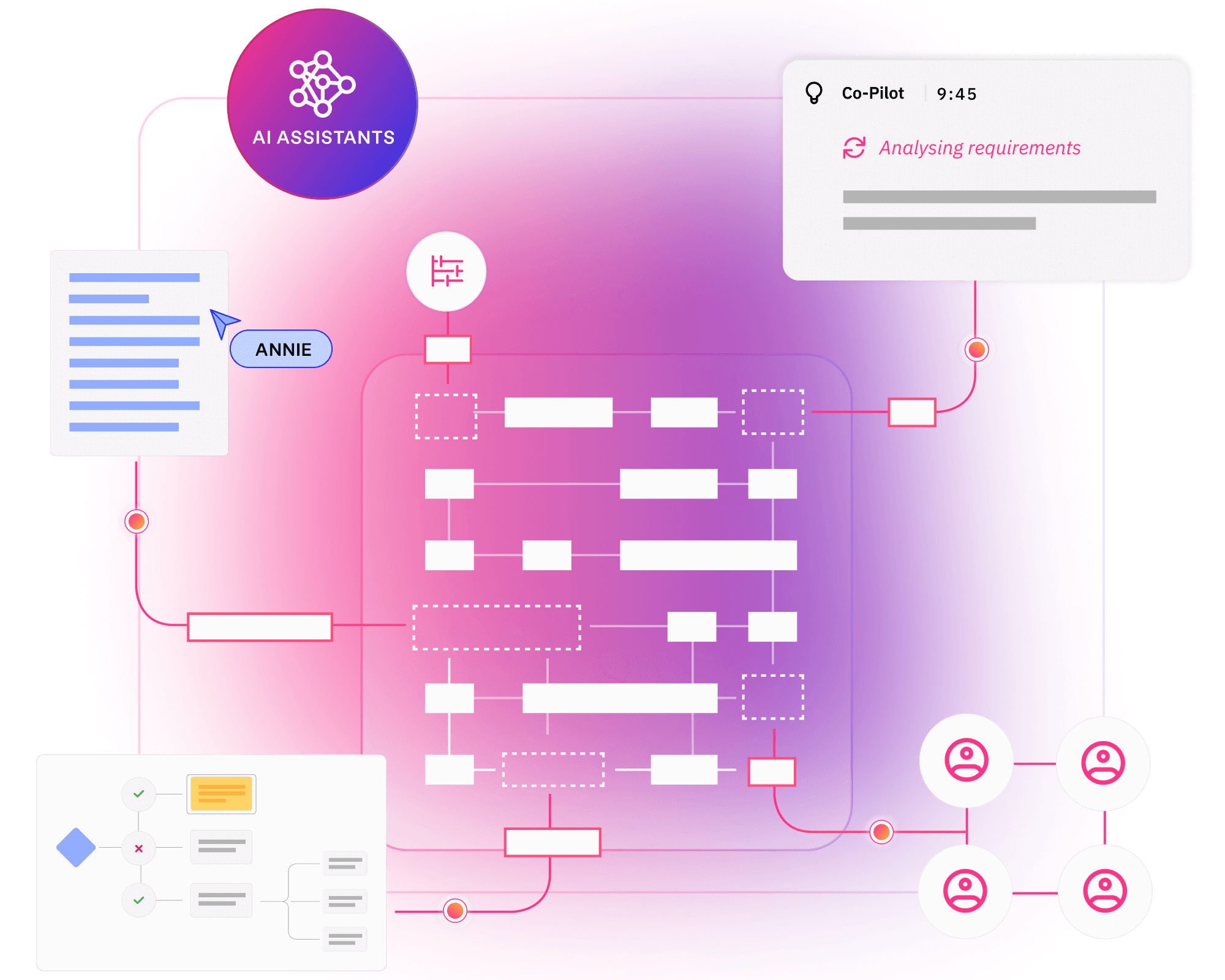

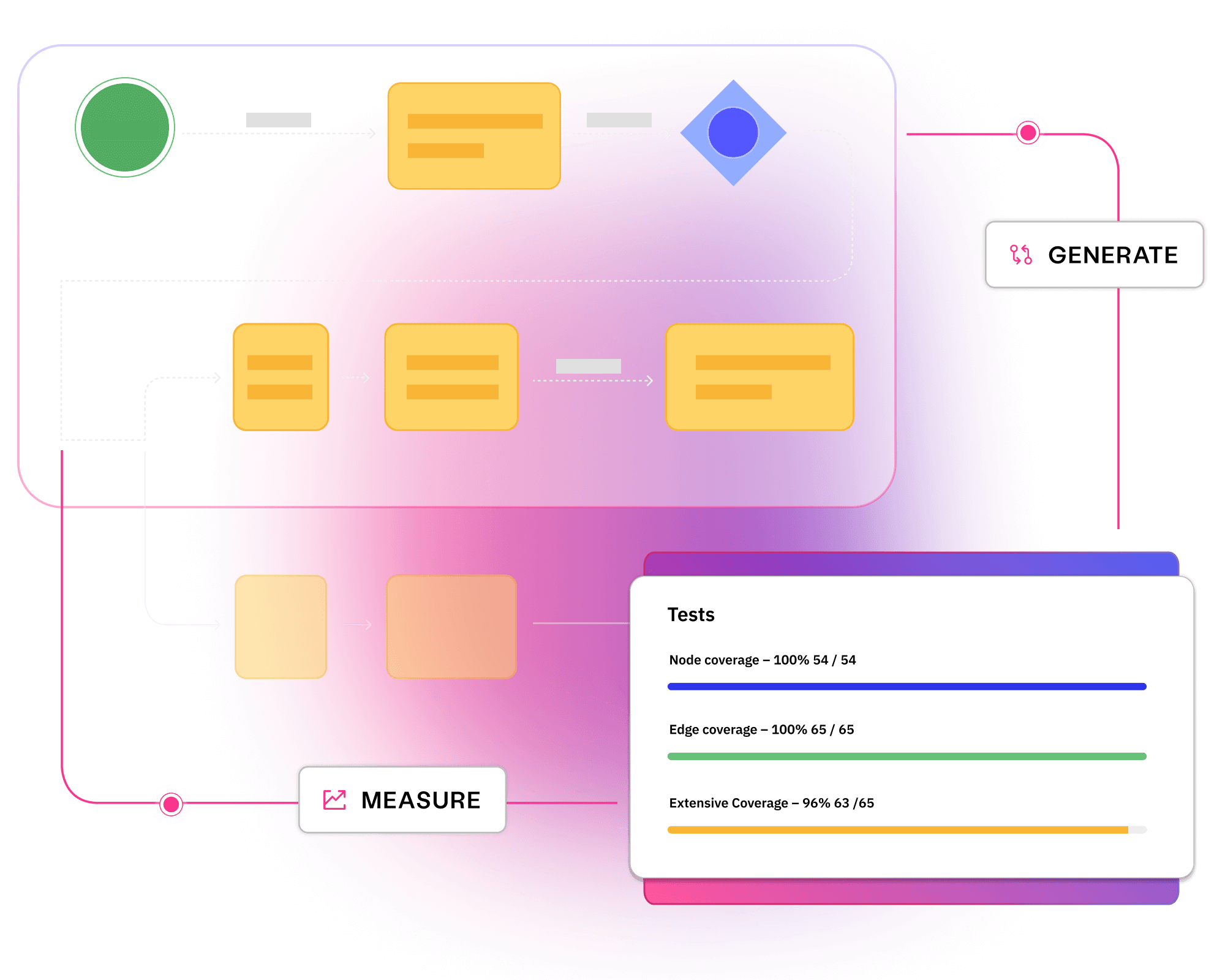

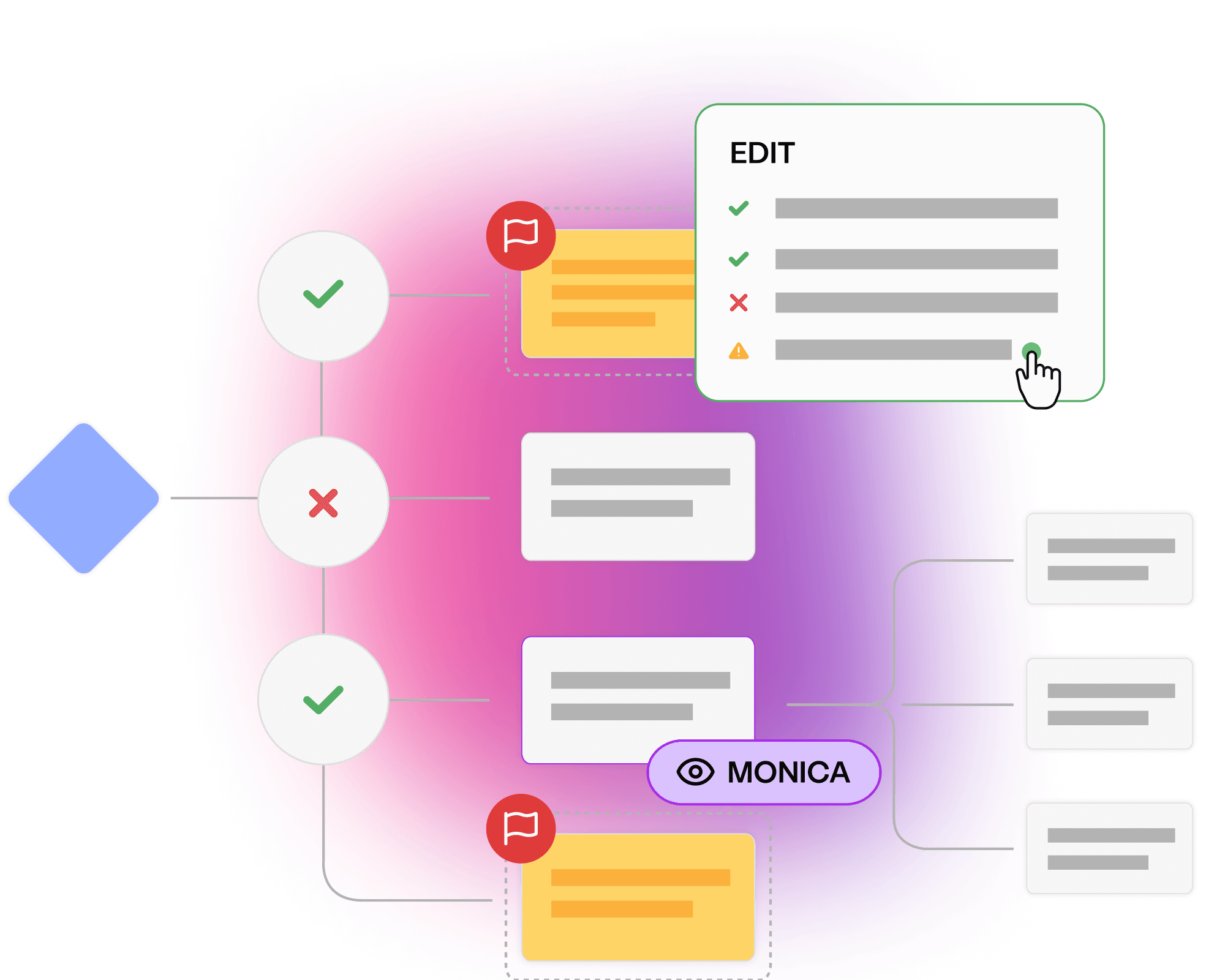

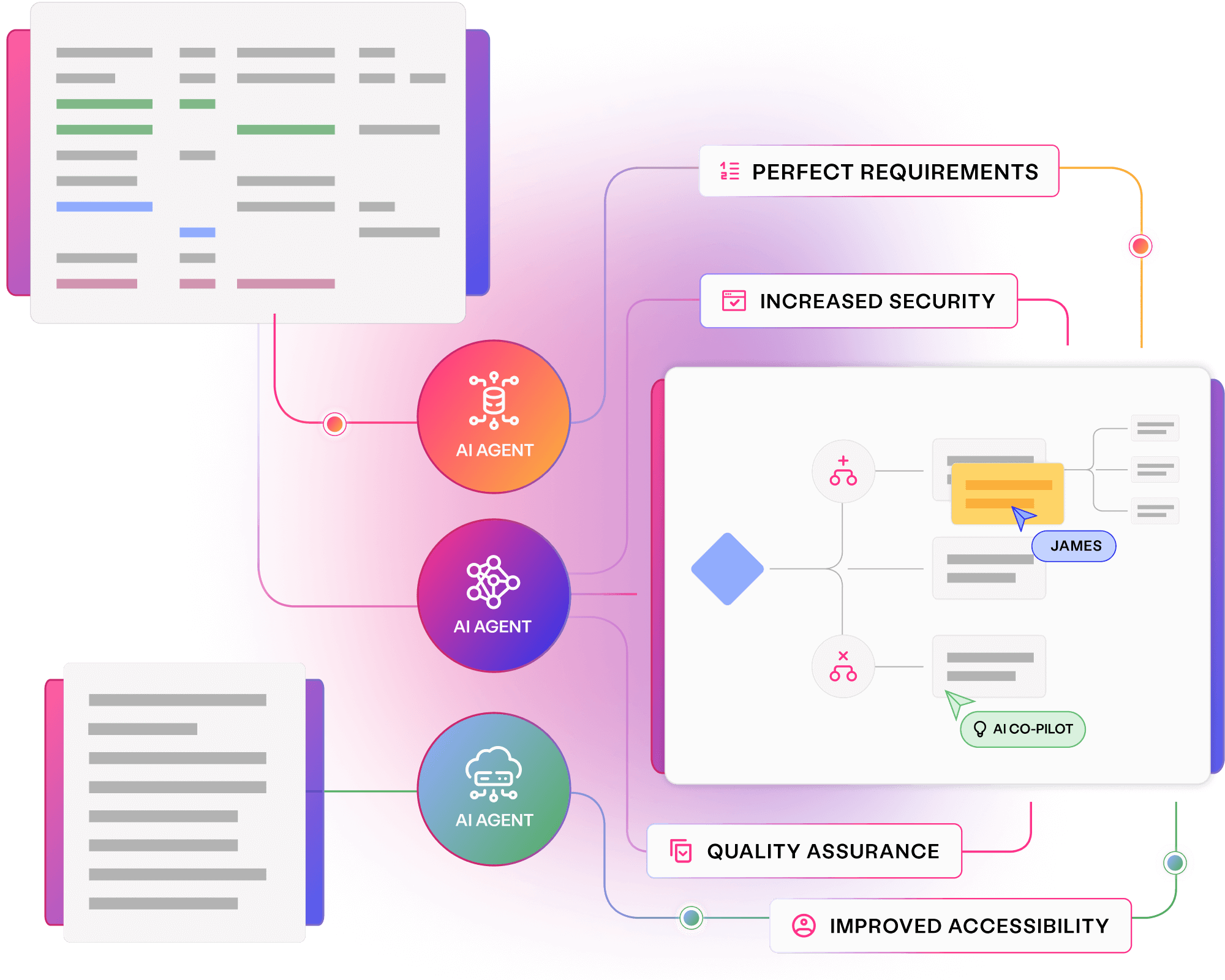

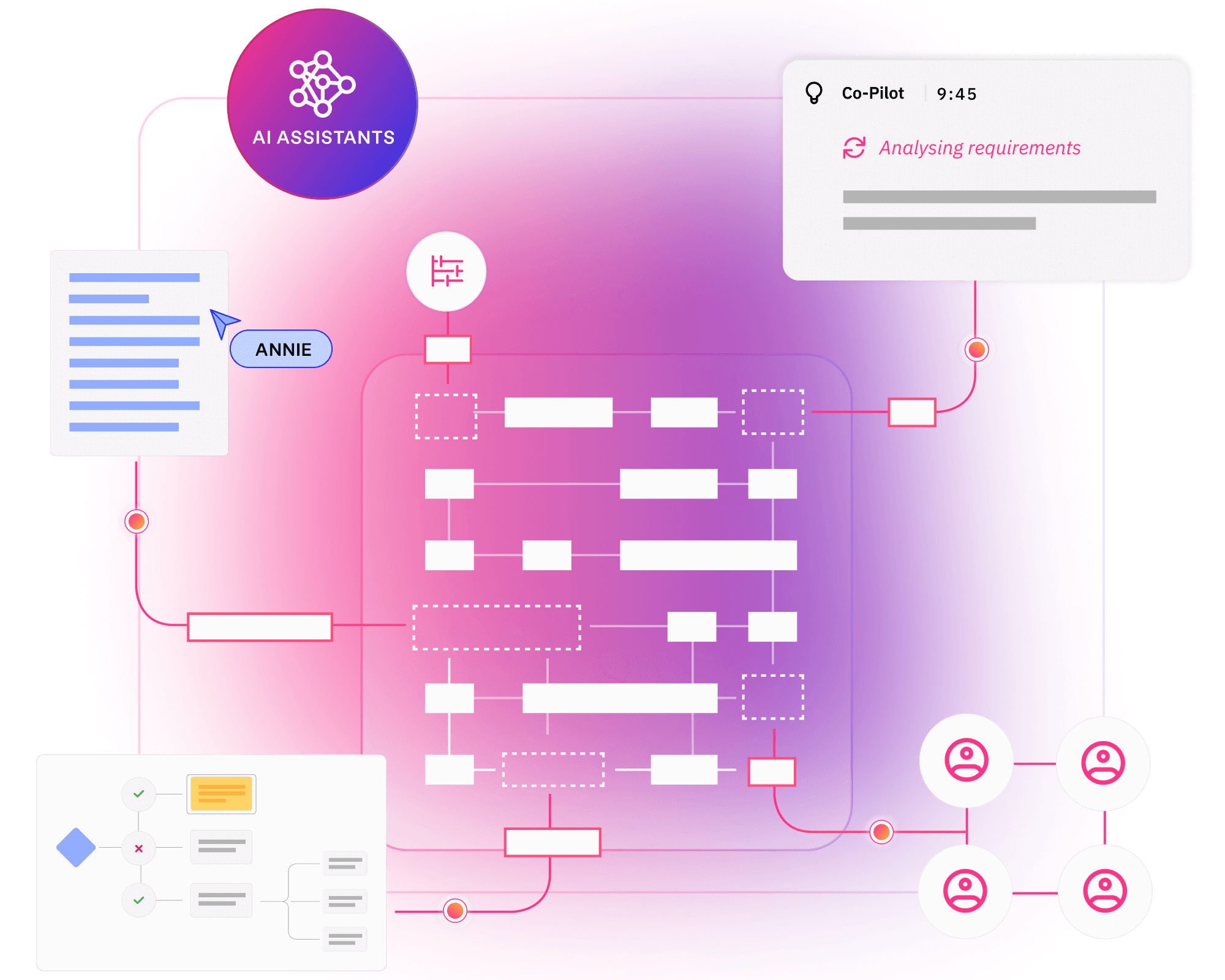

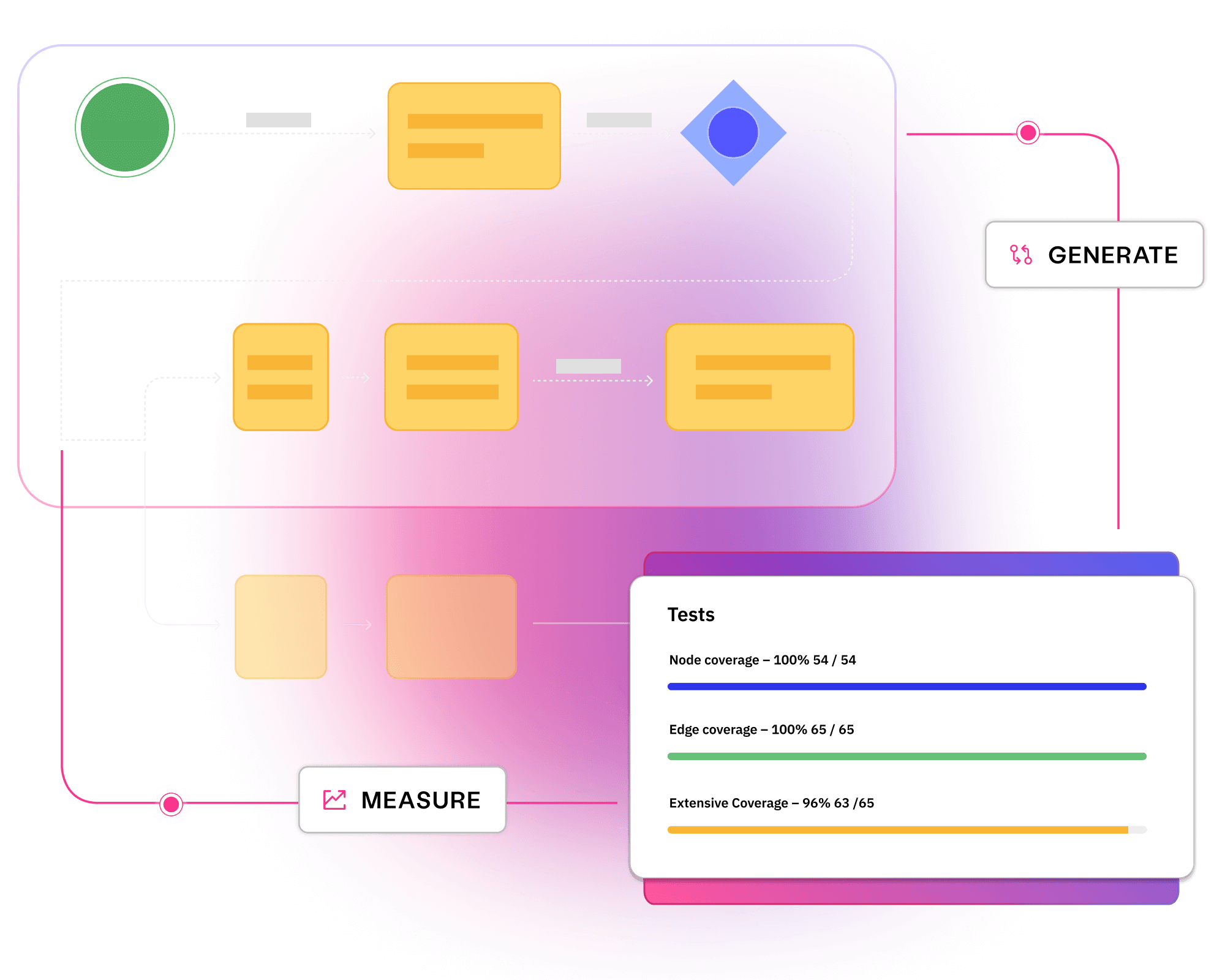

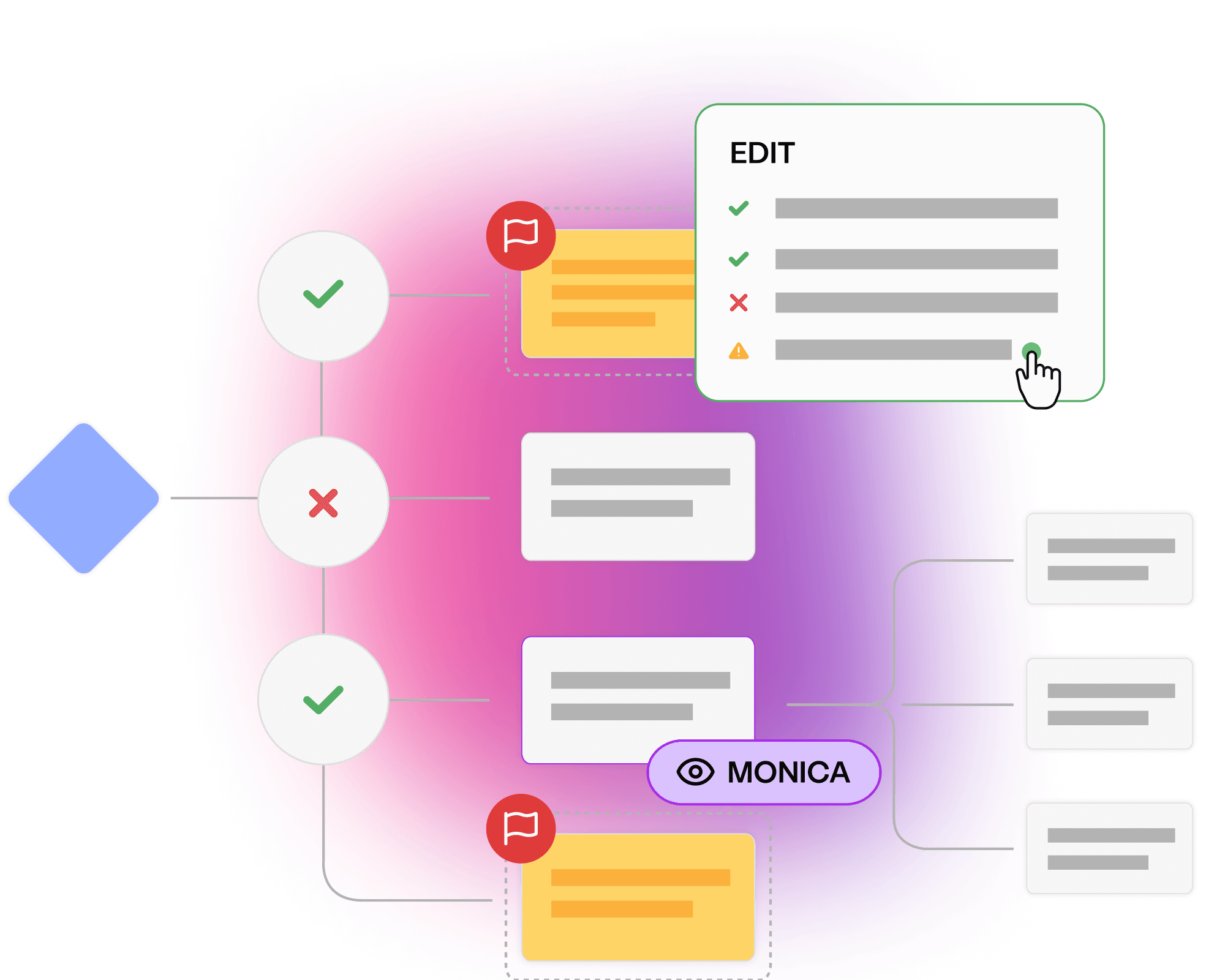

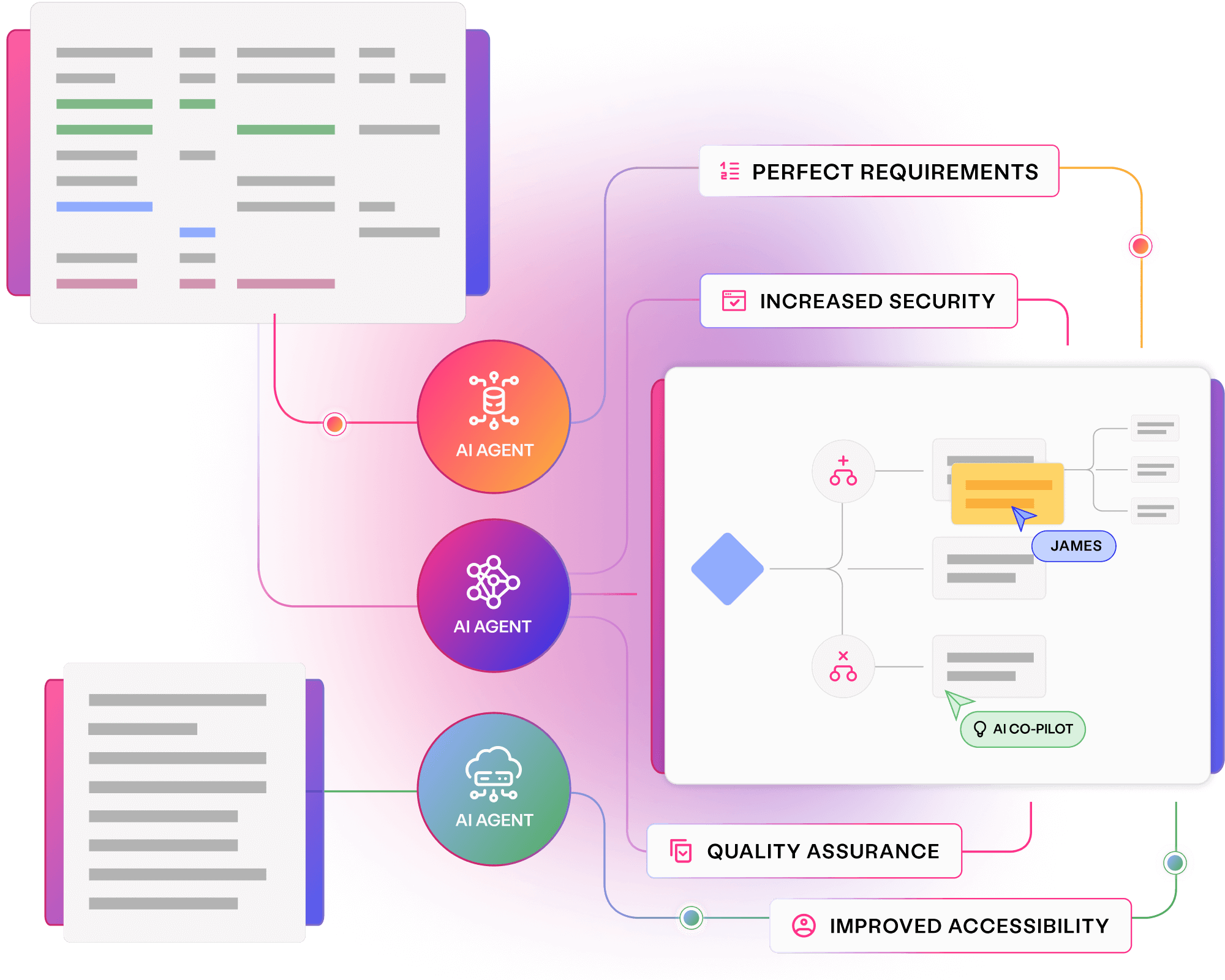

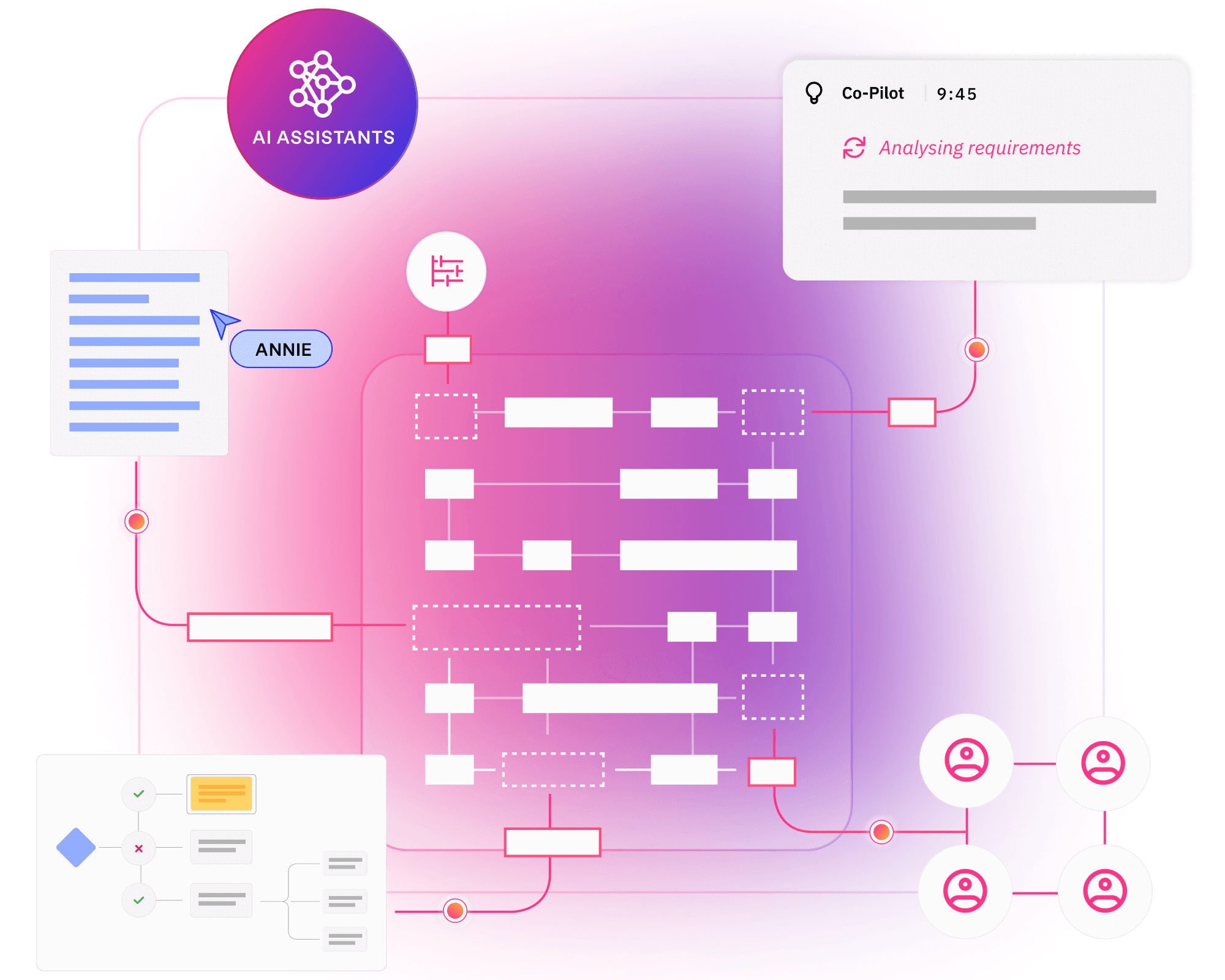

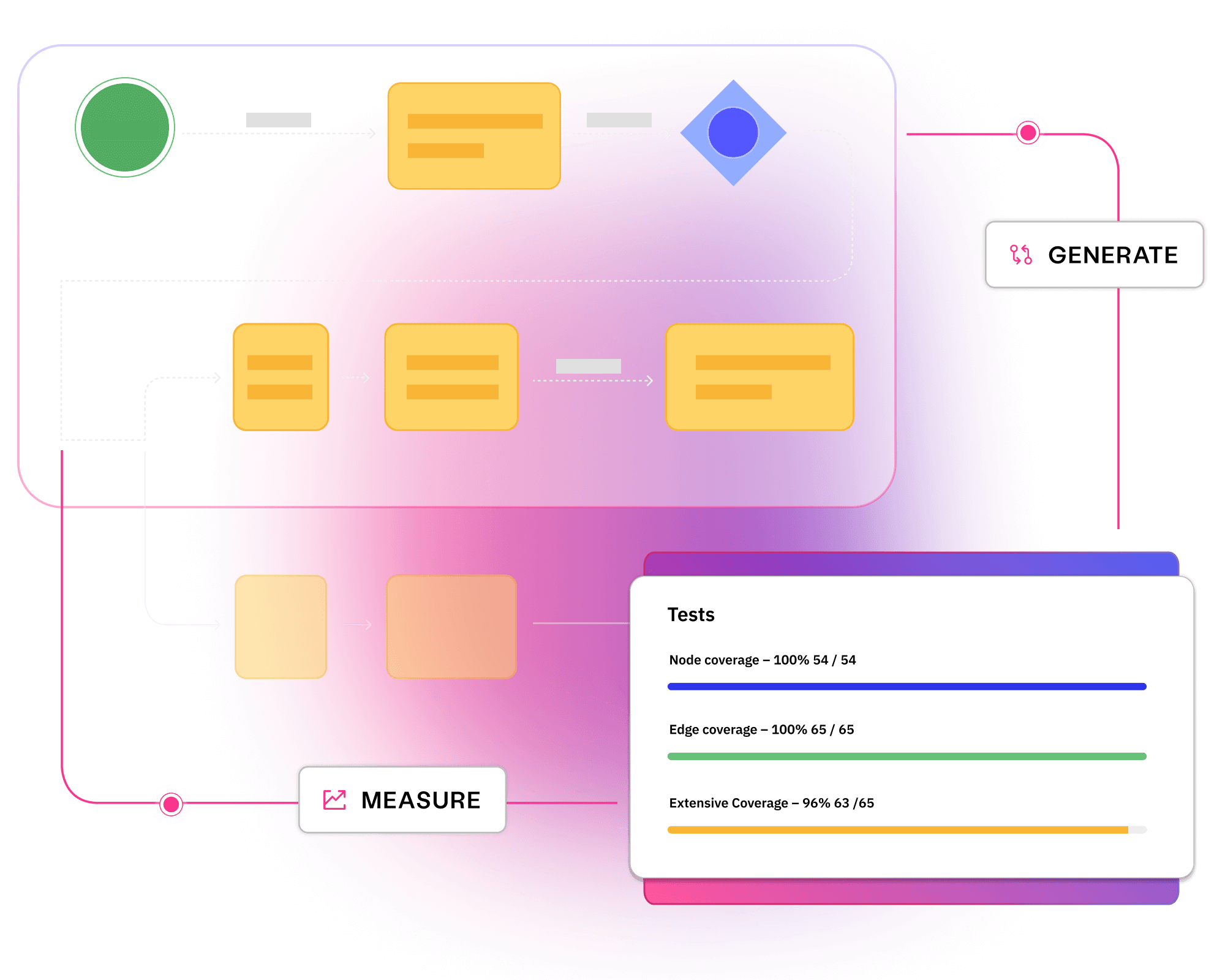

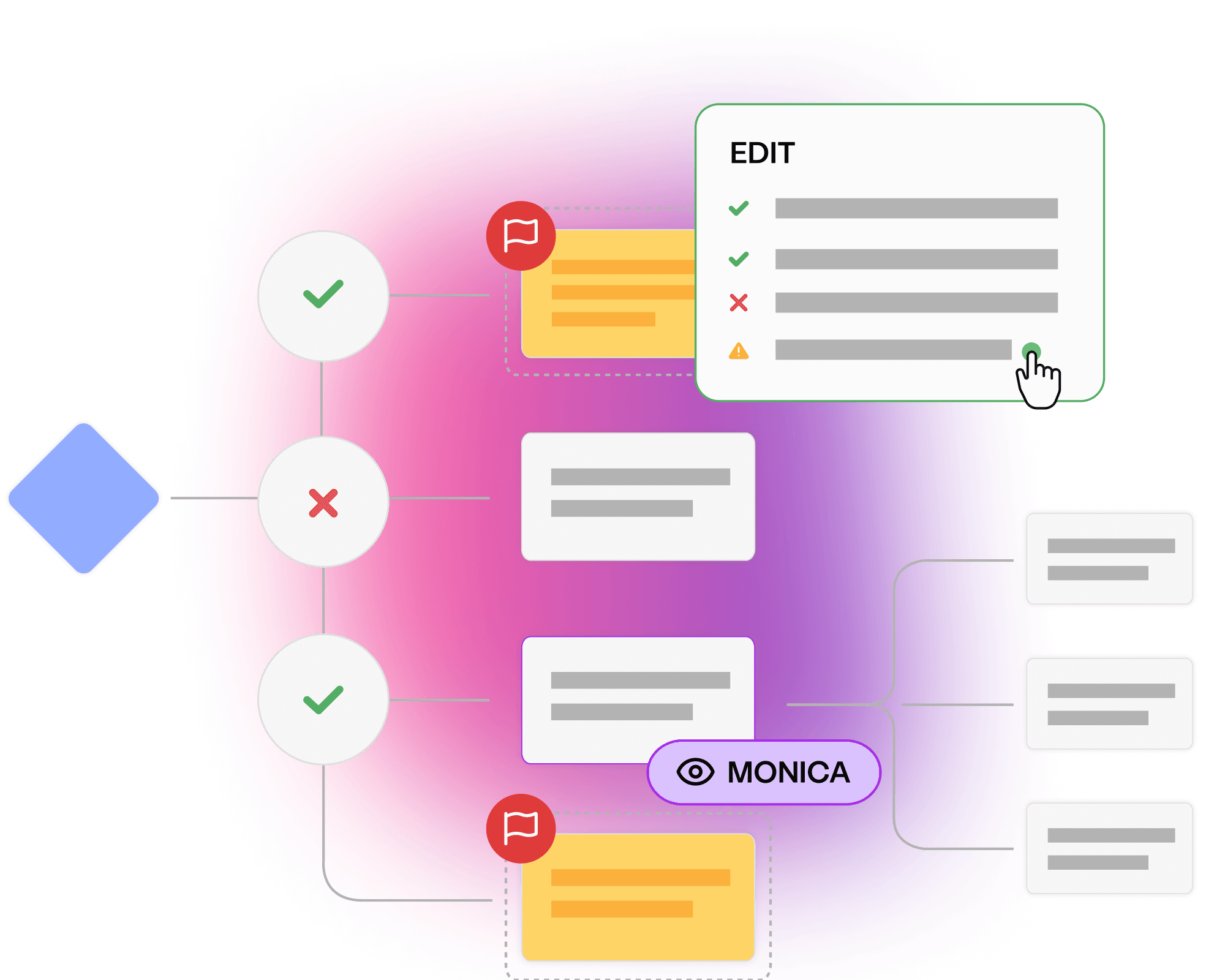

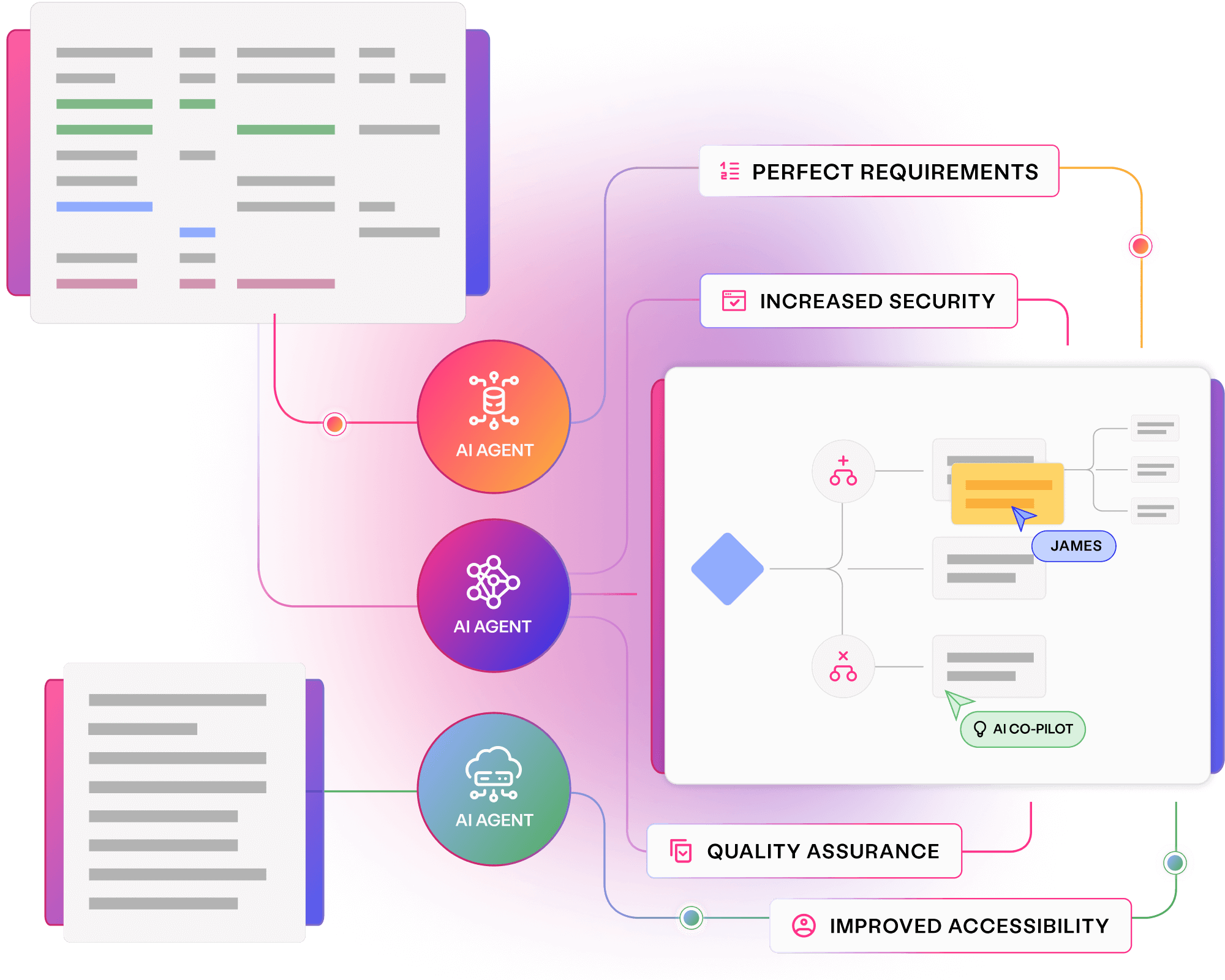

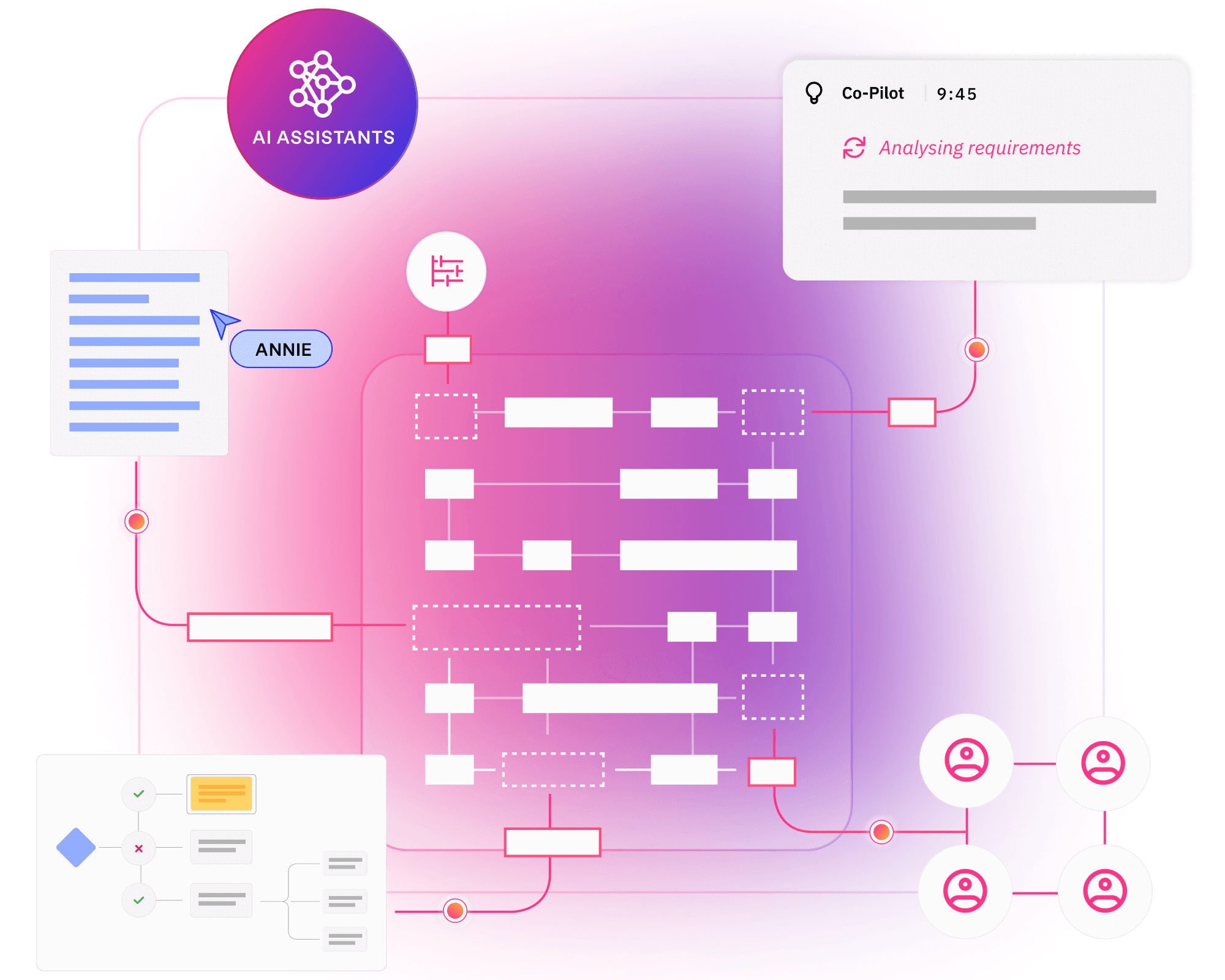

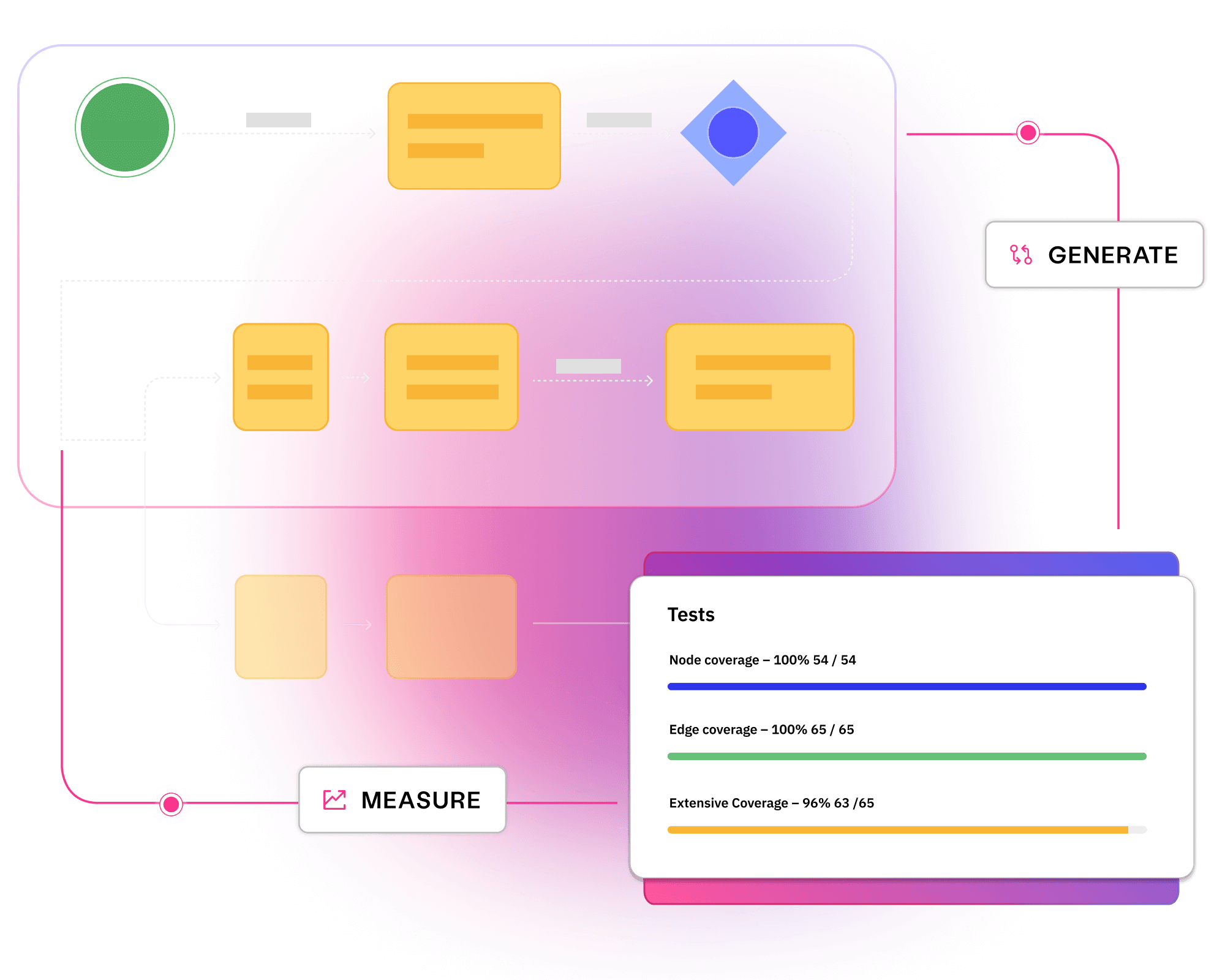

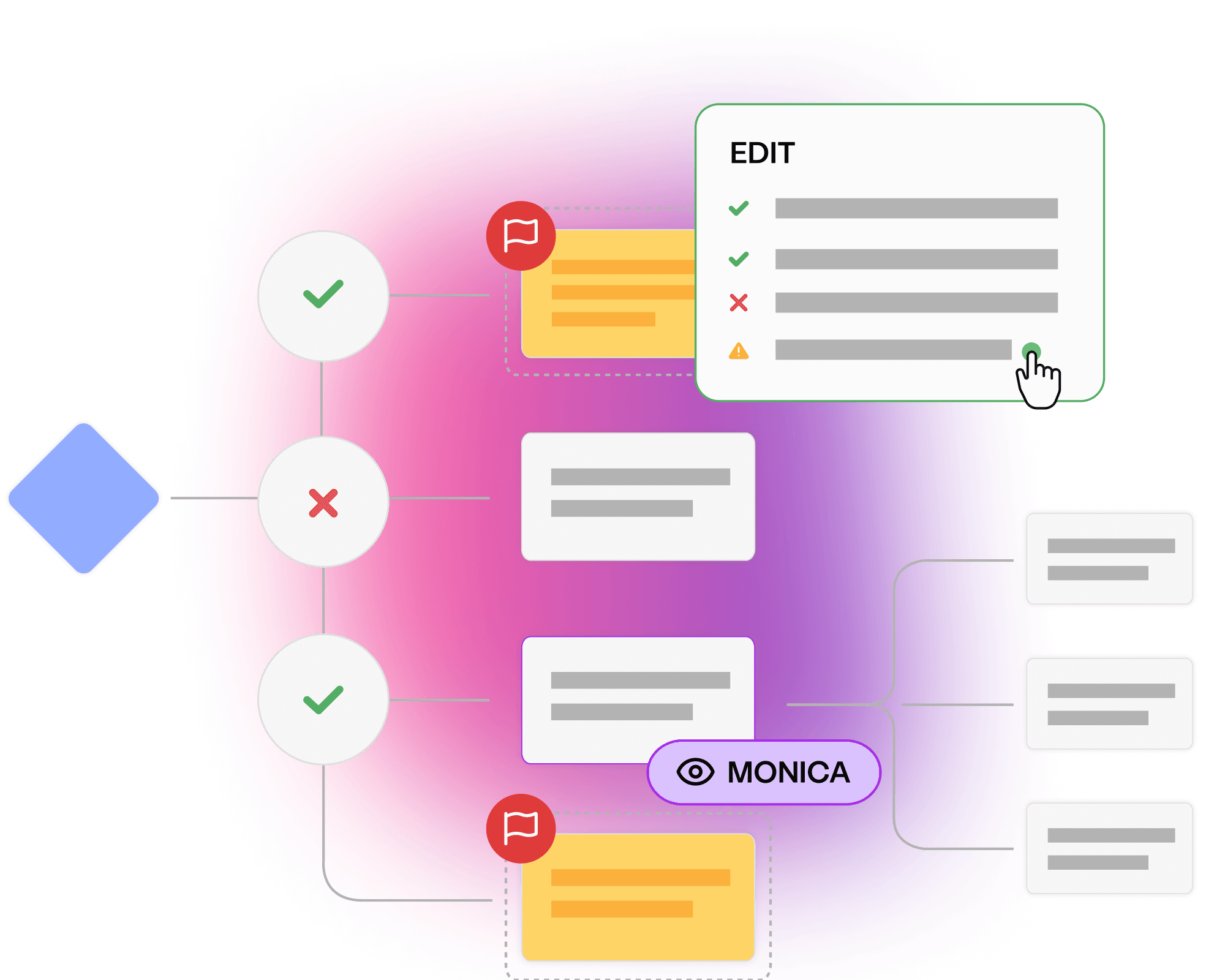

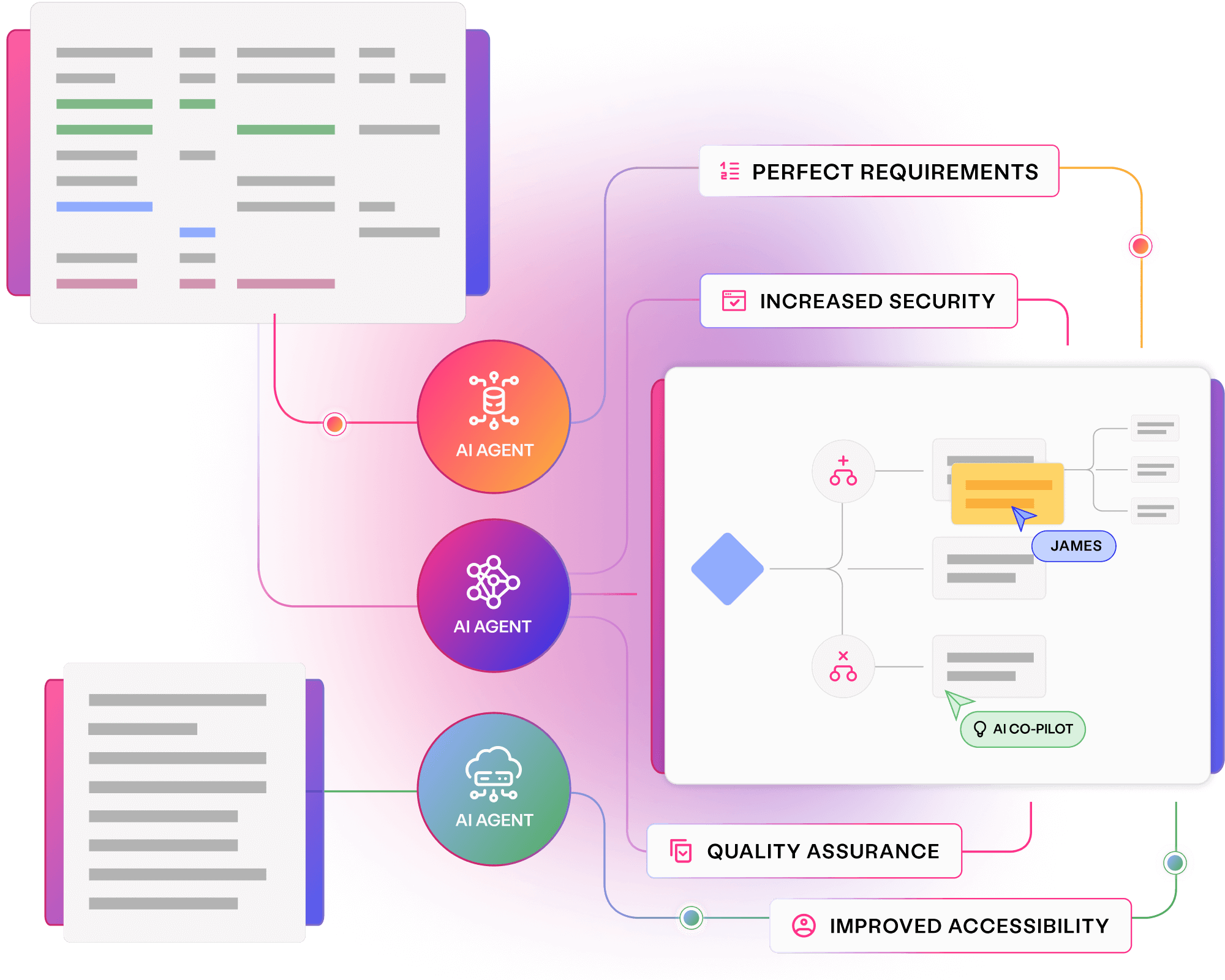

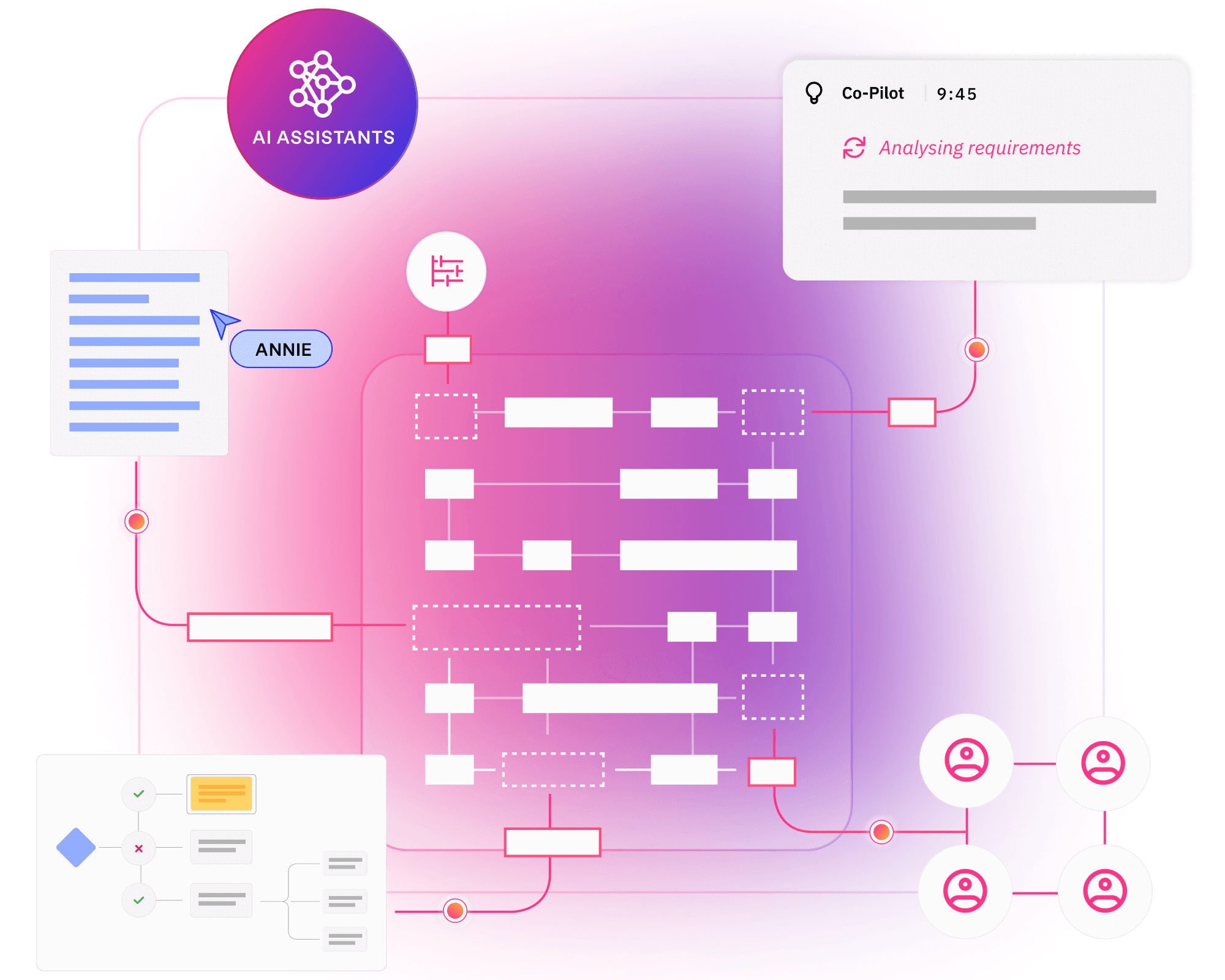

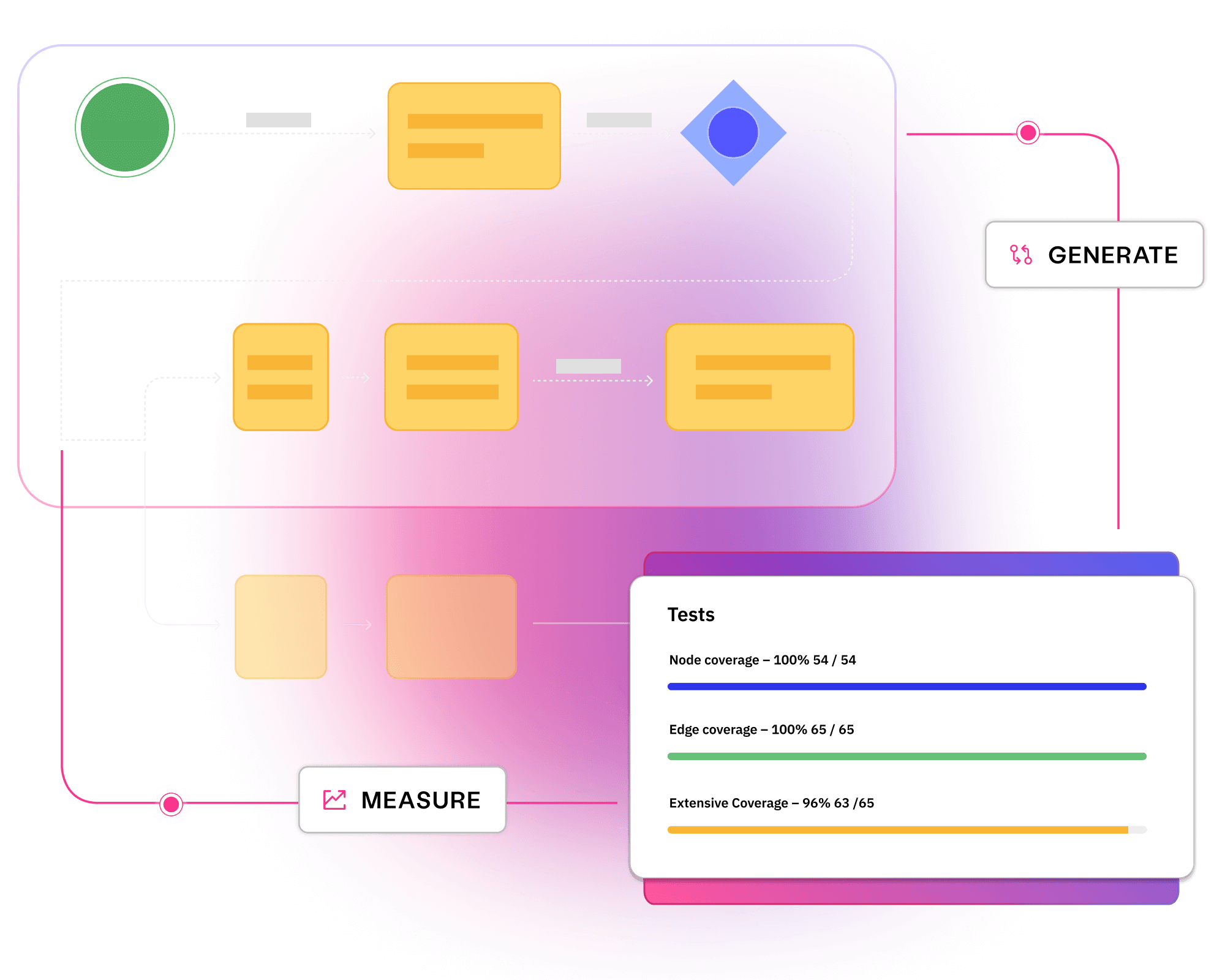

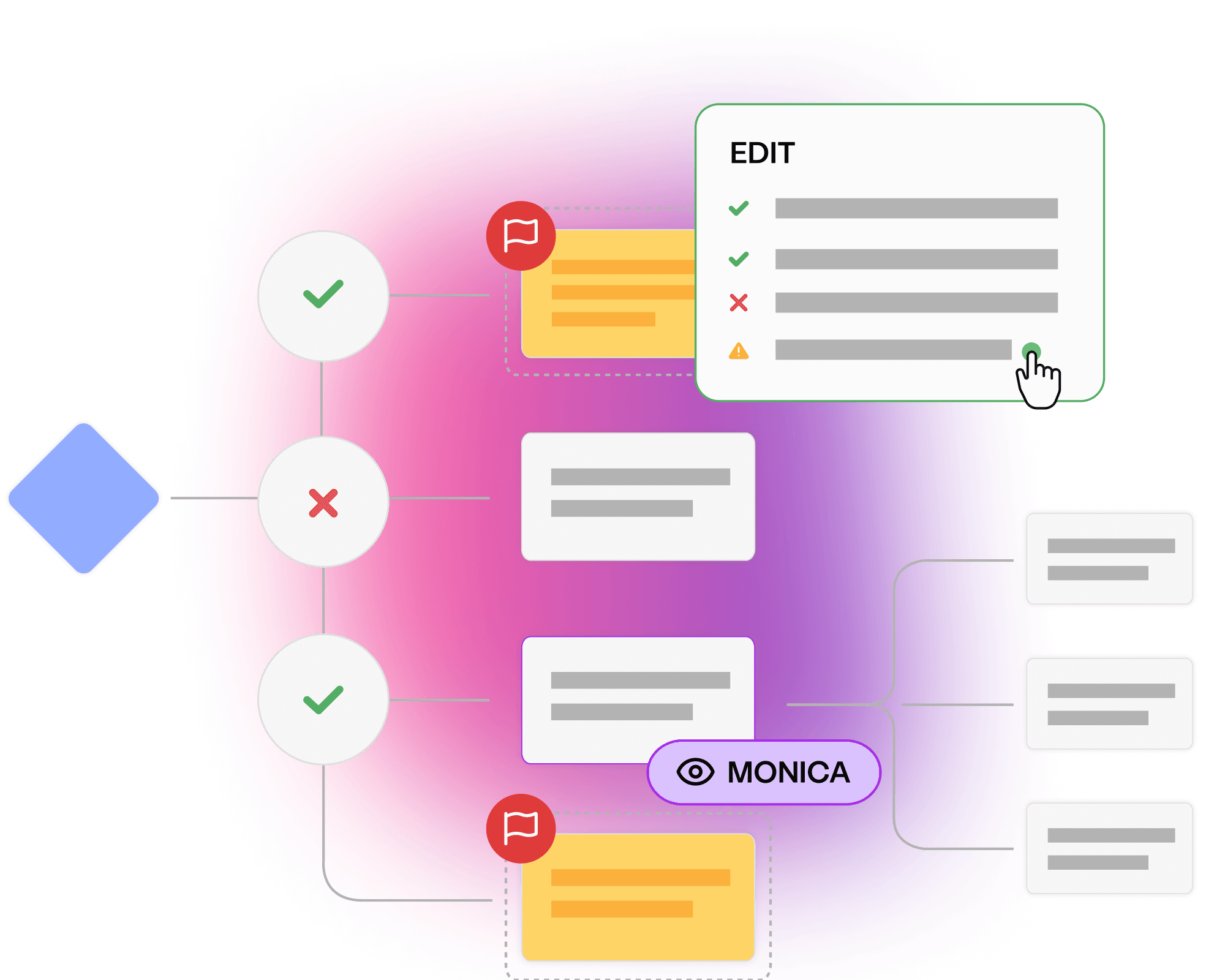

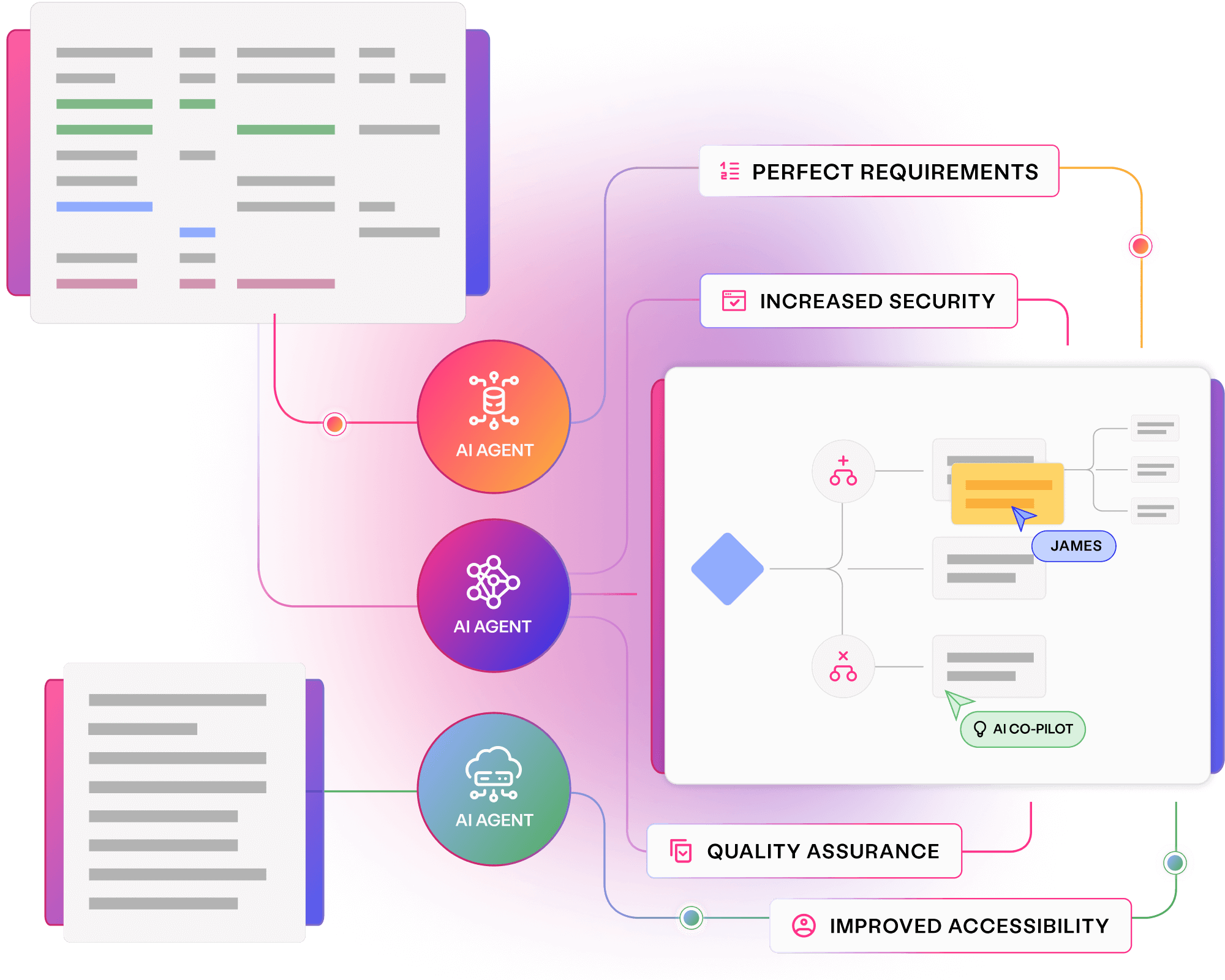

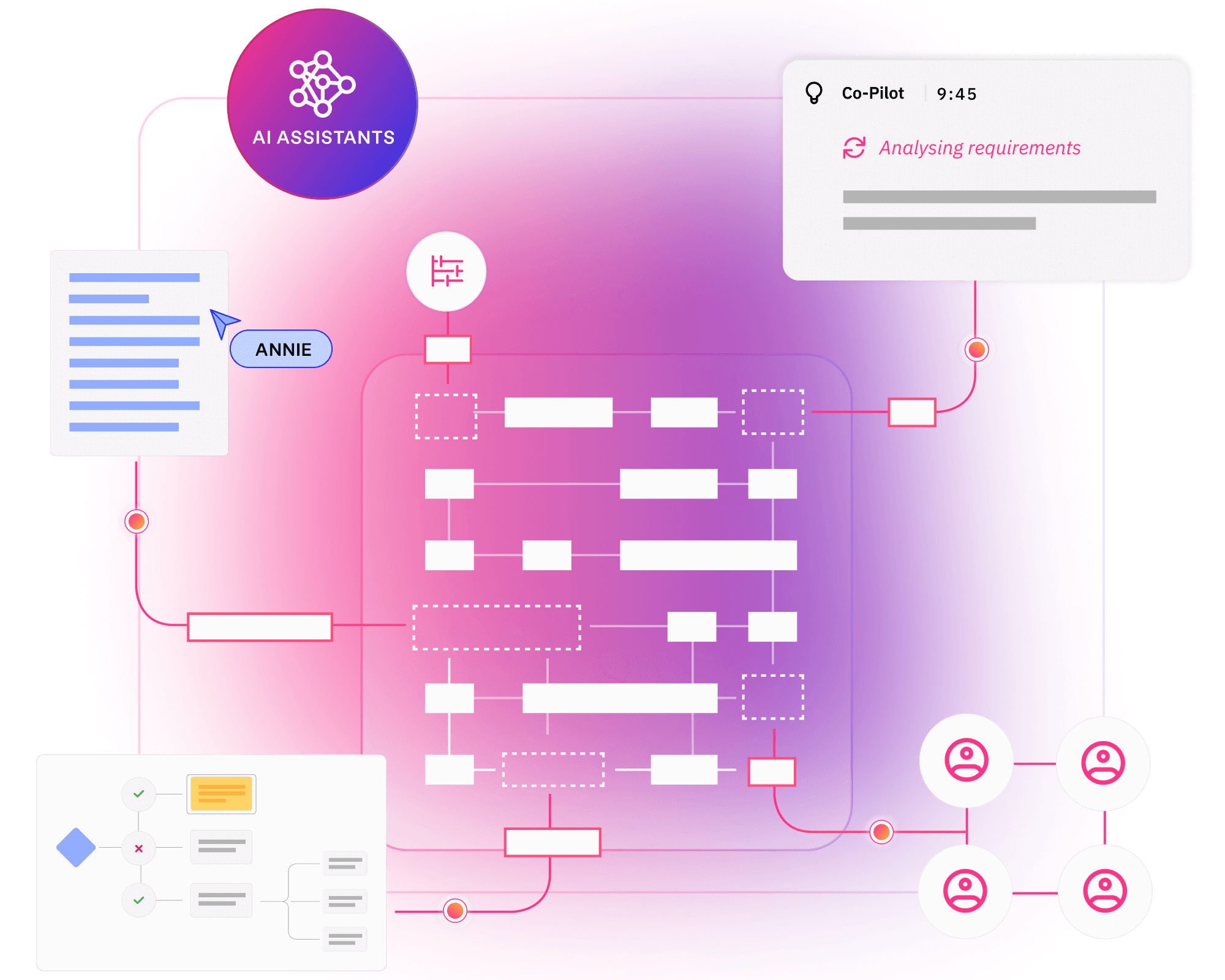

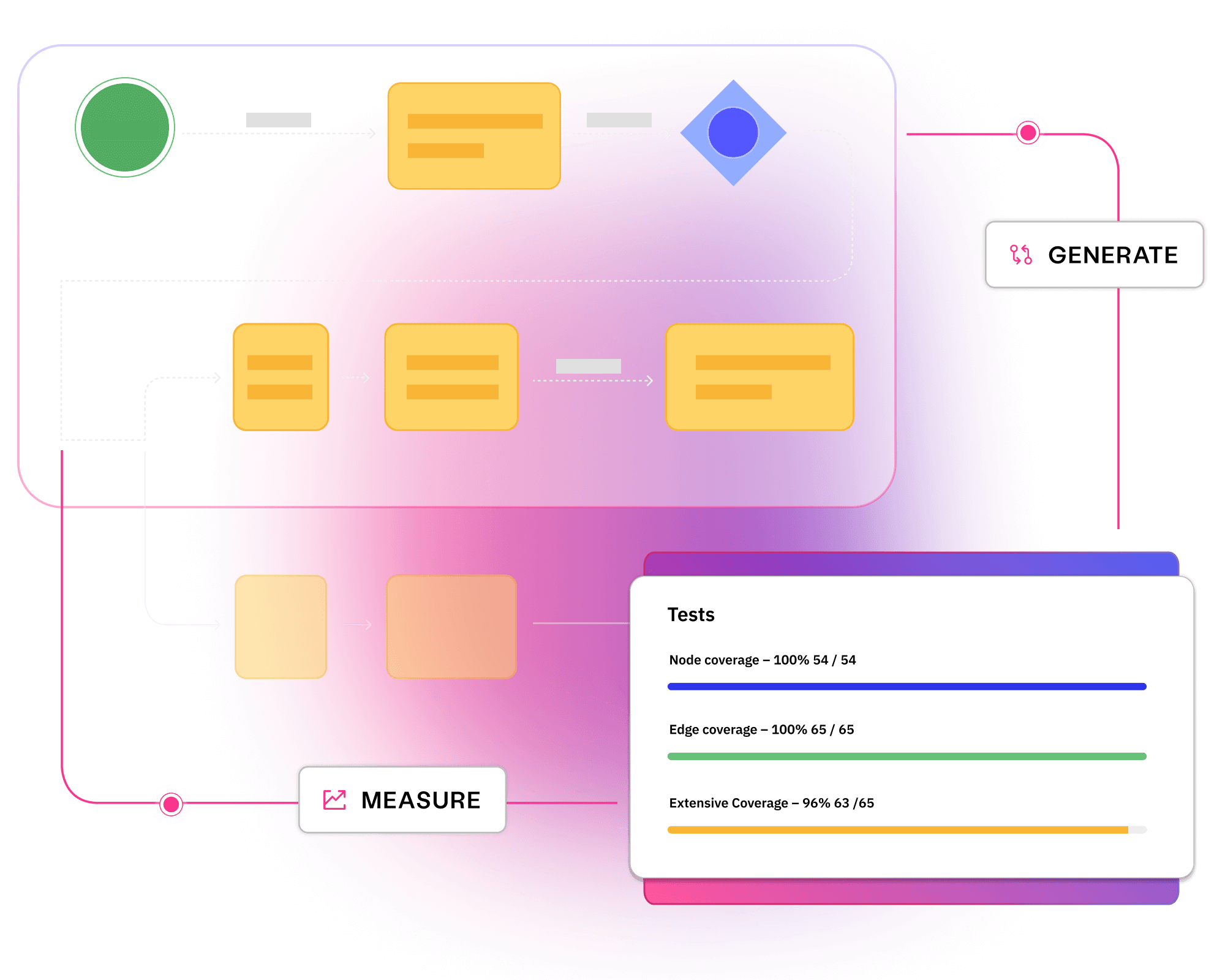

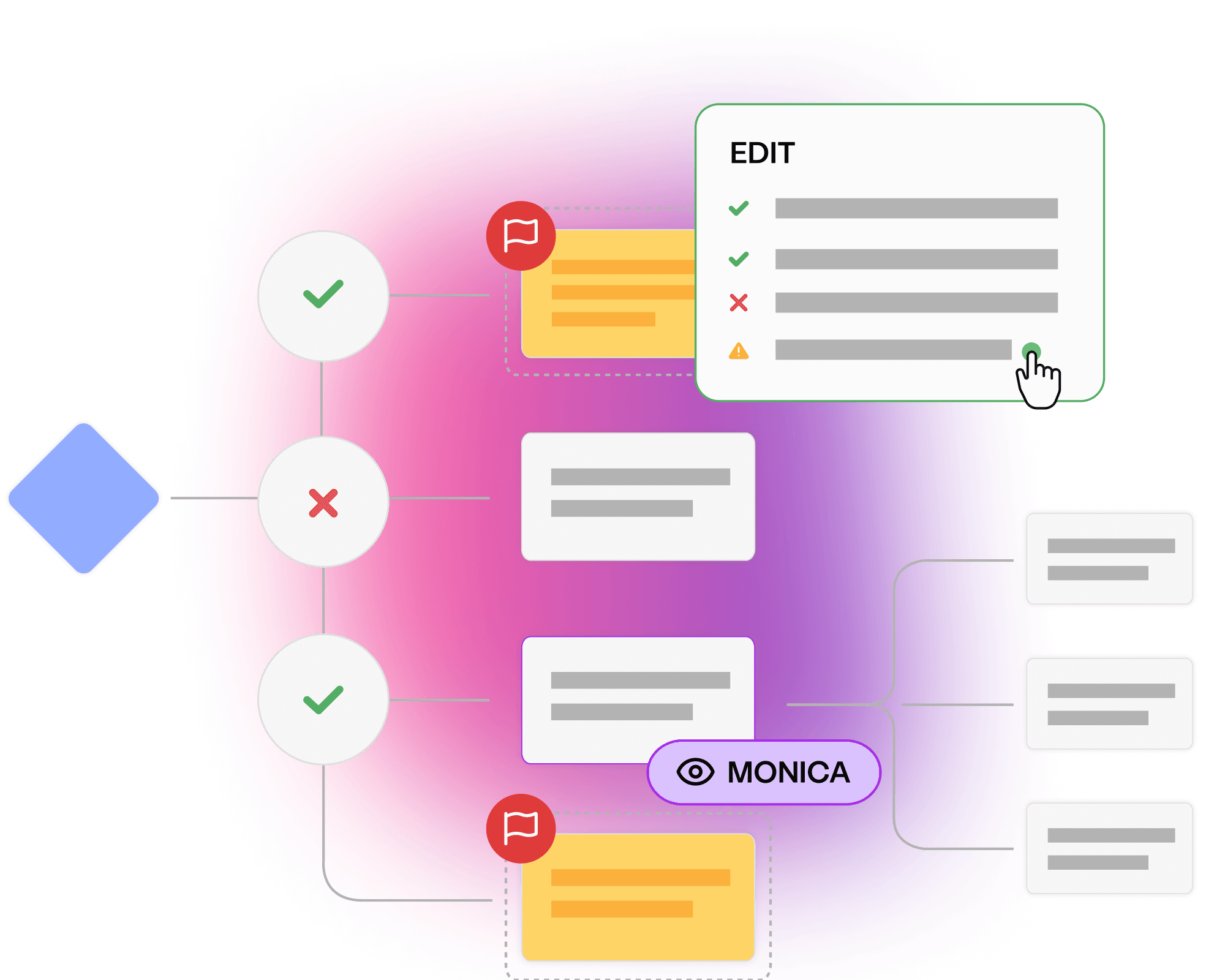

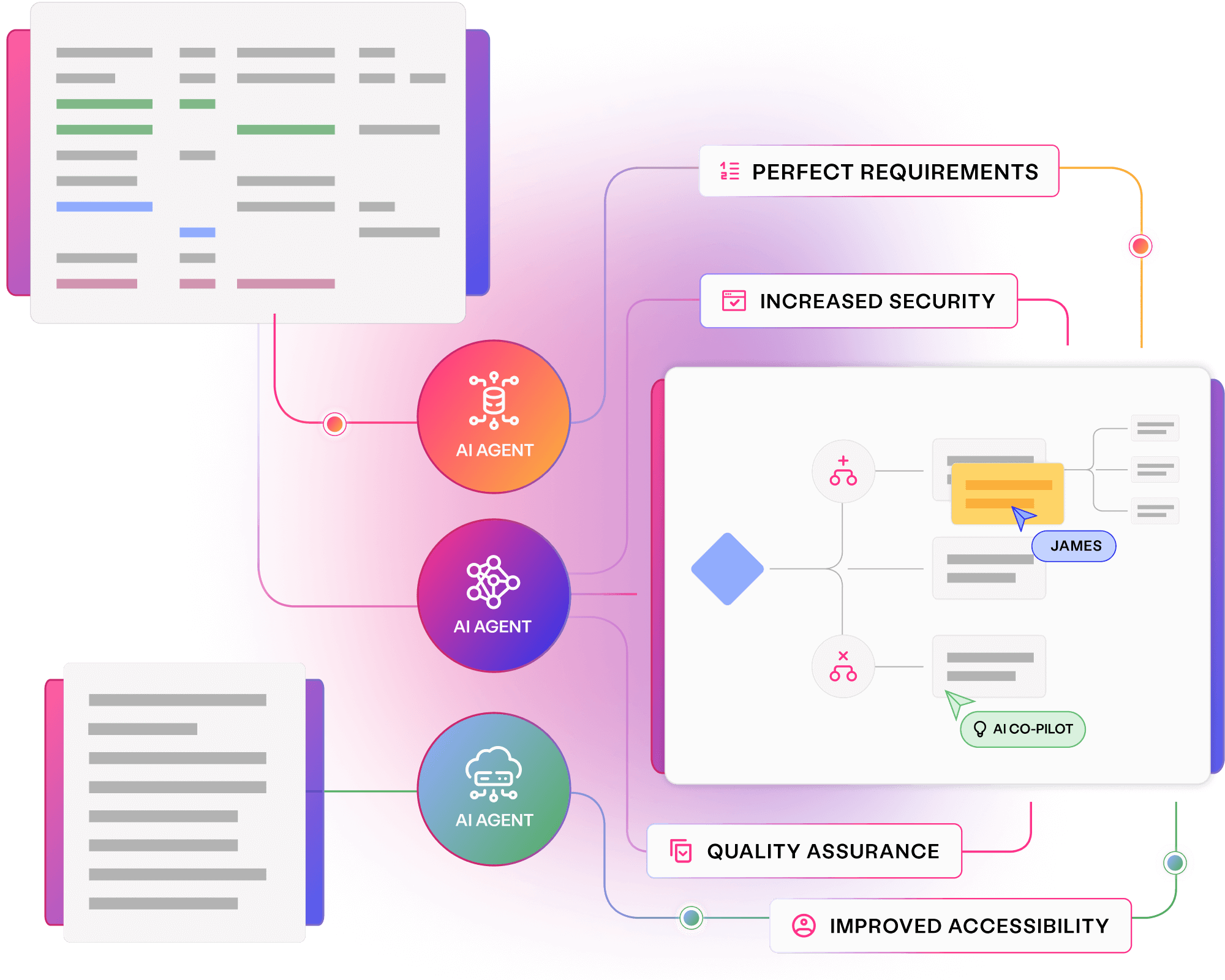

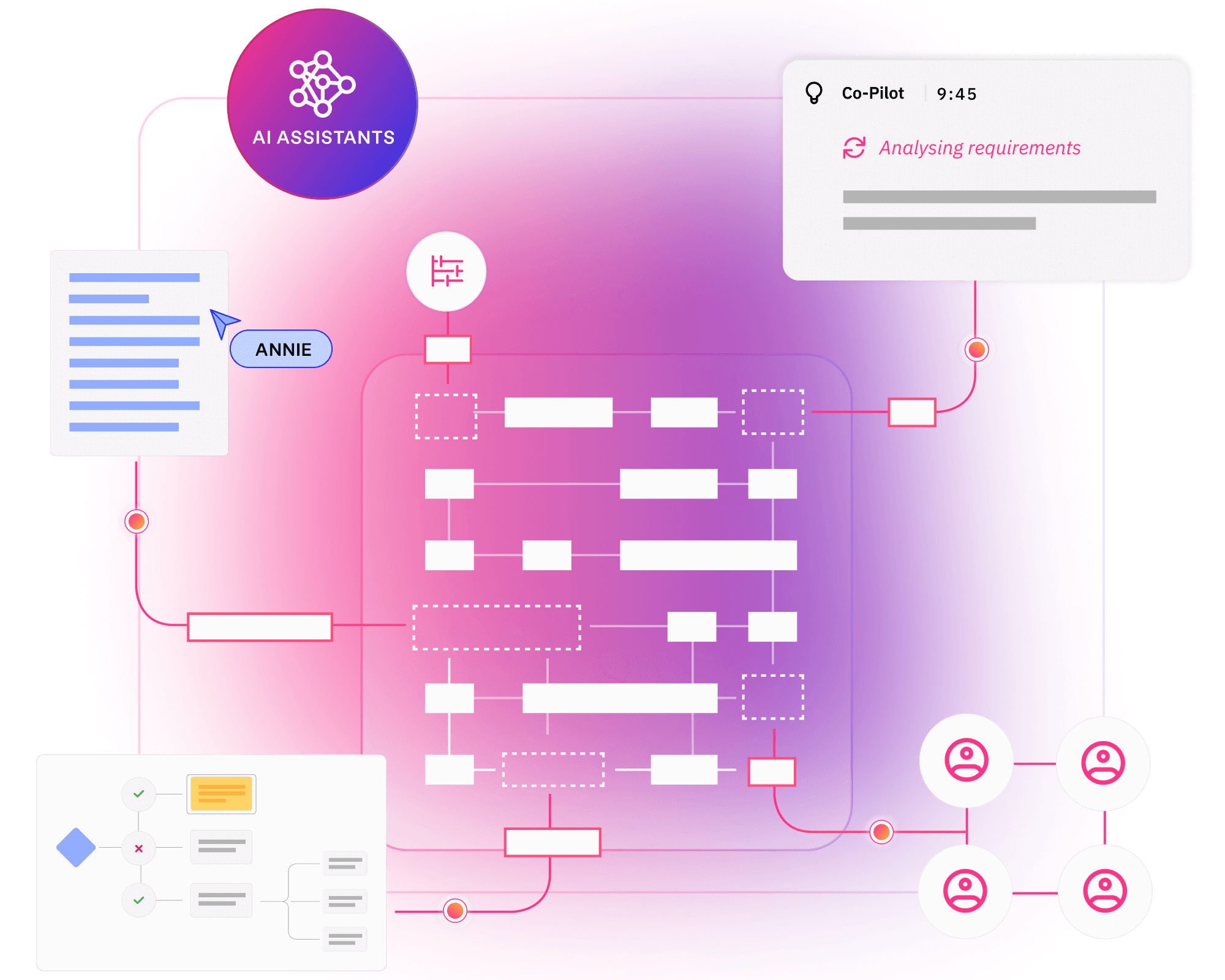

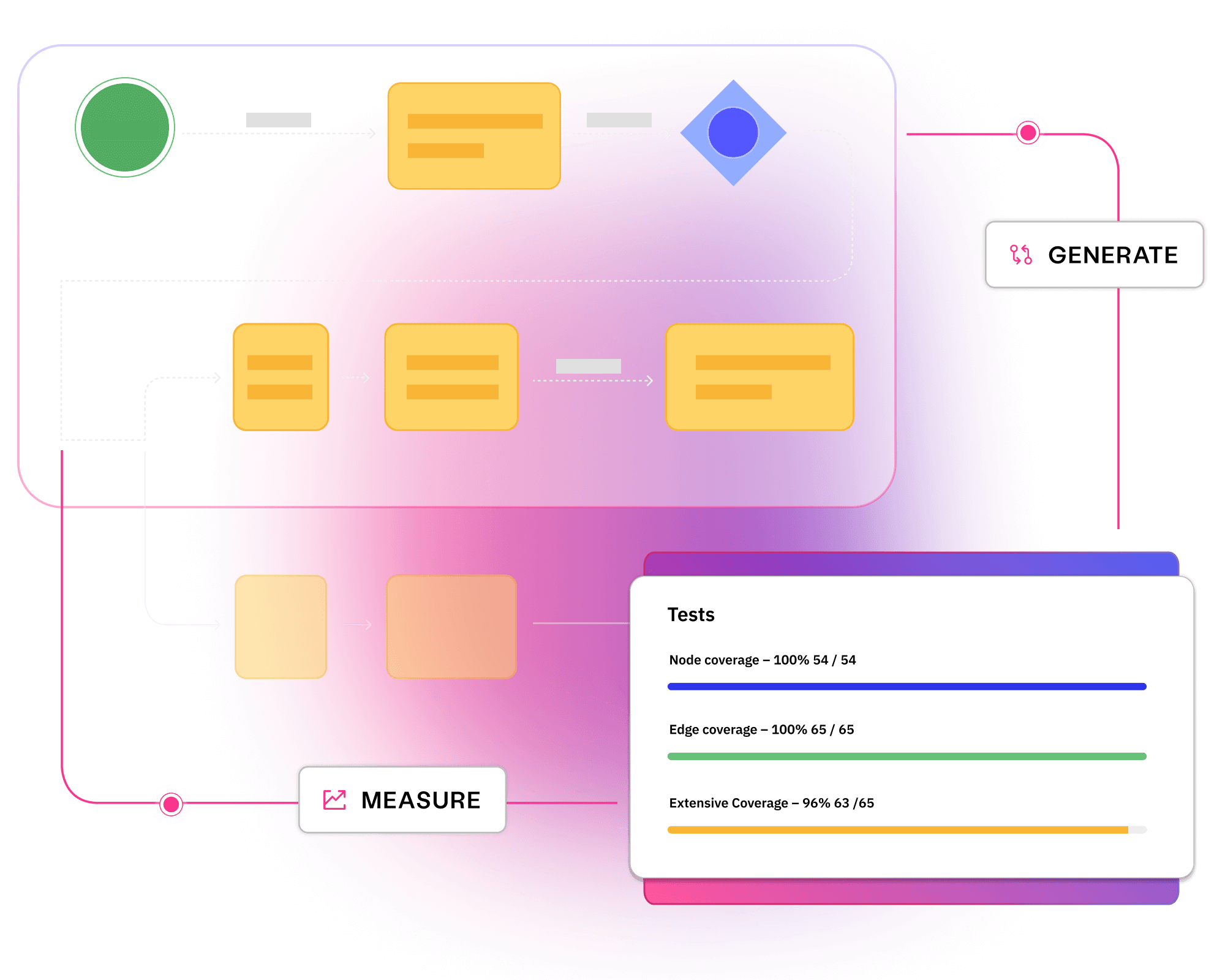

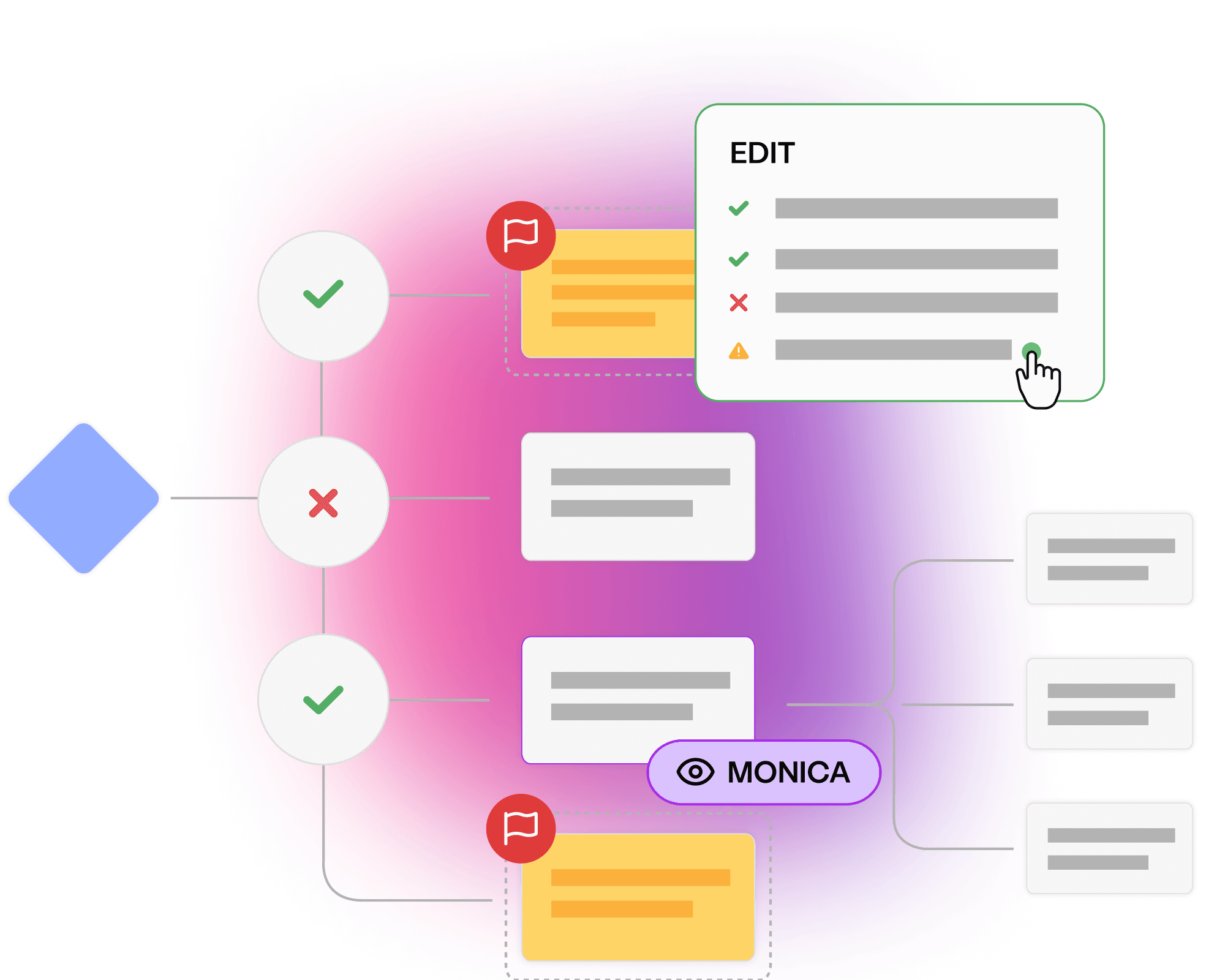

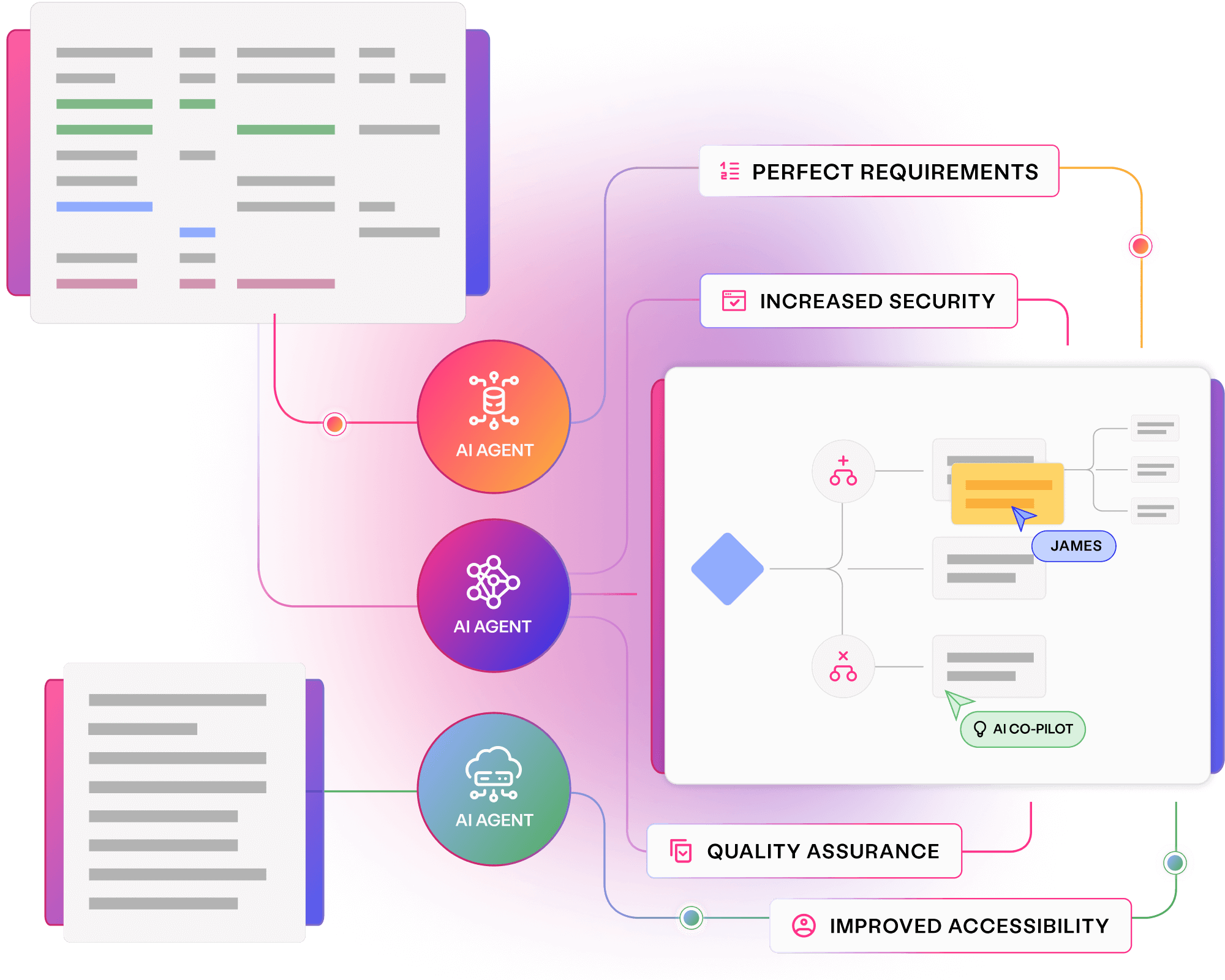

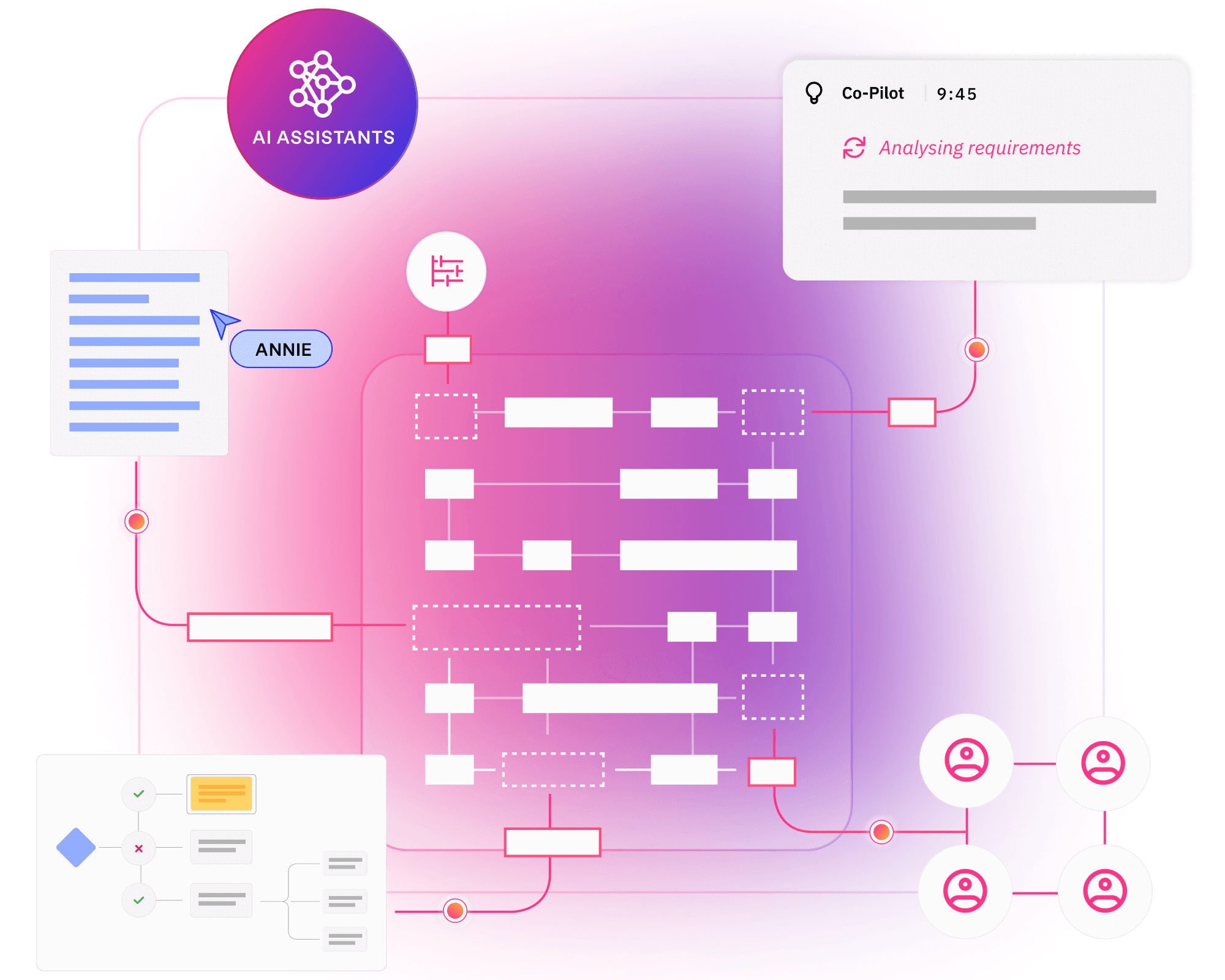

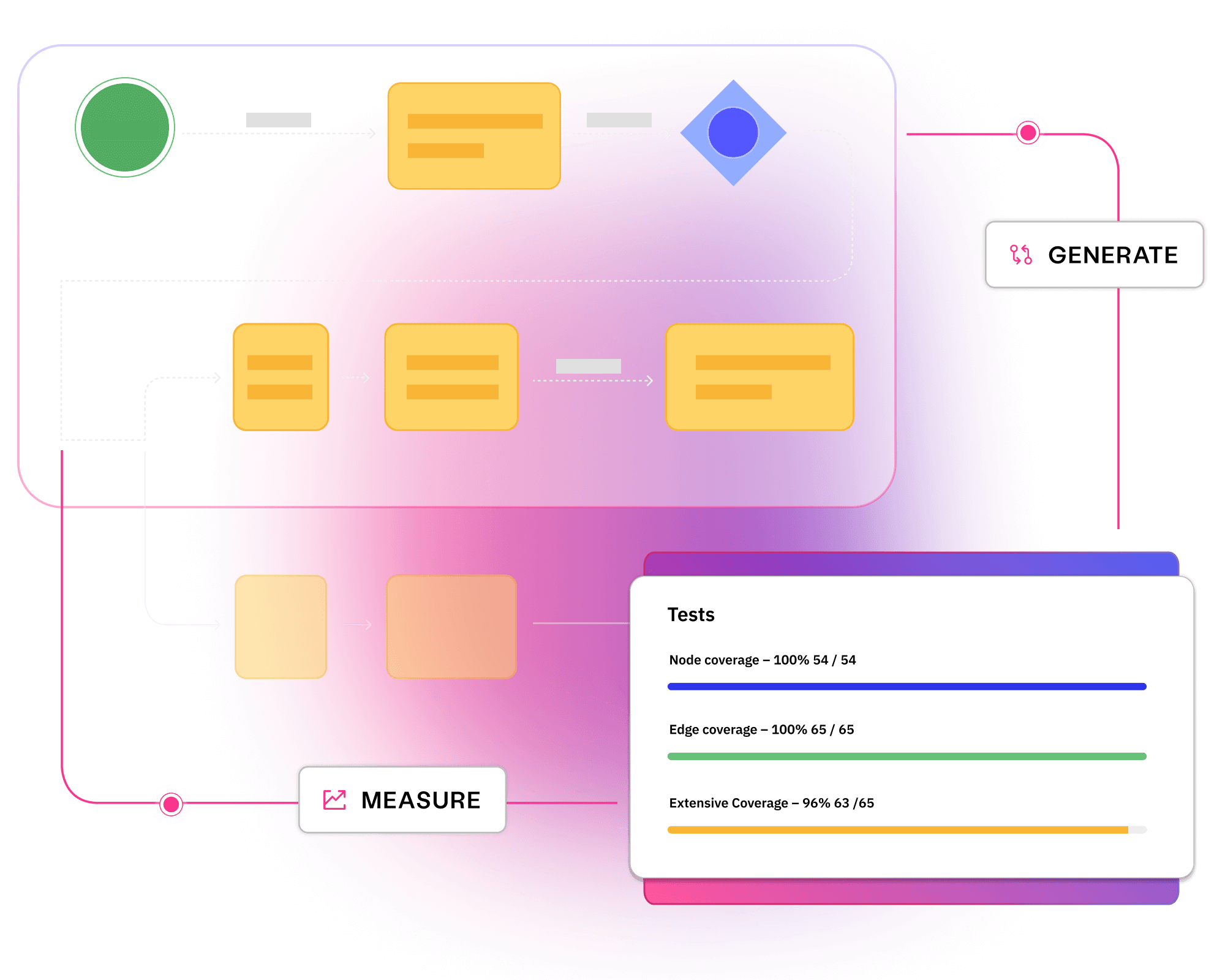

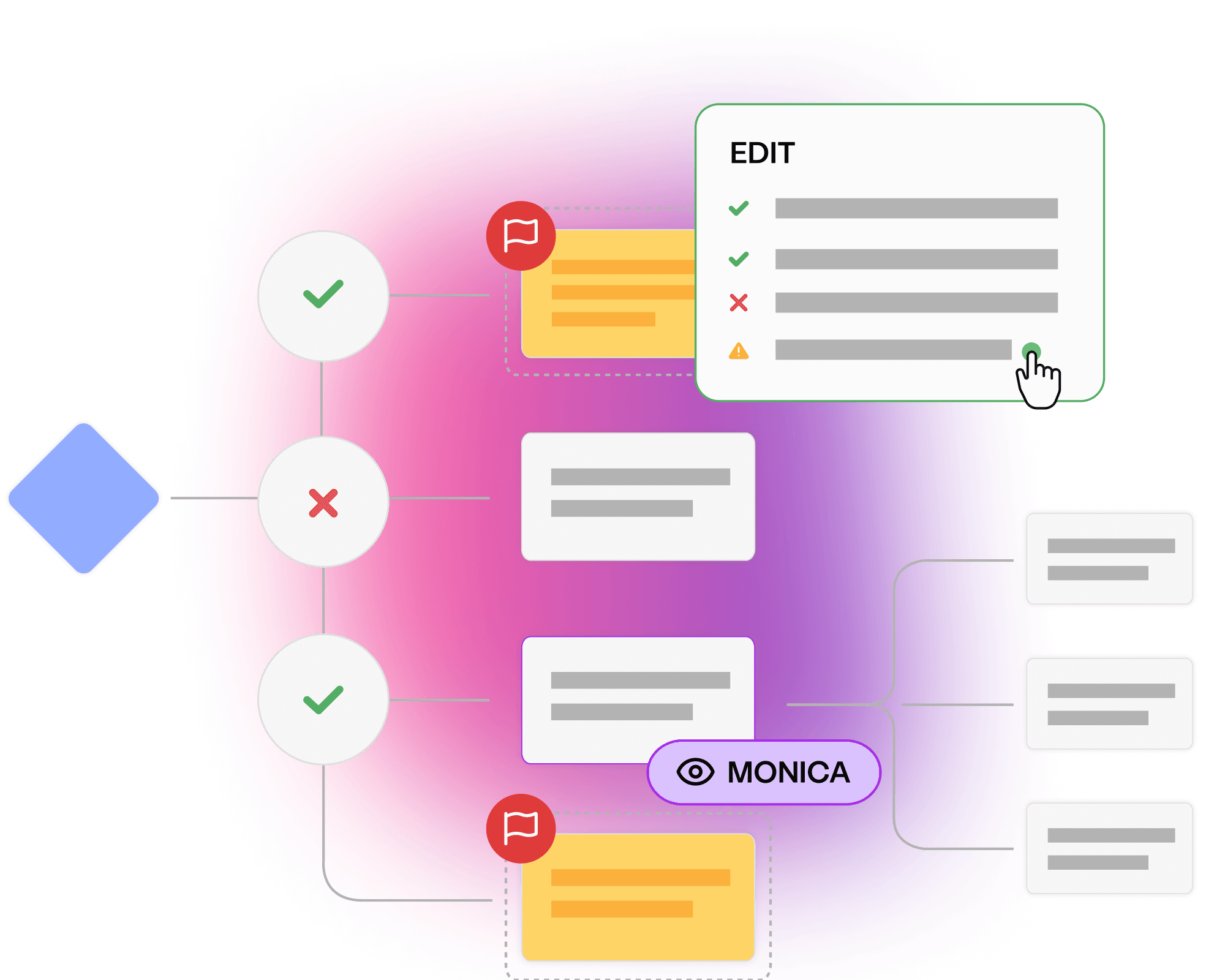

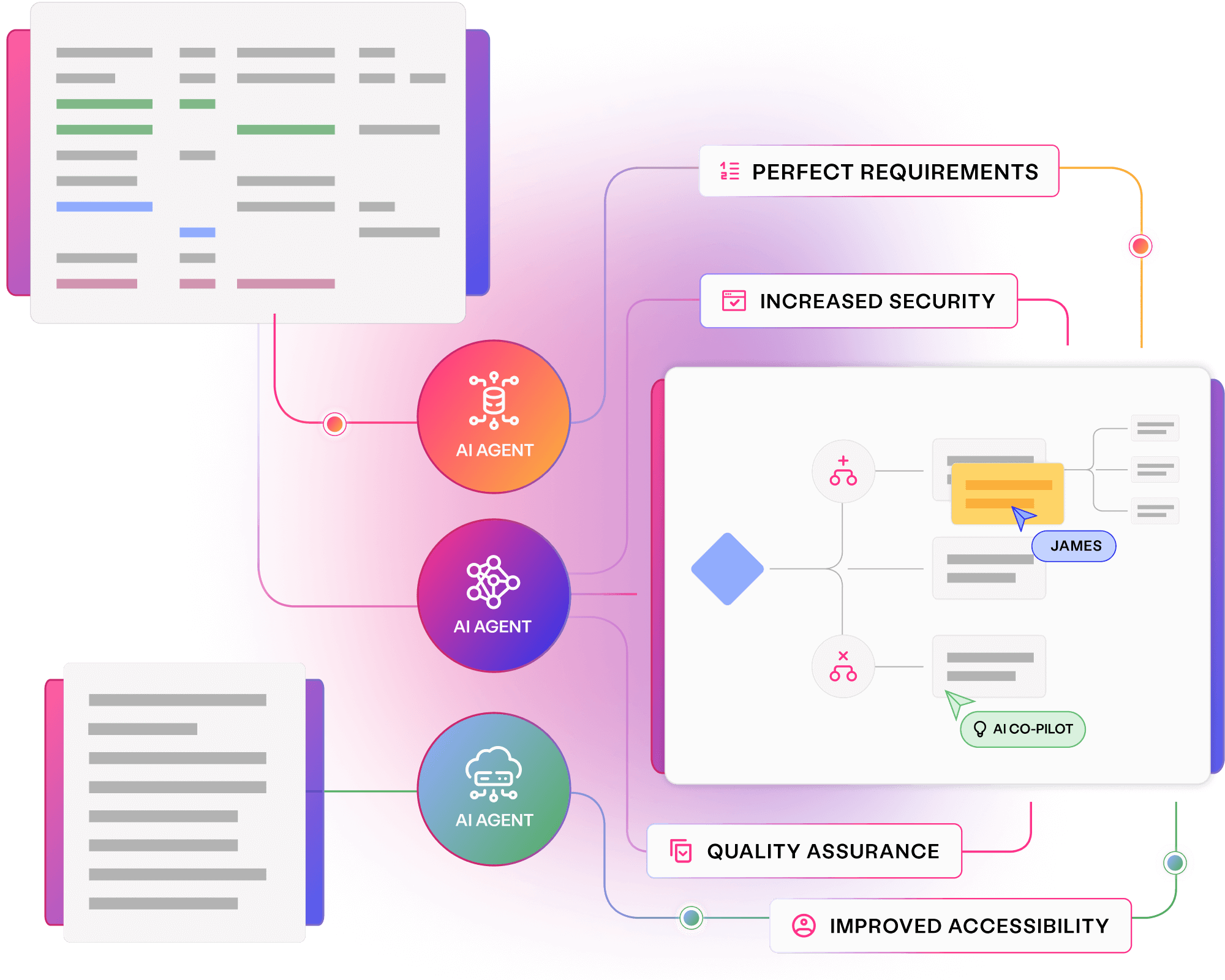

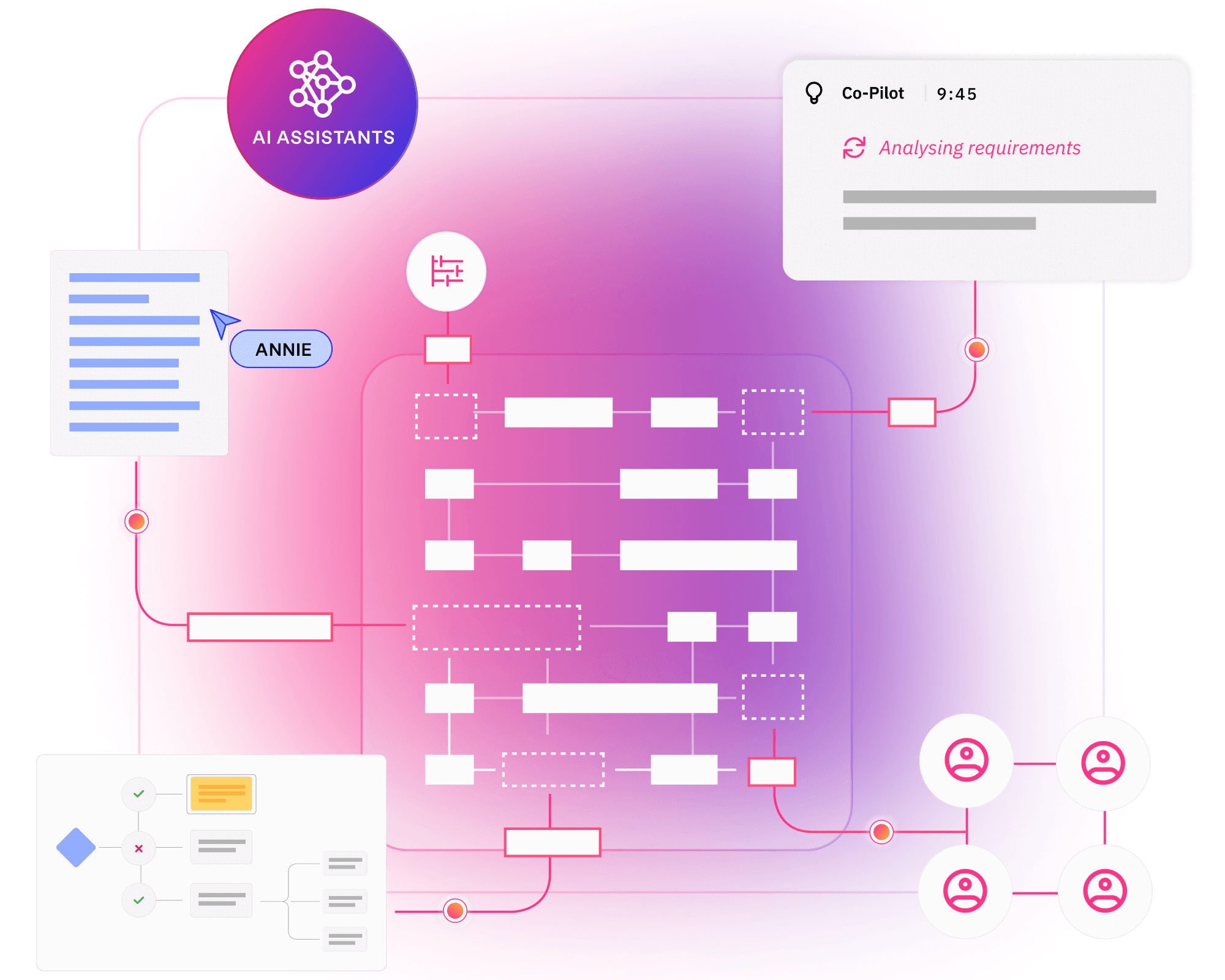

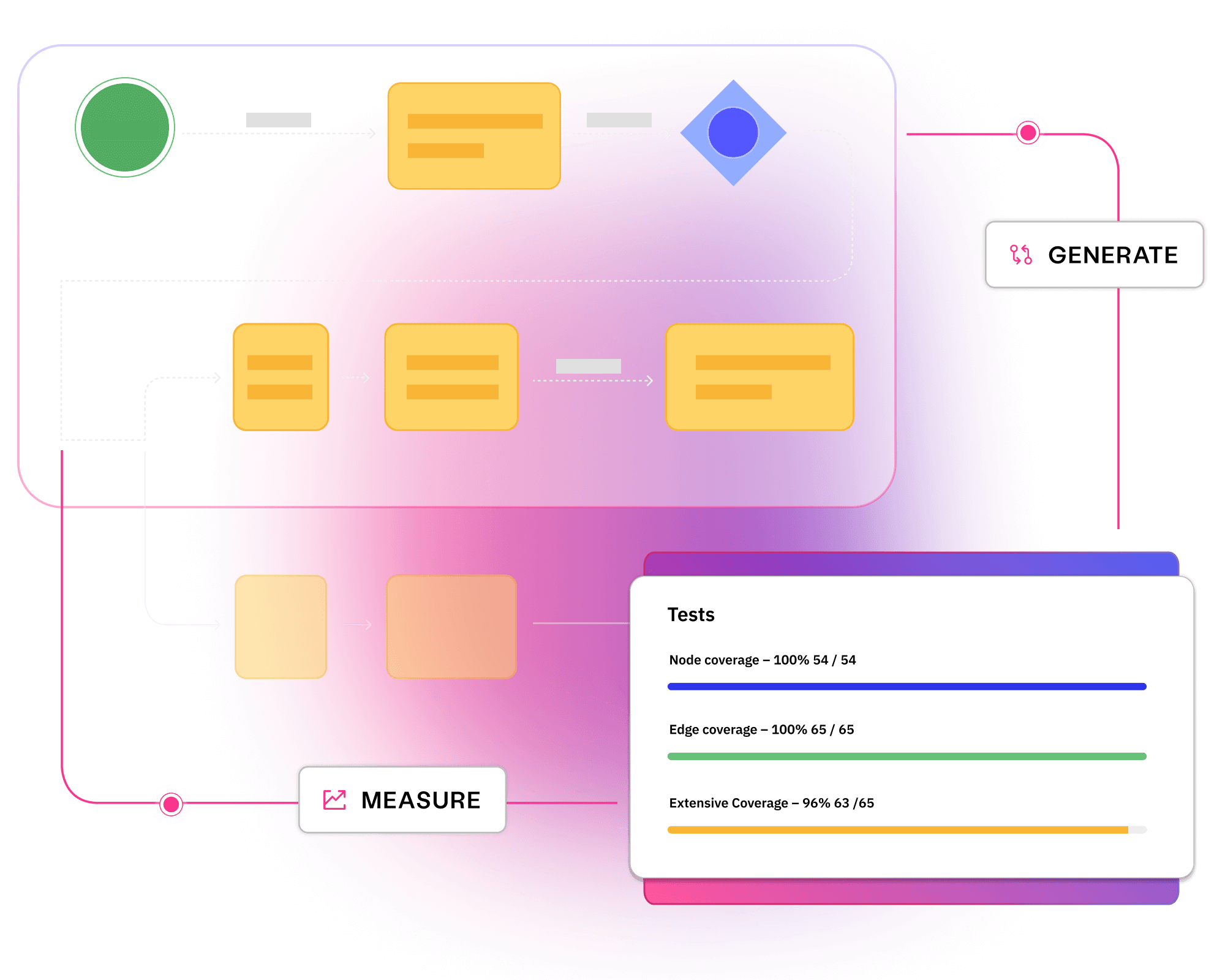

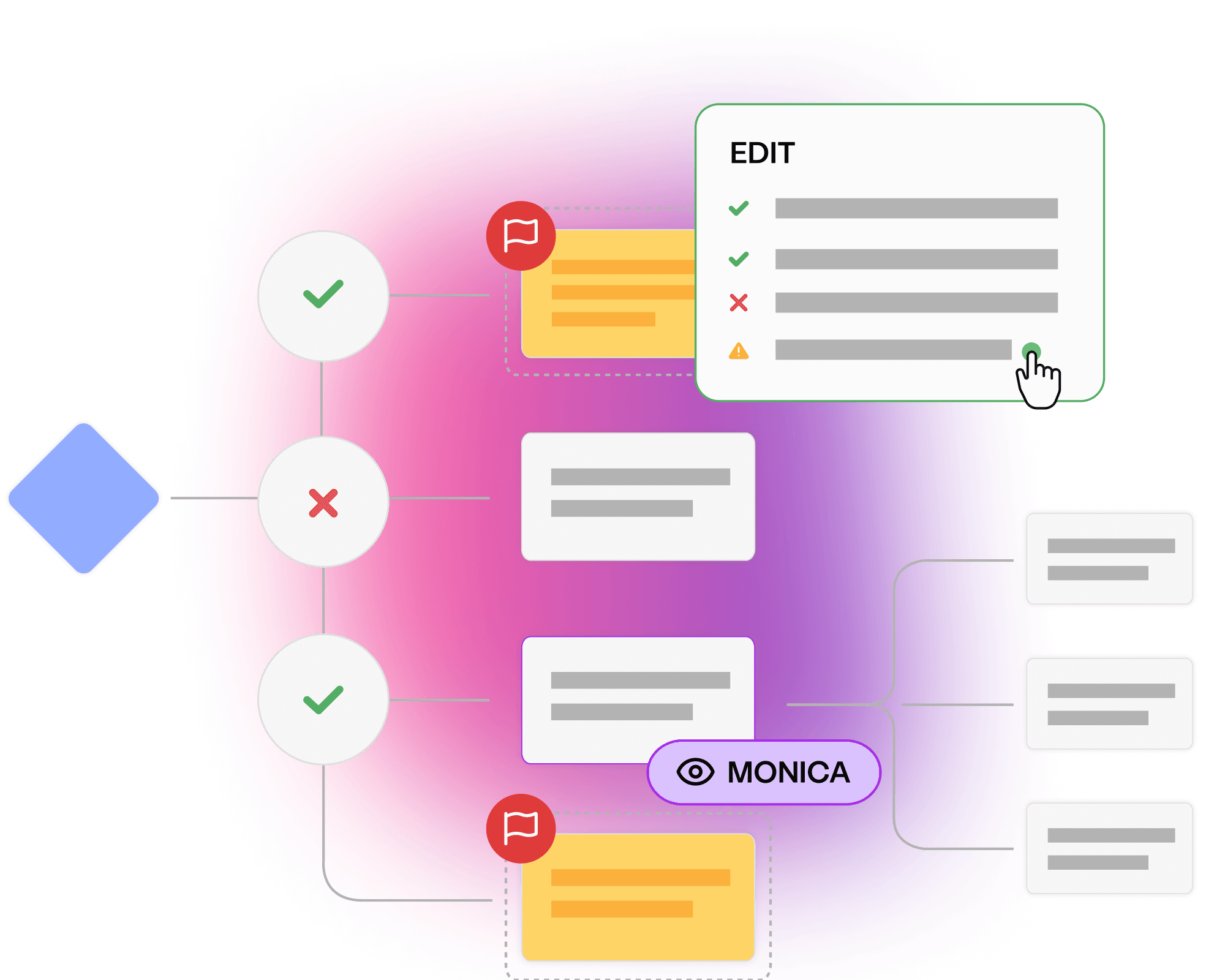

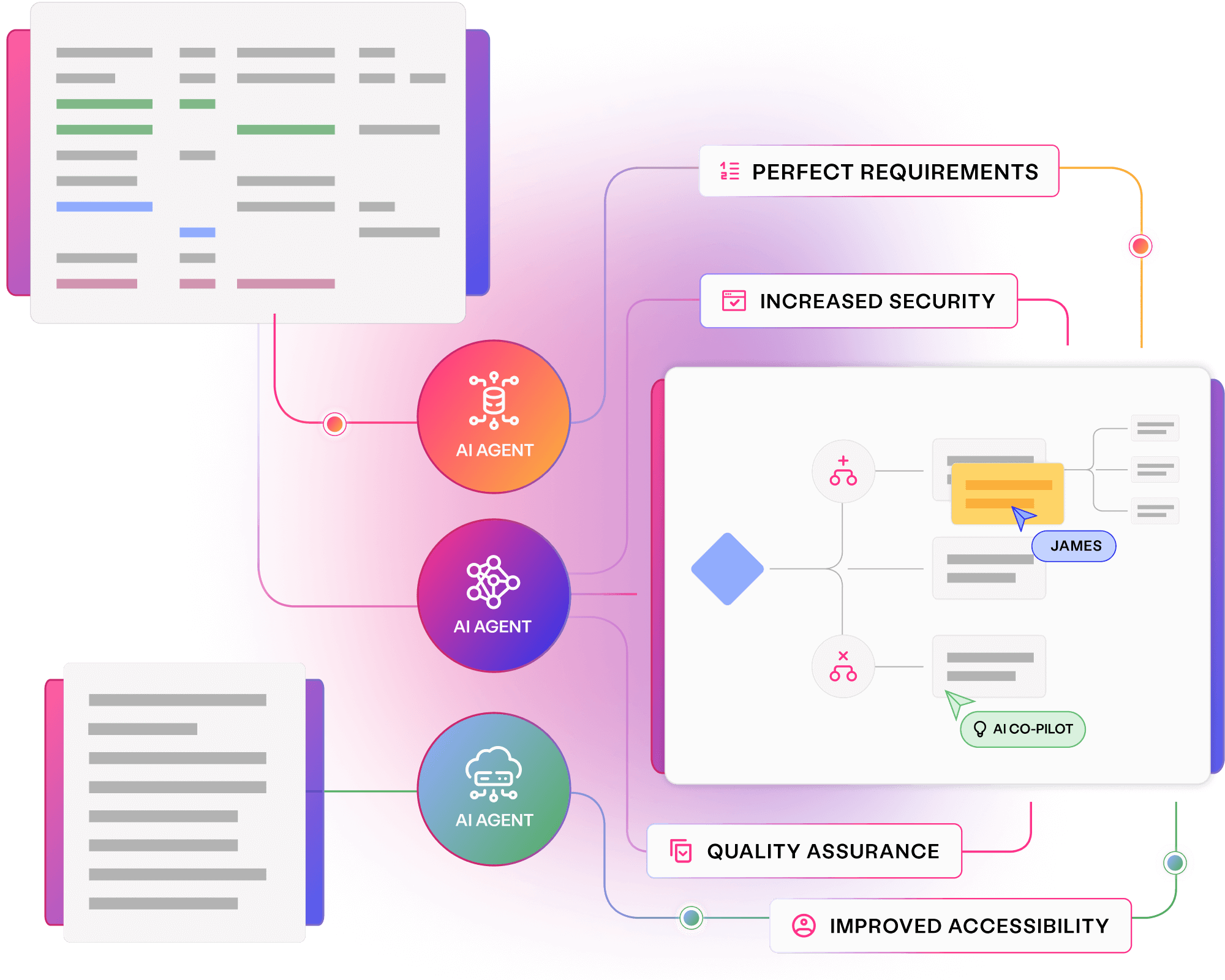

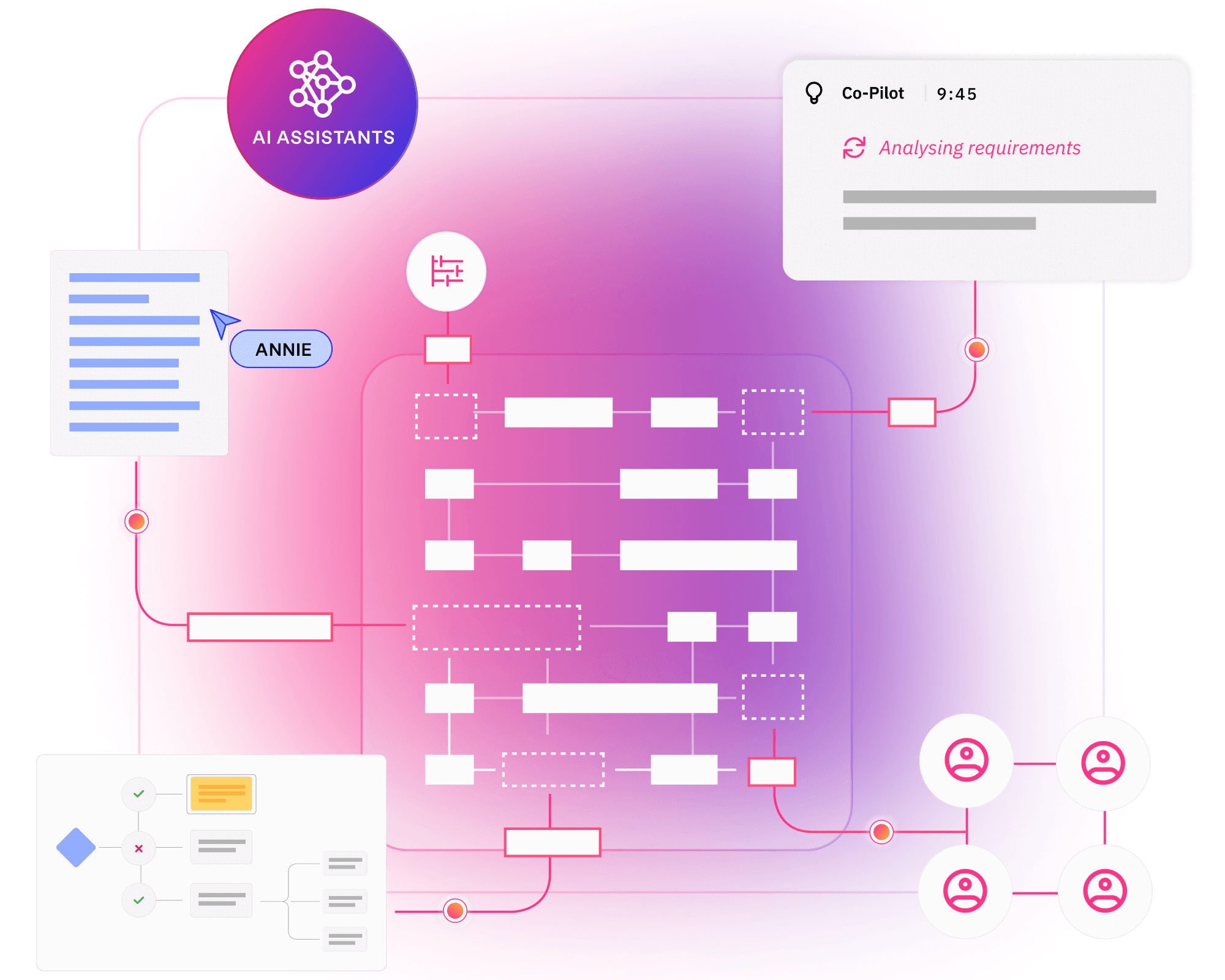

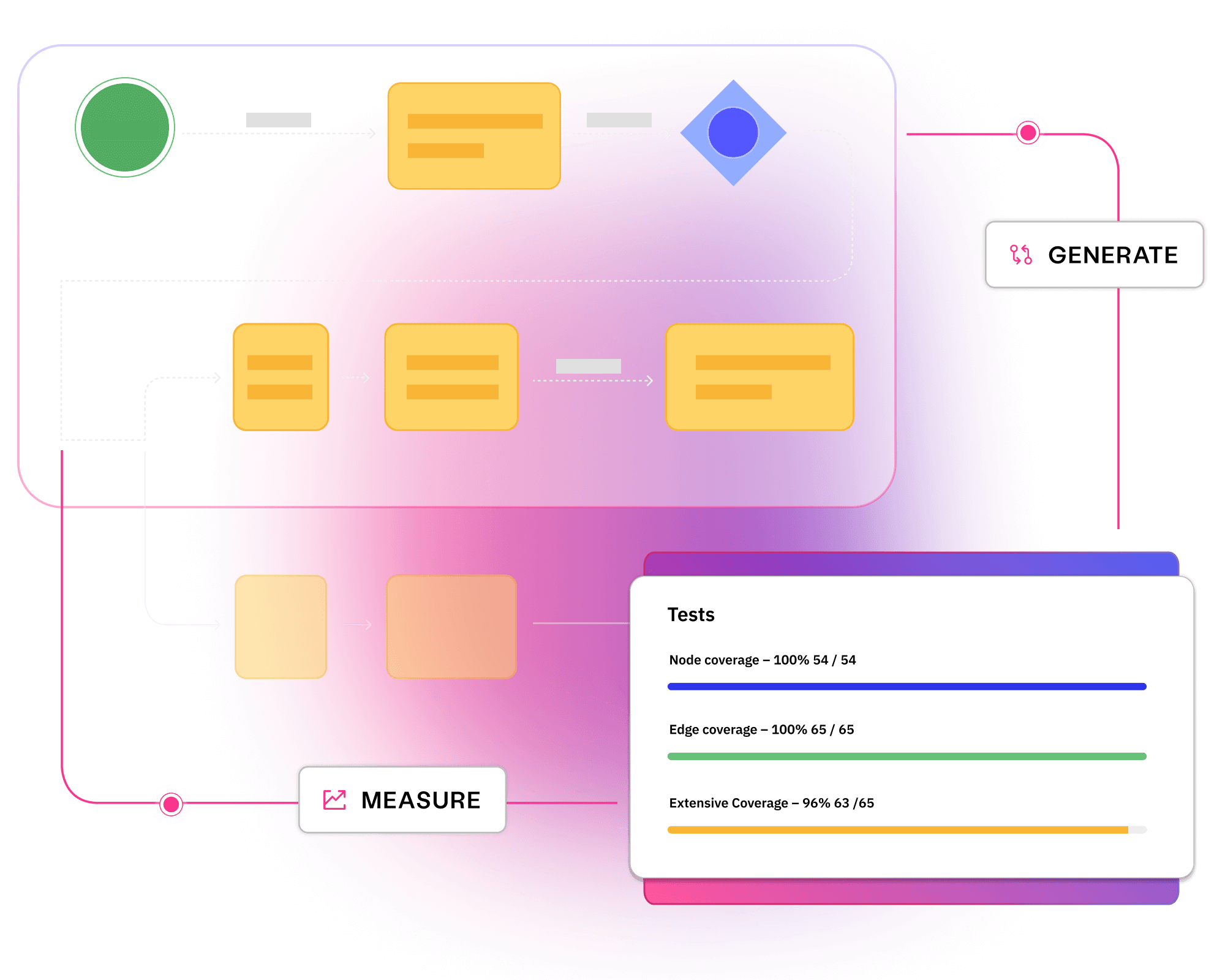

Foster quality and collaboration throughout software delivery. Convert requirements into visual models, with AI feedback and fixes to avoid bugs and rework.

Visualising requirements in clear models creates a collaborative resource for stakeholders working to deliver quality outcomes.

Collaborating on requirements uncovers ambiguity and incompleteness upfront, identifying bugs and avoiding rework.

Thoroughly analysing requirements enables critical thinking upfront, building quality and creativity throughout your software.

Breaking systems down into visual models allows stakeholders to grasp requirements quickly and deliver quality.

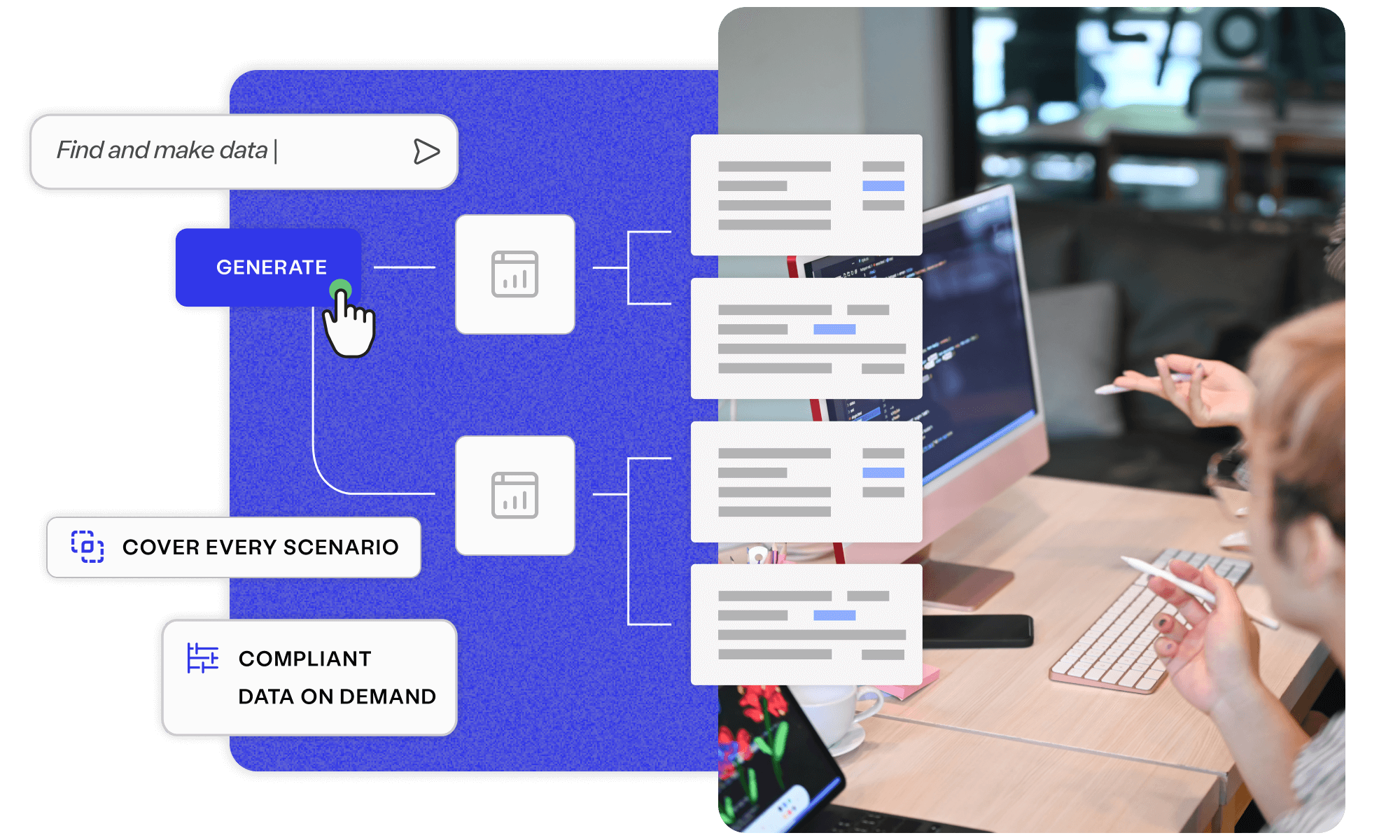

Uncover smarter test data with Curiosity’s all-in-one, AI-accelerated platform, which offers integrated, secure, and intuitive tools to simplify complex data landscapes and overcome test data management challenges.

Explore platform

Across industries, some of the world’s largest enterprises use the Curiosity platform to perfect their requirements and collaborate on quality.

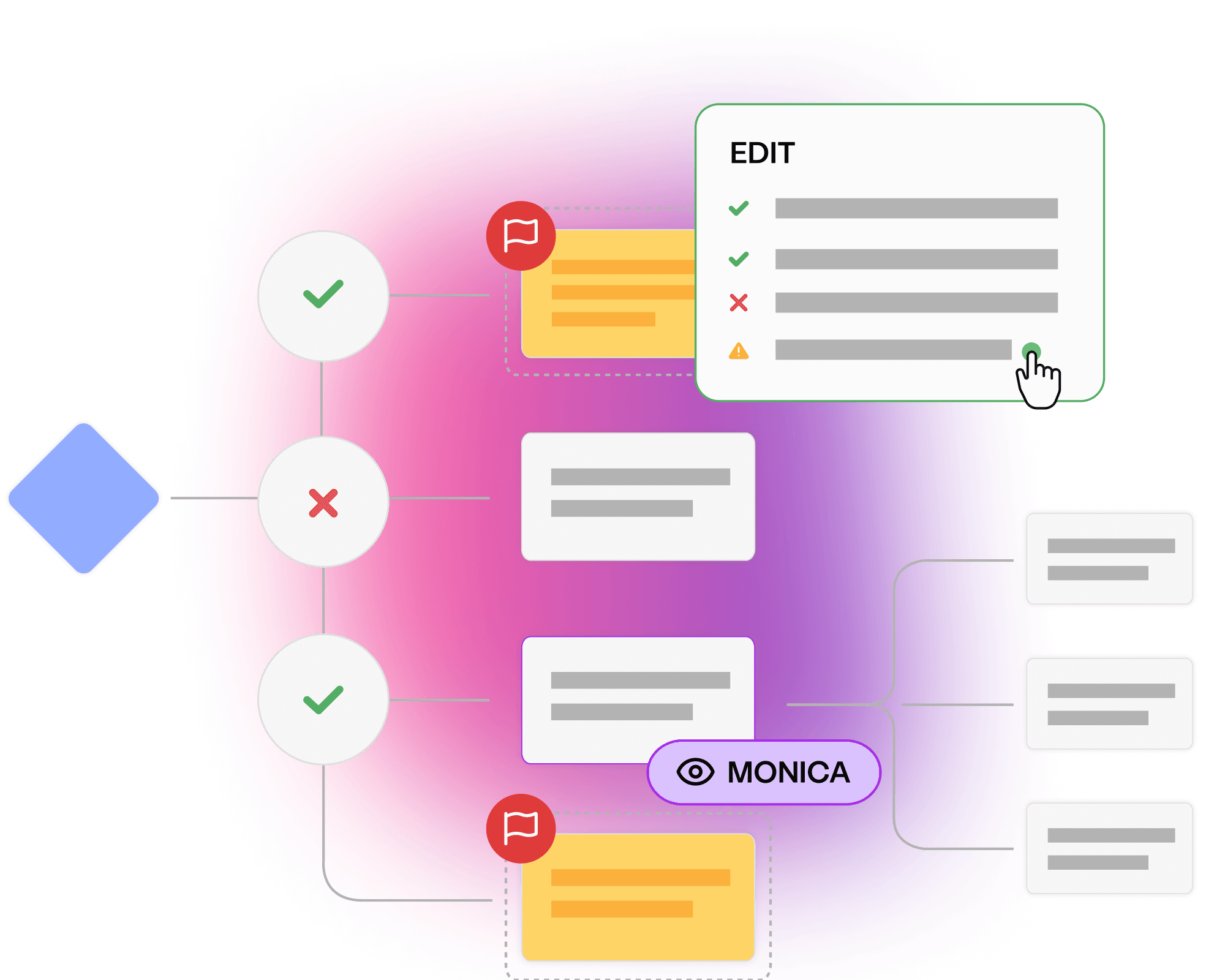

Miscommunications, silos, and a lack of transparency create bottlenecks throughout software delivery. AI can now diagram complex requirements and generate tests. This not only accelerates delivery and pays off technical debt; it also provides a collaborative vision that aligns every team.

VP of Application Delivery

Read a resource or meet with an expert to learn how AI-assisted visual modelling can eradicate bugs and miscommunication in your requirements.

Discover how EVERFI made quality everyone's responsibility, modelling and testing collaboratively.

Read more about EVERFI’s whole-team approach to quality.

See how you can build quality early and avoid costly rework throughout your software delivery.

Meet with a Curiosity expert: book now.