In the world of software development, generative AI has established itself as a formidable ally, assisting developers in coding and detecting potential problems in their applications.

My own experience with ChatGPT has been akin to working with a junior programmer, but with the added benefit of near-instant solutions for review. The ability to delegate tasks to ChatGPT and then seamlessly integrate or resolve issues with the provided solution empowers developers to move at an incredible pace.

Recently, Paul Gerrard released a series of videos showcasing the application of ChatGPT for software testing, with a focus on test automation. The results have been highly impressive, demonstrating how quickly you can transition from zero automation to functional test automation. However, there are concerns that need to be addressed to ensure the industry moves in the right direction.

To discover how you can start reaping the benefits of generative AI for your software testing, watch the roundtable discussion between Paul Gerrard, Jonathon Wright and me, now available on demand.

The importance of understanding and collaboration

Merely generating tests without proper understanding of the application and requirements does not advance the industry. In fact, it has the potential to move software quality backwards.

The purpose of quality assurance is to provide confidence that what is being delivered meets the initial software requirements and functions as expected. Automation and manual testing are vehicles for demonstrating that confidence to stakeholders.

The exceptions to the rule are tech giants like Google, which can largely manage with minimal automation by relying on well-designed A/B tests that roll back instantly when defects appear.

To use AI effectively in testing and provide confidence to stakeholders, it is crucial to understand how the AI is testing the application and which testing methodology it has applied.

Furthermore, most enterprise applications possess business logic and rules that require thorough testing. These rules are often ingrained in the knowledge of subject matter experts (SMEs) and may not be readily available for training generative AI models or for providing to them as input. To overcome this challenge, collaboration between SMEs and AI models is essential.

By incorporating the expertise of SMEs into AI-driven testing processes, a more accurate and comprehensive testing framework can be achieved.

Visualizing the testing process and enhancing collaboration

Incorporating a feedback loop is crucial for enhancing the performance of generative AI in understanding business logic, as it allows the AI model to continuously learn and improve.

At the same time, visualization plays a vital role in offering stakeholders transparency and insight into the testing process, ensuring they are well-informed about the aspects of the application being tested and the methodologies employed.

Visualizations communicate what is being tested, how, and to what extent.

By combining these two elements, we can foster a more effective and efficient software testing ecosystem that not only maximizes the potential of AI integration, but also builds stakeholder confidence in the quality of the applications being delivered.

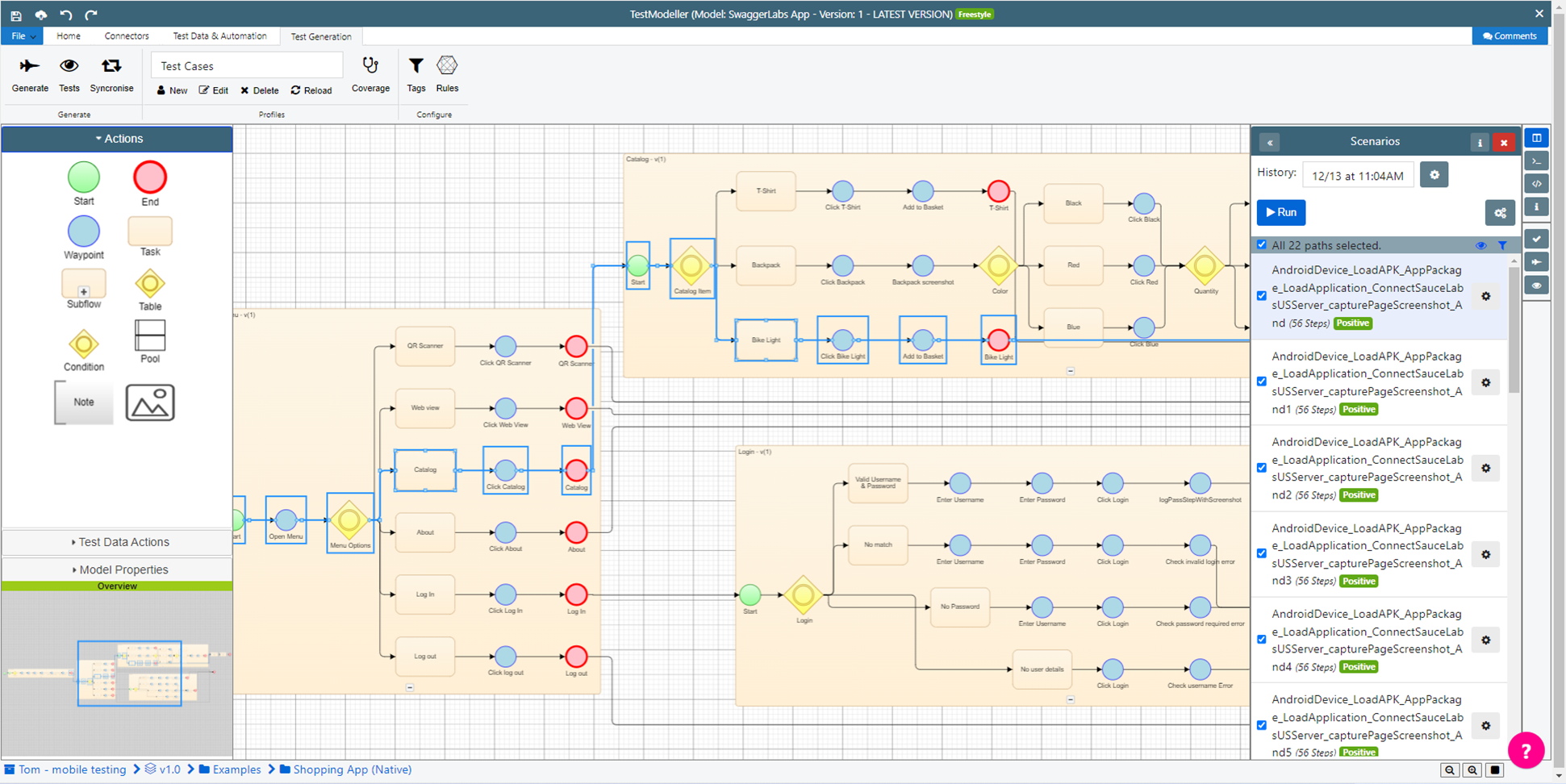

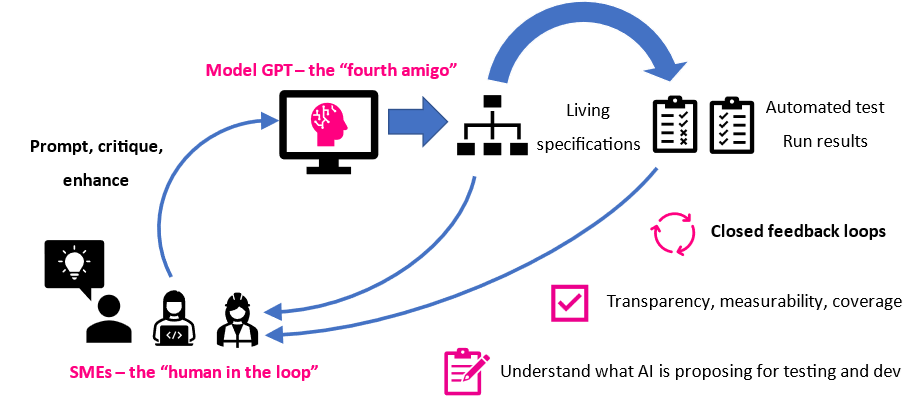

Paul Gerrard has presented an approach that features models as a collaboration hub for improving a tester's understanding of an application, enabling them to test more effectively. We foresee an extension of this model to include generative AI that comprises the following steps:

- The tester provides an initial set of requirements.

- The generative AI generates a solution.

- The tests are visualized using visual modelling.

- The tester enriches the model with their own knowledge or critiques the generative AI's solution, allowing the generative AI to enhance its model and the resulting tests.

A “model in the middle” approach to generative AI in testing.

This “model in the middle” process moves away from the black-box approach of generative AI, encouraging collaboration between AI models and testers to create a more efficient and effective software testing ecosystem, while also providing confidence in the tests and software being delivered.
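The four steps listed above can be sketched as a small feedback loop. The Python below is only an illustration under stated assumptions, not the implementation of any tool or approach mentioned in this article: `SharedModel`, `stub_generator`, `render` and `refine` are hypothetical names, and `stub_generator` is a trivial stand-in for a real generative AI call.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class SharedModel:
    """Hypothetical shared model: requirement rules plus the tests derived from them."""
    rules: list[str] = field(default_factory=list)
    tests: list[str] = field(default_factory=list)


def stub_generator(rules: list[str]) -> list[str]:
    """Stand-in for a generative AI call (assumption): one test per rule."""
    return [f"Verify: {rule}" for rule in rules]


def render(model: SharedModel) -> str:
    """Plain-text 'visualization' showing which test covers which rule."""
    pairs = zip(model.rules, model.tests)
    return "\n".join(
        f"[{i}] {rule} -> {test}" for i, (rule, test) in enumerate(pairs, start=1)
    )


def refine(
    model: SharedModel,
    feedback: list[str],
    generate: Callable[[list[str]], list[str]],
) -> SharedModel:
    """Merge the tester's additional rules into the model and regenerate the tests."""
    rules = model.rules + [rule for rule in feedback if rule not in model.rules]
    return SharedModel(rules=rules, tests=generate(rules))


# Step 1: the tester provides an initial set of requirements.
model = SharedModel(rules=["Login requires a valid password"])

# Step 2: the generative AI (here, the stub) generates a solution.
model.tests = stub_generator(model.rules)

# Step 3: the tests are visualized for review.
print(render(model))

# Step 4: the tester enriches the model with knowledge the AI did not have,
# and the tests are regenerated from the enriched model.
model = refine(model, ["Account locks after three failed logins"], stub_generator)
print(render(model))
```

The point of the sketch is that the model, rather than the generated tests, is the artefact that testers and the AI both read and update, so every regeneration stays visible and reviewable.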

The following video shows one application of this approach, leveraging generative AI to generate and refine requirements models and tests:

A new model: A collaborative and transparent testing ecosystem

By moving away from the black-box approach of generative AI and adopting a more collaborative process, we can achieve several benefits:

- Enhanced understanding: Visualizing the tests through visual modelling helps testers gain a better grasp of the AI-generated solution, enabling them to identify gaps and areas for improvement.

- Improved collaboration: The iterative process of enriching the model with tester input or critiquing the AI-generated solution fosters collaboration between testers and AI models, ultimately leading to better testing outcomes.

- Higher quality tests: As testers and AI models work together to refine the model and tests, the quality of the tests generated is improved, ensuring more accurate and comprehensive testing.

- Greater stakeholder confidence: Providing transparency in the testing process through visualization and collaboration allows stakeholders to gain confidence in the tests and the quality of the software product.

The "fourth Amigo" of software delivery?

The integration of feedback loops and visualization into generative AI for software testing is a promising approach for enhancing the testing process. Through it, we can create a more efficient and effective software testing ecosystem. This can be achieved in three broad steps:

- Fostering collaboration between testers, SMEs, and AI models.

- Refining the AI-generated models and tests.

- Providing visualization of the testing results.

This approach not only ensures the quality of the tests generated, but also maximizes the potential benefits of AI integration in the software testing industry.

To discover how you can start reaping the benefits of generative AI for your software testing, join Paul Gerrard, Jonathon Wright and me for a roundtable discussion, now available on demand.