Discover Enterprise Test Data®

Simplify your complex data landscape and provide confidence and clarity at every step of your data...

AI-powered. End-to-end. Your complete test data management platform.

Explore Curiosity's collection of webinars, podcasts, blogs and success stories, covering everything from visual modelling to artificial intelligence and test data management.

Deliver superior test data and overcome the challenges of complexity, legacy, scale, and regulation with Curiosity Software.

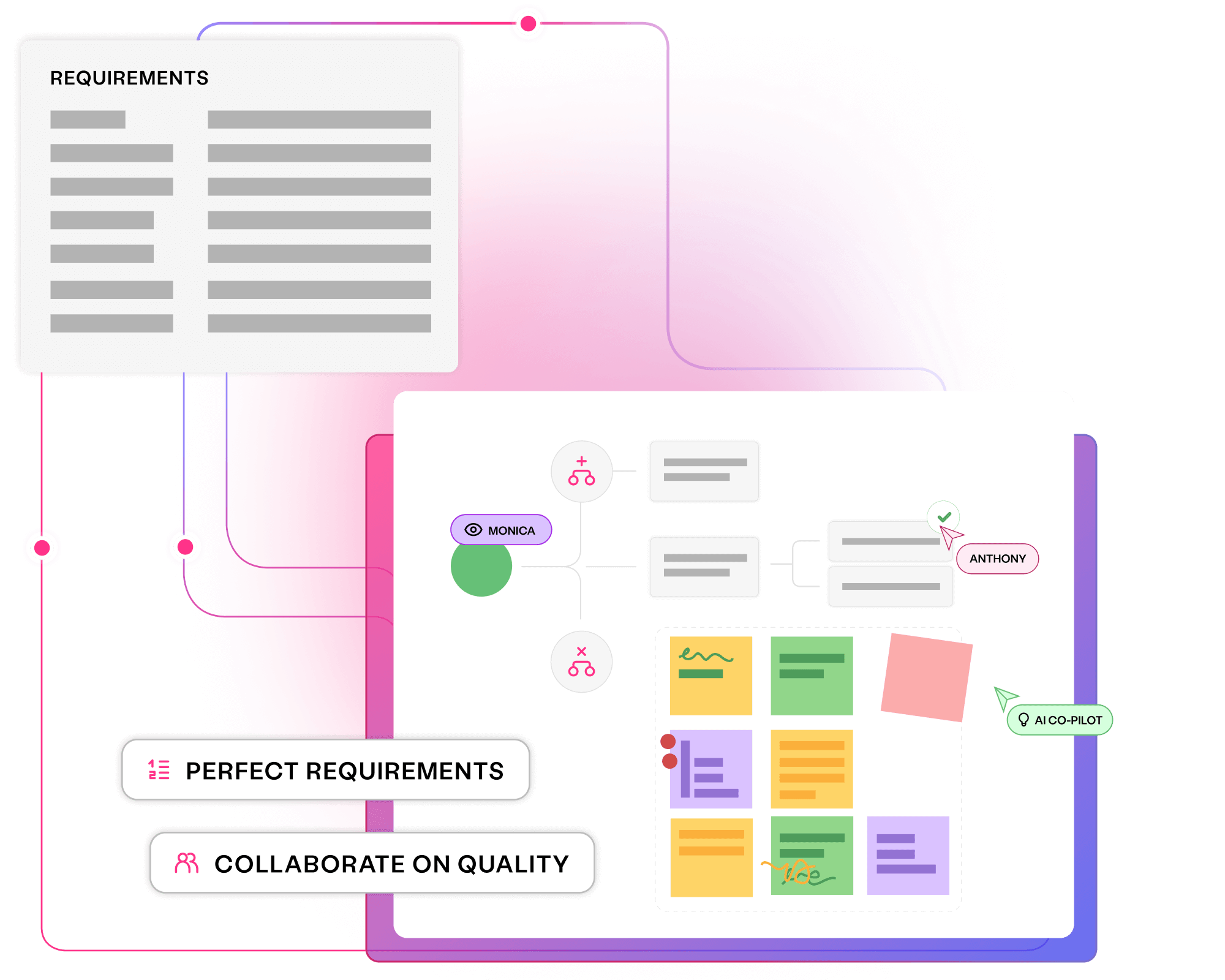

Align all stakeholders to quality outcomes and create critical assets early, delivering superior software at speed with Curiosity's platform.

Collaborate seamlessly on organisation-wide quality goals

Generate key assets upfront, with measurable coverage to prevent bugs

Maximise productivity, with minimal toil and rework

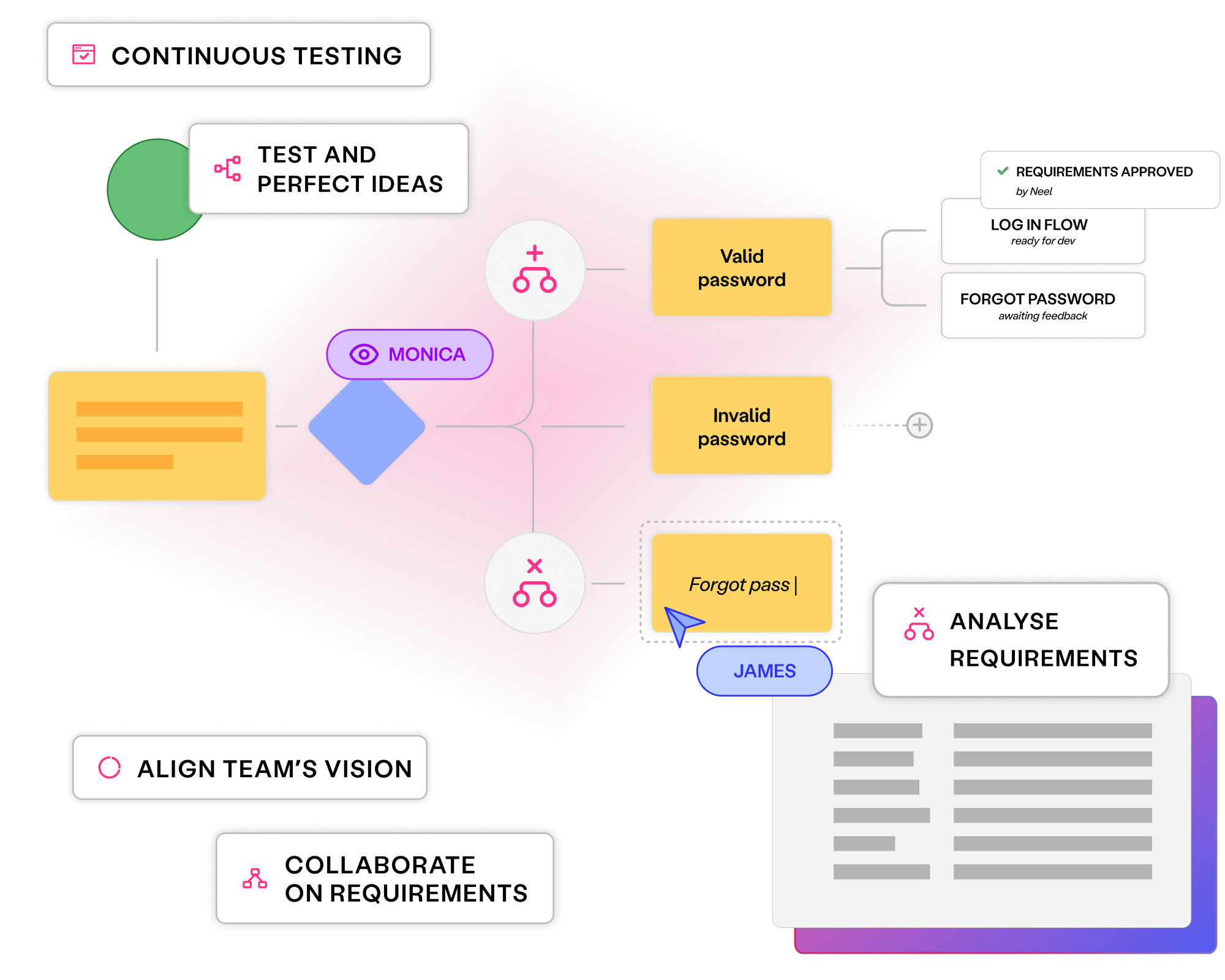

Visual models align stakeholders and enable upfront critical thinking, reducing costly bugs and avoiding miscommunications.

Automating repetitive test creation, requirements engineering and maintenance enables faster releases with minimal rework.

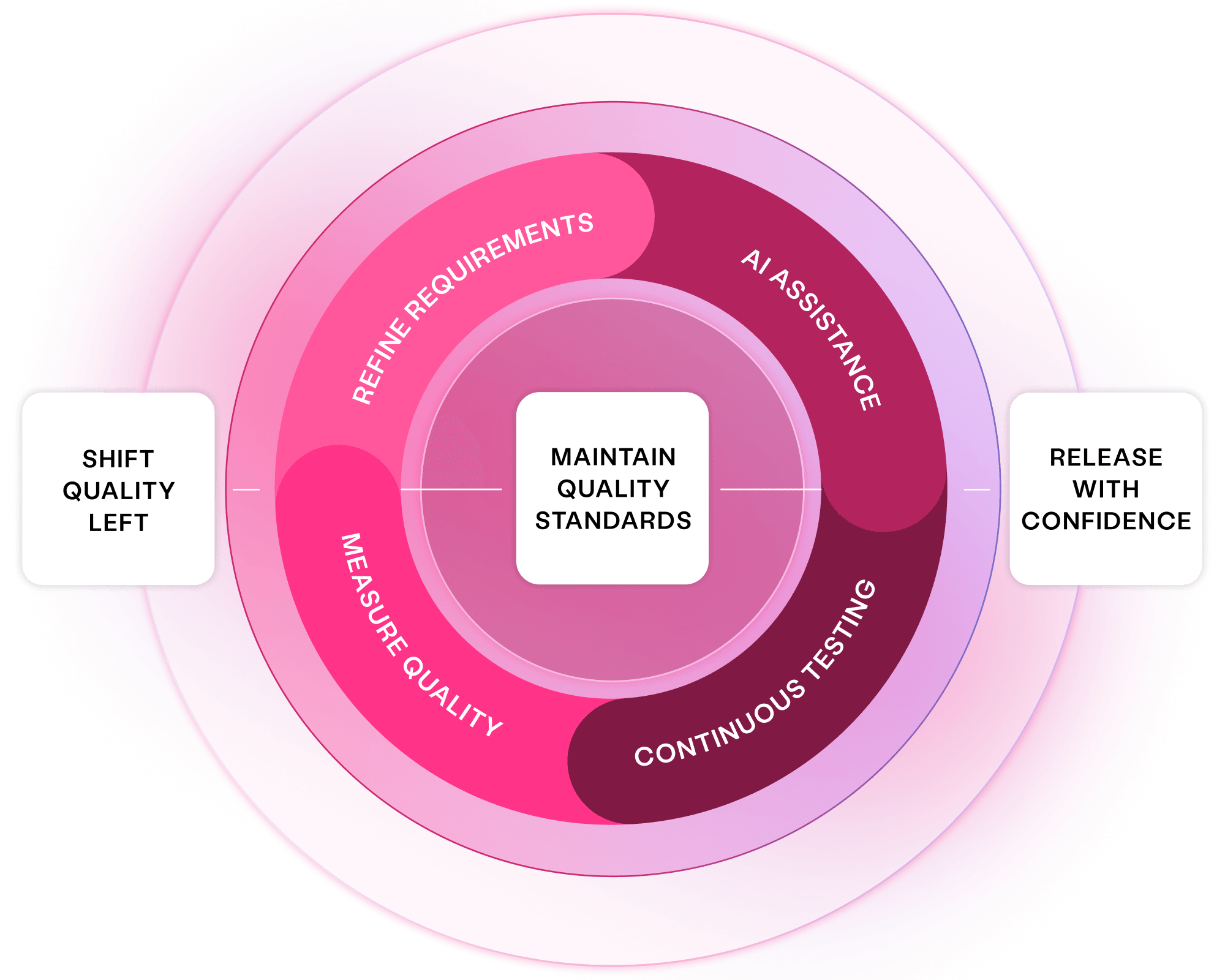

Instilling quality upfront and ensuring it throughout software delivery finds bugs while they are quick and affordable to fix.

Measuring coverage and generating optimised tests provides transparency and assurance, without under-testing or wasteful over-testing.

Visualise requirements to avoid miscommunications and align every team on quality outcomes. Ensure transparency, foster critical thinking and streamline software delivery.

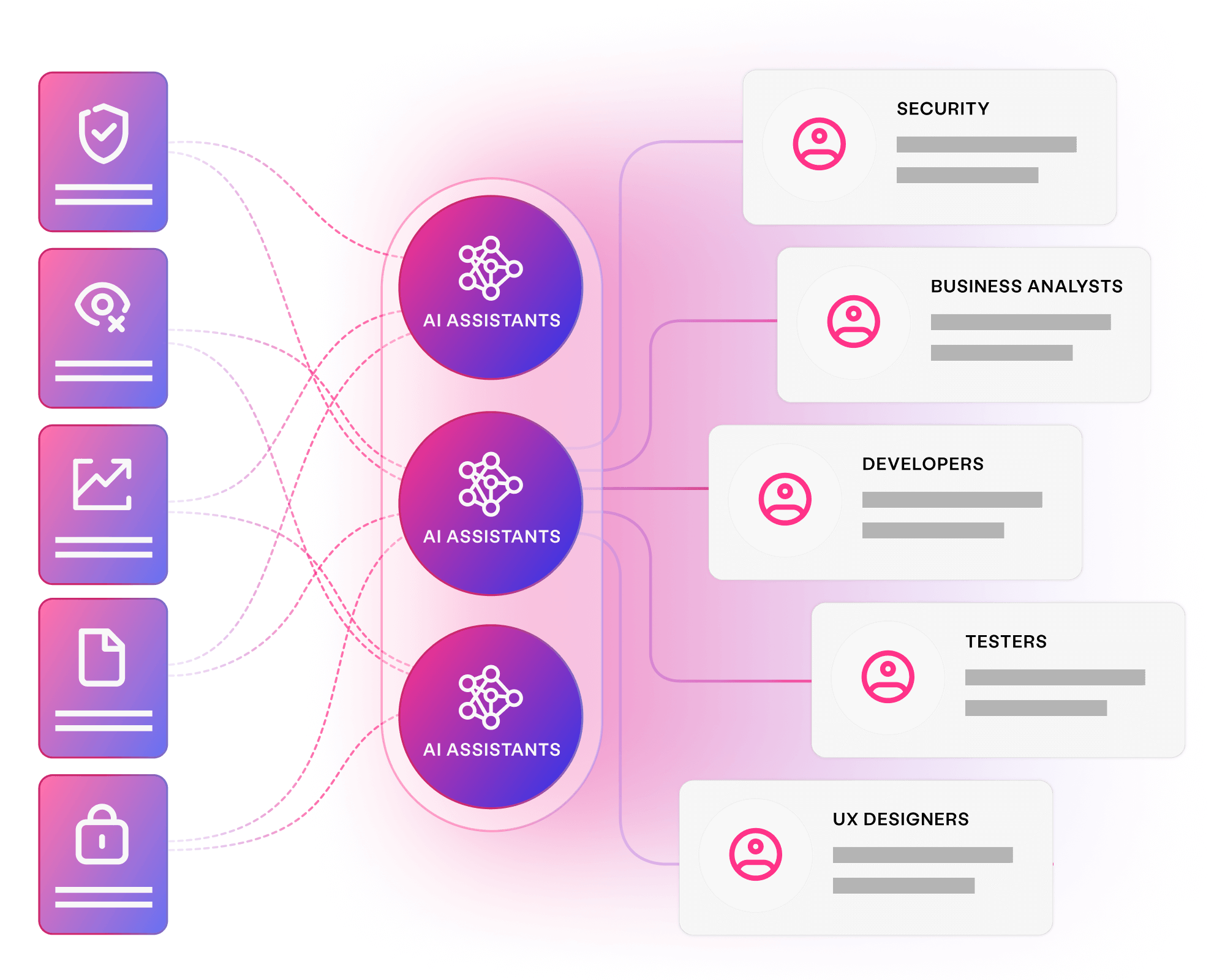

Provide the domain expertise, specialist feedback and assets your teams need. Virtual assistants pay off technical debt, provide on demand clarity, and augment your tests and requirements.

Unblock delivery and prevent quality issues before they arise. Continuously generate rigorous tests and data, with full transparency and coverage.

Across industries, some of the world’s largest enterprises leverage our platform to deliver superior software faster than ever:

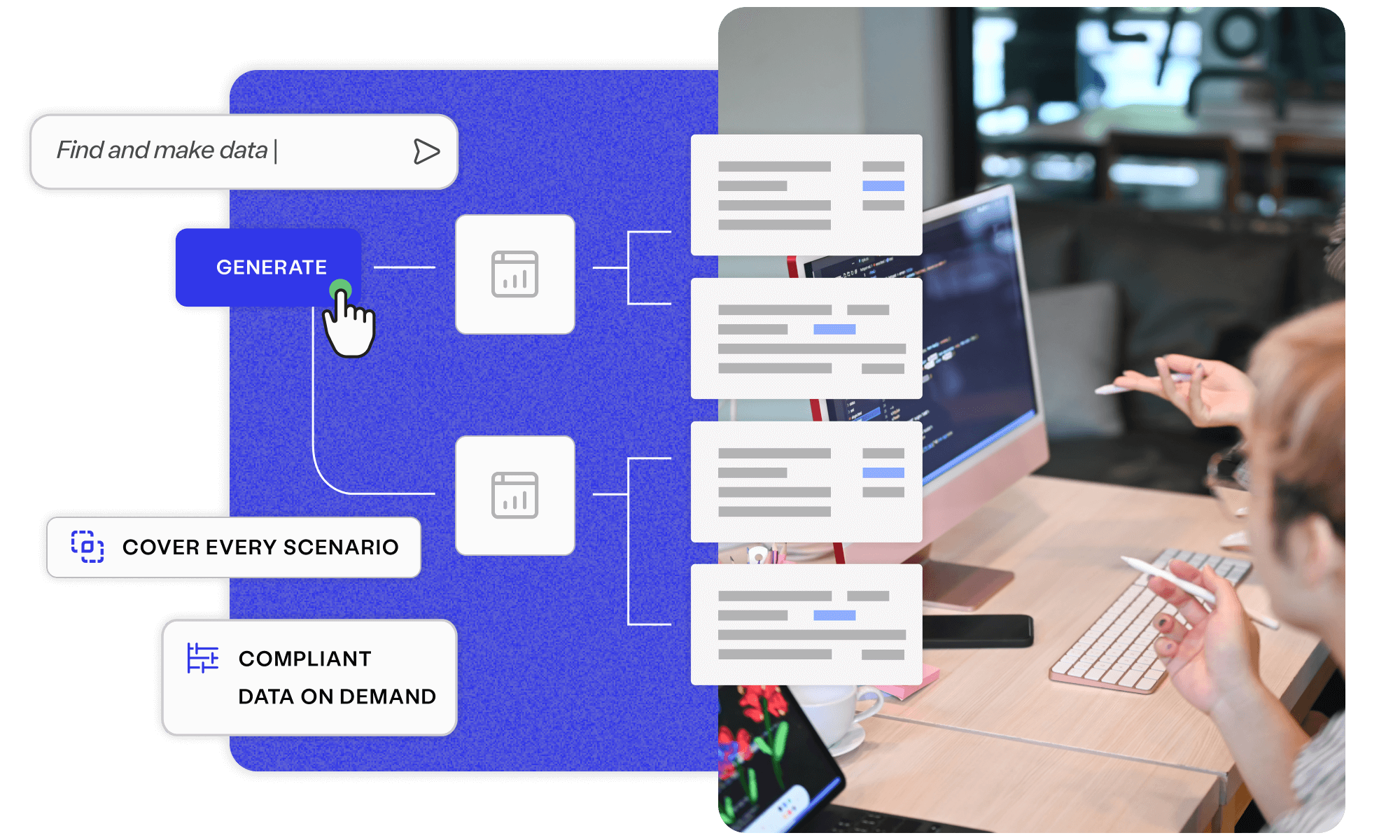

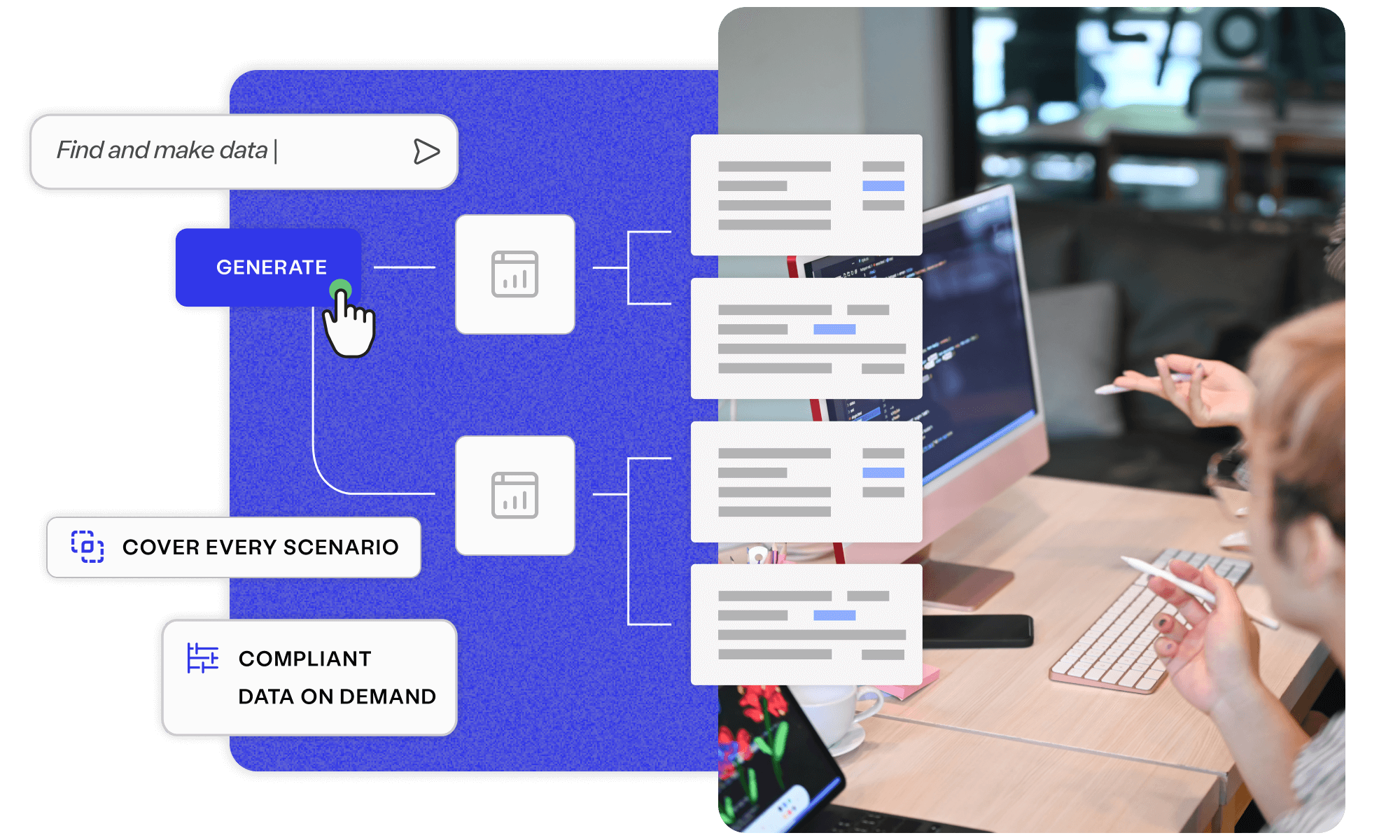

Manually finding and making data adds up to one of the most resource-intensive, time-consuming tasks in testing and development. Automating provisioning can make the difference when striving to hit release deadlines and stay on budget.

Senior Test Data Engineer

AI is making system data more complex than ever. Developing AI-driven, AI-built systems requires diverse data that reflects intricate relationships, trends and hierarchies. Model-based data generation is perfect for overcoming this complexity.

Principal Test Data Engineer

Using generative AI to visualise complex requirements provides the clarity developers need to build quality systems. The same models then generate optimal tests, testing continuously and paying off technical debt in requirements, tests and code.

Chief Technology Officer

Testers and developers can spend 20-50% of their time on data-related activities. Accurately and automatically provisioning test data is one of the biggest and fastest wins for enterprises seeking to deliver software faster and with better quality.

Test Data Engineering Lead

Curiosity’s models ensured the reliability of complex connections between user types, so payments stay on target and private data remains secure … They identified minor and major bugs, so we could bring our product to market quickly—and guarantee our users a simple, worry-free experience.

John McElroy

President of Eljin Productions

Payment platforms and complex banking systems are perfect for model-based data generation. We can break their intricate logic down into intuitive visual models, applying algorithms that create the smallest message set needed to cover diverse combinations.

Program Manager, ISO 20022

Visual models break our system down in reusable chunks that generate the functional and visual tests we need. We can further assemble these reusable building blocks automatically as new courses are checked in, generating rigorous tests.

Greg Sypolt

VP of Quality Engineering

Curiosity’s platform enabled rigorous in-sprint testing, while facilitating cutting-edge development practices like shift left API testing, fail-fast experimentation, and test-driven API design. It worked seamlessly alongside our teams and processes.

Johnny Pitt

Founder of ThinkDonate

Importing requirements to visual models not only generates rigorous test automation at speed; it also improves the requirements and pays off technical debt. This transparency and shared vision allow critical thinking upfront, while building quality throughout the delivery lifecycle.

Product Owner

Miscommunications, silos, and a lack of transparency create bottlenecks throughout software delivery. AI can now diagram complex requirements and generate tests. This not only accelerates delivery and pays off technical debt; it also provides a collaborative vision that aligns every team.

VP of Application Delivery

Start with a free resource, or talk with an expert about how you can embed quality and productivity throughout your software delivery.

Discover how a Global 2000 software vendor uses Curiosity's Enterprise Test Data.

Learn how you can remove bugs and productivity blockers throughout your delivery ecosystem.

Curiosity's platform integrates with your people, processes and tools, accelerating requirements engineering, data delivery and more.
